IRJET-Machine Learning: Introduction, Algorithms and Implementation

advertisement

International Research Journal of Engineering and Technology (IRJET)

e-ISSN: 2395-0056

Volume: 06 Issue: 09 | Sep 2019

p-ISSN: 2395-0072

www.irjet.net

Machine Learning: Introduction, Algorithms and Implementation

Shubham Kumar1, Himanshu Pundir2

1,2Assisstant

Professor, Galgotias University, Greater Noida, U.P, India

---------------------------------------------------------------------------***--------------------------------------------------------------------------Abstract:- Over the previous couple of decades, Machine

Learning (ML) has advanced from the undertaking of

couple of PC devotees abusing the likelihood of PCs

figuring out how to make recreations, and a piece of

Mathematics (Statistics) that only sometimes thought to be

computational methodologies, to an autonomous research

discipline that has not just given the vital base to

measurable computational standards of learning

techniques, yet in addition has developed various

calculations that are routinely utilized for content

elucidation, design acknowledgment, and a numerous

other business purposes and has prompted a different

research enthusiasm for information mining to recognize

shrouded regularities or anomalies in social information

that developing by second. This paper centers around

clarifying the idea and development of Machine Learning, a

portion of the mainstream Machine Learning calculations

and attempt to think about three most prevalent

calculations dependent on some fundamental thoughts.

Sentiment140 dataset was utilized and execution of every

calculation as far as preparing time, expectation time and

exactness of forecast have been archived and thought

about.

Keywords: Machine Learning, Algorithm, Data, Training,

exactness

I. INTRODUCTION

AI is a worldview that may allude to gaining from past

involvement (which for this situation is past information)

to improve future execution. The sole focal point of this

field is programmed learning strategies. Learning alludes

to alteration or improvement of calculation dependent on

past "encounters" naturally with no outer help from

human.

While structuring a machine (a product framework), the

developer consistently has a particular reason as a main

priority.

For example, think about J. K. Rowling's Harry Potter

Series and Robert Galbraith's Cormoran Strike Series. To

affirm the case that it was in fact Rowling who had

composed those books under the name Galbraith, two

specialists were locked in by The London Sunday Times

and utilizing Forensic Machine Learning they had the

© 2019, IRJET

|

Impact Factor value: 7.34

|

option to demonstrate that the case was valid. They build

up an AI calculation and "prepared" it with Rowling's just

as different authors composing guides to look for and gain

proficiency with the basic examples and after that "test"

the books by Galbraith. The calculation reasoned that

Rowling's and Galbraith's composition coordinated the

most in a few viewpoints.

So as opposed to planning a calculation to address the

issue straightforwardly, utilizing Machine Learning, a

scientist look for a methodology through which the

machine, i.e., the calculation will think of its own answer

dependent on the model or preparing informational index

gave to it at first.

A. MACHINE

LEARNING:

INTERSECTION

STATISTICS AND COMPUTER SCIENCE

OF

AI was the marvelous outcome when Computer Science

and Statistics united. Software engineering centers around

structure machines that take care of specific issues, and

attempts to distinguish if issues are feasible by any stretch

of the imagination. The primary methodology that

Statistics in a general sense utilizes is information

surmising, demonstrating guesses and estimating

unwavering quality of the ends.

The characterizing ideaof Machine Learning is somewhat

unique however mostly reliant on both in any case.

Though Computer Science focus on physically

programming PCs, ML addresses the issue of getting PCs to

re-program themselves at whatever point presented to

new information dependent on some underlying learning

techniques gave. Then again, Statistics centers around

information surmising and likelihood, Machine Learning

incorporates extra worries about the attainability and

adequacy of models and calculations to process those

information, exacerbating a few learning assignments into

a minimal one and execution measures.

B. MACHINE LEARNING AND HUMAN LEARNING

A third research zone firmly identified with Machine

Learning is the investigation of human and creature

cerebrum in Neuroscience, Psychology, and related fields.

The scientists recommended that how a machine could

gain as a matter of fact most likely would not be essentially

ISO 9001:2008 Certified Journal

|

Page 893

International Research Journal of Engineering and Technology (IRJET)

e-ISSN: 2395-0056

Volume: 06 Issue: 09 | Sep 2019

p-ISSN: 2395-0072

www.irjet.net

unique in relation to how a creature or a human

personality learn with time and experience. Be that as it

may, the examination focused on taking care of AI issues

utilizing learning techniques for human mind did not yield

much encouraging outcome so far than the explores

worried about measurable - computational methodology.

This may be because of the way that human or creature

brain science remains not completely justifiable to date.

Notwithstanding these troubles, cooperation between

human learning and AI is expanding for AI is being utilized

to clarify a few learning procedures finding in human or

creatures. For instance, AI technique for transient contrast

was proposed to clarify neural flag in creature learning. It

is genuinely expected that this joint effort is to develop

significantly in coming years.

C. DATA MINING, ARTIFICIAL INTELLIGENCE AND

MACHINE LEARNING

By and by, these three controls are so interlaced and

covering that it's nearly to draw a limit or order among the

three. To place it at the end of the day, these three fields

are advantageously related and a blend of these

approaches may be utilized as a strategy to deliver

progressively proficient and sensitive outputs.

Generally, Data mining is essentially about deciphering any

sort of information, however it establishes the framework

for both man-made brainpower and AI. By and by, it test

data from different sources as well as it investigations and

perceives example and connections that exists in those

data that would have been hard to translate physically.

Subsequently, information mining is anything but a simple

strategy to demonstrate a theory yet technique for

illustration pertinent hypotheses. That mined information

and the relating examples and theories might be used the

reason for both AI and man-made brainpower.

Man-made brainpower might be extensively characterized

asmachinesthose being able to take care of a given issue

individually with no human intercession. The

arrangements are not programmed legitimately into the

framework yet the fundamental information and the AI

translating that information produce an answer

independent from anyone else. The understanding that

goes underneath is only an information mining calculation.

AI adopts elevate the strategy to a propelled level by giving

the information fundamental to a machine to prepare and

adjust appropriately when presented to new information.

This is known as "preparing". It centers on extracting data

from significantly large sets of information, and afterward

distinguishes and recognizes hidden examples utilizing

different factual measures to improve its capacity to

© 2019, IRJET

|

Impact Factor value: 7.34

|

decipher new information and produce increasingly

powerful outcomes. Clearly, a few parameters ought to be

"tuned" at the beginning level for better profitability.

AI is the foothold of computerized reasoning. It is farfetched to structure any machine having abilities

associated with knowledge, similar to language or vision,

to arrive without a moment's delay. That assignment

would have been practically difficult to tackle. Also, a

framework can not be considered totally shrewd on the off

chance that it did not have the capacity to take in and

improve from its past exposures.

II. PRESENT RESEARCH QUESTIONS& RELATED WORK

The Several applications referenced before proposes

significant advancement so far in ML calculations and their

principal hypothesis. The order is disclosing a few way,

testing a scope of learning issues. ML is an immense order

and over recent decades various analysts have included

their works in this field. The list of these works are

countably vast and referencing each work is out of the

extent of this paper. However this paper portrays the

principle research addresses that are being sought after at

present and give references to some of the ongoing

prominent deals with that task.

A. USING UNLABELLED DATA

LEARNING[10][11][25][26][27]

IN

SUPERVISED

Regulated learning calculations estimated the connection

among highlights and marks by characterizing an

estimator f : X → Y for a particular group of pre-marked

preparing information {□xi, yi□}. The fundamental test in

this methodology is pre-marked information isn't in every

case promptly accessible. So before applying Supervised

Classification, information should be preprocessed, sifted

and marked utilizing unaided learning, include extraction,

dimensionality decrease and so forth there by adding to

the absolute expense. This climb in expense can be

diminished adequately if the Supervised calculation can

utilize unlabelled information (e.g., pictures) also.

Strikingly, in numerous exceptional cases of learning

issues with extra presumptions, unlabelled information

can for sure be justified to improve the normal precision of

regulated learning. Like, consider grouping website pages

or distinguishing spam messages. As of now dynamic

analysts are truly considering new calculations or new

learning issues to abuse unlabelled information

proficiently.

ISO 9001:2008 Certified Journal

|

Page 894

International Research Journal of Engineering and Technology (IRJET)

e-ISSN: 2395-0056

Volume: 06 Issue: 09 | Sep 2019

p-ISSN: 2395-0072

B.

TRANSFERRINGTHE

[12][13][14][15][16]

LEARNING

www.irjet.net

EXPERIENCE

In numerous genuine issue, the regulated calculation may

include learning a group of related capacities (e.g., analysis

functions for medical clinics over the globe) as opposed to

a solitary capacity. Regardless of whether the analysis

functions for various urban areas (e.g., Kolkata and

London) are dared to be moderately unique, a few shared

characteristics are foreseen also. ML algorithms like

various leveled Bayesian methods give one methodology

that expect the learning parameters of both the capacities,

state for Kolkata and London individually, have some basic

earlier probabilities, and permits the information from

various city emergency clinics to overrule relevant priors

as fitting. The nuance further increments when the

exchange among the capacities are intensified.

C. LINKING DIFFERENT ML ALGORITHMS

Various ML calculations have been presented and probed

in various areas. One trail of research intends to find the

possible connections among the current ML calculations,

and fitting case or situations to utilize a specific

calculation. Consider, propositions two directed

arrangement calculations, Naive Bayes and Logistic

Regression.

Them

two

methodology

numerous

informational indexes particularly, however their

proportionality can be shown when actualized to explicit

kinds of preparing information (i.e., when the criteria of

Naive Bayes classifier are satisfied, and the quantity of

models in attempting set keeps an eye on interminability).

As a rule, the conceptual understanding of ML calculations,

their convergence highlights, and their respective

effectiveness and impediments to date remain an extreme

research concern.

D. BEST STRATEGICAL APPROACH FOR LEARNERS

WHICH COLLECTS THEIR OWN DATA

An outskirt research control centers around learning

frameworks that rather than precisely utilizing

information gathered by some different methods,

effectively gathers information for its very own handling

and learning. The exploration is given into finding the best

technique to totally hand over the control to the learning

calculation. For instance consider a medication testing

system which attempt to become familiar with the

accomplishment of the medication while observing the

uncovered patients for conceivable obscure symptoms and

attempt to thusly limiting them.

© 2019, IRJET

|

Impact Factor value: 7.34

|

E.

PRIVACY

[17][18][19][20]

PRESERVING

DATA

MINING

This methodology involves successfully applying

information mining and getting results without misusing

the fundamental information is pulling in assortment of

research networks and past.

Consider, a restorative conclusion routine trained with

information from medical clinics everywhere throughout

the world. Be that as it may, because of protection

concerns, this sort of utilizations isn't generally pursued.

Even if this introduces a cross street between information

mining and information security, continuous research says

a framework can have both. One proposed arrangement of

the above issue is to build up a mutual learning calculation

rather than a focal database. Every one of the clinics might

be permitted to utilize the calculation under precharacterized confinements to ensure the security of the

patients and after that hand it over to the following. This is

a blasting examination area, joining measurable misuse of

information and later cryptographic systems to guarantee

information protection.

F. NEVER-ENDING LEARNERS [21][22][23][24]

The vast majority of the AI undertakings involves

preparing the learner using certain informational indexes,

at that point putting aside the student and use the yield.

While, learning in people and different creatures adapt

constantly, adjusting various aptitudes in progression with

experience, and utilize these learnings and capacities in an

altogether synergistic way. Despite of sizeable business

utilizations

of

ML

calculations,

learning

in

machines(computers)to date has remained strikingly

lacking contrasted with learning in human or creature. An

elective methodology that more diligently capture the

variety, adroitness and accumulating character of learning

in human, is named as endless learning. For example, the

Never Ending Language Learner (NELL)[8] is a student

whose capacity is figuring out how to peruse website

pages and has been accounted for to peruse the internet

consistently since January 2010. NELL has obtained almost

80 million certainty weighted sentiments (Example, served

with(tea, bread rolls)) and has had the option to learn

million sets of highlights and parameters that capacitate it

to gain these convictions. Besides, it has turned out to be

equipped in perusing (removing) more convictions, and

oust old inaccurate ones, adding to a gathering of certainty

and provenance for every conviction and there by

improving each day than the last.

ISO 9001:2008 Certified Journal

|

Page 895

International Research Journal of Engineering and Technology (IRJET)

e-ISSN: 2395-0056

Volume: 06 Issue: 09 | Sep 2019

p-ISSN: 2395-0072

www.irjet.net

III. CATEGORISATION OF ML ALGORITHMS

B. ALGORITHMS GROUPED BY SIMILARITY

A staggering number of ML calculation have been

structured and presented over past years. Not every

person of them are generally known. Some of them didn't

fulfill or take care of the issue, so another was presented in

its place. Here the calculations are extensively assembled

into two classification and those two gatherings are

further sub-isolated. This segment attempt to name most

well known ML calculations and the following area looks at

three most broadly utilized ML calculations.

1. Regression Algorithms

A. GROUP BY LEARNING STYLE

2. Instance-based Algorithms

1. Supervised learning — Input information or preparing

information has a pre-decided name for example

Genuine/False, Positive/Negative, Spam/Not Spam and so

forth. A capacity or a classifier is constructed and prepared

to foresee the name of test information. The classifier is

appropriately tuned (parameter esteems are adjusted)to

accomplish a reasonable degree of exactness.

Occurrence based or memory-based learning model stores

cases of preparing information as opposed to building up

an exact meaning of objective capacity. At whatever point

another issue or model is experienced, it is analyzed as per

the put away examples so as to decide or anticipate the

objective capacity value. It can just supplant a put away

case by another one if that is a superior fit than the

previous. Because of this, they are otherwise called champ

take-all technique. Models: K-Nearest Neighbor (KNN),

Learning Vector Quantisation (LVQ), Self-Organizing Map

(SOM), Locally Weighted Learning (LWL) and so on.

2. Unsupervised learning - Input information or

preparing information isn't marked. A classifier is

structured by finding existing examples or bunch in the

preparation datasets.

3. Semi-administered learning - Training dataset

contains both marked and unlabelled information. The

classifieris train to gain proficiency with the examples to

order and mark the information just as to foresee.

4. Reinforcement learning - The calculation is prepared

to guide activity to circumstance so the reward or input

sign is augmented. The classifier isn't customized

directlyto pick the activity, however rather prepared to

find the most compensating activities by experimentation.

5. Transduction - Though it has comparative attributes

with administer adapting, yet it doesn't build up an

unequivocal classifier. It attempts to anticipate the yield

dependent on preparing information, preparing name, and

test data.

6. Learning to learn - The classifier is trained to learn

from the predisposition it initiated during past stages.

7. It is vital and proficient to compose the ML calculations

as for learning techniques when one have to think about

the essentialness of the preparation information and pick

the grouping decide that give the more prominent degree

of precision.

© 2019, IRJET

|

Impact Factor value: 7.34

|

Relapse examination is a piece of prescient investigation

and adventures the co-connection between ward (target)

and autonomous factors. The striking relapse models are:

Linear Regression, Logistic Regression, Stepwise

Regression , Ordinary Least Squares Regression (OLSR),

Multivariate Adaptive Regression Splines (MARS) , Locally

Estimated Scatterplot Smoothing (LOESS) and so forth.

3. Regularisation Algorithm

Regularization is essentially the way toward checking over

fitting or decrease the exceptions. Regularization is only a

straightforward yet ground-breaking alteration that is

increased with other existing ML models commonly

Regressive Models. It smoothes up the relapse line by

castigating any twisted of the bend that attempts to

coordinate the anomalies. Examples: Ridge Regression,

Least Absolute Shrinkage and Selection Operator (LASSO) ,

Elastic Net, Least-Angle Regression (LARS) and so forth.

4. Decision Tree Algorithms

A choice tree builds a tree like structure including of

potential answers for an issue dependent on specific

limitations. It is so named for it starts with a solitary

straightforward choice or root, which at that point forks

off into various branches until a choice or expectation is

made, shaping a tree.

They are favored for its capacity to formalize the issue

close by procedure that thus aides distinguishing potential

arrangements quicker and more precisely than others.

Models: Classification and Regression Tree (CART),

Iterative Dichotomiser 3 (ID3), C4.5 and C5.0, Chi-squared

Automatic Interaction Detection (CHAID) , Decision Stump,

M5, Conditional Decision Trees and so forth.

ISO 9001:2008 Certified Journal

|

Page 896

International Research Journal of Engineering and Technology (IRJET)

e-ISSN: 2395-0056

Volume: 06 Issue: 09 | Sep 2019

p-ISSN: 2395-0072

www.irjet.net

5. Bayesian Algorithms

10. Deep Learning Algorithms

A gathering of ML calculations utilize Bayes' Theorem to

take care of arrangement and relapse issues.

These are increasingly modernized renditions of ANNs

that benefit from the lavish supply of information today.

Examples: Naive Bayes, Gaussian Naive Bayes, Multinomial

Naive Bayes, Averaged One-Dependence Estimators

(AODE), Bayesian Belief Network (BBN), Bayesian

Network (BN) and so forth.

They are utilizes larger neural systems to tackle semidirected issues where real segment of a proliferate

information is unlabelled or not grouped. Models: Deep

Boltzmann Machine (DBM), Deep Belief Networks (DBN),

Convolutional Neural Network (CNN), Stacked AutoEncoders and so forth.

6. Support Vector Machine (SVM)

SVM is so prevalent a ML strategy that it tends to be its

very own gathering. It uses an isolating hyper plane or a

choice plane to demarcate choice limits among a lot of

information points classified with various marks. It is a

carefully regulated grouping calculation. As such, the

calculation builds up an ideal hyper plane using input

information or preparing information and this choice

plane in turns categories new models. In light of the

portion being used, SVM can perform both straight and

nonlinear arrangement.

7. Clustering Algorithms

Grouping is worried about utilizing instilled design in

datasets to arrange and name the information accordingly

.Examples: K-Means, K-Medians, Affinity Propagation,

Spectral Clustering, Ward progressive bunching,

Agglomerative grouping. DBSCAN, Gaussian Mixtures,

Birch, Mean Shift, Expectation Maximization (EM) and so

forth.

8. Association Rule Learning Algorithms

Affiliation standards help discover correlation between

apparently unassociated information. They are generally

utilized by internet business sites to anticipate client

practices and future needs to elevate certain engaging

items to him. Models: Apriori calculation, Eclat calculation

and so forth.

A model dependent on the constructed and activities of

real neural systems of people or animals. ANNs are viewed

as non-straight models as it attempts to find complex

relationship among information and yield information.

However, it draws test from information as opposed to

thinking about the whole set and along these lines

diminishing expense and time. Models: Perceptron, BackPropagation, Hop-field Network, Radial Basis Function

Network (RBFN) and so on.

|

Impact Factor value: 7.34

Dimensionality decrease is normally utilized to lessen a

bigger informational collection to its most discriminative

segments to contain applicable data and portray itwith less

highlights. This gives a legitimate representation for

information with various highlights or of high

dimensionality and aides in actualizing regulated grouping

more efficiently. Examples: Principal Component Analysis

(PCA), Principal Component Regression (PCR), Partial

Least Squares Regression (PLSR), Sammon Mapping,

Multidimensional Scaling (MDS), Projection Pursuit, Linear

Discriminant Analysis (LDA), Mixture Discriminant

Analysis (MDA), Quadratic Discriminant Analysis (QDA),

Flexible Discriminant Analysis (FDA) and so forth.

12. Ensemble Algorithms

The primary reason for an outfit strategy is to coordinate

the projections of a few more fragile estimators that are

separately prepared so as to lift up or upgrade

generalisability or vigor over a solitary estimator. The

sorts of students and the way to fuse them is painstakingly

picked as to amplify the exactness. Models: Boosting,

Bootstrapped Aggregation (Bagging), AdaBoost, Stacked

Generalization (mixing), Gradient Boosting Machines

(GBM), Gradient Boosted Regression Trees (GBRT),

Random Forest, Extremely Randomized Trees and so on.

IV. MEASURING AND COMPARING PERFORMANCES OF

POPULAR ML ALGORITHMS

9. Artificial Neural Network (ANN) Algorithms

© 2019, IRJET

11. Dimensionality Reduction Algorithms

|

Despite the fact that different scientists have added to ML

and various calculations and strategies have been

presented as referenced before, in the event that it is

firmly contemplated the vast majority of the handy ML

approach incorporates three principle administered

calculation or their variation. These three are in particular,

Naive Bayes, Support Vector Machine and Decision Tree.

Lion's share of specialists have used the idea of these

three, be it straightforwardly or with a boosting

calculation to improve the proficiency further. These three

ISO 9001:2008 Certified Journal

|

Page 897

International Research Journal of Engineering and Technology (IRJET)

e-ISSN: 2395-0056

Volume: 06 Issue: 09 | Sep 2019

p-ISSN: 2395-0072

www.irjet.net

calculations are talked about quickly in the accompanying

area.

A. NAIVE BAYES CLASSIFIER

It is a regulated arrangement method developed utilizing

Bayes' Theorem of restrictive likelihood with a 'Guileless'

supposition that each pair of highlight is commonly

autonomous. That is, in less difficult words, nearness of an

element isn't affected by nearness of another using any

and all means. Regardless of this over-rearranged

suspicion, NB classifiers performed very well in numerous

down to earth circumstances, as in content

characterization and spam recognition. Just a modest

quantity of preparing information is need to gauge certain

parameters. Alongside, NB classifiers have impressively

outflanked even exceedingly propelled characterization

strategies.

B. SUPPORT VECTOR MACHINE

SVM, another directed characterization calculation

proposed by Vapnik in 1960s have as of late pulled in a

noteworthy consideration of researchers. The basic

geometrical explanation of this methodology includes

determining an ideal isolating plane or hyper plane that

isolates the two classes or bunches of information focuses

legitimately and is equidistant from them two. SVM was

defined at first for direct dispersion of information focuses.

Afterward, the bit capacity was acquainted with handle

non-direct pieces of information also.

C. DECISION TREE

A grouping tree, prevalently known as choice tree is one of

the best managed learning calculation. It develops a chart

or tree that utilizes spreading strategy to show each

probable result of a choice. In a choice tree portrayal, each

inward hub tests a component, each branch compares to

result of the parent hub and each leaf at long last allots the

class mark. To characterize an example, a top-down

methodology is connected beginning at the base of the

tree. For a specific element or hub, the branch concurring

to the estimation of the information point for that trait is

considered till a leaf is come to or a mark is chosen.

Presently, the exhibitions of these three were generally

contrasted utilizing a lot of tweets and names positive,

negative and nonpartisan. The crude tweets were taken

from Sentiment140 informational collection. At that point

those are pre-prepared and named utilizing a python

program. Every one of these classifier were presented to

same information. Same calculation of highlight

determination, dimensionality decrease and k-crease

© 2019, IRJET

|

Impact Factor value: 7.34

|

approval were utilized for every situation. The calculations

were looked at dependent on the preparation time,

forecast time and precision of the expectation. The trial

result is given underneath.

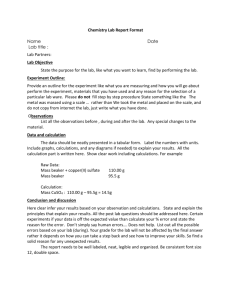

Table - 1: Comparison between Gaussian NB, SVM and

Decision Tree

Be that as it may, effectiveness of a calculation to some

degree relies upon the informational index and the area it

is connected to. Under specific conditions, a ML

calculations may beat the other.

V. APPLICATIONS

One clear indication of progression in ML is its significant

genuine applications, some of which are quickly portrayed

heredity is to be noticed that until 1985 there was no

signifiant business uses of ML calculations.

A. SPEECH RECOGNITION

All present discourse acknowledgment frameworks

accessible in the market use AI ways to deal with train the

framework for better exactness. By and by, the majority of

such frameworks actualize learning in two unmistakable

stages: pre-shipping speaker-free preparing and postshipping speaker-subordinate preparing.

B. COMPUTER VISION.

Dominant part of ongoing vision frameworks, e.g., facial

acknowledgment virtual products, frameworks equipped

for programmed characterization tiny pictures of cells,

utilize AI approaches for better precision. For instance, the

US Post Office utilizes a PC vision framework with a

penmanship analyser in this manner prepared to sort

letters with transcribed locations naturally with an

exactness level as high as 85%.

C. BIO-SURVEILLANCE

Several government activities to follow plausible flare-ups

of diseasesuses ML calculations. Consider the RODS

ISO 9001:2008 Certified Journal

|

Page 898

International Research Journal of Engineering and Technology (IRJET)

e-ISSN: 2395-0056

Volume: 06 Issue: 09 | Sep 2019

p-ISSN: 2395-0072

www.irjet.net

venture in western Pennsylvania. This undertaking gathers

affirmations reports to crisis rooms in the emergency

clinics there, and the a ML programming framework is

prepared utilizing the profiles of conceded patients in

request to recognize distorted manifestations, their

examples and areal circulation. Research is continuous to

incorporate some extra information in the framework, as

over-the-counter meds' buy history to give more training

data. Multifaceted nature of this sort of mind boggling and

dynamic informational collections can be taken care of

effectively utilizing robotized learning strategies as it

were.

D. ROBOT OR AUTOMATION CONTROL

ML strategies are to a great extent utilized in robot and

computerized frameworks. For instance, consider the

utilization of ML to get control strategies for stable flight

and aerobatics of helicopter. Oneself driving autos created

by Google usesML to prepare from gathered landscape

information.

E. EMPIRICAL SCIENCE EXPERIMENTS

An enormous gathering information serious science

controls use ML techniques in a few of it looks into. For

instance, ML is being actualized in hereditary qualities, to

recognize unordinary heavenly items in cosmology, and in

Neuroscience and mental examination.

The other little scale yet significant use of ML includes

spam separating, extortion discovery, point ID and

prescient examination (e.g., climate conjecture, securities

exchange forecast, showcase study and so forth.).

VI. FUTURE SCOPE

AI is explore territory that has pulled in a great deal of

splendid personalities and it can possibly unveil further.

Be that as it may, the three most significant future subissues are picked to be talked about here.

A. EXPLAINING HUMAN LEARNING

|

Impact Factor value: 7.34

B. PROGRAMMING LANGUAGES CONTAINING MACHINE

LEARNING PRIMITIVES

In majority of uses, ML calculations are consolidated with

physically coded programs as part of an application

programming. The need of another programming language

that is independent to help physically composed

subroutines just as those defined as "to be scholarly." It

could enable the coder to define a set of data sources

yields of each "to be educated" program and opt for a

calculation from the gathering of fundamental learning

methods already conferred in the language. Programming

dialects like Python (Sckit-learn), R and so on previously

utilizing this idea in littler degree. Be that as it may, an

interesting new inquiry is raised as to develop a

modeltodefinerelevant learning background for every

subroutine labeled as "to be picked up", timing, and

security in instance of anyunforeseenmodification to the

program's function.

C. PERCEPTION

A summed up idea of PC perception that can interface ML

calculations which are used in various type of PC

observation today including however not restricted to

exceedingly propelled vision, discourse acknowledgment

and so on., is another potential research territory. One idea

provoking problem is the incorporation of different senses

(e.g., locate, hear, contact and so on) to set up a framework

which utilize self-directed figuring out how to assess one

tangible knowledge using the others. Inquires about in

formative brain science have noted progressively

compelling learning in humanswhenvarious input

modalities are provided, and examines on co-preparing

techniques insinuatesimilar results.

VII. CONCLUSIONS

A referenced before, AI speculations have been perceived

fitting to comprehend features of learning in people and

creatures. Fortification learning calculations gauge the

dopaminergic neurones initiated exercises in creatures

during remuneration based learning with astonishing

precision. ML calculations for revealing sporadic

delineations of normally showing up pictures foresee

visual highlights recognized in creatures' underlying visual

cortex. All things considered, the significant drivers in

human or creature learning like incitement, ghastliness,

© 2019, IRJET

earnestness, hunger, instinctual activities and learning by

experimentation over various time scales, are not yet

considered in ML calculations. This a potential chance to

find a progressively summed up idea of discovering that

entails both creatures and machine.

|

The foremost target of ML scientists is to plan

progressively productive (as far as both time and

space)and down to earth broadly useful learning

techniques that can perform better over a boundless area.

With regards to ML, the productivity with which a strategy

uses information assets that is additionally a significant

exhibition worldview alongside reality unpredictability.

Higher precision of expectation and humanly interpretable

forecast principles are additionally of high significance.

ISO 9001:2008 Certified Journal

|

Page 899

International Research Journal of Engineering and Technology (IRJET)

e-ISSN: 2395-0056

Volume: 06 Issue: 09 | Sep 2019

p-ISSN: 2395-0072

www.irjet.net

Being totally information driven and being able to analyze

a lot of information in littler interims of time, ML

calculations has an edge over manual or direct

programming.

Additionally

they

are

frequently

increasingly exact and not inclined to human inclination.

Think about the accompanying situations:

4. E. Osuna, R. Freund, and F. Girosi. Support vector

machines: training and applications. AI Memo 1602, MIT,

May 1997.

Improvement of a product to explain discernment

assignments utilizing sensors, similar to discourse

acknowledgment, PC vision and so on. It is simple for

anybody to name a picture of a letter by the letter set it

indicates, however structuring a calculation to play out

this assignment is troublesome.

6. C.J.C. Burges. A tutorial on support vector machines for

pattern recognition. Data Mining and Knowledge

Discovery, 2(2):1-47, 1998.

Customisation of a product as indicated by the earth it is

conveyed to. Consider, discourse acknowledgment

programming projects that must be modified by the

requirements of the client. Like internet business locales

that alters the items shown by clients or email peruser that

empowers spam identification according to client

inclinations. Direct programming does not have the

capacity to adjust when presented to various condition.

ML provides a programming the adaptability and flexibility

when vital. Regardless of some application (e.g., to

compose network duplication programs) where ML may

neglect to be useful, with increment of information assets

and expanding request in customized customisable

programming, ML will flourish in not so distant future.

Other than programming advancement, ML will most likely

however help reform the general outlook of Computer

Science. By changing the characterizing question from

"how to program a PC" to "how to empower it to program

itself," ML convents the improvement of devices that are

self-observing, self-diagnosing and self-fixing, and the uses

of the information stream accessible inside the program

instead of simply preparing it. In like manner, it will help

change Statistical principles, by providing more

computational position. Clearly, the two Statistics and

Computer Science will likewise adorn ML as they create

and contribute more advanced theories to change the

method for learning.

References

1. T. M. Mitchell, Machine Learning, McGraw-Hill

International, 1997.

5. V. Vapnik. Statistical Learning Theory. John Wiley &

Sons, 1998.

7. Taiwo Oladipupo Ayodele, Types of Machine Learning

Algorithms, New Advances in Machine Learning, Yagang

Zhang (Ed.), InTech, 2010

8. T. Mitchell, W. Cohen, E. Hruschka, P. Talukdar, J.

Betteridge, A. Carlson, B. Dalvi, M. Gardner, B. Kisiel, J.

Krishnamurthy, N. Lao, K. Mazaitis, T. Mohamed, N.

Nakashole, E. Platanios,A. Ritter, M. Samadi, B. Settles, R.

Wang, D. Wijaya, A. Gupta, X. Chen, A. Saparov, M. Greaves,

J. Welling, Never-Ending Learning, Proceedings of the

Twenty-Ninth AAAI Conference on Artificial Intelligence,

2014

9. Pedregosa et al.,Scikit-learn: Machine Learning in

Python, JMLR 12, pp. 2825-2830, 2011.

10. Wang, J. and Jebara, T. and Chang, S.-F. Semi-supervised

learning using greedy max- cut.Journal of Machine

Learning Research, Volume 14(1), 771-800 2013

11. Chapelle, O. and Sindhwani, V. and Keerthi, S. S.

Optimization Techniques for Semi- Supervised Support

Vector Machines, Journal of Machine Learning Research,

Volume 9, 203–233, 2013

12. J. Baxter. A model of inductive bias learning. Journal of

Artificial Intelligence Research, 12:149–198, 2000.

13. S. Ben-David and R. Schuller. Exploiting task

relatedness for multiple task learning. In Conference on

Learning Theory, 2003.

14. W. Dai, G. Xue, Q. Yang, and Y. Yu, Transferring Naive

Bayes classifiers for text classification.AAAI Conference on

Artificial Intelligence, 2007.

2. T.M. Mitchel, The Discipline of Machine Learning, CMUML-06-108, 2006

15. H. Hlynsson. Transfer learning using the minimum

description length principle with a decision tree

application. Master’s thesis, University of Amsterdam,

2007.

3. N. Cristianini and J. Shawe-Taylor. An Introduction to

Support Vector Machines. Cambridge University Press,

2000.

16. Z. Marx, M. Rosenstein, L. Kaelbling, and T. Dietterich.

Transfer learning with an ensemble of background tasks.

In NIPS Workshop on Transfer Learning, 2005.

© 2019, IRJET

|

Impact Factor value: 7.34

|

ISO 9001:2008 Certified Journal

|

Page 900

International Research Journal of Engineering and Technology (IRJET)

e-ISSN: 2395-0056

Volume: 06 Issue: 09 | Sep 2019

p-ISSN: 2395-0072

www.irjet.net

17. R Conway and D Strip, Selective partial access to a

database, In Proceedings of ACM Annual Conference, 85 89, 1976

18. P D Stachour and B M Thuraisingham Design of LDV A

multilevel secure relational databasemanagement system,

IEEE Trans. Knowledge and Data Eng., Volume 2, Issue 2,

190 - 209, 1990

19. R Oppliger, Internet security: Firewalls and beyond,

Comm. ACM, Volume 40, Issue 5, 92 -102, 1997

20. Rakesh Agrawal, Ramakrishnan Srikant, Privacy

Preserving Data Mining, SIGMOD '00 Proceedings of the

2000 ACM SIGMOD international conference on

Management of data, Volume 29 Issue 2,Pages 439-450,

2000

21. A. Carlson, J. Betteridge, B.Kisiel, B.Settles,E.

R.Hruschka Jr, and T. M. Mitchell, Toward an architecture

for never-ending language learning, AAAI, volume 5, 3,

2010

22. X. Chen, A. Shrivastava, and A. Gupta, Neil: Extracting

visual knowledge from web data, In Proceedings of ICCV,

2013.

23. P. Donmezand J. G. Carbonell, Proactive learning: costsensitive active learning with multiple imperfect oracles.

In Proceedings of the 17th ACM conference on Information and knowledge management, 619–628. ACM,

2008

24. T. M.Mitchell, J. Allen, P. Chalasani, J. Cheng, O. Etzioni,

M. N. Ringuetteand J. C. Schlimmer, Theo: A framework for

self-improving systems, Arch. for Intelli- gence 323–356,

1991

25. Gregory, P. A. and Gail, A. C. Self-supervised ARTMAP

Neural Networks, Volume 23, 265-282, 2010

26. Cour, T. and Sapp, B. and Taskar, B. Learning from

partial labels, Journal of Machine Learning Research,

Volume 12, 1501-1536 2012

27. Adankon, M. and Cheriet, M. Genetic algorithm-based

training for semi-supervised SVM, Neural Computing and

Applications , Volume 19(8), 1197-1206, 2010

© 2019, IRJET

|

Impact Factor value: 7.34

|

ISO 9001:2008 Certified Journal

|

Page 901