Lecture 2: Principles of Photography & Imaging

© 2019 Dr. Sarhat M Adam

BSc in Civil Engineering

MSc in Geodetic Surveying

PhD in Engineering Surveying & Space Geodesy

Note – Figures and materials in these slides may be the author's own work or may be drawn from internet websites, materials and slides by staff of Duhok or Nottingham universities, the author's own knowledge, or various internet image sources and books.

Introduction

◼ Photography means “drawing with light.”

◼ The idea originated long before cameras.

◼ Ancient Arabs observed it in their tents.

◼ Children observe it in their childhood.

Fig01

Introduction

◼ In 1700, French artists used the idea shown in the figure to draw objects.

◼ In 1839, the Frenchman Louis Daguerre made a pinhole box that captured a photograph without an artist.

◼ Many improvements have been made since, but the basic principles of image capture have remained essentially unchanged.

◼ The most recent innovation in imaging technology is the digital camera.

Fundamentals Optics

◼ Both film and digital cameras depend on optical elements, chiefly lenses.

◼ Optics has two branches: physical optics and geometric optics.

Physical Optics

◼ Light travels through a transmitting medium such as air as a series of electromagnetic waves emanating from a point source.

◼ The waves can be visualized as a group of concentric circles expanding or radiating away from a light source.

Fig02

Fundamentals Optics

◼ A light wave has frequency, amplitude, and wavelength.

◼ Frequency: number of waves that pass a point in a unit of time.

◼ Amplitude: height of the crest or depth of the trough.

◼ Wavelength: distance covered by one full cycle.

◼ The three quantities are related by $V = f\lambda$, where

◼ V: velocity, in m/s

◼ f: frequency, in cycles/s or hertz

◼ λ: wavelength, in m

Fig03

◼ Light moves at $2.99792458 \times 10^{8}$ m/s in a vacuum.
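The relation between velocity, frequency, and wavelength can be checked numerically. The sketch below is only an illustration: the function names and the green-light frequency of 5.45 × 10^14 Hz are assumptions chosen for the example, not values from the slides.

```python
# Speed of light in a vacuum (m/s), as quoted in the lecture.
C_VACUUM = 2.99792458e8


def wavelength_from_frequency(frequency_hz, velocity_m_s=C_VACUUM):
    """Rearrange V = f * lambda to lambda = V / f."""
    return velocity_m_s / frequency_hz


def frequency_from_wavelength(wavelength_m, velocity_m_s=C_VACUUM):
    """Rearrange V = f * lambda to f = V / lambda."""
    return velocity_m_s / wavelength_m


# Assumed illustrative frequency for green light (not from the slides).
green_frequency_hz = 5.45e14
green_wavelength_m = wavelength_from_frequency(green_frequency_hz)

print(f"wavelength = {green_wavelength_m * 1e9:.0f} nm")
```

With the assumed frequency, the wavelength comes out at roughly 550 nm, which lies in the visible range.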

Fundamentals Optics

Fig04

Bundle of rays emanating from a point source in accordance with the concept of geometric optics.

Refraction of light rays

Fig05

• n and n′ are the refractive indices of the first and second media, respectively.

• Angles φ & φ′ are measured from the normal to the incident and refracted rays, respectively.
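The quantities defined above are tied together by the law of refraction (Snell's law). The slide text itself does not spell the equation out, so it is stated here, using the same symbols, as a reminder rather than as a quotation from the lecture:

```latex
n \sin\varphi = n' \sin\varphi'
```

When light passes into a denser medium (n′ > n), the refracted ray bends toward the normal, so φ′ < φ.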

Fundamentals Optics

◼ Light rays may change directions by reflection.

◼ When a light ray strikes a highly polished metal mirror, it is reflected so that the angle of reflection φ′′ = incidence angle φ.

Fig06

(a) First-surface mirror demonstrating the angle of incidence φ and angle of reflection φ′′. (b) Back-surfaced mirror.

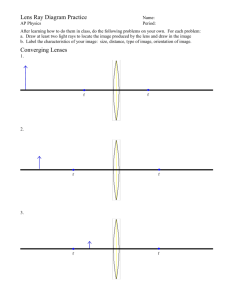

Lenses

◼ A simple lens is a piece of optical glass with one or two spherical surfaces.

◼ Its function is to gather light rays and bring them to focus on the opposite side of the lens.

◼ The simplest device that performs this function is a tiny pinhole, which in theory allows a single light ray from each object point to pass.

◼ The tiny hole of diameter d1 of the pinhole camera illustrated in the F-shaped figure produces an inverted image of the object.

◼ When an object is illuminated, each point in the object reflects a bundle of light rays.

◼ An infinite number of image points, focused in the image plane, together form the image of the object.

Lenses

Fig07

Camera vs. eye (figure labels: iris, retina)

• The iris limits the amount of light entering our eyes.

• In a camera, this role is played by the diaphragm.

Lenses

◼ The optical axis is the line joining the centers of curvature of the spherical surfaces of the lens (points O1 and O2).

◼ R1 and R2 are the radii of the lens surfaces.

◼ Rays parallel to the optical axis come to focus at F, the focal point of the lens.

◼ f is the focal length, the distance from F to the center of the lens.

◼ A plane perpendicular to the optical axis passing through the focal point is called the plane of infinite focus.

Fig08

Lenses

◼ A single ray of light traveling through air (n = 1.0003) enters a convex glass lens (n′ = 1.52) having a radius of 5.00 centimeters (cm), as shown in the figure. If the light ray is parallel to and 1.00 cm above the optical axis of the lens, what are the angles of incidence ϕ and refraction ϕ′ for the air-to-glass interface?

Fig09

Lenses

◼ Answer

Fig10
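The answer can be reproduced numerically. The sketch below assumes the usual geometry for this kind of problem: the surface normal passes through the center of curvature, so for a ray parallel to the axis sin ϕ = height / radius, and the refraction angle then follows from Snell's law, n sin ϕ = n′ sin ϕ′. The variable names are chosen for this example only.

```python
import math

n_air = 1.0003     # refractive index of the first medium (air)
n_glass = 1.52     # refractive index of the second medium (glass)
radius_cm = 5.00   # radius of curvature of the lens surface
height_cm = 1.00   # height of the incoming ray above the optical axis

# The normal at the point of incidence points to the center of curvature,
# so a ray parallel to the axis meets it at an angle with sine = height / radius.
phi = math.asin(height_cm / radius_cm)

# Snell's law: n * sin(phi) = n' * sin(phi').
phi_refracted = math.asin(n_air * math.sin(phi) / n_glass)

print(f"angle of incidence  = {math.degrees(phi):.3f} deg")
print(f"angle of refraction = {math.degrees(phi_refracted):.3f} deg")
```

Under these assumptions the incidence angle is about 11.54° and the refraction angle about 7.56°.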

Lenses

◼ Equation 1 defines the relationship between the object distance (o), the focal length (f), and the image distance (i).

◼ The focal length is a characteristic of the thin lens, and it specifies the distance at which parallel rays come to a focus.

$$\frac{1}{o} + \frac{1}{i} = \frac{1}{f} \qquad (1)$$

Fig11

◼ For an object at infinity, 1/o becomes negligibly small, so the image distance equals the focal length.

Source: https://www.physicsclassroom.com/class/refrn/Lesson-5/Converging-Lenses-Object-Image-Relations

Lenses

◼ Example

Find the image distance for an object distance of 50.0 m and a focal length of 50.0 cm.

Solution
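The solution follows from the lens formula 1/o + 1/i = 1/f, solved for i. The short sketch below works the numbers; the function name is an assumption made for this example, and both distances are converted to meters before use.

```python
def image_distance(object_distance, focal_length):
    """Solve 1/o + 1/i = 1/f for the image distance i (same units as inputs)."""
    return 1.0 / (1.0 / focal_length - 1.0 / object_distance)


object_distance_m = 50.0   # o = 50.0 m
focal_length_m = 0.500     # f = 50.0 cm, expressed in meters

image_distance_m = image_distance(object_distance_m, focal_length_m)
print(f"image distance = {image_distance_m * 100:.1f} cm")
```

The image distance is about 50.5 cm, only slightly longer than the focal length, because the object is far away compared with f.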

Thick Lenses

◼ The preceding discussion assumed the lens thickness to be negligible.

◼ With thick lenses, this assumption is no longer valid.

◼ Two nodal points must be defined for thick lenses.

◼ They are called the incident nodal point and the emergent nodal point.

Fig12

Fig13

Aerial Camera with 15 unit lenses

Thick Lenses

◼ The focal length (f) of a thick lens is the distance from the emergent nodal point N′ to the plane of infinite focus.

◼ It is impossible for a single lens to produce a perfect image.

◼ The image will always be somewhat blurred and geometrically distorted.

◼ The imperfections that cause blurring are called aberrations.

◼ Additional lens elements can be used to correct for aberrations.

◼ Lens distortions do not degrade image quality but deteriorate the geometric quality (or positional accuracy) of the image.

◼ Lens distortions are either symmetric radial or decentering.

◼ Both occur if light rays are bent, or change directions.

Lenses

◼ Angle of coverage: the angle formed between the camera’s lens and the width of the area seen on the photograph.

◼ f = distance between the lens and the focal plane.

◼ Wide-angle lenses (short f) excessively exaggerate the displacement of tall objects and are best suited to flat terrain.
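The link between focal length and angle of coverage can be illustrated with simple trigonometry: for a format of width w at distance f behind the lens, the angle subtended across the width is 2·atan(w / 2f). The sketch below is a hedged illustration; the 9-inch format width and the 6-inch and 3.5-inch focal lengths are assumed example values, and the angle is taken across the format width rather than the diagonal.

```python
import math


def angle_of_coverage_deg(format_width, focal_length):
    """Angle subtended by the format width at the lens, in degrees.

    Both arguments must be in the same unit.
    """
    return math.degrees(2.0 * math.atan(format_width / (2.0 * focal_length)))


format_width_in = 9.0  # assumed format width (inches)

for focal_length_in in (6.0, 3.5):  # assumed example focal lengths (inches)
    angle = angle_of_coverage_deg(format_width_in, focal_length_in)
    print(f"f = {focal_length_in} in -> angle of coverage = {angle:.1f} deg")
```

The shorter focal length gives the wider angle, which is why short-f lenses are described as wide-angle.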

Single lens Camera

◼ The single-lens camera is the most important fundamental instrument in photogrammetry.

◼ Its geometry is depicted in Fig01 and is similar to that of the pinhole camera in Fig07.

◼ The size of the aperture (d2) is much larger than a pinhole.

◼ Object and image distances are governed by the lens formula.

◼ When photographing objects at great distances, the term 1/o approaches zero and the image distance i is then equal to f.

◼ In aerial photography, the object distance o is very large relative to the image distance i; aerial cameras are therefore manufactured with their focus fixed for infinity.

◼ This is accomplished by fixing the image distance equal to the focal length of the camera lens.

Fundamentals Optics

Illuminance

Fundamentals Optics

Shutter & Aperture

◼ Total exposure is the product of illuminance and exposure time; its unit is the meter-candle-second.

◼ The f-stop setting is varied by changing the aperture, which is controlled by the diaphragm.

◼ As the diameter of the aperture increases, enabling faster exposures, the depth of field (DoF) decreases and lens distortions increase.

◼ To maximize DoF, use a slow shutter speed and a large f-stop setting.

◼ To photograph rapidly moving objects, a fast shutter speed is essential.

◼ If the aperture area is doubled, total exposure is doubled.

◼ If shutter time is halved and aperture area is doubled, total exposure remains unchanged.

Fundamentals Optics

Shutter & Aperture

◼ Nominal f-stops: 1, 1.4, 2.0, 2.8, 4.0, 5.6, 8.0, 11, 16, 22, and 32.

◼ At f-1, the aperture diameter equals the lens focal length (f/d = 1.0).

◼ f-1.4 halves the aperture area relative to f-1.

◼ Each nominal f-stop in the list halves the aperture area of the preceding one.
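The halving rule explains where the nominal series comes from: since the f-stop is f/d and the aperture area is proportional to d², halving the area multiplies the f-stop by √2. The sketch below generates the exact series under that assumption; the function names are made up for this example, and the nominal values printed on cameras are rounded versions of these numbers.

```python
import math


def f_stop_series(count):
    """Exact f-stops starting at f-1, each multiplied by sqrt(2)."""
    return [math.sqrt(2) ** k for k in range(count)]


def relative_aperture_area(f_stop):
    """Aperture area relative to f-1 for a fixed focal length.

    Area is proportional to d**2 and d = f / f_stop, so area ~ 1 / f_stop**2.
    """
    return 1.0 / f_stop ** 2


series = f_stop_series(11)
for stop in series:
    print(f"f-{stop:.1f}: relative area = {relative_aperture_area(stop):.5f}")
```

The exact values (1, 1.41, 2, 2.83, 4, 5.66, 8, 11.3, 16, 22.6, 32) match the nominal list after rounding.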


Fundamentals Optics

Shutter & Aperture

In addition to manual mode, a digital camera has:

1. Fully automatic mode, where both f-stop and shutter speed are appropriately selected.

2. Aperture priority mode, where the user inputs a fixed f-stop and the camera selects the appropriate shutter speed.

3. Shutter priority mode, where the user inputs a fixed shutter speed and the camera selects the appropriate f-stop.

4. “Program” mode, where the camera automatically chooses the aperture and the shutter speed based on the amount of light.


Fundamentals Optics

Example

Suppose that a photograph is optimally exposed with an f-stop setting of f-4 and a shutter speed of 1/500 s. What is the correct f-stop setting if the shutter speed is changed to 1/1000 s?

Solution

Total exposure is the product of diaphragm area and shutter time. This product must remain the same for the 1/1000-s shutter speed as it was for the 1/500-s shutter speed, or

$A_1 t_1 = A_2 t_2$

◼ Let $d_1$ and $d_2$ be the diaphragm diameters for the 1/500-s and 1/1000-s shutter times, respectively.

Fundamentals Optics

Example

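◼ Then the respective diaphragm areas are $A_1 = \pi d_1^2 / 4$ and $A_2 = \pi d_2^2 / 4$.

◼ Keeping the product of area and time constant gives $d_2^2 = d_1^2 \, (t_1 / t_2) = 2 d_1^2$, so $d_2 = \sqrt{2}\, d_1$.

◼ Since f-4 means $d_1 = f/4$, the new setting is $f / d_2 = 4 / \sqrt{2} \approx 2.8$, that is, f-2.8.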
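The same reasoning can be written as a small helper for any change of shutter time. It is a sketch under the assumption stated in the example, namely that total exposure is aperture area times shutter time and must stay constant; because area is proportional to 1/N² for f-stop N, the new f-stop is N₂ = N₁·√(t₂/t₁). The function name is hypothetical.

```python
import math


def equivalent_f_stop(f_stop_1, time_1_s, time_2_s):
    """f-stop that keeps total exposure (area x time) unchanged.

    Aperture area is proportional to 1 / f_stop**2, so
    time_1 / f_stop_1**2 = time_2 / f_stop_2**2.
    """
    return f_stop_1 * math.sqrt(time_2_s / time_1_s)


new_f_stop = equivalent_f_stop(4.0, 1 / 500, 1 / 1000)
print(f"new setting: f-{new_f_stop:.1f}")
```

For the values in the example this gives f-2.8, one full stop wider than f-4, which doubles the aperture area to compensate for halving the shutter time.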

Fundamentals Optics

Digital Images

◼ A digital image is divided into a fine grid of “picture elements,” or pixels.

◼ It consists of an array of integers, referred to as digital numbers (gray levels, or degrees of darkness).

◼ Many thousands or millions of these pixels are present in one image.

◼ Numbers in the range 0 to 255 can be accommodated by 1 byte.

◼ Each byte consists of 8 binary digits, or bits.

◼ An 8-bit value can store $2^8$, or 256, values.

◼ For example, an image of 18 × 11 pixels at 1 byte per pixel occupies 18 × 11 = 198 bytes.

Fundamentals Optics

Digital Images

◼ Discrete sampling is the process by which a digital image is produced.

◼ In this process, a small image area (a pixel) is “sensed” to determine the amount of electromagnetic energy it gives off.

Figure label: GSD (ground sample distance)

◼ Discrete sampling has two fundamental characteristics: geometric resolution and radiometric resolution.

Geometric (Spatial) Resolution

◼ Physical size of an individual pixel.

◼ Smaller pixel sizes correspond to higher geometric resolution.

Figure panels: 160 × 160, 80 × 80, 40 × 40, 20 × 20, and 10 × 10 pixels.
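The effect of coarser geometric resolution, as in the 160 × 160 down to 10 × 10 sequence, can be imitated by merging neighboring pixels. The sketch below halves the resolution by averaging each 2 × 2 block of digital numbers. Block averaging is an assumption made for illustration; the slides do not say how their example images were produced, and the tiny 4 × 4 image is invented test data.

```python
def halve_resolution(image):
    """Average each 2x2 block of digital numbers into one coarser pixel.

    `image` is a list of rows; rows and columns are assumed to be even in count.
    """
    rows, cols = len(image), len(image[0])
    return [
        [
            (image[r][c] + image[r][c + 1]
             + image[r + 1][c] + image[r + 1][c + 1]) // 4
            for c in range(0, cols - 1, 2)
        ]
        for r in range(0, rows - 1, 2)
    ]


# Invented 4x4 image of 8-bit digital numbers.
image = [
    [10, 20, 30, 40],
    [10, 20, 30, 40],
    [50, 60, 70, 80],
    [50, 60, 70, 80],
]

coarse = halve_resolution(image)
print(coarse)  # [[15, 35], [55, 75]]
```

Each application doubles the pixel size and quarters the number of pixels, so four applications would take a 160 × 160 image to 10 × 10.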

Fundamentals Optics

Digital Images

Radiometric Resolution

◼ Level of quantization.

◼ Discrete quantization levels of 256, 32, 8, and 2 correspond to 8 bits, 5 bits, 3 bits, and 1 bit, respectively.
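The number of quantization levels is 2 raised to the number of bits, so 256, 32, 8, and 2 levels need 8, 5, 3, and 1 bits. The sketch below checks that relation and shows one simple way to reduce an 8-bit digital number to fewer bits by discarding low-order bits. The function names and the bit-shift approach are assumptions for illustration, not the lecture's method.

```python
def quantization_levels(bits):
    """Number of distinct digital numbers that `bits` binary digits can hold."""
    return 2 ** bits


def requantize(digital_number, bits):
    """Reduce an 8-bit digital number (0-255) to `bits` bits.

    Low-order bits are dropped, so the result lies in 0 .. 2**bits - 1.
    """
    return digital_number >> (8 - bits)


for bits in (8, 5, 3, 1):
    print(f"{bits} bit(s): {quantization_levels(bits)} levels, "
          f"DN 200 becomes {requantize(200, bits)}")
```

With only 1 bit, every pixel is either 0 or 1, which is why the coarsest example image appears purely black and white.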

Spectral resolution

◼ The sun emits electromagnetic energy; the entire range is called the electromagnetic spectrum.

◼ Electromagnetic energy travels in sinusoidal oscillations called waves.

◼ The velocity of electromagnetic energy in a vacuum is constant: $c = f\lambda$.

◼ The visible spectrum makes up only a very small portion of the electromagnetic spectrum.


Fundamentals Optics

Digital Images

Example

A 3000-row by 3000-column satellite image has three spectral channels.

If each pixel is represented by 8 bits (1 byte) per channel, how many bytes of computer memory are required to store the image?
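The storage requirement is the product of rows, columns, channels, and bytes per sample. A minimal sketch of the arithmetic follows; the function and variable names are chosen for this example, and it ignores any file header or compression.

```python
def image_storage_bytes(rows, cols, channels, bytes_per_sample=1):
    """Uncompressed storage for a raster image, ignoring headers."""
    return rows * cols * channels * bytes_per_sample


required_bytes = image_storage_bytes(rows=3000, cols=3000, channels=3)
print(f"{required_bytes:,} bytes")  # 27,000,000 bytes
```

That is 27,000,000 bytes, or 27 megabytes when a megabyte is taken as 10^6 bytes.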

Fundamentals Optics

Lens resolution

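◼ Resolution is important in photogrammetry.

◼ The resolution, or resolving power, of a lens is its ability to show detail.

◼ It is measured by two methods: lines per millimeter (lines/mm) and the modulation transfer function (MTF).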

Fundamentals Optics

Lens DoF

◼ Depth of field (DoF) is the range of object distances that a lens can accommodate without deterioration of the image.

◼ It depends on the aperture.

◼ The shorter the focal length f, the greater the depth of field.

Camera inner parts

Aperture

Fundamentals Optics

◼ Cameras fall into two general classifications:

1. Metric cameras

2. Nonmetric cameras

Metric Camera

◼ Designed specifically for photogrammetric applications.

◼ Have fiducial marks built into their focal planes, or calibrated CCD arrays, either of which enables accurate recovery of their principal points.

◼ Stably constructed and completely calibrated (focal length, principal point, and lens distortions); the calibration values can be applied over a long period.

◼ Large-format cameras (9″ × 9″).

Nonmetric Camera

◼ For low accuracy requirements and budgets.

◼ Characterized by an adjustable principal distance, no film flattening or fiducial marks, lenses with relatively large distortions, and lens distortion values that vary with different focus settings (i.e., different principal distances).

Digital image Display

The colour of any pixel can be represented by 3D coordinates in what is known as BGR (or RGB) colour space.

If each component is quantized as an 8-bit value, R, G, and B each range from 0 to 255.

Digital image Display

◼ The intensity-hue-saturation (IHS) system, on the other hand, is more readily understood by humans.

◼ It uses cylindrical coordinates in which the height, angle, and radius represent intensity, hue, and saturation, respectively.

◼ The axis of the cylinder is the grey line, which extends from the origin to the maximum RGB value.
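The cylindrical description above can be turned into a short computation. The sketch below is one possible geometric construction, not necessarily the formulas used in the lecture. It projects the RGB point onto the grey line to get intensity, measures the distance from the grey line as saturation, and takes the angle around the grey line as hue, with 0° assumed to point toward red. The function name and the choice of reference direction are assumptions.

```python
import math


def rgb_to_ihs(r, g, b):
    """Convert RGB to cylindrical (intensity, hue in degrees, saturation).

    The cylinder axis is the grey line through (0, 0, 0) and (1, 1, 1).
    """
    # Height along the grey line: projection onto the unit vector (1,1,1)/sqrt(3).
    intensity = (r + g + b) / math.sqrt(3)

    # Coordinates in the plane perpendicular to the grey line.
    # x axis points toward red, y axis is perpendicular to it (assumed convention).
    x = (2 * r - g - b) / math.sqrt(6)
    y = (g - b) / math.sqrt(2)

    saturation = math.hypot(x, y)                 # radius: distance from grey line
    hue = math.degrees(math.atan2(y, x)) % 360.0  # angle around the grey line
    return intensity, hue, saturation


for name, rgb in [("red", (255, 0, 0)), ("green", (0, 255, 0)),
                  ("blue", (0, 0, 255)), ("grey", (128, 128, 128))]:
    i, h, s = rgb_to_ihs(*rgb)
    print(f"{name:5s}: I = {i:6.1f}, H = {h:5.1f} deg, S = {s:6.1f}")
```

With this convention red, green, and blue fall at hues of 0°, 120°, and 240°, and any grey pixel has zero saturation because it lies on the cylinder axis.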

Digital image Display

◼ Intensity is the brightness, hue is the specific mixture of wavelengths that defines the colour, and saturation is the boldness of the colour.

Hue