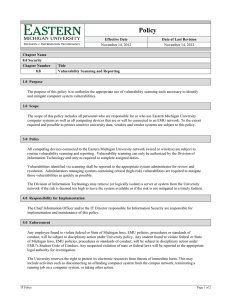

NIST Technical Guide to Information Security Testing and Assessment
