On-Chip Networks (NoCs)

Seminar on Embedded System Architecture (Prof. A. Strey)

Simone Pellegrini

simone.pellegrini@uibk.ac.at

University of Innsbruck – December 10, 2009

1 Introduction

Advances in chip manufacturing technology now allow several hardware components to be integrated into a single integrated circuit, reducing both manufacturing costs and system dimensions. A System-on-a-Chip (SoC) integrates, on a single chip, several building blocks, or IPs (Intellectual Property), such as general-purpose programmable processors, DSPs, accelerators, memory blocks, I/O blocks, etc. Recently, the exponential growth in the number of IPs employed in SoC designs has created the need for a more efficient and scalable on-chip core-to-core interconnection.

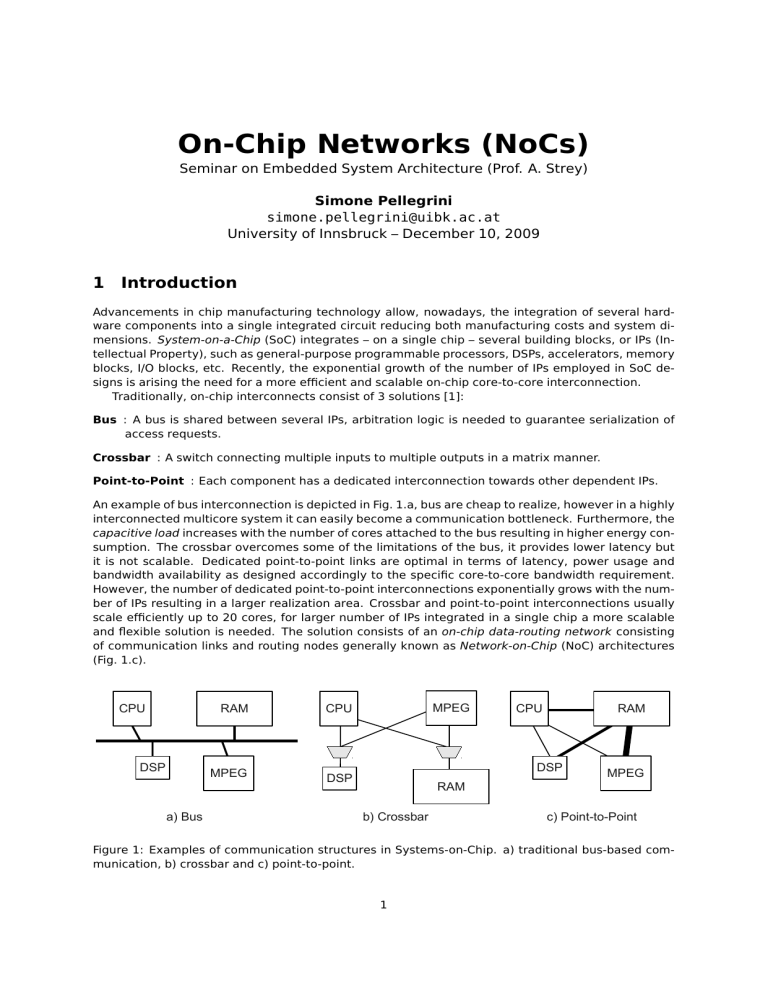

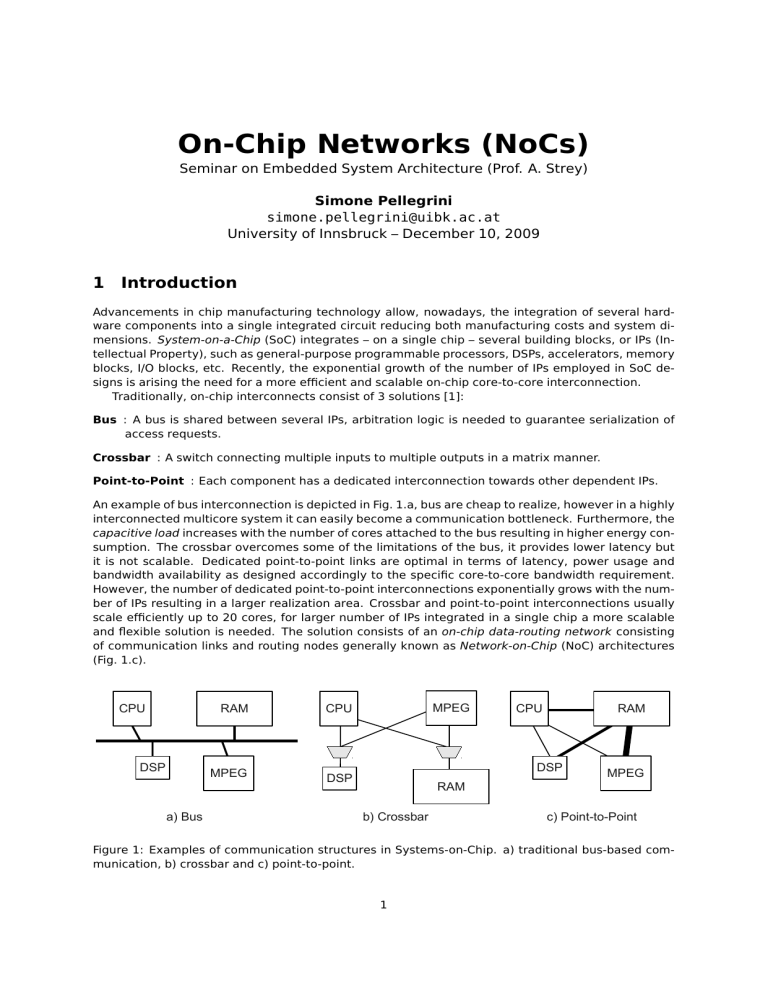

Traditionally, on-chip interconnects fall into three categories [1]:

Bus: A bus is shared between several IPs; arbitration logic is needed to guarantee serialization of access requests.

Crossbar: A switch connecting multiple inputs to multiple outputs in a matrix manner.

Point-to-Point: Each component has a dedicated interconnection towards other dependent IPs.

An example of a bus interconnection is depicted in Fig. 1.a. Buses are cheap to realize; however, in a highly interconnected multicore system a bus can easily become a communication bottleneck. Furthermore, the capacitive load increases with the number of cores attached to the bus, resulting in higher energy consumption. The crossbar overcomes some of the limitations of the bus: it provides lower latency, but it is not scalable. Dedicated point-to-point links are optimal in terms of latency, power usage and bandwidth availability, as they are designed according to the specific core-to-core bandwidth requirement. However, the number of dedicated point-to-point interconnections grows rapidly with the number of IPs (quadratically if every pair of IPs is linked), resulting in a larger realization area. Crossbar and point-to-point interconnections usually scale efficiently up to 20 cores; for a larger number of IPs integrated in a single chip, a more scalable and flexible solution is needed. That solution is an on-chip data-routing network of communication links and routing nodes, generally known as a Network-on-Chip (NoC) architecture (Fig. 1.c).
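The scaling argument can be made concrete with a small sketch. It assumes the worst case for point-to-point wiring, in which every IP has a dedicated link to every other IP, and it counts crosspoints for a square crossbar; the function names are illustrative only.

```python
def bus_links(n: int) -> int:
    """A bus is one shared medium, however many IPs are attached to it."""
    return 1


def crossbar_crosspoints(inputs: int, outputs: int) -> int:
    """A crossbar needs one crosspoint per (input, output) pair."""
    return inputs * outputs


def full_point_to_point_links(n: int) -> int:
    """Assumption: every pair of IPs gets its own dedicated link."""
    return n * (n - 1) // 2


if __name__ == "__main__":
    print("IPs  bus  crossbar  point-to-point")
    for n in (4, 8, 16, 32):
        print(n, bus_links(n), crossbar_crosspoints(n, n),
              full_point_to_point_links(n))
```

Under these assumptions the wiring cost of both the crossbar and the fully connected point-to-point scheme grows with the square of the number of IPs, while the bus stays at one shared medium and pays for it in contention.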

Figure 1: Examples of communication structures in Systems-on-Chip: a) traditional bus-based communication, b) crossbar and c) point-to-point.


2 Basic NoC Building Blocks

A NoC consists of routing nodes spread out across the chip and connected via communication links. Fig. 2 depicts the main components of a NoC:

Network Adapter: implements the interface by which cores (IP blocks) connect to the NoC. Its function is to decouple computation (the cores) from communication (the network).

Routing node: routes the data according to the chosen protocols. It implements the routing strategy.

Link: connects the nodes, providing the raw bandwidth. It may consist of one or more logical or physical channels.

Figure 2: Example of On-Chip Network.

Several NoC designs have been proposed in the literature: some aim at low latency, while others are oriented towards maximizing bandwidth. Like computer networks, a NoC is usually designed to meet application requirements, and at each level (network adapter, routing and link) several design trade-offs must be considered.

2.1 Network Adapter (NA)

The NA handles the end-to-end flow control, encapsulating the messages or transactions generated by the cores for the routing strategy of the network. At this level messages are broken into packets and, depending on the underlying network, additional routing information (such as the destination core) is encoded into a packet header. Packets are further decomposed into flits (flow control units), which in turn are divided into phits (physical units), the minimum-size datagrams that can be transmitted in a single link transaction. A flit is usually composed of 1 to 2 phits. The NA implements a Core Interface (CI) at the core side and a Network Interface (NI) at the network side. The level of decoupling introduced by the NA may vary. A high degree of decoupling allows for easy reuse of cores. On the other hand, a lower level of decoupling (a more network-aware core) has the potential to make better use of the network resources. Standard sockets can be used to implement the CI; the Open Core Protocol (OCP) and the Virtual Component Interface (VCI) are two examples widely used in SoCs.
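The message, packet, flit and phit hierarchy can be illustrated with a minimal sketch. All sizes here (4-byte flits, 2 phits per flit, 16 payload bytes per packet) and the head/body/tail flit naming are assumptions chosen for illustration; real NAs fix these values per design.

```python
from dataclasses import dataclass
from typing import List

FLIT_BYTES = 4        # assumed flit size
PHITS_PER_FLIT = 2    # the text mentions 1 to 2 phits per flit


@dataclass
class Flit:
    kind: str         # "head", "body" or "tail"
    payload: bytes


def packetize(dest: int, message: bytes, max_payload: int = 16) -> List[List[Flit]]:
    """Split a message into packets; each packet is a list of flits."""
    packets = []
    for start in range(0, len(message), max_payload):
        chunk = message[start:start + max_payload]
        # The head flit carries the routing information (destination core).
        flits = [Flit("head", dest.to_bytes(FLIT_BYTES, "big"))]
        for off in range(0, len(chunk), FLIT_BYTES):
            flits.append(Flit("body", chunk[off:off + FLIT_BYTES]))
        flits[-1].kind = "tail"   # the last data flit closes the packet
        packets.append(flits)
    return packets


def to_phits(flit: Flit) -> List[bytes]:
    """Divide one flit into the units sent in a single link transaction."""
    size = FLIT_BYTES // PHITS_PER_FLIT
    data = flit.payload.ljust(FLIT_BYTES, b"\x00")   # pad a short last flit
    return [data[i:i + size] for i in range(0, FLIT_BYTES, size)]
```

With these assumed sizes, a 40-byte message for core 3 becomes three packets of 5, 5 and 3 flits, and every flit crosses a link as two 2-byte phits.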

2.2 Network Level (routing)

The main responsibility of the network is to deliver messages from a sender IP to a receiver core. A NoC is defined by its topology and the protocol implemented by it.

2.2.1 Topology

Topology concerns the layout and connectivity of the nodes and links on the chip. Two forms of interconnection are explored in modern NoCs: regular and irregular topologies. Regular topologies are divided into three classes: k-ary n-cube, k-ary tree and k-ary n-dimensional fat tree, where k is the degree of each dimension and n is the number of dimensions. Most NoCs implement regular forms of network topology that can be laid out on a chip surface, for example the k-ary 2-cube, commonly known as a grid-based topology. Generally, a grid topology makes better use of links (utilization), while tree-based topologies are useful for exploiting locality of traffic and make better use of the bandwidth. Irregular topologies are based on the concept of clustering (frequently used in GALS, i.e. Globally Asynchronous Locally Synchronous, chips) and are derived by mixing different forms in a hierarchical, hybrid, or asymmetric way.
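As a rough illustration of the k-ary n-cube family, the sketch below enumerates the k^n nodes and the neighbours of a node. The `wrap` flag is an assumption of this example: with `wrap=False` it models a plain grid (mesh) without wrap-around links, with `wrap=True` a torus-style cube.

```python
from itertools import product
from typing import List, Tuple

Coord = Tuple[int, ...]


def nodes(k: int, n: int) -> List[Coord]:
    """All k**n node coordinates of a k-ary n-cube."""
    return list(product(range(k), repeat=n))


def neighbours(node: Coord, k: int, wrap: bool = False) -> List[Coord]:
    """Nodes one hop away along each dimension."""
    result: List[Coord] = []
    for dim, pos in enumerate(node):
        for step in (-1, 1):
            new = pos + step
            if wrap:
                new %= k                  # wrap-around link
            elif not 0 <= new < k:
                continue                  # grid edge: no link in this direction
            candidate = node[:dim] + (new,) + node[dim + 1:]
            if candidate != node and candidate not in result:
                result.append(candidate)
    return result
```

For a 4-ary 2-cube this gives 16 nodes; an inner node such as (1, 1) has four neighbours, while the corner (0, 0) has two in the grid variant and four with wrap-around.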


2.2.2 Protocol

The protocol concerns the strategy of moving data through the network and is implemented at the router level. A generic scheme of a router is shown in Fig. 2. The switch is the physical component which deals with data transport by connecting input and output buffers. Switch connections are dictated by the routing algorithm, which defines the path a message has to follow to reach its destination.

The vast majority of NoCs are based on packet switching. Unlike circuit-switching networks, where a circuit from source to destination is set up and reserved until the transfer of data is completed, packet-switching networks forward packets on a per-hop basis (each packet contains routing information and data). The most common forwarding strategies are the following:

Store-and-forward: the node stores the complete packet and forwards it based on the information within its header. The packet may stall if the router does not have sufficient buffer space.

Wormhole: the node looks at the header of the packet (stored in the first flit) to determine its next hop and immediately forwards it. The subsequent flits are forwarded to the same next node as they arrive. As a node never buffers a complete packet, wormhole routing attains a minimal packet latency. The main drawback is that a stalling packet can occupy all the links its worm spans.

Virtual cut-through: works like wormhole routing, but before forwarding a packet the node waits for a guarantee that the next node in the path will accept the entire packet.
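The latency difference between the strategies above can be sketched with a first-order model. It assumes that one flit crosses one link per cycle, that routers add no extra delay and that there is no contention; under those assumptions virtual cut-through behaves like wormhole, so only two functions are needed.

```python
def store_and_forward_cycles(hops: int, flits: int) -> int:
    """Each node receives the whole packet before sending it on."""
    return hops * flits


def wormhole_cycles(hops: int, flits: int) -> int:
    """The head flit needs `hops` cycles; the rest follow in a pipeline."""
    return hops + flits - 1


if __name__ == "__main__":
    for hops in (1, 4, 8):
        print(hops, store_and_forward_cycles(hops, 8), wormhole_cycles(hops, 8))
```

In this idealized model store-and-forward latency is the product of hop count and packet length, while wormhole latency is only their sum, which is why the gap widens with distance.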

Wormhole is the main forwarding technique used in NoCs because of its low latency and small realization area, as no packet-sized buffers are required. Most often connection-less routing is employed for best-effort (BE) traffic, while connection-oriented routing is preferable for the guaranteed throughput (GT) needed when applications have QoS requirements.

Once the destination node is known, static or dynamic techniques can be used to determine to which of the switch’s output ports the message should be forwarded:

Deterministic routing: the path is determined by the packet source and destination. Popular deterministic routing schemes for NoCs are source routing and X-Y routing (2D dimension-order routing). In X-Y routing, for example, the packet follows the rows first, then moves along the columns toward the destination, or vice versa. In source routing, the routing information is encoded directly into the packet header by the sending IP.

Adaptive routing: the routing path is decided on a per-hop basis. Adaptive schemes involve dynamic arbitration mechanisms, for example based on local link congestion. This results in a more complex node implementation but offers benefits like dynamic load balancing.
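X-Y routing as described above can be sketched in a few lines. The coordinate convention (x is the position along a row, y the row index) and the port names are assumptions of this example, not part of any particular NoC.

```python
from typing import List, Tuple

Coord = Tuple[int, int]


def output_port(current: Coord, dest: Coord) -> str:
    """Per-hop decision: correct the x offset first, then the y offset."""
    (cx, cy), (dx, dy) = current, dest
    if cx != dx:
        return "EAST" if dx > cx else "WEST"
    if cy != dy:
        return "NORTH" if dy > cy else "SOUTH"
    return "LOCAL"    # arrived: deliver to the attached core


def xy_route(src: Coord, dest: Coord) -> List[Coord]:
    """Full path of nodes visited from src to dest on a 2D grid."""
    step = {"EAST": (1, 0), "WEST": (-1, 0), "NORTH": (0, 1), "SOUTH": (0, -1)}
    path = [src]
    while (port := output_port(path[-1], dest)) != "LOCAL":
        x, y = path[-1]
        dx, dy = step[port]
        path.append((x + dx, y + dy))
    return path
```

Because the decision depends only on the current and destination coordinates, every packet between the same pair of nodes follows the same path, which is what makes the scheme deterministic.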

Deterministic routing is preferred as it is easier to implement; adaptive routing, on the other hand, tends to concentrate traffic at the centre of the network, resulting in increased congestion there [1].

Besides delivering messages from the source to the destination core, the network level is also responsible for ensuring correct operation, also known as flow control. Deadlock and livelock should be detected and resolved. Deadlock occurs when network resources (e.g., link bandwidth or buffer space) are suspended waiting for each other to be released, i.e. when one blocked path leads to others being blocked in a cyclic way. Livelock occurs when resources constantly change state while waiting for each other to finish, so that no progress is made. Methods to avoid deadlock and livelock can be applied either locally at the nodes or globally, by ensuring logical separation of data streams through end-to-end control mechanisms. Deadlocks can be avoided by using Virtual Channels (VCs), which allow multiple logical channels to share a single physical channel. When one logical channel stalls, the physical channel can continue serving the other logical channels (VCs require additional buffering, as several independent queues must be provided).
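The way virtual channels keep a physical channel busy while one logical channel is stalled can be sketched as follows. The class, its round-robin arbitration and the explicit `stalled` flags are assumptions made for illustration; real routers derive the stall condition from downstream buffer credits.

```python
from collections import deque
from typing import Optional, Tuple


class PhysicalChannel:
    """One physical link shared by several virtual channels (VCs)."""

    def __init__(self, num_vcs: int) -> None:
        self.queues = [deque() for _ in range(num_vcs)]  # one queue per VC
        self.stalled = [False] * num_vcs
        self._next = 0                                   # round-robin pointer

    def enqueue(self, vc: int, flit: str) -> None:
        self.queues[vc].append(flit)

    def transmit(self) -> Optional[Tuple[int, str]]:
        """Send one flit from the next VC that has data and is not stalled."""
        n = len(self.queues)
        for i in range(n):
            vc = (self._next + i) % n
            if self.queues[vc] and not self.stalled[vc]:
                self._next = (vc + 1) % n
                return vc, self.queues[vc].popleft()
        return None                                      # nothing can be sent
```

If VC 0 is stalled while VC 1 holds a flit, `transmit()` still delivers the flit of VC 1, so the link is not blocked by the stalled stream. The price is one queue per VC, matching the additional buffering mentioned in the text.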

2.3 Link Level

Link-level research concerns the node-to-node links; the goal is to design point-to-point interconnections which are fast, low-power and reliable. As chip manufacturing technology scales, the effects of wires on link delay and power consumption increase. Due to physical limitations, it is not possible to produce wires that are both long and fast, so long wires are segmented by inserting repeater buffers at regular distances, which keeps the delay linearly dependent on the length of the wire. Partitioning long wires into pipeline stages is an alternative segmentation technique and an effective way of increasing throughput. This comes at the expense of latency, as pipeline stages are more complex than standard repeaters (buffering is required). For future chip interconnects, optical wires are attracting growing interest. The idea consists of integrating the technology used in fiber-optic links on the chip surface. As shown in Fig. 3, for links longer than 3-4 mm optical interconnects can dramatically reduce signal delay and power consumption [1].
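Why repeaters make the delay linear in the wire length can be seen from a first-order sketch. It models an unrepeated wire as a distributed RC line whose delay is roughly 0.38·R·C and therefore grows with the square of the length. The per-millimetre resistance and capacitance and the repeater delay below are hypothetical values, and the model ignores repeater drive strength and input load.

```python
import math

R_PER_MM = 1000.0      # assumed wire resistance in ohm per mm (hypothetical)
C_PER_MM = 0.2e-12     # assumed wire capacitance in farad per mm (hypothetical)
T_REPEATER = 20e-12    # assumed delay of one repeater in seconds (hypothetical)


def unrepeated_delay(length_mm: float) -> float:
    """Distributed RC line: delay grows quadratically with length."""
    return 0.38 * R_PER_MM * C_PER_MM * length_mm ** 2


def repeated_delay(length_mm: float, segment_mm: float = 1.0) -> float:
    """Wire cut into equal segments, each followed by a repeater."""
    segments = max(1, math.ceil(length_mm / segment_mm))
    segment_length = length_mm / segments
    return segments * (unrepeated_delay(segment_length) + T_REPEATER)


if __name__ == "__main__":
    for length in (1, 5, 10, 20):
        print(length, unrepeated_delay(length), repeated_delay(length))
```

With a fixed segment length, doubling the wire doubles the repeated delay but quadruples the unrepeated one, which is the behaviour the paragraph describes.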

3 NoC Analysis

Metrics usually employed to describe a NoC can be divided into two categories: performance and cost. Latency, bandwidth, throughput and jitter are the most common performance factors, while cost usually refers to power consumption and area usage. Designing a NoC usually requires finding the optimal trade-off between application needs (usually expressed in terms of traffic requirements), area usage and power consumption. For example, choosing an adaptive routing scheme over a static one can benefit the average latency as the network load increases. The routing algorithm can also optimize the aggregate bandwidth as well as link utilization. Finally, jitter refers to the delay variation of received packets in a flow, caused by network congestion or improper queuing. Knowledge of the application traffic behaviour can help in compensating for jitter via proper buffer dimensioning, which absorbs the effect of traffic bursts.

Figure 3: Delay comparison of optical and electrical interconnect (with and without repeaters) in a projected 50 nm technology.

From the point of view of cost, reducing power consumption is one of the main objectives. Two main terms are used to measure the power consumption of a NoC system: (i) power per communicated bit and (ii) idle power. Idle power is often reduced by using asynchronous circuits to implement some of the NoC components, e.g. the NA.
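As a small illustration of the latency and jitter metrics, the sketch below summarizes per-packet latencies measured for one flow. Jitter has several definitions in practice; using the standard deviation and the peak-to-peak spread is an assumption of this example, as is the function name.

```python
from statistics import mean, pstdev
from typing import Dict, Sequence


def latency_stats(latencies_cycles: Sequence[float]) -> Dict[str, float]:
    """Summarize the packet latencies of one flow, in clock cycles."""
    return {
        "mean": mean(latencies_cycles),
        # Jitter as delay variation: two common ways to quantify it.
        "jitter_stddev": pstdev(latencies_cycles),
        "jitter_peak_to_peak": max(latencies_cycles) - min(latencies_cycles),
    }


if __name__ == "__main__":
    print(latency_stats([10, 12, 11, 15, 12]))
```

A flow with the same mean latency but a larger peak-to-peak value needs deeper receive buffers to absorb bursts, which is the buffer dimensioning trade-off mentioned above.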

4 Available NoC Solutions

A NoC can be designed by means of libraries which give a high-level description of NoC components that system designers can assemble to suit the application needs. Available NoC libraries can be classified according to the following two metrics, as depicted in Fig. 4:

Parametrizability at system level: the ease with which a system-level NoC characteristic can be changed at instantiation time. The NoC description may encompass a wide range of parameters, such as the number of slots in the switch, the pipeline stages in the links, the number of ports of the network, and others.

Granularity of NoC: describes the abstraction level at which the NoC component is described. At the fine level the network can be assembled by putting together basic building blocks, while at the coarse level the NoC can be described as a system core.

In the next sections two concrete systems which employ NoCs are analyzed: Xpipes and the RAW processor. Xpipes is a library that provides system designers with the building blocks to instantiate custom NoCs for SoC systems. The RAW processor is a general-purpose multicore CPU architecture which uses a NoC-based interconnect between processing cores.

4.1 Xpipes

Xpipes is a library of highly parameterised soft macros (network interface, switch and switch-to-switch link) that can be turned into instance-specific network components at instantiation time. Components can be assembled together, allowing users to explore several NoC designs (e.g. different topologies) to better fit the specific application needs.

The high degree of parameterisation of the Xpipes network building blocks covers both global network-specific parameters (such as flit size, maximum number of hops between any two nodes, etc.) and block-specific parameters (content of routing tables for source-based routing, switch I/O ports, number of virtual channels, etc.). The Xpipes NoC backbone relies on a wormhole switching technique and makes use of a static routing algorithm called street sign routing. Particular attention is given to reliability, as distributed error detection techniques are implemented at link level. To achieve a high clock frequency, Xpipes links are pipelined in order to optimize throughput. The size of the segments can be tailored to the desired clock frequency. Overall, the delay for a flit to traverse one link and node is 2N + M cycles, where N is the number of pipeline stages and M the number of switch stages.

Figure 4: Available NoC solutions classification.
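The 2N + M expression can be turned into a small calculator. The per-hop function follows the formula from the text; extending it to a whole path by multiplying with the hop count is an assumption of this sketch (identical links and switches, no contention), not a statement about Xpipes itself.

```python
def hop_delay_cycles(link_stages: int, switch_stages: int) -> int:
    """Delay of one link plus one node: 2N + M cycles."""
    return 2 * link_stages + switch_stages


def path_delay_cycles(hops: int, link_stages: int, switch_stages: int) -> int:
    """Assumption: every hop is identical and the flit never waits."""
    return hops * hop_delay_cycles(link_stages, switch_stages)


if __name__ == "__main__":
    # Hypothetical configuration: 2 pipeline stages per link, 2 switch stages.
    print(hop_delay_cycles(2, 2), path_delay_cycles(3, 2, 2))
```

The sketch makes the trade-off visible: adding a link pipeline stage to reach a higher clock frequency costs two extra cycles per hop.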

One of the main advantages of Xpipes over other NoC libraries is the tool set it provides. The XpipesCompiler is a tool designed to automatically instantiate an application-specific custom communication infrastructure from the system specification, using Xpipes components. The output of the XpipesCompiler is a SystemC description that can be fed to a back-end RTL synthesis tool for silicon implementation.

4.2 RAW Processor

The RAW microprocessor is a general-purpose multicore CPU first designed at MIT [3]. The main focus is to exploit ILP (instruction-level parallelism) across several CPU cores (called tiles) whose functional units are connected through a NoC. The first prototype, realized in 1997, had 16 individual tiles and a clock speed of 225 MHz. The RAW processor design is optimized for low-latency communication and for efficient execution of parallel codes. Tiles are interconnected using four 32-bit full-duplex networks-on-chip; a 2-dimensional grid topology is used, so each tile connects to its four neighbours. The limited length of the wires, which is no greater than the width of a tile, allows high clock frequencies. Two of the networks are static and managed by a single static router (which is optimized for low latency), while the remaining two are dynamic. The interesting aspect of the RAW processor is that the networks are integrated directly into the processors’ pipeline, as depicted in Fig. 5. This architecture enables an ALU-to-network latency of 4 clock cycles (for an 8-stage pipeline tile).

The static router is a 5-stage pipeline that controls 2 routing crossbars and thus 2 physical networks. Routing is performed by programming the static routers on a per-clock-cycle basis. These instructions are generated by the compiler and, as the traffic pattern is extracted from the application at compile time, router preparation can be pipelined, allowing data words to be forwarded towards the correct port upon arrival.

The dynamic network is based on packet switching and uses the wormhole routing protocol. The packet header contains the destination tile, a user field and the length of the message. Dynamic routing introduces higher latency, as additional clock cycles are required to process the message header. Two dynamic networks are implemented to handle deadlocks. The memory network has a restricted usage model that uses deadlock avoidance. The general network usage is instead unrestricted, and when deadlocks happen the memory network is used to restore the correct functionality.

Figure 5: Raw compute processor pipeline.
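A header carrying a destination tile, a user field and a message length can be packed into a single word, as the sketch below shows. The field widths and their order are hypothetical and chosen only for illustration; they are not the actual RAW header layout.

```python
from typing import Tuple

# Hypothetical field widths in bits (not taken from the RAW specification).
DEST_BITS, USER_BITS, LEN_BITS = 5, 4, 5


def pack_header(dest: int, user: int, length: int) -> int:
    """Pack the three header fields into one integer word."""
    for name, value, bits in (("dest", dest, DEST_BITS),
                              ("user", user, USER_BITS),
                              ("length", length, LEN_BITS)):
        if not 0 <= value < (1 << bits):
            raise ValueError(f"{name}={value} does not fit in {bits} bits")
    return (length << (DEST_BITS + USER_BITS)) | (user << DEST_BITS) | dest


def unpack_header(word: int) -> Tuple[int, int, int]:
    """Recover (dest, user, length) from a packed header word."""
    dest = word & ((1 << DEST_BITS) - 1)
    user = (word >> DEST_BITS) & ((1 << USER_BITS) - 1)
    length = (word >> (DEST_BITS + USER_BITS)) & ((1 << LEN_BITS) - 1)
    return dest, user, length
```

A router on the dynamic network would need only the destination field to pick an output port, and the length field to know how many words of the worm follow the header.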

The success of the RAW architecture is demonstrated by Tilera, a company founded by former MIT members, which distributes CPUs based on the RAW design. Its current top microprocessor is the Tile-GX, which packs 100 tiles into a single chip at a clock speed of 1.5 GHz [4]. Intel also recently presented the Single-chip Cloud Computer (SCC), which packs 48 tiles connected through a NoC [5].

5 Summary

On-chip networks are gaining more and more interest for both embedded SoC systems and general-purpose CPUs, as the number of integrated cores is constantly increasing and traditional interconnects do not scale. As chip manufacturing technology scales down, computation is getting cheaper and communication is becoming the main source of power consumption. NoCs try to solve the problem by reducing the complexity and the number of chip interconnections and by limiting the wire length to allow higher clock speeds. Several NoC libraries and NoC-based general-purpose CPUs have been introduced, making effective programmability of these chips one of the next big research challenges.

References

[1] Bjerregaard, Tobias and Mahadevan, Shankar: A survey of research and practices of Network-on-chip, ACM Computing Surveys, Volume 38, Number 1, 2006.

[2] Davide Bertozzi and Luca Benini: Xpipes: A Network-on-Chip Architecture for Gigascale Systems-on-Chip, IEEE Circuits and Systems Magazine, Volume 4, Issue 2, page(s): 18-31, 2004.

[3] Michael B. Taylor et al.: The RAW microprocessor: A Computational Fabric for Software circuits and General Purpose Programs, IEEE Micro, Volume 22, Issue 2, page(s): 25-35, 2002.

[4] Tilera: Tile-GX Processors Family, http://www.tilera.com/products/TILE-Gx.php.

[5] Intel Research: Single-chip Cloud Computer, http://techresearch.intel.com/articles/Tera-Scale/1826.htm