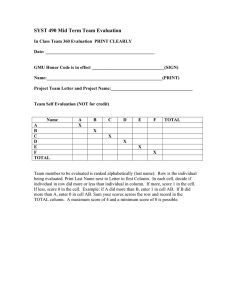

Richard L. Church, Ross A. Gerrard

The Multi-level Location Set Covering Model

The classical Location Set Covering Problem involves finding the smallest number of facilities and their locations so that each demand is covered by at least one facility. It was first introduced by Toregas in 1970. This problem can represent several different application settings including the location of emergency services and the selection of conservation sites. The Location Set Covering Problem can be formulated as a 0-1 integer-programming model. Roth (1969) and Toregas and ReVelle (1973) developed reduction approaches that can systematically eliminate redundant columns and rows as well as identify essential sites. Such approaches can often reduce a problem to a size that is considerably smaller and easily solved by linear programming using branch and bound. Extensions to the Location Set Covering Model have been proposed so that additional levels of coverage are either encouraged or required. This paper focuses on one of the extended model forms called the Multi-level Location Set Covering Model. The reduction rules of Roth and of Toregas and ReVelle violate properties found in the multi-level model. This paper proposes a new set of reduction rules that can be used for the multi-level model as well as the classic single-level model. A demonstration of these new reduction rules is presented which indicates that such problems may be subject to significant reductions in the numbers of both demands and sites.

1. INTRODUCTION

The Location Set Covering Problem (LSCP) was defined more than thirty years ago by Toregas (1970) and in the pages of Geographical Analysis by Toregas and ReVelle (1973). This location problem involves finding the smallest number of facilities (and their locations) such that each demand is no farther than a pre-specified distance or time away from its closest facility.

The authors would like to acknowledge Lance Craighead and the helpful comments that they received after a presentation of this research at a workshop sponsored by the Craighead Environmental Research Institute. They also want to acknowledge very helpful comments from the reviewers.

Richard Church is Professor, Department of Geography, University of California at Santa Barbara. E-mail: church@geog.ucsb.edu. Ross Gerrard is Assistant Researcher, Department of Geography, University of California at Santa Barbara. E-mail: gerrard@geog.ucsb.edu

Geographical Analysis, Vol. 35, No. 4 (October 2003) The Ohio State University. Submitted: June 7, 2002. Revised version accepted: January 10, 2003.

Such a problem is called a “covering” problem in that it requires that each demand be served or “covered” within some maximum time or distance standard. A demand is defined as covered if one or more facilities are located within the maximum distance or time standard of that demand. The classical LSCP requires that each demand is covered at least once. A related problem involves the location of a fixed number of facilities and seeks to maximize the coverage of demand. This second type of covering problem is called the Maximal Covering Location Problem (MCLP) (Church and ReVelle 1974). Since the development of these two juxtaposed problem forms there have been numerous applications and extensions.

There are circumstances where the provision of a service needs more than one “covering” facility (see Daskin, Hogan, and ReVelle 1988). This occurs when facilities may not always be available. For example, assume that ambulances are being located at dispatching points in order to serve demand across an urban area. When the nearest ambulance is busy, the next closest available ambulance will need to be assigned to a call when it is received.
If the closest available ambulance is farther away than the service standard, then that demand/call for service is not served within the coverage standard. To handle such issues, models have been developed that seek multiple coverage. Two types of multiple coverage models exist: stochastic/probabilistic and deterministic. A good example of a probabilistic multiple cover model is the maximal expected coverage model of Daskin (1983). The simple backup covering model of Hogan and ReVelle (1986) is a good example of a deterministic cover model that involves maximizing second-level coverage.

Toregas was the first to recognize the possible need for multi-level coverage (Toregas 1970; 1971). Toregas defined the Multi-level Location Set Covering Problem (ML-LSCP) as a search for the smallest number of facilities needed to cover each demand a preset number of times, where the need for coverage might vary between demands. Some demand areas might need coverage three times, others two times, some one time, etc. A solution to the ML-LSCP would represent the most efficient deployment of resources that meets all prespecified cover conditions. It is this model to which we devote the remainder of this paper.

The ML-LSCP subsumes as a special case the LSCP, which is an applied form of the minimum cardinality set covering problem. Since the minimum cardinality set covering problem is NP-hard, the ML-LSCP is NP-hard as well. This means, essentially, that some problem instances may not be solvable optimally within a reasonable computation time. Hence, it may be necessary to rely on heuristics to solve large or difficult-to-solve problems. Toregas and ReVelle (1973) found that a specific instance of an LSCP may yield to a reductions algorithm, which could possibly reduce a problem instance in terms of both rows and columns. Sometimes this reductions algorithm completely solves a problem.
Most of the time, however, the reductions process yields a “reduced” problem that can be solved by inspection or by a limited use of linear programming (LP) with branch and bound. There is the possibility that the reductions algorithm will result in few if any changes. However, the cost of attempting the reductions algorithm is relatively insignificant, so this is not a serious issue. The bottom line is that solving an LSCP should first be approached with the reductions algorithm, followed by either a heuristic or an optimal procedure. We might liken the use of the reductions algorithm to a pre-processing step, before a solution algorithm or heuristic is applied.

The effectiveness of a reductions approach for an ML-LSCP is the main topic of this paper. In the next section, we present an integer programming formulation for the original LSCP and the ML-LSCP. We then describe the reductions algorithm of Toregas and ReVelle (1973). Unfortunately, the reductions algorithm for the LSCP cannot be applied to the ML-LSCP. To address this, we present a new set of extended reduction rules that can be applied to the ML-LSCP. Finally, we give results of this new reductions algorithm for a set of test problems and provide conclusions and suggestions for further work.

2. THE LSCP AND ML-LSCP MODELS

Assume that we have a number of potential sites that can be used for the placement of a facility. These sites can correspond to nodes of a network, discrete points on a continuous terrain surface, or some other representation. We will refer to sites by the index j, where j = 1, 2, 3, ..., n, and n is the total number of sites. Further, assume that there are a number of demands, each of which is to be covered. Demands may be represented as nodes of a network, discrete points on a continuous terrain, or some other representation. Demands will be represented by the index i, where i = 1, 2, 3, ..., m, and m is the number of discrete demands.
We further require a definition of cover. The definition of cover used by Toregas and ReVelle (1972) stated that a demand at i is covered by a facility at j if site j is within a maximum distance or time in reaching i. They used this definition for the location of emergency service facilities, such as fire stations. Essentially, they wanted to locate the smallest number of facilities needed such that each demand would be covered. For fire department planning, this translates into finding the smallest number of needed stations and their locations such that each demand area is covered within a maximum emergency response time or distance. They formulated this problem as an integer-programming model using the following notation:

$$a_{ij} = \begin{cases} 1, & \text{if site } j \text{ can cover demand } i \\ 0, & \text{otherwise} \end{cases}$$

$$x_j = \begin{cases} 1, & \text{if site } j \text{ is selected for a facility} \\ 0, & \text{otherwise} \end{cases}$$

Their formulation for the LSCP is:

$$\text{Min } Z = \sum_{j=1}^{n} x_j \tag{1}$$

subject to

$$\sum_{j=1}^{n} a_{ij} x_j \ge 1 \quad \text{for each demand } i = 1, 2, \ldots, m \tag{2}$$

$$x_j \in \{0, 1\} \quad \text{for each site } j = 1, 2, \ldots, n \tag{3}$$

The objective involves minimizing the number of sites selected for facilities. There is one constraint of type (2) for each demand i. For a given i, this constraint requires that the number of sites selected that can provide cover to demand i must be greater than or equal to 1. The second constraint (3) refers to the integer requirements of the site selection variables. This model is a special case of the more general set covering model. The set covering model has the same structure as the above model except that it utilizes the following objective:

$$\text{Min } Z = \sum_{j=1}^{n} c_j x_j \tag{4}$$

where:

$c_j$ = the cost of selecting site j

This more general model has been used in many settings, where the index j might refer to sites, workers, crews, portfolios, etc., and where the index i might refer to demand, shifts, flights, risk distribution, etc. Basically, the LSCP is a set covering problem where $c_j = 1$ for all j.
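
To make the notation concrete, the following minimal Python sketch builds a coverage matrix $[a_{ij}]$ from a distance table and solves the LSCP of (1) through (3) by exhaustive enumeration. The function names, the distance table, and the standard S = 5 are illustrative assumptions rather than anything taken from the paper, and enumeration is only workable for very small instances.

```python
from itertools import combinations

def coverage_matrix(dist, S):
    """a[i][j] = 1 if site j is within the standard S of demand i, else 0."""
    return [[1 if d <= S else 0 for d in row] for row in dist]

def solve_lscp_brute_force(a):
    """Return a smallest list of sites covering every demand at least once.

    Tries site subsets in order of increasing size, so the first feasible
    subset found is optimal. Exponential time: a sketch for tiny instances.
    """
    m, n = len(a), len(a[0])
    for size in range(n + 1):
        for sites in combinations(range(n), size):
            if all(any(a[i][j] for j in sites) for i in range(m)):
                return list(sites)
    return None  # some demand cannot be covered by any site

# Hypothetical distance table (rows: demands, columns: sites).
dist = [
    [0, 4, 9, 12],
    [4, 0, 5, 8],
    [9, 5, 0, 3],
    [12, 8, 3, 0],
]
a = coverage_matrix(dist, S=5)
solution = solve_lscp_brute_force(a)
print(a)         # [[1, 1, 0, 0], [1, 1, 1, 0], [0, 1, 1, 1], [0, 0, 1, 1]]
print(solution)  # [0, 2]
```

In this small instance no single site covers all four demands, so two sites are needed; the pair (0, 2) is the first feasible pair in enumeration order.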
One of the more recent suggested applications of the LSCP is that given by Underhill (1994). Underhill stated that many of the attempts to select sites for reserve design for the protection of species fit within the definition of a set covering problem. In this context, sites are tracts of land that contain different biota of concern. Each site is basically known in terms of the biological elements that it contains. Such biological elements may be, for example, threatened or endangered species. Each species is considered a demand. This reserve site selection problem is to find the smallest number of sites needed such that each species of concern is represented by at least one of the selected sites.

There are circumstances where the provision of a service needs more than one “covering” facility. This occurs when facilities may not always be available or when there is value or “peace-of-mind” that is associated with providing additional levels of coverage. Toregas (1970) defined the ML-LSCP as a search for the smallest number of facilities needed to cover each demand a preset number of times, where the need for coverage might vary between demands. To formulate this problem, consider the following additional notation:

$l_i$ = the desired number of times demand i needs to be covered

We can then formulate the ML-LSCP by replacing constraints (2) of the LSCP with the following:

$$\sum_{j=1}^{n} a_{ij} x_j \ge l_i \quad \text{for each demand } i = 1, 2, \ldots, m \tag{5}$$

The only difference between this model and the LSCP is that the requirement for coverage of a demand is no longer set at one, but may be set at some integer level greater than one. It is important to note that in the above model, each site can be selected only once. A different version of the ML-LSCP can be found in Batta and Mannur (1990) and Van Slyke (1982). Batta and Mannur describe an emergency vehicle location problem in which each demand needs multiple coverage, and multiple vehicles can be placed at a given site.
Thus, their model uses non-negative integer $x_j$ variables as compared to the binary $x_j$ variables used above. Xiaoming and Van Slyke (1984) designate this type of model the unbounded multi-cover problem. This is a major distinction between the two forms of the ML-LSCP, as the latter form is appropriate when multiple servers can be allocated to a location, and the former is appropriate when a site can be selected at most once for its role as a server/cover. For example, The Nature Conservancy (Chaplin et al. 2000) used a multiple cover model to search for solutions that might represent species several times, rather than just once, and where each site could be chosen at most once. It would not make sense to be able to select a site more than once in terms of its role in covering species. Finally, the multi-level set covering form of this problem using varying cost coefficients can be found in Van Slyke (1981) and Hall and Hochbaum (1992). Hall and Hochbaum present a special purpose algorithm for that problem, but they do not explore the use of reduction rules.

It is important to note that when $x_j \in \{0, 1\}$ the ML-LSCP may not have a feasible solution. If $\sum_{j=1}^{n} a_{ij} < l_i$ for some i, then fewer sites exist that can cover i than the demand for coverage $l_i$. To recognize the limits in providing coverage, no $l_i$ value should exceed $\sum_{j=1}^{n} a_{ij}$. We will assume this property for the remainder of the paper.

Any solution technique that has been developed for the set covering problem can be used to solve an LSCP. A number of solution approaches have been developed or applied to the set covering problem. They include the greedy algorithm (Chvatal 1979), expanded forms of greedy (Vasko and Wilson 1984; 1986; Feo and Resende 1989), Lagrangian relaxation with subgradient optimization (Christofides and Paixao 1993; Haddadi 1997), LP with branch and bound (Current and O’Kelly 1992), as well as many others (see, for example, Beasley 1987).
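
As an illustration of the feasibility condition and of a greedy-style heuristic, the sketch below checks whether any $l_i$ exceeds the number of sites able to cover demand i, and then builds a multi-level cover greedily. Adapting the greedy idea to the binary-site ML-LSCP in this way is an assumption made for illustration; it is not the procedure of any of the cited authors, it carries no optimality guarantee, and the function names and data are hypothetical.

```python
def infeasible_demands(a, l):
    """Demands i whose requirement l[i] exceeds the number of covering sites."""
    return [i for i, row in enumerate(a) if sum(row) < l[i]]

def greedy_multicover(a, l):
    """Greedy heuristic for the ML-LSCP with each site used at most once.

    At each step pick the unused site that covers the largest number of
    demands whose coverage requirement is still unmet (ties: lowest index).
    """
    m, n = len(a), len(a[0])
    remaining = list(l)            # unmet coverage level for each demand
    unused = set(range(n))
    chosen = []
    while any(r > 0 for r in remaining):
        gains = {
            j: sum(1 for i in range(m) if a[i][j] and remaining[i] > 0)
            for j in unused
        }
        best = max(sorted(gains), key=gains.get, default=None)
        if best is None or gains[best] == 0:
            raise ValueError("no remaining site can reduce the unmet coverage")
        chosen.append(best)
        unused.remove(best)
        for i in range(m):
            if a[i][best] and remaining[i] > 0:
                remaining[i] -= 1
    return chosen

# Hypothetical coverage matrix (rows: demands, columns: sites) and levels l_i.
a = [
    [1, 1, 0, 0],
    [1, 1, 1, 0],
    [0, 1, 1, 1],
    [0, 0, 1, 1],
]
l = [2, 1, 2, 1]
print(infeasible_demands(a, l))  # []
print(greedy_multicover(a, l))   # [1, 2, 0]
```

Here demand 0 requires both of its two candidate sites, so three sites happen to be optimal for this instance; in general a greedy answer is only an upper bound on the minimum.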
These techniques are traditionally applied to the original problem, even when certain rules might be applied in advance to reduce the size of such problems from the outset. In the next section we discuss the reduction rules developed for the LSCP.

3. REDUCTION RULES FOR THE LSCP

Roth (1969) and Toregas and ReVelle (1973) explored the use of reduction rules for the set covering and location set covering models respectively. Garfinkel and Nemhauser (1972) also present reduction rules for the set covering model. Church and Meadows (1979), Current and Schilling (1990), and Downs and Camm (1996) have utilized these rules on the LSCP and demonstrated how they can be tailored for the MCLP with great success. Williams and ReVelle (1997) have suggested using such rules in solving the species representation problem. These rules, when applied to a given problem, might result in substantial reductions of problem size. They are relatively easy to program, are not computationally intensive, and can be very effective. Thus, such rules make sense to apply as a first step to solving a location set covering, maximal covering, or general set covering problem. The form of the rules may vary though, depending upon the definition of the problem. The rules described here are applicable for the LSCP. The first rule is associated with identifying an essential site:

Definition of Essential Site: a site j is essential if there exists at least one i where $a_{ij} = 1$ and where $a_{ik} = 0$ for all $k \ne j$.

A site is classified as essential if it uniquely covers one or more demands. An essential site exists in a row if the coverage matrix, i.e., $[a_{ij}]$, contains only one nonzero entry for that row. If a site can be classified as essential, it must be selected. Otherwise, it would be impossible to provide coverage to those demands uniquely covered by that site. If a site is essential, it then is selected for the solution, and its column can then be eliminated from the coverage matrix.
All demand covered by that essential site can now be considered satisfied and their rows removed from the coverage matrix as well. Thus, the presence of an essential site means that a partial solution can be constructed, and the resulting coverage matrix can be reduced by one column and at least one row. The resulting coverage matrix represents the remaining unresolved problem, in terms of columns (i.e., $x_j$ variables) and rows (i.e., demand constraints not yet satisfied). The second reduction rule is based upon the following definition:

Definition of Dominated Column: a column j in the coverage matrix is said to dominate a column k in the coverage matrix if for every row i in the coverage matrix, $a_{ij} \ge a_{ik}$.

If a column j exists that can cover exactly the same demand as another column k or more, then $a_{ij} \ge a_{ik}$ for each i. Since each demand needs to be covered only once, the dominated site is clearly inferior and can be eliminated from consideration, thereby reducing the size of the remaining problem. Note that it is possible that two sites could cover exactly the same demand (and hence each dominates the other). In this case, the sites are equivalent, and one of them can be removed from the problem. Now consider an alternate form of domination:

Definition of Row Domination: a row i is said to dominate another row s in the coverage matrix if for every column j in the coverage matrix, $a_{ij} \le a_{sj}$.

If row i dominates row s, it can be seen that the service set for demand i is contained within the service set for demand s. If demand i is satisfied, then demand s will automatically be satisfied. Thus, it is not necessary to include the requirement for demand s, as coverage will be provided to s if coverage is provided to i. Dominated rows can therefore be eliminated from the coverage matrix. The three reduction rules can be used in the following manner:

LSCP Reductions Algorithm (Toregas and ReVelle 1973):

Step 1: Select any essential sites for the solution.
For each essential site, remove its respective column from the coverage matrix as well as remove any row that is covered by that column.

Step 2: Identify dominated columns and remove them from the coverage matrix.

Step 3: Identify dominated rows and eliminate them from the coverage matrix.

Step 4: Repeat steps 1 through 3, until either no coverage matrix remains or no further reductions can be found.

The reductions algorithm will result in two possible outcomes:

Case 1, no matrix is left: An optimal solution to the LSCP has been generated and is comprised of the essential sites selected during the reductions algorithm, or

Case 2, a matrix remains: A partial solution to the LSCP has been identified and is comprised of the essential sites selected during the reductions algorithm. The remaining coverage matrix represents a reduced covering problem that must be solved using an additional technique, such as linear programming with branch and bound.

Often the reductions technique can make substantial reductions in problem size. The remaining “smaller” problem may be solvable by inspection but often requires a heuristic or solution algorithm (see Toregas 1971). Now that we have presented the LSCP reductions algorithm, we proceed to a discussion of reductions and the ML-LSCP.

4. THE MULTI-LEVEL LSCP AND REDUCTIONS

One logical question is: can the LSCP model reductions rules work for the ML-LSCP? Unfortunately, the answer to this is a resounding NO. There are properties associated with the ML-LSCP that represent “rule-busters” for the LSCP reductions process of Toregas and ReVelle (1973). First, it can be shown that a dominated column in an LSCP problem might well be needed in the ML-LSCP. Second, a column may be essential when its coverage of an element is not unique. Third, if row A dominates row B in the LSCP, it might not be a dominating row in the ML-LSCP, as it is possible that $l_B > l_A$.
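
As a reference point before the rules are generalized, the single-level LSCP reductions algorithm of Section 3 can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' Fortran routine: the coverage matrix is held as a dictionary mapping each demand to the set of sites that cover it, every demand is assumed to have at least one covering site, and the function name and example network are hypothetical.

```python
def lscp_reduce(cover):
    """Apply the three LSCP reduction rules until nothing changes.

    cover maps each demand i to the set of sites j with a_ij = 1 (each set
    is assumed non-empty). Returns (selected_sites, remaining_rows).
    """
    rows = {i: set(sites) for i, sites in cover.items()}
    selected = []
    changed = True
    while changed and rows:
        changed = False

        # Step 1: a row with one remaining site makes that site essential;
        # select it and remove every row it covers.
        for i in list(rows):
            if i in rows and len(rows[i]) == 1:
                (j,) = rows[i]
                selected.append(j)
                rows = {r: s for r, s in rows.items() if j not in s}
                changed = True

        # Step 2: drop column k when a surviving column j covers every row
        # that k covers (of two equivalent columns, one is kept).
        cols = {}
        for i, sites in rows.items():
            for j in sites:
                cols.setdefault(j, set()).add(i)
        removed = set()
        for k in sorted(cols):
            if any(j != k and j not in removed and cols[k] <= cols[j]
                   for j in sorted(cols)):
                removed.add(k)
        for sites in rows.values():
            sites -= removed
        changed = changed or bool(removed)

        # Step 3: drop row s when a surviving row i has a service set
        # contained in the service set of s.
        dropped = set()
        for s in sorted(rows):
            if any(i != s and i not in dropped and rows[i] <= rows[s]
                   for i in sorted(rows)):
                dropped.add(s)
        for s in dropped:
            del rows[s]
        changed = changed or bool(dropped)

    return selected, rows

# Hypothetical 5-node path network: each node covers itself and its neighbours.
cover = {0: {0, 1}, 1: {0, 1, 2}, 2: {1, 2, 3}, 3: {2, 3, 4}, 4: {3, 4}}
selected, reduced = lscp_reduce(cover)
print(selected, reduced)  # [1, 3] {}
```

On this tree-like example the rules remove the whole matrix (Case 1): columns 0 and 4 are dominated, rows 1, 2, and 3 are then dominated, and sites 1 and 3 become essential.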
There is, however, a set of generalized reductions rules that can be defined for the ML-LSCP, which is the subject of the remainder of this section. For each of the reductions processes defined for the LSCP, we will show in this section that there are extended forms that can be defined for the ML-LSCP.

As described above for the LSCP, a site is essential to demand i if it is the only remaining site that can provide coverage to demand i. If multiple coverage is to be provided, then more than one site will be needed to cover a given demand, and more than one site may be considered essential. Consider the following definition:

Definition of a set of essential sites: If for a given demand i, $\sum_{j=1}^{n} a_{ij} = l_i$, then all sites in that row where $a_{ij} = 1$ are required for coverage and are essential.

If we should ever encounter the condition that the number of sites that exist to cover a given demand just equals the requirement for multi-level coverage, $l_i$, then all of the sites will be needed for coverage. As we select each site, s, as essential, the column for that site is eliminated, and the right-hand side value, $l_k$, of each demand constraint k where $a_{ks} = 1$ is reduced by one. This reduction in right-hand side values reflects the fact that as each site is chosen, additional coverage levels are provided to those demands covered by the essential sites, and the remaining demand for coverage is reduced. The row associated with identifying a set of essential sites can also be eliminated as the remaining demand for coverage will be zero. Thus, the presence of a set of essential sites means that a partial solution can be constructed, and the resulting coverage matrix can be reduced by one or more columns and at least one row.

The second reduction rule is associated with dominated columns. As we stated before, the definition of column domination used in the LSCP does not apply in general to the ML-LSCP.
The reason for this is that many columns may be needed to provide multi-level coverage, and some of these may not meet the column domination definition described for the LSCP. We can, however, build the basis for column reductions. Consider the following definition:

Definition of Singleton Column: a column j in the coverage matrix is defined as a “singleton” if column j has only one nonzero entry in the coverage matrix (i.e., $a_{ij} = 1$ for only one i).

A column may be a singleton at the beginning of the reductions process, or it may be rendered a singleton as rows that it covers are satisfied and are eliminated in the reductions process. It is also possible that a column may be reduced to having no nonzero entries at all, as all of the demands that it could cover have already been satisfied as essential sites have been identified and chosen. If this happens, that column is effectively useless for the remaining problem and can be eliminated. In the case of singleton columns, consider the following definition:

Definition of a “singleton-based” row: If a demand constraint k exists where each nonzero entry $a_{ks}$ is associated with a singleton column, s, then the constraint row is said to be a “singleton-based” row.

If we encounter a singleton-based row, k, we can select $l_k$ of the singleton columns to cover the row and eliminate the remaining singleton columns in that row. We will then satisfy the demand for multi-level coverage for that row, and the row can be eliminated. As singletons in row k only appear in that row, and only singletons cover that row, then without any loss of generality, we can pick what is necessary to cover that row and discard the rest. It is more likely that a row exists that contains both singleton columns and non-singleton columns than a row with only singleton columns.
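
The essential-set rule and the singleton-based row rule described so far can be sketched as below. The data layout (a dictionary `rows` of remaining covering sites per demand and a dictionary `need` of remaining levels $l_i$) and all function names are assumptions chosen for illustration; the sketch also assumes that no $l_i$ exceeds the number of sites covering demand i.

```python
def select_site(rows, need, s, selected):
    """Choose site s: remove its column and lower l_k for each row k it covers."""
    selected.append(s)
    for k in list(rows):
        if s in rows[k]:
            rows[k].discard(s)
            need[k] -= 1
            if need[k] <= 0:        # demand k is fully covered: drop its row
                del rows[k], need[k]

def essential_set_rule(rows, need, selected):
    """If exactly l_i sites remain for demand i, all of them are essential."""
    for i in list(rows):
        if i in rows and len(rows[i]) == need[i]:
            for s in sorted(rows[i]):
                select_site(rows, need, s, selected)

def singleton_based_row_rule(rows, need, selected):
    """If only singleton columns cover row k, keep l_k of them, discard the rest."""
    count = {}
    for sites in rows.values():
        for j in sites:
            count[j] = count.get(j, 0) + 1
    for k in list(rows):
        if rows[k] and all(count[j] == 1 for j in rows[k]):
            selected.extend(sorted(rows[k])[:need[k]])
            del rows[k], need[k]    # unselected singletons vanish with the row

# Hypothetical instance: demand -> covering sites, and required levels.
rows = {0: {0, 1}, 1: {1, 2, 3}, 2: {4, 5, 6}}
need = {0: 2, 1: 2, 2: 2}
selected = []
essential_set_rule(rows, need, selected)        # sites 0 and 1 are essential
singleton_based_row_rule(rows, need, selected)  # rows 1 and 2 are singleton-based
print(selected, rows)  # [0, 1, 2, 4, 5] {}
```

Selecting site 1 as essential lowers the remaining requirement of demand 1 to one and leaves it covered only by singletons 2 and 3, which is how a column can become part of a singleton-based row during the process.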
Let:

$C_i$ = the number of non-singleton columns that have an entry in row i

Now define three types of column domination:

Column Domination type 1: If a row i exists that has a nonzero combination of singleton columns and non-singleton columns where $C_i > l_i$, then all singleton columns in row i can be eliminated.

Column Domination type 2: If a row i exists that has a nonzero combination of singleton columns and non-singleton columns where $C_i = l_i$, then all singleton columns in row i can be eliminated and all non-singleton columns in row i can be selected as essential.

Column Domination type 3: If a row i exists that has a nonzero combination of singleton columns and non-singleton columns where $C_i < l_i$, then exactly $l_i - C_i$ singleton columns in row i can be selected as essential (with the rest of the singletons being eliminated) along with all non-singleton columns in row i.

If in a given constraint row i there is an excess of non-singleton columns to choose from, i.e., $C_i > l_i$, then all singletons can be eliminated. This is the basis for type 1 domination. If in a given constraint row i, the number of non-singleton columns equals the number needed for multi-level coverage, then all non-singleton columns are essential, and the singleton columns associated with that row can be eliminated. This forms the basis for type 2 domination. Finally, if the multi-level demand for coverage for i exceeds the number of non-singleton columns in that row, then they surely dominate as a set any other choices (i.e., singletons), but some of the singletons will also be needed to satisfy the multi-level coverage constraint. This is the basis for column domination type 3.

There are two additional types of column dominance that can be identified. These involve analyzing all sites that can provide coverage to a given demand i. If demand i has not yet been satisfied in terms of the desired level of multiple coverage, presumably a set of sites exists that can cover that i. Call it $\Phi_i$.
If a site exists in $\Phi_i$ that globally dominates (with respect to LSCP column dominance) all other sites in $\Phi_i$, then that site can be chosen to serve i. We formalize this as:

Column domination type 4: If a row k exists that has not yet been satisfied in terms of coverage, let $\Phi_k = \{ j \mid a_{kj} = 1 \}$. $\Phi_k$ is the set of sites that have yet to be eliminated and cover demand k. If there exists a site j in $\Phi_k$ such that for every $g \in \Phi_k$, $a_{ig} \le a_{ij}$ for all active coverage rows i, then site j globally dominates the other sites that can cover k and can be chosen for the solution set without loss of generality.

If a site is identified as globally dominant for a given demand k that has yet to be satisfied, then that site can be chosen for the solution. Upon making this choice, the column is eliminated from the coverage matrix, and $l_i$ is reduced by 1 for all rows i which are covered by that site. It should be recognized that the computational work to test for type 4 column dominance is considerably more than that of the simple column dominance test in LSCP. This is because a column needs to be tested for dominance over all sites in the set $\Phi_k$. Consequently, it would make sense to use this test only after all other tests have been utilized.

Column domination type 5: Suppose that column j can cover a selected set of demand, $N_j$, where $MaxL = \max_{i \in N_j} (l_i)$. Over all demand i that are covered by site j, MaxL represents the largest unmet coverage level. If there exist MaxL or more columns that dominate column j (as in the sense of column domination for the LSCP reduction rules), then column j is dominated, and it can be eliminated from further consideration.

Type 5 column domination is the general form of the column domination rule for the single-level LSCP problem. In essence, if a column is dominated by enough separate columns, it will never be needed for any of the demand in which that column can provide coverage.
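
A minimal Python sketch of the type 5 test is given below. It removes one dominated column at a time and recounts dominators among the surviving columns, so that two equivalent columns cannot eliminate each other; that sequencing, the dictionary layout (`rows` for covering sites per active demand, `need` for the unmet levels $l_i$), and the function name are assumptions made for illustration and are not details stated in the paper.

```python
def remove_type5_dominated(rows, need):
    """Eliminate columns dominated by at least MaxL surviving columns.

    rows maps each active demand i to the set of remaining sites covering it;
    need maps i to its unmet coverage level l_i. rows is modified in place
    and the list of eliminated columns is returned.
    """
    removed = []
    progress = True
    while progress:
        progress = False
        # Column view: site j -> N_j, the set of active demands it covers.
        cols = {}
        for i, sites in rows.items():
            for j in sites:
                cols.setdefault(j, set()).add(i)
        for j in sorted(cols):
            n_j = cols[j]
            max_l = max(need[i] for i in n_j)
            # LSCP-style dominators of j: columns covering all of N_j.
            dominators = [g for g in cols if g != j and n_j <= cols[g]]
            if len(dominators) >= max_l:
                for i in n_j:
                    rows[i].discard(j)
                removed.append(j)
                progress = True
                break   # rebuild the column view before testing further
    return removed

# Hypothetical instance: three demands with levels 2, 2, and 1.
rows = {0: {0, 1, 2, 3}, 1: {1, 2, 3}, 2: {2, 3, 4}}
need = {0: 2, 1: 2, 2: 1}
removed = remove_type5_dominated(rows, need)
print(removed)  # [0, 1, 4]
print(rows)     # {0: {2, 3}, 1: {2, 3}, 2: {2, 3}}
```

Columns 2 and 3 are equivalent here, yet neither is removed: each has only one dominator while MaxL is 2, which is exactly the situation where the single-level rule would wrongly discard a column that the multi-level problem needs.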
For a single-level problem, all it takes is one column to dominate a specific column j for removal of column j. For subsequent levels of coverage demand, additional dominating sites are needed in order to remove a column.

Row dominance can also exist in an ML-LSCP. For example, if two constraint rows, A and B, have the same column entries and also have the same right-hand side values (i.e., $l_A = l_B$), then the two constraint rows are equivalent, and one can be eliminated as being redundant. We can expand on this:

Definition of Row Domination: A row a is said to dominate another row b in the coverage matrix if for every column j in the coverage matrix, $a_{aj} \le a_{bj}$ and $l_a \ge l_b$.

For a row A to dominate another row B, it must have a demand level $l_A$ that is at least as high as the demand level for B as well as have the same or fewer nonzero entries as B. The definition of row dominance in the LSCP is a special case of this more general form. The ML-LSCP reduction rules can be used in the following manner:

ML-LSCP Reductions Algorithm:

Step 1: Select any essential sites for being needed in the solution. For each essential site, remove its respective column from the coverage matrix as well as reduce respective $l_i$ values by 1 (for all rows i that are covered by that column). Essential sites are identifiable in rows i where $\sum_{j=1}^{n} a_{ij} = l_i$ and in “singleton-based” rows. For singleton-based rows, select what is necessary ($l_i$ columns) and eliminate the remaining singleton columns from the problem. Eliminate associated rows.

Step 2: Perform tests for column domination types 1, 2, 3, 4, and 5. Select essential columns when identified; update the coverage matrix to reflect column selections and eliminations. (Note: if any two columns of the coverage matrix appear only in rows where the remaining demand for coverage is 1, then the LSCP column domination test can be applied.)

Step 3: Identify dominated rows and eliminate them from the coverage matrix.

Step 4: Repeat steps 1 through 3, until either no coverage matrix remains or no further reductions can be found.

As with the LSCP reductions algorithm, the ML-LSCP reductions algorithm will result in two possible outcomes:

Case 1, no matrix is left: An optimal solution to the ML-LSCP has been generated and is comprised of the sites selected during the reductions algorithm, or

Case 2, a matrix remains: A partial solution to the ML-LSCP problem has been identified and is comprised of the sites selected during the reductions algorithm. The remaining coverage matrix represents a reduced covering problem that must be solved using an additional technique, like linear programming with branch and bound.

We have developed a list processing routine that was coded in Fortran 77 to accomplish the reductions process for the ML-LSCP. This procedure was compiled using a GNU 77 compiler on a Sun Ultra-Sparc 10 workstation (approximately 450 MHz) using the SunOS environment. The code was developed as a research program, without a lot of concern for solution efficiency, but more for studying the efficacy of each reductions step.

5. AN APPLICATION OF THE EXTENDED REDUCTIONS ALGORITHM

The reductions process has been applied to a set of four different problem data sets. They include a 55 node problem (Swain 1971; the complete data are given in Church and Weaver 1986), the 79 node Zarzal data set of Eaton et al. (1981), the 150 node problem of Waters (1977), and the 372 node problem of Ruggles and Church (1996). Results of the reductions process for a number of different coverage distances and levels are given in Tables 1 through 4. It was assumed that each node was a potential facility site as well as a point of demand. Each table presents results for a different problem size (e.g., Table 1 gives results for the 55 node problem of Swain). The value of S for a given problem represents the coverage distance/time used to generate the coverage matrix.
The value of L represents the desired number of times each demand is to be covered. Size of the coverage list refers to the number of coverage entries in a coverage matrix, or equivalently the length of the coverage list used in the reductions program. This list length can be used to calculate the density of the matrix in the 0-1 programming formulation by dividing it by the problem size (rows times columns). The results of reductions are given in terms of the reduced problem size, stated as remaining rows by remaining columns, and in terms of the reduced coverage list size. Finally, percentage reductions in problem size and list length are given. For example, the reductions code applied to the 55 node problem of Swain for S = 10 and L = 2 took 0.09 seconds and resulted in a reduced problem of 23 rows and 28 columns and a reduced coverage list of 150 entries (see Table 1). This corresponds to a problem size reduction of 79 percent and a list size reduction of 72 percent. Overall, percentage problem size reductions ranged from 42 percent to 100 percent over all four data sets and problems evaluated, amounts that will significantly enhance any follow-up solution procedure that one elects to use.

TABLE 1
Results of the ML-LSCP reductions process applied to the 55 node problem of Washington, D.C. (original problem size 55 x 55).

| S | L Level | Size of cover list | Reduced problem size | Reduced cover list size | % problem size reduction | % list size reduction | Reductions time (seconds) |
|---|---|---|---|---|---|---|---|
| 15 | 1 | 1075 | 0 x 0 | 0 | 100 | 100 | 0.12 |
| 15 | 2 | 1075 | 16 x 21 | 104 | 89 | 90 | 0.28 |
| 15 | 3 | 1075 | 30 x 41 | 446 | 59 | 59 | 0.42 |
| 15 | 4 | 1075 | 31 x 47 | 536 | 52 | 50 | 0.49 |
| 12 | 1 | 739 | 0 x 0 | 0 | 100 | 100 | 0.07 |
| 12 | 2 | 739 | 25 x 24 | 229 | 80 | 69 | 0.14 |
| 12 | 3 | 739 | 28 x 45 | 337 | 58 | 54 | 0.14 |
| 12 | 4 | 739 | 22 x 29 | 246 | 79 | 67 | 0.12 |
| 10 | 1 | 541 | 5 x 6 | 12 | 99 | 98 | 0.07 |
| 10 | 2 | 541 | 23 x 28 | 150 | 79 | 72 | 0.09 |
| 10 | 3 | 541 | 31 x 36 | 289 | 63 | 47 | 0.02 |
| 10 | 4 | 541 | 6 x 5 | 20 | 99 | 96 | 0.05 |

TABLE 2
Results of the ML-LSCP reductions process applied to the 79 node problem of Zarzal, Colombia (original problem size 79 x 79).

| S | L Level | Size of cover list | Reduced problem size | Reduced cover list size | % problem size reduction | % list size reduction | Reductions time (seconds) |
|---|---|---|---|---|---|---|---|
| 30 | 1 | 787 | 0 x 0 | 0 | 100 | 100 | 0.01 |
| 30 | 2 | 787 | 0 x 0 | 0 | 100 | 100 | 0.01 |
| 20 | 1 | 447 | 0 x 0 | 0 | 100 | 100 | 0.01 |
| 20 | 2 | 447 | 0 x 0 | 0 | 100 | 100 | 0.01 |
| 12 | 1 | 289 | 0 x 0 | 0 | 100 | 100 | 0.01 |
| 12 | 2 | 289 | 0 x 0 | 0 | 100 | 100 | 0.01 |

TABLE 3
Results of the ML-LSCP reductions process applied to the 150 node problem of London, Ontario (original problem size 150 x 150).

| S | L Level | Size of cover list | Reduced problem size | Reduced cover list size | % problem size reduction | % list size reduction | Reductions time (seconds) |
|---|---|---|---|---|---|---|---|
| 500 | 1 | 15288 | 0 x 0 | 0 | 100 | 100 | 0.93 |
| 500 | 2 | 15288 | 0 x 0 | 0 | 100 | 100 | 0.94 |
| 500 | 3 | 15288 | 0 x 0 | 0 | 100 | 100 | 0.94 |
| 350 | 1 | 9706 | 22 x 25 | 152 | 97 | 98 | 68.15 |
| 350 | 2 | 9706 | 51 x 67 | 1389 | 85 | 86 | 79.15 |
| 350 | 3 | 9706 | 63 x 116 | 3264 | 68 | 66 | 99.18 |
| 200 | 1 | 3972 | 63 x 68 | 1076 | 81 | 73 | 13.34 |
| 200 | 2 | 3972 | 102 x 118 | 2807 | 47 | 29 | 24.40 |
| 200 | 3 | 3972 | 102 x 123 | 2918 | 44 | 27 | 32.81 |

TABLE 4
Results of the ML-LSCP reductions process applied to the 372 node problem of the Basin of Mexico (original problem size 372 x 372).

| S | L Level | Size of cover list | Reduced problem size | Reduced cover list size | % problem size reduction | % list size reduction | Reductions time (seconds) |
|---|---|---|---|---|---|---|---|
| 1.0 | 1 | 4064 | 254 x 309 | 2382 | 43 | 41 | 3.72 |
| 1.0 | 2 | 4064 | 254 x 310 | 2388 | 43 | 41 | 2.30 |
| 1.0 | 3 | 4064 | 254 x 317 | 2422 | 42 | 40 | 2.36 |
| 0.5 | 1 | 3445 | 112 x 103 | 293 | 92 | 91 | 0.22 |
| 0.5 | 2 | 3445 | 112 x 186 | 495 | 85 | 86 | 0.28 |
| 0.5 | 3 | 3445 | 112 x 278 | 717 | 78 | 79 | 0.34 |

Since the reductions code can solve one-level coverage problems (i.e., the LSCP), it is useful to compare the percentage reductions achieved for L = 1 and L > 1 problems. In many of our results, the amount of reduction achieved for L = 1 problems is larger than that achieved at higher L levels. However, no clear pattern emerges that would allow one to draw general conclusions. The amount of reduction achieved seems to depend on characteristics of the data set and the coverage distance used. Reductions tended to be lowest for the 372 node problems, especially for S = 1.0. Inspection of the reduced coverage matrices associated with these problems indicated a large number of almost equivalent rows and columns, each varying from others by one or two elements, depicting a large cyclic-type matrix that was not reducible. Reductions were generally the highest for problems that were relatively sparse, i.e., the 79 node problem. The 79 node network is almost a tree network with few cycles, and this probably helps to explain why reductions were complete for every problem.

Overall, one can conclude that the reductions procedures presented in this paper can be quite effective at reducing problem size for the ML-LSCP. Even though the reductions code was not optimized for efficiency, most of the computation times were less than a second. However, several of the solution times for the 150 node problem exceeded a minute. Virtually all of the time for such applications involved searching for type 4 column domination. The type 4 column domination test did not significantly add to overall reductions, so there may be problems where such a test is not warranted, either because of its limited effectiveness or because of the computational effort needed.

6. CONCLUSION

The classic Location Set Covering Model involves identifying the smallest number of needed facilities and their locations such that each demand is covered. This paper addresses an extended version of the LSCP called the Multi-level Location Set Covering Problem. This model was envisioned even at the outset of the development of the LSCP (Toregas 1970). The ML-LSCP involves finding a minimum number of facilities needed to cover each demand a preset number of times.
We assumed that the facilities cannot be co-located and must be distributed at most one per site. This type of model fits well into the framework of reserve site selection for the protection of imperiled species (Underhill 1994; Chaplin et al. 2000). Although the multi-level set covering problem has been the subject of some research, no one has addressed the applicability of reductions rules to the ML-LSCP. Toregas and ReVelle (1972; 1973) and Roth (1969) discovered reduction rules that could help solve the classic LSCP model. We have attempted to open the frontier by expanding the reductions process to the ML-LSCP. First, we described why the LSCP reductions rules could not be applied to the ML-LSCP. Then, we proposed a new set of expanded rules that can be applied to the ML-LSCP, building upon the earlier work of Toregas and ReVelle (1972; 1973). Finally, we presented a set of applications of the reductions process. The reductions algorithm developed in this paper was found to reduce a number of problems and in a few cases to solve a problem in its entirety. We conclude that all applications of the ML-LSCP should consider adding a reductions process as the first step.

The focus of this paper has been on the solution of the ML-LSCP where each site could be selected at most once, which makes sense for an application like the reserve site selection problem, or even a personnel selection problem for inspection teams (see Klimberg, ReVelle, and Cohon 1991). For the case of emergency equipment, it may be realistic to allow placement of multiple units at a given location. This type of decision policy goes beyond the scope of this paper. It would seem important to examine how reduction rules could be expanded for this problem as well.

LITERATURE CITED

Batta, R., and N. R. Mannur (1990). “Covering-Location Models for Emergency Situations that Require Multiple Response Units.” Management Science 36, 16-23.

Beasley, J. E. (1987). “An Algorithm for the Set Covering Problem.” European Journal of Operational Research 31, 85-93.

Chaplin, S. J., R. A. Gerrard, H. M. Watson, L. L. Master, and S. R. Flack (2000). “The Geography of Imperilment: Targeting Conservation Towards Critical Biodiversity Areas.” In Precious Heritage: The Status of Biodiversity in the United States, edited by B. A. Stein, L. S. Kutner, and J. S. Adams. New York: Oxford University Press.

Christofides, N., and Paxao (1993). “Algorithms for Large Scale Set Covering Problems.” Annals of Operations Research 13, 261-277.

Church, R. L., and M. E. Meadows (1979). “Location Modeling Utilizing Maximum Distance Criteria.” Geographical Analysis 11, 358-73.

Church, R., and C. ReVelle (1974). “The Maximal Covering Location Problem.” Papers of the Regional Science Association 32, 101-18.

Church, R. L., and J. R. Weaver (1986). “Theoretical Links Between Median and Coverage Location Problems.” Annals of Operations Research 6, 1-19.

Chvatal, V. (1979). “A Greedy Heuristic for the Set-Covering Problem.” Mathematics of Operations Research 26, 233-35.

Current, J., and M. O’Kelly (1992). “Locating Emergency Warning Sirens.” Decision Sciences 23, 221-34.

Current, J., and D. Schilling (1990). “Analysis of Errors Due to Demand Data Aggregation in Set Covering and Maximal Covering Location Problems.” Geographical Analysis 22, 116-26.

Daskin, M. (1983). “A Maximum Expected Covering Location Model: Formulation, Properties and Heuristic Solution.” Transportation Science 17, 48-70.

Daskin, M. S., K. Hogan, and C. ReVelle (1988). “Integration of Multiple, Excess, Backup, and Expected Covering Models.” Environment and Planning B: Planning and Design 15, 15-35.

Downs, B. T., and J. D. Camm (1996). “An Exact Algorithm for the Maximal Covering Problem.” Naval Research Logistics 43, 435-61.

Eaton, D., R. L. Church, V. L. Bennett, B. L. Hamon, and L. G. V. Lopez (1981). “On the Deployment of Health Resources in Rural Valle Del Cauca, Colombia.” TIMS Studies in the Management Sciences 17, 331-59.

Feo, T. A., and M. G. C. Resende (1989). “A Probabilistic Heuristic for a Computationally Difficult Set Covering Problem.” Operations Research Letters 8, 67-71.

Garfinkel, R. S., and G. L. Nemhauser (1972). Integer Programming. New York: John Wiley and Sons, Inc.

Haddadi, S. (1997). “Simple Lagrangian Heuristic for the Set Covering Problem.” European Journal of Operational Research 97, 200-204.

Hall, N. G., and D. S. Hochbaum (1992). “The Multicovering Problem.” European Journal of Operational Research 62, 323-39.

Hogan, K., and C. ReVelle (1986). “Concepts and Applications of Backup Coverage.” Management Science 32, 1434-44.

Klimberg, R., C. ReVelle, and J. Cohon (1991). “A Multiobjective Approach to Evaluating and Planning the Allocation of Inspection Resources.” European Journal of Operational Research 52, 55-64.

Roth, R. (1969). “Computer Solutions to Minimum-Cover Problems.” Operations Research 17, 455-65.

Ruggles, A., and R. L. Church (1996). “Spatial Allocation in Archaeology: An Opportunity for Re-evaluation.” In New Methods, Old Problems: Geographic Information Systems in Modern Archaeological Research, edited by H. D. G. Maschner. Carbondale: Southern Illinois University Press.

Swain, R. (1971). “A Decomposition Algorithm for a Class of Facility Location Problems.” Ph.D. diss., Cornell University.

Toregas, C. (1970). “A Covering Formulation for the Location of Public Service Facilities.” M.S. thesis, Cornell University.

Toregas, C. (1971). “Location Under Maximal Travel Time Constraints.” Ph.D. diss., Cornell University.

Toregas, C., and C. ReVelle (1972). “Optimal Location Under Time or Distance Constraints.” Papers of the Regional Science Association 28, 133-43.

Toregas, C., and C. ReVelle (1973). “Binary Logic Solutions to a Class of Location Problem.” Geographical Analysis 5, 145-55.

Underhill, L. (1994). “Optimal and Suboptimal Reserve Selection Algorithms.” Biological Conservation 70, 85-87.

Van Slyke, R. (1981). “Covering Problems in C3I Systems.” Technical Report #8012, Department of Electrical Engineering and Computer Science, Stevens Institute of Technology, Hoboken, N.J.

Van Slyke, R. (1982). “Redundant Set Covering in Telecommunications Networks.” In Proceedings IEEE Large Scale Systems Symposium, Virginia Beach, Va., October 11-13, 217-22.

Vasko, F., and G. Wilson (1984). “An Efficient Heuristic for Large Set Covering Problems.” Naval Research Logistics Quarterly 32, 163-71.

Vasko, F., and G. Wilson (1986). “Hybrid Heuristics for Minimum Cardinality Set Covering Problems.” Naval Research Logistics Quarterly 33, 241-49.

Waters, N. (1977). “Methodology for Servicing the Geography of Urban Fire: An Exploration with Special Reference to London, Ontario.” Ph.D. diss., University of Western Ontario.

Williams, J. C., and C. S. ReVelle (1997). “Applying Mathematical Programming to Reserve Selection.” Environmental Modeling and Assessment 2, 167-75.

Xiaoming, L., and R. Van Slyke (1984). “Primal-Dual Heuristics for Multiple Set Covering.” Congressus Numerantium 41, 63-73.