
A Geometric Analysis of the AWGN Channel with a (σ, ρ)-Power Constraint

Varun Jog
EECS, UC Berkeley
Berkeley, CA-94720
Email: varunjog@eecs.berkeley.edu

Venkat Anantharam
EECS, UC Berkeley
Berkeley, CA-94720
Email: ananth@eecs.berkeley.edu

Abstract—We consider the additive white Gaussian noise

(AWGN) channel with a (σ, ρ)-power constraint, which is

motivated by energy harvesting communication systems. This

constraint imposes a limit of σ + kρ on the total power of

any k ≥ 1 consecutive transmitted symbols in a codeword. We

analyze the capacity of this channel geometrically, by considering

the set Sn (σ, ρ) ⊆ Rn which is the set of all n-length sequences

satisfying the (σ, ρ)-power constraints. For a noise power of

ν, we obtain an upper bound on capacity by considering the

volume of the Minkowski sum of Sn (σ, ρ) and the n-dimensional

Euclidean ball of radius √(nν). We analyze this bound using

a result from convex geometry known as Steiner’s formula,

which gives the volume of this Minkowski sum in terms of the

intrinsic volumes of Sn (σ, ρ). We show that as n increases, the

logarithms of the intrinsic volumes of {Sn (σ, ρ)} converge to a

limit function under an appropriate scaling. An upper bound on

capacity is obtained in terms of the limit function, thus pinning

down the asymptotic capacity of the (σ, ρ)-power constrained

AWGN channel in the low-noise regime. We derive stronger

results when σ = 0, corresponding to the amplitude-constrained

AWGN channel.

Keywords: Additive white Gaussian noise, Shannon capacity,

energy harvesting, Steiner’s formula, intrinsic volumes.

I. INTRODUCTION

The additive white Gaussian noise (AWGN) channel is one

of the most basic channel models in information theory. This

channel is represented by a sequence of channel inputs denoted

by Xi , and an input-independent additive noise Zi . The noise

variables Zi are assumed to be independent and identically

distributed as N (0, ν). The channel output Yi is given by

Yi = Xi + Zi for i ≥ 1.

(1)

The Shannon capacity of this channel is infinite when there

are no constraints on the channel inputs Xi ; however, practical

considerations always constrain the input in some manner. The

input constraints are often defined in terms of the power of the

input. For a channel input (x1 , x2 , . . . , xn ), the most common

power constraints encountered are:

(AP): An average power constraint of P > 0, which says that

$$\sum_{i=1}^{n} x_i^2 \le nP.$$

(PP): A peak power constraint of A > 0, which says that

|xi | ≤ A, for all 1 ≤ i ≤ n.

The AWGN channel with the (AP) constraint was first

analyzed by Shannon [1]. Shannon showed that the capacity

C for this constraint¹ is given by

$$C = \sup_{E[X^2] \le P} I(X; Y) = \frac{1}{2} \log\left(1 + \frac{P}{\nu}\right), \tag{2}$$

and the supremum is attained when X ∼ N (0, P ).

Compared to the (AP) constraint, fewer results exist about

the (PP) constrained AWGN. Smith [2] showed that the

channel capacity C is given by

$$C = \sup_{|X| \le A} I(X; Y). \tag{3}$$

Unlike the (AP) case, the supremum in equation (3) does not

have a closed-form expression. Smith used tools from complex

analysis to show that the supremum is achieved by a discrete,

finitely-supported distribution, and proposed an algorithm to

numerically evaluate the optimal distribution, and thereby the

capacity. Similar results were obtained for the quadrature

Gaussian channel by Shamai & Bar-David [3].

In this paper, we consider the following power constraint

called the (σ, ρ)-power constraint:

Definition 1. Let σ, ρ ≥ 0. A codeword (x1 , x2 , . . . , xn )

satisfies a (σ, ρ)-power constraint if

$$\sum_{j=k+1}^{l} x_j^2 \le \sigma + (l - k)\rho, \quad \forall\, 0 \le k < l \le n. \tag{4}$$

This constraint was first introduced in an earlier work [4],

and is motivated by an energy harvesting channel. For a

transmitter that harvests ρ units of energy per time slot and is

equipped with a battery of capacity σ, the power constraint on

transmitted sequences is precisely the (σ, ρ)-power constraint.
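Definition 1 can be checked directly for a given sequence. The following is a minimal sketch, not taken from the paper: the function name `satisfies_sigma_rho` and the tolerance `tol` are illustrative assumptions. It uses prefix sums of the squared symbols so that each of the windows in (4) is tested in constant time.

```python
from itertools import accumulate


def satisfies_sigma_rho(x, sigma, rho, tol=1e-12):
    """Return True if x meets constraint (4) for every window 0 <= k < l <= n.

    `tol` is a small slack for floating-point round-off (an assumption of
    this sketch, not part of Definition 1).
    """
    n = len(x)
    # prefix[m] = x_1^2 + ... + x_m^2, with prefix[0] = 0
    prefix = [0.0] + list(accumulate(v * v for v in x))
    return all(
        prefix[l] - prefix[k] <= sigma + (l - k) * rho + tol
        for k in range(n)
        for l in range(k + 1, n + 1)
    )
```

For example, with σ = 2 and ρ = 1 the sequence (1.5, 1.0) is admissible, whereas (1.5, 1.5) is not: each symbol alone fits within σ + ρ = 3, but the two together use 4.5 > σ + 2ρ = 4.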

Let Sn (σ, ρ) be the set of all n-length sequences satisfying

the (σ, ρ)-power constraint. We look at the growth rate of the

volume of the sequence {Sn (σ, ρ)}, defined by

$$v(\sigma, \rho) := \lim_{n \to \infty} \frac{\log \mathrm{Vol}(S_n(\sigma, \rho))}{n}, \tag{5}$$

where the limit exists due to subadditivity. Theorem 2 in [4] established that the Shannon capacity C of a (σ, ρ)-power constrained AWGN channel with noise power ν satisfies

$$\frac{1}{2} \log\left(1 + \frac{e^{2v(\sigma,\rho)}}{2\pi e \nu}\right) \le C \le \frac{1}{2} \log\left(1 + \frac{\rho}{\nu}\right). \tag{6}$$

¹ All logarithms in this paper are to base e.

The upper bound is simply the channel capacity when σ = ∞

and the battery is initially empty [5], and the lower bound

is obtained via a simple application of the entropy power

inequality. The upper bound is not satisfactory as it does

not depend on σ. Furthermore, the lower and upper bounds

do not converge asymptotically as ν → 0: the lower bound

is v(σ, ρ) − (1/2) log 2πeν + O(ν) and the upper bound is

(1/2) log(ρ/ν) + O(ν), and they differ by O(1). This implies that at

least one of these bounds is loose in the low-noise regime.

One might expect the upper bound to be loose, since it

disregards the effects of a finite value of σ on the capacity.
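The O(1) gap can be made concrete in the one case where v(σ, ρ) is known in closed form in this paper: for σ = 0 the set Sn (σ, ρ) is the cube [−√ρ, √ρ]^n, so v(0, ρ) = log(2√ρ). The sketch below (function names are illustrative assumptions) evaluates both sides of (6) in nats and shows the gap settling at (1/2) log(πe/2) as ν → 0.

```python
import math


def lower_bound(v, nu):
    """Left-hand side of (6): 0.5 * log(1 + e^{2v} / (2 pi e nu)), in nats."""
    return 0.5 * math.log1p(math.exp(2.0 * v) / (2.0 * math.pi * math.e * nu))


def upper_bound(rho, nu):
    """Right-hand side of (6): 0.5 * log(1 + rho / nu), in nats."""
    return 0.5 * math.log1p(rho / nu)


def gap_sigma_zero(rho, nu):
    """Upper minus lower bound when sigma = 0, where v = log(2 sqrt(rho))."""
    v = math.log(2.0 * math.sqrt(rho))
    return upper_bound(rho, nu) - lower_bound(v, nu)


if __name__ == "__main__":
    for nu in (1e-2, 1e-4, 1e-6, 1e-8):
        print(f"nu={nu:.0e}  gap={gap_sigma_zero(1.0, nu):.6f} nats")
    print("limit:", 0.5 * math.log(math.pi * math.e / 2.0))
```

The limiting gap does not depend on ρ, since the log ρ terms in the two bounds cancel.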

To rigorize this intuition, it is useful to think of coding with

the (σ, ρ)-constraints as trying to fit the largest number of

centers of noise balls into Sn (σ, ρ) such that the noise balls

are asymptotically approximately disjoint. As the noise power

ν decreases, so does the size of the noise balls, so one can

imagine a very efficient packing of the small balls such that

they occupy almost all the space. The total number of balls is

then roughly

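$$\#\text{ of balls} \approx \frac{\mathrm{Vol}(S_n(\sigma, \rho))}{\mathrm{Vol}(\text{Noise ball})}, \tag{7}$$

so that the capacity is roughly

$$\frac{1}{n} \log(\#\text{ of balls}) = \frac{1}{n} \log \frac{\mathrm{Vol}(S_n(\sigma, \rho))}{\mathrm{Vol}(\text{Noise ball})} \tag{8}$$

$$\approx v(\sigma, \rho) - \frac{1}{2} \log 2\pi e \nu. \tag{9}$$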
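The step from (8) to (9) rests on the noise ball, taken here to be the n-dimensional ball of radius √(nν), having volume growth rate (1/2) log 2πeν. A small numerical sketch of this fact follows; the helper names are assumptions, and `math.lgamma` is used so that the volume never has to be formed explicitly.

```python
import math


def log_ball_volume(n, radius):
    """log of the volume of an n-dimensional Euclidean ball of given radius:
    Vol = pi^{n/2} / Gamma(n/2 + 1) * radius^n."""
    return 0.5 * n * math.log(math.pi) - math.lgamma(0.5 * n + 1.0) + n * math.log(radius)


def noise_ball_rate(n, nu):
    """(1/n) log Vol(B_n(sqrt(n nu)))."""
    return log_ball_volume(n, math.sqrt(n * nu)) / n


if __name__ == "__main__":
    nu = 0.1
    for n in (10, 1000, 100000):
        print(n, noise_ball_rate(n, nu))
    print("limit:", 0.5 * math.log(2.0 * math.pi * math.e * nu))
```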

Expression (9) approximately equals the lower bound in (6)

for small values of ν. Turning this intuition into a proof is

nontrivial. Firstly, the intuitive explanation above ignores the

evolution of Sn (σ, ρ) with the dimension n. For instance,

consider the equations (7)-(9), where Sn (σ, ρ) is replaced by

the set Kn , given by

$$K_n = \left[-\frac{A}{2}, \frac{A}{2}\right]^{n-1} \times \left[-\frac{1}{2^{n-1}}, \frac{1}{2^{n-1}}\right].$$

In this case, equations (7)-(9) might suggest that the asymptotic capacity is approximately log(A/2) − (1/2) log 2πeν, rather

than log A − (1/2) log 2πeν. Secondly, since we are only packing

the centers of noise balls in Sn (σ, ρ) and not balls themselves,

equation (8) is not entirely accurate.
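To see where the log(A/2) in the Kn example comes from, one can compute the volume growth rate of Kn directly. The sketch below assumes Kn is the product of n − 1 intervals of length A and one interval of length 2/2^(n−1), which is how the example is read here; the function name is hypothetical.

```python
import math


def log_vol_K(n, A):
    """log Vol(K_n), assuming K_n = [-A/2, A/2]^(n-1) x [-2^-(n-1), 2^-(n-1)].

    The first n-1 sides have length A; the last side has length 2 / 2^(n-1).
    """
    return (n - 1) * math.log(A) + math.log(2.0) - (n - 1) * math.log(2.0)


if __name__ == "__main__":
    A = 3.0
    for n in (10, 1000, 100000):
        print(n, log_vol_K(n, A) / n)
    print("log(A/2) =", math.log(A / 2.0), " log A =", math.log(A))
```

The single shrinking coordinate drags the volume rate down by log 2, even though it has a negligible effect on how many codewords the remaining n − 1 coordinates can carry.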

In Section II of this paper, we make equations (7)-(9)

precise by establishing a new upper bound on capacity that is

asymptotically equal to expression (9). As in [4], our approach

involves a volume calculation; however, the improved upper

bound is in terms of the volume of the Minkowski sum of

Sn (σ, ρ) and a “noise ball.” We analyze this bound for the

special case of σ = 0 in Section III where it can be evaluated

in a closed form. We then consider the general case of σ > 0 in

Section IV. Although the upper bound does not admit a closed

form expression in this case, it is still possible to establish the

correctness of the asymptotic capacity expression in equation

(9) in the low-noise regime.

II. VOLUME BASED UPPER BOUND ON CAPACITY

Let Bn(√(nν)) denote the n-dimensional Euclidean ball of radius √(nν). The Minkowski sum of Sn (σ, ρ) and Bn(√(nν)) is denoted by Sn (σ, ρ) ⊕ Bn(√(nν)) and is the set

$$\{x^n + z^n \mid x^n \in S_n(\sigma, \rho),\ z^n \in B_n(\sqrt{n\nu})\}.$$
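For intuition about Minkowski sums, consider the special case in which the constraint set is a cube [−A, A]^n (the σ = 0 case of Section III). A point lies in the sum of the cube and a ball of radius r exactly when its Euclidean distance to the cube is at most r. The sketch below uses this to estimate the area of the sum for n = 2 on a grid and compares it with the classical value 4A² + 8Ar + πr² (area, plus perimeter times r, plus a disc). The function names and the grid resolution are assumptions made for illustration.

```python
import math


def in_minkowski_sum(y, A, r):
    """True if y lies in [-A, A]^n (+) B_n(r), i.e. dist(y, cube) <= r."""
    excess_sq = sum(max(abs(c) - A, 0.0) ** 2 for c in y)
    return excess_sq <= r * r


def grid_area(A, r, cells=300):
    """Midpoint-grid estimate of the area of [-A, A]^2 (+) B_2(r)."""
    half = A + r                      # the sum fits inside [-half, half]^2
    h = 2.0 * half / cells
    count = 0
    for i in range(cells):
        for j in range(cells):
            p = (-half + (i + 0.5) * h, -half + (j + 0.5) * h)
            if in_minkowski_sum(p, A, r):
                count += 1
    return count * h * h


if __name__ == "__main__":
    A, r = 1.0, 0.5
    exact = 4 * A * A + 8 * A * r + math.pi * r * r
    print("grid estimate:", grid_area(A, r), " closed form:", exact)
```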

Theorem 1. The capacity C of an AWGN channel with a

(σ, ρ)-power constraint and noise power ν satisfies

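$$C \le \lim_{\epsilon \to 0^+} \limsup_{n \to \infty} \frac{1}{n} \log \mathrm{Vol}\left(S_n(\sigma, \rho) \oplus B_n\left(\sqrt{n(\nu + \epsilon)}\right)\right) - \frac{1}{2} \log 2\pi e \nu. \tag{10}$$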

Proof: For n ∈ N, let Fn be the set of all probability

distributions supported on Sn (σ, ρ). The channel capacity

(Theorem 1, [4]) is

$$C = \lim_{n \to \infty} \sup_{p_{X^n}(x^n) \in F_n} \frac{1}{n} I(X^n; Y^n). \tag{11}$$

p

Let pX n (xn ) ∈ Fn . Denote Sn (σ, ρ) ⊕ Bn(√(n(ν + ε))) by Cn . Let ε > 0, and let δn := P (Y n ∉ Cn ). By the law of large numbers, we have δn → 0. Let χ be the indicator variable for the event {Y n ∈ Cn }. Then

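$$h(Y^n) = H(\delta_n) + \bar\delta_n\, h(Y^n \mid \chi = 1) + \delta_n\, h(Y^n \mid \chi = 0)$$

$$\le H(\delta_n) + \bar\delta_n \log \mathrm{Vol}(C_n) + \delta_n\, h(Y^n \mid \chi = 0), \tag{12}$$

where ā = 1 − a. Since ‖X^n‖² ≤ σ + nρ with probability 1, we have the following bound on the power of Y^n:

$$E[\|Y^n\|^2] = E[\|X^n\|^2] + E[\|Z^n\|^2] \le \sigma + n\rho + n\nu.$$

This translates to the bound

$$E[\|Y^n\|^2 \mid \chi = 0] \le \frac{n(\rho + \nu + \sigma/n)}{\delta_n},$$

so

$$h(Y^n \mid \chi = 0) \le \frac{n}{2} \log \frac{2\pi e(\rho + \nu + \sigma/n)}{\delta_n}.$$

Substituting into inequality (12) and dividing by n gives

$$\frac{h(Y^n)}{n} \le \frac{H(\delta_n)}{n} + \bar\delta_n \frac{\log \mathrm{Vol}(C_n)}{n} + \frac{\delta_n}{2} \log \frac{2\pi e(\rho + \nu + \sigma/n)}{\delta_n}.$$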

Since this holds for any choice of pX n ∈ Fn , we obtain

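$$\sup_{p_{X^n} \in F_n} \frac{1}{n} h(Y^n) \le \frac{H(\delta_n)}{n} + \bar\delta_n \frac{\log \mathrm{Vol}(C_n)}{n} + \frac{\delta_n}{2} \log \frac{2\pi e(\rho + \nu + \sigma/n)}{\delta_n}.$$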

Taking the limsup in n, we arrive at

$$\limsup_{n \to \infty} \sup_{p_{X^n} \in F_n} \frac{1}{n} h(Y^n) \le \limsup_{n \to \infty} \frac{\log \mathrm{Vol}(C_n)}{n} = \limsup_{n \to \infty} \frac{\log \mathrm{Vol}\left(S_n(\sigma, \rho) \oplus B_n\left(\sqrt{n(\nu + \epsilon)}\right)\right)}{n}.$$

Taking the limit as ε → 0+ and noting that capacity is lim_{n→∞} sup_{pX n ∈ Fn} (1/n) h(Y n ) − (1/2) log 2πeν, we arrive at the bound in expression (10).

We define a function ℓ : [0, ∞) → R, as the growth rate of volume of the Minkowski sum, as follows:

$$\ell(\nu) := \limsup_{n \to \infty} \frac{1}{n} \log \mathrm{Vol}\left(S_n(\sigma, \rho) \oplus B_n(\sqrt{n\nu})\right). \tag{13}$$

The upper bound may be restated as

$$C \le \lim_{\epsilon \to 0^+} \ell(\nu + \epsilon) - \frac{1}{2} \log 2\pi e \nu. \tag{14}$$

Although ℓ is clearly monotonically increasing, it is not clear a priori whether ℓ is continuous. Note that since ℓ(0) = v(σ, ρ), the continuity of ℓ at 0 leads to an asymptotic upper bound as in expression (9). To study the properties of ℓ, we use a result from convex geometry known as Steiner’s formula [6]:

Theorem 2 (Steiner’s formula). Let Kn ⊆ Rn be a compact convex set and let Bn ⊆ Rn be the unit ball. Let µj (Kn ) denote the j-th intrinsic volume of Kn and let εj = Vol(Bj ). Then

$$\mathrm{Vol}(K_n \oplus tB_n) = \sum_{j=0}^{n} \mu_{n-j}(K_n)\, \epsilon_j\, t^j, \quad \forall t \ge 0. \tag{15}$$

Intrinsic volumes are fundamental to convex and integral geometry. They describe the global characteristics of a set, including the volume, surface area, mean width, and Euler characteristic. For more details, we refer the reader to Schneider [7] and Section 14.2 of Schneider & Weil [8]. Steiner’s formula states that Vol(Sn (σ, ρ) ⊕ Bn(√(nν))) depends not only on the volumes of these sets, but also on the intrinsic volumes. Intrinsic volumes are notoriously hard to compute even for simple sets such as polytopes [6], so it is optimistic to expect a closed form expression for the intrinsic volumes of Sn (σ, ρ). Furthermore, the sets {Sn (σ, ρ)} evolve with the dimension n, so it is also important to keep track of the evolution of the intrinsic volumes in order to compute the overall volume via Steiner’s formula.

The case σ = 0 is special, since Sn (σ, ρ) is equal to the cube [−√ρ, √ρ]^n. Then the intrinsic volumes have a simple closed form. We focus on this case in the next section.

III. THE CASE OF σ = 0

To simplify notation, we denote A := √ρ. We consider the scalar AWGN channel with noise power ν and an input amplitude constraint of A. Let the capacity of this channel be C. Recall that the function ℓ(ν) is defined as

$$\ell(\nu) = \limsup_{n \to \infty} \frac{1}{n} \log \mathrm{Vol}\left([-A, A]^n \oplus B_n(\sqrt{n\nu})\right), \tag{16}$$

and the upper bound on channel capacity is as in inequality (14). The main result of this section is as follows:

Theorem 3. The function ℓ(ν) is continuous on [0, ∞). For ν > 0, we have

$$\ell(\nu) = H(\theta^*) + (1 - \theta^*) \log 2A + \frac{\theta^*}{2} \log \frac{2\pi e \nu}{\theta^*}, \tag{17}$$

where H is the binary entropy function and θ∗ ∈ (0, 1) is the unique solution to

$$\frac{(1 - \theta^*)^2}{{\theta^*}^3} = \frac{2A^2}{\pi \nu}.$$

Proof of Theorem 3: We describe the proof strategy; for details, see [9]. Let An := [−A, A]^n, Bn := Bn(√(nν)), and Cn := An ⊕ Bn . Then

$$C_m \times C_n \subseteq C_{m+n}, \quad \text{for all } m, n \ge 1,$$

since {An } and {Bn } themselves satisfy such an inclusion property. Hence, the sequence {log Vol(Cn )} is super-additive, and ℓ(ν) given by the limit lim_n log Vol(Cn )/n is well-defined.

Using Steiner’s formula, we express the volume of Cn as

$$\mathrm{Vol}(C_n) = \sum_{j=0}^{n} \binom{n}{j} (2A)^{n-j}\, \epsilon_j\, (\sqrt{n\nu})^j. \tag{18}$$

Since the volume is a sum of n + 1 terms, the exponential growth rate of the volume is determined by the growth rate of the largest term amongst the n + 1 terms. To formalize this notion, we define the function f_n^ν(θ), for 0 ≤ θ ≤ 1, as

$$f_n^\nu(\theta) := \frac{1}{n} \log \left[ \frac{\Gamma(n + 1)}{\Gamma(n\bar\theta + 1)\,\Gamma(n\theta + 1)}\, (2A)^{n\bar\theta}\, \frac{\pi^{n\theta/2}}{\Gamma(n\theta/2 + 1)}\, (\sqrt{n\nu})^{n\theta} \right],$$

where θ̄ denotes 1 − θ, and write

$$\mathrm{Vol}(C_n) = \sum_{j=0}^{n} e^{n f_n^\nu(j/n)}.$$

The largest term is e^{n f_n^ν(θ̂n)}, where

$$\hat\theta_n = \arg\max_{j/n} f_n^\nu(j/n). \tag{19}$$

We thus have

$$\lim_{n} f_n^\nu(\hat\theta_n) = \ell(\nu).$$

The key step in our proof is to show that the sequence {f_n^ν} converges uniformly on [0, 1] to a limit function f^ν, given by

$$f^\nu(\theta) = H(\theta) + \bar\theta \log 2A + \frac{\theta}{2} \log \frac{2\pi e \nu}{\theta}. \tag{20}$$

This uniform convergence enables us to prove that

$$\ell(\nu) = \lim_{n} f_n^\nu(\hat\theta_n) = \max_{\theta} f^\nu(\theta).$$

To prove the continuity of ℓ at points ν > 0, note that perturbing ν slightly does not change f^ν significantly. Thus, the value of the supremum, which is ℓ(ν), also does not change significantly. To prove continuity at 0, we differentiate f^ν and obtain θ∗(ν) = arg max_θ f^ν(θ) as the solution to

$$\frac{(1 - \theta^*)^2}{{\theta^*}^3} = \frac{2A^2}{\pi \nu}. \tag{21}$$

From equation (21), we note that ν → 0 implies θ∗ → 0 at the rate cν^{1/3}, where c is a constant. Substituting this into the expression for f^ν, we obtain

$$\ell(\nu) = \log 2A + O(\nu^{1/3}), \tag{22}$$

For n ≥ 1, denote the intrinsic volumes of Sn (σ, ρ) by

{µn (i)}ni=0 . Define Gn : R → R and gn : R → R as

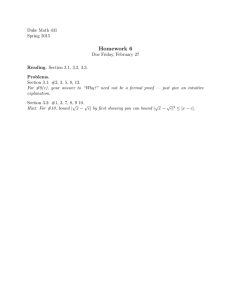

5

Lower bound

4.5

New upper bound

Bits/Channel Use

4

Gn (t) = log

Old upper bound

3.5

n

X

µn (j)ejt , gn (t) =

j=0

3

2.5

2

1.5

1

0.5

0

−4

−3

−2

−1

0

1

2

3

4

log(1/⌫)

Fig. 1. Plot showing the capacity bounds from inequality (6) along with

the new upper bound from Theorem 1, for amplitude A = 1. The new upper

bound and the lower bound converge asymptotically as ν → 0.

Gn (t)

.

n

(26)

Let Λ be the pointwise limit of the sequence of functions {gn },

and let Λ∗ be the convex conjugate of Λ. Then the following

hold:

1) `(ν) is continuous on [0, ∞).

2) For ν > 0,

2πeν

θ

∗

.

(27)

`(ν) = sup −Λ (1 − θ) + log

2

θ

θ∈[0,1]

Proof of Theorem 4: We again provide a proof sketch

and refer to [9] for the details. We first check that Sn (σ, ρ) is

convex and has well-defined intrinsic volumes. Using Steiner’s

formula for Sn (σ, ρ), we obtain

n

X

√

√

µn (n − j)j ( nν)j . (28)

Vol(Sn (σ, ρ) ⊕ Bn ( nν )) =

j=0

implying the continuity of ` at 0.

Corollary 3.1. As ν → 0, the capacity C of an AWGN channel

with amplitude constraint A satisfies

C = log 2A −

n

X

√

ν

Vol(Sn (σ, ρ) ⊕ Bn ( nν)) =

enfn (j/n) ,

1

log 2πeν + O(ν 1/3 ).

2

1

log 2πeν + O(ν 1/3 ).

2

(23)

The lower bound from inequality (6) leads to

C ≥ log 2A −

1

log 2πeν + O(ν).

2

(24)

Inequalities (23) and (24) establish the corollary.

We may use Theorem 3 to numerically evaluate θ∗ and

plot the corresponding upper bound from Theorem 1. Figure 1

shows the resulting plot, along with the bounds from inequality

(6). Note that the upper bound from expression (6) (“old

upper bound”) is not asymptotically tight in the low-noise

regime, but the bound from Theorem 1 (“new upper bound”)

is asymptotically tight.

IV. T HE CASE OF σ > 0

In this section, we parallel the upper-bounding technique

used in Section III for σ > 0. The set Sn (σ, ρ) is no longer an

easily identifiable set and the intrinsic volumes of Sn (σ, ρ) do

not have a closed-form expression; however, it is still possible

to obtain similar results.

Our main theorem is the following:

Theorem 4. Define `(ν) as

`(ν) = lim sup

n→∞

√

1

log Vol(Sn (σ, ρ) ⊕ Bn ( nν )).

n

(29)

j=0

Proof: Using equation (22) along with Theorem 1 gives

C ≤ log 2A −

We argue that the growth rate of the volume depends only on

the growth rate of the largest amongst the n + 1 terms. As in

Section III, we would like to express the volume as

(25)

for a sequence of functions {fnν } defined over [0, 1]. Define a

function an (θ) by linearly interpolating the values of an (j/n),

where the value of an (j/n) is given by:

$$a_n\left(\frac{j}{n}\right) = \frac{1}{n} \log \mu_n(n - j) \quad \text{for } 0 \le j \le n. \tag{30}$$

The function bνn (θ) is given by

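$$b_n^\nu(\theta) = \frac{1}{n} \log \left[ \frac{\pi^{n\theta/2}}{\Gamma(n\theta/2 + 1)}\, (n\nu)^{n\theta/2} \right] \quad \text{for } \theta \in [0, 1]. \tag{31}$$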
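As a numerical sanity check on (31), the sketch below evaluates b_n^ν(θ) through `math.lgamma` and compares it with the function (θ/2) log(2πeν/θ), which the text identifies as its uniform limit. The function names are assumptions, and the value of the limit at θ = 0 is taken to be 0 by continuity.

```python
import math


def b_n(theta, n, nu):
    """Equation (31): (1/n) log[ pi^{m} / Gamma(m + 1) * (n nu)^{m} ], m = n*theta/2."""
    m = 0.5 * n * theta
    return (m * math.log(math.pi) - math.lgamma(m + 1.0) + m * math.log(n * nu)) / n


def b_limit(theta, nu):
    """Claimed limit (theta/2) log(2 pi e nu / theta), extended by 0 at theta = 0."""
    if theta == 0.0:
        return 0.0
    return 0.5 * theta * math.log(2.0 * math.pi * math.e * nu / theta)


def max_gap(n, nu, points=101):
    """Largest |b_n - limit| over an evenly spaced grid of theta in [0, 1]."""
    grid = [i / (points - 1) for i in range(points)]
    return max(abs(b_n(t, n, nu) - b_limit(t, nu)) for t in grid)


if __name__ == "__main__":
    for n in (10, 1000, 100000):
        print(n, max_gap(n, nu=0.1))
```

The maximum gap over the grid shrinks roughly like (log n)/n, which is consistent with the convergence being uniform in θ.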

Define fnν : [0, 1] → R as

fnν (θ) = an (θ) + bνn (θ).

(32)

This definition ensures that equation (29) is satisfied. The

uniform convergence of {b_n^ν} to (θ/2) log(2πeν/θ) may be shown in a

straightforward manner. The challenge is to show that {an (·)}

uniformly converge to the limit function −Λ∗ (1 − θ), which

yields uniform convergence of {fnν } to the desired expression

in (27). This convergence is established as follows:

1. We use the following crucial property:

Sm+n (σ, ρ) ⊆ Sm (σ, ρ) × Sn (σ, ρ).

(33)

The sequence of intrinsic volumes of the product set

Sm (σ, ρ) × Sn (σ, ρ) is given by the convolution of the

sequences µn (·) and µm (·) [6]. The monotonicity of

intrinsic volumes applied to the inclusion in (33) yields

$$\mu_{m+n} \le \mu_m \star \mu_n, \tag{34}$$

which then implies

$$G_{m+n} \le G_m + G_n. \tag{35}$$

The pointwise convergence of {gn } to a limit function Λ follows from the subadditivity in expression (35). We then use the Gärtner-Ellis theorem [10] from large deviations to show a “large deviations upper bound” on the measures {µn/n }, n ≥ 1, which are scaled versions of {µn } defined by µn/n (j/n) := µn (j). We show that

$$\limsup_{n \to \infty} \frac{1}{n} \log \mu_{n/n}(I) \le -\inf_{x \in I} \Lambda^*(x), \tag{36}$$

for any closed set I ⊆ R.

2. Let γ = ⌈σ/ρ⌉. It was shown in [4] that the sequence {Sn (σ, ρ)} satisfies another crucial structural property:

$$S_{m+n+2\gamma} \supseteq [S_m \times 0] \times [S_n \times 0], \tag{37}$$

where 0 is the zero vector of length γ. This property is used to establish a “large deviations lower bound” on the measures {µn/n }:

$$\liminf_{n \to \infty} \frac{1}{n} \log \mu_{n/n}(F) \ge -\inf_{x \in F} \Lambda^*(x), \tag{38}$$

for any open set F ⊆ R.

3. The steps above show that {µn/n } converge in the “large deviation sense,” with rate function Λ∗ . Such convergence does not generally imply uniform or even pointwise convergence of the linearly interpolated functions {an (·)}. We use the fact that for each n, the sequence µn (·) is log-concave [11]. This fact, combined with the large deviations convergence, may be used to prove uniform convergence of {an } to −Λ∗ (1 − θ).

Letting f^ν(θ) = −Λ∗ (1 − θ) + (θ/2) log(2πeν/θ), we follow a sequence of steps similar to those in Section III to arrive at

$$\ell(\nu) = \sup_{\theta} f^\nu(\theta). \tag{39}$$

The continuity of ℓ(ν) for ν > 0 follows by the same argument as in the proof of Theorem 3. Proving continuity at ν = 0 is more involved, since we do not have an explicit expression for θ∗ = arg max_θ f^ν(θ). We use the following facts: (a) for all ν > 0, we have f^ν(θ∗) ≥ f^ν(0) = v(σ, ρ); (b) f^ν(θ∗) → −∞ as ν → 0 if θ∗ is bounded away from 0, and conclude that θ∗ → 0 as ν → 0. We then use the continuity of Λ∗ and the fact that (θ/2) log(2πeν/θ) ≤ πν for all θ ∈ [0, 1] to obtain the limit f^ν(θ∗) → v(σ, ρ) as ν → 0.

Corollary 4.1. The capacity C of a (σ, ρ)-power constrained AWGN channel with noise power ν → 0 satisfies

$$C = v(\sigma, \rho) - \frac{1}{2} \log 2\pi e \nu + \epsilon(\nu), \tag{40}$$

where ε(·) is a function such that lim_{ν→0} ε(ν) = 0.

Proof: Using the lower bound in inequality (6), we have

$$C \ge \frac{1}{2} \log\left(1 + \frac{e^{2v(\sigma,\rho)}}{2\pi e \nu}\right) = v(\sigma, \rho) - \frac{1}{2} \log 2\pi e \nu + O(\nu). \tag{41}$$

By continuity of ℓ at 0, we have that as ν → 0,

$$\ell(\nu) = v(\sigma, \rho) + \epsilon(\nu)$$

for some ε(·) satisfying lim_{ν→0} ε(ν) = 0. Then

$$C \le v(\sigma, \rho) - \frac{1}{2} \log 2\pi e \nu + \epsilon(\nu). \tag{42}$$

Our claim follows from inequalities (41) and (42). Unlike the case of σ = 0, we cannot give a precise rate at which ε(ν) → 0.
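For contrast, the σ = 0 rate can be examined numerically, since everything in Section III is explicit. The sketch below is illustrative only and all function names are assumptions. It solves the stationarity equation of Theorem 3 for θ∗ by bisection, evaluates ℓ(ν) from the closed form, compares it with the finite-n Steiner sum (18) computed in the log domain, and looks at (ℓ(ν) − log 2A)/ν^{1/3} for small ν.

```python
import math


def theta_star(A, nu, iters=200):
    """Bisection for the root of (1 - t)^2 / t^3 = 2 A^2 / (pi nu) on (0, 1)."""
    target = 2.0 * A * A / (math.pi * nu)
    lo, hi = 1e-15, 1.0 - 1e-15
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if (1.0 - mid) ** 2 / mid ** 3 > target:
            lo = mid  # left-hand side decreases in t, so the root is to the right
        else:
            hi = mid
    return 0.5 * (lo + hi)


def ell(A, nu):
    """Closed form of Theorem 3 for the growth rate l(nu), in nats."""
    t = theta_star(A, nu)
    entropy = -t * math.log(t) - (1.0 - t) * math.log(1.0 - t)
    return (entropy + (1.0 - t) * math.log(2.0 * A)
            + 0.5 * t * math.log(2.0 * math.pi * math.e * nu / t))


def steiner_rate(n, A, nu):
    """(1/n) log of the Steiner sum (18), via a log-sum-exp over its n + 1 terms."""
    logs = []
    for j in range(n + 1):
        log_binom = math.lgamma(n + 1) - math.lgamma(j + 1) - math.lgamma(n - j + 1)
        log_ball = 0.5 * j * math.log(math.pi) - math.lgamma(0.5 * j + 1.0)
        logs.append(log_binom + (n - j) * math.log(2.0 * A)
                    + log_ball + 0.5 * j * math.log(n * nu))
    top = max(logs)
    return (top + math.log(sum(math.exp(v - top) for v in logs))) / n


if __name__ == "__main__":
    A = 1.0
    print("finite n:", steiner_rate(2000, A, 0.1), " closed form:", ell(A, 0.1))
    for nu in (1e-3, 1e-6, 1e-9):
        print(nu, (ell(A, nu) - math.log(2.0 * A)) / nu ** (1.0 / 3.0))
```

In this sketch the normalized excess (ℓ(ν) − log 2A)/ν^{1/3} appears to level off as ν decreases, consistent with the O(ν^{1/3}) term in (22); no analogous computation is available for σ > 0 because Λ∗ is not known explicitly.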

V. CONCLUSION

We have analyzed an AWGN channel with a power constraint motivated by energy harvesting communication systems. We approached the problem from a geometric viewpoint

and established an upper bound on channel capacity in terms of

the volume of Sn (σ, ρ) ⊕ Bn(√(nν)), according to the intrinsic

volumes of Sn (σ, ρ). For the special case σ = 0, corresponding to the peak power constrained AWGN channel, we

explicitly evaluated the upper bound and derived asymptotic

capacity results in the low-noise regime. For the general case

of σ > 0, we exploited the geometric properties of {Sn (σ, ρ)}:

A : Sm+n ⊆ Sn × Sm ,

B : [Sm × 0] × [Sn × 0] ⊆ Sm+n+2γ (σ, ρ), where γ = ⌈σ/ρ⌉

and 0 is the zero vector of length γ,

to show that the appropriately normalized intrinsic volumes of

{Sn (σ, ρ)} converge to a continuous limit function. The upper

bound on channel capacity is expressed in terms of the limit

function, and the continuity of the same enabled us to prove

asymptotic capacity results in the low-noise regime.

ACKNOWLEDGEMENTS

The research of the authors was supported by NSF grant

ECCS-1343398 and the NSF Science & Technology Center

grant CCF-0939370, Science of Information.

REFERENCES

[1] C. Shannon, “A mathematical theory of communications, I and II,” Bell

Syst. Tech. J, vol. 27, pp. 379–423, 1948.

[2] J. G. Smith, “The information capacity of amplitude- and variance-constrained scalar Gaussian channels,” Information and Control, vol. 18,

no. 3, pp. 203–219, 1971.

[3] S. Shamai and I. Bar-David, “The capacity of average and peak-power-limited quadrature Gaussian channels,” IEEE Transactions on

Information Theory, vol. 41, no. 4, pp. 1060–1071, 1995.

[4] V. Jog and V. Anantharam, “An energy harvesting AWGN channel with a

finite battery,” in International Symposium on Information Theory (ISIT)

2014, pp. 806–810, June 2014.

[5] O. Ozel and S. Ulukus, “Achieving AWGN capacity under stochastic

energy harvesting,” IEEE Transactions on Information Theory, vol. 58,

no. 10, pp. 6471–6483, 2012.

[6] D. A. Klain and G.-C. Rota, Introduction to geometric probability.

Cambridge University Press, 1997.

[7] R. Schneider, Convex bodies: the Brunn-Minkowski theory, vol. 151.

Cambridge University Press, 2013.

[8] R. Schneider and W. Weil, Stochastic and integral geometry. Springer,

2008.

[9] V. Jog and V. Anantharam, “A geometric analysis of the AWGN channel

with a (σ, ρ)-power constraint,” arXiv e-prints, April 2015. Available

at http://arxiv.org/abs/1504.05182.

[10] A. Dembo and O. Zeitouni, Large deviations techniques and applications, vol. 2. Springer, 1998.

[11] P. McMullen, “Inequalities between intrinsic volumes,” Monatshefte für

Mathematik, vol. 111, no. 1, pp. 47–53, 1991.