Generalized Beta Mixture of Gaussians

Advances in Neural Information Processing Systems 24 (2011)

Artin Armagan, David B. Dunson and Merlise Clyde

Presented by Shaobo Han, Duke University

July 22, 2013

A.Armagan et al., 2012


Outline

1.
2.
3. Generalized Beta Mixture of Normals
4. Equivalent Conjugate Hierarchies
5.


Introduction

Two alternatives in Bayesian sparse learning:

- Point mass mixture priors

  $$\theta_j \sim (1-\pi)\,\delta_0 + \pi\, g_\theta, \quad j = 1, \dots, p \tag{1}$$

- Continuous shrinkage priors
  - Inducing regularization penalties
  - Global-local shrinkage [N. G. Polson and J. G. Scott (2010)] [1]

    $$\theta_j \sim N(0, \psi_j \tau), \quad \psi_j \sim f, \quad \tau \sim g \tag{2}$$

    e.g. the normal-exponential-gamma, the horseshoe and the generalized double Pareto.
  - Computationally attractive
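To make the contrast between the two prior families concrete, the following Python sketch draws coefficients from each. The choices g_θ = N(0, 1), f = Exp(1) and a fixed global scale τ are illustrative assumptions that do not come from the slides, and the function names are hypothetical.

```python
import random

def sample_point_mass(p, pi, rng):
    """Draw theta_j ~ (1 - pi) * delta_0 + pi * g, with g = N(0, 1) assumed."""
    return [rng.gauss(0.0, 1.0) if rng.random() < pi else 0.0 for _ in range(p)]

def sample_global_local(p, tau, rng):
    """Draw theta_j ~ N(0, psi_j * tau), with psi_j ~ Exp(1) assumed for f."""
    thetas = []
    for _ in range(p):
        psi = rng.expovariate(1.0)                          # local scale psi_j
        thetas.append(rng.gauss(0.0, (psi * tau) ** 0.5))   # gauss takes a std dev
    return thetas

rng = random.Random(0)
spike = sample_point_mass(10_000, pi=0.2, rng=rng)
smooth = sample_global_local(10_000, tau=1.0, rng=rng)

# The point mass mixture produces exact zeros; the continuous prior only shrinks.
frac_zero_spike = sum(t == 0.0 for t in spike) / len(spike)
frac_zero_smooth = sum(t == 0.0 for t in smooth) / len(smooth)
print(f"exact zeros, point mass mixture: {frac_zero_spike:.3f}")
print(f"exact zeros, continuous prior:   {frac_zero_smooth:.3f}")
```

Under these assumptions roughly a fraction 1 − π of the point mass draws are exactly zero, while the continuous prior yields small but nonzero values.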

[1] N. G. Polson and J. G. Scott, "Shrink globally, act locally: sparse Bayesian regularization and prediction," Bayesian Statistics, 2010.


The horseshoe estimator

[Sparse normal-means problem] Suppose a $p$-dimensional vector $y \mid \theta \sim N(\theta, I)$ is observed.

$$\theta_j \mid \tau_j \sim N(0, \tau_j), \quad \tau_j^{1/2} \sim C^{+}(0, \phi^{1/2}), \quad j = 1, \dots, p \tag{3}$$

With the transformation $\rho_j = 1/(1+\tau_j)$,

$$\theta_j \mid \rho_j \sim N(0, 1/\rho_j - 1), \quad \pi(\rho_j \mid \phi) \propto \rho_j^{-1/2} (1-\rho_j)^{-1/2}\, \frac{1}{1 + (\phi - 1)\rho_j} \tag{4}$$

For the fixed value $\phi = 1$ [C. M. Carvalho et al. (2010)] [2],

$$E(\theta_j \mid y) = \int_0^1 y_j (1-\rho_j)\, p(\rho_j \mid y)\, d\rho_j = \{1 - E(\rho_j \mid y)\}\, y_j \tag{5}$$

Two major issues: robustness to large signals and shrinkage of noise .

Horseshoe prior: $\phi = 1$, $\rho_j \sim B(1/2, 1/2)$.

Strawderman-Berger prior: $\phi = 1$, $\rho_j \sim B(1, 1/2)$.
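The posterior mean in equation (5) can be evaluated numerically for the horseshoe case. The sketch below assumes a single observation with unit noise variance, so that y | ρ ~ N(0, 1/ρ) and p(ρ | y) is proportional to (1 − ρ)^(−1/2) exp(−ρ y²/2) under the B(1/2, 1/2) prior. The function names and the midpoint-rule integration are illustrative choices, not part of the original presentation.

```python
import math

def horseshoe_shrinkage_weight(y, n=4000):
    """Approximate E(rho | y) for rho ~ Beta(1/2, 1/2) and y | rho ~ N(0, 1/rho).

    p(rho | y) is proportional to (1 - rho)^(-1/2) * exp(-rho * y^2 / 2).
    Substituting rho = 1 - u^2 removes the endpoint singularity, leaving
    smooth integrands on u in [0, 1] for the midpoint rule.
    """
    num = den = 0.0
    for i in range(n):
        u = (i + 0.5) / n
        rho = 1.0 - u * u
        w = math.exp(-0.5 * rho * y * y)   # constant factors cancel in the ratio
        num += rho * w
        den += w
    return num / den

def horseshoe_posterior_mean(y):
    """Posterior mean {1 - E(rho | y)} * y, as in equation (5)."""
    return (1.0 - horseshoe_shrinkage_weight(y)) * y

for y in (0.5, 2.0, 10.0):
    print(y, round(horseshoe_posterior_mean(y), 3))
```

Small observations are pulled strongly toward zero while a large observation such as y = 10 is left nearly untouched, which illustrates the two issues named on this slide: shrinkage of noise and robustness to large signals.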

[2] C. M. Carvalho et al., "The horseshoe estimator for sparse signals," Biometrika, 2010.


TPB normal scale mixture prior

Definition 1

The three-parameter beta (TPB) distribution for a random variable $X$ is defined by the density function

$$f(x; a, b, \phi) = \frac{\Gamma(a+b)}{\Gamma(a)\Gamma(b)}\, \phi^{b}\, x^{b-1} (1-x)^{a-1} \{1 + (\phi - 1)x\}^{-(a+b)} \tag{6}$$

for $0 < x < 1$, $a > 0$, $b > 0$ and $\phi > 0$, and is denoted by $\mathrm{TPB}(a, b, \phi)$.
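As a sanity check on equation (6), the following Python sketch evaluates the TPB density and integrates it numerically. The helper `integrate_midpoint` and the parameter values are hypothetical choices for illustration; the midpoint rule is only adequate here because the density is bounded when a and b are at least 1.

```python
import math

def tpb_pdf(x, a, b, phi):
    """Three-parameter beta density f(x; a, b, phi) of equation (6)."""
    if not 0.0 < x < 1.0:
        return 0.0
    norm = math.gamma(a + b) / (math.gamma(a) * math.gamma(b))
    return (norm * phi ** b * x ** (b - 1) * (1 - x) ** (a - 1)
            * (1 + (phi - 1) * x) ** (-(a + b)))

def integrate_midpoint(f, n=20_000):
    """Midpoint rule on (0, 1); suitable only for bounded integrands."""
    h = 1.0 / n
    return h * sum(f((i + 0.5) * h) for i in range(n))

# The density should integrate to one for any valid (a, b, phi).
total = integrate_midpoint(lambda x: tpb_pdf(x, a=2.0, b=2.0, phi=3.0))

# Compare the mean of X for a small and a large value of phi.
mean_phi_small = integrate_midpoint(lambda x: x * tpb_pdf(x, 2.0, 2.0, 0.1))
mean_phi_large = integrate_midpoint(lambda x: x * tpb_pdf(x, 2.0, 2.0, 10.0))
print(total, mean_phi_small, mean_phi_large)
```

With φ = 1 the last factor in (6) disappears and the density is an ordinary beta density in x; increasing φ moves mass toward x = 0.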

Definition 2

The TPB normal scale mixture representation for the distribution of random variable $\theta_j$ is given by

$$\theta_j \mid \rho_j \sim N(0, 1/\rho_j - 1), \quad \rho_j \sim \mathrm{TPB}(a, b, \phi) \tag{7}$$

where $a > 0$, $b > 0$ and $\phi > 0$. The resulting marginal distribution on $\theta_j$ is denoted by $\mathrm{TPBN}(a, b, \phi)$.
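The scale mixture in (7) suggests a direct way to simulate from the marginal: draw ρ_j first, then θ_j given ρ_j. The sketch below is one possible sampler, not the authors' code. It assumes the change of variables ρ = (1 − x) / (1 − x + φx) with x ~ Beta(a, b), which can be checked against the TPB density and reduces to ρ = 1 − x when φ = 1. Function names are hypothetical.

```python
import random

def sample_tpb(a, b, phi, rng):
    """Draw rho ~ TPB(a, b, phi).

    Uses rho = (1 - x) / (1 - x + phi * x) with x ~ Beta(a, b);
    for phi = 1 this is rho = 1 - x.
    """
    # Clamp x away from 1 so that rho stays strictly positive.
    x = min(rng.betavariate(a, b), 1.0 - 1e-12)
    return (1.0 - x) / (1.0 - x + phi * x)

def sample_tpbn(a, b, phi, rng):
    """Draw theta ~ TPBN(a, b, phi) through the scale mixture (7)."""
    rho = sample_tpb(a, b, phi, rng)
    variance = 1.0 / rho - 1.0
    return rng.gauss(0.0, variance ** 0.5)   # gauss takes a std dev

rng = random.Random(1)
rhos = [sample_tpb(2.0, 2.0, 1.0, rng) for _ in range(20_000)]
thetas = [sample_tpbn(0.5, 0.5, 1.0, rng) for _ in range(5)]
print(sum(rhos) / len(rhos))   # sample mean of rho
print(thetas)
```

With (a, b, φ) = (1/2, 1/2, 1) the draws of θ_j correspond to the horseshoe setting with ρ_j ~ B(1/2, 1/2).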


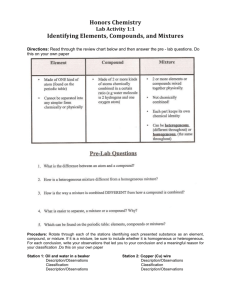

Shrinkage effects for different values of $a$, $b$ and $\phi$

$$\theta_j \mid \rho_j \sim N(0, 1/\rho_j - 1), \quad \rho_j \sim \mathrm{TPB}(a, b, \phi) \tag{8}$$

Figure 1: Panels (a) $(a,b) = (1/2, 1/2)$, (b) $(a,b) = (1, 1/2)$, (c) $(a,b) = (1, 1)$, (d) $(a,b) = (1/2, 2)$, (e) $(a,b) = (2, 2)$, (f) $(a,b) = (5, 2)$. $\phi = \{1/10, 1/9, 1/8, 1/7, 1/6, 1/5, 1/4, 1/3, 1/2, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10\}$ considered for all pairs of $a$ and $b$. The line corresponding to the lowest value of $\phi$ is drawn with a dashed line.


Equivalence of hierarchies

Proposition 3

If $\theta_j \sim \mathrm{TPBN}(a, b, \phi)$, then

1. $\theta_j \sim N(0, \tau_j)$, $\tau_j \sim G(a, \lambda_j)$ and $\lambda_j \sim G(b, \phi)$.
2. $\theta_j \sim N(0, \tau_j)$, $\pi(\tau_j) = \frac{\Gamma(a+b)}{\Gamma(a)\Gamma(b)}\, \phi^{-a}\, \tau_j^{a-1} (1 + \tau_j/\phi)^{-(a+b)}$, which implies that $\tau_j/\phi \sim \beta'(a, b)$, the inverted beta distribution with parameters $a$ and $b$.
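Both parts of the proposition can be checked by hand. The derivation below is a reconstruction offered as a check, not a quotation from the paper. It starts from the TPB density of Definition 1 with τ_j = 1/ρ_j − 1, and it assumes G(·, ·) denotes a gamma distribution with shape and rate parameters, the reading under which the two parts agree.

```latex
% Part 2: change of variables from rho_j to tau_j
\begin{align*}
\rho_j &= \frac{1}{1+\tau_j}, \qquad
\left|\frac{d\rho_j}{d\tau_j}\right| = (1+\tau_j)^{-2}, \\
\pi(\tau_j)
 &= \frac{\Gamma(a+b)}{\Gamma(a)\Gamma(b)}\,\phi^{b}\,
    (1+\tau_j)^{-(b-1)}
    \left(\frac{\tau_j}{1+\tau_j}\right)^{a-1}
    \left(\frac{\phi+\tau_j}{1+\tau_j}\right)^{-(a+b)}
    (1+\tau_j)^{-2} \\
 &= \frac{\Gamma(a+b)}{\Gamma(a)\Gamma(b)}\,\phi^{b}\,
    \tau_j^{a-1}\,(\phi+\tau_j)^{-(a+b)} \\
 &= \frac{\Gamma(a+b)}{\Gamma(a)\Gamma(b)}\,\phi^{-a}\,
    \tau_j^{a-1}\left(1+\frac{\tau_j}{\phi}\right)^{-(a+b)}.
\end{align*}

% Part 1: integrating lambda_j out of the gamma-gamma hierarchy
\begin{align*}
\pi(\tau_j)
 &= \int_0^\infty
    \frac{\lambda_j^{a}}{\Gamma(a)}\,\tau_j^{a-1} e^{-\lambda_j \tau_j}\;
    \frac{\phi^{b}}{\Gamma(b)}\,\lambda_j^{b-1} e^{-\phi \lambda_j}\, d\lambda_j \\
 &= \frac{\phi^{b}\,\tau_j^{a-1}}{\Gamma(a)\Gamma(b)}
    \int_0^\infty \lambda_j^{a+b-1} e^{-(\tau_j+\phi)\lambda_j}\, d\lambda_j
  = \frac{\Gamma(a+b)}{\Gamma(a)\Gamma(b)}\,\phi^{b}\,
    \tau_j^{a-1}\,(\phi+\tau_j)^{-(a+b)}.
\end{align*}
```

The two routes arrive at the same density for τ_j, which is the sense in which the hierarchies are equivalent.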

Corollary 4

If $a = 1$ in Proposition 3.1, then TPBN ≡ NEG. If $(a, b, \phi) = (1, 1/2, 1)$ in Proposition 3.1, then TPBN ≡ SB ≡ NEG.

Corollary 5

If $\theta_j \sim N(0, \tau_j)$, $\tau_j^{1/2} \sim C^{+}(0, \phi^{1/2})$ and $\phi^{1/2} \sim C^{+}(0, 1)$, then $\theta_j \sim \mathrm{TPBN}(1/2, 1/2, \phi)$, $\phi \sim G(1/2, \omega)$, and $\omega \sim G(1/2, 1)$.
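The claim about φ in this corollary lends itself to a quick Monte Carlo check. The sketch below compares draws of φ obtained by squaring a half-Cauchy variate with draws from the gamma hierarchy φ ~ G(1/2, ω), ω ~ G(1/2, 1). It assumes G(shape, rate); since Python's `random.gammavariate` takes a scale as its second argument, each rate is inverted. Function names, sample size and seed are arbitrary illustrative choices.

```python
import math
import random

def phi_from_half_cauchy(rng):
    """phi = c^2 with c ~ C+(0, 1), drawn by inverting the half-Cauchy CDF."""
    c = math.tan(0.5 * math.pi * rng.random())
    return c * c

def phi_from_gamma_hierarchy(rng):
    """phi ~ G(1/2, omega), omega ~ G(1/2, 1), with G(shape, rate) assumed.

    random.gammavariate takes (shape, scale), so each rate is inverted.
    """
    omega = rng.gammavariate(0.5, 1.0)
    return rng.gammavariate(0.5, 1.0 / omega)

def fraction_below(samples, q):
    """Empirical P(sample <= q)."""
    return sum(s <= q for s in samples) / len(samples)

rng = random.Random(2)
n = 50_000
cauchy_draws = [phi_from_half_cauchy(rng) for _ in range(n)]
gamma_draws = [phi_from_gamma_hierarchy(rng) for _ in range(n)]

for q in (1.0, 3.0):
    exact = (2.0 / math.pi) * math.atan(math.sqrt(q))   # P(phi <= q) under C+(0, 1)
    print(q, exact, fraction_below(cauchy_draws, q), fraction_below(gamma_draws, q))
```

If the two constructions define the same distribution, both empirical fractions should sit close to the exact half-Cauchy probability at each threshold.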


Experiments: sparse linear regression

Figure 2: Relative ME at different $(a, b, \phi)$ values for (a) Case 1, $(n, p) = (50, 20)$, and (b) Case 2, $(n, p) = (250, 100)$. Both cases contain on average 10 nonzero elements.

Figure 3: Posterior mean by sampling (square) and by approximate inference (circle), for $(n, p) = (100, 10000)$ and $(a, b, \phi) = (1, 1/2, 10^{-4})$.
