Efficient Integer DCT Architectures for HEVC


IEEE TRANSACTIONS ON CIRCUITS AND SYSTEMS FOR VIDEO TECHNOLOGY


Pramod Kumar Meher, Senior Member, IEEE, Sang Yoon Park, Member, IEEE,

Basant Kumar Mohanty, Senior Member, IEEE, Khoon Seong Lim, and Chuohao Yeo, Member, IEEE

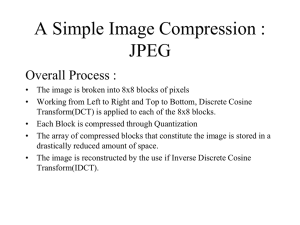

Abstract—In this paper, we present area- and power-efficient

architectures for the implementation of integer discrete cosine

transform (DCT) of different lengths to be used in High Efficiency

Video Coding (HEVC). We show that an efficient constant matrix-multiplication scheme can be used to derive parallel architectures

for 1-D integer DCT of different lengths. We also show that

the proposed structure could be reusable for DCT of lengths

4, 8, 16, and 32 with a throughput of 32 DCT coefficients

per cycle irrespective of the transform size. Moreover, the

proposed architecture could be pruned to reduce the complexity

of implementation substantially with only a marginal effect on

the coding performance. We propose power-efficient structures

for folded and full-parallel implementations of 2-D DCT. From

the synthesis result, it is found that the proposed architecture

involves nearly 14% less area-delay product (ADP) and 19% less

energy per sample (EPS) compared to the direct implementation

of the reference algorithm, on average, for integer DCT of lengths

4, 8, 16, and 32. Also, an additional 19% saving in ADP and 20%

saving in EPS can be achieved by the proposed pruning algorithm

with nearly the same throughput rate. The proposed architecture

is found to support Ultra-High-Definition (UHD) 7680×4320 @

60 fps video which is one of the applications of HEVC.

Index Terms—Discrete cosine transform (DCT), High Efficiency Video Coding, HEVC, H.265, integer DCT, video coding.

I. INTRODUCTION

The discrete cosine transform (DCT) plays a vital role

in video compression due to its near-optimal de-correlation

efficiency [1]. Several variations of integer DCT have been

suggested in the last two decades to reduce the computational

complexity [2]–[6]. The new H.265/HEVC (High Efficiency

Video Coding) standard [7] has recently been finalized and is

poised to replace H.264/AVC [8]. Some hardware architectures

for the integer DCT for HEVC have also been proposed for its

real-time implementation. Ahmed et al. [9] have decomposed the DCT matrices into sparse sub-matrices where the multiplications are avoided by using a lifting scheme. Shen et al. [10] have used the multiplierless multiple constant multiplication (MCM) approach for 4-point and 8-point DCT, and have used normal multipliers with sharing techniques for 16- and 32-point DCTs. Park et al. [11] have used Chen's factorization of DCT where the butterfly operation has been implemented by the processing element with only shifters, adders, and multiplexors. Budagavi and Sze [12] have proposed a unified structure to be used for forward as well as inverse transform after the matrix decomposition.

Manuscript submitted on xx, xx, xxxx. (Corresponding author: S. Y. Park.)

P. K. Meher, S. Y. Park, K. S. Lim, and C. Yeo are with the Institute for Infocomm Research, 138632 Singapore (e-mail: {pkmeher, sypark, kslim, chyeo}@i2r.a-star.edu.sg). B. K. Mohanty is with the Department of Electronics and Communication Engineering, Jaypee University of Engineering and Technology, Raghogarh, Guna, Madhya Pradesh, India-473226 (e-mail: bk.mohanti@juet.ac.in).

Copyright (c) 2013 IEEE. Personal use of this material is permitted. However, permission to use this material for any other purposes must be obtained from the IEEE by sending an email to pubs-permissions@ieee.org.

One key feature of HEVC is that it supports DCT of

different sizes such as 4, 8, 16, and 32. Therefore, the hardware

architecture should be flexible enough for the computation

of DCT of any of these lengths. The existing designs for

conventional DCT based on constant matrix multiplication

(CMM) and MCM can provide optimal solutions for the

computation of any of these lengths but they are not reusable

for any length to support the same throughput processing of

DCT of different transform lengths. Considering this issue,

we have analyzed the possible implementations of integer

DCT for HEVC in the context of resource requirement and

reusability, and based on that, we have derived the proposed

algorithm for hardware implementation. We have designed

scalable and reusable architectures for 1-D and 2-D integer

DCTs for HEVC which could be reused for any of the

prescribed lengths with the same throughput of processing

irrespective of transform size.

In the next section, we present algorithms for hardware

implementation of the HEVC integer DCTs of different lengths

4, 8, 16, and 32. In Section III, we illustrate the design of the

proposed architecture for the implementation of 4-point and 8-point integer DCT along with a generalized design of integer

DCT of length N , which could be used for the DCT of length

N = 16 and 32. Moreover, we demonstrate the reusability

of the proposed solutions in this section. In Section IV,

we propose power-efficient designs of transposition buffers

for full-parallel and folded implementations of 2-D Integer

DCT. In Section V, we propose a bit-pruning scheme for

the implementation of integer DCT and present the impact

of pruning on forward and inverse transforms. In Section VI,

we compare the synthesis result of the proposed architecture

with those of existing architectures for HEVC.

II. ALGORITHM FOR HARDWARE IMPLEMENTATION OF INTEGER DCT FOR HEVC

In the Joint Collaborative Team-Video Coding (JCT-VC),

which manages the standardization of HEVC, Core Experiment 10 (CE10) studied the design of core transforms over

several meeting cycles [13]. The eventual HEVC transform

design [14] involves coefficients of 8-bit size, but does not

allow full factorization unlike other competing proposals [13].

It however allows for both matrix multiplication and partial

butterfly implementation. In this section, we have used the

partial-butterfly algorithm of [14] for the computation of

integer DCT along with its efficient algorithmic transformation

for hardware implementation.


A. Key Features of Integer DCT for HEVC¹

The N-point integer DCT for HEVC given by [14] can be computed by the partial butterfly approach using an (N/2)-point DCT and a matrix-vector product of an (N/2) × (N/2) matrix with an (N/2)-point vector as

$$
\begin{bmatrix} y(0) \\ y(2) \\ \vdots \\ y(N-4) \\ y(N-2) \end{bmatrix}
= \mathbf{C}_{N/2}
\begin{bmatrix} a(0) \\ a(1) \\ \vdots \\ a(N/2-2) \\ a(N/2-1) \end{bmatrix}
\tag{1a}
$$

and

$$
\begin{bmatrix} y(1) \\ y(3) \\ \vdots \\ y(N-3) \\ y(N-1) \end{bmatrix}
= \mathbf{M}_{N/2}
\begin{bmatrix} b(0) \\ b(1) \\ \vdots \\ b(N/2-2) \\ b(N/2-1) \end{bmatrix}
\tag{1b}
$$

where

$$
\begin{aligned}
a(i) &= x(i) + x(N-i-1) \\
b(i) &= x(i) - x(N-i-1)
\end{aligned}
\tag{2}
$$

for i = 0, 1, ..., N/2 − 1. X = [x(0), x(1), ..., x(N − 1)] is the input vector and Y = [y(0), y(1), ..., y(N − 1)] is the N-point DCT of X. $\mathbf{C}_{N/2}$ is the (N/2)-point integer DCT kernel matrix of size (N/2) × (N/2). $\mathbf{M}_{N/2}$ is also a matrix of size (N/2) × (N/2) and its (i, j)th entry is defined as

$$
m_{N/2}^{i,j} = c_{N}^{2i+1,j} \quad \text{for } 0 \le i, j \le N/2 - 1
\tag{3}
$$

where $c_{N}^{2i+1,j}$ is the (2i + 1, j)th entry of the matrix $\mathbf{C}_{N}$. Note that (1a) could be similarly decomposed, recursively, further using $\mathbf{C}_{N/4}$ and $\mathbf{M}_{N/4}$. We have referred to the direct implementation of DCT based on (1)-(3) as the reference algorithm in the rest of the paper.

¹ While the standard defines the inverse DCT, we show the forward DCT here since it is more directly related to the later parts of this paper. Also, note that the transpose of the inverse DCT can be used as the forward DCT.

B. Hardware Oriented Algorithm

Direct implementation of (1) requires $N^2/4 + \mathrm{MUL}_{N/2}$ multiplications, $N^2/4 + N/2 + \mathrm{ADD}_{N/2}$ additions and 2 shifts, where $\mathrm{MUL}_{N/2}$ and $\mathrm{ADD}_{N/2}$ are the number of multiplications and additions/subtractions of the (N/2)-point DCT, respectively. Computation of (1) could be treated as a CMM problem [15]–[17]. Since the absolute values of the coefficients in all the rows and columns of matrix M in (1b) are identical, the CMM problem can be implemented as a set of N/2 MCMs, which will result in a highly regular architecture and will have a low-complexity implementation.

The kernel matrices for 4, 8, 16, and 32-point integer DCT for HEVC are given in [14], and the 4 and 8-point integer DCT are represented, respectively, as

$$
\mathbf{C}_4 =
\begin{bmatrix}
64 & 64 & 64 & 64 \\
83 & 36 & -36 & -83 \\
64 & -64 & -64 & 64 \\
36 & -83 & 83 & -36
\end{bmatrix}
\tag{4}
$$

and

$$
\mathbf{C}_8 =
\begin{bmatrix}
64 & 64 & 64 & 64 & 64 & 64 & 64 & 64 \\
89 & 75 & 50 & 18 & -18 & -50 & -75 & -89 \\
83 & 36 & -36 & -83 & -83 & -36 & 36 & 83 \\
75 & -18 & -89 & -50 & 50 & 89 & 18 & -75 \\
64 & -64 & -64 & 64 & 64 & -64 & -64 & 64 \\
50 & -89 & 18 & 75 & -75 & -18 & 89 & -50 \\
36 & -83 & 83 & -36 & -36 & 83 & -83 & 36 \\
18 & -50 & 75 & -89 & 89 & -75 & 50 & -18
\end{bmatrix}.
\tag{5}
$$

Based on (1) and (2), hardware oriented algorithms for DCT computation can be derived in three stages as in Table I. For 8, 16, and 32-point DCT, the even indexed coefficients [y(0), y(2), y(4), ..., y(N − 2)] are computed as 4, 8, and 16-point DCTs of [a(0), a(1), a(2), ..., a(N/2 − 1)], respectively, according to (1a). In Table II, we have listed the arithmetic complexities of the reference algorithm and the MCM-based algorithm for 4, 8, 16, and 32-point DCT. Algorithms for the inverse DCT (IDCT) can also be derived in a similar way.
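The decomposition in (1)-(3) can be checked numerically. The following is a minimal sketch, not part of the original paper: the helper names `matvec` and `partial_butterfly` are hypothetical, and the kernels are the 4- and 8-point matrices of (4) and (5). It forms a(i) and b(i), extracts M from the odd rows of the larger kernel, and interleaves the even and odd outputs.

```python
# 8-point and 4-point HEVC kernels as given in (5) and (4).
C8 = [
    [64,  64,  64,  64,  64,  64,  64,  64],
    [89,  75,  50,  18, -18, -50, -75, -89],
    [83,  36, -36, -83, -83, -36,  36,  83],
    [75, -18, -89, -50,  50,  89,  18, -75],
    [64, -64, -64,  64,  64, -64, -64,  64],
    [50, -89,  18,  75, -75, -18,  89, -50],
    [36, -83,  83, -36, -36,  83, -83,  36],
    [18, -50,  75, -89,  89, -75,  50, -18],
]
C4 = [
    [64,  64,  64,  64],
    [83,  36, -36, -83],
    [64, -64, -64,  64],
    [36, -83,  83, -36],
]


def matvec(matrix, vector):
    """Plain integer matrix-vector product."""
    return [sum(c * v for c, v in zip(row, vector)) for row in matrix]


def partial_butterfly(c_n, c_half, x):
    """N-point DCT via (1a), (1b), (2), and (3); hypothetical helper."""
    n = len(x)
    half = n // 2
    a = [x[i] + x[n - 1 - i] for i in range(half)]   # (2)
    b = [x[i] - x[n - 1 - i] for i in range(half)]   # (2)
    # (3): M takes the first N/2 entries of every odd row of C_N.
    m_half = [[c_n[2 * i + 1][j] for j in range(half)] for i in range(half)]
    y = [0] * n
    y[0::2] = matvec(c_half, a)   # (1a): even-indexed outputs
    y[1::2] = matvec(m_half, b)   # (1b): odd-indexed outputs
    return y


x = [12, -7, 3, 25, -18, 0, 9, 4]
assert partial_butterfly(C8, C4, x) == matvec(C8, x)
```

The final assertion compares the partial-butterfly result with the direct product by the full kernel, which is what the paper calls the reference computation before any multiplierless transformation.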

III. PROPOSED ARCHITECTURES FOR INTEGER DCT COMPUTATION

In this section, we present the proposed architecture for the

computation of integer DCT of length 4. We also present a

generalized architecture for integer DCT of higher lengths.

A. Proposed Architecture for 4-Point Integer DCT

The proposed architecture for 4-point integer DCT is shown in Fig. 1(a). It consists of an input adder unit (IAU), a shift-add unit (SAU), and an output adder unit (OAU). The IAU computes a(0), a(1), b(0), and b(1) according to STAGE-1 of the algorithm as described in Table I. The computations of t_{i,36} and t_{i,83} are performed by two SAUs according to STAGE-2 of the algorithm. The computation of t_{0,64} and t_{1,64} does not consume any logic since the shift operations could be re-wired in hardware. The structure of the SAU is shown in Fig. 1(b). Outputs of the SAU are finally added by the OAU according to STAGE-3 of the algorithm.
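The three units can be mirrored in software to confirm that the shift-add decomposition reproduces the 4-point kernel. This is a behavioural sketch only, following the 4-point rows of Table I; the function names `dct4_shift_add` and `dct4_direct` are hypothetical, and no truncation or scaling is modelled.

```python
def dct4_shift_add(x):
    """Multiplierless 4-point integer DCT following the 4-point rows of Table I."""
    # STAGE-1 (input adder unit)
    a = [x[0] + x[3], x[1] + x[2]]
    b = [x[0] - x[3], x[1] - x[2]]
    # STAGE-2 (shift-add units); 64*a(i) is a pure shift
    t64 = [v << 6 for v in a]
    m9 = [(v << 3) + v for v in b]                            # 9*b(i)
    t83 = [(v << 6) + (m << 1) + v for v, m in zip(b, m9)]    # 64b + 18b + b
    t36 = [m << 2 for m in m9]                                # 4 * 9b
    # STAGE-3 (output adder unit), returned as y(0), y(1), y(2), y(3)
    return [t64[0] + t64[1],
            t83[0] + t36[1],
            t64[0] - t64[1],
            t36[0] - t83[1]]


def dct4_direct(x):
    """Reference: multiply by the 4-point kernel of [14]."""
    c4 = [[64, 64, 64, 64], [83, 36, -36, -83],
          [64, -64, -64, 64], [36, -83, 83, -36]]
    return [sum(c * v for c, v in zip(row, x)) for row in c4]


assert dct4_shift_add([10, -3, 7, 255]) == dct4_direct([10, -3, 7, 255])
```

Python's `<<` on negative integers behaves as an arithmetic shift, so the sketch also holds for negative residual samples.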

B. Proposed Architecture for Integer DCT of Length 8 and

Higher Length DCTs

The generalized architecture for N -point integer DCT based

on the proposed algorithm is shown in Fig. 2. It consists

of four units, namely the IAU, (N/2)-point integer DCT

unit, SAU, and OAU. The IAU computes a(i) and b(i) for

i = 0, 1, ..., N/2 − 1 according to STAGE-1 of the algorithm

of Section II-B. The SAU provides the result of multiplication

of input sample with DCT coefficient by STAGE-2 of the

algorithm. Finally, the OAU generates the output of DCT from

a binary adder tree of log2 N − 1 stages. Figs. 3(a), 3(b), and 3(c) respectively illustrate the structures of IAU, SAU, and OAU in the case of 8-point integer DCT. Four SAUs are required to compute t_{i,89}, t_{i,75}, t_{i,50}, and t_{i,18} for i = 0, 1, 2, and 3 according to STAGE-2 of the algorithm. The outputs of the SAUs are finally added by a two-stage adder tree according to STAGE-3 of the algorithm. Structures for 16 and 32-point integer DCT can also be obtained similarly.

TABLE I
3-STAGE HARDWARE ORIENTED ALGORITHMS FOR THE COMPUTATION OF 4, 8, 16, AND 32-POINT DCT.

4-Point DCT Computation

STAGE-1: a(i) = x(i) + x(3 − i), b(i) = x(i) − x(3 − i) for i = 0 and 1.

STAGE-2: m_{i,9} = 9b(i) = (b(i) << 3) + b(i), t_{i,64} = 64a(i) = a(i) << 6, t_{i,83} = 83b(i) = (b(i) << 6) + (m_{i,9} << 1) + b(i), t_{i,36} = 36b(i) = m_{i,9} << 2 for i = 0 and 1.

STAGE-3: y(0) = t_{0,64} + t_{1,64}, y(2) = t_{0,64} − t_{1,64}, y(1) = t_{0,83} + t_{1,36}, y(3) = t_{0,36} − t_{1,83}.

8-Point DCT Computation

STAGE-1: a(i) = x(i) + x(7 − i), b(i) = x(i) − x(7 − i) for 0 ≤ i ≤ 3.

STAGE-2: m_{i,9} = 9b(i) = (b(i) << 3) + b(i), m_{i,25} = 25b(i) = (b(i) << 4) + m_{i,9}, t_{i,18} = 18b(i) = m_{i,9} << 1, t_{i,50} = 50b(i) = m_{i,25} << 1, t_{i,75} = 75b(i) = t_{i,50} + m_{i,25}, t_{i,89} = 89b(i) = (b(i) << 6) + m_{i,25} for 0 ≤ i ≤ 3.

STAGE-3:
y(1) = t_{0,89} + t_{1,75} + t_{2,50} + t_{3,18},
y(3) = t_{0,75} − t_{1,18} − t_{2,89} − t_{3,50},
y(5) = t_{0,50} − t_{1,89} + t_{2,18} + t_{3,75},
y(7) = t_{0,18} − t_{1,50} + t_{2,75} − t_{3,89}.

16-Point DCT Computation

STAGE-1: a(i) = x(i) + x(15 − i), b(i) = x(i) − x(15 − i) for 0 ≤ i ≤ 7.

STAGE-2: m_{i,8} = 8b(i) = b(i) << 3, t_{i,9} = 9b(i) = m_{i,8} + b(i), m_{i,18} = 18b(i) = t_{i,9} << 1, m_{i,72} = 72b(i) = t_{i,9} << 3, t_{i,25} = 25b(i) = (b(i) << 4) + t_{i,9}, t_{i,43} = 43b(i) = m_{i,18} + t_{i,25}, t_{i,57} = 57b(i) = t_{i,25} + (b(i) << 5), t_{i,70} = 70b(i) = m_{i,72} − (b(i) << 1), t_{i,80} = 80b(i) = m_{i,72} + m_{i,8}, t_{i,87} = 87b(i) = (t_{i,43} << 1) + b(i), t_{i,90} = 90b(i) = m_{i,72} + m_{i,18} for 0 ≤ i ≤ 7.

STAGE-3:
y(1) = t_{0,90} + t_{1,87} + t_{2,80} + t_{3,70} + t_{4,57} + t_{5,43} + t_{6,25} + t_{7,9},
y(3) = t_{0,87} + t_{1,57} + t_{2,9} − t_{3,43} − t_{4,80} − t_{5,90} − t_{6,70} − t_{7,25},
y(5) = t_{0,80} + t_{1,9} − t_{2,70} − t_{3,87} − t_{4,25} + t_{5,57} + t_{6,90} + t_{7,43},
y(7) = t_{0,70} − t_{1,43} − t_{2,87} + t_{3,9} + t_{4,90} + t_{5,25} − t_{6,80} − t_{7,57},
y(9) = t_{0,57} − t_{1,80} − t_{2,25} + t_{3,90} − t_{4,9} − t_{5,87} + t_{6,43} + t_{7,70},
y(11) = t_{0,43} − t_{1,90} + t_{2,57} + t_{3,25} − t_{4,87} + t_{5,70} + t_{6,9} − t_{7,80},
y(13) = t_{0,25} − t_{1,70} + t_{2,90} − t_{3,80} + t_{4,43} + t_{5,9} − t_{6,57} + t_{7,87},
y(15) = t_{0,9} − t_{1,25} + t_{2,43} − t_{3,57} + t_{4,70} − t_{5,80} + t_{6,87} − t_{7,90}.

32-Point DCT Computation

STAGE-1: a(i) = x(i) + x(31 − i), b(i) = x(i) − x(31 − i) for 0 ≤ i ≤ 15.

STAGE-2: m_{i,2} = 2b(i) = b(i) << 1, t_{i,4} = 4b(i) = b(i) << 2, t_{i,9} = 9b(i) = (b(i) << 3) + b(i), m_{i,18} = 18b(i) = t_{i,9} << 1, m_{i,72} = 72b(i) = t_{i,9} << 3, t_{i,13} = 13b(i) = t_{i,9} + t_{i,4}, m_{i,52} = 52b(i) = t_{i,13} << 2, t_{i,22} = 22b(i) = m_{i,18} + t_{i,4}, t_{i,31} = 31b(i) = (b(i) << 5) − b(i), t_{i,38} = 38b(i) = (t_{i,9} << 2) + m_{i,2}, t_{i,46} = 46b(i) = (t_{i,22} << 1) + m_{i,2}, t_{i,54} = 54b(i) = m_{i,52} + m_{i,2}, t_{i,61} = 61b(i) = (t_{i,31} << 1) − b(i), t_{i,67} = 67b(i) = t_{i,54} + t_{i,13}, t_{i,73} = 73b(i) = m_{i,72} + b(i), t_{i,78} = 78b(i) = m_{i,52} + (t_{i,13} << 1), t_{i,82} = 82b(i) = t_{i,78} + t_{i,4}, t_{i,85} = 85b(i) = m_{i,72} + t_{i,13}, t_{i,88} = 88b(i) = t_{i,22} << 2, t_{i,90} = 90b(i) = m_{i,72} + m_{i,18} for 0 ≤ i ≤ 15.

STAGE-3:
y(1) = t_{0,90} + t_{1,90} + t_{2,88} + t_{3,85} + t_{4,82} + t_{5,78} + t_{6,73} + t_{7,67} + t_{8,61} + t_{9,54} + t_{10,46} + t_{11,38} + t_{12,31} + t_{13,22} + t_{14,13} + t_{15,4},
y(3) = t_{0,90} + t_{1,82} + t_{2,67} + t_{3,46} + t_{4,22} − t_{5,4} − t_{6,31} − t_{7,54} − t_{8,73} − t_{9,85} − t_{10,90} − t_{11,88} − t_{12,78} − t_{13,61} − t_{14,38} − t_{15,13},
y(5) = t_{0,88} + t_{1,67} + t_{2,31} − t_{3,13} − t_{4,54} − t_{5,82} − t_{6,90} − t_{7,78} − t_{8,46} − t_{9,4} + t_{10,38} + t_{11,73} + t_{12,90} + t_{13,85} + t_{14,61} + t_{15,22},
y(7) = t_{0,85} + t_{1,46} − t_{2,13} − t_{3,67} − t_{4,90} − t_{5,73} − t_{6,22} + t_{7,38} + t_{8,82} + t_{9,88} + t_{10,54} − t_{11,4} − t_{12,61} − t_{13,90} − t_{14,78} − t_{15,31},
y(9) = t_{0,82} + t_{1,22} − t_{2,54} − t_{3,90} − t_{4,61} + t_{5,13} + t_{6,78} + t_{7,85} + t_{8,31} − t_{9,46} − t_{10,90} − t_{11,67} + t_{12,4} + t_{13,73} + t_{14,88} + t_{15,38},
y(11) = t_{0,78} − t_{1,4} − t_{2,82} − t_{3,73} + t_{4,13} + t_{5,85} + t_{6,67} − t_{7,22} − t_{8,88} − t_{9,61} + t_{10,31} + t_{11,90} + t_{12,54} − t_{13,38} − t_{14,90} − t_{15,46},
y(13) = t_{0,73} − t_{1,31} − t_{2,90} − t_{3,22} + t_{4,78} + t_{5,67} − t_{6,38} − t_{7,90} − t_{8,13} + t_{9,82} + t_{10,61} − t_{11,46} − t_{12,88} − t_{13,4} + t_{14,85} + t_{15,54},
y(15) = t_{0,67} − t_{1,54} − t_{2,78} + t_{3,38} + t_{4,85} − t_{5,22} − t_{6,90} + t_{7,4} + t_{8,90} + t_{9,13} − t_{10,88} − t_{11,31} + t_{12,82} + t_{13,46} − t_{14,73} − t_{15,61},
y(17) = t_{0,61} − t_{1,73} − t_{2,46} + t_{3,82} + t_{4,31} − t_{5,88} − t_{6,13} + t_{7,90} − t_{8,4} − t_{9,90} + t_{10,22} + t_{11,85} − t_{12,38} − t_{13,78} + t_{14,54} + t_{15,67},
y(19) = t_{0,54} − t_{1,85} − t_{2,4} + t_{3,88} − t_{4,46} − t_{5,61} + t_{6,82} + t_{7,13} − t_{8,90} + t_{9,38} + t_{10,67} − t_{11,78} − t_{12,22} + t_{13,90} − t_{14,31} − t_{15,73},
y(21) = t_{0,46} − t_{1,90} + t_{2,38} + t_{3,54} − t_{4,90} + t_{5,31} + t_{6,61} − t_{7,88} + t_{8,22} + t_{9,67} − t_{10,85} + t_{11,13} + t_{12,73} − t_{13,82} + t_{14,4} + t_{15,78},
y(23) = t_{0,38} − t_{1,88} + t_{2,73} − t_{3,4} − t_{4,67} + t_{5,90} − t_{6,46} − t_{7,31} + t_{8,85} − t_{9,78} + t_{10,13} + t_{11,61} − t_{12,90} + t_{13,54} + t_{14,22} − t_{15,82},
y(25) = t_{0,31} − t_{1,78} + t_{2,90} − t_{3,61} + t_{4,4} + t_{5,54} − t_{6,88} + t_{7,82} − t_{8,38} − t_{9,22} + t_{10,73} − t_{11,90} + t_{12,67} − t_{13,13} − t_{14,46} + t_{15,85},
y(27) = t_{0,22} − t_{1,61} + t_{2,85} − t_{3,90} + t_{4,73} − t_{5,38} − t_{6,4} + t_{7,46} − t_{8,78} + t_{9,90} − t_{10,82} + t_{11,54} − t_{12,13} − t_{13,31} + t_{14,67} − t_{15,88},
y(29) = t_{0,13} − t_{1,38} + t_{2,61} − t_{3,78} + t_{4,88} − t_{5,90} + t_{6,85} − t_{7,73} + t_{8,54} − t_{9,31} + t_{10,4} + t_{11,22} − t_{12,46} + t_{13,67} − t_{14,82} + t_{15,90},
y(31) = t_{0,4} − t_{1,13} + t_{2,22} − t_{3,31} + t_{4,38} − t_{5,46} + t_{6,54} − t_{7,61} + t_{8,67} − t_{9,73} + t_{10,78} − t_{11,82} + t_{12,85} − t_{13,88} + t_{14,90} − t_{15,90}.
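As an illustration of the 8-point SAU and OAU, the sketch below follows the 8-point STAGE-2 and STAGE-3 rows of Table I and checks the four odd-indexed outputs against a direct product with the odd rows of the 8-point kernel. The names `sau8`, `odd_outputs8`, and `M4` are hypothetical, and the adder tree is written as flat sums rather than as explicit two-stage hardware.

```python
def sau8(b):
    """Shift-add unit for one b(i): returns 18b, 50b, 75b, 89b keyed by coefficient."""
    m9 = (b << 3) + b        # 9b
    m25 = (b << 4) + m9      # 25b
    t18 = m9 << 1            # 18b
    t50 = m25 << 1           # 50b
    t75 = t50 + m25          # 75b
    t89 = (b << 6) + m25     # 89b
    return {18: t18, 50: t50, 75: t75, 89: t89}


def odd_outputs8(b):
    """Output adder unit: y(1), y(3), y(5), y(7) from b(0..3)."""
    t = [sau8(v) for v in b]
    y1 = t[0][89] + t[1][75] + t[2][50] + t[3][18]
    y3 = t[0][75] - t[1][18] - t[2][89] - t[3][50]
    y5 = t[0][50] - t[1][89] + t[2][18] + t[3][75]
    y7 = t[0][18] - t[1][50] + t[2][75] - t[3][89]
    return [y1, y3, y5, y7]


# Odd rows of the 8-point kernel, first four columns (the matrix M of (1b)).
M4 = [[89, 75, 50, 18], [75, -18, -89, -50],
      [50, -89, 18, 75], [18, -50, 75, -89]]

b = [7, -12, 30, -1]
direct = [sum(c * v for c, v in zip(row, b)) for row in M4]
assert odd_outputs8(b) == direct
```

Each SAU uses four additions and five shifts per input sample in this form, which is the source of the per-stage counts in Table II.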

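TABLE II
COMPUTATIONAL COMPLEXITIES OF MCM-BASED HARDWARE ORIENTED ALGORITHM AND REFERENCE ALGORITHM.

| N | Reference Algorithm: MULT | Reference Algorithm: ADD | Reference Algorithm: SHIFT | MCM-Based Algorithm: ADD | MCM-Based Algorithm: SHIFT |
|---|---|---|---|---|---|
| 4 | 4 | 8 | 2 | 14 | 10 |
| 8 | 20 | 28 | 2 | 50 | 30 |
| 16 | 84 | 100 | 2 | 186 | 86 |
| 32 | 340 | 372 | 2 | 682 | 278 |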
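The entries of Table II can be reproduced from the recurrences of Section II-B and from the operation counts of Table I. The sketch below is illustrative only: the function names are hypothetical, the 2-point base case (two additions, two shifts, no multiplication) is an assumption chosen to be consistent with the table, and the per-input SAU counts were tallied by hand from STAGE-2 of Table I.

```python
# Additions and shifts per input b(i) in STAGE-2 of Table I (hand-counted assumption).
SAU_ADDS = {4: 3, 8: 4, 16: 8, 32: 14}
SAU_SHIFTS = {4: 4, 8: 5, 16: 7, 32: 12}


def reference_cost(n):
    """(MULT, ADD, SHIFT) of the reference algorithm for an n-point DCT."""
    if n == 2:
        return (0, 2, 2)          # assumed base case: 64*(x0 +/- x1)
    mul_h, add_h, _ = reference_cost(n // 2)
    return (n * n // 4 + mul_h, n * n // 4 + n // 2 + add_h, 2)


def mcm_cost(n):
    """(ADD, SHIFT) of the MCM-based algorithm for an n-point DCT."""
    if n == 2:
        return (2, 2)             # assumed base case
    add_h, shift_h = mcm_cost(n // 2)
    half = n // 2
    adds = add_h + n + half * SAU_ADDS[n] + half * (half - 1)  # IAU + SAUs + OAU
    shifts = shift_h + half * SAU_SHIFTS[n]
    return (adds, shifts)


for n in (4, 8, 16, 32):
    print(n, reference_cost(n), mcm_cost(n))
```

Under these assumptions the loop reproduces every row of Table II, which suggests the table counts the IAU additions, the SAU additions, and an adder tree of N/2 − 1 additions for each of the N/2 odd outputs.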

C. Reusable Architecture for Integer DCT

The proposed reusable architecture for the implementation of DCT of any of the prescribed lengths is shown in Fig. 4(a). There are two (N/2)-point DCT units in the structure. The input to one (N/2)-point DCT unit is fed through (N/2) 2:1 MUXes that select either [a(0), ..., a(N/2 − 1)] or [x(0), ..., x(N/2 − 1)], depending on whether it is used for N-point DCT computation or for the DCT of a lower size. The other (N/2)-point DCT unit takes the input [x(N/2), ..., x(N − 1)] when it is used for the computation of a DCT of N/2 points or a lower size; otherwise, the input is reset by an array of (N/2) AND gates to disable this (N/2)-point DCT unit. The output of this (N/2)-point DCT unit is multiplexed with that of the OAU, which is preceded by the SAUs and IAU of the structure. The N AND gates before the IAU are used to disable the IAU, SAU, and OAU when the architecture is used to compute a DCT of (N/2) points or a lower size. The input of the control unit, m_N, is used to decide the size of DCT computation. Specifically, for N = 32, m_32 is a 2-bit signal which is set to {00}, {01}, {10}, and {11} to compute 4, 8, 16, and 32-point DCT, respectively. The control unit generates sel_1 and sel_2, where sel_1 is used as the control signal of the N MUXes and as the input of the N AND gates before the IAU. sel_2 is used as the input m_{N/2} to the two lower size reusable integer DCT units in a recursive manner. The combinational logics for control units are shown in Figs. 4(b) and 4(c) for N = 16 and 32, respectively. For N = 8, m_8 is a 1-bit signal which is used as sel_1, while sel_2 is not required since the 4-point DCT is the smallest DCT. The proposed structure can compute one 32-point DCT, two 16-point DCTs, four 8-point DCTs, and eight 4-point DCTs, while the throughput remains the same as 32 DCT coefficients per cycle irrespective of the desired transform size.

Fig. 1. Proposed architecture of 4-point integer DCT. (a) 4-point DCT architecture. (b) Structure of SAU.

Fig. 2. Proposed generalized architecture for integer DCT of length N = 8, 16, and 32.

Fig. 3. Proposed architecture of 8-point integer DCT and IDCT. (a) Structure of IAU. (b) Structure of SAU. (c) Structure of OAU.
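The selection logic of the reusable structure can be illustrated for N = 8, where the 1-bit signal m_8 acts directly as sel_1. The following is a behavioural sketch under stated assumptions, not the authors' circuit: the function name `reusable_dct8` is hypothetical, the MUXes and AND gates are modelled as conditional assignments, and the shift-add path is replaced by a plain product with the odd rows of the kernel.

```python
C8 = [
    [64,  64,  64,  64,  64,  64,  64,  64],
    [89,  75,  50,  18, -18, -50, -75, -89],
    [83,  36, -36, -83, -83, -36,  36,  83],
    [75, -18, -89, -50,  50,  89,  18, -75],
    [64, -64, -64,  64,  64, -64, -64,  64],
    [50, -89,  18,  75, -75, -18,  89, -50],
    [36, -83,  83, -36, -36,  83, -83,  36],
    [18, -50,  75, -89,  89, -75,  50, -18],
]
# Even rows of C8 (first four columns) give the 4-point kernel; odd rows give M.
C4 = [C8[2 * i][:4] for i in range(4)]
M4 = [C8[2 * i + 1][:4] for i in range(4)]


def matvec(matrix, vector):
    return [sum(c * v for c, v in zip(row, vector)) for row in matrix]


def reusable_dct8(x, sel_1):
    """sel_1 = 1: one 8-point DCT; sel_1 = 0: two independent 4-point DCTs."""
    a = [x[i] + x[7 - i] for i in range(4)]
    b = [x[i] - x[7 - i] for i in range(4)]
    if sel_1:
        upper_in, lower_in, odd_in = a, [0] * 4, b       # lower unit reset by AND gates
    else:
        upper_in, lower_in, odd_in = x[:4], x[4:], [0] * 4  # IAU/SAU/OAU path disabled
    upper = matvec(C4, upper_in)
    lower = matvec(C4, lower_in)
    odd = matvec(M4, odd_in)
    if sel_1:
        y = [0] * 8
        y[0::2] = upper     # even-indexed outputs from the (N/2)-point unit
        y[1::2] = odd       # odd-indexed outputs from the OAU
        return y
    return upper + lower    # output MUXes pick the second 4-point unit


x = [3, 1, -4, 1, 5, -9, 2, 6]
assert reusable_dct8(x, 1) == matvec(C8, x)
assert reusable_dct8(x, 0) == matvec(C4, x[:4]) + matvec(C4, x[4:])
```

In either mode eight coefficients are produced per call, which mirrors the constant-throughput property described above; applying the same wrapping recursively would give the 16- and 32-point cases.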

IV. PROPOSED STRUCTURE FOR 2-D INTEGER DCT

Using its separable property, an (N × N)-point 2-D integer DCT could be computed by the row-column decomposition technique in two distinct stages:

• STAGE-1: N-point 1-D integer DCT is computed for each column of the input matrix of size (N × N) to generate an intermediate output matrix of size (N × N).

• STAGE-2: N-point 1-D DCT is computed for each row of the intermediate output matrix of size (N × N) to generate the desired 2-D DCT of size (N × N).

We present here a folded architecture and a full-parallel architecture for the 2-D integer DCT, along with the necessary transposition buffer to match them without internal data movement.
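In matrix form, the two stages can be written compactly. The symbols $\mathbf{X}$ (input block), $\mathbf{U}$ (intermediate output), and $\mathbf{Y}$ (2-D DCT output) are introduced here only for illustration, and the intermediate scaling discussed in Section V is omitted:

$$
\mathbf{U} = \mathbf{C}_N \mathbf{X} \quad \text{(STAGE-1, column-wise)}, \qquad
\mathbf{Y} = \mathbf{U}\,\mathbf{C}_N^{T} = \mathbf{C}_N \mathbf{X}\,\mathbf{C}_N^{T} \quad \text{(STAGE-2, row-wise)}.
$$

Since STAGE-1 produces $\mathbf{U}$ one column at a time while STAGE-2 consumes it one row at a time, a transposition buffer is needed between the two stages.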

Fig. 4. Proposed reusable architecture of integer DCT. (a) Proposed reusable architecture for N = 8, 16, and 32. (b) Control unit for N = 16. (c) Control unit for N = 32.

A. Folded Structure for 2-D Integer DCT

The folded structure for the computation of (N × N )-point

2-D integer DCT is shown in Fig. 5(a). It consists of one

N -point 1-D DCT module and a transposition buffer. The

structure of the proposed 4 × 4 transposition buffer is shown

in Fig. 5(b). It consists of 16 registers arranged in 4 rows and

4 columns. An (N × N) transposition buffer can store N values

in any one column of registers by enabling them by one of the

enable signals ENi for i = 0, 1, · · ·, N − 1. One can select the

content of one of the rows of registers through the MUXes.

During the first N successive cycles, the DCT module

receives the successive columns of (N × N ) block of input

for the computation of STAGE-1, and stores the intermediate

results in the registers of successive columns in the transposition buffer. In the next N cycles, contents of successive rows

of the transposition buffer are selected by the MUXes and fed

as input to the 1-D DCT module. N MUXes are used at the

input of the 1-D DCT module to select either the columns

from the input buffer (during the first N cycles) or the rows

from the transposition buffer (during the next N cycles).
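The 2N-cycle schedule of the folded structure can be sketched as a cycle loop. This is a behavioural model under stated assumptions: the name `folded_dct2d` is hypothetical, a single `matvec` call stands in for the one shared 1-D DCT module, and no intermediate scaling is applied.

```python
C4 = [[64, 64, 64, 64], [83, 36, -36, -83],
      [64, -64, -64, 64], [36, -83, 83, -36]]


def matvec(matrix, vector):
    return [sum(c * v for c, v in zip(row, vector)) for row in matrix]


def folded_dct2d(block, kernel):
    """2-D DCT with one 1-D DCT module reused over 2N cycles."""
    n = len(kernel)
    regs = [[0] * n for _ in range(n)]   # transposition buffer registers
    out_rows = []
    for cycle in range(2 * n):
        if cycle < n:
            # First N cycles: input MUXes pass column `cycle` of the block.
            column = [block[r][cycle] for r in range(n)]
            result = matvec(kernel, column)
            for r in range(n):
                regs[r][cycle] = result[r]   # EN_cycle enables one register column
        else:
            # Next N cycles: buffer MUXes select one row of registers.
            row = regs[cycle - n]
            out_rows.append(matvec(kernel, row))
    return out_rows


block = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]]
for row in folded_dct2d(block, C4):
    print(row)
```

Because the single module alternates between the two stages, one (N × N) block is completed every 2N cycles in this model.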

B. Full-Parallel Structure for 2-D Integer DCT

The full-parallel structure for (N × N)-point 2-D integer DCT is shown in Fig. 6(a). It consists of two N-point 1-D DCT modules and a transposition buffer. The structure of the 4 × 4 transposition buffer for the full-parallel structure is shown in Fig. 6(b). It consists of 16 register cells (RC) (shown in Fig. 6(c)) arranged in 4 rows and 4 columns. An N × N transposition buffer can store N values in a cycle either row-wise or column-wise by selecting the inputs by the MUXes at the input of the RCs. The output from the RCs can also be collected either row-wise or column-wise. To read the output from the buffer, N (2N − 1):1 MUXes (shown in Fig. 6(d)) are used, where the outputs of the ith row and the ith column of RCs are fed as input to the ith MUX. For the first N successive cycles, the ith MUX provides the output of N successive RCs on the ith row. In the next N successive cycles, the ith MUX provides the output of N successive RCs on the ith column. By this arrangement, in the first N cycles, we can read the output of N successive columns of RCs and in the next N cycles, we can read the output of N successive rows of RCs. The transposition buffer in this case allows both read and write operations concurrently. If results are read and stored column-wise during one set of N cycles, then in the next N successive cycles, results are read and stored in the transposition buffer row-wise. The first 1-D DCT module receives the inputs column-wise from the input buffer. It computes a column of intermediate output and stores it in the transposition buffer. The second 1-D DCT module receives the rows of the intermediate result from the transposition buffer and computes the 2-D DCT output row-wise.

Fig. 5. Folded structure of (N × N)-point 2-D integer DCT. (a) The folded 2-D DCT architecture. (b) Structure of the transposition buffer for input size 4 × 4.

Fig. 6. Full-parallel structure of (N × N)-point 2-D integer DCT. (a) The full-parallel 2-D DCT architecture. (b) Structure of the transposition buffer for input size 4 × 4. (c) Register cell RCij. (d) 7-to-1 MUX for 4 × 4 transposition buffer.

Suppose that in the first N cycles, the intermediate results are stored column-wise and all the columns are filled in with intermediate results; then in the next N cycles, the contents of successive rows of the transposition buffer are selected by the MUXes and fed as input to the 1-D DCT module of the second stage. During this period, the output of the 1-D DCT module of the first stage is stored row-wise. In the next N cycles, results are read and written column-wise. The alternating column-wise and row-wise read and write operations with the transposition buffer continue. The transposition buffer in this case introduces a pipeline latency of N cycles required to fill in the transposition buffer for the first time.
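The alternating access pattern can be modelled in a few lines. The sketch below is a simplified software analogue, assuming a hypothetical class `TranspositionBuffer` whose `step` method reads one line of cells and overwrites that same line in the same cycle; the access direction flips every N cycles. It shows that columns streamed in come out as rows after a fill latency of N cycles.

```python
class TranspositionBuffer:
    """N x N buffer with concurrent read/write; direction alternates every N cycles."""

    def __init__(self, n):
        self.n = n
        self.cells = [[None] * n for _ in range(n)]
        self.cycle = 0

    def step(self, vec):
        n = self.n
        k = self.cycle % n
        column_wise = (self.cycle // n) % 2 == 0
        if column_wise:
            out = [self.cells[r][k] for r in range(n)]   # read column k
            for r in range(n):
                self.cells[r][k] = vec[r]                # overwrite column k
        else:
            out = list(self.cells[k])                    # read row k
            self.cells[k] = list(vec)                    # overwrite row k
        self.cycle += 1
        return out


def stream_rows(blocks):
    """Feed blocks column by column; return the lines read back, minus the fill latency."""
    n = len(blocks[0])
    buf = TranspositionBuffer(n)
    outputs = []
    flush = [[[0] * n for _ in range(n)]]   # one dummy block drains the last real block
    for block in blocks + flush:
        for c in range(n):
            column = [block[r][c] for r in range(n)]
            outputs.append(buf.step(column))
    return outputs[n:]                      # drop the first N cycles (empty buffer)


b0 = [[r * 4 + c for c in range(4)] for r in range(4)]
b1 = [[100 + r * 4 + c for c in range(4)] for r in range(4)]
assert stream_rows([b0, b1]) == b0 + b1    # every output line is a row of a block
```

No cycle is lost between consecutive blocks in this model, which is the property that lets the two 1-D DCT modules of the full-parallel structure run continuously.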

V. PRUNED DCT ARCHITECTURE

Fig. 7. Encoding and decoding chain involving DCT in the HEVC codec. (a) Block diagram of a typical hybrid video encoder, e.g., HEVC. Note that the decoder contains a subset of the blocks in the encoder, except for having entropy decoding instead of entropy coding. (b) Breakdown of the blocks in the shaded region in (a) for Main Profile.

Fig. 7(a) illustrates a typical hybrid video encoder, e.g., HEVC, and Fig. 7(b) shows the breakdown of the blocks in the shaded region in Fig. 7(a) for Main Profile, where the encoding and decoding chain involves DCT, quantization, de-quantization, and IDCT based on the transform design proposed in [14]. As shown in Fig. 7(b), data before and after the DCT/IDCT transform are constrained to a maximum of 16 bits regardless of internal bit depth and DCT length. Therefore, a scaling operation is required to retain the wordlength of intermediate data. In the Main Profile, which supports only 8-bit samples, if bit truncations are not performed, the wordlength of the DCT output would be $\log_2 N + 6$ bits more than that of the input to avoid overflow. The output wordlengths of the first and second forward transforms are scaled down to 16 bits by truncating the least significant $\log_2 N - 1$ and $\log_2 N + 6$ bits, respectively, as shown in the figure. The resulting coefficients from the inverse transforms are also scaled down by the fixed scaling factors of 7 and 12 bits, respectively. It should be noted that additional clipping of $\log_2 N - 1$ most significant bits is required to maintain 16 bits after the first inverse transform and subsequent scaling.
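The wordlength bookkeeping for the forward path can be traced with a short calculation. This sketch assumes a 9-bit input to the first forward transform (the value listed in column A of Table III for DCT-1); the function names are hypothetical.

```python
def forward_truncations(n):
    """Bits truncated after the first and second forward transforms of length n."""
    k = n.bit_length() - 1          # log2(n) for n a power of two
    return (k - 1, k + 6)


def forward_wordlengths(n, input_bits=9):
    """Wordlength after each forward stage, assuming a growth of log2(n) + 6 bits per stage."""
    k = n.bit_length() - 1
    growth = k + 6
    shift_1, shift_2 = forward_truncations(n)
    after_dct1 = input_bits + growth - shift_1
    after_dct2 = after_dct1 + growth - shift_2
    return (after_dct1, after_dct2)


for n in (4, 8, 16, 32):
    print(n, forward_truncations(n), forward_wordlengths(n))
```

For every supported length the two stage outputs come to 16 bits, which is the constraint stated above; the inverse path is not modelled here because it also involves clipping.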

The scaling operation, however, could be integrated with

the computation of the transform without significant impact

on the coding result. The SAU includes several left-shift

operations as shown in Figs. 1(b) and 3(b) whereas the scaling

process is equivalent to performing a right shift. Therefore,

by manipulating the shift operations in SAU circuit, we can

optimize the complexity of the proposed DCT structure.

Fig. 8. (a) Dot diagram for the pruning of the SAU for the second 8-point forward DCT. (b) The modified structure of the SAU after pruning. (c) Dot diagram for the truncation after the SAU and the OAU to generate y(0) for 8-point DCT.

TABLE III
THE INTERNAL REGISTER WORDLENGTH AND THE NUMBER OF PRUNED BITS FOR THE IMPLEMENTATION OF EACH TRANSFORM (DCT-1, DCT-2, IDCT-1 AND IDCT-2 IN FIG. 7(B)).

| N | T | A | B | C | D | E | F | G | H | I | J |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 4 | T1 | 9 | 8 | 0 | 1 | 0 | 0 | 0 | 1 | 16 | 16 |
| 4 | T2 | 16 | 8 | 0 | 4 | 4 | 0 | 0 | 8 | 16 | 16 |
| 4 | T3 | 16 | 7 | 1 | 4 | 3 | 0 | 1 | 7 | 16 | 16 |
| 4 | T4 | 16 | 7 | 1 | 4 | 3 | 5 | 0 | 12 | 16 | 12 |
| 8 | T1 | 9 | 8 | 1 | 2 | 0 | 0 | 0 | 2 | 15 | 16 |
| 8 | T2 | 16 | 8 | 1 | 4 | 4 | 1 | 0 | 9 | 16 | 16 |
| 8 | T3 | 16 | 7 | 2 | 4 | 3 | 0 | 2 | 7 | 16 | 16 |
| 8 | T4 | 16 | 7 | 2 | 4 | 3 | 5 | 0 | 12 | 16 | 13 |
| 16 | T1 | 9 | 8 | 2 | 3 | 0 | 0 | 0 | 3 | 14 | 16 |
| 16 | T2 | 16 | 8 | 2 | 4 | 4 | 2 | 0 | 10 | 16 | 16 |
| 16 | T3 | 16 | 7 | 3 | 4 | 3 | 0 | 3 | 7 | 16 | 16 |
| 16 | T4 | 16 | 7 | 3 | 4 | 3 | 5 | 0 | 12 | 16 | 14 |
| 32 | T1 | 9 | 8 | 3 | 4 | 0 | 0 | 0 | 4 | 13 | 16 |
| 32 | T2 | 16 | 8 | 3 | 4 | 4 | 3 | 0 | 11 | 16 | 16 |
| 32 | T3 | 16 | 7 | 4 | 4 | 3 | 0 | 4 | 7 | 16 | 16 |
| 32 | T4 | 16 | 7 | 4 | 4 | 3 | 5 | 0 | 12 | 16 | 15 |

A: input wordlength, B: bit increment in IAU/SAU without truncation, C: bit increment in OAU without truncation, D: the number of truncated bits in SAU, E: the number of truncated bits after SAU, F: the number of truncated bits after OAU, G: the number of clipped bits, H: total scale factor (H=D+E+F), I: the number of bits of SAU output (I=A+B-D-E), and J: the number of bits of DCT output (J=A+B+C-D-E-F-G). T1, T2, T3, and T4 denote DCT-1, DCT-2, IDCT-1, and IDCT-2 in Fig. 7(b), respectively.

TABLE IV
EXPERIMENTAL RESULTS FOR PROPOSED METHODS COMPARED TO HM10.0 ANCHOR. BD-RATE RESULTS FOR THE LUMA AND CHROMA (Cb AND Cr) COMPONENTS ARE SHOWN AS [Y(%) / Cb(%) / Cr(%)].

| Configuration | PF (%) | PI (%) | PB (%) |
|---|---|---|---|
| Main AI | 0.1/0.1/0.1 | 0.8/1.9/2.0 | 1.0/2.1/2.1 |
| Main RA | 0.1/0.1/0.2 | 0.8/1.5/1.6 | 0.9/1.7/1.8 |
| Main LDB | 0.1/0.3/0.0 | 0.9/1.9/1.4 | 1.0/1.8/1.6 |
| Main LDP | 0.1/0.1/0.0 | 1.0/1.7/1.4 | 1.1/1.6/1.5 |

Fig. 8(a) shows the dot diagram of the SAU to illustrate the pruning with an example of the second forward DCT of length 8. Each row of the dot diagram contains 17 dots, which represent the output of the IAU or its shifted form (for an input wordlength of 16 bits). The final sum without truncation would be 25 bits wide. However, we use only 16 bits in the final sum, and the remaining 9 bits are finally discarded. To reduce the computational complexity, some of the least significant bits (LSBs) in the SAU (in the gray area in Fig. 8(a)) can be pruned. The worst-case error of the pruning scheme occurs when all the truncated bits are one. In this case, the sum of the truncated values amounts to 88, which is only 17% of the weight of the LSB of the final output, $2^9$. Therefore, the impact of the proposed pruning on the final output is not significant. However, as we prune more bits, the truncation error increases rapidly. Fig. 8(b) shows the modified structure of the SAU after pruning.
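The worst-case argument above can be sketched numerically. The snippet below is only an illustration under stated assumptions: it models pruning as dropping a fixed number of lowest bit columns from every shifted row, and the row shifts passed in the example are hypothetical (the actual layout is the one drawn in Fig. 8(a)). The function name `worst_case_pruning_error` is likewise introduced here for illustration. Only the last ratio uses figures quoted in the text (88 and $2^9$).

```python
def worst_case_pruning_error(row_shifts, pruned_columns):
    """Largest value lost when the lowest `pruned_columns` bit positions of
    every shifted row are discarded, i.e. when all dropped bits equal one."""
    total = 0
    for shift in row_shifts:
        # A row left-shifted by `shift` has no bits below position `shift`,
        # so it only loses bit positions shift .. pruned_columns - 1.
        if shift < pruned_columns:
            total += (1 << pruned_columns) - (1 << shift)
    return total


# Hypothetical row shifts, chosen only to exercise the function.
example_error = worst_case_pruning_error([0, 1, 2, 3, 6], pruned_columns=4)

# Figures quoted in the text: worst-case sum 88 against an output LSB weight of 2^9.
quoted_ratio = 88 / 2**9

print(example_error, f"{quoted_ratio:.1%}")
```

Under this model, each additional pruned column roughly doubles the worst-case loss of every affected row, which is consistent with the remark that the truncation error grows rapidly as more bits are pruned.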

The output of the SAU is the product of the DCT coefficients with the input values. In the HEVC transform design [14], 16-bit multipliers are used for all internal multiplications. To have the same precision, the output of the SAU is also scaled down to 16 bits by truncating 4 more LSBs, as shown in the gray area before the OAU in Fig. 8(c) for the output addition corresponding to the computation of y(0). The LSB of the result of the OAU is truncated again to retain a 16-bit transform output. By careful pruning, the complexity of the SAU and OAU can be significantly reduced without significant impact on the error performance. The overall reduction of the hardware complexity by the pruning scheme is discussed in Section VI.

Table III lists the internal register wordlength and the number of pruned bits for the implementation of each transform (DCT-1, DCT-2, IDCT-1, and IDCT-2 in Fig. 7(b)). In the case of the second forward 8-point DCT (the 7-th row in Table III, N = 8 and T2), the input wordlength is 16 bits. If no truncation is performed in the processing of the IAU, SAU, and OAU, the output wordlength becomes 25 bits, which is the sum of the values in columns A, B, and C. By the truncation procedure illustrated in Fig. 8, using the numbers of truncated bits listed in columns D, E, and F of Table III, the wordlength of the DCT output becomes 16 bits. Note that the scale factor listed in column H satisfies the configuration in Fig. 7(b), and the wordlengths of the multiplier output and the final DCT output, listed in columns I and J, respectively, are less than or equal to 16 bits, satisfying the constraints described in [14] over all the transforms T1-T4 for N = 4, 8, 16, and 32.
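The column relations stated in the note of Table III (H=D+E+F, I=A+B-D-E, J=A+B+C-D-E-F-G) and the 16-bit constraint can be checked mechanically. The following is a small sketch that transcribes the table entries; the names `TABLE_III` and `row_is_consistent` are introduced here for illustration only.

```python
# Table III rows as (N, T, A, B, C, D, E, F, G, H, I, J), transcribed from the paper.
TABLE_III = [
    (4,  "T1",  9, 8, 0, 1, 0, 0, 0,  1, 16, 16),
    (4,  "T2", 16, 8, 0, 4, 4, 0, 0,  8, 16, 16),
    (4,  "T3", 16, 7, 1, 4, 3, 0, 1,  7, 16, 16),
    (4,  "T4", 16, 7, 1, 4, 3, 5, 0, 12, 16, 12),
    (8,  "T1",  9, 8, 1, 2, 0, 0, 0,  2, 15, 16),
    (8,  "T2", 16, 8, 1, 4, 4, 1, 0,  9, 16, 16),
    (8,  "T3", 16, 7, 2, 4, 3, 0, 2,  7, 16, 16),
    (8,  "T4", 16, 7, 2, 4, 3, 5, 0, 12, 16, 13),
    (16, "T1",  9, 8, 2, 3, 0, 0, 0,  3, 14, 16),
    (16, "T2", 16, 8, 2, 4, 4, 2, 0, 10, 16, 16),
    (16, "T3", 16, 7, 3, 4, 3, 0, 3,  7, 16, 16),
    (16, "T4", 16, 7, 3, 4, 3, 5, 0, 12, 16, 14),
    (32, "T1",  9, 8, 3, 4, 0, 0, 0,  4, 13, 16),
    (32, "T2", 16, 8, 3, 4, 4, 3, 0, 11, 16, 16),
    (32, "T3", 16, 7, 4, 4, 3, 0, 4,  7, 16, 16),
    (32, "T4", 16, 7, 4, 4, 3, 5, 0, 12, 16, 15),
]


def row_is_consistent(row):
    """Check the relations from the table note and the 16-bit limits."""
    _, _, a, b, c, d, e, f, g, h, i, j = row
    return (
        h == d + e + f
        and i == a + b - d - e
        and j == a + b + c - d - e - f - g
        and i <= 16
        and j <= 16
    )


all_consistent = all(row_is_consistent(r) for r in TABLE_III)

# Second forward 8-point DCT (N = 8, T2): wordlength if nothing were truncated.
_, _, a, b, c, *_ = TABLE_III[5]
untruncated_bits = a + b + c

print(all_consistent, untruncated_bits)
```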

In Fig. 2, as the odd-indexed output samples y(1), y(3), ..., y(N − 1) are scaled down by the pruning procedure, the even-indexed output samples y(0), y(2), ..., y(N − 2), which come from the (N/2)-point integer DCT, should also be scaled down correspondingly. For example, since the scaling factor of the 8-point second DCT (T2) is $1/2^9$, as shown in column H of Table III, the output of the internal 4-point DCT should also be scaled down by $1/2^9$. Since we have the 4-point DCT with scaling factor $1/2^8$, as shown in the third row and column H, we can reuse it and perform an additional scaling by 1/2.
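As a worked check of this reuse argument, the column-H entries of Table III for T2 are 8, 9, 10, and 11 for N = 4, 8, 16, and 32. The notation $H_{T2}(N)$ below is introduced here only for illustration and does not appear in the paper.

```latex
2^{-H_{T2}(8)} = 2^{-9} = 2^{-8}\cdot 2^{-1} = 2^{-H_{T2}(4)}\cdot 2^{-1},
\qquad
H_{T2}(N) = H_{T2}(N/2) + 1 \quad \text{for } N = 8, 16, 32 .
```

The same one-bit step per doubling of N appears in column H for T1 (1, 2, 3, 4), whereas the entries for T3 and T4 stay at 7 and 12 for all lengths.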

TABLE V
AREA, TIME, AND POWER COMPLEXITIES OF PROPOSED ARCHITECTURES AND DIRECT IMPLEMENTATION OF REFERENCE ALGORITHM OF INTEGER DCT FOR VARIOUS LENGTHS BASED ON SYNTHESIS RESULT USING TSMC 90-nm CMOS LIBRARY.

| Design Architecture | N | Area Consumption | Computation Time | Power Consumption | Area-Delay Product | Energy per Sample | Excess Area-Delay Product | Excess Energy per Sample |
|---|---|---|---|---|---|---|---|---|
| Using Reference Algorithm | 4 | 4656 | 2.84 | 0.28 | 13223 | 0.68 | 18.95% | 27.18% |
| Using Reference Algorithm | 8 | 21457 | 3.25 | 1.68 | 69735 | 2.01 | 51.23% | 59.03% |
| Using Reference Algorithm | 16 | 88451 | 3.67 | 7.45 | 324615 | 4.48 | 57.79% | 67.20% |
| Using Reference Algorithm | 32 | 332889 | 3.96 | 29.75 | 1318240 | 8.96 | 51.06% | 68.66% |
| Proposed Algorithm Before Pruning | 4 | 4399 | 2.95 | 0.26 | 12977 | 0.63 | 16.73% | 17.10% |
| Proposed Algorithm Before Pruning | 8 | 16772 | 3.34 | 1.31 | 56018 | 1.57 | 21.48% | 24.02% |
| Proposed Algorithm Before Pruning | 16 | 66130 | 3.92 | 5.73 | 259229 | 3.45 | 26.00% | 28.77% |
| Proposed Algorithm Before Pruning | 32 | 253432 | 4.48 | 23.17 | 1135375 | 6.98 | 30.10% | 31.46% |
| Proposed Algorithm After Pruning | 4 | 3794 | 2.93 | 0.22 | 11116 | 0.54 | -- | -- |
| Proposed Algorithm After Pruning | 8 | 13931 | 3.31 | 1.06 | 46111 | 1.26 | -- | -- |
| Proposed Algorithm After Pruning | 16 | 52480 | 3.92 | 4.45 | 205721 | 2.68 | -- | -- |
| Proposed Algorithm After Pruning | 32 | 196103 | 4.45 | 17.63 | 872658 | 5.31 | -- | -- |

Area, computation time, and power consumption are measured in sq.um, ns, and mW, respectively. Area-delay product and energy per sample are in the units of sq.um×ns and mW×ns, respectively.

To understand the impact of using the pruned transforms within HEVC, we have integrated them into the HEVC reference software, HM10.0², for both luma and chroma residuals. The proposed pruned forward DCT is a non-normative change and can be implemented in encoders to produce HEVC-compliant bitstreams. Therefore, we perform one set of experiments, prune-forward (PF), in which the forward DCT used is the proposed pruned version, while the inverse DCT is the same as that defined in the HEVC Final Draft International Standard (FDIS) [7]. In addition, we perform two other sets of experiments: (i) prune-inverse (PI), in which the forward DCT is not pruned but the inverse DCT is pruned, and (ii) prune-both (PB), in which both the forward and inverse DCT are the pruned versions. Both PI and PB are no longer standards compliant, since the pruned inverse DCT is different from what is specified in the HEVC standard. We also note here that both the encoder and decoder use the same pruned inverse transforms, such that any coding loss is due to the loss in accuracy of the inverse transform rather than drift between encoder and decoder. We performed the tests using the JCT-VC HM10.0 common test conditions [18] for the Main Profile, i.e., on the test sequences³ mandated for the All-Intra (AI), Random-Access (RA), Low-Delay B (LDB), and Low-Delay P (LDP) configurations, over QP values of 22, 27, 32, and 37. Using HM10.0 as the anchor, we measure the BD-Rate [19] values of each of the PF, PI, and PB experiments to get a measure of the coding performance impact. One can interpret the BD-Rate number as the percentage bitrate difference for the same peak signal-to-noise ratio (PSNR); note that a positive BD-Rate number indicates coding loss compared to the anchor. Table IV summarizes the experimental results of using the different combinations of the proposed pruned forward and inverse DCT. Using the pruned forward DCT alone (PF case) gives an average coding loss over each coding configuration of about 0.1% in the luma component, suggesting that the use of the pruned forward DCT in the encoder has an insignificant coding impact. On the other hand, PI gives an average coding loss ranging from 0.8% to 1.0% in the luma component over the different coding configurations. This is expected since the bit truncation after each transform stage is larger. Finally, PB gives an average coding loss ranging from 0.9% to 1.1% in the luma component. It is interesting to note that the coding loss in PB is approximately the sum of that of PF and PI. We have also observed that the coding performance difference for each of PF, PI, and PB is fairly consistent across the different classes of test sequences.

² Available through SVN via http://hevc.hhi.fraunhofer.de/svn/svn_HEVCSoftware/tags/HM-10.0/.

³ The test sequences are classified into Classes A-F. Classes A through D contain sequences with resolutions of 2560 × 1600, 1920 × 1080, 832 × 480, and 416 × 240, respectively. Class E consists of video conferencing sequences of resolution 1280 × 720, while Class F consists of screen content videos of resolutions ranging from 832 × 480 to 1280 × 720.
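The observation that the PB loss is approximately the sum of the PF and PI losses can be checked against the luma (Y) figures of Table IV. This is a minimal sketch that simply transcribes those figures; the dictionary name `LUMA_BD_RATE` is introduced here for illustration.

```python
# Luma BD-rate (%) from Table IV as (PF, PI, PB) per coding configuration.
LUMA_BD_RATE = {
    "Main AI":  (0.1, 0.8, 1.0),
    "Main RA":  (0.1, 0.8, 0.9),
    "Main LDB": (0.1, 0.9, 1.0),
    "Main LDP": (0.1, 1.0, 1.1),
}

# Absolute gap between PB and PF + PI, rounded to the table's one-decimal precision.
gaps = {
    cfg: abs(round(pb - (pf + pi), 1))
    for cfg, (pf, pi, pb) in LUMA_BD_RATE.items()
}
largest_gap = max(gaps.values())

for cfg, gap in gaps.items():
    print(f"{cfg}: |PB - (PF + PI)| = {gap:.1f} percentage points")
```

At the reported precision, the gap never exceeds one unit in the last decimal place for any of the four configurations.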

VI. IMPLEMENTATION RESULTS AND DISCUSSIONS

A. Synthesis Results of 1-D Integer DCT

We have coded the architecture derived from the reference algorithm of Section II as well as the proposed architectures for different transform lengths in VHDL, and synthesized them with Synopsys Design Compiler using the TSMC 90-nm General Purpose (GP) CMOS library [20]. The wordlength of the input samples is chosen to be 16 bits. The area, computation time, and power consumption (at 100 MHz clock frequency) obtained from the synthesis reports are shown in Table V. It is found that the proposed architecture involves nearly 14% less area-delay product (ADP) and 19% less energy per sample (EPS) compared to the direct implementation of the reference algorithm, on average, for integer DCT of lengths 4, 8, 16, and 32. An additional 19% saving in ADP and 20% saving in EPS are also achieved by the pruning scheme with nearly the same throughput rate. The pruning scheme is more effective for higher-length DCTs since the percentage of total area occupied by the SAUs increases as the DCT length increases, and hence more adders are affected by the pruning scheme.
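The average savings quoted above can be reproduced from the ADP and EPS columns of Table V. The sketch below assumes that "on average" means the unweighted mean of the per-length relative savings over N = 4, 8, 16, and 32; that interpretation and the helper name `mean_saving_percent` are assumptions made here, not statements from the paper.

```python
# ADP (sq.um x ns) and EPS (mW x ns) from Table V for N = 4, 8, 16, 32.
ADP = {
    "reference": (13223, 69735, 324615, 1318240),
    "proposed":  (12977, 56018, 259229, 1135375),
    "pruned":    (11116, 46111, 205721, 872658),
}
EPS = {
    "reference": (0.68, 2.01, 4.48, 8.96),
    "proposed":  (0.63, 1.57, 3.45, 6.98),
    "pruned":    (0.54, 1.26, 2.68, 5.31),
}


def mean_saving_percent(metric, baseline, design):
    """Unweighted mean over the four lengths of (1 - design / baseline), in percent."""
    savings = [1 - d / b for b, d in zip(metric[baseline], metric[design])]
    return 100 * sum(savings) / len(savings)


adp_proposed = mean_saving_percent(ADP, "reference", "proposed")
eps_proposed = mean_saving_percent(EPS, "reference", "proposed")
adp_pruning = mean_saving_percent(ADP, "proposed", "pruned")
eps_pruning = mean_saving_percent(EPS, "proposed", "pruned")

print(f"proposed vs reference: ADP {adp_proposed:.1f}%, EPS {eps_proposed:.1f}%")
print(f"pruning vs proposed:   ADP {adp_pruning:.1f}%, EPS {eps_pruning:.1f}%")
```

Under this interpretation, the same helper applied to the reference and pruned designs gives the overall figures quoted in the conclusions.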

B. Comparison with the Existing Architectures

TABLE VI
COMPARISON OF DIFFERENT 1-D INTEGER DCT ARCHITECTURES WITH THE PROPOSED ARCHITECTURES

| Design | Technology | Gate Counts | Max Freq. (MHz) |
|---|---|---|---|
| Shen et al. [10] | 0.13-µm | 134 K | 350 |
| Park et al. [11] | 0.18-µm | 52 K | 300 |
| Reusable Architecture-1 | 90-nm | 131 K | 187 |
| Reusable Architecture-2 | 90-nm | 103 K | 187 |

TABLE VII
2-D INTEGER TRANSFORM WITH FOLDED STRUCTURE AND FULL-PARALLEL STRUCTURE.

| Architecture | Folded | Full-parallel |
|---|---|---|
| Technology | TSMC 90-nm GP CMOS | TSMC 90-nm GP CMOS |
| Total Gate Counts | 208 K | 347 K |
| Operating Frequency | 187 MHz | 187 MHz |
| Processing Rate (pels/cycle) | 16 | 32 |
| Throughput | 2.992 G | 5.984 G |
| Total Power Consumption | 40.04 mW | 67.57 mW |
| Energy per Sample (EPS) | 13.38 pJ | 11.29 pJ |

We have named the proposed reusable integer DCT architecture before applying pruning as reusable architecture-1 and that after applying pruning as reusable architecture-2. The processing rate of the proposed integer DCT unit is 16 pixels per cycle considering the 2-D folded structure, since the 2-D transform of a 32 × 32 block can be obtained in 64 cycles. In order to support 8K Ultra-High-Definition (UHD) (7680×4320) @ 30 frames/sec (fps) in 4:2:0 YUV format, which is one of the applications of HEVC [21], the proposed reusable architectures should work at an operating frequency of about 94 MHz or higher (7680×4320×30×1.5/16). The computation times of 5.56 ns and 5.27 ns for reusable architectures-1 and 2, respectively (obtained from the synthesis without any timing constraint), are sufficient for this application. Also, a computation time of less than 5.358 ns is needed in order to support 8K UHD @ 60 fps, which can be achieved with a slight increase in silicon area when we synthesize the reusable architecture-1 with the desired timing constraint. Table VI lists the synthesis results of the proposed reusable architectures as well as the existing architectures for HEVC for N = 32 in terms of gate count (normalized by the area of a 2-input NAND gate), maximum operating frequency, processing rate, throughput, and supported video format. The proposed reusable architecture-2 requires larger area than the design of [11], but offers much higher throughput. Also, the proposed architectures involve lower gate counts as well as higher throughput than the design of [10]. In particular, the designs of [10] and [11] require very high operating frequencies of 761 MHz and 1403 MHz in order to support UHD @ 60 fps, since the 2-D transform of a 32 × 32 block can be computed in 261 cycles and 481 cycles, respectively. However, the 187 MHz operating frequency which is needed to support 8K UHD @ 60 fps by the proposed architecture can be obtained using TSMC 90-nm or newer technologies, as shown in Table VI. Also, we could obtain the frequency of 94 MHz for UHD @ 30 fps and 4:2:0 YUV format using TSMC 0.15-µm or newer technologies.
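The operating frequencies quoted in this subsection follow from the sample rate of the video format and the number of cycles needed per 32 × 32 block. The sketch below restates that arithmetic; the function name `required_clock_mhz` and its parameters are introduced here for illustration, and the factor 1.5 samples per pixel corresponds to the 4:2:0 format used in the text.

```python
def required_clock_mhz(width, height, fps, cycles_per_block,
                       block_samples=32 * 32, samples_per_pixel=1.5):
    """Clock frequency (MHz) needed when one 32x32 2-D transform
    takes `cycles_per_block` cycles."""
    samples_per_second = width * height * fps * samples_per_pixel
    samples_per_cycle = block_samples / cycles_per_block
    return samples_per_second / samples_per_cycle / 1e6


# Proposed folded structure: 64 cycles per 32x32 block, i.e. 16 samples per cycle.
proposed_30 = required_clock_mhz(7680, 4320, 30, cycles_per_block=64)
proposed_60 = required_clock_mhz(7680, 4320, 60, cycles_per_block=64)

# Designs of [10] and [11]: 261 and 481 cycles per 32x32 block.
shen_60 = required_clock_mhz(7680, 4320, 60, cycles_per_block=261)
park_60 = required_clock_mhz(7680, 4320, 60, cycles_per_block=481)

# Clock period (ns) corresponding to the 60 fps requirement.
period_60_ns = 1e3 / proposed_60

print(f"{proposed_30:.1f} {proposed_60:.1f} {shen_60:.0f} {park_60:.0f} {period_60_ns:.3f}")
```

The 30 fps result is slightly below the 94 MHz figure used in the text, which appears to be rounded up.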

C. Synthesis Results of 2-D Integer DCT

We also synthesized the folded and full-parallel structures for 2-D integer DCT. We have listed the total gate counts, processing rate, throughput, power consumption, and EPS in Table VII. We set the operating frequency to 187 MHz for both cases to support UHD @ 60 fps. The 2-D full-parallel structure yields 32 samples in each cycle after an initial latency of 32 cycles, providing double the throughput of the folded structure. However, the full-parallel architecture consumes 1.69 times the power of the folded architecture, since it has two 1-D DCT units and nearly the same complexity of transposition buffer, while its throughput is double that of the folded design. Thus, the full-parallel design involves 15.6% less EPS.
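The EPS figures of Table VII can be recovered as power divided by throughput. The sketch below uses the power, frequency, and processing-rate values listed in Table VII; the helper name `energy_per_sample_pj` is introduced here for illustration.

```python
def energy_per_sample_pj(power_mw, freq_mhz, samples_per_cycle):
    """Energy per sample in pJ: power (mW) divided by throughput (Gsamples/s)."""
    throughput_gsps = freq_mhz * samples_per_cycle / 1e3
    return power_mw / throughput_gsps


folded_eps = energy_per_sample_pj(40.04, 187, 16)          # folded structure
full_parallel_eps = energy_per_sample_pj(67.57, 187, 32)   # full-parallel structure

power_ratio = 67.57 / 40.04
eps_saving_percent = 100 * (1 - full_parallel_eps / folded_eps)

print(f"{folded_eps:.2f} pJ, {full_parallel_eps:.2f} pJ, "
      f"power ratio {power_ratio:.2f}, EPS saving {eps_saving_percent:.1f}%")
```

Since the throughput doubles while the power grows by a factor below two, the energy per sample of the full-parallel design comes out lower.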

TABLE VI (continued)

| Design | Pixels/Cycle | Throughput | Supporting Video Format |
|---|---|---|---|
| Shen et al. [10] | 4 | 1.40 Gsps | 4096x2048 @ 30 fps |
| Park et al. [11] | 2.12 | 0.63 Gsps | 3840x2160 @ 30 fps |
| Reusable Architecture-1 | 16 | 2.99 Gsps | 7680x4320 @ 60 fps |
| Reusable Architecture-2 | 16 | 2.99 Gsps | 7680x4320 @ 60 fps |

The maximum frequencies in Table VI are given in MHz.

VII. SUMMARY AND CONCLUSIONS

In this paper, we have proposed area- and power-efficient architectures for the implementation of integer DCT of different lengths to be used in HEVC. The computation of N-point 1-D DCT involves an (N/2)-point 1-D DCT and a vector-matrix multiplication with a constant matrix of size (N/2) × (N/2). We have shown that the MCM-based architecture is highly regular and involves significantly less area-delay complexity and less energy consumption than the direct implementation of matrix multiplication for odd DCT coefficients. We have used the proposed architecture to derive a reusable architecture for DCT which can compute the DCT of lengths 4, 8, 16, and 32 with a throughput of 32 output coefficients per cycle. We have also shown that the proposed design could be pruned to reduce the area and power complexity of the forward DCT without significant loss of quality. We have proposed power-efficient architectures for folded and full-parallel implementations of 2-D DCT, where no data movement takes place within the transposition buffer. From the synthesis result, it is found that the proposed algorithm with pruning involves nearly 30% less ADP and 35% less EPS compared to the reference algorithm, on average, for integer DCT of lengths 4, 8, 16, and 32 with nearly the same throughput rate.

ACKNOWLEDGMENT

The authors would like to thank Dr. Arnaud Tisserand for providing us with the HDL code for the computation of the integer DCT based on their algorithm.

REFERENCES

[1] N. Ahmed, T. Natarajan, and K. Rao, “Discrete cosine transform,” IEEE Trans. Comput., vol. C-23, no. 1, pp. 90–93, Jan. 1974.

[2] W. Cham and Y. Chan, “An order-16 integer cosine transform,” IEEE

Trans. Signal Process., vol. 39, no. 5, pp. 1205–1208, May 1991.

[3] Y. Chen, S. Oraintara, and T. Nguyen, “Video compression using integer

DCT,” in Proc. IEEE International Conference on Image Processing,

Sep. 2000, pp. 844–845.

[4] J. Wu and Y. Li, “A new type of integer DCT transform radix and

its rapid algorithm,” in Proc. International Conference on Electric

Information and Control Engineering, Apr. 2011, pp. 1063–1066.

[5] A. M. Ahmed and D. D. Day, “Comparison between the cosine and

Hartley based naturalness preserving transforms for image watermarking

and data hiding,” in Proc. First Canadian Conference on Computer and

Robot Vision, May 2004, pp. 247–251.

[6] M. N. Haggag, M. El-Sharkawy, and G. Fahmy, “Efficient fast

multiplication-free integer transformation for the 2-D DCT H.265 standard,” in Proc. International Conference on Image Processing, Sep.

2010, pp. 3769–3772.

[7] B. Bross, W.-J. Han, J.-R. Ohm, G. J. Sullivan, Y.-K. Wang, and

T. Wiegand, “High Efficiency Video Coding (HEVC) text specification

draft 10 (for FDIS & Consent),” in JCT-VC L1003, Geneva, Switzerland,

Jan. 2013.

[8] T. Wiegand, G. J. Sullivan, G. Bjontegaard, and A. Luthra, “Overview

of the H.264/AVC video coding standard,” IEEE Trans. Circuits Syst.

Video Technol., vol. 13, no. 7, pp. 560–576, Jul. 2003.

[9] A. Ahmed, M. U. Shahid, and A. Rehman, “N -point DCT VLSI

architecture for emerging HEVC standard,” VLSI Design, 2012.

[10] S. Shen, W. Shen, Y. Fan, and X. Zeng, “A unified 4/8/16/32-point

integer IDCT architecture for multiple video coding standards,” in Proc.

IEEE International Conference on Multimedia and Expo, Jul. 2012, pp.

788–793.

[11] J.-S. Park, W.-J. Nam, S.-M. Han, and S. Lee, “2-D large inverse

transform (16x16, 32x32) for HEVC (high efficiency video coding),”

Journal of Semiconductor Technology and Science, vol. 12, no. 2, pp.

203–211, Jun. 2012.

[12] M. Budagavi and V. Sze, “Unified forward+inverse transform architecture for HEVC,” in Proc. International Conference on Image Processing,

Sep. 2012, pp. 209–212.

[13] JCTVC-G040, CE10: Summary report on core transform design. [Online]. Available: http://phenix.int-evry.fr/jct/doc_end_user/documents/7_Geneva/wg11/JCTVC-G040-v3.zip

[14] JCTVC-G495, CE10: Core transform design for HEVC: proposal for current HEVC transform. [Online]. Available: http://phenix.int-evry.fr/jct/doc_end_user/documents/7_Geneva/wg11/JCTVC-G495-v2.zip

[15] M. Potkonjak, M. B. Srivastava, and A. P. Chandrakasan, “Multiple

constant multiplications: Efficient and versatile framework and algorithms for exploring common subexpression elimination,” IEEE Trans.

Comput.-Aided Des. Integr. Circuits Syst., vol. 15, no. 2, pp. 151–165,

Feb. 1996.

[16] M. D. Macleod and A. G. Dempster, “Common subexpression elimination algorithm for low-cost multiplierless implementation of matrix

multipliers,” Electronics Letters, vol. 40, no. 11, pp. 651–652, May 2004.

[17] N. Boullis and A. Tisserand, “Some optimizations of hardware multiplication by constant matrices,” IEEE Trans. Comput., vol. 54, no. 10,

pp. 1271–1282, Oct. 2005.

[18] F. Bossen, “Common HM test conditions and software reference configurations,” in JCT-VC L1100, Geneva, Switzerland, Jan. 2013.

[19] G. Bjontegaard, “Calculation of average PSNR differences between RD

curves,” in ITU-T SC16/Q6, VCEG-M33, Austin, USA, Apr. 2001.

[20] TSMC 90nm General-Purpose CMOS Standard Cell Libraries tcbn90ghp. [Online]. Available: http://www.tsmc.com/

[21] G. J. Sullivan, J.-R. Ohm, W.-J. Han, and T. Wiegand, “Overview of the

high efficiency video coding (HEVC) standard,” IEEE Trans. Circuits

Syst. Video Technol., vol. 22, no. 12, pp. 1649–1668, Dec. 2012.

Pramod Kumar Meher (SM’03) received the B.

Sc. (Honours) and M.Sc. degree in physics, and the

Ph.D. degree in science from Sambalpur University,

India, in 1976, 1978, and 1996, respectively.

Currently, he is a Senior Scientist with the Institute for Infocomm Research, Singapore. Previously,

he was a Professor of Computer Applications with

Utkal University, India, from 1997 to 2002, and a

Reader in electronics with Berhampur University,

India, from 1993 to 1997. His research interest includes design of dedicated and reconfigurable architectures for computation-intensive algorithms pertaining to signal, image and

video processing, communication, bio-informatics and intelligent computing.

He has contributed nearly 200 technical papers to various reputed journals

and conference proceedings.

Dr. Meher has served as a speaker for the Distinguished Lecturer Program (DLP) of the IEEE Circuits and Systems Society during 2011 and 2012 and as Associate Editor of the IEEE TRANSACTIONS ON CIRCUITS AND SYSTEMS-II: EXPRESS BRIEFS during 2008 to 2011. Currently, he is serving as

Associate Editor for the IEEE TRANSACTIONS ON CIRCUITS AND

SYSTEMS-I: REGULAR PAPERS, the IEEE TRANSACTIONS ON VERY

LARGE SCALE INTEGRATION (VLSI) SYSTEMS, and Journal of Circuits,

Systems, and Signal Processing. Dr. Meher is a Fellow of the Institution of

Electronics and Telecommunication Engineers, India. He was the recipient of

the Samanta Chandrasekhar Award for excellence in research in engineering

and technology for 1999.

Sang Yoon Park (S’03-M’11) received the B.S.

degree in electrical engineering and the M.S. and

Ph.D. degrees in electrical engineering and computer

science from Seoul National University, Seoul, Korea, in 2000, 2002, and 2006, respectively. He joined

the School of Electrical and Electronic Engineering,

Nanyang Technological University, Singapore as a

Research Fellow in 2007. Since 2008, he has been

with Institute for Infocomm Research, Singapore,

where he is currently a Research Scientist. His

research interest includes design of dedicated and

reconfigurable architectures for low-power and high-performance digital signal

processing systems.


Basant Kumar Mohanty (M’06-SM’11) received the M.Sc. degree in physics from Sambalpur University, India, in 1989 and the Ph.D. degree in the field of VLSI for digital signal processing from Berhampur University, Orissa, in 2000. In 2001, he joined the EEE Department, BITS Pilani, Rajasthan, as a Lecturer. Then he joined the Department of ECE, Mody Institute of Education Research (Deemed University), Rajasthan, as an Assistant Professor. In 2003, he joined Jaypee University of Engineering and Technology, Guna, Madhya Pradesh, where he became Associate Professor in 2005 and full Professor in 2007. His research interests include the design and implementation of low-power and high-performance digital systems for resource-constrained digital signal processing applications. He has published nearly 50 technical papers. Dr. Mohanty is serving as Associate Editor for the Journal of Circuits, Systems, and Signal Processing. He is a lifetime member of the Institution of Electronics and Telecommunication Engineering, New Delhi, India, and he was the recipient of the Rashtriya Gaurav Award conferred by the India International Friendship Society, New Delhi, for the year 2012.

Khoon Seong Lim received B.Eng. (with honors)

and M.Eng. degrees in electrical and electronic engineering from Nanyang Technological University,

Singapore, in 1999 and 2001, respectively. He was

a R&D DSP engineer with BITwave Private Limited, Singapore, working in the area of adaptive

microphone array beamforming in 2002. He joined

the School of Electrical and Electronic Engineering,

Nanyang Technological University, as a Research

Associate in 2003. Since 2006, he has been with

the Institute for Infocomm Research, Singapore,

where he is currently a Senior Research Engineer. His research interests

include embedded systems, sensor array signal processing, and digital signal

processing in general.

Chuohao Yeo (S’05, M’09) received the S.B. degree

in electrical science and engineering and the M.Eng.

degree in electrical engineering and computer science from the Massachusetts Institute of Technology

(MIT), Cambridge, MA, in 2002, and the Ph.D.

degree in electrical engineering and computer sciences from the University of California, Berkeley in

2009.

From 2005 to 2009, he was a graduate student

researcher at the Berkeley Audio Visual Signal Processing and Communication Systems Laboratory at

UC Berkeley. Since 2009, he has been a Scientist with the Signal Processing

Department at the Institute for Infocomm Research, Singapore, where he

leads a team that is actively involved in HEVC standardization activities.

His current research interests include image and video processing, coding

and communications, distributed source coding, and computer vision.

Dr. Yeo was a recipient of the Singapore Government Public Service

Commission Overseas Merit Scholarship from 1998 to 2002, and a recipient of

Singapore’s Agency for Science, Technology and Research National Science

Scholarship from 2004 to 2009. He received a Best Student Paper Award at

SPIE VCIP 2007 and a Best Short Paper Award at ACM MM 2008.