Solving Constant Coefficient Systems

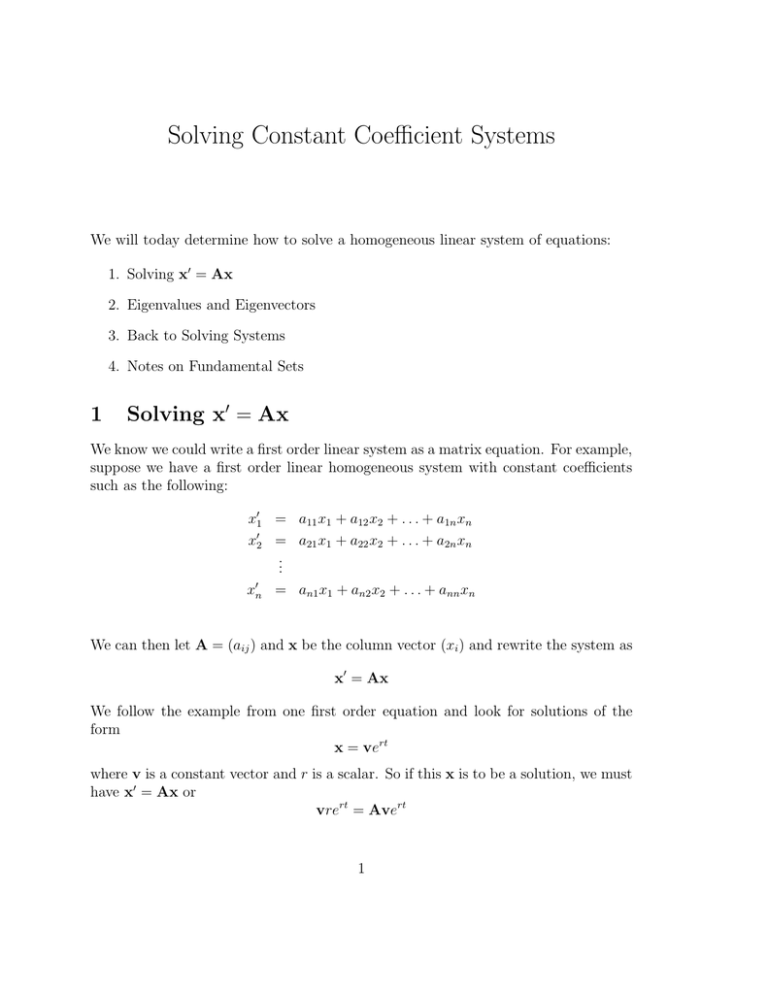

We will today determine how to solve a homogeneous linear system of equations:

1. Solving $\mathbf{x}' = A\mathbf{x}$
2. Eigenvalues and Eigenvectors
3. Back to Solving Systems
4. Notes on Fundamental Sets

1 Solving $\mathbf{x}' = A\mathbf{x}$

We know that we can write a first order linear system as a matrix equation. For example, suppose we have a first order linear homogeneous system with constant coefficients such as the following:

$$
\begin{aligned}
x_1' &= a_{11} x_1 + a_{12} x_2 + \cdots + a_{1n} x_n \\
x_2' &= a_{21} x_1 + a_{22} x_2 + \cdots + a_{2n} x_n \\
&\;\;\vdots \\
x_n' &= a_{n1} x_1 + a_{n2} x_2 + \cdots + a_{nn} x_n
\end{aligned}
$$

We can then let $A = (a_{ij})$ and $\mathbf{x}$ be the column vector $(x_i)$ and rewrite the system as

$$\mathbf{x}' = A\mathbf{x}.$$

We follow the example of a single first order equation and look for solutions of the form $\mathbf{x} = \mathbf{v} e^{rt}$, where $\mathbf{v}$ is a constant vector and $r$ is a scalar. If this $\mathbf{x}$ is to be a solution, we must have $\mathbf{x}' = A\mathbf{x}$, or

$$r \mathbf{v} e^{rt} = A \mathbf{v} e^{rt},$$

or equivalently (since $e^{rt} \neq 0$)

$$(A - rI)\mathbf{v} = \mathbf{0}.$$

This is exactly the problem of determining the eigenvalues $r$ and associated eigenvectors $\mathbf{v}$ of the matrix $A$.

To solve a system of $n$ first order linear equations with constant coefficients in the form $\mathbf{x}' = A\mathbf{x}$, we must be able to find $n$ linearly independent solutions. So if the eigenvalue/eigenvector problem $(A - rI)\mathbf{v} = \mathbf{0}$ gives us $n$ linearly independent eigenvectors, we will be able to solve the system.

2 Eigenvalues and Eigenvectors

The eigenvalues of a matrix $A$ are those values $\lambda$ for which $A\mathbf{x} = \lambda \mathbf{x}$, or equivalently $(A - \lambda I)\mathbf{x} = \mathbf{0}$, for some non-zero vector $\mathbf{x}$. Such a vector $\mathbf{x}$ is called an eigenvector associated with the eigenvalue $\lambda$.

In order for $(A - \lambda I)\mathbf{x} = \mathbf{0}$ to hold without $\mathbf{x} = \mathbf{0}$, the matrix $(A - \lambda I)$ must be singular. Thus, $\lambda$ must make the determinant zero:

$$|A - \lambda I| = 0.$$

When we evaluate the determinant on the left, we get a polynomial in the variable $\lambda$. This polynomial is called the characteristic polynomial.
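
As a small illustration of the characteristic polynomial, the sketch below covers only the 2 × 2 case, where for A with rows (a, b) and (c, d) the determinant |A − λI| expands to λ² − (a + d)λ + (ad − bc). The function names `char_poly_2x2` and `eigenvalues_2x2` and the sample matrix are assumptions made for illustration and do not come from these notes. Only the Python standard library is used.

```python
import cmath

def char_poly_2x2(A):
    """Coefficients (1, -trace, det) of |A - lambda*I| for a 2x2 matrix A."""
    (a, b), (c, d) = A
    return (1, -(a + d), a * d - b * c)

def eigenvalues_2x2(A):
    """Roots of the characteristic polynomial via the quadratic formula.

    cmath.sqrt is used so that a negative discriminant gives complex roots
    instead of raising an error; the roots are returned as complex numbers.
    """
    _, p, q = char_poly_2x2(A)
    disc = cmath.sqrt(p * p - 4 * q)
    return ((-p + disc) / 2, (-p - disc) / 2)

# Hypothetical sample matrix, chosen only for illustration.
A = [[2, 1], [1, 2]]
coeffs = char_poly_2x2(A)    # lambda^2 - 4*lambda + 3
roots = eigenvalues_2x2(A)   # the two roots of that quadratic
```

For larger matrices the same idea applies, but expanding the determinant by hand (or using a numerical library) is usually more practical than a closed-form root formula.
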
An $n \times n$ matrix has a characteristic polynomial of degree $n$, and therefore $n$ (possibly complex) roots, counted with multiplicity. Just as for other polynomials, some of these roots might be repeated.

Once we have found the eigenvalues, we plug each value of $\lambda$ into $(A - \lambda I)\mathbf{x} = \mathbf{0}$ and solve for $\mathbf{x}$ to find an associated eigenvector. (There will be more than one solution; any non-zero constant multiple of an eigenvector is another eigenvector.) In practice, the best way to do this is by Gaussian elimination on the system.

Example: Find the eigenvalues and a set of corresponding eigenvectors for

$$A = \begin{pmatrix} -1 & -2 & 0 \\ 8 & 7 & 0 \\ -7 & -4 & 1 \end{pmatrix}.$$

We begin by finding the determinant $|A - \lambda I|$:

$$|A - \lambda I| = \begin{vmatrix} -1-\lambda & -2 & 0 \\ 8 & 7-\lambda & 0 \\ -7 & -4 & 1-\lambda \end{vmatrix}$$

We expand the determinant along the third column to get

$$\begin{vmatrix} -1-\lambda & -2 & 0 \\ 8 & 7-\lambda & 0 \\ -7 & -4 & 1-\lambda \end{vmatrix} = (1-\lambda) \begin{vmatrix} -1-\lambda & -2 \\ 8 & 7-\lambda \end{vmatrix} = (1-\lambda)(\lambda^2 - 6\lambda + 9),$$

or $(1-\lambda)(\lambda-3)^2$. So the roots of the characteristic equation are $\lambda = 1$ and $\lambda = 3$, with $\lambda = 3$ being a root of multiplicity two. Thus, we have only two distinct eigenvalues.

To find the eigenvector associated with $\lambda = 1$, we solve $(A - \lambda I)\mathbf{v} = \mathbf{0}$. Here,

$$A - (1)I = \begin{pmatrix} -1-1 & -2 & 0 \\ 8 & 7-1 & 0 \\ -7 & -4 & 1-1 \end{pmatrix} = \begin{pmatrix} -2 & -2 & 0 \\ 8 & 6 & 0 \\ -7 & -4 & 0 \end{pmatrix}.$$

We do this by using row operations on the augmented matrix

$$\left(\begin{array}{rrr|r} -2 & -2 & 0 & 0 \\ 8 & 6 & 0 & 0 \\ -7 & -4 & 0 & 0 \end{array}\right).$$

We can divide the first row by $-2$ to get a 1 in the first column. If we then subtract 8 times row 1 from row 2, and add 7 times row 1 to row 3, we also eliminate all other entries in column 1:

$$\left(\begin{array}{rrr|r} 1 & 1 & 0 & 0 \\ 0 & -2 & 0 & 0 \\ 0 & 3 & 0 & 0 \end{array}\right).$$

We then multiply row 2 by $-1/2$ and use it to eliminate the remaining entries in column 2, leaving us with

$$\left(\begin{array}{rrr|r} 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 0 \end{array}\right).$$

Thus, the solution has $x_1 = x_2 = 0$, and $x_3$ is a free variable. So the column vector $\mathbf{x}_1 = (0, 0, 1)^T$ is an eigenvector, as is any non-zero constant multiple.

Note that because eigenvectors are not unique (any non-zero constant multiple is also an eigenvector), when we solve for our eigenvector, we will always end with at least one row eliminated if we reduce correctly.
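Each eliminated row will correspond to a free variable. (This is a good check that we have done the right things.)

For the eigenvalue $\lambda = 3$, we get the augmented matrix

$$\left(\begin{array}{rrr|r} -1-3 & -2 & 0 & 0 \\ 8 & 7-3 & 0 & 0 \\ -7 & -4 & 1-3 & 0 \end{array}\right) = \left(\begin{array}{rrr|r} -4 & -2 & 0 & 0 \\ 8 & 4 & 0 & 0 \\ -7 & -4 & -2 & 0 \end{array}\right),$$

which after row operations reduces to the standard form

$$\left(\begin{array}{rrr|r} 1 & 0 & -2 & 0 \\ 0 & 1 & 4 & 0 \\ 0 & 0 & 0 & 0 \end{array}\right).$$

Thus we see that $x_1 - 2x_3 = 0$ and $x_2 + 4x_3 = 0$, so if we let $x_3 = 1$, then $x_1 = 2$ and $x_2 = -4$. Thus we get an eigenvector $\mathbf{x}_2 = (2, -4, 1)^T$. There are no other eigenvectors for $\lambda = 3$ which are not just constant multiples of this one.

It is easy to check that our eigenvalues and eigenvectors are right:

$$\begin{pmatrix} -1 & -2 & 0 \\ 8 & 7 & 0 \\ -7 & -4 & 1 \end{pmatrix} \begin{pmatrix} 0 \\ 0 \\ 1 \end{pmatrix} = \begin{pmatrix} 0 \\ 0 \\ 1 \end{pmatrix}$$

So $A(0, 0, 1)^T = 1 \cdot (0, 0, 1)^T$, as desired. For $\lambda = 3$, we get

$$\begin{pmatrix} -1 & -2 & 0 \\ 8 & 7 & 0 \\ -7 & -4 & 1 \end{pmatrix} \begin{pmatrix} 2 \\ -4 \\ 1 \end{pmatrix} = \begin{pmatrix} 6 \\ -12 \\ 3 \end{pmatrix}$$

so $A(2, -4, 1)^T = 3 \cdot (2, -4, 1)^T$, as we claimed.

Example: Find the eigenvalues and eigenvectors for

$$A = \begin{pmatrix} 1 & 2 \\ 3 & 2 \end{pmatrix}$$

Eigenvalues:

Eigenvectors:

Solution: The eigenvalue $\lambda = -1$ has eigenvectors that are multiples of $(1, -1)^T$, and the eigenvalue $\lambda = 4$ has eigenvectors that are multiples of $(2, 3)^T$.

3 Back to Solving Systems

We started by trying to find solutions of the form $\mathbf{v} e^{rt}$ to a linear first order system $\mathbf{x}' = A\mathbf{x}$ with $n$ equations and constant coefficients. We determined that such solutions must satisfy $(A - rI)\mathbf{v} = \mathbf{0}$; i.e., $r$ must be an eigenvalue and $\mathbf{v}$ an associated eigenvector.

Example: Find solutions of the form $\mathbf{v} e^{rt}$ to the system

$$\mathbf{x}' = \begin{pmatrix} 3 & -2 \\ 2 & -2 \end{pmatrix} \mathbf{x}.$$

Do they form a fundamental set?

We find the eigenvalues of $A$:

$$\begin{vmatrix} 3-\lambda & -2 \\ 2 & -2-\lambda \end{vmatrix} = (3-\lambda)(-2-\lambda) + 4 = \lambda^2 - \lambda - 2$$

The roots are $\lambda = 2$ and $\lambda = -1$. If we plug $\lambda = 2$ into $(A - \lambda I)\mathbf{x} = \mathbf{0}$ we get

$$\begin{pmatrix} 3-2 & -2 \\ 2 & -2-2 \end{pmatrix} \begin{pmatrix} x_1 \\ x_2 \end{pmatrix} = \begin{pmatrix} 1 & -2 \\ 2 & -4 \end{pmatrix} \begin{pmatrix} x_1 \\ x_2 \end{pmatrix} = \begin{pmatrix} 0 \\ 0 \end{pmatrix}$$

When solved, this gives $x_1 = 2x_2$, so we have the eigenvector $(2, 1)^T$. Similarly, $\lambda = -1$ yields

$$\begin{pmatrix} 3+1 & -2 \\ 2 & -2+1 \end{pmatrix} \begin{pmatrix} x_1 \\ x_2 \end{pmatrix} = \begin{pmatrix} 4 & -2 \\ 2 & -1 \end{pmatrix} \begin{pmatrix} x_1 \\ x_2 \end{pmatrix} = \begin{pmatrix} 0 \\ 0 \end{pmatrix}$$

and this gives us the eigenvector $(1, 2)^T$. Thus we have the solutions

$$\mathbf{x}_1 = \begin{pmatrix} 2 \\ 1 \end{pmatrix} e^{2t} \quad \text{and} \quad \mathbf{x}_2 = \begin{pmatrix} 1 \\ 2 \end{pmatrix} e^{-t}.$$

Do our two solutions form a fundamental set?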
Check and find out! The determinant of the matrix whose columns are our two solutions is

$$\begin{vmatrix} 2e^{2t} & e^{-t} \\ e^{2t} & 2e^{-t} \end{vmatrix} = 4e^{t} - e^{t} = 3e^{t} \neq 0$$

So we do have a fundamental set of solutions, and all solutions are of the form

$$c_1 e^{2t} \begin{pmatrix} 2 \\ 1 \end{pmatrix} + c_2 e^{-t} \begin{pmatrix} 1 \\ 2 \end{pmatrix}.$$

4 Notes on Fundamental Sets

We have an approach (using eigenvalues and eigenvectors) for solving $\mathbf{x}' = A\mathbf{x}$, but under what conditions will we get a fundamental set of solutions?

It is useful to note that if we have distinct eigenvalues, the corresponding eigenvectors will be linearly independent. Thus, if we have a system of $n$ equations, and our matrix gives us $n$ distinct eigenvalues $\lambda_1, \ldots, \lambda_n$ with eigenvectors $\mathbf{v}_1, \ldots, \mathbf{v}_n$, then the general solution will be

$$c_1 \mathbf{v}_1 e^{\lambda_1 t} + c_2 \mathbf{v}_2 e^{\lambda_2 t} + \cdots + c_n \mathbf{v}_n e^{\lambda_n t}$$

We saw this case in the example above.

It is also possible, of course, for us to have a repeated eigenvalue but still have a full set of linearly independent eigenvectors.

Example: Find the general solution to the system

$$
\begin{aligned}
x_1' &= x_1 \\
x_2' &= x_2
\end{aligned}
$$

We have the matrix system

$$\mathbf{x}' = \begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix} \mathbf{x}$$

We find the eigenvalues of the identity matrix:

$$\begin{vmatrix} 1-\lambda & 0 \\ 0 & 1-\lambda \end{vmatrix} = (1-\lambda)^2 = 0$$

So the only eigenvalue is $\lambda = 1$. However, when we solve the system

$$\begin{pmatrix} 1-1 & 0 \\ 0 & 1-1 \end{pmatrix} \mathbf{x} = \begin{pmatrix} 0 & 0 \\ 0 & 0 \end{pmatrix} \mathbf{x} = \mathbf{0}$$

we see that we have two free variables. So we can take both $(0, 1)^T$ and $(1, 0)^T$ as eigenvectors. Since we have two linearly independent eigenvectors, we have the general solution

$$c_1 \begin{pmatrix} 0 \\ 1 \end{pmatrix} e^{t} + c_2 \begin{pmatrix} 1 \\ 0 \end{pmatrix} e^{t}$$

Note that in this example, we could have solved the original system quite easily by solving each equation independently of the other. The general solution to $x_1' = x_1$ is $x_1 = c_1 e^{t}$, and the general solution to $x_2' = x_2$ is $x_2 = c_2 e^{t}$. This gives exactly the same solution as we found above, up to the labeling of the arbitrary constants.

It may be that we have a repeated eigenvalue and do not get enough linearly independent eigenvectors, and thus cannot form a fundamental set of solutions in this way. It is also possible to have complex eigenvalues (and even complex eigenvectors). We will deal with these cases over the next few days.
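
The hand checks in these notes can also be repeated numerically. The following is a minimal sketch, using only the Python standard library, that re-checks the eigenpairs found for the 3 × 3 example and for the 2 × 2 system, the determinant test for the fundamental set, and the general solution of the 2 × 2 system. All function names (`mat_vec`, `is_eigenpair`, `wronskian`, `residual`) are assumptions introduced for illustration; the derivative in `x_prime` is differentiated by hand rather than computed symbolically.

```python
import math

def mat_vec(A, v):
    """Matrix-vector product for a matrix given as a list of rows."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def is_eigenpair(A, lam, v, tol=1e-12):
    """True if A v equals lam * v componentwise (within tol)."""
    return all(abs(av - lam * x) <= tol for av, x in zip(mat_vec(A, v), v))

# Matrices and eigenpairs taken from the worked examples in the notes.
A3 = [[-1, -2, 0], [8, 7, 0], [-7, -4, 1]]
A2 = [[3, -2], [2, -2]]
checks = [
    is_eigenpair(A3, 1, [0, 0, 1]),
    is_eigenpair(A3, 3, [2, -4, 1]),
    is_eigenpair(A2, 2, [2, 1]),
    is_eigenpair(A2, -1, [1, 2]),
]

def x(t, c1, c2):
    """General solution c1*(2,1)*e^(2t) + c2*(1,2)*e^(-t)."""
    return [2 * c1 * math.exp(2 * t) + c2 * math.exp(-t),
            c1 * math.exp(2 * t) + 2 * c2 * math.exp(-t)]

def x_prime(t, c1, c2):
    """Derivative of x(t), differentiated term by term by hand."""
    return [4 * c1 * math.exp(2 * t) - c2 * math.exp(-t),
            2 * c1 * math.exp(2 * t) - 2 * c2 * math.exp(-t)]

def wronskian(t):
    """Determinant of the matrix whose columns are the two solutions."""
    return (2 * math.exp(2 * t)) * (2 * math.exp(-t)) - math.exp(-t) * math.exp(2 * t)

def residual(t, c1, c2):
    """Largest component of |x'(t) - A2 x(t)|; close to 0 for a true solution."""
    lhs = x_prime(t, c1, c2)
    rhs = mat_vec(A2, x(t, c1, c2))
    return max(abs(l - r) for l, r in zip(lhs, rhs))
```

Calling `residual(t, c1, c2)` for a few arbitrary values of `t`, `c1`, and `c2` should give a value near zero (up to floating-point rounding), and `wronskian(t)` should agree with $3e^{t}$.
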