Limits at Infinity


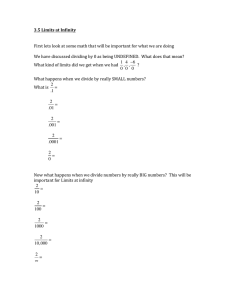

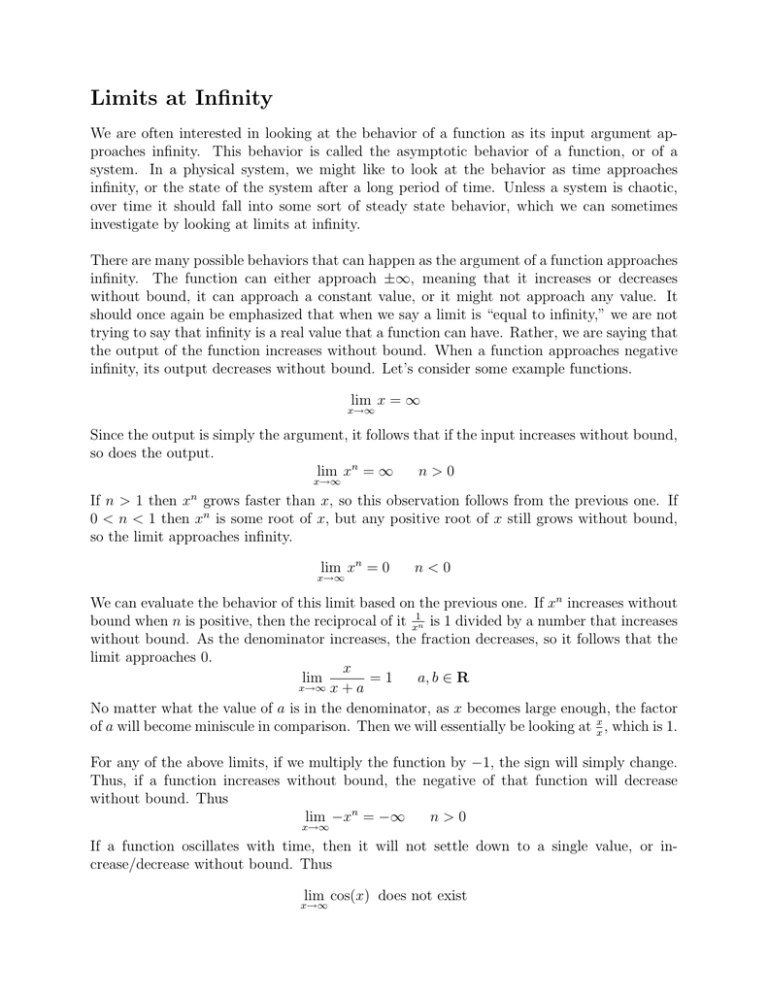

We are often interested in the behavior of a function as its input argument approaches infinity. This is called the asymptotic behavior of a function, or of a system. In a physical system, we might want to look at the behavior as time approaches infinity, that is, the state of the system after a long period of time. Unless a system is chaotic, over time it will often settle into some sort of steady-state behavior, which we can sometimes investigate by evaluating limits at infinity.

There are several possible behaviors as the argument of a function approaches infinity:

- The function can approach $\pm\infty$, meaning that it increases or decreases without bound.
- It can approach a constant value.
- It might not approach any value at all.

It should once again be emphasized that when we say a limit is “equal to infinity,” we are not claiming that infinity is a real value that a function can take. Rather, we are saying that the output of the function increases without bound. Likewise, when a function approaches negative infinity, its output decreases without bound.

Let’s consider some example functions.

$$\lim_{x \to \infty} x = \infty$$

Since the output is simply the argument, if the input increases without bound, so does the output.

$$\lim_{x \to \infty} x^n = \infty, \qquad n > 0$$

If $n = 1$, this is the previous limit. If $n > 1$, then $x^n \ge x$ for all $x \ge 1$. So $x^n$ grows at least as fast as $x$, and the result follows from the previous limit. If $0 < n < 1$, then $x^n$ is a fractional power of $x$ (for example, $x^{1/2} = \sqrt{x}$). It grows more slowly than $x$ but still grows without bound, so the limit is again $\infty$.

$$\lim_{x \to \infty} x^n = 0, \qquad n < 0$$

We can evaluate this limit using the previous one. When $n < 0$, we can write $x^n = 1/x^{-n}$ with $-n > 0$, so the denominator $x^{-n}$ increases without bound. As the denominator grows, the fraction shrinks toward zero, so the limit is 0.

$$\lim_{x \to \infty} \frac{x}{x + a} = 1, \qquad a \in \mathbb{R}$$

No matter what the value of $a$ in the denominator is, once $x$ becomes large enough, $a$ becomes negligible in comparison with $x$.
Then we are essentially looking at $x/x$, which is 1. Equivalently, dividing the numerator and denominator by $x$ gives $\frac{1}{1 + a/x}$, and $a/x \to 0$ as $x \to \infty$.

For any of the above limits, multiplying the function by $-1$ simply changes the sign of the limit. In particular, if a function increases without bound, its negative decreases without bound. Thus

$$\lim_{x \to \infty} -x^n = -\infty, \qquad n > 0$$

A function can also keep oscillating, as $\cos(x)$ does between $-1$ and $1$. Such a function never settles down to a single value, nor does it increase or decrease without bound. Thus

$$\lim_{x \to \infty} \cos(x) \text{ does not exist.}$$

If we modulate the cosine function with an amplitude that decreases to 0, we obtain a function that approaches 0. Since $|x^{-2}\cos(x)| \le x^{-2}$ and $x^{-2} \to 0$,

$$\lim_{x \to \infty} x^{-2}\cos(x) = 0$$

As we can see above, numerous functions approach $\pm\infty$ as their arguments become arbitrarily large. We are often interested in comparing the rates at which two functions increase or decrease.

Comparing the rate at which functions approach $\infty$

Suppose $\lim_{x\to\infty} f(x) = \infty$ and $\lim_{x\to\infty} g(x) = \infty$.

1. $f(x)$ approaches $\infty$ faster than $g(x)$ if $\lim_{x\to\infty} \frac{f(x)}{g(x)} = \infty$.
2. $f(x)$ approaches $\infty$ slower than $g(x)$ if $\lim_{x\to\infty} \frac{f(x)}{g(x)} = 0$.
3. $f(x)$ approaches $\infty$ at the same rate as $g(x)$ if $\lim_{x\to\infty} \frac{f(x)}{g(x)} = L$, where $L$ is any real number other than 0.

Three basic functions that approach $\infty$ as $x \to \infty$ are $\ln(x)$, $x^n$ for $n > 0$, and $e^{\beta x}$ for $\beta > 0$. They are listed in order from slowest to fastest (e.g., $\ln(x)$ approaches $\infty$ slower than $e^x$). It does not matter how small or large $n$ and $\beta$ are: these functions still approach $\infty$ in that order (e.g., $x^{10000}$ approaches $\infty$ slower than $e^{0.00001x}$). Within the same class, however, the larger $n$ or $\beta$ is, the faster the function grows (e.g., $x^4$ grows faster than $x^2$). Finally, multiplying by any positive constant does not change the rate at which these functions approach $\infty$. Thus $x$ still grows faster than $10000\ln(x)$, and $2x$ approaches $\infty$ at the same rate as $x$.

In the same vein, we can compare functions that approach 0 and the rates at which they do so.
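Before turning to functions that approach 0, the comparison rules for functions that approach $\infty$ can be explored numerically. The Python sketch below is illustrative, not a proof: a finite table of values can suggest a trend but cannot establish a limit.

The sketch works with the logarithm of the ratio, $\ln f(x) - \ln g(x)$, to avoid floating-point overflow. Read the trend of that value as follows:

- If it keeps growing, this suggests $f/g \to \infty$.
- If it heads toward $-\infty$, this suggests $f/g \to 0$.
- If it settles at a constant $\ln L$, this suggests the same rate.

The names `LOG_GROWTH` and `growth_log_ratio` and the sample points are our own choices for illustration.

```python
import math

# Natural logarithms of some functions that approach infinity (valid for x > 1).
# Comparing logs avoids overflow: e^(0.00001 x) overflows a double once
# 0.00001 x exceeds about 709, but its log is simply 0.00001 x.
LOG_GROWTH = {
    "x": lambda x: math.log(x),
    "2x": lambda x: math.log(2) + math.log(x),
    "10000 ln(x)": lambda x: math.log(10000) + math.log(math.log(x)),
    "x^10000": lambda x: 10000 * math.log(x),
    "e^(0.00001x)": lambda x: 0.00001 * x,
}


def growth_log_ratio(f_name, g_name, x):
    """Return ln(f(x) / g(x)) computed as ln f(x) - ln g(x)."""
    return LOG_GROWTH[f_name](x) - LOG_GROWTH[g_name](x)


if __name__ == "__main__":
    xs = [1e2, 1e6, 1e10, 1e14]
    pairs = [("x", "10000 ln(x)"), ("2x", "x"), ("e^(0.00001x)", "x^10000")]
    for f_name, g_name in pairs:
        values = [round(growth_log_ratio(f_name, g_name, x), 3) for x in xs]
        print(f"ln({f_name} / {g_name}):", values)
```

Running it shows three patterns:

- $\ln(2x/x)$ stays at $\ln 2 \approx 0.693$.
- $\ln\big(x / (10000\ln x)\big)$ keeps increasing.
- $\ln\big(e^{0.00001x}/x^{10000}\big)$ is still negative at $x = 10^{10}$ but positive by $x = 10^{14}$. An exponential with a tiny $\beta$ can take a very long time to overtake a large power, even though it eventually does.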
A function approaches 0 faster than another if it becomes small more quickly than the other function does. The easiest way to find such functions is to take the reciprocal of a function that approaches $\infty$.

Comparing the rate at which functions approach 0

Suppose $\lim_{x\to\infty} f(x) = 0$ and $\lim_{x\to\infty} g(x) = 0$.

1. $f(x)$ approaches 0 faster than $g(x)$ if $\lim_{x\to\infty} \frac{f(x)}{g(x)} = 0$.
2. $f(x)$ approaches 0 slower than $g(x)$ if $\lim_{x\to\infty} \frac{f(x)}{g(x)} = \infty$.
3. $f(x)$ approaches 0 at the same rate as $g(x)$ if $\lim_{x\to\infty} \frac{f(x)}{g(x)} = L$, where $L$ is any real number other than 0.

A few examples, in order from slowest to fastest approach to 0, are:

- $x^{-n}$ for $n > 0$
- $e^{-\beta x}$ for $\beta > 0$
- $e^{-\beta x^2}$ for $\beta > 0$

In general, making an exponent change faster produces a function that changes faster than other functions of the same class whose exponent changes more slowly. Thus $e^{-e^x}$ decreases even more quickly than $e^{-\beta x^2}$.

One should be careful when drawing such conclusions about functions in different classes. For example, $x^{-x}$ looks like a power of $x$, yet it approaches 0 more quickly than $e^{-x}$. This is because $x^{-x} = e^{-x\ln(x)}$, and $x\ln(x)$ grows faster than $x$.

Once again, multiplying by a nonzero constant (which may be positive or negative in this case) does not change the rate at which these functions approach 0.
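The same log-ratio idea can illustrate the ordering of functions that approach 0. This sketch fixes $n = 2$ and $\beta = 1$, which are our own choices for illustration. It works with logarithms because values such as $e^{-e^x}$ underflow to 0.0 in double precision for $x$ as small as 7. A log ratio that keeps decreasing toward $-\infty$ suggests that $f$ approaches 0 faster than $g$. The names `LOG_DECAY` and `decay_log_ratio` are hypothetical helpers, and a finite table of values only suggests a trend rather than proving a limit.

```python
import math

# Natural logarithms of some functions that approach 0 as x -> infinity,
# with n = 2 and beta = 1 chosen for illustration. Working with logs avoids
# underflow: e^(-e^x) is 0.0 in double precision for modest x, but its log,
# -e^x, is still representable.
LOG_DECAY = {
    "x^-2": lambda x: -2 * math.log(x),
    "e^-x": lambda x: -x,
    "e^-x^2": lambda x: -x * x,
    "e^-e^x": lambda x: -math.exp(x),
    "x^-x": lambda x: -x * math.log(x),
}


def decay_log_ratio(f_name, g_name, x):
    """Return ln(f(x) / g(x)); a trend toward -infinity means f -> 0 faster than g."""
    return LOG_DECAY[f_name](x) - LOG_DECAY[g_name](x)


if __name__ == "__main__":
    xs = [2.0, 5.0, 10.0, 50.0]
    pairs = [("e^-x", "x^-2"), ("e^-x^2", "e^-x"), ("e^-e^x", "e^-x^2"), ("x^-x", "e^-x")]
    for f_name, g_name in pairs:
        values = [round(decay_log_ratio(f_name, g_name, x), 2) for x in xs]
        print(f"ln({f_name} / {g_name}):", values)
```

Note that $\ln(x^{-x}/e^{-x}) = x(1 - \ln x)$ is positive at $x = 2$. So $x^{-x}$ is actually larger than $e^{-x}$ for $x < e$. Only the trend as $x$ grows matters for the comparison.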