
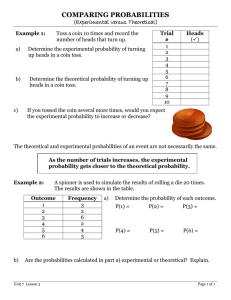

advertisement

Lecture 13

Wednesday, September 21

1. Expectation of a Discrete Random Variable

Setting. As usual we work in a probability space: a sample space $S$, a class of events, and a probability measure $P$. Let $X$ be a discrete random variable, i.e., a real-valued function defined on $S$ whose values can be arranged in a finite or infinite sequence, and we let $p(x)$ be the probability mass function of the random variable $X$. Recall that $p(x)$ is defined by

$$p(x) = P(\{X = x\}), \qquad x \in \mathbb{R}.$$

Definition. The expectation of $X$ is defined by

$$E(X) = \sum_{x} x\,p(x), \tag{1}$$

provided the sum converges absolutely.

Remark. Intuitively, the expectation of a random variable is a weighted average of its values, with each value being weighted by the probability with which it is taken.

Remark. The meaning of the expression on the right-hand side of (1) is that the sum is taken over the terms that are nonzero. In particular, for a term to be added in, the value $x$ must be taken by $X$ with positive probability.

Remark. Since $X$ is a discrete random variable, the sum is either finite or it is an ordinary infinite series. When the sum is an infinite series, we require that the series be absolutely convergent.
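As a minimal sketch of definition (1), the following Python helper computes the weighted sum for a probability mass function with finitely many values. The name `expectation` and the dictionary representation of the pmf are illustrative assumptions, not part of the lecture; exact fractions are used so that no rounding occurs.

```python
from fractions import Fraction

def expectation(pmf):
    """Return the sum of x * p(x) over the values x with p(x) > 0.

    `pmf` is a hypothetical representation: a dict mapping each value x
    to its probability p(x), given exactly (e.g. as Fraction objects).
    Only finite supports are handled here.
    """
    if sum(pmf.values()) != 1:
        raise ValueError("probabilities must sum to 1")
    return sum(x * p for x, p in pmf.items() if p > 0)

# A fair six-sided die: each face has probability 1/6.
die = {face: Fraction(1, 6) for face in range(1, 7)}
print(expectation(die))  # 7/2
```

The `if p > 0` filter mirrors the remark that only values taken with positive probability contribute to the sum.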

Example 1. (From last time) Let $X$ be the number of heads occurring when a coin is tossed twice. Let $p$ denote the probability the coin comes up heads, and let $q = 1 - p$. Let $p(x)$ be the probability mass function of $X$. Now, $X$ takes the values 0, 1, and 2. We have that $p(0) = q^2$, $p(1) = 2pq$, and $p(2) = p^2$. (To square this with the formalities, note that $p(x) = 0$ unless $x = 0$, $x = 1$, or $x = 2$.) Thus

$$\begin{aligned}
E(X) &= 0 \cdot q^2 + 1 \cdot (2pq) + 2 \cdot p^2 \\
&= 2p(p + q) \\
&= 2p.
\end{aligned}$$
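The two-toss result can be spot-checked for a particular coin. The sketch below builds the three-point pmf from Example 1 and sums $x\,p(x)$ directly; the function name and the choice $p = 1/3$ are arbitrary illustrations.

```python
from fractions import Fraction

def two_toss_expectation(p):
    """E(X) for the number of heads in two tosses, straight from the pmf."""
    q = 1 - p
    pmf = {0: q**2, 1: 2 * p * q, 2: p**2}
    return sum(x * prob for x, prob in pmf.items())

p = Fraction(1, 3)  # an arbitrary illustrative value
print(two_toss_expectation(p), 2 * p)  # 2/3 2/3
```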

Example 2. We consider the problem above, where now the coin is tossed $n$ times. We want to calculate $E(X)$.


Solution. We shall need the identity

$$k\binom{n}{k} = n\binom{n-1}{k-1}, \qquad k = 1, 2, \ldots, n.$$

We have, for $k = 1, 2, \ldots, n$,

$$\begin{aligned}
k\binom{n}{k} &= k\,\frac{n!}{k!\,(n-k)!} \\
&= n\,\frac{(n-1)!}{(k-1)!\,(n-1-(k-1))!} \\
&= n\binom{n-1}{k-1}.
\end{aligned}$$
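The identity is easy to test numerically for small $n$. This is only a sanity check, not a proof; the helper name `identity_holds` and the range of $n$ tested are illustrative choices.

```python
from math import comb

def identity_holds(n):
    """Check k*C(n, k) == n*C(n-1, k-1) for every k = 1, ..., n."""
    return all(k * comb(n, k) == n * comb(n - 1, k - 1) for k in range(1, n + 1))

print(all(identity_holds(n) for n in range(1, 30)))  # True
```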

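Now, the calculation of $E(X)$:

$$\begin{aligned}
E(X) &= \sum_{k=0}^{n} k\binom{n}{k} p^{k} q^{n-k} \\
&= \sum_{k=1}^{n} k\binom{n}{k} p^{k} q^{n-k} \\
&= np\sum_{k=1}^{n} \binom{n-1}{k-1} p^{k-1} q^{n-1-(k-1)} \\
&= np\sum_{j=0}^{n-1} \binom{n-1}{j} p^{j} q^{n-1-j} \\
&= np\,(p + q)^{n-1} \\
&= np.
\end{aligned}$$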

Remark. Intuitively, if $p$ is the probability of getting heads on one toss, then we should expect to get about $np$ heads in $n$ tosses.
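The value $np$ can also be confirmed by evaluating the defining sum directly for a specific case. The sketch below assumes the binomial probabilities $\binom{n}{k}p^k q^{n-k}$ used in the solution; the function name and the values $n = 10$, $p = 3/10$ are illustrative.

```python
from fractions import Fraction
from math import comb

def binomial_expectation(n, p):
    """Sum k * C(n, k) * p^k * q^(n-k) over k = 0, ..., n."""
    q = 1 - p
    return sum(k * comb(n, k) * p**k * q**(n - k) for k in range(n + 1))

n, p = 10, Fraction(3, 10)  # illustrative values
print(binomial_expectation(n, p), n * p)  # 3 3
```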

Example 3. We toss a coin until a head first appears. Let $X$ be the number of the trial on which this happens. Find $E(X)$.

(We continue to assume that $p$ denotes the probability that the coin turns up heads, and that $q = 1 - p$.)


Solution. Let $p(x)$ be the probability mass function of $X$. We have already calculated that for any positive integer $n$ we have

$$p(n) = P(\{X = n\}) = q^{n-1}p,$$

and so

$$E(X) = \sum_{n=1}^{\infty} n q^{n-1} p = p\sum_{n=1}^{\infty} n q^{n-1}.$$

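To evaluate the infinite series, we proceed as follows. We start with the geometric series

$$\frac{1}{1-x} = \sum_{n=0}^{\infty} x^{n}, \qquad -1 < x < 1.$$

We also know from calculus that we can differentiate a power series term-by-term for $x$ in the interval of convergence. Differentiating both sides, we find

$$\frac{1}{(1-x)^2} = \sum_{n=0}^{\infty} n x^{n-1} = \sum_{n=1}^{\infty} n x^{n-1}.$$

Setting $x = q$, which is permissible since $0 \le q < 1$ when $p > 0$, we obtain

$$E(X) = p \cdot \frac{1}{(1-q)^2} = p \cdot \frac{1}{p^2} = \frac{1}{p}.$$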

Again, the result makes good intuitive sense: If the average number of heads per toss is $p$, then we might well expect, on average, to wait until the $1/p$-th trial for the first head to appear.
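To see the series converge to $1/p$, one can compute partial sums of $\sum n q^{n-1} p$. The sketch below uses floating-point arithmetic, so the printed values are approximations; the function name and the value $p = 0.25$ are illustrative.

```python
def geometric_partial_sum(p, terms):
    """Partial sum of n * q^(n-1) * p for n = 1, ..., terms."""
    q = 1 - p
    return sum(n * q ** (n - 1) * p for n in range(1, terms + 1))

p = 0.25  # illustrative value; the limit should be 1/p = 4
for terms in (10, 50, 200):
    print(terms, geometric_partial_sum(p, terms))
```

The partial sums increase toward 4, and the gap shrinks geometrically because the tail is dominated by $q^{n}$.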


2. Expectation of a Function of a Discrete Random Variable

We could have introduced expectation in a different way: For each point $s$ of the sample space, we multiply the value $X(s)$ of the random variable $X$ at $s$ by the probability $P(\{s\})$ of $s$. Then we sum over the sample space. Thus our alternate definition would be

$$E(X) = \sum_{s \in S} X(s)\,P(\{s\}).$$

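Example 4. Let $X$ be the number of heads occurring in 3 tosses of a coin, where the probability of heads at each toss is $p$. We write out the sample space, $P$, and $X$ in tabular form.

| $s$ | $P(\{s\})$ | $X(s)$ | $X(s) \cdot P(\{s\})$ |
| --- | --- | --- | --- |
| $(t, t, t)$ | $q^3$ | 0 | $0 \cdot q^3$ |
| $(t, t, h)$ | $pq^2$ | 1 | $1 \cdot pq^2$ |
| $(t, h, t)$ | $pq^2$ | 1 | $1 \cdot pq^2$ |
| $(h, t, t)$ | $pq^2$ | 1 | $1 \cdot pq^2$ |
| $(h, h, t)$ | $p^2q$ | 2 | $2 \cdot p^2q$ |
| $(h, t, h)$ | $p^2q$ | 2 | $2 \cdot p^2q$ |
| $(t, h, h)$ | $p^2q$ | 2 | $2 \cdot p^2q$ |
| $(h, h, h)$ | $p^3$ | 3 | $3 \cdot p^3$ |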

When we sum over the last column, we obtain the same answer as when we apply the definition. We illustrate the definition in a similar format.

| $s$ | $x$ | $P(\{X = x\})$ | $x \cdot P(\{X = x\})$ |
| --- | --- | --- | --- |
| $(t, t, t)$ | 0 | $q^3$ | $0 \cdot q^3$ |
| $(t, t, h)$, $(t, h, t)$, $(h, t, t)$ | 1 | $3pq^2$ | $1 \cdot 3pq^2$ |
| $(h, h, t)$, $(h, t, h)$, $(t, h, h)$ | 2 | $3p^2q$ | $2 \cdot 3p^2q$ |
| $(h, h, h)$ | 3 | $p^3$ | $3 \cdot p^3$ |
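The agreement between the two tabulations can be reproduced by enumerating the eight outcomes. The sketch below assumes independent tosses, as in the tables, and uses an illustrative value $p = 2/5$; the variable names are not from the lecture.

```python
from fractions import Fraction
from itertools import product

p = Fraction(2, 5)  # illustrative probability of heads
q = 1 - p

# Sum over sample points s: X(s) * P({s}), building the pmf of X on the way.
by_outcome = Fraction(0)
pmf = {}
for s in product("ht", repeat=3):
    heads = s.count("h")               # X(s)
    prob = p**heads * q**(3 - heads)   # P({s}) for independent tosses
    by_outcome += heads * prob
    pmf[heads] = pmf.get(heads, 0) + prob

# Sum over values x: x * P({X = x}).
by_value = sum(x * px for x, px in pmf.items())

print(by_outcome, by_value, 3 * p)  # 6/5 6/5 6/5
```

Pooling the outcomes with the same number of heads is exactly the step that turns the first table into the second.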

Next suppose that we wanted to calculate the expectation of some function of a random variable, say of $X^2$. Using our alternate, but equivalent definition, we have

$$E(X^2) = \sum_{s \in S} (X(s))^2\,P(\{s\}).$$


If we now collect terms using the distinct values of $X$ we have

$$E(X^2) = \sum_{x} x^2\,P(\{X = x\}) = \sum_{x} x^2\,p(x),$$

where $p(x)$ is the probability mass function of $X$.

The point here is that we do not have to find the probability mass function of $X^2$.

The argument that we sketched here applies to any function of $X$, not just $X^2$.
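A small sketch can illustrate why the pmf of the transformed variable is not needed. It computes the expectation of $g(X)$ in two ways: by weighting $g(x)$ with the pmf of $X$, and by first building the pmf of $g(X)$. The helper names and the example distribution (uniform on $\{-1, 0, 1, 2\}$) are hypothetical, chosen so that squaring merges two values.

```python
from fractions import Fraction

def expectation_of_function(pmf, g):
    """Sum g(x) * p(x) over the values x of X (pmf maps x -> p(x))."""
    return sum(g(x) * px for x, px in pmf.items())

def pmf_of_function(pmf, g):
    """Build the pmf of Y = g(X) by pooling the values x with the same g(x)."""
    out = {}
    for x, px in pmf.items():
        out[g(x)] = out.get(g(x), 0) + px
    return out

# Hypothetical X, uniform on {-1, 0, 1, 2}; squaring merges -1 and 1.
pmf_x = {x: Fraction(1, 4) for x in (-1, 0, 1, 2)}
square = lambda x: x * x

direct = expectation_of_function(pmf_x, square)
via_pmf_of_y = sum(y * py for y, py in pmf_of_function(pmf_x, square).items())
print(direct, via_pmf_of_y)  # 3/2 3/2
```

Both routes give the same number, but the first skips the pooling step entirely.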
