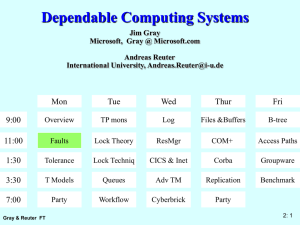

Heisenbugs:

A Probabilistic Approach to Availability

Jim Gray Microsoft Research

http://research.microsoft.com/~gray/Talks/

Half the slides are not shown (they are hidden, so view with PPT to see them all).

Outline

• Terminology and empirical measures

• General methods to mask faults.

• Software-fault tolerance

• Summary

Heisenbugs:

A Probabilistic Approach to Availability

There is considerable evidence that (1) production systems have about one bug per thousand lines of code, (2) these bugs manifest themselves stochastically: failures are due to a confluence of rare events, and (3) system mean-time-to-failure has a lower bound of a decade or so. To make highly available systems, architects must tolerate these failures by providing instant repair (unavailability is approximated by repair_time/time_to_fail, so cutting the repair time in half makes things twice as good). Ultimately, one builds a set of standby servers that have both design diversity and geographic diversity. This minimizes common-mode failures.

Dependability: The 3 ITIES

• Reliability / Integrity: does the right thing. (Also large MTTF)

• Availability: does it now. (Also small MTTR / (MTTF + MTTR))

[Figure: the ITIES of dependability, labeled Integrity, Security, Reliability, Availability]

• System Availability: if 90% of terminals up & 99% of DB up? (=> 89% of transactions are serviced on time).

• Holistic vs. Reductionist view
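The 89% figure is the product of the two availabilities. A minimal sketch of that arithmetic, assuming the terminals and the database fail independently and a transaction needs both (the function name is illustrative, not from the talk):

```python
from math import prod


def series_availability(availabilities):
    """Availability of a chain in which every component must be up.

    Assumes component failures are independent.
    """
    return prod(availabilities)


# 90% of terminals up and 99% of the DB up.
print(f"{series_availability([0.90, 0.99]):.1%}")  # 89.1%
```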

High Availability System Classes

Goal: Build Class 6 Systems

| Availability Class | System Type | Availability | Unavailable (min/year) |
|---|---|---|---|
| 1 | Unmanaged | 90.% | 50,000 |
| 2 | Managed | 99.% | 5,000 |
| 3 | Well Managed | 99.9% | 500 |
| 4 | Fault Tolerant | 99.99% | 50 |
| 5 | High-Availability | 99.999% | 5 |
| 6 | Very-High-Availability | 99.9999% | .5 |
| 7 | Ultra-Availability | 99.99999% | .05 |

UnAvailability = MTTR/MTBF

can cut it in ½ by halving MTTR or doubling MTBF
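The class numbers count the nines in the availability figure, and the minutes-per-year column is the unavailability times the length of a year, rounded to a convenient power of ten. The sketch below works through that arithmetic; the function names are illustrative, and the MTTR/MTBF approximation assumes MTTR is much smaller than MTBF.

```python
import math

MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes


def unavailability(mttr_hours, mtbf_hours):
    """Approximate unavailability as MTTR / MTBF (valid when MTTR << MTBF)."""
    return mttr_hours / mtbf_hours


def downtime_minutes_per_year(unavail):
    """Expected minutes of downtime per year for a given unavailability."""
    return unavail * MINUTES_PER_YEAR


def availability_class(unavail):
    """Number of leading nines, i.e. floor(log10(1 / unavailability))."""
    # The tiny epsilon guards against log10 landing just below an integer.
    return math.floor(-math.log10(unavail) + 1e-9)


base = unavailability(1.0, 1000.0)   # 1 hr repair, 1000 hr between failures
print(availability_class(base))      # 3
# Halving MTTR and doubling MTBF both halve the unavailability.
print(math.isclose(unavailability(0.5, 1000.0), base / 2))  # True
print(math.isclose(unavailability(1.0, 2000.0), base / 2))  # True
```

With exact arithmetic a class 5 system is down about 5.3 minutes per year, which the table rounds to 5.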

Demo: looking at some nodes

• Look at http://httpmonitor/

• Internet Node availability:

92% mean,

97% median

Darrell Long (UCSC)

ftp://ftp.cse.ucsc.edu/pub/tr/

– ucsc-crl-90-46.ps.Z "A Study of the Reliability of Internet Sites"

– ucsc-crl-91-06.ps.Z "Estimating the Reliability of Hosts Using the

Internet"

– ucsc-crl-93-40.ps.Z "A Study of the Reliability of Hosts on the Internet"

– ucsc-crl-95-16.ps.Z "A Longitudinal Survey of Internet Host Reliability"

Sources of Failures

| Source | MTTF | MTTR |
|---|---|---|
| Power Failure | 2000 hr | 1 hr |
| Phone Lines: Soft | >.1 hr | .1 hr |
| Phone Lines: Hard | 4000 hr | 10 hr |
| Hardware Modules | 100,000 hr | 10 hr (many are transient) |

Software:

1 Bug/1000 Lines Of Code (after vendor-user testing)

=> Thousands of bugs in System!

Most software failures are transient: dump & restart system.

Useful fact: 8,760 hrs/year ~ 10k hr/year

Case Studies - Tandem Trends

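Reported MTTF by Component

[Chart: Mean Time to System Failure (years) by Cause, 1985 to 1989; series: maintenance, hardware, environment, operations, software, total; y-axis 0 to 450 years]

| Component | 1985 | 1987 | 1990 |
|---|---|---|---|
| SOFTWARE | 2 Years | 53 Years | 33 Years |
| HARDWARE | 29 Years | 91 Years | 310 Years |
| MAINTENANCE | 45 Years | 162 Years | 409 Years |
| OPERATIONS | 99 Years | 171 Years | 136 Years |
| ENVIRONMENT | 142 Years | 214 Years | 346 Years |
| SYSTEM | 8 Years | 20 Years | 21 Years |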

Problem: Systematic Under-reporting

Many Software Faults are Soft

After Design Review, Code Inspection, Alpha Test, Beta Test, and 10k Hrs Of Gamma Test (Production),

Most Software Faults Are Transient:

• MVS Functional Recovery Routines: 5:1

• Tandem Spooler: 100:1

• Adams: >100:1

Terminology:

Heisenbug: Works On Retry

Bohrbug: Faults Again On Retry

Adams: "Optimizing Preventative Service of Software Products", IBM J R&D,28.1,1984

Gray: "Why Do Computers Stop", Tandem TR85.7, 1985

Mourad: "The Reliability of the IBM/XA Operating System", 15 ISFTCS, 1985.

Summary of FT Studies

• Current Situation: ~4-year MTTF =>

Fault Tolerance Works.

• Hardware is GREAT (maintenance and MTTF).

• Software masks most hardware faults.

• Many hidden software outages in operations:

– New Software.

– Utilities.

• Must make all software ONLINE.

• Software seems to define a 30-year MTTF ceiling.

• Reasonable Goal: 100-year MTTF.

class 4 today => class 6 tomorrow.

Fault Tolerance vs Disaster Tolerance

• Fault-Tolerance: mask local faults

– RAID disks

– Uninterruptible Power Supplies

– Cluster Failover

• Disaster Tolerance: masks site failures

– Protects against fire, flood, sabotage,..

– Redundant system and service at remote site.

– Use design diversity

Fault Tolerance Techniques

• FAIL FAST MODULES: work or stop

• SPARE MODULES : instant repair time.

• INDEPENDENT MODULE FAILS by design

MTTFpair ~ MTTF² / MTTR (so want tiny MTTR)

• MESSAGE BASED OS: Fault Isolation

software has no shared memory.

• SESSION-ORIENTED COMM: Reliable messages

detect lost/duplicate messages

coordinate messages with commit

• PROCESS PAIRS :Mask Hardware & Software Faults

• TRANSACTIONS: give A.C.I.D. (simple fault model)
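To see why the pair formula rewards a tiny MTTR, the sketch below plugs numbers into the slide's order-of-magnitude approximation MTTFpair ~ MTTF² / MTTR. It assumes the two modules fail independently and that a failed module is repaired while its partner carries the load; the function name and the sample figures are illustrative.

```python
HOURS_PER_YEAR = 10_000  # the "useful fact" rounding: 8,760 hr/year ~ 10k hr/year


def mttf_pair(mttf_hours, mttr_hours):
    """Order-of-magnitude MTTF of a repairable pair of independent modules."""
    return mttf_hours ** 2 / mttr_hours


# One module with a ~1 year MTTF, repaired in 10 hours versus 1 hour.
for mttr in (10, 1):
    years = mttf_pair(10_000, mttr) / HOURS_PER_YEAR
    print(f"MTTR {mttr:>2} hr -> pair MTTF ~ {years:,.0f} years")
```

Cutting the repair time by 10x raises the pair's MTTF by 10x, which is why spare modules with near-instant repair matter so much.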

Example: the FT Bank

Fault Tolerant Computer

Backup System

System MTTF >10 YEAR (except for power & terminals)

Modularity & Repair are KEY:

von Neumann needed 20,000x redundancy in wires and switches

We use 2x redundancy.

Redundant hardware can support peak loads (so not redundant)

Fail-Fast is Good, Repair is Needed

Lifecycle of a module

fail-fast gives

short fault latency

High Availability is low UN-Availability

Unavailability ≈ MTTR / MTTF

Improving either MTTR or MTTF gives benefit

Simple redundancy does not help much.

Key Idea

{Architecture, Software, Distribution} Masks {Hardware Faults, Environmental Faults, Maintenance}

• Software automates / eliminates operators

So,

• In the limit there are only software & design faults.

Software-fault tolerance is the key to dependability.

INVENT IT!

Software Techniques:

Learning from Hardware

Recall that most outages are not hardware.

Most outages in Fault Tolerant Systems are SOFTWARE

Fault Avoidance Techniques: Good & Correct design.

After that: Software Fault Tolerance Techniques:

Modularity (isolation, fault containment)

Design diversity

N-Version Programming: N-different implementations

Defensive Programming: Check parameters and data

Auditors: Check data structures in background

Transactions: to clean up state after a failure

Paradox: Need Fail-Fast Software
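Defensive programming is what makes software fail-fast: the module checks its parameters and its own results, and stops instead of continuing with bad state. A minimal sketch, assuming a hypothetical `debit` operation on an account balance (neither the function nor the exception type comes from the talk):

```python
class FailFastError(Exception):
    """Raised when a module detects bad input or bad state and stops."""


def debit(balance_cents, amount_cents):
    """Return the new balance, or fail fast instead of producing bad data."""
    # Check parameters before doing any work.
    if not isinstance(balance_cents, int) or not isinstance(amount_cents, int):
        raise FailFastError("amounts must be integers (cents)")
    if amount_cents <= 0:
        raise FailFastError("debit amount must be positive")
    if amount_cents > balance_cents:
        raise FailFastError("insufficient funds")

    new_balance = balance_cents - amount_cents

    # Check the result as well: an invariant violation means stop, not limp on.
    if new_balance < 0 or new_balance + amount_cents != balance_cents:
        raise FailFastError("balance invariant violated")
    return new_balance


print(debit(100, 30))  # 70
```

The caller, or a process pair around the module, decides what to do after the stop; the module's only job is to work or stop.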

Fail-Fast and High-Availability Execution

Software N-Plexing: Design Diversity

N-Version Programming

Write the same program N-Times (N > 3)

Compare outputs of all programs and take majority vote
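The compare-and-vote step can be sketched as follows. This is an illustration under simplifying assumptions: the versions are plain Python callables, their outputs are hashable and directly comparable, and a version that raises is not handled. The names `majority_vote` and `n_version` are hypothetical.

```python
from collections import Counter


def majority_vote(outputs):
    """Return the value produced by a strict majority of versions."""
    if not outputs:
        raise ValueError("no outputs to vote on")
    value, count = Counter(outputs).most_common(1)[0]
    if count * 2 <= len(outputs):
        raise RuntimeError("no majority among versions")
    return value


def n_version(versions, *args):
    """Run every independently written version and vote on the results."""
    return majority_vote([version(*args) for version in versions])


# Three "independent" implementations of squaring; the third has a bug.
versions = [lambda x: x * x, lambda x: x ** 2, lambda x: x + x]
print(n_version(versions, 3))  # 9
```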

Process Pairs: Instant restart (repair)

Use Defensive programming to make a process fail-fast

Have restarted process ready in separate environment

Second process “takes over” if primary faults

Transaction mechanism can clean up distributed state if takeover in middle of computation.

[Figure: LOGICAL PROCESS = PROCESS PAIR. A SESSION connects to the PRIMARY PROCESS, which sends STATE INFORMATION to the BACKUP PROCESS]

What Is MTTF of N-Version Program?

First fails after MTTF/N

Second fails after MTTF/(N-1),...

so MTTF(1/N + 1/(N-1) + ... + 1/2)

harmonic series goes to infinity, but VERY slowly

for example 100-version programming gives

~4 MTTF of 1-version programming

Reduces variance
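The "~4 MTTF" figure can be checked by summing the series directly. The sketch below follows the slide's model (the first of N versions fails after MTTF/N, the next after MTTF/(N-1), and so on down to MTTF/2, with no repair); the function name is illustrative.

```python
def n_version_mttf_factor(n):
    """Sum 1/N + 1/(N-1) + ... + 1/2, in units of one version's MTTF."""
    return sum(1.0 / k for k in range(2, n + 1))


print(f"{n_version_mttf_factor(100):.2f}")  # 4.19
```

Going from 100 to 1,000 versions adds only about 2.3 more MTTFs, which is the "VERY slowly" in the slide.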

N-Version Programming Needs REPAIR

If a program fails, must reset its state from other

programs.

=> programs have common data/state representation.

How does this work for

Database Systems?

Operating Systems?

Network Systems?

Answer: I don’t know.

Why Process Pairs Mask Faults:

Many Software Faults are Soft

After Design Review, Code Inspection, Alpha Test, Beta Test, and 10k Hrs Of Gamma Test (Production),

Most Software Faults Are Transient:

• MVS Functional Recovery Routines: 5:1

• Tandem Spooler: 100:1

• Adams: >100:1

Terminology:

Heisenbug: Works On Retry

Bohrbug: Faults Again On Retry

Adams: "Optimizing Preventative Service of Software Products", IBM J R&D,28.1,1984

Gray: "Why Do Computers Stop", Tandem TR85.7, 1985

Mourad: "The Reliability of the IBM/XA Operating System", 15 ISFTCS, 1985.

Process Pair Repair Strategy

If software fault (bug) is a Bohrbug, then there is no repair:

“wait for the next release” or

“get an emergency bug fix” or

“get a new vendor”

If software fault is a Heisenbug, then repair is

reboot and retry or

switch to backup process (instant restart)

[Figure: LOGICAL PROCESS = PROCESS PAIR. A SESSION connects to the PRIMARY PROCESS, which sends STATE INFORMATION to the BACKUP PROCESS]

PROCESS PAIRS Tolerate Hardware Faults and Heisenbugs

Repair time is seconds, could be milliseconds if time is critical

Flavors Of Process Pair:

Lockstep

Automatic

State Checkpointing

Delta Checkpointing

Persistent
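The state-checkpointing flavor can be sketched in a few lines. This is a single-program simulation, not real process pairs: the "processes" are Python objects, the checkpoint is a copied integer, and the fault is injected by a flag. All class and parameter names are hypothetical.

```python
class PrimaryFailed(Exception):
    """The primary detected a fault and stopped (fail-fast)."""


class CounterProcess:
    """A trivial server whose whole state is a running total."""

    def __init__(self, state=0):
        self.state = state

    def handle(self, request, fail=False):
        if fail:
            raise PrimaryFailed("transient fault")
        self.state += request
        return self.state


class ProcessPair:
    """Logical process = primary plus a backup holding the last checkpoint."""

    def __init__(self):
        self.primary = CounterProcess()
        self.checkpoint = 0   # state information held by the backup
        self.takeovers = 0

    def handle(self, request, inject_fault=False):
        try:
            reply = self.primary.handle(request, fail=inject_fault)
        except PrimaryFailed:
            # Takeover: the backup resumes from the checkpoint and retries.
            # A Heisenbug works on retry; a Bohrbug would fault again here.
            self.primary = CounterProcess(self.checkpoint)
            self.takeovers += 1
            reply = self.primary.handle(request)
        self.checkpoint = self.primary.state   # checkpoint after each request
        return reply


pair = ProcessPair()
print(pair.handle(5))                     # 5
print(pair.handle(3, inject_fault=True))  # 8, served after takeover
print(pair.takeovers)                     # 1
```

The client of the pair sees one logical process and one reply per request; the takeover is invisible apart from the delay.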

How Takeover Masks Failures

Server Resets At Takeover, But What About

Application State?

Database State?

Network State?

[Figure: LOGICAL PROCESS = PROCESS PAIR. A SESSION connects to the PRIMARY PROCESS, which sends STATE INFORMATION to the BACKUP PROCESS]

Answer: Use Transactions To Reset State!

Abort Transaction If Process Fails.

Keeps Network "Up"

Keeps System "Up"

Reprocesses Some Transactions On Failure
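Abort-then-reprocess can be illustrated with an in-memory stand-in for a transaction. This is a sketch under loud assumptions: the "database" is a Python dict, abort restores a snapshot taken at begin, and the failure is injected on the first attempt. The `Transaction` class, `run_transaction`, and `transfer` are hypothetical names, not an API from the talk.

```python
import copy


class Transaction:
    """Snapshot state on begin; restore it (abort) if the body raises."""

    def __init__(self, db):
        self.db = db

    def __enter__(self):
        self.snapshot = copy.deepcopy(self.db)
        return self.db

    def __exit__(self, exc_type, exc, tb):
        if exc_type is not None:
            self.db.clear()
            self.db.update(self.snapshot)   # abort: undo partial updates
        return False                        # let the caller see the failure


def run_transaction(db, work, max_attempts=2):
    """Run work inside a transaction, reprocessing it after an abort."""
    for attempt in range(1, max_attempts + 1):
        try:
            with Transaction(db) as state:
                work(state, attempt)
            return attempt
        except RuntimeError:
            if attempt == max_attempts:
                raise


def transfer(state, attempt):
    state["a"] -= 10
    if attempt == 1:
        raise RuntimeError("process failed mid-transfer")  # injected fault
    state["b"] += 10


db = {"a": 100, "b": 0}
print(run_transaction(db, transfer))  # 2
print(db)                             # {'a': 90, 'b': 10}
```

The half-finished debit from the first attempt never becomes visible: the abort resets the state, and the retry runs against clean data.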

PROCESS PAIRS - SUMMARY

Transactions Give Reliability

Process Pairs Give Availability

Process Pairs Are Expensive & Hard To Program

Transactions + Persistent Process Pairs

=> Fault Tolerant Sessions & Execution

When Tandem Converted To This Style

Saved 3x Messages

Saved 5x Message Bytes

Made Programming Easier

SYSTEM PAIRS FOR HIGH AVAILABILITY

Primary

Backup

Programs, Data, Processes Replicated at two sites.

Pair looks like a single system.

System becomes logical concept

Like Process Pairs: System Pairs.

Backup receives transaction log (spooled if backup down).

If primary fails or operator switches, backup offers service.
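The log-shipping idea, including spooling while the backup is down, can be sketched as below. This is a toy model in one program: the log records are key/value updates, the two "systems" are Python objects, and `up` is a flag set by hand. All names are hypothetical.

```python
from collections import deque


class BackupSystem:
    def __init__(self):
        self.up = True
        self.data = {}

    def apply(self, record):
        key, value = record
        self.data[key] = value


class PrimarySystem:
    def __init__(self, backup):
        self.backup = backup
        self.data = {}
        self.spool = deque()   # log records not yet applied at the backup

    def commit(self, key, value):
        self.data[key] = value
        self.spool.append((key, value))
        self.ship_log()

    def ship_log(self):
        # Send spooled records in order; stop as soon as the backup is down.
        while self.backup.up and self.spool:
            self.backup.apply(self.spool.popleft())


backup = BackupSystem()
primary = PrimarySystem(backup)
primary.commit("acct-1", 100)
backup.up = False                    # backup site goes down
primary.commit("acct-2", 250)        # record is spooled
backup.up = True                     # backup returns
primary.ship_log()                   # spool drains
print(backup.data == primary.data)   # True
```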

SYSTEM PAIR BENEFITS

Protects against ENVIRONMENT:

weather

utilities

sabotage

Protects against OPERATOR FAILURE:

two sites, two sets of operators

Protects against MAINTENANCE OUTAGES

work on backup

software/hardware install/upgrade/move...

Protects against HARDWARE FAILURES

backup takes over

Protects against TRANSIENT SOFTWARE ERRORS

Allows design diversity

(different sites have different software/hardware)

Key Idea

{Architecture, Software, Distribution} Masks {Hardware Faults, Environmental Faults, Maintenance}

• Software automates / eliminates operators

So,

• In the limit there are only software & design faults.

Many are Heisenbugs

Software-fault tolerance is the key to dependability.

INVENT IT!

References

Adams, E. (1984). “Optimizing Preventative Service of Software Products.” IBM Journal of Research and Development. 28(1): 2-14.

Anderson, T. and B. Randell. (1979). Computing Systems Reliability.

Garcia-Molina, H. and C. A. Polyzois. (1990). Issues in Disaster Recovery. 35th IEEE Compcon 90. 573-577.

Gray, J. (1986). Why Do Computers Stop and What Can We Do About It. 5th Symposium on Reliability in Distributed Software and Database Systems. 3-12.

Gray, J. (1990). “A Census of Tandem System Availability between 1985 and 1990.” IEEE Transactions on Reliability. 39(4): 409-418.

Gray, J. N. and A. Reuter. (1993). Transaction Processing Concepts and Techniques. San Mateo, Morgan Kaufmann.

Lampson, B. W. (1981). Atomic Transactions. Distributed Systems -- Architecture and Implementation: An Advanced Course. ACM, Springer-Verlag.

Laprie, J. C. (1985). Dependable Computing and Fault Tolerance: Concepts and Terminology. 15th FTCS. 2-11.

Long, D. D., J. L. Carroll, and C. J. Park. (1991). A Study of the Reliability of Internet Sites. Proc. 10th Symposium on Reliable Distributed Systems. Pisa, September 1991. 177-186.

Long, D., A. Muir, and R. Golding. (1995). A Longitudinal Study of Internet Host Reliability. Proceedings of the Symposium on Reliable Distributed Systems. Bad Neuenahr, Germany: IEEE, September 1995. 2-9.