File 16374.

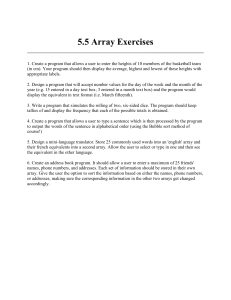


>> Daan Leijen: It's about ten years ago that I did my master's project, and I did it not in Amsterdam but in Portland, Oregon, with Simon Jones. I was excited about it because I had only read his book and had never seen him in person. It was a great event in my life. He guided me through my first steps in the functional programming world, and I'm still doing it with lots of pleasure. Today it's a real pleasure for me to introduce Simon as his host. He's giving a talk today about data parallelism, which is probably going to be really exciting. Simon?
>> Simon Jones: I know it's the row that nobody wants to sit in, but come to the front; I'm friendly. It's nice to be in a small room where everyone can ask questions. Thank you for doing me the honor of taking time out of your day to listen to this talk. This is joint work with my colleague at the University of New South Wales in Australia; we have a collaboration on which the sun never sets, so work goes on continuously. I'll give a snapshot of what we're trying to do and get to some of the technical details. I hope to give you some meat. That means I shall talk very quickly. I don't know if you remember, but in the days of James Watt, steam engines used to go out of control by going too fast. The governor would raise its arms, and when they came out that would stop the steam getting through. If I appear to be shaking myself to pieces, start raising your arms. A good way is to ask questions, so please ask questions, and if we don't get to the end of the talk that's fine. But we will finish on time. 25 to or half past?
>> Simon Jones: What?
>>: Usually takes an hour.
>> Simon Jones: I'll finish within an hour. Nobody wants to listen to an hour-and-a-half talk. Here's the story. So, a quick orientation. We do parallel programming, and you can do lots of -- I don't want to talk about that. Data parallelism is what we want to talk about today.
This is good because it's easier to program: you only have one program counter. My, how much easier that is to write. You have one program counter manipulating lots of data. So in the data parallel world -- I'm going to skip about -- I want to talk about data parallelism. Really the brand leader is flat data parallelism. Lots of examples. Here the idea is that you do the same thing to a big blob of data, like a big array. You do the same thing to each element. This is very well developed. People have been working on it for 20, 30, probably 40 years. Lots of examples of it working. Much less well known is nested data parallelism, which I'm enthusiastic about. Which I'm allowed to be, because I didn't invent it. That's what this talk is about. Let me locate these two programming models. Flat data parallelism works like this: for each i in some big range, do something to the i-th element of the vector A, and this A can be really big. You might have 100 million elements in your array. And you don't spawn 100 million threads; that would be bad, right? Because each thread here might only be doing a couple of floating point operations. Even though incredibly tiny things are happening, we have a good implementation model, namely divide the array up into chunks; each processor rips down its chunk of the array, and at the end they synchronize. The thing you do to each element is itself sequential. So there's a good cost model. But not every program fits into this programming paradigm. So nested data parallelism says the same idea, do the same thing to each element, but the thing that you do can itself be a data parallel operation. So now each element of A, A of i, might now not be an array of floats but an array of structured things. The thing that you do is a data parallel operation itself. Now the concurrency graph looks like this. The outermost level has three elements, and each child may spawn more because it's doing a data parallel operation; there's no reason to suppose they'll have the same amount.
You get this divide and conquer tree, and this might be very, very bushy and not very deep. We'll see examples of that in a sec. Or it might be very deep and not bushy. Who knows. Implementing this directly is harder. Just going to implement this on some kind of parallel machine, that's what a lot of us have tried to do. I've tried to do it, actually. What you do is spawn a thread for each of these guys, and the threads float about the machine, and processors pick up a thread and execute it for a bit. Whether a processor picks up one high up or low down is to do with the scheduling policy, last in, first out and so on; it's very dynamic. You don't get much control of the granularity, because these leaves are very tiny. So there's a serious danger of executing a lot of tiny threads. There may be 100 million leaves to the tree, and you don't want to execute each leaf in a separate thread. And it's difficult to get control of locality, too, because you have processors pulling threads from anywhere and they've got pointers all over the place. Locality is not good, and on big machines that's a very bad thing. So what I'm hoping to give you is the top-level idea of what it means, and why it might be tricky to implement directly. I'll give examples in a second. So far so good? Okay. So I claim it is good for programmers, because it enables a much wider range of programming styles. Here are some quick applications; I'll give examples of programs in a second. Sparse matrix kind of stuff is the classic one, where the amount of computation in one place may be very different from the amount in another, and the array is not all neat and rectangular. A divide and conquer algorithm like sort -- just think about sort, I'll show you a sort algorithm -- has a parallel tree which branches only two ways at every level. So there the branching factor of the tree is very small. In a sparse array algorithm, the branching factor might be much bigger.
I want to remark that this encompasses straightforward divide and conquer algorithms as well. A few more examples: graph algorithms, which walk over graphs, are again data parallel. In the first step you can look in parallel at the children of the first node in the graph, and in the second step look at that. These are more speculative. Triangulation -- I'm hopeful about those kinds of algorithms, but all of these are not dense matrix. We know they start at one point and split out. So just before I do this, let me remark that Guy's plan was to take a nested data parallel program, the one we want to write, and transform it into a flat data parallel program, the one we want to run. If we could do this well, this would be extremely cool: we write the program that we -- So that's the overall plan. Guy did this in the early to mid '90s, and I feel like a guy who has come across a thousand dollar note lying on the pavement, the sidewalk, which everybody else is walking around and ignoring. So I said, why are you ignoring it? It's a bit tricky doing this. This is a big compiler transformation, but I'm a compiler guy so that's okay; I like doing tricky compiler stuff. And it's very difficult to do for an imperative language. I'm not sure you could. Here I have an unfair advantage: I have a functional language. I'm all for exploiting unfair advantages. So the big picture is that I'm trying to take this idea and update it for the 20th century -- 21st century. Code. Are we okay so far? All right. Here's some code. This is what Data Parallel Haskell looks like. I'll take my favorite functional language, Haskell, and add data parallel constructs to it, giving you a language that can do all that Haskell can do at the moment, plus this extra stuff. NESL, which was Guy's language, focused on this one application area. It's not a very good general purpose programming language, and as a result not many people use it.
My hope is to smuggle -- lots of people, not compared to C# but compared to -- a lot of people use Haskell. If I can smuggle it onto their desktops, maybe people will use it. This data type here is pronounced "array of Float" or "parallel array of Float". It's just a vector. These are one-dimensional vectors, indexed just by integers. This says: take a vector of floats and another vector of floats and produce a float. How does it work? It uses a notation like list comprehensions in Haskell: array comprehensions. So this is read as "the array of all f1 times f2, where f1 and f2 are drawn in parallel from the two vectors". These two vertical bars mean they're drawn together pairwise, in synchrony. These enclosing square brackets say I'd like to do this in parallel. And sumP takes a vector of floats and produces a float, so it does some kind of data parallel addition over the whole resulting vector. Does that make sense? This is the only way you get to specify parallel computation in Data Parallel Haskell: array comprehensions and operators like these. No spawn, join, lock. None of that. Just functional programming on arrays, using this construct to say "go parallel here". So the idea is you can understand this in a sequential way without thinking about parallelism at all.
>>: [inaudible] could this be zipWithP?
>>: Yeah, there's a whole family of these operators, all right, which includes zipWith. Pretty much anything you can do with a list, there's a P version that says do it on a parallel vector. Does that make sense? Indeed, to a first approximation, you can think of these as just clever parallel implementations of lists. Yeah?
>>: How do I specify?
>> Simon Jones: These are the arguments to the functions, which might be generated by other functions, so in the end we have to get them from somewhere. You might read them from a disk, or generate them by doing -- a whole bunch of random data. You might, I don't know, generate them from a small amount of data that generates a big intermediate vector.
Is that what you meant?
>>: Concerning special generating --
>>: Oh, no. Nothing special. Here's a small parallel array. That's how I could generate one: I could write it down as part of my program. That's like writing a literal in your C# program. You can have literal arrays, but it's more common to compute them from something else, or to read raw data with a function that goes from, I don't know, a file name to an array of Float. Does that make sense?
>>: Is there any reason you couldn't replace sum with something more general?
>>: Could we have foldP, you mean?
>>: Well, can I put my own -- you said no and I'm trying --
>>: You could put your -- so you mean, here is a function, does it have to be sumP? No, it could be another. sumP is not connected to the square brackets. These square brackets generate a vector, and I happen to apply sumP to it, but I could apply -- yes, these are just values. Okay? Now, this is of course only flat data parallelism. I want to show you nested data parallelism. Sparse vector multiplication. How am I going to represent a sparse matrix? I'm going to represent a sparse matrix by a dense vector of sparse vectors. What's a sparse vector? It's just going to be a vector of (Int, Float) pairs; these are the index-value pairs for the nonzero elements of the vector. So this svMul takes a sparse vector and a dense vector, multiplies corresponding elements, and adds up the results. How does it work? It says: take the array of all f times the i-th element of v, where (i, f) is drawn from sv. This bang here is the indexing operator. Okay? And then I finally add them all up.
>>: [inaudible] operator from Haskell?
>>: Yes, it probably shouldn't be, actually; I think as written it is the normal indexing operator. This has to be the parallel indexing operator. It's not the same type.
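
The slide code for the dot product and the sparse vector multiply is not captured in this transcript. A minimal sequential sketch, assuming ordinary Haskell lists stand in for parallel arrays (so `zip` plays the role of the two vertical bars, `sum` of sumP, and `!!` of the parallel indexing operator); the names `dotp`, `Vec` and `SparseVec` are assumptions made for illustration:

```haskell
-- Sequential model only: lists stand in for parallel arrays.
type Vec       = [Float]
type SparseVec = [(Int, Float)]  -- (index, value) pairs for the non-zero elements

-- Dot product: draw f1 and f2 pairwise from the two vectors, then add up.
dotp :: Vec -> Vec -> Float
dotp v1 v2 = sum [ f1 * f2 | (f1, f2) <- zip v1 v2 ]

-- Sparse vector times dense vector: each non-zero value f is multiplied
-- by the element of v at the matching index i, then everything is summed.
svMul :: SparseVec -> Vec -> Float
svMul sv v = sum [ f * (v !! i) | (i, f) <- sv ]
```

For instance, `svMul [(0, 2), (2, 3)] [10, 20, 30]` multiplies 2 by 10 and 3 by 30 and adds the two products.
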
>>: Wouldn't it make sense to put them in a class so you could reuse your [inaudible] and both --
>> Simon Jones: Yes, you mean a Haskell type class, so you could -- yes, the whole thing is meant to extend to Haskell type classes. I'm trying to concentrate on essentials. Yes, you could say Num, array -- yes, absolutely. The whole thing should lift across numerics. Okay. So now we can see nested data parallelism. Here's a sparse matrix-vector multiplication. It takes a sparse matrix, a dense vector of sparse vectors, and multiplies it by a dense vector. What does it do? It says for every row of the sparse matrix -- this sv guy is now one of these sparse vectors -- use svMul, that's the guy on the previous slide. This guy. So sparse matrix multiply says: for each row, use svMul to multiply it by v, and then add up those results. So this is nested data parallelism in action. Why is it nested? Because this outer loop is saying do something in parallel to each row, but the thing that I'm doing to each row is a data parallel -- go back -- a data parallel operation here. So I'm doing a data parallel operation to each element of a data parallel matrix. I like this example because it shows so clearly why you want nested data parallelism. It would be so stupid to say svMul, that's a data parallel operation; terribly sorry, you can't call it here. That's not very modular or composable. Okay. Well, now, how can we implement this? This is a bit tricky. So here's the way the matrix looks. It's a dense vector of sparse vectors, and each of these yellow guys is a vector of index-value pairs. So this is a matrix, one column for each -- sorry, maybe I should put it on its side -- one column for each row. Each is a row of the matrix, and some rows have many nonzero elements and some have very few. How to execute this thing in data parallel? We could chop -- you have to imagine this is perhaps quite long.
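
The nested structure of the sparse matrix-vector multiply can be sketched in the same sequential style, assuming lists in place of parallel arrays. The name `smMul` and the type synonyms are assumptions; only the shape (an outer comprehension over rows whose body is itself a data parallel operation, followed by a sum) comes from the description above:

```haskell
-- Sequential model only: lists stand in for parallel arrays.
type Vec       = [Float]
type SparseVec = [(Int, Float)]  -- (index, value) pairs for one row
type SparseMat = [SparseVec]     -- dense vector of sparse rows

-- Inner data parallel operation: one sparse row times the dense vector.
svMul :: SparseVec -> Vec -> Float
svMul sv v = sum [ f * (v !! i) | (i, f) <- sv ]

-- Outer data parallel operation: apply svMul to every row, then add up.
-- The body of the outer comprehension is itself data parallel, which is
-- what makes this nested.
smMul :: SparseMat -> Vec -> Float
smMul sm v = sum [ svMul sv v | sv <- sm ]
```

Rows may have very different lengths (including empty rows), which is the source of the load-balancing problem discussed next.
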
We could chop these up evenly across the processors. But then it might be very unbalanced, because there's a short patch here and an idle processor. Or you say no, no, perhaps there are not very many rows but an awfully long column. Perhaps I'd be better sequentially iterating through this top guy and doing data parallel on the bottom. But which to choose? How would you know? How would an automatic machine know? And what happens if it's neither one nor the other, but different in different parts of this? Hard to do. Guy's amazing idea is this. He said, look, why don't we take all of those and put them end to end in a big flat array. Here it is. That's all the data, laid out, and we keep on one side the purple guy, the segment descriptor, that says where each of the rows begins in the big guy. So this is a big data transformation. I've taken an array of pointers, slapped it into one giant thing, with bookkeeping on the side. Now you may imagine that you might take the program I showed you before and transform it so that, instead of manipulating the data structure it was originally working on, it's manipulating this one. And furthermore, what I'd like to do is chop this guy into chunks across processors, without regard to the boundaries of the sub-elements. Okay. So now I want you to imagine that you started with the original program and have to do this transformation by hand. You have to keep them in one row, chop it up evenly across the processors, and get the bookkeeping right. I'm hoping that at this moment you're losing the will to live. [laughter]
>> Simon Jones: This is what compilers are for. And so Guy rather remarkably showed you could take the program and transform it systematically into this flattened form. And I'll show you how to do that. Okay. Yes?
>>: [inaudible].
>>: These parallel arrays are strict. Right? So when you demand one of these parallel vectors, you demand all of its elements. If you don't demand the parallel array at all, then you don't demand any of them.
If you demand any, you demand all of them. That's the particulars. It's also the key to -- if you have an array of floats, you don't want an array of pointers. You want an array of honest-to-goodness 64-bit floating point numbers. No monkey business with Haskell nonsense about lazy evaluation. You can have a thunk for the array as a whole, but not for each tiny element of it. That's the deal. So yes, it has an effect on the lazy semantics, but that's what you get if you want to do this data parallel stuff vaguely efficiently. That's the plan. I've got to give you two other quick examples of nested data parallel programming in different application areas, because I don't want you to think this is good for sparse matrices and weather forecasting but not anything else. Broad spectrum. Here's an example of searching -- this is sort of, if you like, MapReduce in Data Parallel Haskell. Here's the idea. You're trying to search a document base for some string, and return an array of pairs of the document and, within that document, all the places where that word occurred. Does that make sense? That's the type of search. What is a document base? It's an array of documents. What is a document? An array of strings. There's the nested structure again. A document base is now an array of arrays of strings. So, going back to the previous slide, you can see what we're going to end up doing is putting all the strings in all the documents end to end, lining them up in a big row across the whole machine, and chopping them up. Good? Let's write the code for search. How are we going to do it? Well, first I'm going to write a function, word, which takes a single document and a string and finds all the places, possibly none, where that string occurs in the document. How am I going to do that? Assume I have that, and I'm going to write search. How does search use such a thing? Well, search, from a document base and a string, returns the array of all (d, i) pairs. That's the list of occurrences of a word in one document.
In the document d, where d is drawn from the documents, that's the array of documents. And what is i? The result of calling word, this guy. Given a particular document and the string, it returns the array of all the places where that string occurs. And then I'd better just check that i is not empty, because I don't want to list in my result documents with an empty array of occurrences, and then I'm done. Good? Are you convinced this is good code for search? Better write -- what's that? Let's see. So I'm looking for a particular string in a document. What had I better do? This is a very brutal algorithm. I'll take every possible starting position and see if the string matches the document starting at that position. So what am I going to do? I'm going to take (i, s) pairs. I'm going to zip positions against d. What's positions? Positions is one to the length of the document. When I say the document is an array of strings, these are the words in the document. Right? So all this zip is doing is producing an array of pairs in which each string in the document is paired with its occurrence position. All right? And now all I've got to do is take each such pair, and for any where s2, that's this guy, matches the string I'm looking for, I return its position in my result, and I'm done. Are you convinced? Good, so we're done -- that's Google's business model. But it's just a different paradigm. Notice the way in which it's the same as the sparse matrix: it's two levels, an outer level and an inner level. So can you do more? Clearly we could do three, but how many? Here's quicksort. And quicksort looks very different again. So sort is what it's going to do. It's going to take a parallel array of floats and return a parallel array of floats. And how does it work? We'd better do the base case first.
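
The search example described above can be sketched sequentially, again assuming lists in place of parallel arrays. The names `Doc`, `DocBase` and `wordOccs` are assumptions for illustration (the transcript only calls the helper "word"); positions are counted from one, as in the description:

```haskell
-- Sequential model only: lists stand in for parallel arrays.
type Doc     = [String]  -- a document is an array of words
type DocBase = [Doc]     -- a document base is an array of documents

-- All positions (counted from 1) at which the word s occurs in document d.
wordOccs :: Doc -> String -> [Int]
wordOccs d s = [ i | (i, s2) <- zip positions d, s2 == s ]
  where
    positions = [1 .. length d]

-- Pair every document with its occurrence positions, keeping only the
-- documents in which the word occurs at least once.
search :: DocBase -> String -> [(Doc, [Int])]
search ds s = [ (d, is) | d <- ds, let is = wordOccs d s, not (null is) ]
```

The outer comprehension ranges over documents and the inner one over the words of a single document, which gives the same two-level structure as the sparse matrix example.
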
If the array is short -- that should be lengthP -- if it's short, return it. If it isn't short, what am I going to do? I need to construct the array of all the numbers that are less than the pivot element. I'll pick the zeroth one as the pivot, m. Find all the f's which are smaller than m. This we haven't seen: array comprehensions can contain filters. Just say the array of all f such that f is smaller than m. Those are the ones less than, equal to, and greater than the pivot. Do that in parallel. Now, this is the weird bit. Look at this. What does this say? sa is the result of applying sort to a, where a is drawn from -- a little two-element guy. This is weird, isn't it? All right. I could have written sort lt and sort gr. But that wouldn't have happened in data parallel if I just had two subexpressions floating about my program. By putting them in brackets I'm saying map sort over this two-element array. And that's the data parallel bit. That says do the same thing to each element of the array -- only two of them, but do the same thing to each of them, please. Away we go. It seems slightly contrived, but you get used to it, even if you're only doing two-way data parallelism. You list the two elements, put them in an array, draw them out again as it were, and map sort over them. If you think about this, remember the representation of the array that I told you about: lt is itself a long array, gr is a long array. And when you do the flattening transformation, what happens? All the lt guys get lined up. After them come the gr guys. So this array is represented by a long array with all the ones less than the pivot followed by the ones greater than the pivot. That's quicksort, what Tony Hoare invented: partition them and shuffle them around, and then you apply it to one segment and the other segment. So in a way, even though it looks funny, it's the same thing going on. You can see the structure of the algorithm like this. In the first step of sort we split into two chunks.
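
A sequential sketch of the quicksort just described, assuming lists in place of parallel arrays. The name `qsort` and the local names are assumptions; the point it tries to preserve is that the two recursive calls are expressed as one map of the same function over a two-element collection, rather than as two separate subexpressions:

```haskell
-- Sequential model only: lists stand in for parallel arrays.
qsort :: [Float] -> [Float]
qsort a
  | length a <= 1 = a                          -- base case: short arrays are sorted
  | otherwise     = (sa !! 0) ++ eq ++ (sa !! 1)
  where
    m  = head a                                -- pivot: the zeroth element
    lt = [ f | f <- a, f <  m ]                -- comprehensions with filters
    eq = [ f | f <- a, f == m ]
    gr = [ f | f <- a, f >  m ]
    -- The "weird bit": the same function mapped over a two-element array,
    -- so both recursive sorts form a single data parallel step.
    sa = [ qsort x | x <- [lt, gr] ]
```

In this list model the map over `[lt, gr]` is of course evaluated sequentially; only the structure of the program is illustrated.
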
There are still as many elements as before, modulo the ones equal to the pivot; we've lost those. So these lines are shrinking, and at each stage we split them in half and sort them. Each step -- the cost model, the simplest cost model, is an infinite number of processors, and whenever you do a data parallel step, they all work on one element of the aggregate in parallel. This is one data parallel step and here's another.
>>: You put them in the array to be able to use the same mechanism.
>>: Yes.
>>: Are you also suggesting that's how we should think of it? It seems simpler to think of it as sort lt in parallel with sort gr.
>> Simon Jones: Maybe it would be, but if I was to say sort lt, and then sort gr, and then, I don't know, append, you'd have to say somehow, do these in parallel, and then append. But also it's very important that it's the same thing. You can't say sort lt and in parallel do something different to gr.
>>: The compiler won't know how to split it?
>> Simon Jones: No, it's that they have one program counter. Right? So there you'd have two program counters, one for the code for sort and one for sort two. The idea here is that the one program counter is going to go sort, sort, sort, and all the processors are going to be processing mixtures of lt's and gr's; they don't care which data, but they're all doing the sort algorithm.
>>: Wouldn't it be a simple matter to allow the syntax with the parallel bar --
>>: If it was the same function. So you have a parallel bar and you must have this -- they must be identical. Sure. Yeah, so maybe you should say -- once you say these must be identical, then you say maybe I could pull that out, maybe I should say sort of lt, gr. Now we're very close to what we provide at the moment. After experience of using this stuff, maybe people will bleat enough. But it's quite nice to be able to say the place that parallelism comes from is parallel map. That's it. That's where it comes from. We can dress it up in various ways. I hadn't thought about that; it's a good question.
>>: Make that as long as you want. And then [inaudible].
>>: Yes, so here there are exactly two. Sometimes there's an exact number. Sometimes, of course, the number of elements in there varies dynamically, like in the previous examples. It just happened that here it was static. So maybe for the static case some alternative syntax would be good, but it's very important that it's the same code for both branches. It took me some while to internalize this. But that's the essence: you do the same thing; what varies is the data you do it to. Okay, good. Any other questions? I think I'm almost done with programming examples. I'm going to start talking about how we might implement all of this.
>>: If you already have thrown out [inaudible] then why --
>>: So laziness is not important here. Purity is. So a strict pure language would be fine. But there aren't many of them, because -- my favorite mantra is that laziness kept us pure. In a lazy language, if you do prints or assignments to variables in lazy computations, you rapidly die, because you don't know whether they're going to happen or in which order. Laziness is a powerful incentive to purity. In a strict language like ML, it's very tempting to just do that little [inaudible], and then this transformation -- remember the transformation we talked about -- is deeply screwed up by random side effects happening, as you may imagine. Yes?
>>: [inaudible] F# and do this.
>>: Maybe we can do -- I think a very interesting question is how we could gain control over side effects in F# or C#. I think that's an interesting research topic, but one I don't know the answer to. I don't think it's a just-do-this thing at all. I think that's an interesting, quite challenging research area. Which you're thinking about, right? Joe Duffy is writing papers, producing. Panthera. And we have types with -- yeah, so maybe -- perhaps the way to say it is, if we can't do this in Haskell, it will be really tough to do in C#. So it is, as it were, me using my unfair advantage. And even then it's difficult, right?
So I'm going to bust a gut on doing it in Haskell, and maybe by throwing more intelligent people at it you'll be able to do it in a more general setting.
>>: This butterfly type algorithm.
>>: Sure, yes. So the prefix sum kind of thing -- I haven't shown you very many, but it is clear there are some functions like this built in. So sumP: the reason it's not a fold of plus is because we'd like to know the operation is associative. And parallel prefix sum is also a very common pattern; it is built in, as are some of these. So we provide quite a rich library of these kinds of operators that essentially embody a lot of the cleverness that comes with MPI.
>>: You have to edit --
>>: That's right, yes. Well, actually, it's in the libraries. But the libraries are below the level of abstraction that we are giving to programmers. Yes.
>>: This has the advantage of knowing it's associative.
>>: No [multiple speakers].
>>: Everybody has this problem, and we all bail out in the same way, by saying promise it's associative, or I'll provide you with a fixed number that there's no -- or they're --
>>: Otherwise you're at the wrong end.
>>: I think he's just biding his time.
>>: Okay. So much for lists. Just to remark that the transformation that does this flattening isn't enough to get good performance. Think about this again, the one I showed you. I said compute the vector of f1 times f2 and add it up. That would be a disaster in practice, because you take two big vectors, you compute another big vector, and then add it up. Now, nobody would do that. They would run down both, multiplying element-wise and adding as they go. So we want to get that. That means some kind of fusion is going to take place. I'm going to maybe show you a little about how we plan to do this aggressive fusion. Otherwise you get good scalability but bad constant factors. And even if you say, look, it's nice linear scaling, but it runs like a dog, people are not enthusiastic.
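
The difference that fusion is meant to make can be sketched as follows. This is only an illustration on ordinary lists, with assumed names `dotpUnfused` and `dotpFused`: Haskell lists are lazy, so the intermediate list here is not really materialised in full, whereas with strict unboxed arrays the unfused form would allocate the whole intermediate vector before summing it.

```haskell
-- Unfused shape: build the vector of products, then add it up.
dotpUnfused :: [Float] -> [Float] -> Float
dotpUnfused v1 v2 = sum products
  where
    products = zipWith (*) v1 v2   -- the intermediate vector

-- Fused shape: run down both vectors once, multiplying element-wise and
-- adding as we go, with a strict accumulator and no intermediate vector.
dotpFused :: [Float] -> [Float] -> Float
dotpFused = go 0
  where
    go acc (x : xs) (y : ys) =
      let acc' = acc + x * y
      in acc' `seq` go acc' xs ys
    go acc _ _ = acc
```

Both functions compute the same value; the fused one is written by hand here, whereas the talk's plan is for the compiler to derive it.
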
We have to deal with the constant factor, too. Okay. This is just repeating what we said earlier: flattening and fusion. This is not just a routine matter, because Haskell is a higher-order language. NESL had very few -- and NESL did no fusion at all. Good scalability. But we want to do this fusion stuff. There are quite a lot of challenges involved in doing this, and I think that's partly why Guy's ideas haven't been used so widely as yet, because this is quite a big compiler challenge. But if successful, then this data parallel stuff is good for targeting not only multicore but also a distributed machine. Maybe the back end, instead of generating just machine code for x86, generates MPI to deal with a cluster. Or a GPU; they're big data parallel machines. To be honest, I don't know how to do that yet. But for the first time I feel there's some chance of getting a general purpose language like Haskell to work in data parallel on things like GPUs and distributed machines, because the data parallelism gives us a handle that we never had before. So this is wildly speculative as far as I'm concerned. I'm content to concentrate on the near-term thing, shared memory. But I'm pretty confident that it will eventually happen. After all, [inaudible] had a kind of distributed memory version, and Gabriel had one in her thesis. Prototypical examples. A bit about implementation, then. There are several key pieces of technology. The flattening or vectorization, which changes the shape of the arrays and flattens out nested parallelism into flat. This stuff inside the compiler, because an array of -- I'll show this is represented in a [inaudible], that turns out to have quite a significant impact on the internal type system that [inaudible] uses, our compiler. Then we want to divide up the work, and rather than that being a bit of black magic, that is a -- and we have to do aggressive fusion. There's a stack of blobs that have to work together.
The big payoff is that if we can do this, then we get a compiler that isn't just a special purpose compiler for a data parallel language. It's a general purpose compiler in which many of the transformations being done are useful anyway, or use existing mechanisms of inlining and rewriting that the compiler uses anyway, and it sort of customizes them for this purpose. So, flattening. I want to show you, on a little program, how we might go about transforming it down this pipeline. In order to show you anything even vaguely -- it has to be a small program. This is the sparse vector multiply; here it is. Sum of f times v at i, where (i, f) is drawn from the sparse vector. First we desugar it. This square bracket notation is just syntactic sugar for what? Well, for uses of mapP. mapP is the parallel map operation. That's the heart of data parallelism. mapP is the guy who says apply this function in parallel to every element of this array. Okay? What's the function? We're going to do a mapP over sv, the sparse vector. And what's the function? It matches on the (i, f) pairs and then multiplies f by this one. Then it adds it all up. That's just getting rid of the syntactic sugar. Now it's function applications. The next thing is the flattening transformation. This is where we take this guy and transform him into a flat data parallel program. So how does that work? Let's look at this line at the bottom. These here are just the type signatures. The type of sv here hasn't changed. What have I done here? sumP, that's the same. But look at what's happened. No mapP anymore. I've turned the structure of the program kind of inside out. Now, forget the transformation; just read it. snd^ is a function that -- this is fst^: it takes a vector of (a, b) pairs and produces a vector of a's, or b's. So snd^ produces essentially all the floating point values in the array. *^ is the vectorized version of multiplication. It takes vectors. bpermute -- that's the list of -- that's the vector of all the indices.
Remember, we're looking at this vector of pairs. fst^ is going to pick out those integers and produce an array of them. And bpermute is the vectorized version of indexing. It takes a vector and a vector of indices and returns a vector of values, the same length as this one. It's just the vectorized version of indexing. So a good way to think of this program is that it's as if we've generated code for a vector machine that provides as primitives, you know, vector multiply, vector snd, vector fst, and vector indexing. Imagine you're compiling for a machine for which those are the instructions. All right. Now, this instruction executes in parallel. This one executes in parallel. So you see the way the map is being turned inside out; that's what I was trying to get at. It's almost as if I've pushed the map down to the leaves. This is an important slide. I'm going to show you how you might hope to make this transformation, but I want you to get some kind of intuition for what the target looks like. Just think of executing this on a vector machine. This is pretty much where NESL stopped. Guy simply implemented vector multiply and so on directly in machine code or something, and ran the resulting program, generating new arrays. This generates a hell of a lot of intermediate arrays. One here, one here, one here, one there. So lots of intermediate arrays. But it scales really well.
>>: Of the vectorized things at the bottom, which are mechanically generated through the process, and which represent a library of [inaudible] built into --
>>: Okay, so here I've chosen an example in which I'm not making calls to any non-built-in functions, right? So all of these are built in. But you might reasonably say, what if, instead of index, it called some user-defined function on v and i? Well, then, presumably I would have had to call the vectorized version of that user-defined function. In here.
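
The desugared and the vectorised forms of the sparse vector multiply can be put side by side in a sequential list model. Everything named here is an assumption made for illustration: `fstL`, `sndL` and `mulL` stand for the lifted (up-arrow) versions of fst, snd and multiplication, and `bpermuteP` for the vectorised indexing primitive; on a real vector machine each would be a single bulk operation.

```haskell
-- Sequential model only: lists stand in for parallel arrays.
type Vec       = [Float]
type SparseVec = [(Int, Float)]

-- Assumed vector primitives, modelled on lists.
fstL :: [(a, b)] -> [a]
fstL = map fst

sndL :: [(a, b)] -> [b]
sndL = map snd

mulL :: [Float] -> [Float] -> [Float]   -- element-wise multiplication
mulL = zipWith (*)

bpermuteP :: [a] -> [Int] -> [a]        -- vectorised indexing
bpermuteP v is = map (v !!) is

-- After desugaring: the comprehension becomes an explicit map.
svMulDesugared :: SparseVec -> Vec -> Float
svMulDesugared sv v = sum (map (\(i, f) -> f * (v !! i)) sv)

-- After vectorisation: no map over elements remains, only whole-vector
-- operations, each producing a new intermediate vector.
svMulVect :: SparseVec -> Vec -> Float
svMulVect sv v = sum (sndL sv `mulL` bpermuteP v (fstL sv))
```

The two definitions agree on every input; the second one shows the intermediate arrays (`fstL sv`, the result of `bpermuteP`, `sndL sv`, the products) that fusion is later supposed to eliminate.
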
And so when I look at that function definition, the vectorization process had better take the function and generate a vectorized version of it that I can call here. I take the entire program and for each function definition in turn I generate its vectorized version: if the function used to take an integer, it now takes a vector of integers. And then I can call those within here. They in turn will use the vector instructions, so it's almost as if the entire program gets lifted to the vector world. And so, let's see. But where did the map go? Here was a map. I don't see any lifted map here. The key thing is that the map is the very guy that goes away, because it turns into a call to the specialized version of the function. That's the way to say it: if I see mapP of F, I call F up arrow instead. Over here, second up arrow is really mapP of second. That's the right way to think of this type. Look at it. If I take mapP of second, that would lift it from A to B, to -- yeah, you get that. So for every function of type T1 to T2, I'll generate a lifted function of type array of T1 to array of T2, with the intent that the lifted version has the semantics of mapP F. That's my plan. And each of these then uses the vector operations. Now, how does this up arrow transformation work? Well, at one level it's pretty simple. Here is the simplest one you could imagine: X plus one. How am I going to generate the lifted version, which works on arrays? We can imagine what it looks like. This X now is a vector. So I'm going to take X, then the vector plus, and then what happened to this one? Well, vector plus takes two vectors. I can't have a vector on one side and a number on the other side. So I'd better replicate that one, sort of fluff up the one to be a vector of the same length as X. That's what replicateP does. It takes a size and a number, and just generates an array of that length. Does that make sense?
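The X plus one example above can be sketched in Haskell as follows. This is a minimal sketch that assumes lists in place of parallel arrays; `fL`, `plusL` and `replicateP` are names chosen here for illustration.

```haskell
-- The original scalar function.
f :: Int -> Int
f x = x + 1

-- Lifted (vector) addition: works element by element on two vectors.
plusL :: [Int] -> [Int] -> [Int]
plusL = zipWith (+)

-- Takes a size and a value and builds a vector of that length.
replicateP :: Int -> a -> [a]
replicateP = replicate

-- Lifted version of f. The variable is left alone (it is now a vector),
-- the global (+) becomes plusL, and the constant 1 is replicated to the
-- length of the argument. Intended to behave like `map f`.
fL :: [Int] -> [Int]
fL xs = xs `plusL` replicateP (length xs) 1
```

In this sketch `fL xs` is meant to equal `map f xs` for every list `xs`, which is the property the talk asks of every lifted function.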
Okay, so now you can imagine this lifting transformation walks over the structure of the function. When it sees a local variable, like X here, it leaves it alone, though of course the new X has a different type than the old X. If you imagine it as a crude syntactic transformation: leave the variable alone. If you see a global like plus, you use its lifted version instead. And if you see a constant K, like this one, you use replicate instead, and we'll need an auxiliary version. It's not as simple as syntactic replacement. But I'm hoping to give you the idea that this lifting transformation might be done by walking over the structure and generating fresh code. Okay, now, what's the problem? There's a tricky problem here. What happens if F, here -- there's a new F. This is the F in the original program, and its type looked like array of Int to array of Int. Now what? Let's say the definition in the original program was F of A equals mapP G over A. Right? Simple definition. Well, G is somewhere else in the program, right? I've lifted it, that's good. The mapP of G is replaced with G lifted. Now what happens when I want to construct F up arrow? Its type is presumably array of array to -- and what does its code look like? It calls, oh dear, G lifted lifted. And of course it must, because this A is an array of arrays, right? So, oh dear, we have to go back to G and lift it again, and make the G lifted lifted version. And now I hope you're getting the feeling that I don't know where this will end. How many liftings of functions do I need? And maybe if I'm using recursive functions I would never get to the end of it. Right? So here's the cleverness, if you represent the arrays the right way. What does G lifted lifted do? Something similar to G lifted. This array of arrays is going to be represented by a single array of Ints together with a segment descriptor. Maybe we could get away with using G lifted on that array, and that was the idea. So here's the way it actually works.
We've got this array of arrays of Ints, so here's the same as before, except I've filled in what happens. What we're going to do is take A and concatenate it, put all of those together, willy-nilly. Slap them together. Now I can use G lifted on that. But now I have a big blob of data. I need to re-form it to have the same shape as the original guy. How can I do that? I've got the shape. The original guy, A, has a shape. So segmentP takes this, and it only uses this argument for its shape information, and it takes this guy, these Bs, that's the payload, the actual data, and returns it reshaped, right? Does that make sense? I'm hoping at this point you're thinking, yeah, the types match up and I can see it would work, but it would run like a dog. Take these arrays, concatenate them together, tear them apart and reshape them: it's a disaster. But if you think about it, remember that this array of arrays of As is in the first place represented strung out in a row. So concatenation doesn't actually do anything except screw with the segment descriptor, the bit that shows the layout. And similarly reshaping doesn't do anything to the data. It just fiddles with the segment descriptor. That leads us to want to talk a little about the way in which we express more precisely this business about how arrays of arrays are represented in the intermediate language. That's what we want to talk about next. I'm hoping at this point you have some feeling for how we can do vectorization and have it actually bottom out, because we don't need to construct G lifted lifted. That's the high-order bit that I wanted to communicate. Any questions? Yeah? >>: You're still at the point where the program here is expressed as a bunch of vector operations with intermediate -- >>: Yes. >>: Okay. >>: Absolutely. We haven't done fusion to get rid of them. Still in this -- so good scalability, poor constant factor. >>: And if I had a filter on predicates?
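The concatenate, apply, then reshape recipe for lifting a function over nested arrays, as described above, can be sketched semantically in Haskell. This sketch assumes nested lists in place of nested arrays, so `concatP` and `segmentP` here really do copy data; it only shows that the types and results line up. The function `g` is a hypothetical stand-in.

```haskell
-- A hypothetical scalar function standing in for the talk's G.
g :: Int -> Int
g x = x * 2

-- G lifted: has the semantics of mapping g over a flat vector.
gL :: [Int] -> [Int]
gL = map g

-- Forget the nesting and put all the elements in one flat vector.
concatP :: [[a]] -> [a]
concatP = concat

-- Reshape flat data to the shape of the first argument.
-- Only the lengths of the first argument's subarrays are used.
segmentP :: [[a]] -> [b] -> [[b]]
segmentP [] _ = []
segmentP (s : ss) flat =
  let (here, rest) = splitAt (length s) flat
  in here : segmentP ss rest

-- Lifted version of `f a = mapP g a`: no "g lifted lifted" is needed.
fL :: [[Int]] -> [[Int]]
fL a = segmentP a (gL (concatP a))
```

For any nested list `a`, `fL a` in this sketch should equal `map (map g) a`.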
>>: Yes, so I haven't shown you how filterP gets translated, but indeed I'm going to have some filterP operations here that take a vector of Booleans and keep all the ones that are true. Right? And in the end, data parallel computations often do require interprocessor communication. That's going to, right? Or at least, if I imagine, how is filterP implemented? What is its type? Let's take the sort of most primitive operation, which isn't filterP but is like it. It takes an array of [inaudible] and an array of A, and it gives me back a shorter array of A: the ones where these are true, right? You can see each processor could do that independently. But now the data might be -- one processor might have lots of trues and another only a few. So this guy is not lined up in a nice balanced way. So you might want in your algorithm to do some rebalancing at intervals, and that's the way data parallel algorithms work. There's a sort of everybody-talks-to-everybody phase that rebalances the data, and we're going to want to express that explicitly in this compilation pipeline, so that you get control of it. I don't mean the programmer gets control. I mean the compiler can, by doing program transformations, express that decision rather than having it left to magic in the [inaudible] system. Did that -- for this purpose think of it as a primitive. Yes. All right, so array representation. Now, we already talked about thunks and laziness. An array of pointers to doubles is too slow. Arrays of doubles have to be represented as blobs. What about arrays of pairs? A pair is represented by a pointer to a heap-allocated pair. So an array of pairs is a pointer to an array of pointers to pairs, and these pointers are scattered all over the machine. Locality goes dead. What's the standard -- what do the high-performance guys who really care about this stuff do? They transpose it. Right? They represent an array of pairs as a pair of arrays. That's what we would like to do.
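The primitive mentioned in the answer above, which takes a vector of Booleans and a vector of values and returns the shorter vector of selected values, can be sketched as below, together with a small helper that shows why the result can end up unbalanced. Lists stand in for arrays, fixed-size chunks stand in for per-processor pieces, and the names `packP`, `chunk` and `survivorsPerChunk` are assumptions made for this sketch.

```haskell
-- Keep the values whose flag is True.
packP :: [Bool] -> [a] -> [a]
packP flags xs = [x | (True, x) <- zip flags xs]

-- Split a vector into consecutive chunks, one per (imagined) processor.
-- Assumption: a chunk size below 1 is treated as 1.
chunk :: Int -> [a] -> [[a]]
chunk _ [] = []
chunk n xs = take k xs : chunk k (drop k xs)
  where
    k = max 1 n

-- Each processor packs its own chunk independently; the resulting
-- lengths show how uneven the data can be before any rebalancing.
survivorsPerChunk :: Int -> [Bool] -> [a] -> [Int]
survivorsPerChunk n flags xs =
  map length (zipWith packP (chunk n flags) (chunk n xs))
```

With two chunks of four elements and flags that are mostly true in the first chunk and mostly false in the second, `survivorsPerChunk` reports very different counts, which is the imbalance a rebalancing step would have to correct.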
And we'd like to express that. What I didn't say is that inside [inaudible] we take a typed source program and do these transformations, and at every stage we'd like the program to stay well typed. So we have a type system that can describe the idea of this transposition, if you like. One reason for doing that is that it keeps the compiler sane: it's a good sanity check on the compiler to make sure that at every stage the program is well typed. But also quite a lot of code gets written in libraries in the post-vectorization world, right, code that is not going through the vectorizer at all. It's helpful to be able to write those libraries in a typed way. Libraries get complicated. Here's how we're going to express this. Array of A is a data family, and all that means is that array of A is a type but I'm not going to tell you how it's represented yet. And then there's a data instance declaration. This is Haskell source code, GHC's kind of Haskell source code. This data instance says that the array of doubles is represented by -- this AD is a data constructor. Think of this very much like an ordinary Haskell data declaration: when you declare a new data type, you say data T equals, and then you give constructors like Leaf of Int, or Node of tree and tree, right? So these guys are the constructors of the type, right, and they have zero or more arguments. So think of this as a data type declaration that says: array of double has just one constructor, not two, just one, called AD, and its payload is a byte array. And an array of pairs is represented by -- here's the constructor, AP, and it's got two components now: an array of As and an array of Bs. This representation just applies recursively down. Interestingly, that means that first lifted is a constant time operation. Here's first lifted. It takes an array of AB pairs and delivers an array of As. How does it work? Well, it does pattern matching: an array of AB pairs is represented by AP of something and something.
So it just matches on the AP, just like normal pattern matching in functional programming, like when you're writing a function over a list and you match on cons or anything else. No nonsense about unstitching the pairs, like when you say map first down a list. Here it's constant time. So this is a rather good vector operation, because it's fast. What about nested arrays? This is where the fun is. So here's the data instance for array of arrays. It's represented by AN. Why AN? It's just the data constructor. The payload is flat, right? Remember I said you represent it by all the data arranged flat. That's the array of A here. And then we have this guy, which is the segment descriptor. This holds the beginning points of each of the subarrays in here, the indices of the shapes. So this is the shape description. All right? Does that make sense as a representation? That's simply embodying in code what I previously showed in pictures. Now concatP. It takes an array of arrays of A. What does it do? It takes one of these AN guys, because anything that is an array of arrays must be built with AN, and it dumps the shape and returns the data. All right? What does segmentP do? It takes something with some shape and some data: this guy has some shape, that's the segment descriptor. Take the data over here and slap them together. Constant time operation. There you are. When I first saw this in some of these other things, I thought it was so cool. concatP, constant time. Yes? >>: Is there a property that by construction you never have a shape and data mismatch? I can't take the segment information from one [inaudible] and slap it onto another. I mean, I could here. >>: I could here, yes. >>: So here, I could -- so there is a -- these indices should match this, right, and if I was just randomly programming, I could construct things that didn't. But this stuff is what the library writers see. And what we show to programmers is at the top. So array out of bounds errors are still eminently possible. We've not solved that problem.
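The representation described above can be sketched with a GHC data family. This is a minimal sketch under stated assumptions: the family is called `Arr` here, plain lists stand in for the byte array payload, the `AI` instance for arrays of Ints is added only so that a segment descriptor can be written down, and the descriptor is assumed to hold the start index of each subarray. The constructor names AD, AP and AN follow the talk.

```haskell
{-# LANGUAGE TypeFamilies #-}

-- "Array of a" is a type whose representation depends on a.
data family Arr a

-- Assumption: lists stand in for the unboxed byte arrays of the talk.
data instance Arr Double = AD [Double]
data instance Arr Int    = AI [Int]   -- hypothetical, used for descriptors

-- An array of pairs is represented as a pair of arrays.
data instance Arr (a, b) = AP (Arr a) (Arr b)

-- An array of arrays is a segment descriptor plus the flat data.
-- Assumption: the descriptor lists the start index of each subarray.
data instance Arr (Arr a) = AN (Arr Int) (Arr a)

-- First lifted: just a pattern match, no traversal of the data.
fstL :: Arr (a, b) -> Arr a
fstL (AP xs _) = xs

-- concatP: drop the shape, return the flat data unchanged.
concatP :: Arr (Arr a) -> Arr a
concatP (AN _ flat) = flat

-- segmentP: take the shape of the first argument and attach it to
-- the data of the second. Nothing checks that the two agree, which
-- is the mismatch raised in the question above.
segmentP :: Arr (Arr a) -> Arr b -> Arr (Arr b)
segmentP (AN segd _) flat = AN segd flat
```

All three operations only take constructors apart or put them together, so none of them touches the underlying data.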
The same techniques would apply. Vectorizes -- >>: Right, yeah. Yeah. >>: I have a question about the family of [inaudible]. >>: What happens if I haven't given it a data instance? Suppose I had an array of trees, those guys. So, given the data type declaration, we'll generate for you a data instance declaration that represents it. Here I've shown how to represent products. Over here I need to represent sums. That's a whole little world. And it's even more fun when you want to say, how do I represent arrays of functions? How am I going to represent them? That's when things get really exciting. >>: It's 2:30 and I promised -- I'm happy to stop and do more discussion afterwards, but I would rather be more or less finished by 25 to. So I'm going to essentially skip the rest of the talk. I want to show you why functions are tricky here. Remember I said this vectorization transformation generates the lifted version. Suppose it takes two arguments rather than one, like this: T1 arrow, open bracket, T2 to T3. The lifted version looks like this. That's the obvious thing, but it's not a very compositional kind of transformation, because, as I've said, if that was hidden or polymorphic or something, bad things might well happen to you. What would be much nicer, a more compositional transform, would say F lifted takes an array of T1 -- that would be the obvious way to, as it were, lift this type. Right? Remembering that it's really T1 arrow up, [inaudible] to T3. And that leads us to ask what the instance declaration for this might look like. And that's actually quite a hard question, and what we do amounts to closure conversion on the program to deal with that. But all is not lost. We've just finished, hot off the press, a paper describing this algorithm in a way that for the first time I feel as if I understand. So it will be on my home page in a few days. I've always said Manuel and Gabi will sort it out, but now I feel I understand it.
It will be on my home page. Harnessing the multi[inaudible], the same title as this talk. The steps that I mentioned on the first slide had to do with chunking: how do you divide a computation across the processors. So there's a bit about chunking and then a bit about fusion, right? So now I've divided across the processors. When I'm thinking about the code on one processor, I have to fuse these arrays. I don't want to do the fusing too early or I won't be able to do the chunking. So first chunk, then fuse. And there's a whole lot of interesting stuff about fusion. So there's this big stack of things, and I've really only talked in any detail about the first two. All of these things have to work together. This is a research project, because I can't promise you that all of this will work together. I feel like somebody who is building a stack of already rather complicated things on top of each other and hoping the whole tower doesn't fall over. It's fairly ambitious to make this all work. It's just about at the point where we can start to actually run programs and try them out for real. Initially it won't work well, because what happens is you put your program in and it runs not like a dog but like a dog with three legs amputated, because something has gone wrong here. It gives the right answer, but very, very slowly, because it generated some grotesque intermediates. For small programs we do this on -- this is sparse matrix vector multiply. Reasonably good speedup. You should be suspicious, blah blah, because you want to know what the constant factor is. It's no good if on one processor it runs at a millionth of the speed of C. Then you need a million processors to equal one processor doing C. Here, the baseline is that the one-processor version goes slower than C, but not much. This is a very tiny program, so you can't read much into this graph except that we're not already dead at the first hurdle. So caution, caution. Okay. And this is a quick summary. Let me just remark at the end here.
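The "first chunk, then fuse" ordering mentioned above can be illustrated on the sparse vector example. This is only a sketch of the idea, not the compiler's actual transformation: lists stand in for arrays, the chunks are processed one after another rather than on separate processors, and the names `chunk`, `dotChunkFused` and `dotChunked` are hypothetical.

```haskell
import Data.List (foldl')

type SparseVector = [(Int, Double)]
type Vector = [Double]

-- Step 1, chunking: split the work into one piece per processor.
-- Assumption: a chunk size below 1 is treated as 1.
chunk :: Int -> [a] -> [[a]]
chunk _ [] = []
chunk n xs = take k xs : chunk k (drop k xs)
  where
    k = max 1 n

-- Step 2, fusion within one chunk: a single loop that multiplies and
-- accumulates, instead of building vectors of seconds, firsts,
-- indexed values and products as separate intermediate arrays.
dotChunkFused :: Vector -> SparseVector -> Double
dotChunkFused v = foldl' step 0
  where
    step acc (i, f) = acc + f * (v !! i)

-- Combine the per-chunk partial sums. In a real system each chunk
-- would run on its own processor; here they run sequentially.
dotChunked :: Int -> SparseVector -> Vector -> Double
dotChunked n sv v = sum (map (dotChunkFused v) (chunk n sv))
```

Fusing before chunking would have collapsed the whole computation into one sequential loop, leaving nothing to divide up, which is one way to read the ordering constraint in the talk.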
We're just at the stage at which we've got a version that other people might be able to use if they're sort of friendly and accommodating types. This isn't ready for doing your genome database. But if you have a new application area where you wonder, could Data Parallel Haskell do something, we'd be interested in learning whether -- in what way it failed is probably the best way to say it at the moment. I'm optimistic for the future because I think this whole data parallel game is the only way we'll harness lots of processors. Okay, that's it. [applause] >>: Question. A data parallel program has the property of operating very independently on each element. >>: Yes. >>: Something like -- for computation where you need to look at your neighbors -- >>: Yes, good question. [inaudible] tend to work -- so sometimes you look at your neighbors in two dimensions. That's difficult in sparse computation. In fact this whole nested data stuff, as I understand it, doesn't really work very well for dense computation. Rather embarrassing. I'd like to say we can do dense, sparse, everything. Dense computations work because you can do clever arithmetic. For the guy above you, you just subtract a thousand and you get to your neighbor. That relies on a lot of detailed knowledge about exactly the layout of the array. You can't do that here. You can get to your neighbors to the right and left: shift the array. That's not hard. So I think to do [inaudible] style computation, you have to essentially do more work than you do if you're doing it in FORTRAN. You, as it were, pass along the [inaudible] row before and row after, and there's a bit more. In some ways that's reflecting what's really happening. To be honest, I don't really know. I've not tried it hard enough for real. I think it's not a disaster. But we need a bit of experience to know whether it's going to work for dense.
I suspect if it's truly dense and you know anything and it's two-dimensional and high performance, then FORTRAN does the job. My goal is to go faster than FORTRAN by being able to write algorithms that would make your head hurt too much to write in FORTRAN. That kind of faster. Faster by being crafty. Allowing you to think bigger thoughts. Yeah? >>: You talk about compilation. I wonder what kind of runtime you have to make here. As soon as you don't statically know the length -- you have a filter. Now you have all sorts of packing issues that I don't see described here. >>: That's true. Take a filter followed by a map. So you do a filter and then you do the same thing to each element. If the map is doing something very tiny, then you might do the filter and do the map before rebalancing. But if the map is doing something big, you might be better off rebalancing before the map. So that's -- >>: So the selectivity of the filter -- >>: Exactly. It might be -- it's more how unbalanced it is. That's the really hard bit. Right? If it leaves some processors idle -- even with your sparse array, you've got the fixed representation but you get things out of it that are vastly different sizes. >>: I think that part isn't so bad. The bad thing about filters is that you can get a lot of data on one processor and only a little on the other, and you need to rebalance. The rebalancing operations are tricky. Where to place the rebalancing is an open problem. I don't have a solid answer for that, and we don't have a way for the programmer to take control of where rebalancing takes place. >>: You can't talk about it in the high-level program at the moment, and maybe, you know, perhaps that will turn out to be the high-order bit in due course, and we'll need to address that directly. Yeah? >>: Have you looked at where -- you know, the tradeoff of manual rebalancing versus -- >>: Or where those different techniques would fit?
>>: So [inaudible] kind of dynamic thing that just says processors look around for work and grab it out of some kind of work -- >>: Instead of doing the rebalance, when something didn't have enough data and got done, it goes -- >>: Yes, see, the difficulty here, I suppose -- the name of the game with all this data parallelism stuff is, rather than having a very dynamic approach where we create work of all kinds, you know, bits here and there, and we throw it into some pool, instead we're trying to have a much more disciplined, to make it sound good, but sort of controlled or restricted form of parallelism, which means that each processor knows its job and the data that it's operating on is local to it. Right? So I'm not quite sure to what extent you could mix the two. That's an interesting question but I don't really know the answer to it. >>: So it's a function of how much data you are working with as well. Data parallelism tends to drive multiple passes over the same data, which is bad for blocking opportunity. >>: Well -- >>: Data parallelism as described here drives the granularity of the operation. If you can say I'm going to chunk to some granularity and then I can work above that, I should be able to have my cake and eat it too from a scheduling point of view. >>: It's possible that near the root of a data parallel tree you have nice big tasks that you could schedule in a dynamic way, and near the leaves of the tree, where everything is very tiny, you want to go to the restricted thing. I'm not sure what you said about repeatedly processing the same data -- >>: Quicksort, for example. So if I start with something that doesn't fit in cache, I will reduce down until the problems fit in cache, and I might like to bias my scheduling: once it fits in cache, let me restrict my attention to that instead of doing the whole vector. >>: The way I showed you, we'll do one step which deals with the whole vector, then another step.
Rather than -- at some point you maybe just want to say go sequential on this, a chunk at a time. >>: Sequential at the top line. >>: Once my problems, you know -- once one fits into cache, I may focus on that, and get back to the other. >>: Right. >>: Good question. >>: Now, there's no intercommunication path -- in a distributed environment, can you talk with -- >>: The DryadLINQ guys. No, I was hoping that I might find Michael or Chendu here. >>: Go to California. >>: Yes. >>: To be honest, if you can generate your plans such that it's a separable chunk, and GHC generates each as a separate app, they'll schedule it. They don't care -- >>: When I said I'd like -- one possibility is a sort of back end, a more speculative back end, that generates MPI stuff. >>: F sharp. Generate DryadLINQ code from F sharp. It could call Haskell just as easily. >>: I'm not -- >>: Yeah. Those are the sort of off-the-horizon things I feel as if I know how to do. But the idea for the back end of this, rather than sort of taking control all the way down to the leaves, is to just generate calls to some other infrastructure to do the communication and so forth, like MPI -- yeah. >>: Okay. >>: Hint from the tech support. [applause] >>: Okay. Good. >>: Yes, I'm running around the rest of the day. If you'd like to chat about any of this, send an e-mail, because I'm here until tomorrow night at about -- when do I have to leave? 7 tomorrow evening. That's it. Unless you come to Cambridge. Especially if you have applications, I'd be interested to talk to you. Was I going to say anything else? Maybe not. Papers: on my home page, not this week but next week, by the time I get back, the new paper will be there. Tutorial.