

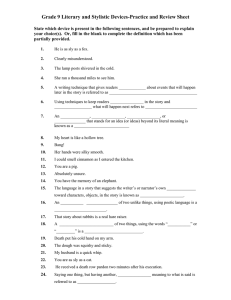

>> Yuval Peres: So well, without further ado, Allan Sly from Berkeley will tell us about mixing time and space. >> Allan Sly: Okay. Thanks, Yuval, and it's a pleasure to be back here again. So I was here a bit over a year ago and told you some results about mixing for the Glauber dynamics on random graphs, results that weren't sharp, and now I'll tell you a bit about some new results that are. And then at the end, I'll tell you a bit about some of the other work I've been doing. So first of all it will be the Glauber dynamics on random graphs, and I'll also talk about general graphs, mainly for the Ising model. Okay. So I'll run through some definitions which probably everyone here knows by heart. I'll mainly be talking about the Ising model, the model of magnetic systems. It's a probability distribution over configurations, so assignments of pluses and minuses to each of the vertices, and it's weighted so that configurations with more pluses next to pluses and minuses next to minuses get more weight. Beta, the inverse temperature, will regulate the strength of the interactions, and so we'll be interested in how the behavior changes for different values of beta. Okay. I'll be talking about, I guess, two kinds of mixing for the Ising model: spatial mixing and temporal mixing. By spatial mixing I essentially mean that if you take two sets of vertices that are distant, the spins, the pluses and minuses, will be essentially independent as the distance between them grows. And there are several ways you could formalize this notion. I'll talk about a couple of them through this talk, but first of all, I'll talk about uniqueness. So this asks: if you have some, say, finite set of vertices A, is it affected by conditioning on vertices at distance R from it, and in particular does that effect go to zero as R goes to infinity or is it bounded away from zero? 
And if it goes to zero, then we'll say the model has uniqueness, and this will be one notion of spatial mixing because it says that conditioning on vertices far away doesn't really affect the spins in A. And the other type of mixing I'll talk about will be temporal mixing, by which I mean the mixing time of the Glauber dynamics, so this is a Markov chain which updates vertices one at a time. Yeah. [laughter]. Yeah. And so this will be the Markov chain that's reversible with respect to the stationary distribution, and it will be ergodic, so over time it will converge to the stationary distribution. So in one step you pick a vertex, forget about what the spin is there, and update it according to the conditional distribution given its neighbors. And this will just depend on how many pluses and how many minuses it's next to. So the main question we'll be looking at is how long does it take to reach the stationary distribution or get close to it? And this will be measured in terms of the mixing time, so the time it takes for the total variation distance between the Markov chain and the stationary distribution to be less than some constant from the worst case starting point. And just by convention I've got one on two as the constant. So you can either talk about the discrete version of the chain or the continuous one. It will be convenient for this talk to talk about the continuous version where we update each of the vertices at rate one according to [inaudible]. This will make the mixing time smaller by a factor of N. But otherwise it doesn't change the results. And so the results will apply for both the discrete and the continuous versions, but for the discrete one the mixing time will be a factor of N larger. Okay. 
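The single-site update just described can be sketched in a few lines of Python. This is a minimal illustration, not code from the talk: the function names `glauber_step` and `run_glauber`, the adjacency-list representation, and the 4-cycle example are assumptions made here. It runs the discrete-time chain, in which one uniformly chosen vertex is resampled per step.

```python
import math
import random

def glauber_step(spins, neighbors, beta, rng):
    """One heat-bath update: pick a uniform vertex and resample its spin
    from the Ising conditional distribution given its neighbors."""
    v = rng.randrange(len(spins))
    # Only the sum of the neighboring spins enters the conditional law.
    s = sum(spins[u] for u in neighbors[v])
    # P(spin = +1 | neighbors) = e^{beta*s} / (e^{beta*s} + e^{-beta*s})
    p_plus = 1.0 / (1.0 + math.exp(-2.0 * beta * s))
    spins[v] = 1 if rng.random() < p_plus else -1
    return v

def run_glauber(neighbors, beta, steps, seed=0):
    """Run the discrete-time chain from the all-plus configuration."""
    rng = random.Random(seed)
    spins = [1] * len(neighbors)
    for _ in range(steps):
        glauber_step(spins, neighbors, beta, rng)
    return spins

# Hypothetical example graph: a 4-cycle given as adjacency lists.
cycle = [[1, 3], [0, 2], [1, 3], [0, 2]]
final = run_glauber(cycle, beta=0.3, steps=1000)
```

In the continuous-time version each vertex carries its own rate-one clock, so roughly N of these discrete steps happen per unit of time, which is the factor of N mentioned above.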
So originally the graphs we were interested in for the Glauber dynamics were Erdos-Renyi random graphs, and so these graphs, as I'm sure you also know, G(n, p), are where you have N vertices and connect pairs of vertices with probability P independently. And there's huge amounts known about these graphs. We'll let P grow like D, or I guess decrease like D over N, so that you have a constant average degree of D. And so several key properties of these graphs will be important, and these hold with high probability over the graph. So the average degree is D. It's locally tree-like and locally looks like a Galton-Watson branching process with Poisson D offspring distribution, and the maximum degree grows like log N on log log N. And this is important because essentially most results about mixing times are given in terms of something like: if the maximum degree of the graph is less than this and beta is less than that, then the mixing time is log N or something. But here the maximum degree grows with N, so anything of that sort doesn't apply. Another property of these graphs that makes proving things about the Glauber dynamics more difficult is the fact that the size of neighborhoods grows exponentially with the radius, at least for a typical vertex, because locally they look like trees, Galton-Watson branching process trees, whereas most proofs about the Glauber dynamics tend to use the fact that you can take balls whose surface to volume ratio goes to zero as you take large radii. Okay. So last time I was here I told you about a result where when D tanh of beta is less than one on E squared, then the Glauber dynamics mixes in time bounded by some polynomial, N to the power of a constant, for almost all Erdos-Renyi random graphs. But at the time we conjectured that this one on E squared term here should actually just be one. And this was really just an artifact of the proof. 
And indeed that turns out to be the case. So when D tanh of beta is less than one, then on almost all Erdos-Renyi random graphs the mixing time is N to the theta of one on log log N. So by theta of one on log log N I just mean bounded above and below by a constant on log log N. Where this comes from is essentially that within the graph you have these high degree vertices of order log N on log log N, and in fact with high probability you'll have a star with degree approximately log N on log log N, and you can analyze the mixing time of the Glauber dynamics on them pretty simply to get a lower bound of this form. And it turns out that's essentially the right upper bound as well. This threshold here corresponds to the uniqueness threshold on a Galton-Watson branching process with Poisson offspring distribution. Essentially the D comes from the fact that this is the branching rate of the graph, and so this is a result of Lyons. And this turns out to be tight because if you take almost all graphs G(n, D on N), then the mixing time is exponential in N if D tanh of beta is greater than one. And this follows from results of Dembo and Montanari, where they calculated the partition function for the Ising model on random graphs. And so you can show that there's a bottleneck going between predominantly plus and predominantly minus configurations. An exponentially small amount of the measure is on balanced configurations with equal numbers of pluses and minuses. >>: Is this also sharp in [inaudible] mixing time also sharp? >> Allan Sly: Yes. Yes. So -- >>: [inaudible] faster? >> Allan Sly: No. So there's -- it's. >>: [inaudible]. >>: [inaudible]. >> Allan Sly: Yes. So it's bounded above and below by N to the constant over log log N. So with different constants -- >>: [inaudible]. >> Allan Sly: Okay. >>: [inaudible]. >> Allan Sly: Sorry. Yeah. Yeah. Okay. Right. So by this I mean the mixing time. In continuous time. Okay. 
And we can also say something about general graphs of maximum degree D: when D minus one times tanh of beta is less than one, then the Glauber dynamics mixes in theta of log N. And this corresponds to the uniqueness threshold on the D regular tree because the branching rate's D minus one rather than D here. And again this is sharp in the sense that for almost all random D regular graphs the mixing time is exponential in N when D minus one times tanh of beta is greater than one. Okay. So any questions about the results? So I'll tell you how to prove this result and then how to modify the proof in order to prove the random graph one. Because this will be slightly simpler. Okay. So the proof will use monotone coupling, so -- >>: [inaudible] back and forth. >> Allan Sly: Yes. >>: Standard Gaussian [inaudible]. >> Allan Sly: Yes. Right. Okay. So, yeah, you take the monotone coupling, so you have two copies of the chain starting from the all-plus and the all-minus configurations. Yeah, I'll do another little graphic. And you couple them so that X plus is always greater than or equal to X minus. And eventually, and in this case in a very short period of time, they'll be the same. And so to prove bounds on the mixing time using couplings we want to show that they have coupled with probability close to one at time T, and that gives you an upper bound on the mixing time. And so to do this, we're going to bound the maximum over all vertices V of the probability of a disagreement at time T between the two chains and show that this decays exponentially over time. And the proof heavily relies on kind of new censoring results of Peres and Winkler, so we construct two new Markov chains, Y plus and Y minus, and so with a vertex V, which will be arbitrary, a radius R, which will be a constant depending, you know, on D and beta, and a time capital T. 
And the construction goes by saying they'll start off in the all-plus and all-minus configurations as usual, and up until time capital T, it will just be the normal Glauber dynamics. So you can say that they're equal to X. And then after time capital T, I'm going to censor all updates in this green area outside of a ball of radius R about the vertex V. But inside I'll continue doing updates as per usual. >>: So you don't do the updates [inaudible]. >> Allan Sly: No more updates outside of this ball after time capital T. So the spins in these vertices will all be fixed forever. >>: So you just ignore the steps where you choose the vertex? >> Allan Sly: Yeah. Yeah. So if you decide you were going to do an update at a vertex outside of the ball then you just don't do it. And so we call these chains censored dynamics, and the censoring lemma, which applies to very general ways of censoring, essentially says that Y plus stochastically dominates X plus and X minus stochastically dominates Y minus, so in particular the chance that we have a disagreement at the vertex V in the censored chains is greater than or equal to the chance that we have a disagreement in the uncensored chains. So we use this construction to bound the probability of a disagreement at V at some time after time capital T. So are there any questions about the construction? >>: [inaudible] supposed to be V or -- >> Allan Sly: Well, this is actually supposed to be any vertex in the graph. But V will be the important one, right. Okay. So what do the dynamics look like? Well, at time capital T, any updates outside of the ball stop, so we have this frozen boundary condition. It's just the boundary we have in the original Glauber dynamics. Or actually two boundary conditions, one associated with the plus chain and one associated with the minus chain. 
But inside the ball -- and this will just be a finite graph -- it continues going on mixing as it always did. And so in a short period of time, this will become close to its stationary distribution, and so then the question will be what is the effect of the different boundary conditions on the vertex V? So in particular, we can bound the chance of a disagreement at V by the difference in the marginal at V under the equilibrium measure given the two boundary conditions, plus some term that comes from the fact that you never get exactly to the stationary distribution. So by taking a time S large enough, at time capital T plus S, the effect of the fact that you're, you know, not quite at the stationary distribution can be made small, and also you only need to consider this when there's some disagreement on the boundary of the ball. >>: [inaudible]. >> Allan Sly: Yeah. No, alpha here is like some constant in terms of the mixing time, the worst case mixing time of the ball of maximum degree D of radius R say. But, yeah, essentially we can make this term small. Okay. And so now it's a question of the spatial mixing effect of two different boundary conditions. And so the first assumption I'll make is -- well, I'm going to cheat first of all and say let's assume that inside the ball the graph is a tree, and then later on I'll tell you how to get around that assumption. Say you have two different boundary conditions and say they differ at just one vertex, what's the effect on the marginal spin at vertex V? Well, it was shown in Kenyon, Mossel and Peres that it's bounded by tanh of beta to the power of R essentially. Regard -- independent of the boundary conditions. Because the extreme case is essentially when you have a free boundary condition. >>: [inaudible]. >> Allan Sly: Yes. Sorry. Good. No one noticed that in the last two times I've used this slide. Including me. >>: [inaudible]. 
>> Allan Sly: Yeah. So this should be minus V and prime here. >>: [inaudible]. >> Allan Sly: I have a bound on it at least. [laughter]. And hopefully a lower bound here. And so what we need -- so changing one vertex has an effect of [inaudible] to the R, and changing K vertices changes it by at most K times tanh of beta to the R. So the expected difference under the two boundary conditions is bounded by the expected number of disagreements on the boundary times tanh of beta to the R. And so the total number of vertices on the boundary is like D times D minus one to the R, or bounded by that, and the chance of a particular vertex having a disagreement is bounded by the maximum chance of a disagreement. And so now we have this D minus one times tanh of beta term, which our hypothesis was that this was less than one, and so by taking R large enough, we can make this constant as small as we like, but like less than a half or so would be good. And, yeah, this is the bound that we want. And okay. So I haven't said what we'd do when it's not a tree, but essentially we use [inaudible] tree of self-avoiding walks construction, and then looking at the boundary condition on these regular trees is like looking at a boundary condition on this tree of self-avoiding walks, but I won't go into that part. But you get this same bound. So if you put the two bounds together and take a maximum over all the vertices, when S is large enough so that the ball inside has mixed well, then the maximum chance of a disagreement is reduced by half. And so when you take the time to be a large enough constant times log N, then the expected number of disagreements is little o of one and you'll have mixed. And you get a lower -- yeah. So that's the upper bound, and the lower bound is standard by Hayes and Sinclair, and there are other easy ways of showing that, too. Okay. So that's essentially the proof for the graphs of maximum degree D. 
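The recursion behind this argument can be written out as a short worked inequality. This is a sketch under assumptions rather than the statement from the slides: the notation $p_t$, the boundary count $d(d-1)^{R-1}$, and the form of the error term $\varepsilon(S)$ are choices made here for illustration, with $\tanh\beta$ the hyperbolic tangent of the inverse temperature.

```latex
% p_t: worst-case disagreement probability of the two coupled chains at time t
p_t \;=\; \max_{v}\ \Pr\bigl[X^{+}_t(v) \neq X^{-}_t(v)\bigr]

% Censoring outside the ball B(v,R) after time T, then waiting time S:
p_{T+S} \;\le\;
  \underbrace{d\,(d-1)^{R-1}}_{\text{boundary size}}
  \Bigl( \underbrace{\tanh^{R}\beta}_{\text{effect of one boundary spin}}
         + \underbrace{\varepsilon(S)}_{\text{ball not yet mixed}} \Bigr)\, p_T

% Since (d-1)\tanh\beta < 1, the first product is
%   \tfrac{d}{d-1}\bigl((d-1)\tanh\beta\bigr)^{R} \to 0 \text{ as } R \to \infty,
% so choose R, then S, with the whole prefactor at most 1/2:
p_{T+S} \;\le\; \tfrac12\, p_T
\quad\Longrightarrow\quad
p_{kS} \;\le\; 2^{-k},
\qquad
\mathbb{E}\bigl[\#\text{disagreements at time } kS\bigr] \;\le\; n\,2^{-k} = o(1)
\ \text{ for } k \ge C\log n .
```

Because R and S are constants depending only on d and beta, the last line gives coupling, and hence mixing, in time of order log n.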
Are there any questions about that before I move on to random graphs? Okay. So now to modify this argument for Erdos-Renyi random graphs we need to take larger balls that are growing with N, and so a large constant times log log N will do. And this is essentially because in a random graph you can have bits that are denser than usual, and so you need a bigger ball in order to establish the kind of spatial mixing result that we need. But in order to then apply the same proof, we need to be able to bound the mixing time inside these balls, which are now growing, and so this essentially means bounding the mixing time on Galton-Watson branching processes with Poisson offspring distribution, and we do this by bounding the exposure of the graph, and so the exposure is, well, it's the minimum over all labelings V1 through to VM of. >>: [inaudible]. >> Allan Sly: Yeah, the cut -- yeah. >>: [inaudible]. >> Allan Sly: The cut width -- >>: The cut width [inaudible]. All one thing [inaudible] know the standard -- >> Allan Sly: Okay. >>: [inaudible]. >> Allan Sly: Okay. So apparently I just got that update now. So this is the cut width of the graph. So you look at all labelings of the vertices, or orderings of the vertices, and take the minimum over orderings of the maximum over I of the number of edges between the set V1 to VI and its complement. And this can be used to bound the mixing time, essentially, and in this case you get an exponential in the cut width as the important term. And so we need to bound this on a Galton-Watson branching process with Poisson offspring distribution, and this can just be done recursively because. >>: [inaudible] which has some [inaudible]. >> Allan Sly: Offspring distribution and M levels. Yeah. So people in -- so not only had the previous audiences [inaudible]. >>: [inaudible]. >> Allan Sly: Yeah. And also the author of the slides, too, I think. But -- >>: [inaudible]. >> Allan Sly: Yeah. Sorry. 
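The cut width just defined can be computed by brute force on small graphs, which may help make the min-over-orderings, max-over-prefixes structure concrete. The sketch below is illustrative only: the function names and the two example graphs are assumptions introduced here, and trying every permutation is only feasible for a handful of vertices.

```python
from itertools import permutations

def ordering_width(edges, order):
    """Largest number of edges crossing between a prefix {v1..vi}
    of the ordering and its complement."""
    pos = {v: i for i, v in enumerate(order)}
    width = 0
    for i in range(len(order) - 1):
        # An edge crosses the cut if exactly one endpoint lies in the prefix.
        crossing = sum(1 for u, v in edges if (pos[u] <= i) != (pos[v] <= i))
        width = max(width, crossing)
    return width

def cut_width(vertices, edges):
    """Minimum over all vertex orderings of the ordering width."""
    return min(ordering_width(edges, order) for order in permutations(vertices))

# Hypothetical examples: a path on 4 vertices and a star with 4 leaves.
path_edges = [(0, 1), (1, 2), (2, 3)]
star_edges = [(0, 1), (0, 2), (0, 3), (0, 4)]
path_cw = cut_width(range(4), path_edges)
star_cw = cut_width(range(5), star_edges)
```

For the star, the best ordering places half of the leaves before the center and half after, so the cut width is about half the degree. This matches the idea of ordering subtrees carefully when bounding the cut width of a tree recursively.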
So -- so it's plus [inaudible] plus a large constant times M, the depth. And you can do this inductively on the depth, so you have a root with a Poisson number of children, each of the children has cut width that's independently stochastically dominated by a constant times M minus one plus a Poisson, and if you kind of put them together and construct a sequence of vertices in the obvious way from these, and do a bunch of calculations, essentially bounding things about Poisson random variables, then -- >>: Do you optimize them in the order. >> Allan Sly: Yes. >>: Or do you take them [inaudible]. >> Allan Sly: No, I mean you order them in increasing or decreasing -- yeah. You take the right ordering of them. So, yeah, if you order the subtrees in increasing order, then, yeah. If the constant's large enough, you get this bound. And then by taking a maximum over all the vertices, this gives you a bound of order log N on log log N, and if you put that back in the bound on the mixing time, that gives you a bound on the mixing time of these local balls, and then if you apply the previous proof you get the result. Okay. So these techniques I guess also apply for the hard core model on bipartite graphs. We couldn't prove it on completely general D regular bipartite graphs, but if the girth is large enough, then we can prove bounds on the mixing time, and also for almost all random D regular bipartite graphs, up to the uniqueness threshold again. Okay. So in the remaining time, I'll tell you a bit about some other work I've been doing. Or done. So I guess last time I was here, I told you that we'd worked on the coloring version of this mixing time for Erdos-Renyi random graphs, and here the previous approach doesn't work because coloring isn't a monotone system. So the censoring lemma doesn't apply, at least in the sense we used it doesn't quite make sense because there's no ordering. 
There's no monotone coupling. [inaudible] showed that if the number of colors grows like log log N then you get polynomial mixing of the Glauber dynamics for colorings of Erdos-Renyi random graphs. They conjectured that with a constant number of colors you should still have polynomial mixing, and we were subsequently able to prove that. >>: [inaudible]. >> Allan Sly: Good question. So the answer is a long way away. We were happy just that it was a constant. But I'll tell you a little bit about what the conjectured picture is for when you should have fast mixing, and it comes from the spin glass community, from people like Mézard, Parisi and Zecchina. And their picture is: if you look at the space of colorings, where two colorings are adjacent if they just differ at a single vertex, then when the average degree is small, the space of colorings should just be one big connected set, and you might expect the Glauber dynamics or local Markov chains to work well. Although this seems very hard to prove. Our results are somewhere back with really low average degrees compared with the number of colors. And then after some threshold, the space of colorings breaks up into exponentially many clusters, and here Markov chain techniques can't be expected to work because the Glauber dynamics won't be ergodic for a start and -- yes, so the -- >>: [inaudible]. >> Allan Sly: Yeah. And the distance between the clusters is -- okay, order log N, so in fact there aren't even really good algorithms to find clustering -- to find colorings in this regime. And then you have the coloring threshold, after which you have no colorings. >>: [inaudible]. >> Allan Sly: Yeah. Sorry. Linear. Yeah. So the reason why I mention this is that this threshold is conjectured to be the reconstruction threshold for colorings on trees, so I'll quickly tell you about what that means. 
So if you have an infinite tree, and the right one here will be the Galton-Watson branching process tree with Poisson offspring distribution, although the actual results would apply equally to the D-ary tree, because these will be asymptotic results for large D and the fluctuations you get in the Poisson for large D are smaller than the other errors in the results. So reconstruction is another sort of spatial mixing, essentially saying that, say you look at the colors at level M down from the root. How much information does this provide you about the color of the root? So if the mutual information between the colors at level M and the color at the root is bounded away from zero as M goes to infinity, you say you have reconstruction, otherwise you have nonreconstruction. So for large Q, the number of colors, and D, Mossel and Peres showed that essentially Q log Q -- well, the degree greater than Q log Q is needed for re-- is sufficient for reconstruction. And this is just by kind of the simplest algorithm that you could do, where you say, well, if you look at a parent and all the colors but one appear amongst its children, then obviously you know that the other color must be the color of the parent. And when you have -- >>: [inaudible]. [laughter]. >> Allan Sly: Sorry about that. >>: [inaudible]. >> Allan Sly: The reason why I mention that was that even though this is quite a simple algorithm, and it's something that you can analyze, and essentially this is what the analysis shows, it turns out to be extremely close to -- well, what the true threshold is, and possibly is the true reconstruction threshold, and figuring out whether or not it is is something I've been thinking about. But definitely up to -- actually I don't think that's the right reconstruction threshold, and at least in the case when you have five colors, it isn't, so it probably isn't in general. But the -- >>: You don't think [inaudible]. >> Allan Sly: Sorry. 
Well, either, actually. But the threshold you -- so this is the threshold you get for when the simple algorithm works. And I don't think that's the right threshold. But it's very close because essentially the difference in the bounds is in the third order term and just replacing a one with a one minus log 2. So, yeah, it's fairly close to the truth. >>: [inaudible]. >> Allan Sly: How do you prove this? So essentially you want to generate inequalities for the expected value of the posterior distributions given the root and create recursions for that using all the conditional independence that you have here. And if you do the calculations in the right way you see that when you're just a bit below this threshold, the information you have just starts to really reduce quite rapidly, because once there are a few colors that you don't know very well, then the picture that you have -- so once you're a bit uncertain about what a color is, then the uncertainty just builds and builds and builds. Yeah. So it's -- yeah. So when you're around this value, if I say just didn't tell you the values of a very small proportion of the colors, you wouldn't be able to reconstruct it. So this is partly because you're a very long way from what's called the Kesten-Stigum bound, which, well, I'll mention in the next slide, which says: if you think of this as a Markov chain going down each of the branches of the tree, and lambda is the second eigenvalue of the transition matrix, then the Kesten-Stigum bound says that you have reconstruction when D lambda squared is greater than one. Now, for colorings, this would say that you have reconstruction when the degree is about Q minus one squared. But actually you have reconstruction when it's about Q log Q. So you're a long way away from the Kesten-Stigum bound. 
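The value of about Q minus one squared quoted above follows from a one-line eigenvalue computation. The worked equations below are a sketch using notation introduced here (the matrix $M$, the all-ones matrix $J$, and lowercase $q$, $d$), and they assume the standard broadcast description of a uniform proper coloring on a tree, in which each child takes a uniformly random color different from its parent's.

```latex
% Transition matrix of the coloring channel on q colors:
% a child's color is uniform over the q-1 colors different from its parent's.
M \;=\; \frac{1}{q-1}\,\bigl(J - I\bigr),
\qquad J = \text{all-ones } q\times q \text{ matrix}.

% J has eigenvalues q (once) and 0 (q-1 times), so M has eigenvalues
\lambda_1 = \frac{q-1}{q-1} = 1,
\qquad
\lambda_2 = \dots = \lambda_q = -\frac{1}{q-1}.

% The bound d\,\lambda^2 > 1 with \lambda = \lambda_2 therefore reads
d\,\lambda_2^{2} \;=\; \frac{d}{(q-1)^{2}} \;>\; 1
\quad\Longleftrightarrow\quad
d \;>\; (q-1)^{2}.
```

Since $q \log q$ is much smaller than $(q-1)^2$ for large $q$, reconstruction for colorings sets in far below the degree at which this second-eigenvalue criterion applies.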
Once you start introducing a bit of noise and you can't do this kind of recursive, simple -- sorry to keep calling it simple, but [laughter] -- but if you keep using this reconstruction algorithm, introducing a little bit of noise means that the information will start decaying really quickly. I talked about these results when I was here in summer, so I guess I'll just say that they confirmed large parts of conjectures by Mézard and Montanari from work on spin glasses using numerical simulations, and essentially the Kesten-Stigum bound is tight when Q equals three and the degree is large and not tight when Q is greater than or equal to five. And we can also say what the asymptotics are for large degrees. Okay. So one of the themes of this talk was essentially that understanding what happens on trees, or Gibbs measures on trees, tells you about Gibbs measures on general graphs, and so I thought I'd tell you an example which says the opposite. Essentially that there exists a Gibbs measure, which is basically an anti-ferromagnetic Potts model with an extra special state, where you have uniqueness on the D regular tree but not on a specially constructed D regular graph. And so this is essentially cliques joined together in the right way. And this was a counterexample to a conjecture that Elchanan made that the regular tree should always be the extreme case for spatial mixing and, yeah, it turns out to not always be true. So I probably shouldn't talk too much about counterexamples to my advisor's conjectures. [laughter]. Unless you have any -- >>: He started out by [inaudible] [laughter]. One question, it's still open for [inaudible]. >> Allan Sly: Yes. >>: Monotone or [inaudible]. >> Allan Sly: Monotone. Yeah. >>: [inaudible]. >>: [inaudible]. >> Allan Sly: Yeah. >>: [inaudible]. >> Allan Sly: Yeah. >>: General monotone system is [inaudible]. 
>> Allan Sly: And it's also still open if you replace uniqueness with strong spatial mixing. And the kind of examples here like this one wouldn't apply in that case. Okay? I've also done work on reconstruction from another point of view, so here's a problem -- this was with Guy Bresler and Elchanan -- so this time I'll tell you the values of the Markov random field but not the underlying graph. And so you want to reconstruct what the graph is. Now, if I just give you one sample of it, it is just basically zeros and ones, so you wouldn't be able to say anything. But if I give you independent copies drawn from the stationary distribution, how many do you need, and how can you reconstruct the underlying graph? Just using simple combinatorial properties of Markov random fields, for bounded degree and with some mild nondegeneracy conditions, we showed essentially log N samples is enough and that you could do this with a rigorous polynomial bound on the running time in terms of the number of vertices. I'm generally interested in mixing time problems, so another -- yeah, so the Glauber dynamics can be used generally to sample from complicated, high dimensional distributions, and it turns out in practice it actually is used by people, and so sociologists look at what are called exponential random graphs, and these are models for social networks, and the idea is to incorporate more triangles than you would otherwise have in the graph, so this is basically: if you have a couple of friends, they're a lot more likely to be friends than just two random people in the population. And they actually use this model and actually use the Glauber dynamics to sample from it. And so this is -- >>: A sample [inaudible]. >> Allan Sly: And so it's a distribution on graphs. 
And they want to be able to sample from the graphs in order to, first of all, see what they look like, and also calculate things and be able to do statistical inference on them. But we showed that in the asymptotics, as the number of vertices grows, we could work out when you have fast and slow mixing, and what you have is that either you have fast mixing, in which case in the limit these graphs are essentially Erdos-Renyi random graphs and so you have no more triangles than you would otherwise expect, or the Glauber dynamics takes exponentially long to mix. So in either case there are real problems with it. >>: The Glauber dynamics [inaudible]. >> Allan Sly: Yes. So one step of the Glauber dynamics is essentially to choose a pair of vertices, work out the conditional probability that an edge should be in the graph, and either -- choose to place or not place an edge there according to that probability. Yeah? And so we can explicitly say when it's fast mixing and slow mixing. >>: So would it make any difference if you [inaudible] algorithm [inaudible]. >> Allan Sly: No, because there are exponential bottlenecks that cause the slow mixing. >>: So this shows that the results of the sociologists are invalid? >> Allan Sly: So it would not give me a lot of faith in them. I mean, maybe for certain values of the parameters and small values of N they say something, but generally -- so generally what happens with this is, the probability is just like you put the number of triangles into the Hamiltonian, and so you weight graphs that have more triangles more heavily, properly normalized, and so what's the most efficient way to add more triangles? It's not by coming up with an intricate graph that has lots of triangles, it's by adding more edges and still keeping them really random. 
So basically what you get is an Erdos-Renyi random graph with a higher average degree. Maybe when N is small, you get some more structure, but -- >>: [inaudible]. >> Allan Sly: Sorry? >>: [inaudible] bottleneck. >> Allan Sly: Okay. So essentially we come up with a function, so this will be P between zero and one, and if you have a fixed point of this equation what it means is that if you start off with an Erdos-Renyi random graph G(n, p) and update an edge, then the probability that you place an edge will be P. And so when there are multiple solutions to this, it's the slow mixing case, and it means that if you start off with an Erdos-Renyi random graph at P1 or say P2 here, then it would stay very close in distribution to either of these values. So you could generally have either very sparse graphs or very dense graphs, depending on what this function looks like, as kind of metastable states for the distribution. >>: So the [inaudible] two [inaudible]. >> Allan Sly: I mean, in general we can't say a lot about what happens in this slow mixing state, although -- >>: Because if it was really just like this, it wouldn't be so much -- >> Allan Sly: No. >>: [inaudible] already interested in something like that. >> Allan Sly: Yeah. No. And that's probably what it's like. Although still -- we can't prove that it looks like that and that you don't have more inhomogeneous graphs where most of the mass of the distribution really lies. But if you did believe that it was like that, then you may as well just take an Erdos-Renyi random graph with these probabilities. Because they'll be very similar to these in the sense that if you count the number of any small type of subgraph, they'll be close to what you would expect in an Erdos-Renyi random graph. >>: [inaudible]. >> Allan Sly: Yeah. >>: [inaudible]. >> Allan Sly: Yeah. 
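The edge update and the fixed-point picture described here can be sketched for the simplest edge-plus-triangle model. Everything specific below is an assumption made for illustration and is not taken from the talk: the Hamiltonian normalization (an edge contributes `beta1` plus `beta2` times the number of common neighbors divided by n), the function names, and the grid search for fixed points.

```python
import math
import random

def edge_update_prob(adj, i, j, beta1, beta2):
    """Conditional probability that edge {i, j} is present given the rest
    of the graph, under the assumed edge-plus-triangle Hamiltonian."""
    n = len(adj)
    common = sum(1 for k in range(n)
                 if k != i and k != j and adj[i][k] and adj[j][k])
    return 1.0 / (1.0 + math.exp(-(beta1 + beta2 * common / n)))

def glauber_edge_step(adj, beta1, beta2, rng):
    """Pick a uniform pair of vertices and resample that edge."""
    i, j = rng.sample(range(len(adj)), 2)
    present = rng.random() < edge_update_prob(adj, i, j, beta1, beta2)
    adj[i][j] = adj[j][i] = present

def phi(p, beta1, beta2):
    """Update probability when the graph looks like G(n, p): a pair of
    vertices then has about n * p^2 common neighbors."""
    return 1.0 / (1.0 + math.exp(-(beta1 + beta2 * p * p)))

def fixed_points(beta1, beta2, grid=10000):
    """Approximate solutions of phi(p) = p on [0, 1] via sign changes
    of phi(p) - p on a grid (roots landing exactly on grid points are missed)."""
    roots = []
    for k in range(grid):
        a, b = k / grid, (k + 1) / grid
        ga, gb = phi(a, beta1, beta2) - a, phi(b, beta1, beta2) - b
        if ga * gb < 0:
            roots.append((a + b) / 2)
    return roots

one_root = fixed_points(-1.0, 0.0)     # no triangle term: plain G(n, p)
three_roots = fixed_points(-4.0, 8.0)  # strong triangle term
```

With these hypothetical parameters the second call finds a sparse and a dense solution with a third in between, which illustrates the multiple-solution situation associated above with metastable states and slow mixing.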
>>: Presents some [inaudible] by which, you know, those second order friends might get to, friends first order. >> Allan Sly: Yeah. >>: [inaudible]. [laughter]. >> Allan Sly: No, just space reasons. So, yeah, so I've seen talks on this where they say, oh, well, we take the graph of the 9/11 terrorist plotters, so the colors here indicate which plane they're on, and then say, well, this is what we knew before the attacks, can we infer extra edges in the graph? And so they say that works well. But maybe it works well because the graphs aren't actually distributed according to the exponential random graph distribution, so, yeah, so these just say which flight they're on, and so I think that really high degree vertex was the lead guy. And finally there's also work with Eyal on cutoff problems, so I'm sure you've heard what cutoff is, just abrupt convergence to the stationary distribution in some narrow window, and over the summer we proved cutoff for simple random walk and non-backtracking random walk on almost all random D regular graphs, and also for the Ising model when you have strong spatial mixing on the [inaudible]. And I've also done work on continuous probability, but I think it's -- yeah? Thank you. [applause]. >> Yuval Peres: Any other questions? >> Allan Sly: Yes? >>: So going back to the Ising model on your first results, you calculated the threshold for aspects of -- >> Allan Sly: Yeah. >>: That was general graphs. Before that, were they -- >> Allan Sly: Yes. >>: [inaudible]. Now, so if you fix beta then you have monotonicity of the threshold in D. But can you say anything more precise, stochastic monotonicity of the mixing time in D? >> Allan Sly: No. [laughter]. We don't have -- I mean, this is N to the theta of one on log log N. There will be some dependence. So I should have said there'll be some dependence on D and beta in here exact -- but we don't have particularly good control over what that is. 
And -- >>: No, it couldn't be by calculation. >> Allan Sly: Yeah. And -- yeah. And we don't have any general arguments about that either. I think -- I think generally that's pretty hard. >>: All right. [inaudible] general question when you say coupling [inaudible] always. >>: This is a special case which [inaudible]. >> Allan Sly: Yeah. Yeah. Don't know. Sorry. >> Yuval Peres: Any other questions? [applause]