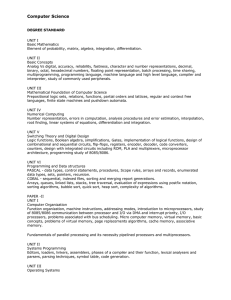

CS2110: SW Development Methods Analysis of Algorithms

advertisement

CS2110: SW Development Methods

Analysis of Algorithms

• Reading: Chapter 5 of MSD text

– Except Section 5.5 on recursion (next unit)

Goals for this Unit

• Begin a focus on data structures and algorithms

• Understand the nature of the performance of

algorithms

• Understand how we measure performance

– Used to describe performance of various data

structures

• Begin to see the role of algorithms in the study

of Computer Science

• Note: CS2110 starts the study of this

– Continued in CS2150 and CS4102

Algorithm

• An algorithm is

– a detailed step-by-step method for

– solving a problem

• Computer algorithms

• Properties of algorithms

– Steps are precisely stated

– Determinism: based on inputs, previous steps

– Algorithm terminates

– Also: correctness, generality, efficiency

C_M_L_X_T_

This is an important CS term for

this unit. Can you guess?

Choose a the set of letters below

(their order is jumbled).

1.

2.

3.

4.

ROUFO

UBIRA

OUMAJ

IPEYO

Data Structures

•

Some definitions of data structure:

A. a scheme for organizing related pieces of

information

B. A logical relationship among data elements that is

designed to support specific data manipulation

functions

C. Contiguous memory used to hold an ordered

collection of fields of different types

•

•

(FYI, I think B is the best one of the above.)

Examples: ArrayList, HashSet, trees, tables,

stacks, queues

–

Is a PlayList an example of this?

Abstract Data Types

• Remember data abstraction?

• Important CS concept:

Abstract Data Type (ADT)

– a model of data items stored, and

– a set of operations that manipulate them

• ADT: no implementation implied

– A particular data structure implements an ADT

• Remember: “data structure” defines how it’s

implemented. A data structure is not abstract.

Clicker Q: Java and ADTs

• Java’s design reflects the idea of ADTs

• Question: Which Java language-feature

most closely supports programming using

the idea of ADTs? Why?

1.

2.

3.

4.

A superclass

An abstract class

An interface

A function object (e.g. Comparator)

Why do Java interfaces “match” ADTs?

1. Interfaces don’t have data fields,

so they’re not a good match!

2. Interfaces are good abstractions.

3. Inheritance of interface is

important for ADTs.

4. An interface can be used without

knowing how the concrete class is

implemented.

ADT operations

• ADTs define operations and a given data structure

implements these

– Think and design using ADTs, then code using data structures

• There may more than one data structure that

implements the ADT we need

– Advantages and disadvantages

– Efficiency / performance is often a major consideration

• Question: how do we compare efficiency of

implementations?

• Answer: We compare the algorithms that implement the

operations.

– In Java: in the concrete class, how data is stored, how methods

are coded

Why Not Just Time Algorithms?

Why Not Just Time Algorithms?

• We want a measure of work that gives us

a direct measure of the efficiency of the

algorithm

– independent of computer, programming

language, programmer, and other

implementation details.

– Usually depending on the size of the input

– Also often dependent on the nature of the

input

• Best-case, worst-case, average

Analysis of Algorithms

• Use mathematics as our tool for analyzing

algorithm performance

– Measure the algorithm itself, its nature

– Not its implementation or its execution

• Need to count something

– Cost or number of steps is a function of input

size n: e.g. for input size n, cost is f(n)

– Count all steps in an algorithm? (Hopefully

avoid this!)

Counting Operations

• Strategy: choose one operation or one section

of code such that

– The total work is always roughly proportional to how

often that’s done

• So we’ll just count:

– An algorithm’s “basic operation”

– Or, an algorithms’ “critical section”

• Sometimes the basic operation is some action

that’s fundamentally central to how the

algorithm works

– Example: Search a List for a target involves

comparing each list-item to the target.

– The comparison operation is “fundamental.”

Asymptotic Analysis

• Given some formula f(n) for the count/cost of

some thing based on the input size

–

–

–

–

–

We’re going to focus on its “order”

f(n) = 2n ---> Exponential function

f(n) = 100n2 + 50n + 7 ---> Quadratic function

f(n) = 30 n lg n – 10 ---> Log-linear function

f(n) = 1000n ---> Linear function

• These functions grow at different rates

– As inputs get larger, the amount they increase differs

• “Asymptotic” – how do things change as the

input size n gets larger?

Comparison of Growth Rates

Comparison of Growth Rates (2)

250

f(n) = n

f(n) = log(n)

f(n) = n log(n)

f(n) = n^2

f(n) = n^3

f(n) = 2^n

0

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20

Comparison of Growth Rates (3)

1000

f(n) = n

f(n) = log(n)

f(n) = n log(n)

f(n) = n^2

f(n) = n^3

f(n) = 2^n

0

1

3

5

7

9

11

13

15

17

19

Order Classes

• For a given algorithm, we count something:

– f(n) = 100n2 + 50n + 7 ---> Quadratic function

– How different is this than this?

f(n) = 20n2 + 7n + 2

– For large inputs?

• Order class: a “label” for all functions with the

same highest-order term

– Label form: O(n2) or Θ(n2) or a few others

– “Big-Oh” used most often

When Does this Matter?

• Size of input matters a lot!

– For small inputs, we care a lot less

– But what’s a big input?

• Hard to know. For some algorithms, smaller than

you think!

Common Order Classes

• Order classes group “equivalently” efficient

algorithms

– O(1) – constant time! Input size doesn’t matter

– O(lg n) – logarithmic time. Very efficient. E.g. binary

search (after sorting)

– O(n) – linear time

– O(n lg n) – log-linear time. E.g. best sorting

algorithms

– O(n2) – quadratic time. E.g. poorer sorting algorithms

– O(n3) – cubic time

– ….

– O(2n) – exponential time. Many important problems,

often about optimization.

Order Classes Details

• What does the label mean? O(n2)

– Set of all functions that grow at the same rate as n2

or more slowly

– I.e. as efficient as any “n2” or more efficient,

but no worse

– So this is an upper-bound on how inefficient an

algorithm can be

• Usage: We might say: Algorithm A is O(n2)

– Means Algorithm A’s efficiency grows like a quadratic

algorithm or grows more slowly. (As good or better)

• What about that other label, Θ(n2)?

– Set of all functions that grow at exactly the same rate

– A more precise bound

Earlier we said this about Maps:

• “Lookup values for a key” -- an important

task

– Java has two concrete classes that implement

Map interface

• Efficiency trade-offs

– HashMap has O(1) lookup cost

– TreeMap is O(lg n) which is still very good

– But TreeMap can print in sorted order in O(n),

and HashMap can’t.

Maximum Sum Subvector

• Input: vector X of N floats (pos. and neg.)

• Output: maximum sum of any contigous

subvector X[i]…X[j]

31 -41 59 26 -53 58 97 -93 -23 84

9/1/02

24

One Solution

maxSoFar = 0

for i = 1 to N

for j = i to N {

sum = 0

for k = i to j {

sum += X[k]

}

maxSoFar = max(maxSoFar, sum)

}

• Complexity? O(n3) You can do better!

9/1/02

25

Two Important Problems

• Search

– Given a list of items and a target value

– Find if/where it is in the list

• Return special value if not there (“sentinel” value)

– Note we’ve specified this at an abstract level

• Haven’t said how to implement this

• Is this specification complete? Why not?

• Sorting

– Given a list of items

– Re-arrange them in some non-decreasing order

• With solutions to these two, we can do many useful

things!

• What to count for complexity analysis? For both, the

basic operation is:

comparing two list-elements

I understand how Binary Search works

1.

2.

3.

4.

5.

Strongly Agree

Agree

Neutral

Disagree

Strongly Disagree

I can explain why Bin Search is efficient

1.

2.

3.

4.

5.

Strongly Agree

Agree

Neutral

Disagree

Strongly Disagree

Sequential Search Algorithm

• Sequential Search

– AKA linear search

– Look through the list until we reach the end

or find the target

– Best-case? Worst-case?

– Order class: O(n)

– Advantages: simple to code, no assumptions

about list

Binary Search Algorithm

• Binary Search

– Input list must be sorted

– Strategy: Eliminate about half items left with one

comparison

• Look in the middle

• If target larger, must be in the 2nd half

• If target smaller, must be in the 1st half

• Complexity: O(lg n)

• Must sort list first, but…

• Much more efficient than sequential search

– Especially if search is done many times (sort once,

search many times)

• Note: Java provides static binarySearch()

Class Activity

• We’ll give you some binary search inputs

– Array of int values, plus a target to find

– You tell us

• what index is returned, and

• the sequence of index values that were compared

to the target to get that answer

• Work in two’s or three’s, and we’ll call

on groups to explain

Binary Search Example #1

Input: -1 4 5 11 13 and target 4

Index returned is?

Vote on indices (positions) compared to

target-value:

1.

2.

3.

4.

5.

-1 4

0 1

5 -1 4

2 0 1

other

Binary Search Example #2

Input: -5 -2 -1 4 5 11 13 14 17 18

and target 3

Index returned is?

Vote on indices compared below:

1.

2.

3.

4.

5234

4123

4234

other

Binary Search Example #3

Input: -1 4 5 11 13 and target 13

Index returned is?

Vote on indices compared below:

1.

2.

3.

4.

0 1 2 3 4

2 4

2 3 4

other

Note on last examples

• Input size doubled from 5 to 10

• How did the worst-case number of

comparisons change?

– Also double?

– Something else

• Note binary search is O(lg n)

– What does this imply here?

How to Sort?

• Many sorting algorithms have been found!

– Problem is a case-study in algorithm design

– You’ll see more of these in CS4102

• Some “straightforward” sorts

– Insertion Sort, Selection Sort, Bubble Sort

– O(n2)

• More efficient sorts

– Quicksort, mergesort, heapsort

– O(n lg n)

• Note: these are for sorting in memory, not on

disk

Sorting in CS2110

• sort() in Collections and Arrays class

– “under the hood”, it’s something called

mergesort

– Θ(n lg n) worst-case -- as good as we can do

– Use it! But we won’t explain how it works

(yet)

• Later we’ll see how mergesort works

Discussion Question

• Binary search is faster than sequential

search

– But extra cost! Must sort the list first!

• When do you think it’s worth it?

Reminder Slide: Order Classes

• For a given algorithm, we count something:

– f(n) = 100n2 + 50n + 7 ---> Quadratic function

– How different is this than this?

f(n) = 20n2 + 7n + 2

– For large inputs?

• Order class: a “label” for all functions with

the same highest-order term

– Label form: O(n2) or Θ(n2) or a few others

– “Big-Oh” used most often

Reminders: Input and Performance

• Input matters:

– First, we said for small size of inputs, often no

difference

• Also, often focus on the worst-case input

– Why?

• Sometimes interested in the average-case

input

– Why?

• Also the best-case, but do we care?

Reminder: What to Count

• Often count some “basic operation”

• Or, we count a “critical section”

• Examples:

– The block of code most deeply nested in a

nested set of loops

– An operation like comparison in sorting

– An expensive operation like multiplication or

database query

Bigger Issues in Complexity

• Many practical problems seem to require

exponential time

– Θ(2n)

– This grows much faster more quickly than any

polynomial

– Such problems are called intractable problems

– A famous subset of these are called

NP-complete problems

• Most famous theoretical question in CS:

– Is it really impossible to find a polynomial algorithm

for the NP-complete problems? (Does P=NP?)

Example

• Weighted-graph problems

• Find the cheapest way to connect things?

– O(n2)

• Find the shortest path between two points? All

pairs of points?

– O(n2) or better

• But, find the shortest path that visits all points in

the graph?

– Best we can do is exponential, Θ(2n) or worse

– Is it impossible to do better? No one knows!

Summary and Major Points

• When we measure algorithm complexity:

– Base this on size of input

– Count some basic operation or how often a critical

section is executed

– Get a formula f(n) for this

– Then we think about it in terms of its “label”, the

order class O( f(n) )

• “Big-Oh” means as efficient as “class f(n) or better

– We usually use order-class to compare algorithms

– We can measure worst-case, average-case

What’s Next?

• Why this topic now?

• Reminder:

– Data structures are design choices that

implement ADTs

– How to choose? Why is one better?

– Often efficiency of their methods

• (Design trade-offs)