Naïve Bayes Classification

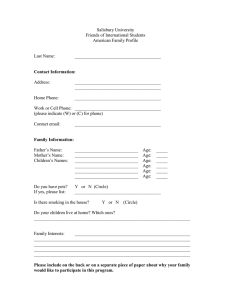

CS 6243 Machine Learning

Modified from the slides by Dr. Raymond J. Mooney

http://www.cs.utexas.edu/~mooney/cs391L/

Probability Basics

• Definition (informal)

– Probabilities are numbers assigned to events that indicate “how likely” it is that the event will occur when a random experiment is performed

– A probability law for a random experiment is a rule that assigns probabilities to the events in the experiment

– The sample space S of a random experiment is the set of all possible outcomes

Probabilistic Calculus

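• All probabilities are between 0 and 1:

$0 \le P(A) \le 1$

• If A, B are mutually exclusive:

– $P(A \cup B) = P(A) + P(B)$

• Thus: $P(\mathrm{not}(A)) = P(A^c) = 1 - P(A)$

[Figure: Venn diagram of the sample space S containing two events A and B]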

Conditional probability

• The joint probability of two events A and B, written P(A ∩ B) or simply P(A, B), is the probability that A and B both occur.

• The conditional probability P(A | B) is the probability that A occurs given that B has occurred.

$P(A \mid B) = P(A \cap B) / P(B)$

$\Leftrightarrow P(A \cap B) = P(A \mid B)\, P(B)$

$\Leftrightarrow P(A \cap B) = P(B \mid A)\, P(A)$

Example

• Roll a die

– If I tell you the number is less than 4

– What is the probability of an even number?

• $P(d = \text{even} \mid d < 4) = P(d = \text{even} \cap d < 4) / P(d < 4)$

$= P(d = 2) / P(d = 1, 2, \text{or } 3) = (1/6) / (3/6) = 1/3$
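The die example can be checked by enumerating the sample space. The following is a minimal sketch, assuming a fair six-sided die; the helper names `prob` and `cond_prob` are illustrative and not part of the original slides.

```python
from fractions import Fraction

# Sample space of one roll of a fair six-sided die (outcomes assumed equally likely).
OUTCOMES = range(1, 7)


def prob(event):
    """P(event), where event is a predicate over single outcomes."""
    favourable = sum(1 for d in OUTCOMES if event(d))
    return Fraction(favourable, len(OUTCOMES))


def cond_prob(event_a, event_b):
    """P(A | B) = P(A and B) / P(B); requires P(B) > 0."""
    joint = prob(lambda d: event_a(d) and event_b(d))
    return joint / prob(event_b)


def is_even(d):
    return d % 2 == 0


def less_than_4(d):
    return d < 4


p_even_given_lt4 = cond_prob(is_even, less_than_4)
print(p_even_given_lt4)  # 1/3
```

Exact fractions are used so the result matches the hand calculation without rounding.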

Independence

• A and B are independent iff:

$P(A \mid B) = P(A)$

$P(B \mid A) = P(B)$

These two constraints are logically equivalent.

• Therefore, if A and B are independent:

$P(A \mid B) = \dfrac{P(A \cap B)}{P(B)} = P(A)$

$P(A \cap B) = P(A)\, P(B)$

Examples

• Are the events d = even and d < 4 independent?

– P(d = even and d < 4) = 1/6

– P(d = even) = ½

– P(d < 4) = ½

– ½ * ½ = ¼ > 1/6, so the two events are not independent

• If your die actually has 8 faces, will d = even and d < 5 be independent?

• Are “even in first roll” and “even in second roll” independent?

• For a playing card, are the suit and rank independent?
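The first two questions can be explored by brute force. This sketch assumes fair dice with faces numbered 1 to n; the function `independent` is a hypothetical helper that tests whether P(A and B) equals P(A) P(B).

```python
from fractions import Fraction


def independent(n_faces, event_a, event_b):
    """Check P(A and B) == P(A) * P(B) on a fair die with faces 1..n_faces."""
    outcomes = range(1, n_faces + 1)

    def prob(event):
        return Fraction(sum(1 for d in outcomes if event(d)), n_faces)

    joint = prob(lambda d: event_a(d) and event_b(d))
    return joint == prob(event_a) * prob(event_b)


def is_even(d):
    return d % 2 == 0


six_faces = independent(6, is_even, lambda d: d < 4)    # compares 1/6 with 1/4
eight_faces = independent(8, is_even, lambda d: d < 5)  # compares 2/8 with 1/4
print(six_faces, eight_faces)  # False True
```

Under these assumptions the 8-face case is independent because exactly half of the faces below 5 are even, which is the same proportion as on the whole die.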

Theorem of total probability

• Let B1, B2, …, BN be mutually exclusive events whose union equals the sample space S. We refer to these sets as a partition of S.

• An event A can be represented as:

$A = A \cap S = A \cap (B_1 \cup B_2 \cup \dots \cup B_N) = (A \cap B_1) \cup (A \cap B_2) \cup \dots \cup (A \cap B_N)$

• Since B1, B2, …, BN are mutually exclusive:

$P(A) = P(A \cap B_1) + P(A \cap B_2) + \dots + P(A \cap B_N)$ (marginalization)

• And therefore:

$P(A) = P(A \mid B_1) P(B_1) + P(A \mid B_2) P(B_2) + \dots + P(A \mid B_N) P(B_N) = \sum_{i=1}^{N} P(A \mid B_i)\, P(B_i)$ (exhaustive conditionalization)

Example

• A loaded die:

– P(6) = 0.5

– P(1) = … = P(5) = 0.1

• Probability of an even number?

P(even) = P(even | d < 6) * P(d < 6) + P(even | d = 6) * P(d = 6)

= 2/5 * 0.5 + 1 * 0.5

= 0.7
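As a check on the loaded-die calculation, the sketch below computes P(even) twice: once through the partition {d < 6, d = 6} and once by summing face probabilities directly. The helper names are illustrative only.

```python
from fractions import Fraction

# Loaded die from the example: P(6) = 0.5, P(1) = ... = P(5) = 0.1.
P_FACE = {face: Fraction(1, 10) for face in range(1, 6)}
P_FACE[6] = Fraction(1, 2)


def prob(event):
    """Sum the probabilities of the faces that satisfy the event."""
    return sum(p for face, p in P_FACE.items() if event(face))


def cond_prob(event_a, event_b):
    return prob(lambda f: event_a(f) and event_b(f)) / prob(event_b)


def is_even(face):
    return face % 2 == 0


# Partition of the sample space: {d < 6} and {d = 6}.
partition = [lambda f: f < 6, lambda f: f == 6]

p_even_total = sum(cond_prob(is_even, b) * prob(b) for b in partition)
p_even_direct = prob(is_even)
print(p_even_total, p_even_direct)  # 7/10 7/10
```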

Another example

• A box of dice:

– 99% fair

– 1% loaded, with P(6) = 0.5 and P(1) = … = P(5) = 0.1

• Randomly pick a die and roll it. What is P(6)?

– P(6) = P(6 | F) * P(F) + P(6 | L) * P(L)

= 1/6 * 0.99 + 0.5 * 0.01 = 0.17
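The same total-probability sum for the box of dice, written out with exact fractions. Variable names are illustrative.

```python
from fractions import Fraction

# Partition of the box: a die is either fair (F) or loaded (L).
p_fair, p_loaded = Fraction(99, 100), Fraction(1, 100)
p_six_given_fair = Fraction(1, 6)
p_six_given_loaded = Fraction(1, 2)

# P(6) = P(6 | F) P(F) + P(6 | L) P(L)
p_six = p_six_given_fair * p_fair + p_six_given_loaded * p_loaded
print(p_six, float(p_six))  # 17/100 0.17
```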

Bayes theorem

• $P(A \cap B) = P(B)\, P(A \mid B) = P(A)\, P(B \mid A)$

$\Rightarrow P(B \mid A) = \dfrac{P(A \mid B)\, P(B)}{P(A)}$

– P(B | A): posterior probability

– P(A | B): conditional probability (likelihood)

– P(B): prior of B

– P(A): prior of A (normalizing constant)

• This is known as Bayes Theorem or Bayes Rule, and is one of the most useful relations in probability and statistics.

• Bayes Theorem is the fundamental relation in Statistical Pattern Recognition.

Bayes theorem (cont’d)

• Given B1, B2, …, BN, a partition of the sample space S. Suppose that event A occurs; what is the probability of event Bj?

$P(B_j \mid A) = \dfrac{P(A \mid B_j)\, P(B_j)}{P(A)} = \dfrac{P(A \mid B_j)\, P(B_j)}{\sum_{k=1}^{N} P(A \mid B_k)\, P(B_k)}$

– P(Bj | A): posterior probability

– P(A | Bj): likelihood

– P(Bj): prior of Bj

– P(A): normalizing constant, expanded using the theorem of total probability

– Bj: different models / hypotheses

• Having observed A, should you choose the model that maximizes P(Bj | A) or P(A | Bj)? It depends on how much you know about Bj!

Example

• A test for a rare disease claims that it will report positive for 99.5% of people with the disease, and negative for 99.9% of those without it.

• The disease is present in the population at 1 in 100,000.

• What is P(disease | positive test)?

– P(D|+) = P(+|D)P(D)/P(+) ≈ 0.01

• What is P(disease | negative test)?

– P(D|-) = P(-|D)P(D)/P(-) ≈ 5e-8
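The two posteriors follow from Bayes rule with P(+) and P(-) expanded by total probability. The sketch below assumes the stated rates are exact; `posterior` is a hypothetical helper for a two-hypothesis problem.

```python
def posterior(prior, p_obs_given_h, p_obs_given_not_h):
    """P(H | obs) by Bayes rule, with P(obs) from the theorem of total probability."""
    evidence = p_obs_given_h * prior + p_obs_given_not_h * (1.0 - prior)
    return p_obs_given_h * prior / evidence


p_disease = 1 / 100_000
sensitivity = 0.995   # P(+ | D)
specificity = 0.999   # P(- | not D)

# P(D | +): the observation is a positive test.
p_d_given_pos = posterior(p_disease, sensitivity, 1.0 - specificity)
# P(D | -): the observation is a negative test.
p_d_given_neg = posterior(p_disease, 1.0 - sensitivity, specificity)

print(p_d_given_pos)  # roughly 0.0099, i.e. about 1%
print(p_d_given_neg)  # roughly 5e-8
```

Even with a highly accurate test, the posterior after a positive result stays near 1% because the prior of 1 in 100,000 is so small.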

Joint Distribution

• The joint probability distribution for a set of random variables X1,…,Xn gives the probability of every combination of values: P(X1,…,Xn). If all variables are discrete with v values each, this is an n-dimensional array with v^n entries, and all v^n entries must sum to 1.

positive:

| | circle | square |
|---|---|---|
| red | 0.20 | 0.02 |
| blue | 0.02 | 0.01 |

negative:

| | circle | square |
|---|---|---|
| red | 0.05 | 0.30 |
| blue | 0.20 | 0.20 |

• The probability of all possible conjunctions (assignments of values to some subset of variables) can be calculated by summing the appropriate subset of values from the joint distribution.

$P(\text{red} \wedge \text{circle}) = 0.20 + 0.05 = 0.25$

$P(\text{red}) = 0.20 + 0.02 + 0.05 + 0.30 = 0.57$

• Therefore, all conditional probabilities can also be calculated.

$P(\text{positive} \mid \text{red} \wedge \text{circle}) = \dfrac{P(\text{positive} \wedge \text{red} \wedge \text{circle})}{P(\text{red} \wedge \text{circle})} = \dfrac{0.20}{0.25} = 0.80$
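The marginal and conditional computations can be reproduced by summing entries of the joint table. This is a minimal sketch; the dictionary layout and the helpers `prob` and `cond_prob` are assumptions made for illustration.

```python
FIELDS = ("category", "color", "shape")

# Joint distribution P(category, color, shape) from the two tables.
JOINT = {
    ("positive", "red", "circle"): 0.20,
    ("positive", "red", "square"): 0.02,
    ("positive", "blue", "circle"): 0.02,
    ("positive", "blue", "square"): 0.01,
    ("negative", "red", "circle"): 0.05,
    ("negative", "red", "square"): 0.30,
    ("negative", "blue", "circle"): 0.20,
    ("negative", "blue", "square"): 0.20,
}


def prob(**fixed):
    """Sum the joint entries consistent with the given variable assignments."""
    total = 0.0
    for assignment, p in JOINT.items():
        row = dict(zip(FIELDS, assignment))
        if all(row[name] == value for name, value in fixed.items()):
            total += p
    return total


def cond_prob(target, given):
    """P(target | given), both passed as dicts of variable assignments."""
    return prob(**target, **given) / prob(**given)


p_red_circle = prob(color="red", shape="circle")
p_red = prob(color="red")
p_pos_given_red_circle = cond_prob(
    {"category": "positive"}, {"color": "red", "shape": "circle"}
)
print(round(p_red_circle, 2), round(p_red, 2), round(p_pos_given_red_circle, 2))
# 0.25 0.57 0.8
```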

Probabilistic Classification

• Let Y be the random variable for the class, which takes values {y1,y2,…,ym}.

• Let X be the random variable describing an instance consisting of a vector of values for n features <X1,X2,…,Xn>; let xk be a possible value for X and xij a possible value for Xi.

• For classification, we need to compute P(Y=yi | X=xk) for i=1…m.

• However, given no other assumptions, this requires a table giving the probability of each category for each possible instance in the instance space, which is impossible to accurately estimate from a reasonably-sized training set.

– Assuming Y and all Xi are binary, we need 2^n entries to specify P(Y=pos | X=xk) for each of the 2^n possible xk’s, since P(Y=neg | X=xk) = 1 – P(Y=pos | X=xk)

– Compared to 2^(n+1) – 1 entries for the joint distribution P(Y,X1,X2,…,Xn)

Bayesian Categorization

• Determine category of xk by determining for each yi

$P(Y = y_i \mid X = x_k) = \dfrac{P(X = x_k \mid Y = y_i)\, P(Y = y_i)}{P(X = x_k)}$

• P(X=xk) can be determined since categories are complete and disjoint.

$\sum_{i=1}^{m} P(Y = y_i \mid X = x_k) = \sum_{i=1}^{m} \dfrac{P(X = x_k \mid Y = y_i)\, P(Y = y_i)}{P(X = x_k)} = 1$

$P(X = x_k) = \sum_{i=1}^{m} P(X = x_k \mid Y = y_i)\, P(Y = y_i)$

Bayesian Categorization (cont.)

• Need to know:

– Priors: P(Y=yi)

– Conditionals: P(X=xk | Y=yi)

• P(Y=yi) are easily estimated from data.

– If ni of the examples in D are in yi then P(Y=yi) = ni / |D|

• Too many possible instances (e.g. 2^n for binary features) to estimate all P(X=xk | Y=yi).

• Need to make some sort of independence assumptions about the features to make learning tractable.

Conditional independence

• Chain rule:

P(x1, x2, x3) = [P(x1, x2, x3) / P(x2, x3)] * [P(x2, x3) / P(x3)] * P(x3)

= P(x1 | x2, x3) P(x2 | x3) P(x3)

• P(x1, x2, x3 | Y)

= P(x1 | x2, x3, Y) P(x2 | x3, Y) P(x3 | Y)

= P(x1 | Y) P(x2 | Y) P(x3 | Y), assuming conditional independence

Naïve Bayes Generative Model

[Figure: Naïve Bayes generative model. A Category distribution over pos/neg and, for each of the Positive and Negative categories, separate distributions over Size (sm, med, lg), Color (red, blue, grn) and Shape (circ, sqr, tri).]

Naïve Bayes Inference Problem

[Figure: Naïve Bayes inference problem. The generative model (Category, and per-category Size, Color and Shape distributions for Positive and Negative) with the test instance <lg, red, circ> observed and its category unknown (??).]

Naïve Bayesian Categorization

• If we assume features of an instance are independent given the category (conditionally independent):

$P(X \mid Y) = P(X_1, X_2, \dots, X_n \mid Y) = \prod_{i=1}^{n} P(X_i \mid Y)$

• Therefore, we then only need to know P(Xi | Y) for each possible pair of a feature-value and a category.

• If Y and all Xi are binary, this requires specifying only 2n parameters:

– P(Xi=true | Y=true) and P(Xi=true | Y=false) for each Xi

– P(Xi=false | Y) = 1 – P(Xi=true | Y)

• Compared to specifying 2^n parameters without any independence assumptions.

Naïve Bayes Example

| Probability | positive | negative |
|---|---|---|
| P(Y) | 0.5 | 0.5 |
| P(small \| Y) | 0.4 | 0.4 |
| P(medium \| Y) | 0.1 | 0.2 |
| P(large \| Y) | 0.5 | 0.4 |
| P(red \| Y) | 0.9 | 0.3 |
| P(blue \| Y) | 0.05 | 0.3 |
| P(green \| Y) | 0.05 | 0.4 |
| P(square \| Y) | 0.05 | 0.4 |
| P(triangle \| Y) | 0.05 | 0.3 |
| P(circle \| Y) | 0.9 | 0.3 |

Test Instance: <medium, red, circle>

Naïve Bayes Example

| Probability | positive | negative |
|---|---|---|
| P(Y) | 0.5 | 0.5 |
| P(medium \| Y) | 0.1 | 0.2 |
| P(red \| Y) | 0.9 | 0.3 |
| P(circle \| Y) | 0.9 | 0.3 |

Test Instance: <medium, red, circle>

P(positive | X) = P(positive) * P(medium | positive) * P(red | positive) * P(circle | positive) / P(X)

= 0.5 * 0.1 * 0.9 * 0.9 / P(X) = 0.0405 / P(X) = 0.0405 / 0.0495 = 0.8182

P(negative | X) = P(negative) * P(medium | negative) * P(red | negative) * P(circle | negative) / P(X)

= 0.5 * 0.2 * 0.3 * 0.3 / P(X) = 0.009 / P(X) = 0.009 / 0.0495 = 0.1818

Since P(positive | X) + P(negative | X) = 0.0405 / P(X) + 0.009 / P(X) = 1:

P(X) = 0.0405 + 0.009 = 0.0495
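The worked example can be reproduced in a few lines. This is a sketch under the assumption that each feature value name is unique across features, so a single lookup table per class is enough; `classify` is an illustrative name, not an established API.

```python
import math

PRIOR = {"positive": 0.5, "negative": 0.5}

# P(feature value | Y), copied from the example table.
COND = {
    "positive": {"small": 0.4, "medium": 0.1, "large": 0.5,
                 "red": 0.9, "blue": 0.05, "green": 0.05,
                 "square": 0.05, "triangle": 0.05, "circle": 0.9},
    "negative": {"small": 0.4, "medium": 0.2, "large": 0.4,
                 "red": 0.3, "blue": 0.3, "green": 0.4,
                 "square": 0.4, "triangle": 0.3, "circle": 0.3},
}


def classify(instance):
    """Return P(Y | X) for every class under the naive Bayes assumption."""
    # Unnormalized score per class: P(Y) * product of P(x_i | Y).
    joint = {y: PRIOR[y] * math.prod(COND[y][v] for v in instance) for y in PRIOR}
    # P(X) is the sum of the scores, since the classes are complete and disjoint.
    evidence = sum(joint.values())
    return {y: p / evidence for y, p in joint.items()}


posterior = classify(("medium", "red", "circle"))
print({y: round(p, 4) for y, p in posterior.items()})
# {'positive': 0.8182, 'negative': 0.1818}
```

For long feature vectors, summing log-probabilities instead of multiplying probabilities is a common way to avoid floating-point underflow; that variation is not shown here.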

Estimating Probabilities

• Normally, probabilities are estimated based on observed frequencies in the training data.

• If D contains nk examples in category yk, and nijk of these nk examples have the jth value for feature Xi, xij, then:

$P(X_i = x_{ij} \mid Y = y_k) = \dfrac{n_{ijk}}{n_k}$

• However, estimating such probabilities from small training sets is error-prone.

• If, due only to chance, a rare feature Xi is always false in the training data, then ∀yk: P(Xi=true | Y=yk) = 0.

• If Xi=true then occurs in a test example X, the result is that ∀yk: P(X | Y=yk) = 0 and ∀yk: P(Y=yk | X) = 0.

Probability Estimation Example

| Ex | Size | Color | Shape | Category |
|---|---|---|---|---|
| 1 | small | red | circle | positive |
| 2 | large | red | circle | positive |
| 3 | small | red | triangle | negative |
| 4 | large | blue | circle | negative |

Test Instance X: <medium, red, circle>

| Probability | positive | negative |
|---|---|---|
| P(Y) | 0.5 | 0.5 |
| P(small \| Y) | 0.5 | 0.5 |
| P(medium \| Y) | 0.0 | 0.0 |
| P(large \| Y) | 0.5 | 0.5 |
| P(red \| Y) | 1.0 | 0.5 |
| P(blue \| Y) | 0.0 | 0.5 |
| P(green \| Y) | 0.0 | 0.0 |
| P(square \| Y) | 0.0 | 0.0 |
| P(triangle \| Y) | 0.0 | 0.5 |
| P(circle \| Y) | 1.0 | 0.5 |

P(positive | X) = 0.5 * 0.0 * 1.0 * 1.0 / P(X) = 0

P(negative | X) = 0.5 * 0.0 * 0.5 * 0.5 / P(X) = 0
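The zero-probability problem shows up directly when the estimates are computed from counts. The sketch below uses the four training examples from the table and plain relative frequencies; the function names are illustrative.

```python
from collections import Counter

# (size, color, shape, category) for the four training examples.
EXAMPLES = [
    ("small", "red", "circle", "positive"),
    ("large", "red", "circle", "positive"),
    ("small", "red", "triangle", "negative"),
    ("large", "blue", "circle", "negative"),
]

# n_k: number of examples per class.
class_counts = Counter(label for *_, label in EXAMPLES)
# n_ijk: number of class-k examples whose feature i takes a given value.
value_counts = Counter(
    (i, value, label)
    for *features, label in EXAMPLES
    for i, value in enumerate(features)
)


def prior(label):
    return class_counts[label] / len(EXAMPLES)


def likelihood(i, value, label):
    """Unsmoothed estimate n_ijk / n_k; unseen values get probability 0."""
    return value_counts[(i, value, label)] / class_counts[label]


def score(instance, label):
    """Unnormalized P(Y) * product of P(x_i | Y)."""
    result = prior(label)
    for i, value in enumerate(instance):
        result *= likelihood(i, value, label)
    return result


test_instance = ("medium", "red", "circle")
scores = {label: score(test_instance, label) for label in class_counts}
print(scores)  # {'positive': 0.0, 'negative': 0.0}
```

Because "medium" never occurs in training, every class score is zero and the posterior cannot be normalized, which motivates smoothing.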

Smoothing

• To account for estimation from small samples, probability estimates are adjusted or smoothed.

• Laplace smoothing using an m-estimate assumes that each feature is given a prior probability, p, that is assumed to have been previously observed in a “virtual” sample of size m.

$P(X_i = x_{ij} \mid Y = y_k) = \dfrac{n_{ijk} + mp}{n_k + m}$

• For binary features, p is simply assumed to be 0.5.

Laplace Smoothing Example

• Assume training set contains 10 positive examples:

– 4: small

– 0: medium

– 6: large

• Estimate parameters as follows (if m=1, p=1/3)

– P(small | positive) = (4 + 1/3) / (10 + 1) = 0.394

– P(medium | positive) = (0 + 1/3) / (10 + 1) = 0.03

– P(large | positive) = (6 + 1/3) / (10 + 1) = 0.576

– P(small or medium or large | positive) = 1.0
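The smoothed estimates can be verified with exact fractions. `m_estimate` is a hypothetical helper implementing (n_ijk + m p) / (n_k + m) with the values from this example.

```python
from fractions import Fraction


def m_estimate(count, class_total, p, m):
    """Smoothed estimate (n_ijk + m * p) / (n_k + m)."""
    return (count + m * p) / (class_total + m)


counts = {"small": 4, "medium": 0, "large": 6}  # 10 positive examples in total
n_positive = sum(counts.values())

estimates = {
    value: m_estimate(count, n_positive, p=Fraction(1, 3), m=1)
    for value, count in counts.items()
}
for value, estimate in estimates.items():
    print(value, estimate, round(float(estimate), 3))
# small 13/33 0.394
# medium 1/33 0.03
# large 19/33 0.576
```

The three estimates still sum to 1, and the unseen value "medium" now receives a small non-zero probability.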

Missing values

• Training: instance is not included in frequency count for attribute value-class combination

• Classification: attribute will be omitted from calculation

• Example:

| Outlook | Temp. | Humidity | Windy | Play |
|---|---|---|---|---|
| ? | Cool | High | True | ? |

Likelihood of “yes” = 3/9 × 3/9 × 3/9 × 9/14 = 0.0238

Likelihood of “no” = 1/5 × 4/5 × 3/5 × 5/14 = 0.0343

P(“yes”) = 0.0238 / (0.0238 + 0.0343) = 41%

P(“no”) = 0.0343 / (0.0238 + 0.0343) = 59%
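A sketch of classification with a missing attribute: the unknown Outlook value is simply skipped when multiplying. Only the conditional probabilities that appear in this example are included, and the dictionary layout is an assumption for illustration.

```python
from fractions import Fraction as F

PRIOR = {"yes": F(9, 14), "no": F(5, 14)}

# Only the table entries needed for this example are listed.
COND = {
    "yes": {"temp": {"cool": F(3, 9)},
            "humidity": {"high": F(3, 9)},
            "windy": {True: F(3, 9)}},
    "no": {"temp": {"cool": F(1, 5)},
           "humidity": {"high": F(4, 5)},
           "windy": {True: F(3, 5)}},
}


def likelihood(instance, label):
    """P(Y) times the product of P(x_i | Y) over the attributes that are present."""
    result = PRIOR[label]
    for attribute, value in instance.items():
        if value is None:  # missing value: omit the attribute from the product
            continue
        result *= COND[label][attribute][value]
    return result


day = {"outlook": None, "temp": "cool", "humidity": "high", "windy": True}
scores = {label: likelihood(day, label) for label in PRIOR}
total = sum(scores.values())
posterior = {label: s / total for label, s in scores.items()}

print({k: round(float(v), 4) for k, v in scores.items()})     # {'yes': 0.0238, 'no': 0.0343}
print({k: round(float(v), 2) for k, v in posterior.items()})  # {'yes': 0.41, 'no': 0.59}
```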

witten&eibe

Continuous Attributes

• If Xi is a continuous feature rather than a discrete one, we need another way to calculate P(Xi | Y).

• Assume that Xi has a Gaussian distribution whose mean and variance depend on Y.

• During training, for each combination of a continuous feature Xi and a class value for Y, yk, estimate a mean, μik, and standard deviation, σik, based on the values of feature Xi in class yk in the training data.

• During testing, estimate P(Xi | Y=yk) for a given example, using the Gaussian distribution defined by μik and σik.

$P(X_i \mid Y = y_k) = \dfrac{1}{\sigma_{ik}\sqrt{2\pi}} \exp\left(-\dfrac{(X_i - \mu_{ik})^2}{2\sigma_{ik}^2}\right)$
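The training step for a continuous feature amounts to computing one mean and one standard deviation per class. The sketch below uses made-up feature values purely for illustration, and it assumes the sample standard deviation (`statistics.stdev`, which divides by n - 1); the slides do not say which estimator is intended.

```python
import statistics

# Hypothetical training values of one continuous feature, grouped by class.
values_by_class = {
    "yes": [64.0, 68.0, 72.0],
    "no": [65.0, 71.0, 80.0],
}

# One (mean, standard deviation) pair per class for this feature.
params = {
    label: (statistics.mean(values), statistics.stdev(values))
    for label, values in values_by_class.items()
}

for label, (mu, sigma) in params.items():
    print(label, round(mu, 2), round(sigma, 2))
# yes 68.0 4.0
# no 72.0 7.55
```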

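Statistics for weather data

Outlook:

| | Yes | No |
|---|---|---|
| Sunny | 2 (2/9) | 3 (3/5) |
| Overcast | 4 (4/9) | 0 (0/5) |
| Rainy | 3 (3/9) | 2 (2/5) |

Temperature:

| | Yes | No |
|---|---|---|
| values | 64, 68, 69, 70, 72, … | 65, 71, 72, 80, 85, … |
| μ | 73 | 75 |
| σ | 6.2 | 7.9 |

Humidity:

| | Yes | No |
|---|---|---|
| values | 65, 70, 70, 75, 80, … | 70, 85, 90, 91, 95, … |
| μ | 79 | 86 |
| σ | 10.2 | 9.7 |

Windy:

| | Yes | No |
|---|---|---|
| False | 6 (6/9) | 2 (2/5) |
| True | 3 (3/9) | 3 (3/5) |

Play:

| | Yes | No |
|---|---|---|
| count | 9 (9/14) | 5 (5/14) |

• Example density value:

$f(\text{temperature} = 66 \mid \text{yes}) = \dfrac{1}{\sqrt{2\pi} \cdot 6.2}\, e^{-\frac{(66 - 73)^2}{2 \cdot 6.2^2}} = 0.0340$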
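The example density value can be checked numerically. `gaussian_pdf` below is an illustrative helper implementing the normal density formula with the mean 73 and standard deviation 6.2 given for temperature in class "yes".

```python
import math


def gaussian_pdf(x, mean, std):
    """Density of a normal distribution with the given mean and standard deviation."""
    coefficient = 1.0 / (math.sqrt(2.0 * math.pi) * std)
    exponent = -((x - mean) ** 2) / (2.0 * std ** 2)
    return coefficient * math.exp(exponent)


# f(temperature = 66 | yes) with mean 73 and standard deviation 6.2.
density = gaussian_pdf(66, mean=73, std=6.2)
print(f"{density:.4f}")  # 0.0340
```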

Classifying a new day

• A new day:

| Outlook | Temp. | Humidity | Windy | Play |
|---|---|---|---|---|
| Sunny | 66 | 90 | true | ? |

Likelihood of “yes” = 2/9 × 0.0340 × 0.0221 × 3/9 × 9/14 = 0.000036

Likelihood of “no” = 3/5 × 0.0291 × 0.0380 × 3/5 × 5/14 = 0.000136

P(“yes”) = 0.000036 / (0.000036 + 0.000136) = 20.9%

P(“no”) = 0.000136 / (0.000036 + 0.000136) = 79.1%

• Missing values during training are not included in calculation of mean and standard deviation

*Probability densities

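• Relationship between probability and density:

$\Pr\left[c - \tfrac{\varepsilon}{2} \le x \le c + \tfrac{\varepsilon}{2}\right] \approx \varepsilon \cdot f(c)$

• But: this doesn’t change calculation of a posteriori probabilities because ε cancels out

• Exact relationship:

$\Pr[a \le x \le b] = \int_a^b f(t)\, dt$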
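The approximation can be compared with the exact integral for a Gaussian, whose cumulative distribution is available through `math.erf`. This sketch reuses the temperature parameters (mean 73, standard deviation 6.2); the interval width `eps = 1.0` is an arbitrary choice for illustration.

```python
import math


def gaussian_pdf(x, mean, std):
    return math.exp(-((x - mean) ** 2) / (2.0 * std ** 2)) / (math.sqrt(2.0 * math.pi) * std)


def gaussian_cdf(x, mean, std):
    """Pr[X <= x] for a normal distribution, via the error function."""
    return 0.5 * (1.0 + math.erf((x - mean) / (std * math.sqrt(2.0))))


mean, std = 73.0, 6.2
c, eps = 66.0, 1.0  # eps is an assumed interval width

# Exact probability of the interval [c - eps/2, c + eps/2].
exact = gaussian_cdf(c + eps / 2, mean, std) - gaussian_cdf(c - eps / 2, mean, std)
# Density-based approximation eps * f(c).
approx = eps * gaussian_pdf(c, mean, std)

print(exact, approx)  # the two values agree to about four decimal places
```

Since the same `eps` multiplies the density for every class, it cancels when the class likelihoods are normalized, which is why densities can be used directly in place of probabilities.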

Naïve Bayes: discussion

• Naïve Bayes works surprisingly well (even if independence assumption is clearly violated)

– Experiments show it to be quite competitive with other classification methods on standard UCI datasets.

• Why? Because classification doesn’t require accurate probability estimates as long as maximum probability is assigned to correct class

• However: adding too many redundant attributes will cause problems (e.g. identical attributes)

• Note also: many numeric attributes are not normally distributed (→ kernel density estimators)

• Does not perform any search of the hypothesis space. Directly constructs a hypothesis from parameter estimates that are easily calculated from the training data.

• Typically handles noise well since it does not even focus on completely fitting the training data.

Naïve Bayes Extensions

• Improvements:

– select best attributes (e.g. with greedy search)

– often works as well or better with just a fraction of all attributes

• Bayesian Networks
