Large Scale Machine Translation Architectures Qin Gao

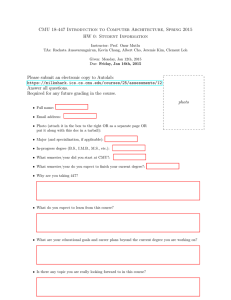

2016/7/24 Qin Gao, LTI, CMU

Outline
Typical Problems in Machine Translation
Program Model for Machine Translation
MapReduce
Required System Component
Supporting software
Distributed streaming data storage system
Distributed structured data storage system
Integrating: how to make a fully distributed system

Why large scale MT
We need more data... But…

Some representative MT problems
Counting events in corpora
◦ Ngram count
Sorting
◦ Phrase table extraction
Preprocessing data
◦ Parsing, tokenizing, etc.
Iterative optimization
◦ GIZA++ (all EM algorithms)

Characteristics of different tasks
Counting events in corpora
◦ Extract knowledge from data
Sorting
◦ Process data; the knowledge is inside the data
Preprocessing data
◦ Process data; requires external knowledge
Iterative optimization
◦ For each iteration, process data using existing knowledge, then update the knowledge

Components required for large scale MT
[Diagram labels: Data, Knowledge]

Components required for large scale MT
[Diagram labels: Data, Stream Data, Processor, Structured Knowledge, Knowledge]

Problem for each component
Stream data:
◦ As the amount of data grows, even one complete pass over it becomes infeasible.
Processor:
◦ A single processor's computation power is not enough.
Knowledge:
◦ The table is too large to fit into memory.
◦ Cache-based/distributed knowledge bases suffer from low speed.

Make it simple: What is the underlying problem?
We have a huge cake, and we want to cut it into pieces and eat it.
Different cases:
◦ We just need to eat the cake.
◦ We also want to count how many peanuts are inside the cake.
◦ (Sometimes) we have only one fork!

Parallelization
[Diagram labels: Data, Knowledge]

Solutions
Large-scale distributed processing
◦ MapReduce: Simplified Data Processing on Large Clusters, Jeffrey Dean, Sanjay Ghemawat, Communications of the ACM, vol. 51, no. 1 (2008), pp. 107-113.
Handling huge streaming data
◦ The Google File System, Sanjay Ghemawat, Howard Gobioff, Shun-Tak Leung, Proceedings of the 19th ACM Symposium on Operating Systems Principles, 2003, pp. 29-43.
Handling structured data
◦ Large Language Models in Machine Translation, Thorsten Brants, Ashok C. Popat, Peng Xu, Franz J. Och, Jeffrey Dean, Proceedings of the 2007 Joint Conference on Empirical Methods in Natural Language Processing and Computational Natural Language Learning (EMNLP-CoNLL), pp. 858-867.
◦ Bigtable: A Distributed Storage System for Structured Data, Fay Chang, Jeffrey Dean, Sanjay Ghemawat, Wilson C. Hsieh, Deborah A. Wallach, Mike Burrows, Tushar Chandra, Andrew Fikes, Robert E. Gruber, 7th USENIX Symposium on Operating Systems Design and Implementation (OSDI), 2006, pp. 205-218.

MapReduce
MapReduce can refer to
◦ A programming model that deals with massive, unordered, streaming data processing tasks (MUD)
◦ A supporting software environment implemented by Google Inc.
Alternative implementation:
◦ Hadoop, by the Apache Software Foundation

MapReduce programming model
Abstracts the computation into two functions:
◦ Map
◦ Reduce
The user is responsible for implementing the Map and Reduce functions, and the supporting software takes care of executing them.

Representation of data
The streaming data is abstracted as a sequence of key/value pairs.
Example:
◦ (sentence_id : sentence_content)

Map function
The Map function takes an input key/value pair and outputs a set of intermediate key/value pairs.
[Diagram: (Key1 : Value1) → Map() → (Key1 : Value1), (Key2 : Value2), (Key3 : Value3), …; (Key2 : Value2) → Map() → (Key1 : Value2), (Key2 : Value1), (Key3 : Value3), …]

Reduce function
The Reduce function accepts one intermediate key and a set of intermediate values, and produces the result.
[Diagram: (Key1 : Value1), (Key1 : Value2), (Key1 : Value3), … → Reduce() → Result; (Key2 : Value1), (Key2 : Value2), (Key2 : Value3), … → Reduce() → Result]

The architecture of MapReduce
[Diagram labels: Map function, Reduce function, Distributed Sort]

Benefit of MapReduce
Automatic data splitting
Fault tolerance
High-throughput computing; uses the nodes efficiently
Most important: simplicity. You just need to convert your algorithm to the MapReduce model.

Requirement for expressing algorithm in MapReduce
Process unordered data
◦ The data must be unordered: no matter in what order the data is processed, the result should be the same.
Produce independent intermediate keys
◦ The Reduce function cannot see the values of other keys.

Example
Distributed Word Count (1)
◦ Input key: word
◦ Input value: 1
◦ Intermediate key: constant
◦ Intermediate value: 1
◦ Reduce(): Sum all intermediate values
Distributed Word Count (2)
◦ Input key: Document/Sentence ID
◦ Input value: Document/Sentence content
◦ Intermediate key: constant
◦ Intermediate value: number of words in the document/sentence
◦ Reduce(): Sum all intermediate values

Example 2
Distributed unigram count
◦ Input key: Document/Sentence ID
◦ Input value: Document/Sentence content
◦ Intermediate key: Word
◦ Intermediate value: number of occurrences of the word in the document/sentence
◦ Reduce(): Sum all intermediate values

Example 3
Distributed Sort
◦ Input key: Entry key
◦ Input value: Entry content
◦ Intermediate key: Entry key (modification may be needed for ascending/descending order)
◦ Intermediate value: Entry content
◦ Reduce(): Emit all the entry content
Makes use of the built-in sorting functionality.

Supporting MapReduce: Distributed Storage
Reminder of what we are dealing with in MapReduce:
◦ Massive, unordered, streaming data
Motivation:
◦ We need to store a large amount of data
◦ Make use of the storage in all the nodes
◦ Automatic replication: fault tolerant; avoids hot spots, since a client can read from many servers
Google FS and Hadoop FS (HDFS)

Design principle of Google FS
Optimizing for a special workload:
◦ Large streaming reads, small random reads
◦ Large streaming writes, rare modification
Support concurrent appending
◦ It actually assumes the data are unordered
High sustained bandwidth is more important than low latency; fast response time is not important
Fault tolerant

Google FS Architecture
Optimized for large streaming reads and large, concurrent writes
Small random reads/writes are also supported, but not optimized
Allows appending to existing files
Files are split into chunks and stored on several chunk servers
A master is responsible for storing and querying chunk information

[Figure: Google FS architecture]

Replication
When a chunk is read frequently or "simultaneously" from one machine, that machine may fail
A fault in one machine may make the file unusable
Solution: store the chunks on multiple machines.
The number of replicas of each chunk: the replication factor

HDFS
HDFS shares similar design principles with Google FS
Write-once-read-many: a file can only be written once; even appending is not allowed
"Moving computation is cheaper than moving data"

Are we done?
NO… Problems with the existing architecture

We are good at dealing with data
What about knowledge, i.e. structured data?
What if the size of the knowledge is HUGE?

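Before turning to knowledge, the distributed unigram count from Example 2 can be sketched in a few lines. This is a minimal single-process sketch, not Hadoop or Google's implementation: the names map_unigrams, shuffle, reduce_unigrams and unigram_count are assumptions made up for illustration, and shuffle() only stands in for the distributed sort between Map and Reduce.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_unigrams(sentence_id: int, sentence: str) -> Iterator[Tuple[str, int]]:
    """Map: emit (word, occurrences of that word in this sentence)."""
    for word, count in Counter(sentence.split()).items():
        yield word, count


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Stand-in for the distributed sort: group intermediate values by key."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    # Sorting the keys mimics the sorted order in which reducers see them.
    return dict(sorted(grouped.items()))


def reduce_unigrams(word: str, counts: List[int]) -> Tuple[str, int]:
    """Reduce: sum all intermediate values of one key."""
    return word, sum(counts)


def unigram_count(corpus: Dict[int, str]) -> Dict[str, int]:
    """Run Map over every (sentence_id, sentence) pair, then shuffle and Reduce."""
    intermediate = [
        pair
        for sentence_id, sentence in corpus.items()
        for pair in map_unigrams(sentence_id, sentence)
    ]
    return dict(reduce_unigrams(w, c) for w, c in shuffle(intermediate).items())
```

Each call to map_unigrams and reduce_unigrams depends only on its own arguments, which is what lets a real framework run them on different nodes in any order.
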
A good example: GIZA
A typical EM algorithm
[Flowchart boxes: Word Alignment, Collect Counts, Has More Sentences? (Y/N), Normalize Counts, Has More Iterations? (Y/N)]

When parallelized: seems to be a perfect MapReduce application
[Flowchart: three parallel copies of Word Alignment, Collect Counts, Has More Sentences? (Y/N) run on the cluster, followed by a single Normalize Counts and Has More Iterations? (Y/N)]

However: Memory
[Diagram labels: Large parallel corpus, Corpus chunks, Map, Count tables, Reduce, Combined count table, Renormalization, Statistical lexicon, Redistribute for next iteration; bottlenecks marked Data I/O and Memory]

Huge tables
Lexicon probability table: T-Table
Up to 3 GB in early stages
As the number of workers increases, they all need to load this 3 GB file!
And all the nodes need to have 3 GB+ of memory. Do we need a cluster of supercomputers?

Another example, decoding
Consider language models: what can we do if the language model grows to several TBs?
We need a storage/query mechanism for large, structured data
Considerations:
◦ Distributed storage
◦ Fast access: the network has high latency

Google Language Model
Storage:
◦ Central storage or distributed storage
How to deal with latency?
◦ Modify the decoder to collect a number of queries and send them all at once.
It is a specific application; we still need something more general.

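The idea of collecting language model queries and sending them in one request can be sketched as follows. This is only an illustration under stated assumptions: FakeRemoteLM and BatchingLMClient are hypothetical names, the "server" is an in-process dictionary, and the round_trips counter merely models network round trips. It is not the decoder change described in the Brants et al. paper.

```python
from typing import Dict, List, Tuple

Ngram = Tuple[str, ...]


class FakeRemoteLM:
    """Hypothetical stand-in for a language model server reached over the network."""

    def __init__(self, table: Dict[Ngram, float], default: float = -99.0) -> None:
        self.table = table
        self.default = default  # score returned for unseen n-grams (an assumption)
        self.round_trips = 0    # each lookup_batch call models one network round trip

    def lookup_batch(self, ngrams: List[Ngram]) -> List[float]:
        self.round_trips += 1
        return [self.table.get(ng, self.default) for ng in ngrams]


class BatchingLMClient:
    """Queue n-gram queries on the decoder side and send them in one request."""

    def __init__(self, server: FakeRemoteLM) -> None:
        self.server = server
        self.pending: List[Ngram] = []
        self.scores: Dict[Ngram, float] = {}

    def request(self, ngram: Ngram) -> None:
        # Skip n-grams that are already scored or already queued.
        if ngram not in self.scores and ngram not in self.pending:
            self.pending.append(ngram)

    def flush(self) -> None:
        # One round trip answers every queued query.
        if not self.pending:
            return
        results = self.server.lookup_batch(self.pending)
        self.scores.update(zip(self.pending, results))
        self.pending = []

    def score(self, ngram: Ngram) -> float:
        # Only valid after the n-gram has been requested and flushed.
        return self.scores[ngram]
```

With this shape, the latency cost is paid once per batch instead of once per n-gram, at the price of the decoder having to tolerate delayed scores.
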
Again, made in Google: Bigtable
It is specially optimized for structured data
It serves many applications now
It is not a complete database
Definition:
◦ A Bigtable is a sparse, distributed, persistent, multi-dimensional, sorted map

Data model in Bigtable
Four-dimensional table:
◦ Row
◦ Column family
◦ Column
◦ Timestamp
[Figure: table with dimensions Row, Column family, Column, Timestamp]

Distributed storage unit: Tablet
A tablet consists of a range of rows
Tablets can be stored on different nodes and served by different servers
Concurrent reading of multiple rows can be fast

Random access unit: Column family
Each tablet is a string-to-string map
(Though not mentioned, the API shows that:) At the level of the column family, the index is loaded into memory, so fast random access is possible
The set of column families should be fixed

Tables inside table: Column and Timestamp
A column can be any arbitrary string value
A timestamp is an integer
A value is a byte array
It is actually a table of tables

Performance
Number of 1000-byte values read/written per second.
What is shocking:
◦ Effective IO for random reads (from GFS) is more than 100 MB/second
◦ Effective IO for random reads from memory is more than 3 GB/second

An example: Phrase Table
Row: first bigram/trigram of the source phrase
Column family: length of the source phrase, or some hashed number of the remaining part of the source phrase
Column: remaining part of the source phrase
Value: all the phrase pairs of the source phrase

Benefit
Different source phrases come from different servers
The load is balanced, and the reading can be concurrent and much faster.
Filtering the phrase table before decoding becomes much more efficient.

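The phrase table layout above can be tried out on a toy, in-memory sorted map. This is a minimal sketch under stated assumptions: ToySortedTable and phrase_key are hypothetical names, not the Bigtable or HyperTable API; the row is taken to be the first bigram, the column family is written as "len" plus the phrase length, and timestamps are assumed to be non-negative integers.

```python
from bisect import bisect_left, insort
from typing import Dict, List, Tuple

Key = Tuple[str, str, str, int]  # (row, column family, column, timestamp)


class ToySortedTable:
    """In-memory toy of a sorted (row, family, column, timestamp) -> bytes map."""

    def __init__(self) -> None:
        self._keys: List[Key] = []          # kept sorted at all times
        self._values: Dict[Key, bytes] = {}

    def put(self, row: str, family: str, column: str, timestamp: int, value: bytes) -> None:
        key = (row, family, column, timestamp)
        if key not in self._values:
            insort(self._keys, key)
        self._values[key] = value

    def scan_row(self, row: str) -> List[Tuple[Key, bytes]]:
        """Return all cells of one row; sorted keys make them a contiguous range."""
        start = bisect_left(self._keys, (row, "", "", -1))
        cells = []
        for key in self._keys[start:]:
            if key[0] != row:
                break
            cells.append((key, self._values[key]))
        return cells


def phrase_key(source_phrase: str) -> Tuple[str, str, str]:
    """Split a source phrase into (row, column family, column) as on the slide."""
    words = source_phrase.split()
    row = " ".join(words[:2])        # first bigram of the source phrase
    family = f"len{len(words)}"      # length of the source phrase
    column = " ".join(words[2:])     # remaining part of the source phrase
    return row, family, column
```

Because all phrases sharing a first bigram land in one row, a decoder can fetch every candidate for that prefix with a single row scan, and rows with different prefixes can live in different tablets.
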
Another Example: GIZA++
Lexicon table:
◦ Row: Source word id
◦ Column family: none
◦ Column: Target word id
◦ Value: The probability value
With a simple local cache, table loading can be extremely efficient compared to the current implementation

Conclusion
Strangely, the talk is all about how Google does it
A useful framework for distributed MT systems requires three components:
◦ MapReduce software
◦ Distributed streaming data storage system
◦ Distributed structured data storage system

Open Source Alternatives
MapReduce library: Hadoop
GoogleFS: Hadoop FS (HDFS)
BigTable: HyperTable

THANK YOU!