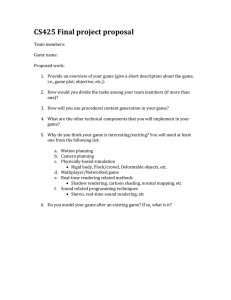

Sort-First, Distributed Memory Parallel Visualization and Rendering

Wes Bethel, R3vis Corporation and Lawrence Berkeley National Laboratory
Parallel Visualization and Graphics Workshop
Sunday October 18, 2003, Seattle, Washington

The Actual Presentation Title: Why Distributed Memory Parallel Rendering is a Challenge: Combining OpenRM Scene Graph and Chromium for General Purpose Use on Distributed Memory Clusters

Outline:
• Problem Statement, Desired Outcomes
• Sort-First Parallel Architectural Overview
• The Many Ways I Broke Chromium
• Scene Graph Considerations
• Demo?
• Conclusions

Motivation and Problem Statement

The Allure of COTS solutions:
• Performance of COTS GPUs exceeds that of custom silicon.
• Attractive price/performance of COTS platforms (e.g., x86 PCs).
• Gigabit Ethernet is cheap: $100/NIC, $500 8-port switch.
• Can build a screamer cluster for about $2K/node.

We’re accustomed to “nice, friendly” software infrastructure, e.g., hardware accelerated Xinerama.
Enter Chromium – the means to use a bunch of PCs to do parallel rendering.
Parallel submission of graphics streams is a “custom solution,” and presents challenges.
Want a flexible, resilient API to interface between parallel visualization/rendering applications and Chromium.

Our Approach

Distributed memory parallel visualization application design: amortizes expensive data I/O and visualization across many nodes.
The scene graph layer mediates interaction between the application and the rendering subsystem: it provides portability, “hides” the icky parallel rendering details, and provides an infrastructure for accelerating rendering.
Chromium provides routing of graphics commands to support hardware accelerated rendering on a variety of platforms.
Focus on COTS solutions: all hardware and software we used is cheap (PC cluster) or free (software).
Focus on simplicity: our sample applications are straightforward in implementation, easily reproducible by others and highly portable.
Want an infrastructure that is suitable for use regardless of the type of parallel programming model used by the application:
• No “parallel objects” in the Scene Graph!!!!!

Our Approach, ctd.

The Many Ways I Broke Chromium

Retained mode object “namespace” collisions in parallel submission.
Broadcasting: how to burn up bandwidth without even trying!
Scene Graph Issues: to be discussed in our PVG paper presentation on Monday.

The “Collision” Problem

Want to use OpenGL retained-mode semantics and structures to realize performance gains in a DM environment.
Problem:
• “Namespace collision” of retained-mode identifiers during parallel submission of graphics commands.

Example:

    Process A:
        GLuint n = glGenLists(1);
        printf("id==%u\n", n);   // same id as in Process B
        // build list, draw with list

    Process B:
        GLuint n = glGenLists(1);
        printf("id==%u\n", n);   // same id as in Process A
        // build list, draw with list

The problem extends to all OpenGL retained mode objects: display lists, texture object ids, and programs.

Manifestation of Collision Problem

Show image of four textured quads when the problem is present.

Desired Result

Show image of four textured quads when the problem is fixed.

Resolving the Collision Problem

New CR configuration file options:
• shared_textures, shared_display_lists, shared_programs
• When set to 1, beware of collisions in parallel submission.
• When set to 0, collisions are resolved in parallel submission.

Setting shared_* to zero will enforce “unique retained mode identifiers” across all parallel submitters.
Thanks, Brian!

The Broadcast Problem

What’s the Problem?
• Geometry and textures from N application PEs are replicated across M crservers. Bumped into limits of memory and bandwidth.
• To Chromium, a display list is an opaque blob of stuff. Tilesort doesn’t peek inside a display list to see where it should be sent.
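
To make the cost of broadcasting concrete, here is a minimal sketch that compares sending one payload to every server against sending it only to the servers whose tiles it touches. It is a toy model under stated assumptions, not Chromium's tilesort code: the `Rect` type, the helper names, the 3x2 wall of 1024x768 tiles and the 1 MB payload are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Axis-aligned screen rectangle; x1 and y1 are exclusive (hypothetical helper)."""
    x0: int
    y0: int
    x1: int
    y1: int

    def overlaps(self, other: "Rect") -> bool:
        return (self.x0 < other.x1 and other.x0 < self.x1 and
                self.y0 < other.y1 and other.y0 < self.y1)


def make_tiles(cols, rows, tile_w, tile_h):
    """One tile per render server, numbered row by row."""
    return [Rect(c * tile_w, r * tile_h, (c + 1) * tile_w, (r + 1) * tile_h)
            for r in range(rows) for c in range(cols)]


def servers_for(bbox, tiles):
    """Indices of the servers whose tile overlaps the object's screen bounding box."""
    return [i for i, tile in enumerate(tiles) if tile.overlaps(bbox)]


def bytes_sent(payload_bytes, bbox, tiles, broadcast):
    """Total network bytes: every server when broadcast, else only overlapping ones."""
    targets = len(tiles) if broadcast else len(servers_for(bbox, tiles))
    return payload_bytes * targets


tiles = make_tiles(cols=3, rows=2, tile_w=1024, tile_h=768)  # assumed six-display wall
bbox = Rect(900, 100, 1200, 400)   # object straddling the two top-left tiles
payload = 1_000_000                # assumed 1 MB of geometry or texture

print(servers_for(bbox, tiles))                           # [0, 1]
print(bytes_sent(payload, bbox, tiles, broadcast=True))   # 6000000
print(bytes_sent(payload, bbox, tiles, broadcast=False))  # 2000000
```

In this model an opaque blob costs M copies of the payload, while anything that can be sorted by screen extent costs only as many copies as the tiles it overlaps.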
Early performance testing showed two types of broadcasting:
• Display lists being broadcast from one tilesort to all servers.
• Textures associated with textured geometry in display lists were being broadcast from one tilesort to all servers.

Broadcast Workarounds (Short Term)

Help Chromium decide how to route textures with the GL_OBJECT_BBOX_CR extension.
Don’t use display lists (for now).
• Immediate mode geometry isn’t broadcast. Sorting/routing is accelerated using GL_OBJECT_BBOX_CR to provide hints to tilesort; it doesn’t have to look at all vertices in a geometry blob to perform routing decisions.
• For scientific visualization, which generates lots of geometry, this is clearly a problem.
• Our volume rendering application uses 3D textures (N**3 data) and textured geometry (N**2 data), so the “heavy payload” data isn’t broadcast. The cost is immediate mode transmission of geometry (approximately 36KB/frame of geometry as compared to 160MB/frame of texture data).

Broadcast Workarounds (Long Term)

Funding for Chromium developers to implement display list caching and routing, similar to existing capabilities to manage texture objects.
Lurking problems:
• “Aging” of display lists: the crserver is like a “roach motel”: display lists check in, but they never check out.
• Adding retained mode object aging and management to applications is an unreasonable burden (IMO).
• There exists no commonly accepted mechanism for LRU aging, etc. in the graphics API. Such an aging mechanism will probably show up as an extension.
• Better as an extension with tunable parameters than requiring applications to “reach deeply” into graphics API implementations.

Scene Graph Issues and Performance Analysis

Discussed in our 2003 PVG Paper (Monday afternoon).
• Our “parallel scene graph” implementation can be used by any parallel application, regardless of the type of parallel programming model used by the application developer.
• The “big issue” in sort-first is how much data is duplicated. What we’ve seen so far is that about 1.8x duplication was required for the first frame (in a hardware accelerated volume rendering application).
• While the scene graph supports any type of parallel operation, certain types of synchronization are required to ensure correct rendering. These can be achieved using only Chromium barriers – no “parallel objects” in the scene graph are required.

Some Performance Graphs

Bandwidth vs. Traffic
[Graph: Peak Bandwidth, LOD vs. Non-LOD, Six Displays. MBytes vs. Frame Number; series: 6 Displays LOD, 6 Displays No LOD.]
[Graph: Aggregate 3D Texture Traffic. MBytes vs. Frame Number; series: 4, 6, 9, 16, 20 and 24 Displays.]

Parallel Scene Graph API Stuff

Collectives
• rmPipeSetCommSize(), rmPipeGetCommSize()
• rmPipeSetMyRank(), rmPipeGetMyRank()

Chromium-specific
• rmPipeBarrierCreateCR()
  • Creates a Chromium barrier; the number of participants is set by the value specified using rmPipeSetCommSize().
• rmPipeBarrierExecCR()
  • Doesn’t block application code execution.
  • Used to synchronize rendering execution from rmPipeGetCommSize() streams of graphics commands.

Demo Applications: Parallel Isosurface

Demo Application: Parallel Volume Rendering

Demo Application: Parallel Volume Rendering with LOD Volumes

Conclusions

We met our objectives:
• General purpose infrastructure for doing parallel visualization and hardware accelerated rendering on PC clusters.
• The infrastructure can be used by any parallel application, regardless of the parallel programming model.

The architecture scaled well from one to 24 displays, supporting extremely high-resolution output (e.g., 7860x4096).
We bumped into network bandwidth limits (not a big surprise).
Display lists are still broadcast in Chromium.
Please fund the Chromium developers to add display list caching and routing, a much-needed capability that is fundamental for efficient sort-first operation of clusters.

Sources of Software

OpenRM Scene Graph: www.openrm.org
Source code for OpenRM+Chromium applications: www.openrm.org
Chromium: chromium.sourceforge.net

Acknowledgement

This work was supported by the U.S. Department of Energy, Office of Science, Office of Advanced Scientific Computing Research under SBIR grant DE-FE0302ER83443. The authors wish to thank Randall Frank of the ASCI/VIEWS program at Lawrence Livermore National Laboratory and the Scientific Computing and Imaging Institute at the University of Utah for use of computing facilities during the course of this research. The Argon shock bubble dataset was provided courtesy of John Bell and Vince Beckner at the Center for Computational Sciences and Engineering, Lawrence Berkeley National Laboratory.
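
Supplementary sketch: the retained-mode “collision” problem from earlier in the talk can be modelled in a few lines. The code below is a conceptual toy, not Chromium's implementation. The class names, the `submit` helper and the per-client remapping table are all hypothetical, and the model only assumes that shared_* = 1 means identifiers are used as submitted, while shared_* = 0 means each submitter's identifiers are kept distinct.

```python
class SharedNamespace:
    """Toy model of shared_* = 1: every submitter's id is used as-is."""

    def __init__(self):
        self.objects = {}

    def define(self, client, client_id, payload):
        # A later definition with the same id silently replaces the earlier one.
        self.objects[client_id] = payload

    def lookup(self, client, client_id):
        return self.objects[client_id]


class PerClientNamespace:
    """Toy model of shared_* = 0: ids are made unique per submitter."""

    def __init__(self):
        self.objects = {}
        self.remap = {}      # (client, client_id) -> server-side id
        self.next_id = 1

    def define(self, client, client_id, payload):
        key = (client, client_id)
        if key not in self.remap:
            self.remap[key] = self.next_id
            self.next_id += 1
        self.objects[self.remap[key]] = payload

    def lookup(self, client, client_id):
        return self.objects[self.remap[(client, client_id)]]


def submit(server, num_pes=4):
    """Each PE defines a texture under the same local id, then draws with it."""
    for rank in range(num_pes):
        server.define(rank, 1, f"texture from PE {rank}")
    return [server.lookup(rank, 1) for rank in range(num_pes)]


collided = submit(SharedNamespace())
resolved = submit(PerClientNamespace())
print(collided)  # every quad ends up with the texture from PE 3
print(resolved)  # each quad keeps the texture from its own PE
```

With four submitters this mirrors the four textured quads on the “Manifestation” and “Desired Result” slides: in the shared model the last definition wins everywhere, while in the per-client model each submitter gets back what it defined.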