Distance Metric Learning: A Comprehensive Survey Liu Yang Advisor: Rong Jin



May 8th, 2006

Outline

Introduction

Supervised Global Distance Metric Learning

Supervised Local Distance Metric Learning

Unsupervised Distance Metric Learning

Distance Metric Learning based on SVM

Kernel Methods for Distance Metrics Learning

Conclusions

Introduction

Definition

Distance metric learning learns a distance metric for the input space of the data from a given collection of pairs of similar/dissimilar points, such that the metric preserves the distance relations among the training data pairs.

Importance

Many machine learning algorithms rely heavily on the distance metric for the input data patterns, e.g. kNN.

A learned metric can significantly improve performance in classification, clustering and retrieval tasks, e.g. kNN classifier, spectral clustering, content-based image retrieval (CBIR).

Contributions of this Survey

Review distance metric learning under different learning

conditions

supervised learning vs. unsupervised learning

learning in a global sense vs. in a local sense

distance matrix based on linear kernel vs. nonlinear

kernel

Discuss central techniques of distance metric learning

K nearest neighbor

dimension reduction

semidefinite programming

kernel learning

large margin classification

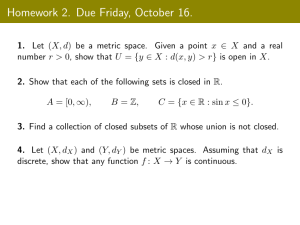

Taxonomy of the surveyed methods (recovered from the figure):

Supervised Distance Metric Learning

Global: Global Distance Metric Learning by Convex Programming

Local: Local Adaptive Distance Metric Learning, Relevant Component Analysis, Neighborhood Components Analysis

Unsupervised Distance Metric Learning

Linear embedding: PCA, MDS

Nonlinear embedding: LLE, ISOMAP, Laplacian Eigenmaps

Distance Metric Learning based on SVM: Large Margin Nearest Neighbor Based Distance Metric Learning; Cast Kernel Margin Maximization into a SDP problem

Kernel Methods for Distance Metrics Learning: Kernel Alignment with SDP; Learning with Idealized Kernel


Supervised Global Distance Metric

Learning (Xing et al. 2003)

Equivalence constraints: $S = \{(x_i, x_j) \mid x_i \text{ and } x_j \text{ belong to the same class}\}$

Inequivalence constraints: $D = \{(x_i, x_j) \mid x_i \text{ and } x_j \text{ belong to different classes}\}$

$d_A^2(x, y) = \|x - y\|_A^2 = (x - y)^T A (x - y)$, where $A \in S^{m \times m}$ is the distance metric

Goal : keep all the data points within the same classes close,

while separating all the data points from different classes.

Formulate as a constrained convex programming problem

minimize the distance between the data pairs in S

Subject to data pairs in D are well separated

Global Distance Metric Learning (Cont’d)

$$\min_{A \in R^{m \times m}} \sum_{(x_i, x_j) \in S} \|x_i - x_j\|_A^2 \quad \text{s.t.} \quad A \succeq 0, \quad \sum_{(x_i, x_j) \in D} \|x_i - x_j\|_A^2 \ge 1$$

A is positive semi-definite

Ensures the non-negativity and the triangle inequality of the metric

The number of parameters is quadratic in the number of features

Difficult to scale to a large number of features

Simplify the computation
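The two ingredients of this formulation, the distance $d_A^2$ and the positive semi-definite constraint on $A$, can be sketched in a few lines. This is only an illustration, not the solver used by Xing et al.: the function names `mahalanobis_sq` and `project_psd` are hypothetical, and projecting onto the PSD cone by clipping negative eigenvalues is one common way to enforce $A \succeq 0$ inside an iterative method.

```python
import numpy as np

def mahalanobis_sq(x, y, A):
    """Squared distance d_A^2(x, y) = (x - y)^T A (x - y)."""
    diff = np.asarray(x, dtype=float) - np.asarray(y, dtype=float)
    return float(diff @ A @ diff)

def project_psd(A):
    """Nearest symmetric PSD matrix in Frobenius norm (clip negative eigenvalues)."""
    sym = (A + A.T) / 2.0
    eigvals, eigvecs = np.linalg.eigh(sym)
    return (eigvecs * np.clip(eigvals, 0.0, None)) @ eigvecs.T

A_raw = np.array([[2.0, 0.0], [0.0, -1.0]])            # not PSD
A_psd = project_psd(A_raw)                              # negative eigenvalue set to 0
d_identity = mahalanobis_sq([0, 0], [3, 4], np.eye(2))  # plain squared Euclidean distance
d_learned = mahalanobis_sq([0, 0], [3, 4], A_psd)       # only the first feature counts
```

With $A = I$ the metric reduces to the squared Euclidean distance, while the projected matrix ignores the second feature entirely.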

Global Distance Metric Learning:

Example I

(a) Data Dist. of the original dataset

(b) Data scaled by the global metric

Keep all the data points within the same classes close

Separate all the data points from different classes

Global Distance Metric Learning:

Example II

(a) Original data

(b) rescaling by learned

full A

(c) Rescaling by learned

diagonal A

Restricting the distance metric A to a diagonal matrix simplifies computation, but can lead to disastrous results

Problems with Global Distance

Metric Learning

(a) Data Dist. of the original dataset

(b) Data scaled by the global metric

Multimodal data distributions prevent global distance metrics

from simultaneously satisfying constraints on within-class

compactness and between-class separability.


Supervised Local Distance Metric

Learning

Local Adaptive Distance Metric Learning

Local Feature Relevance

Locally Adaptive Feature Relevance Analysis

Local Linear Discriminative Analysis

Neighborhood Components Analysis

Relevant Component Analysis

Local Adaptive Distance Metric

Learning

K Nearest Neighbor Classifier

$$\Pr(j \mid x_0) = \frac{1}{|N(x_0)|} \sum_{x_i \in N(x_0)} \delta(y_i = j)$$

$N(x_0)$: nearest neighbors of $x_0$

$(x_1, y_1), \ldots, (x_n, y_n)$: training examples

$\delta(y_i = j) = 1$ if $y_i = j$, and $0$ otherwise
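As a minimal sketch of the kNN posterior estimate, assuming a plain Euclidean metric and integer class labels (the function name `knn_posterior` and the toy data are illustrative only):

```python
import numpy as np

def knn_posterior(x0, X, y, k, n_classes):
    """Estimate Pr(j | x0) as the fraction of the k nearest neighbors with label j."""
    dists = np.linalg.norm(X - x0, axis=1)       # Euclidean distance to every training point
    neighbors = np.argsort(dists)[:k]            # indices of N(x0)
    counts = np.bincount(y[neighbors], minlength=n_classes)
    return counts / k

X = np.array([[0.0, 0.0], [0.1, 0.0], [0.0, 0.2], [1.0, 1.0], [1.1, 0.9]])
y = np.array([0, 0, 1, 1, 1])
posterior = knn_posterior(np.array([0.05, 0.05]), X, y, k=3, n_classes=2)
```

Replacing the Euclidean norm by a learned metric changes which points fall into $N(x_0)$, which is exactly where metric learning enters the classifier.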

Local Adaptive Distance Metric

Learning

Assumption of KNN

Pr(y|x) in the local NN is constant or smooth

However, this is not necessarily true!

Near class boundaries

Irrelevant dimensions

Modify the local neighborhood with a distance metric

Elongate the distance along the dimensions where

the class labels change rapidly

Squeeze the distance along the dimensions that are

almost independent from the class labels

Local Feature Relevance

[J. Friedman,1994]

Assume the least-squares estimate for predicting $f(x)$ is

$$Ef = \int f(x)\, p(x)\, dx$$

Conditioned on $x_i = z$, the least-squares estimate of $f(x)$ is

$$E[f \mid x_i = z] = \int f(x)\, p(x \mid x_i = z)\, dx, \qquad p(x \mid x_i = z) = \frac{p(x)\, \delta(x_i - z)}{\int p(x')\, \delta(x'_i - z)\, dx'}$$

The improvement in prediction error from knowing $x_i = z$:

$$I_i^2(z) = E[(f(x) - Ef)^2 \mid x_i = z] - E[(f(x) - E(f(x) \mid x_i = z))^2 \mid x_i = z] = (Ef - E[f \mid x_i = z])^2$$

Consider $z = (z_1, \ldots, z_m)$. A measure of the relative influence of the $i$th input variable on the variation of $f(x)$ at $x = z$ is given by

$$r_i^2(z) = \frac{I_i^2(z_i)}{\sum_{k=1}^{m} I_k^2(z_k)}$$

Locally Adaptive Feature Relevance

Analysis [C. Domeniconi, 2002]

Use a Chi-squared distance analysis to compute metric for

producing a neighborhood, in which

The posterior probabilities are approximately constant

Highly adaptive to query locations

Chi-squared distance between the true and estimated posterior at the test point $x_0$:

$$r(X, x_0) = \sum_{j=1}^{J} \frac{[p(j \mid X) - p(j \mid x_0)]^2}{p(j \mid x_0)}$$

Use the Chi-squared distance for feature relevance: it tells to what extent the $i$th dimension can be relied on for predicting $p(j \mid x_0)$

Local Relevance Measure

in ith Dimension

$r_i(z)$ measures the distance between $\Pr(j \mid z)$ and the conditional expectation of $\Pr(j \mid x)$ at location $z$

Calculate $r_i(z)$ for each point $z$ in the neighborhood of $x_0$

$$r_i(z) = \sum_{j=1}^{J} \frac{[\Pr(j \mid z) - \Pr(j \mid x_i = z_i)]^2}{\Pr(j \mid x_i = z_i)}$$

$\Pr(j \mid x_i = z_i) = E(\Pr(j \mid x) \mid x_i = z_i)$ is a conditional expectation of $p(j \mid x)$

The closer $\Pr(j \mid x_i = z_i)$ is to $p(j \mid z)$, the more information the $i$th dimension provides for predicting $p(j \mid z)$

Locally Adaptive Feature

Relevance Analysis

A local relevance measure in dimension $i$:

$$\bar{r}_i(x_0) = \frac{1}{K} \sum_{z \in N(x_0)} r_i(z)$$

$N(x_0)$ is the neighborhood of $x_0$

Relative relevance:

$$w_i(x_0) = \frac{R_i(x_0)^t}{\sum_{l=1}^{q} R_l(x_0)^t}, \quad \text{where } R_i(x_0) = \max_{j=1,\ldots,q} \bar{r}_j(x_0) - \bar{r}_i(x_0)$$

$t = 1$ or $2$ corresponds to linear and quadratic weighting.

Weighted distance:

$$D(x, y) = \sqrt{\sum_{i=1}^{q} w_i (x_i - y_i)^2}$$
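The step from averaged relevance values to a weighted distance can be sketched as follows. The averaged relevances `r_bar` are taken as given here (estimating them requires the posterior estimates above), the function names are hypothetical, and the square-root form of the weighted distance is assumed.

```python
import numpy as np

def relevance_weights(r_bar, t=1):
    """Turn averaged relevance values into normalized weights.

    A small r_bar[i] means dimension i is informative, so R_i = max_j r_bar[j] - r_bar[i]
    is large for informative dimensions. Assumes the entries of r_bar are not all equal.
    """
    R = np.max(r_bar) - r_bar
    Rt = R ** t                      # t = 1: linear weighting, t = 2: quadratic weighting
    return Rt / Rt.sum()

def weighted_distance(x, y, w):
    """Weighted Euclidean distance with per-dimension weights w."""
    return float(np.sqrt(np.sum(w * (x - y) ** 2)))

r_bar = np.array([0.1, 0.5, 0.9])    # illustrative values only
w = relevance_weights(r_bar, t=1)
d = weighted_distance(np.zeros(3), np.array([3.0, 0.0, 5.0]), w)
```

In this toy case the third dimension has the largest $\bar{r}_i$ and receives weight zero, so the large difference along it does not contribute to the distance.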

Local Linear Discriminative Analysis

[T. Hastie et al. 1996]

LDA finds the principal eigenvectors of the matrix $T = S_w^{-1} S_b$

to keep patterns from the same class close

to separate patterns from different classes

$S_b$: the between-class covariance matrix

$S_w$: the within-class covariance matrix

LDA metric: stacking the principal eigenvectors of $T$ together

Local Linear

Discriminative Analysis

Need local adaptation of the nearest neighbor metric

Initialize $\Sigma$ as the identity matrix

Given a testing point $x_0$, iterate the two steps below:

Estimate $S_b$ and $S_w$ based on the local neighborhood of $x_0$ measured by $\Sigma$

Form a local metric behaving like the LDA metric

$$\Sigma = S_w^{-1/2} [S_w^{-1/2} S_b S_w^{-1/2} + \epsilon I] S_w^{-1/2}$$

$\epsilon$ is a small tuning parameter to prevent neighborhoods extending to infinity
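A single metric update of this kind can be sketched as below. This is a simplified illustration under stated assumptions: the points passed in are treated as the current neighborhood of $x_0$ with equal weights (the original method weights neighbors by distance), $S_w$ is assumed to be invertible, and the function name `local_lda_metric` is hypothetical.

```python
import numpy as np

def local_lda_metric(X, y, eps=1.0):
    """One update: S_w^{-1/2} [S_w^{-1/2} S_b S_w^{-1/2} + eps I] S_w^{-1/2}."""
    n, m = X.shape
    mean = X.mean(axis=0)
    S_w = np.zeros((m, m))
    S_b = np.zeros((m, m))
    for c in np.unique(y):
        Xc = X[y == c]
        pi_c = len(Xc) / n                                   # class proportion
        mc = Xc.mean(axis=0)
        S_w += pi_c * np.cov(Xc, rowvar=False, bias=True)    # within-class scatter
        S_b += pi_c * np.outer(mc - mean, mc - mean)         # between-class scatter
    vals, vecs = np.linalg.eigh(S_w)
    S_w_inv_sqrt = (vecs / np.sqrt(vals)) @ vecs.T           # symmetric inverse square root
    B_star = S_w_inv_sqrt @ S_b @ S_w_inv_sqrt
    return S_w_inv_sqrt @ (B_star + eps * np.eye(m)) @ S_w_inv_sqrt

rng = np.random.default_rng(0)
X = np.vstack([rng.normal([-2.0, 0.0], 1.0, size=(50, 2)),
               rng.normal([2.0, 0.0], 1.0, size=(50, 2))])
y = np.repeat([0, 1], 50)
Sigma = local_lda_metric(X, y, eps=1.0)
```

Because the two class centroids differ along the first axis, the resulting metric puts more weight on that axis, which shrinks the neighborhood in the direction where class labels change.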

Local Linear Discriminative Analysis

Local $S_b$ shows the inconsistency of the class centroids

The estimated metric

shrinks the neighborhood in directions in which the local class centroids differ, to produce a neighborhood in which the class centroids coincide

shrinks neighborhoods in directions orthogonal to these local decision boundaries, and elongates them parallel to the boundaries.

Neighborhood Components Analysis

[J. Goldberger et al. 2005]

NCA learns a Mahalanobis distance metric for the KNN classifier by maximizing the leave-one-out cross-validation accuracy.

The probability of classifying $x_i$ correctly is a weighted count involving pairwise distances:

$$p_i = \sum_{j \in C_i} p_{ij}$$

Here $C_i = \{j \mid c_i = c_j\}$ and

$$p_{ij} = \frac{\exp(-\|A x_i - A x_j\|^2)}{\sum_{k \ne i} \exp(-\|A x_i - A x_k\|^2)}$$

The expected number of correctly classified points:

$$f(A) = \sum_{i=1}^{n} p_i$$

Drawbacks: overfitting and scalability, since the number of parameters is quadratic in the number of features.
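The NCA objective can be evaluated directly from its definition. The sketch below only computes $f(A)$ for a given $A$ and does not perform the gradient-based optimization; the function name `nca_objective` and the toy data are illustrative, and very large distances could underflow in this naive form.

```python
import numpy as np

def nca_objective(A, X, labels):
    """Expected number of correctly classified points under the soft neighbor rule."""
    Z = X @ A.T                                              # rows are A x_i
    sq = np.sum((Z[:, None, :] - Z[None, :, :]) ** 2, axis=2)
    np.fill_diagonal(sq, np.inf)                             # exclude k = i (exp(-inf) = 0)
    P = np.exp(-sq)
    P /= P.sum(axis=1, keepdims=True)                        # p_ij, each row sums to 1
    same = labels[:, None] == labels[None, :]                # j in C_i
    return float(np.sum(P * same))

X = np.array([[0.0, 0.0], [0.0, 1.0], [5.0, 0.0], [5.0, 1.0]])
labels = np.array([0, 0, 1, 1])
f_good = nca_objective(np.eye(2), X, labels)                          # keeps the separating axis
f_bad = nca_objective(np.array([[0.0, 0.0], [0.0, 1.0]]), X, labels)  # discards it
```

A transformation that keeps the class-separating direction scores close to $n$, while one that projects it away scores much lower.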

RCA [N. Shental et al. 2002]

Constructs a Mahalanobis distance metric based on a sum of

in-chunklet covariance matrices

Chunklet: a set of data points that share the same but unknown class label

(Figure legend: unlabeled data, chunklet data, labeled data)

Sum of in-chunklet covariance matrices for $p$ points in $k$ chunklets:

$$\hat{C} = \frac{1}{p} \sum_{j=1}^{k} \sum_{i=1}^{n_j} (x_{ji} - \hat{m}_j)(x_{ji} - \hat{m}_j)^T$$

where chunklet $j$ is $\{x_{ji}\}_{i=1}^{n_j}$, with mean $\hat{m}_j$

Apply the linear transformation

$$y = \hat{C}^{-1/2} x$$
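A compact sketch of the RCA transformation, assuming each chunklet is given as an array with one point per row and that the in-chunklet covariance is full rank (the function names and the synthetic data are illustrative):

```python
import numpy as np

def chunklet_covariance(chunklets):
    """Average in-chunklet covariance over all p points."""
    p = sum(len(c) for c in chunklets)
    m = chunklets[0].shape[1]
    C = np.zeros((m, m))
    for c in chunklets:
        centered = c - c.mean(axis=0)          # subtract the chunklet mean
        C += centered.T @ centered
    return C / p

def rca_transform(chunklets):
    """Whitening matrix C^{-1/2} built from the in-chunklet covariance."""
    vals, vecs = np.linalg.eigh(chunklet_covariance(chunklets))
    return (vecs / np.sqrt(vals)) @ vecs.T

rng = np.random.default_rng(0)
chunklets = [rng.normal(size=(20, 2)) * [3.0, 1.0] + [0.0, 0.0],
             rng.normal(size=(20, 2)) * [3.0, 1.0] + [10.0, 5.0]]
W = rca_transform(chunklets)
transformed = [c @ W.T for c in chunklets]     # y = C^{-1/2} x for every point
C_after = chunklet_covariance(transformed)
```

After the transformation the in-chunklet covariance is the identity, so directions of large within-class variability are scaled down.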

Information maximization

under chunklet constraints

[A. Bar-Hillel et al., 2003]

Maximizes the mutual information $I(X, Y)$

Constraints: within-chunklet compactness

$$\max_{f \in F} I(X, Y) \quad \text{s.t.} \quad \frac{1}{p} \sum_{j=1}^{k} \sum_{i=1}^{n_j} \|y_{ji} - m_j^y\|^2 \le K \quad (*)$$

$m_j^y$ is the transformed mean in the $j$th chunklet.

$K$ is a threshold constant.

Let $B = A^T A$; (*) can be further written as

$$\max_{B} |B| \quad \text{s.t.} \quad \frac{1}{p} \sum_{j=1}^{k} \sum_{i=1}^{n_j} \|x_{ji} - m_j\|_B^2 \le K, \quad B \succ 0$$

RCA algorithm applied to

synthetic Gaussian data

(a) The fully labeled data set with 3 classes.

(b) Same data unlabeled; classes' structure is less evident.

(c) The set of chunklets

(d) The centered chunklets, and their empirical covariance.

(e) The RCA transformation applied to the chunklets. (centered)

(f) The original data after applying the RCA transformation.


Unsupervised Distance Metric Learning

Most dimension reduction approaches learn a distance metric without label information, e.g. PCA.

I will present five methods for dimensionality reduction.

| | Linear | Nonlinear |
| --- | --- | --- |
| Global | PCA, MDS | ISOMAP |
| Local | | LLE, Laplacian Eigenmap |

A Unified Framework for Dimension Reduction

Solution 1

Solution 2

Dimensionality Reduction Algorithms

PCA finds the subspace that best preserves the variance of the data.

MDS finds the subspace that best preserves the interpoint distances.

Isomap finds the subspace that best preserves the geodesic

interpoint distances. [Tenenbaum et al, 2000].

LLE finds the subspace that best preserves the local linear structure

of the data [Roweis and Saul, 2000].

Laplacian Eigenmap finds the subspace that best preserves local

neighborhood information in the adjacency graph [M. Belkin and P.

Niyogi,2003].

Multidimensional Scaling (MDS)

MDS finds the rank $m$ projection that best preserves the inter-point distances given by matrix $D$

Convert distances to inner products: $B = \tau(D) = X^T X$

Calculate $X$:

$[V_{MDS}, \Lambda_{MDS}] = \mathrm{eig}(B)$

$X = V_{MDS} (\Lambda_{MDS})^{1/2}$

Rank $m$ projection $Y$ closest to $X$:

$Y = V_{MDS}^{m} (\Lambda_{MDS}^{m})^{1/2}$
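The MDS steps can be sketched as follows, assuming that the conversion from distances to inner products is the usual double centering of the squared distance matrix and that each row of the result is one embedded point (the function name `classical_mds` is illustrative):

```python
import numpy as np

def classical_mds(D, m):
    """Embed n points in m dimensions from an n x n distance matrix D."""
    n = D.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n            # centering matrix
    B = -0.5 * J @ (D ** 2) @ J                    # inner-product (Gram) matrix
    vals, vecs = np.linalg.eigh(B)                 # eigenvalues in ascending order
    top = np.argsort(vals)[::-1][:m]               # indices of the m largest eigenvalues
    lam = np.clip(vals[top], 0.0, None)            # guard against tiny negative values
    return vecs[:, top] * np.sqrt(lam)             # V_m * Lambda_m^{1/2}

rng = np.random.default_rng(0)
points = rng.normal(size=(6, 2))
D = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=2)
Y = classical_mds(D, 2)
D_rec = np.linalg.norm(Y[:, None, :] - Y[None, :, :], axis=2)
```

For Euclidean input distances the embedding reproduces the original inter-point distances, up to a rotation, reflection and translation of the point set.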

Given the distance matrix among

cities, MDS produces this map:

PCA (Principal Component Analysis)

PCA finds the subspace that best preserves the data variance.

$\Sigma = \mathrm{Var}(X)$

$[V_{PCA}, \Lambda_{PCA}] = \mathrm{eig}(\Sigma)$

PCA projection of $X$ with rank $m$:

$Y = V_{PCA}^{m} X$

PCA vs. MDS

$V_{PCA} = X V_{MDS}$, $\Lambda_{PCA} = \Lambda_{MDS}$, $Y_{PCA} = (\Lambda_{PCA})^{1/2}\, Y_{MDS}$

In the Euclidean case, MDS only differs from PCA by starting with $D$ and calculating $X$.

Isometric Feature Mapping (ISOMAP)

[Tenenbaum et al, 2000]

Geodesic: the shortest curve on a manifold that connects two points on the manifold

e.g. on a sphere, geodesics are great circles

Geodesic distance: length of the geodesic

Points that are far apart in geodesic distance can appear close in Euclidean distance


ISOMAP

Take a distance matrix as input

Construct a weighted graph G based on neighborhood relations

Estimate pairwise geodesic distance by

“a sequence of short hops” on G

Apply MDS to the geodesic distance matrix
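The geodesic estimation step can be sketched with a k-nearest-neighbor graph and an all-pairs shortest-path computation. The sketch uses a vectorized Floyd-Warshall update, which is one possible choice and cubic in the number of points; the function name `geodesic_distances` and the toy data are illustrative, and points with zero distance to each other are assumed not to occur.

```python
import numpy as np

def geodesic_distances(D, k):
    """Approximate geodesic distances by shortest paths on a symmetric kNN graph."""
    n = D.shape[0]
    G = np.full((n, n), np.inf)
    np.fill_diagonal(G, 0.0)
    nearest = np.argsort(D, axis=1)[:, 1:k + 1]    # skip column 0, the point itself
    for i in range(n):
        G[i, nearest[i]] = D[i, nearest[i]]        # edge weights are the input distances
        G[nearest[i], i] = D[i, nearest[i]]        # keep the graph undirected
    for mid in range(n):                           # Floyd-Warshall: allow hops through mid
        G = np.minimum(G, G[:, [mid]] + G[[mid], :])
    return G

angles = np.deg2rad([0.0, 60.0, 120.0, 180.0])     # four points on a half circle
pts = np.column_stack([np.cos(angles), np.sin(angles)])
D = np.linalg.norm(pts[:, None, :] - pts[None, :, :], axis=2)
G = geodesic_distances(D, k=2)
```

The two end points of the half circle are 2 apart in Euclidean distance, but the graph path between them follows the curve and is longer. The resulting matrix `G` is what would be passed on to MDS.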

Locally Linear Embedding (LLE)

[Roweis and Saul, 2000]

LLE finds the subspace that best preserves the local

linear structure of the data

Assumption: manifold is locally “linear”

Each sample in the input space is a linearly weighted

average of its neighbors.

A good projection should best preserve this geometric

locality property

LLE

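$W$: a linear representation of every data point by its neighbors

Choose $W$ by minimizing the reconstruction error

$$\varepsilon(W) = \sum_{i=1}^{n} \Big\| x_i - \sum_{j=1}^{K} W_{ij} x_{ij} \Big\|^2$$

$$\text{s.t.} \quad \sum_{j=1}^{n} W_{ij} = 1, \; \forall x_i; \qquad W_{ij} = 0 \text{ if } x_j \text{ is not a neighbor of } x_i$$

Calculate a neighborhood preserving mapping $Y$ by minimizing the reconstruction error

$$\Phi(Y) = \sum_{i=1}^{n} \Big\| y_i - \sum_{j=1}^{K} W_{ij}^* y_{ij} \Big\|^2, \quad \text{where } W^* = \arg\min_{W} \varepsilon(W)$$

$Y$ is given by the eigenvectors of the $m$ lowest nonzero eigenvalues of the matrix $(I - W)^T (I - W)$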

Laplacian Eigenmap

[M. Belkin and P. Niyogi,2003]

Laplacian Eigenmap finds the subspace that best preserves local

neighborhood information in adjacency graph

Graph Laplacian: given a graph $G$ with weight matrix $W$

$D$ is a diagonal matrix with $D_{ii} = \sum_j W_{ji}$

$L = D - W$ is the graph Laplacian

Detailed steps:

Construct adjacency graph $G$.

Weight the edges: $W_{ij} = 1$ if nodes $i$ and $j$ are connected, and 0 otherwise.

Generalized eigen-decomposition: $Lf = \lambda D f$

Embedding: eigenvectors with the $m$ smallest nonzero eigenvalues
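A small NumPy sketch of these steps, keeping the eigenvectors with the smallest nonzero eigenvalues as in Belkin and Niyogi's formulation. It assumes a connected graph without isolated nodes, and it solves the generalized problem through the equivalent symmetric form $D^{-1/2} L D^{-1/2}$; the function name `laplacian_eigenmap` is illustrative.

```python
import numpy as np

def laplacian_eigenmap(W, m):
    """Return the m smallest nonzero eigenvalues and eigenvectors of L f = lambda D f."""
    d = W.sum(axis=0)                                  # D_ii = sum_j W_ji
    L = np.diag(d) - W                                 # graph Laplacian
    d_inv_sqrt = 1.0 / np.sqrt(d)
    L_sym = d_inv_sqrt[:, None] * L * d_inv_sqrt[None, :]
    vals, vecs = np.linalg.eigh(L_sym)                 # ascending eigenvalues
    F = d_inv_sqrt[:, None] * vecs                     # map back: f = D^{-1/2} g
    return vals[1:m + 1], F[:, 1:m + 1]                # drop the trivial eigenvalue 0

# Path graph with four nodes: 0 - 1 - 2 - 3
W = np.array([[0.0, 1.0, 0.0, 0.0],
              [1.0, 0.0, 1.0, 0.0],
              [0.0, 1.0, 0.0, 1.0],
              [0.0, 0.0, 1.0, 0.0]])
lam, F = laplacian_eigenmap(W, m=1)
L = np.diag(W.sum(axis=0)) - W
D = np.diag(W.sum(axis=0))
residual = L @ F[:, 0] - lam[0] * (D @ F[:, 0])
steps = np.diff(F[:, 0])
```

For the path graph the one-dimensional embedding orders the nodes along the path, so neighboring nodes stay next to each other.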

A Unified Framework for

Dimension Reduction Algorithms

All use an eigendecomposition to obtain a lower-dimensional

embedding of data lying on a non-linear manifold.

Normalize the affinity matrix $H$ to obtain $\hat{H}$

eig($\hat{H}$): the $m$ largest positive eigenvalues $\lambda_t$ and eigenvectors $v_t$

The embedding of $x_i$ has two alternative solutions

Solution 1 (MDS & Isomap): $e_i$ with $e_{it} = \sqrt{\lambda_t}\, v_{ti}$

$\langle e_i, e_j \rangle$ is the best approximation of $\hat{H}_{ij}$ in the squared error sense.

Solution 2 (LLE & Laplacian Eigenmap): $y_i$ with $y_{it} = v_{ti}$


Distance Metric Learning based on SVM

Large Margin Nearest Neighbor Based Distance Metric

Learning

Objective Function

Reformulation as SDP

Cast Kernel Margin Maximization into a SDP Problem

Maximum Margin

Cast into SDP problem

Apply to Hard Margin and Soft Margin

Large Margin Nearest Neighbor

Based Distance Metric Learning

[K. Weinberger et al., 2006]

Learns a Mahalanobis distance metric in the kNN classification setting by SDP, such that

the k nearest neighbors belong to the same class

examples from different classes are separated by a large margin

After training

k=3 target neighbors lie within a smaller radius

differently labeled inputs lie outside this smaller radius with a margin of at least one unit distance.

Large Margin Nearest Neighbor

Based Distance Metric Learning

Cost function:

$$\varepsilon(L) = \sum_{ij} \eta_{ij} \|L(x_i - x_j)\|^2 + C \sum_{ijl} \eta_{ij} (1 - y_{il}) \big[ 1 + \|L(x_i - x_j)\|^2 - \|L(x_i - x_l)\|^2 \big]_+$$

$[z]_+ = \max(z, 0)$ denotes the standard hinge loss, and the constant $C > 0$.

$y_{ij} \in \{0, 1\}$ indicates whether or not the labels $y_i$ and $y_j$ match

$\eta_{ij} \in \{0, 1\}$ indicates whether $x_j$ is a target neighbor of $x_i$

Penalize large distances between each input and its target neighbors

The hinge loss is incurred by differently labeled inputs whose

distances do not exceed the distance from input x i to any of its target

neighbors by one absolute unit of distance

-> do not threaten to invade each other’s neighborhoods
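The cost function can be evaluated directly for a given linear map $L$. The sketch below is a plain evaluation, not the SDP solver; the target neighbors are passed in as index lists (one list per point), and the function name `lmnn_cost` and the one-dimensional toy data are illustrative.

```python
import numpy as np

def lmnn_cost(L, X, y, targets, C=1.0):
    """Pull term over target neighbors plus C times the hinge (push) term."""
    Z = X @ L.T                                               # rows are L x_i
    sq = np.sum((Z[:, None, :] - Z[None, :, :]) ** 2, axis=2)
    pull, push = 0.0, 0.0
    for i, neigh in enumerate(targets):
        others = np.flatnonzero(y != y[i])                    # differently labeled inputs
        for j in neigh:                                       # target neighbors of x_i
            pull += sq[i, j]
            push += np.sum(np.maximum(0.0, 1.0 + sq[i, j] - sq[i, others]))
    return pull + C * push

X = np.array([[0.0], [1.0], [1.5], [5.0]])
y = np.array([0, 0, 1, 1])
targets = [[1], [0], [3], [2]]                                # one target neighbor each
cost = lmnn_cost(np.eye(1), X, y, targets, C=1.0)
```

In this example the pull term is 26.5 and the push term is 25.75: the point at 1.5 sits close to the other class, so it both violates margins itself and intrudes on the neighborhood of the point at 1.0.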

Reformulation as SDP

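Let $\|L(x_i - x_j)\|^2 = (x_i - x_j)^T M (x_i - x_j)$, and introduce slack variables $\xi_{ijl}$

The resulting SDP is:

$$\min_{M} \sum_{ij} \eta_{ij} (x_i - x_j)^T M (x_i - x_j) + C \sum_{ijl} \eta_{ij} (1 - y_{il})\, \xi_{ijl}$$

$$\text{s.t.} \quad (x_i - x_l)^T M (x_i - x_l) - (x_i - x_j)^T M (x_i - x_j) \ge 1 - \xi_{ijl}, \quad \xi_{ijl} \ge 0, \quad M \succeq 0$$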

Cast Kernel Margin Maximization

into a SDP Problem

[G. R. G. Lanckriet et al, 2004]

Maximum margin: the decision boundary maximizes the minimum distance to the closest training point.

Hard Margin: linearly separable

Soft Margin: not linearly separable

The performance measure, generalized from the dual solutions of the different margin maximization problems:

$$w_{C,\tau}(K) = \max_{\alpha} \{ 2\alpha^T e - \alpha^T (G(K) + \tau I)\alpha : C \ge \alpha \ge 0, \; \alpha^T y = 0 \}$$

with $\tau \ge 0$, on the training data w.r.t. $K$. $G$ is the Gram matrix.

Cast into SDP Problem

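$$\min_{K \succeq 0} w_{C,\tau}(K) \quad \text{s.t.} \quad \mathrm{trace}(K) = c$$

can be cast as

$$\min_{K, t, \lambda, \nu, \delta} t \quad \text{s.t.} \quad \mathrm{trace}(K) = c, \; K \succeq 0, \; \nu \ge 0, \; \delta \ge 0, \; \begin{pmatrix} G(K_{tr}) + \tau I_{n_{tr}} & e + \nu - \delta + \lambda y \\ (e + \nu - \delta + \lambda y)^T & t - 2C\delta^T e \end{pmatrix} \succeq 0$$

Hard Margin

$\min_{K \succeq 0} w(K_{tr})$ s.t. $\mathrm{trace}(K) = c$. Here $w(K_{tr}) = w_{\infty,0}(K_{tr})$

1-norm soft margin

$\min_{K \succeq 0} w_{S1}(K_{tr})$ s.t. $\mathrm{trace}(K) = c$. Here $w_{S1}(K_{tr}) = w_{C,0}(K_{tr})$

2-norm soft margin

$\min_{K \succeq 0} w_{S2}(K_{tr})$ s.t. $\mathrm{trace}(K) = c$. Here $w_{S2}(K_{tr}) = w_{\infty,\tau}(K_{tr})$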


Kernel Methods for

Distance Metrics Learning

Learning a good kernel is equivalent to distance metric

learning

Kernel Alignment

Kernel Alignment with SDP

Learning with Idealized Kernel

Ideal Kernel

The Idealized Kernel

Kernel Alignment

[N. Cristianini,2001]

A measure of similarity between two kernel functions or between

a kernel and a target function

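The inner product between two kernel matrices based on kernels $k_1$ and $k_2$:

$$\langle K_1, K_2 \rangle_F = \sum_{i,j=1}^{n} K_1(x_i, x_j)\, K_2(x_i, x_j)$$

The alignment of $K_1$ and $K_2$ w.r.t. $S$:

$$\hat{A}(S, k_1, k_2) = \frac{\langle K_1, K_2 \rangle_F}{\sqrt{\langle K_1, K_1 \rangle_F \, \langle K_2, K_2 \rangle_F}}$$

Measure the degree of agreement between a kernel and a given learning task. With $K_2 = yy^T$:

$$\hat{A}(S, k_1, k_2) = \frac{\langle K_1, yy^T \rangle_F}{m \sqrt{\langle K_1, K_1 \rangle_F}}, \quad y \in \{\pm 1\}^m$$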
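Kernel alignment is a normalized Frobenius inner product, so it takes only a few lines to compute. In the sketch below the function name `alignment`, the linear kernel and the toy labels are illustrative choices.

```python
import numpy as np

def alignment(K1, K2):
    """Frobenius inner product of K1 and K2, normalized by their Frobenius norms."""
    inner = np.sum(K1 * K2)
    return float(inner / np.sqrt(np.sum(K1 * K1) * np.sum(K2 * K2)))

y = np.array([1, 1, -1, -1])
target = np.outer(y, y)                        # label matrix y y^T
X = np.array([[1.0, 0.0], [0.9, 0.1], [-1.0, 0.0], [-0.8, -0.2]])
K_lin = X @ X.T                                # linear kernel matrix
a_self = alignment(target, target)             # a matrix is perfectly aligned with itself
a_lin = alignment(K_lin, target)               # agreement between the kernel and the labels
```

For labels in $\{\pm 1\}^m$ the norm of $yy^T$ equals $m$, which gives the simplified denominator in the task-alignment formula.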

Kernel Alignment with SDP

[G. R. G. Lanckriet et al, 2004]

Optimizing the alignment between a set of labels and a

kernel matrix using SDP in a transductive setting.

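$$K = \begin{pmatrix} K_{tr} & K_{tr,t} \\ K_{tr,t}^T & K_t \end{pmatrix}, \quad \text{where } K_{ij} = \langle \Phi(x_i), \Phi(x_j) \rangle, \; i, j = 1, \ldots, n_{tr} + n_t$$

Optimizing an objective function over the training data block leads to automatic tuning of the testing data block

$$\max_{K} \hat{A}(S, K_{tr}, yy^T) \quad \text{s.t.} \quad K \succeq 0, \; \mathrm{trace}(K) = 1$$

Introducing $A$ with $K^T K \preceq A$ and $\mathrm{trace}(A) \le 1$, this reduces to

$$\max_{A, K} \langle K_{tr}, yy^T \rangle_F \quad \text{s.t.} \quad \mathrm{trace}(A) \le 1, \; K \succeq 0, \; \begin{pmatrix} A & K^T \\ K & I_n \end{pmatrix} \succeq 0$$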

Learning with Idealized Kernel

[J. T. Kwok and I.W. Tsang,2003]

Idealize a given kernel by making it more similar to the

ideal kernel matrix.

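Ideal kernel:

$$k^*(x_i, x_j) = \begin{cases} 1, & y(x_i) = y(x_j) \\ 0, & y(x_i) \ne y(x_j) \end{cases}$$

Idealized kernel: $\tilde{k} = k + \frac{\gamma}{2} k^*$

The alignment of $\tilde{k}$ will be greater than that of $k$ if $\gamma > -\frac{\langle K, K^* \rangle}{n_+^2 + n_-^2}$, where $n_+$, $n_-$ are the numbers of positive and negative samples.

Under the original distance metric $M$:

$k(x_i, x_j) = x_i^T M x_j$, $M \succeq 0$; $d_{ij}^2 = (x_i - x_j)^T M (x_i - x_j)$

Under the idealized kernel:

$$\tilde{d}_{ij}^2 = \tilde{K}_{ii} + \tilde{K}_{jj} - 2\tilde{K}_{ij} = \begin{cases} d_{ij}^2, & y_i = y_j \\ d_{ij}^2 + \gamma, & y_i \ne y_j \end{cases}$$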

We modify $\tilde{d}_{ij}^2 = (x_i - x_j)^T A A^T (x_i - x_j)$

Search for a matrix $A$ under which

different classes: pulled apart by an amount of at least $\gamma$

same class: getting closer together

$$\tilde{d}_{ij}^2 \le d_{ij}^2 \;\; (y_i = y_j), \qquad \tilde{d}_{ij}^2 \ge d_{ij}^2 + \gamma \;\; (y_i \ne y_j)$$

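Introduce slack variables for error tolerance

$$\min_{B, \gamma, \xi_{ij}} \frac{1}{2} \|B\|^2 + \frac{C_S}{N_S} \sum_{(x_i, x_j) \in S} \xi_{ij} + \frac{C_D}{N_D} \sum_{(x_i, x_j) \in D} \xi_{ij}, \quad \text{where } B = AA^T$$

$$\text{s.t.} \quad d_{ij}^2 \ge \tilde{d}_{ij}^2 - \xi_{ij}, \; (x_i, x_j) \in S; \qquad \tilde{d}_{ij}^2 - d_{ij}^2 \ge \gamma - \xi_{ij}, \; (x_i, x_j) \in D; \qquad \xi_{ij} \ge 0, \; \gamma \ge 0$$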

Conclusions

A comprehensive review covering:

Supervised distance metric learning

Unsupervised distance metric learning

Maximum margin based distance metric learning

approaches

Kernel methods towards distance metrics

Challenge:

Unsupervised distance metric learning.

Going local in a principled manner.

Learn an explicit nonlinear distance metric in the local

sense.

Efficiency issue.