W4118 Operating Systems. Instructor: Junfeng Yang


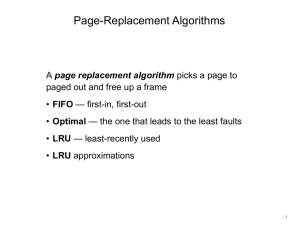

Last lecture: VM Implementation
• Operations OS + hardware must provide to support virtual memory
  • Page fault
    – Locate page
    – Bring page into memory
  • Continue process
• OS decisions
  • When to bring an on-disk page into memory?
    – Demand paging
    – Request paging
    – Prepaging
  • What page to throw out to disk?
    – OPT, RANDOM, FIFO, LRU, MRU

Today
• Virtual Memory Implementation
  • Implementing LRU
  • How to approximate LRU efficiently?
• Linux Memory Management

Implementing LRU: hardware
• A counter for each page
• Every time a page is referenced, save the system clock into the page’s counter
• Page replacement: scan through pages to find the one with the oldest clock
• Problem: have to search all pages/counters!

Implementing LRU: software
• A doubly linked list of pages
• Every time a page is referenced, move it to the front of the list
• Page replacement: remove the page from the back of the list
  • Avoids scanning all pages
• Problem: too expensive
  • Requires 6 pointer updates for each page reference
  • High contention on multiprocessors

Example software LRU implementation [figure]

LRU: Concept vs. Reality
• LRU is considered to be a reasonably good algorithm
• Problem is in implementing it efficiently
  • Hardware implementation: counter per page, copied on every memory reference; have to search pages on page replacement to find the oldest
  • Software implementation: no search, but pointer swaps on each memory reference, high contention
• In practice, settle for an efficient approximation of LRU
  • Find an old page, but not necessarily the oldest
  • LRU is an approximation anyway, so approximate more

Clock (second-chance) Algorithm
• Goal: remove a page that has not been referenced recently
  • A good LRU-approximating algorithm
• Idea: combine FIFO and LRU
  • A reference bit per page
  • Memory reference: hardware sets the bit to 1
  • Page replacement: OS finds a page with the reference bit cleared
  • OS traverses pages, clearing bits over time

Clock Algorithm Implementation
• OS circulates through pages, clearing reference bits, until it finds a page whose reference bit is 0
• Keep pages in a circular list = clock
• Pointer to next victim = clock hand

Second-Chance (clock) Page-Replacement Algorithm [figure]

Clock Algorithm
• Reference string: 1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5
• Frame contents (page/reference bit) for four frames, in clock order:
  • after 1, 2, 3, 4 (then hits on 1, 2): 1/1 2/1 3/1 4/1
  • after 5: 5/1 2/0 3/0 4/0
  • after 1: 5/1 1/1 3/0 4/0
  • after 2: 5/1 1/1 2/1 4/0
  • after 3: 5/1 1/1 2/1 3/1
  • after 4: 4/1 1/0 2/0 3/0
  • after 5: 4/1 5/1 2/0 3/0

Clock Algorithm Extension
• Problem of the clock algorithm: it does not differentiate dirty vs. clean pages
• Dirty page: a page that has been modified and needs to be written back to disk
  • More expensive to replace dirty pages than clean pages
  • One extra disk write (5 ms)

Clock Algorithm Extension
• Use the dirty bit to give preference to dirty pages (they stay in memory longer)
• On page reference
  • Read: hardware sets reference bit
  • Write: hardware sets dirty bit
• Page replacement
  • reference = 0, dirty = 0 → victim page
  • reference = 0, dirty = 1 → skip (don’t change)
  • reference = 1, dirty = 0 → reference = 0, dirty = 0
  • reference = 1, dirty = 1 → reference = 0, dirty = 1
  • advance hand, repeat
• If no victim page is found, run the swap daemon to flush unreferenced dirty pages to disk, then repeat

Problem with LRU-based Algorithms
• When memory is too small to hold the recent past, LRU does not handle repeated scans well (data set bigger than memory)
  • e.g. a 4-frame memory with 1 2 3 4 5 1 2 3 4 5 1 2 3 4 5: every reference faults under LRU
• Solution: Most Recently Used (MRU) algorithm
  • Replace most recently used pages
  • Best for repeated scans

Problem with LRU-based Algorithms (cont.)
• LRU ignores frequency
  • Intuition: a frequently accessed page is more likely to be accessed in the future than a page accessed just once
  • Problematic workload: scanning a large data set
    – 1 2 3 1 2 3 1 2 3 1 2 3 … (pages frequently used)
    – 4 5 6 7 8 9 10 11 12 … (pages used just once)
• Solution: track access frequency
  • Least Frequently Used (LFU) algorithm
    – Expensive
  • Approximate LFU:
    – LRU-k: throw out the page with the oldest timestamp of its k’th most recent reference
    – LRU-2Q: don’t move pages based on a single reference; try to differentiate hot and cold pages

Linux Page Replacement Algorithm
• Similar to the LRU-2Q algorithm
• Two LRU lists
  • Active list: hot pages, recently referenced
  • Inactive list: cold pages, not recently referenced
• Page replacement: select from the inactive list
• Transition of a page between active and inactive requires two references or “missing references”

Allocating Memory to Processes
• Split pages among processes
• Split pages among users
• Global allocation

Thrashing Example
• Set of processes frequently referencing 5 pages
• Only 4 frames in physical memory
• System repeats
  • Reference a page not in memory
  • Replace a page in memory with the newly referenced page
• Thrashing: system busy reading and writing instead of executing useful instructions
  • CPU utilization low
• Average memory access time approaches disk access time
  • Illusion breaks: memory appears as slow as disk rather than disk appearing as fast as memory
• Add more processes, thrashing gets worse

Working Set
• Informal definition
  • Collection of pages the process is referencing frequently
  • Collection of pages that must be resident to avoid thrashing
• Methods exist to estimate the working set of a process to avoid thrashing

Memory Management Summary
• “All problems in computer science can be solved by another level of indirection” (David Wheeler)
• Different memory management techniques
  • Contiguous allocation
  • Paging
  • Segmentation
  • Paging + segmentation
  • In practice, hierarchical paging is most widely used; segmentation is losing its popularity
    – Some RISC architectures do not even support segmentation
• Virtual memory
  • OS and hardware exploit locality to provide the illusion of fast memory as large as disk
    – Similar technique used throughout the entire memory hierarchy
  • Page replacement algorithms
    – LRU: past predicts future
    – No silver bullet: choose algorithm based on workload

Current Trends
• Memory management: less critical now
  • Personal computers vs. time-sharing machines
  • Memory is cheap → larger physical memory
• Segmentation becomes less popular
  • Some RISC chips don’t even support segmentation
• Larger page sizes (even multiple page sizes)
  • Better TLB coverage
  • Smaller page tables, fewer pages to manage
  • Internal fragmentation
• Larger virtual address space
  • 64-bit address space
  • Sparse address spaces
• File I/O using the virtual memory system
  • Memory mapped I/O: mmap()

Today
• Virtual Memory Implementation
  • Implementing LRU
  • How to approximate LRU efficiently?
• Linux Memory Management
  • Page replacement
  • Segmentation and Paging
  • Dynamic memory allocation

Page descriptor
• Keeps track of the status of each page frame
  • struct page, include/linux/mm.h
• Each descriptor has two bits relevant to the page replacement policy
  • PG_active: is the page on active_list?
  • PG_referenced: was the page referenced recently?

Memory Zone
• Keeps track of pages in different zones
  • struct zone, include/linux/mmzone.h
  • ZONE_DMA: <16MB
  • ZONE_NORMAL: 16MB-896MB
  • ZONE_HIGHMEM: >896MB
• Two LRU lists of pages
  • active_list
  • inactive_list

Functions
• lru_cache_add*(): add to page cache
• mark_page_accessed(): move pages from inactive to active
• page_referenced(): test if a page is referenced
• refill_inactive_zone(): move pages from active to inactive

When to replace page
• Usually free_more_memory()

Recall: x86 segmentation and paging hardware
• CPU generates logical address
  • Given to segmentation unit
    – Which produces linear addresses
  • Linear address given to paging unit
    – Which generates physical address in main memory
    – Paging units form the equivalent of an MMU

Recall: Linux Process Address Space
• 3G to 4G: kernel space (kernel mode)
  • Process descriptor and kernel-mode stack
• 0 to 3G: user space (user mode)
  • User-mode stack area
  • Shared runtime libraries
  • Task’s code and data
• Kernel space is also mapped into each process’s address space, so going from user mode to kernel mode does not require switching address spaces

Linux Segmentation
• Linux does not use segmentation
  • More portable since some RISC architectures don’t support segmentation
  • Hierarchical paging is flexible enough
• x86 segmentation hardware cannot be disabled, so Linux just hacks the segmentation table
  • arch/i386/kernel/head.S
  • Set base to 0x00000000, limit to 0xffffffff
  • Logical addresses == linear addresses
• Protection
  • Descriptor Privilege Level (DPL) indicates whether we are in privileged mode or user mode
    – User code segment: DPL = 3
    – Kernel code segment: DPL = 0

Linux Paging
• Linux uses paging to translate logical addresses to physical addresses
• Page model splits a linear address into five parts
  • Global dir | Upper dir | Middle dir | Table | Offset

Kernel Address Space Layout
[Figure: kernel linear addresses from 0xC0000000 to 0xFFFFFFFF, in order: physical memory mapping, vmalloc area … vmalloc area, persistent high memory mappings, fix-mapped linear addresses]

Linux Page Table Operations
• include/asm-i386/pgtable.h
• arch/i386/mm/hugetlbpage.c
• Examples
  • mk_pte

TLB Flush Operations
• include/asm-i386/tlbflush.h
• Flushing TLB on x86
  • load cr3: flush all TLB entries
  • invlpg addr: flush a single TLB entry

Linux Page Allocation
• Linux uses a buddy allocator for page allocation
  • Buddy allocator: fast, simple allocation for blocks that are 2^n bytes [Knuth 1968]
• Allocation restrictions: 2^n pages
• Allocation of k pages:
  • Round up to the nearest 2^n
  • Search free lists for a block of the appropriate size
    – Recursively divide larger blocks until reaching a block of the correct size
    – The two halves of a divided block are “buddy” blocks
• Free
  • Recursively coalesce block with its buddy if the buddy is free
• Example: allocate a 256-page block
• mm/page_alloc.c

Advantages and Disadvantages of Buddy Allocation
• Advantages
  • Fast and simple compared to general dynamic memory allocation
  • Avoids external fragmentation by keeping free pages contiguous
    – Paging could be used instead, but there are three problems:
      DMA bypasses paging
      Modifying the page table leads to a TLB flush
      Cannot use a “super page” to increase TLB coverage
• Disadvantages
  • Internal fragmentation
    – Allocation of a block of k pages when k != 2^n
    – Allocation of small objects (smaller than a page)

The Linux Slab Allocator
• For objects smaller than a page
• Implemented on top of the page allocator
• Each memory region is called a cache
• Two types of slab allocator
  • Fixed-size slab allocator: cache contains objects of the same size
    – For frequently allocated objects
  • General-purpose slab allocator: caches contain objects of size 2^n
    – For less frequently allocated objects
    – For allocation of an object of size k, round up to the nearest 2^n
• mm/slab.c

Advantages and Disadvantages of slab allocation
• Advantages
  • Fast: no need to allocate and free page frames
    – Allocation: no search for objects of the right size in the fixed-size allocator; simple search in the general-purpose allocator
    – Free: no merge with adjacent free blocks
  • Reduces internal fragmentation: many objects in one page
• Disadvantages
  • Memory overhead for bookkeeping
  • Internal fragmentation
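
To make the slab idea above more concrete, here is a minimal Python sketch of a fixed-size object cache plus the power-of-two rounding used by the general-purpose caches. It is an illustration only, not the code in mm/slab.c: the names `SlabCache` and `size_class`, the 4096-byte page size, and the 32-byte minimum size class are all assumptions made for this example.

```python
PAGE_SIZE = 4096  # assumed page size for this sketch


class SlabCache:
    """Fixed-size object cache: each slab is one page carved into equal slots."""

    def __init__(self, obj_size):
        self.obj_size = obj_size
        self.per_slab = PAGE_SIZE // obj_size  # many objects share one page
        self.free_slots = []                   # stack of (slab index, slot index)
        self.num_slabs = 0

    def _grow(self):
        # Stand-in for asking the page allocator for one more page.
        slab = self.num_slabs
        self.num_slabs += 1
        # Push in reverse so that slot 0 is handed out first.
        self.free_slots.extend((slab, i) for i in reversed(range(self.per_slab)))

    def alloc(self):
        if not self.free_slots:
            self._grow()              # only an empty cache touches the page allocator
        return self.free_slots.pop()  # no search: any free slot has the right size

    def free(self, slot):
        self.free_slots.append(slot)  # no merging with adjacent free blocks


def size_class(k, min_size=32):
    """General-purpose caches: round a request of k bytes up to a power of two."""
    size = min_size                   # hypothetical smallest size class
    while size < k:
        size *= 2
    return size
```

Allocation and free are both constant-time stack operations here, which mirrors the "no search, no merge" advantage listed above; the rounding in `size_class` is where the remaining internal fragmentation comes from.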
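
The buddy allocation steps from the Linux Page Allocation slide (round up to 2^n, split larger blocks, coalesce with the buddy on free) can be sketched as follows. This is a simplified model, not the code in mm/page_alloc.c: the class name `BuddyAllocator`, the use of Python sets as free lists, and the single initial block starting at page 0 are assumptions for illustration.

```python
class BuddyAllocator:
    """Toy buddy allocator over 2**max_order pages, addressed by page number."""

    def __init__(self, max_order):
        self.max_order = max_order
        # free_lists[k] holds the start pages of free blocks of 2**k pages.
        self.free_lists = [set() for _ in range(max_order + 1)]
        self.free_lists[max_order].add(0)  # initially one big free block

    def alloc(self, num_pages):
        # Round the request up to the nearest power of two (order n => 2**n pages).
        order = max(num_pages - 1, 0).bit_length()
        # Search the free lists for the smallest block that is large enough.
        k = order
        while k <= self.max_order and not self.free_lists[k]:
            k += 1
        if k > self.max_order:
            return None  # no block large enough
        start = self.free_lists[k].pop()
        # Recursively halve the block; the upper half becomes a free "buddy".
        while k > order:
            k -= 1
            self.free_lists[k].add(start + (1 << k))
        return start, order

    def free(self, start, order):
        # Coalesce with the buddy for as long as the buddy is also free.
        while order < self.max_order:
            buddy = start ^ (1 << order)  # buddy differs only in bit `order`
            if buddy not in self.free_lists[order]:
                break
            self.free_lists[order].remove(buddy)
            start = min(start, buddy)
            order += 1
        self.free_lists[order].add(start)
```

For the lecture's example, allocating a 256-page block from a 1024-page region splits the region into free blocks of 512 and 256 pages and returns the remaining 256-page block; a 200-page request is also rounded up to 256 pages, which is the internal fragmentation the next slide mentions.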
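
The clock (second-chance) algorithm from the first half of the lecture can be simulated in a few lines. The sketch below assumes four frames and models the hardware-set reference bit with a plain list; the function name `clock_faults` and its return value are choices made for this example, and a real kernel would read the bits from page table entries instead.

```python
def clock_faults(reference_string, num_frames):
    """Simulate the clock algorithm; return (page fault count, final frames)."""
    frames = [None] * num_frames  # circular list of resident pages
    ref_bit = [0] * num_frames    # one reference bit per frame
    hand = 0                      # clock hand: next candidate victim
    faults = 0
    for page in reference_string:
        if page in frames:
            # Hit: on real hardware the MMU would set the reference bit.
            ref_bit[frames.index(page)] = 1
            continue
        faults += 1
        # Sweep the hand, clearing set bits (the "second chance"),
        # until a frame with reference bit 0 is found.
        while ref_bit[hand] == 1:
            ref_bit[hand] = 0
            hand = (hand + 1) % num_frames
        frames[hand] = page
        ref_bit[hand] = 1
        hand = (hand + 1) % num_frames
    return faults, frames


faults, frames = clock_faults([1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5], 4)
print(faults, frames)  # prints: 10 [4, 5, 2, 3]
```

Note how the fault on page 5 clears every reference bit in one sweep and then evicts the frame the hand started on, so in that case the algorithm degenerates to FIFO.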
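
The repeated-scan problem with LRU, and why MRU helps there, can be checked with a small fault counter. This sketch assumes a memory of four frames and a scan over five pages repeated three times; the function name `count_faults` and the `policy` strings are hypothetical, and exact LRU/MRU ordering is tracked with Python's `OrderedDict` rather than any approximation.

```python
from collections import OrderedDict


def count_faults(refs, num_frames, policy):
    """Count page faults for exact LRU or MRU replacement."""
    frames = OrderedDict()  # keys ordered from least to most recently used
    faults = 0
    for page in refs:
        if page in frames:
            frames.move_to_end(page)  # mark as most recently used
            continue
        faults += 1
        if len(frames) == num_frames:
            # last=True evicts the most recently used page (MRU),
            # last=False evicts the least recently used page (LRU).
            frames.popitem(last=(policy == "MRU"))
        frames[page] = True
    return faults


scan = [1, 2, 3, 4, 5] * 3
print(count_faults(scan, 4, "LRU"))  # prints: 15
print(count_faults(scan, 4, "MRU"))  # prints: 7
```

Under LRU every reference faults because the page about to be needed is always the one that was just evicted; MRU keeps most of the scan resident and only faults on the one page that does not fit.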