Virtual Memory and I/O Mingsheng Hong

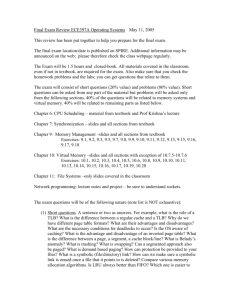

I/O Systems
- Major I/O hardware: hard disks, network adaptors …
- Problems related to I/O systems
  - Various types of hardware: device drivers provide the OS with a unified I/O interface
  - Typically much slower than CPU and memory speed: a system bottleneck
  - Too much CPU involvement in I/O operations

Techniques to Improve I/O Performance
- Buffering, e.g. downloading a file from the network
- DMA
- Caching: CPU cache, TLB, file cache, ...

Other Techniques to Improve I/O Performance
- Virtual memory page remapping (IO-Lite)
  - Allows (cached) files and memory to be shared by different processes without extra data copy
- Prefetching data (Software Prefetching and Caching for TLBs)
  - Prefetches and caches page table entries

Summary of First Paper
- IO-Lite: A Unified I/O Buffering and Caching System (Pai et al., Best Paper of 3rd OSDI, 1999)
- A unified I/O system
  - Uses immutable data buffers to store all I/O data (only one physical copy)
  - Uses VM page remapping
  - Covers IPC, the file system (disk files, file cache), and the network subsystem

Summary of Second Paper
- Software Prefetching and Caching for Translation Lookaside Buffers (Bala et al., 1994)
- A software approach to help reduce TLB misses
- Works well for IPC-intensive systems
- Bigger performance gain for future systems

Features of IO-Lite
- Eliminates redundant data copying
  - Saves CPU work & avoids cache pollution
- Eliminates multiple buffering
  - Saves main memory => improves hit rate of file cache
- Enables cross-subsystem optimizations
  - Caches the Internet checksum
- Supports application-specific cache replacement policies

Related Work before IO-Lite
- I/O APIs should preserve copy semantics
- Memory-mapped files
- Copy-on-write
- Fbufs

Key Data Structures
- Immutable buffers and buffer aggregates

Discussion I
- When we pass a buffer aggregate from process A to process B, how do we efficiently do VM page remapping (modify B's page table entries)?
- Possible approach 1: find any empty entry, and modify the VM address contained in the buffer aggregate
  - Very inefficient
- Possible approach 2: reserve the range of virtual addresses of buffers in the address space of each process
  - Basically limits the total size of buffers. How about dynamically allocated buffers?

Impact of Immutable I/O Buffers
- Copy-on-write optimization
  - Modified values are stored in a new buffer, as opposed to "in-place modification"
- Three situations when the data object is …
  - Completely modified: allocates a new buffer
  - Partially modified (modification localized): chains unmodified and modified portions of data
  - Partially modified (modification not localized): compares the cost of writing an entire object with that of chaining; chooses the cheaper method

Discussion II
- How to measure the two costs?
  - Heuristics needed
  - Fragmented data vs. clustered data
  - Chained data increases reading cost
  - Similar to the shadow page technique used in System R
- Should the cost of retrieving data from the buffer also be considered?

What does IO-Lite do?
- Reduces extra data copy in
  - IPC
  - file system (disk files, file cache)
  - network subsystem
- Makes cross-subsystem optimization possible

IO-Lite and IPC: Operations on Buffers & Aggregates
- When I/O data is transferred
  - Pass related aggregates by value
  - Associated buffers are passed by reference
- When a buffer is deallocated
  - Buffer is returned to a memory pool
  - Buffer's VM page mappings persist
- When a buffer is reused (by the same process)
  - No further VM map changes required
  - (Temporarily) grant write permission to the associated producer process

IO-Lite and Filesystem
- IO-Lite I/O APIs provided
  - IOL_read(int fd, IOL_Agg **aggr, size_t size)
  - IOL_write(int fd, IOL_Agg **aggr)
  - IOL_write operations are atomic: concurrency support
  - I/O functions in the stdio library reimplemented
- Filesystem cache reorganized
  - Buffer aggregates (pointers to data), instead of file data, are stored in the cache
  - Copy semantics ensured
  - Example: suppose a portion of a cached file is read, and then is overwritten

Copy Semantics Illustrations 1 to 3
[Figures: the file cache, Buffer 1, and the buffer aggregate in the user process; Buffer 2 appears in illustration 3.]

More on File Cache Management & VM Paging
- Cache replacement policy (can be customized)
  - The eviction order is by current reference status & time of last file access
  - Evict one entry when the file cache "appears" to be too large
  - Add one entry on every file cache miss
- When a buffer page is paged out, data will be written back to swap space, and possibly to several other disk locations (for different files)

IO-Lite and Network Subsystem
- Access control and protection for processes
  - ACLs are associated with buffer pools
  - Must determine the ACL of a data object prior to allocating memory for it
  - Early demultiplexing technique to determine the ACL for each incoming packet

A Cross-Subsystem Optimization
- Internet checksum caching
- Cache the computed checksum for each slice of a buffer aggregate
- Increment the version number when a buffer is reallocated: can be used to check whether data changed
- Works well for static files. Also has a big benefit for CGI programs that chain dynamic data with static data

Performance: Competitors
- Flash Web server: a high-performance HTTP server
- Flash-Lite: a modified version of Flash using the IO-Lite API
- Apache 1.3.1: representing the widely used Web server today

Performance: Static Content Requests
[Figure]

Performance: CGI Programs
[Figure]

Performance: Real Workload
- Average request size: 17 KBytes

Performance: WAN Effects
- Memory for buffers = # clients * Tss

Performance: Other Applications
[Figure]

Conclusion on IO-Lite
- A unified framework of I/O subsystems
- Impressive performance in Web applications due to copy avoidance & checksum caching

Software Prefetching & Caching for TLBs
- Prefetching & caching
  - Had not previously been applied to TLB misses in a software approach
  - Improves overall performance by up to 3%
  - But has great potential on newer architectures
  - Clock speed: 40 MHz => 200 MHz

Issues in Virtual Memory
- User address space is typically huge …
- TLB to cache page table entries
- Software support to help reduce TLB misses

Motivations
- TLB misses occur more frequently in microkernel-based OSes
- RISC computers handle TLB misses in software (trap)
- IPCs have a bigger impact on system performance

Approach
- Use a software approach to prefetch and cache TLB entries
- Experiments done on a MIPS R3000-based (RISC) architecture with Mach 3.0
- Applications chosen from standard benchmarks, as well as a synthetic IPC-intensive benchmark

Discussion
- The way the authors motivate their paper
  - A right approach for a particular type of system
  - A valid argument for future computer systems regarding performance gain
- Figures of experimental results mostly show the reduced number of TLB misses, instead of overall performance improvement
- A synthetic IPC-intensive application is used to support their approach

Prefetching: What entries to prefetch?
- L1U: user address spaces
- L1K: kernel data structures
- L2: user (L1U) page tables
  - Stack segments
  - Code segments
  - Data segments
- L3: L1K and L2 page tables

Prefetching: Details
- On the first IPC call, probe the hardware TLB on the IPC path and enter related TLB entries into the PTLB
- On subsequent IPC calls, entries are prefetched into PTLB by a hashed lookup
- Entries are stored in unmapped, cached physical memory

Prefetching: Performance
- Rate of TLB misses?

Caching: Software Victim Cache
- Use a region of unmapped, cached memory to cache entries evicted from the hardware TLB
- PTE lookup sequence: hardware TLB -> STLB -> generic trap handler

Caching: Benefits
- A faster trap path for TLB misses
  - Avoids the overhead of a context switch
- Eliminates (reduces?) cascaded TLB misses

Caching: Performance
- Average STLB penalties
- Kernel TLB hit rates

Prefetching + Caching: Performance
- Worse than using PTLB alone! (Don't understand the authors' comment to justify it…)

Discussion
- STLB (caching) is better than PTLB (prefetching), so using it alone suffices.
- Is it possible to improve the IPC performance using both VM page remapping and software prefetching & caching?
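
To make the PTE lookup sequence from the "Caching: Software Victim Cache" slide concrete (hardware TLB, then STLB, then generic trap handler), here is a minimal Python simulation. It is only a sketch under stated assumptions, not the authors' implementation: the class name `VictimCachedTLB`, the dictionary standing in for the page tables, the table sizes, and the FIFO replacement policy are all hypothetical choices made for illustration.

```python
from collections import OrderedDict


class VictimCachedTLB:
    """Toy model of a small hardware TLB backed by a software victim cache (STLB).

    Assumptions (not from the paper): FIFO replacement in both tables, and a
    plain dict `page_table` (virtual page number -> physical frame number)
    standing in for the slow, generic trap handler's page-table walk.
    """

    def __init__(self, page_table, tlb_size=4, stlb_size=16):
        self.page_table = page_table
        self.tlb_size = tlb_size
        self.stlb_size = stlb_size
        self.tlb = OrderedDict()   # hardware TLB entries, oldest first
        self.stlb = OrderedDict()  # entries evicted from the hardware TLB
        self.stats = {"tlb_hit": 0, "stlb_hit": 0, "generic_trap": 0}

    def _insert_stlb(self, vpn, pfn):
        # Keep the victim cache bounded by dropping its oldest entry.
        if vpn not in self.stlb and len(self.stlb) >= self.stlb_size:
            self.stlb.popitem(last=False)
        self.stlb[vpn] = pfn

    def _insert_tlb(self, vpn, pfn):
        # An entry evicted from the hardware TLB is saved in the STLB.
        if len(self.tlb) >= self.tlb_size:
            victim_vpn, victim_pfn = self.tlb.popitem(last=False)
            self._insert_stlb(victim_vpn, victim_pfn)
        self.tlb[vpn] = pfn

    def translate(self, vpn):
        # Step 1: hardware TLB.
        if vpn in self.tlb:
            self.stats["tlb_hit"] += 1
            return self.tlb[vpn]
        # Step 2: software victim cache, the fast trap path.
        if vpn in self.stlb:
            self.stats["stlb_hit"] += 1
            pfn = self.stlb.pop(vpn)
        else:
            # Step 3: fall back to the generic (slow) trap handler.
            self.stats["generic_trap"] += 1
            pfn = self.page_table[vpn]
        self._insert_tlb(vpn, pfn)
        return pfn


# Usage: a 2-entry TLB forces page 0 out, and the STLB catches its reuse.
page_table = {vpn: vpn + 100 for vpn in range(8)}
sim = VictimCachedTLB(page_table, tlb_size=2, stlb_size=4)
for vpn in [0, 1, 2, 0, 0]:
    sim.translate(vpn)
print(sim.stats)  # {'tlb_hit': 1, 'stlb_hit': 1, 'generic_trap': 3}
```

In this toy run the fourth access misses the hardware TLB but is served from the STLB, so the generic handler is not invoked for it. That is the "faster trap path" benefit the slides describe; real penalties depend on the hardware and kernel, which this model does not capture.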