Fair Share Scheduling Ethan Bolker Yiping Ding UMass-Boston

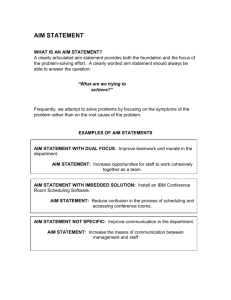

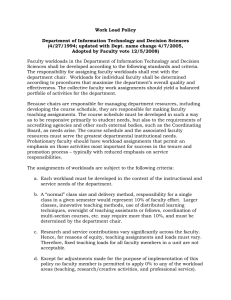

Fair Share Scheduling
Ethan Bolker, UMass-Boston and BMC Software
Yiping Ding, BMC Software
CMG2000, Orlando, Florida
December 13, 2000

Coming Attractions
• Scheduling for performance
• Fair share semantics
• Priority scheduling; conservation laws
• Predicting transaction response time
• Experimental evidence
• Hierarchical share allocation
• What you can take away with you

Strategy
• Tell the story with pictures when I can
• Question/interrupt at any time, please
• If you want to preserve the drama, don’t read ahead

References
• Conference proceedings (talk != paper)
• www.bmc.com/patrol/fairshare
• www.cs.umb.edu/~eb/goalmode

Acknowledgements
• Jeff Buzen
• Dan Keefe
• Oliver Chen
• Chris Thornley
• Aaron Ball
• Tom Larard
• Anatoliy Rikun

Scheduling for Performance
• Administrator specifies performance goals
  – Response times: IBM OS/390 (not today)
  – Resource allocations (shares): Unix offerings from HP (PRM), IBM (WLM), Sun (SRM)
• Operating system dispatches jobs in an attempt to meet goals

Scheduling for Performance
[Diagram. Boxes: OS (complex scheduling software), Performance Goals, Model (analytic algorithms, fast computation), workload. Arrow labels: measure frequently, update, query, report, response time.]

Modeling
• Real system
  – Complex, dynamic, frequent state changes
  – Hard to tease out cause and effect
• Model
  – Static snapshot, deals in averages and probabilities
  – Fast, enlightening answers to “what if” questions
• Abstraction helps you understand real system

Shares
• Administrator specifies fractions of various system resources allocated to workloads: e.g. “Dataminer owns 30% of the CPU”
• Resource: CPU cycles, disk access, memory, network bandwidth, number of processes, ...
• Workload: group of users, processes, applications, …
• Precise meanings depend on vendor’s implementation

Workloads
• Batch
  – Jobs always ready to run (latent demand)
  – Known: job service time
  – Performance metric: throughput
• Transaction
  – Jobs arrive at random, queue for service
  – Known: job service time and throughput
  – Performance metric: response time

CPU Shares
• Administrator gives workload $w$ CPU share $f_w$
• Normalize shares so that $\sum_w f_w = 1$
• $w$ gets fraction $f_w$ of CPU time slices when at least one of its jobs is ready for service
• Can it use more if competing workloads idle?
  – No: think share = cap
  – Yes: think share = guarantee

Shares As Caps
• Good for accounting (sell fraction of web server)
• Available now from IBM, HP, soon from Sun
• Straightforward: compare a dedicated system with a system capped at share $f$
  – Batch workload: throughput is scaled by $f$
  – Transaction workload with utilization $u$ and response time $r$ on the dedicated system: response time becomes $r(1-u)/(f-u)$; need $f > u$!

Shares As Guarantees
• Good for performance + economy (production and development share CPU)
• When all workloads are batch, guarantees are caps
• IBM and Sun report tests of this case
  – confirm predictions
  – validate OS implementation
• No one seems to have studied transaction workloads

Guarantees for Transaction Workloads
• May have share < utilization (!)
• Large share is like high priority
• Each workload’s response time depends on utilizations and shares of all other workloads
• Our model predicts response times given shares

There’s No Such Thing As a Free Lunch
• High priority workload response time can be low only because someone else’s is high
• Response time conservation law: weighted average response time is constant, independent of priority scheduling scheme: $\sum_{w} \lambda_w r_w = C$, where $\lambda_w$ is the throughput of workload $w$
• For a uniprocessor, $C = U/(1-U)$
• For queueing theory wizards: $C$ is queue length

Two Workloads (Uniprocessor Formulas)
[Figure, built up over five slides. Axes: $r_1$ (workload 1 response time) and $r_2$ (workload 2 response time).]
• Conservation law: $(r_1, r_2)$ lies on the line $\lambda_1 r_1 + \lambda_2 r_2 = U/(1-U)$
• Constraint resulting from workload 1: $\lambda_1 r_1 \ge u_1/(1-u_1)$
• Workload 1 runs at high priority: $V(1,2) = \left( s_1/(1-u_1),\; s_2/((1-u_1)(1-U)) \right)$
• Constraint resulting from workload 2: $\lambda_2 r_2 \ge u_2/(1-u_2)$, with vertex $V(2,1)$

Three Workloads
• Response time vector $(r_1, r_2, r_3)$ lies on plane $\lambda_1 r_1 + \lambda_2 r_2 + \lambda_3 r_3 = C$
• We know a constraint for each workload $w$: $\lambda_w r_w \ge C_w$
• Conservation applies to each pair of workloads as well: $\lambda_1 r_1 + \lambda_2 r_2 \ge C_{12}$
• Achievable region has one vertex for each priority ordering of workloads: $3! = 6$ in all
• Hence its name: the permutahedron

Three Workload Permutahedron
• $3! = 6$ vertices (priority orders)
• $2^3 - 2 = 6$ edges (conservation constraints)
[Figure: hexagon in the plane $\lambda_1 r_1 + \lambda_2 r_2 + \lambda_3 r_3 = C$, axes $r_1$, $r_2$, $r_3$, vertices $V(1,2,3)$ and $V(2,1,3)$ labeled.]

Three Workload Benchmark
• IBM WLM
• $u_1 = 0.15$, $u_2 = 0.2$, $u_3 = 0.4$
• Vary $f_1, f_2, f_3$ subject to $f_1 + f_2 + f_3 = 1$; measure $r_1, r_2, r_3$

Four Workload Permutahedron
• $4! = 24$ vertices (priority orders)
• $2^4 - 2 = 14$ facets (conservation constraints)
• Simplicial geometry and transportation polytopes, Trans. Amer. Math. Soc. 217 (1976) 138.

Response Time Conservation
• Provable in model assuming
  – Random arrivals, varying service times
  – Queue discipline independent of job attributes (fifo, class based priority scheduling, …)
• Observable in our benchmarks
• Can fail: shortest job first minimizes average response time
  – printer queues
  – supermarket express checkout lines

Predict Response Times From Shares
• Given shares $f_1, f_2, f_3, \ldots$ we want to know vector $V = (r_1, r_2, r_3, \ldots)$ of workload response times
• Assume response time conservation: $V$ will be a point in the permutahedron
• What point?

Predict Response Times From Shares (Two Workloads)
• Reasonable modeling assumption: $f_1 = 1$, $f_2 = 0$ means workload 1 runs at high priority
• For arbitrary shares, workload priority order is
  – (1,2) with probability $f_1$
  – (2,1) with probability $f_2$
  (probability = fraction of time)
• Compute average workload response time: $r_1 = f_1 \times$ (wkl 1 response at high priority) $+ f_2 \times$ (wkl 1 response at low priority)

Predict Response Times From Shares
[Figure. Axes: $r_1$ (workload 1 response time) and $r_2$ (workload 2 response time).]
• $V(1,2)$: $f_1 = 1$, $f_2 = 0$
• $V(2,1)$: $f_1 = 0$, $f_2 = 1$
• $V = f_1 V(1,2) + f_2 V(2,1)$
• In this picture $f_1 \approx 2/3$, $f_2 \approx 1/3$

Model Validation
• IBM WLM
• $u_1 = 0.24$, $u_2 = 0.47$
• Vary $f_1, f_2$ subject to $f_1 + f_2 = 1$; measure $r_1, r_2$

Conservation Confirmed
[Plot; annotation: conservation law.]

Heavy vs. Light Workloads
• $u_1 = 0.24$, $u_2 = 0.47$; $u_2 \approx 2 u_1$

Glitch?
• Strange WLM anomaly near $f_1 = f_2 = 1/2$ (reproducible)

Shares vs. Utilizations
• Remember: when shares are just guarantees, $f < u$ is possible for transaction workloads
• Think: large share is like high priority
• Share allocations affect light workloads more than heavy ones (like priority)
• It makes sense to give an important light workload a large share

Three Transaction Workloads
• Shares: workload 1 ???, workload 2 ???, workload 3 ???
• Three workloads, each with utilization 0.32 jobs/second × 1.0 seconds/job = 0.32 = 32%
• CPU 96% busy, so average (conserved) response time is $1.0/(1 - 0.96) = 25$ seconds
• Individual workload average response times depend on shares

Three Transaction Workloads
• Shares: workload 1: 32.0, workload 2: 48.0 (sum 80.0); workload 3: 20.0
• Normalized $f_3 = 0.20$ means 20% of the time workload 3 (development) would be dispatched at highest priority
• During that time, workload priority order is (3,1,2) for 32/80 of the time, (3,2,1) for 48/80
• Probability(priority order is 312) $= 0.20 \times (32/80) = 0.08$

Three Transaction Workloads
• Formulas in paper in proceedings
• Average predicted response time weighted by throughput: 25 seconds (as expected)
• Hard to understand intuitively
• Software helps

The Fair Share Applet
• Screen captures on last and next slides from www.bmc.com/patrol/fairshare
• Experiment with “what if” fair share modeling
• Watch a simulation
• Random virtual job generator for the simulation is the same one used to generate random real jobs for our benchmark studies

Three Transaction Workloads
[Applet screen capture; annotation: note change from 32%.]

Simulation
[Applet screen capture; annotation: jobs currently on run queue.]

When the Model Fails
• Real CPU uses round robin scheduling to deliver time slices
• Short jobs never wait for long jobs to complete
• That resembles shortest job first, so response time conservation law fails
• At high utilization, simulation shows smaller response times than predicted by model
• Response time conservation law yields conservative predictions

Share Allocation Hierarchy
[Tree diagram:]
  production, share 0.8
    customer 1, share 0.4
    customer 2, share 0.6
  development, share 0.2
• Workloads at leaves of tree
• Shares are relative fractions of parent
• Production gets 80% of resources
  – Customer 1 gets 40% of that 80%
• Users in development share 20%
• Available for Sun/Solaris. IBM/AIX offers tiers.

Don’t Flatten the Tree!
For transaction workloads, shares as guarantees:
  production, share 0.8
    customer 1, share 0.4
    customer 2, share 0.6
  development, share 0.2
is not the same as:
  customer 1, share 0.4 × 0.8
  customer 2, share 0.6 × 0.8
  development, share 0.2

Response Time Predictions

|                      | customer1 | customer2 | development |
|----------------------|-----------|-----------|-------------|
| share                | 32/80     | 48/80     | 20          |
| response time (flat) | 23.2      | 14.2      | 37.6        |
| response time (tree) | 11.1      | 8.1       | 55.8        |

• Response times computed using formulas in the paper, implemented in the fairshare applet.
• Why does customer1 do so much better with hierarchical allocation?
• Often customer1 competes just with development. When that happens he gets 80% of the CPU in tree mode, $32/(32+20) \approx 60\%$ in flat mode.
• Customers do well when they pool shares to buy in bulk.

Fair Share Scheduling
• Batch and transaction workloads behave quite differently (and often unintuitively) when shares are guarantees
• A model helps you understand share semantics
• Shares are not utilizations
• Shares resemble priorities
• Response time is conserved
• Don’t flatten trees
• Enjoy the applet
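
The share-to-response-time prediction described in the talk can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the formulas from the paper or the applet's code: the function names are hypothetical; the per-order response time generalizes the two-workload vertex formula V(1,2) to any number of workloads (an assumption); and a tree is modeled by first ordering the groups by group share, then ordering the members inside each group by member share (also an assumption). All shares are assumed positive. Under these assumptions, the sketch agrees to within rounding with the flat and tree rows of the Response Time Predictions table for three workloads at 32% utilization each.

```python
from itertools import permutations, product


def order_probability(order, shares):
    """Probability of a priority order: each next workload is picked with
    probability share / (sum of shares of workloads not yet placed)."""
    p = 1.0
    for i, w in enumerate(order):
        p *= shares[w] / sum(shares[v] for v in order[i:])
    return p


def times_for_order(order, u, s):
    """Response time of each workload under one strict priority order.
    Assumed generalization of V(1,2): s / ((1 - higher) * (1 - higher - u)),
    where `higher` is the total utilization of higher-priority workloads."""
    times, higher = {}, 0.0
    for w in order:
        times[w] = s[w] / ((1.0 - higher) * (1.0 - higher - u[w]))
        higher += u[w]
    return times


def flat_response_times(shares, u, s):
    """Average each workload's response time over all priority orders."""
    r = [0.0] * len(u)
    for order in permutations(range(len(u))):
        p = order_probability(order, shares)
        for w, t in times_for_order(order, u, s).items():
            r[w] += p * t
    return r


def tree_response_times(group_shares, groups, member_shares, u, s):
    """Two-level tree (assumed semantics): order the groups first, then
    order the members within each group; a group's members stay adjacent."""
    r = [0.0] * len(u)
    for g_order in permutations(range(len(groups))):
        p_groups = order_probability(g_order, group_shares)
        inner = [list(permutations(groups[g])) for g in g_order]
        for combo in product(*inner):
            p = p_groups
            for members in combo:
                p *= order_probability(members, member_shares)
            order = [w for members in combo for w in members]
            for w, t in times_for_order(order, u, s).items():
                r[w] += p * t
    return r


# Workloads 0, 1, 2 stand for customer1, customer2, development.
u = [0.32, 0.32, 0.32]   # utilizations
s = [1.0, 1.0, 1.0]      # service times, seconds per job
flat = flat_response_times([0.32, 0.48, 0.20], u, s)
tree = tree_response_times([0.8, 0.2], [[0, 1], [2]], [0.4, 0.6, 1.0], u, s)
print("flat:", [round(x, 1) for x in flat])
print("tree:", [round(x, 1) for x in tree])
```

Enumerating every priority order costs n! evaluations, which is fine for the handful of workloads discussed here but would need a smarter formulation for many workloads.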