Bayesian Models, Prior Knowledge, and Data Fusion for Monitoring Messages and Identifying Authors

Paul Kantor Rutgers

May 14, 2007

Outline

• The Team

• Bayes’ Methods

• Method of Evaluation

• A toy Example

• Expert Knowledge

• Efficiency issues

• Entity Resolution

• Conclusions

Many collaborators

• Principals

– Fred Roberts

– David Madigan

– Dave D. Lewis

– Paul Kantor

• Programmers

– Vladimir Menkov

– Alex Genkin

• Now Ph.Ds

– Suhrid Balakrishnan

– Dmitriy Fradkin

– Aynur Dayanik

– Andrei Anghelescu

• REU Students

– Ross Sowell

– Diana Michalek

– Jordana Chord

– Melissa Mitchell

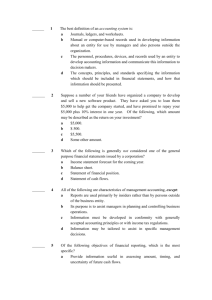

Overview of Bayes

• Personally I

– go to Frequentist Church on Sunday

– shop at Bayes’ the rest of the week

Making accurate predictions

• Be careful not to overfit the training data

– One way to avoid this: use a prior distribution

• Two types: Gaussian and Laplace

If you use a Gaussian Prior

• For a Gaussian prior (ridge): every feature enters the model

[Figure: coefficient paths under the Gaussian prior; curves labeled intercept, npreg, glu, skin, ped, age/100; axis ticks from 0 to 0.3]

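If you use a Laplace Prior

• For a Laplace prior (lasso):

$$\hat{w} = \arg\inf_{w} \; \frac{1}{n} \sum_{i=1}^{n} \log\left(1 + \exp(-w^{T} x_i y_i)\right) + \lambda \sum_{j} |w_j|$$

• Features are added slowly, and require stronger evidence

[Figure: coefficient paths under the Laplace prior; curves labeled intercept, npreg, glu, bp, skin, bmi/100, ped, age/100; axis ticks from 120 down to 0]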
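The contrast between the two priors can be tried on synthetic data. The sketch below is only an illustration, not the BBR implementation: it fits logistic regression by plain (proximal) gradient descent, and the data generator, the penalty strength `lam`, the step size, and all function names are assumptions made for this example. Labels are taken to be -1 or +1.

```python
import numpy as np

def loss_gradient(w, X, y):
    """Gradient of (1/n) * sum_i log(1 + exp(-y_i * w.x_i)), with y_i in {-1, +1}."""
    margins = np.clip(y * (X @ w), -50.0, 50.0)  # clip so exp() stays finite
    return X.T @ (-y / (1.0 + np.exp(margins))) / len(y)

def fit_map(X, y, prior, lam=0.1, lr=0.5, steps=3000):
    """MAP fit by (proximal) gradient descent; prior is "gaussian" or "laplace"."""
    w = np.zeros(X.shape[1])
    for _ in range(steps):
        g = loss_gradient(w, X, y)
        if prior == "gaussian":
            # Ridge: the penalty lam * sum_j w_j^2 shrinks weights smoothly.
            w = w - lr * (g + 2.0 * lam * w)
        elif prior == "laplace":
            # Lasso: gradient step, then soft-thresholding for lam * sum_j |w_j|.
            w = w - lr * g
            w = np.sign(w) * np.maximum(np.abs(w) - lr * lam, 0.0)
        else:
            raise ValueError("prior must be 'gaussian' or 'laplace'")
    return w

def make_toy_data(n=500, d=8, seed=0):
    """Hypothetical data: only the first two of d features carry signal."""
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n, d))
    true_w = np.zeros(d)
    true_w[0], true_w[1] = 2.0, -2.0
    y = np.where(X @ true_w + 0.5 * rng.normal(size=n) > 0, 1.0, -1.0)
    return X, y

if __name__ == "__main__":
    X, y = make_toy_data()
    for prior in ("gaussian", "laplace"):
        w = fit_map(X, y, prior)
        print(prior, "nonzero weights:", np.count_nonzero(w))
```

Soft-thresholding sets a weight exactly to zero unless the data gradient exceeds `lam`, which is one way to see why features need stronger evidence to enter under the Laplace prior.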

Success Qualifier

• AUC = average distance, from the bottom of the ranked list, of the items we’d like to see at the top.

• Null hypothesis: AUC is distributed as the average (sum divided by P) of P uniform variates.
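The rank-based reading of AUC can be checked against the usual pairwise definition. The following is a minimal sketch under stated assumptions: scores have no ties, there is at least one positive and one negative item, and the function names are made up for this example. The normalization (subtract the smallest possible mean rank, divide by the number of negatives) is the standard one and is not spelled out on the slide.

```python
def auc_from_ranks(scores, labels):
    """AUC as the normalized mean rank of positives, counted from the bottom of the list."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])  # lowest score first
    pos_ranks = [rank for rank, i in enumerate(order, start=1) if labels[i] == 1]
    p = len(pos_ranks)
    n = len(scores) - p
    mean_rank = sum(pos_ranks) / p
    # Smallest possible mean rank is (p + 1) / 2; dividing by n maps the result to [0, 1].
    return (mean_rank - (p + 1) / 2) / n

def auc_pairwise(scores, labels):
    """AUC as the fraction of (positive, negative) pairs ranked in the right order."""
    pos = [s for s, l in zip(scores, labels) if l == 1]
    neg = [s for s, l in zip(scores, labels) if l == 0]
    wins = sum(1 for sp in pos for sn in neg if sp > sn)
    return wins / (len(pos) * len(neg))

if __name__ == "__main__":
    scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4]
    labels = [1, 0, 1, 0, 0, 1]
    print(auc_from_ranks(scores, labels), auc_pairwise(scores, labels))
```

Both functions return the same value on tie-free input, which is why the average position of the wanted items is a fair summary of ranking quality.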

Test Corpus

• We took ten authors who were prolific between 1997 and 2002 and whose papers were easy to disambiguate manually, so that we could check the results of BBR. We then chose six kinds of features from these people’s work for training and testing BBR, in the hope that one kind of feature might prove more useful than another in identifying authors:

• Keywords

• Co-Author Names

• Addresses (words)

• Abstract

• Addresses (n-grams)

• Title

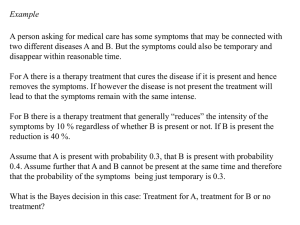

Domain Knowledge and Optimization in Bayesian Logistic Regression

Thanks to

Dave Lewis

David D. Lewis Consulting, LLC

Outline

• Bayesian logistic regression

• Advances

• Using domain knowledge to reduce the need for training data

• Speeding up training and classification

• Online training

Logistic Regression in Text and Data Mining

• Classification as a fundamental primitive

– Text categorization: classes = content distinctions

– Filtering: classes = user interests

– Entity resolution: classes = entities

• Bayesian logistic regression

– Probabilistic classifier allows combining outputs

– Prior allows creating sparse models and combining training data and domain knowledge

– Our KDD-funded BBR and BMR software now widely used

A Lasso Logistic Model (category “grain”)

| Word | Beta | Word | Beta |
| --- | --- | --- | --- |
| corn | 29.78 | formal | -1.15 |
| wheat | 20.56 | holder | -1.43 |
| rice | 11.33 | hungarian | -6.15 |
| sindt | 10.56 | rubber | -7.12 |
| madagascar | 6.83 | special | -7.25 |
| import | 6.79 | … | … |
| grain | 6.77 | beet | -13.24 |
| contract | 3.08 | rockwood | -13.61 |

Using Expert Knowledge in Text Classification

• What do we know about a category:

– Category description (e.g. MeSH, Medical Subject Headings)

– Human knowledge of good predictor words

– Reference materials (e.g. CIA Factbook)

• All give clues to good predictor words for a category

– We convert these to a prior on parameter values for words

– Other classification tasks, e.g. entity resolution, have expert knowledge also
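One way to picture "converting clues to a prior" is to give each word its own prior mode and variance. The sketch below is an assumption-laden illustration, not the procedure from the cited work: it uses a Gaussian prior, and the constants `base_var`, `boosted_var`, and `mode_value`, like the function names, are hypothetical. Words named by domain knowledge either get a larger variance (the data may move them freely) or a positive mode (they are expected to predict the category).

```python
import numpy as np

def build_prior(vocabulary, knowledge_words, how,
                base_var=1.0, boosted_var=10.0, mode_value=1.0):
    """Return (modes, variances), one entry per vocabulary word."""
    modes = np.zeros(len(vocabulary))
    variances = np.full(len(vocabulary), base_var)
    for j, word in enumerate(vocabulary):
        if word not in knowledge_words:
            continue
        if how == "variance":
            variances[j] = boosted_var  # looser prior: weight can grow with little data
        elif how == "mode":
            modes[j] = mode_value       # prior belief that the word is a positive predictor
        else:
            raise ValueError("how must be 'variance' or 'mode'")
    return modes, variances

def gaussian_neg_log_prior(w, modes, variances):
    """Penalty added to the training loss, up to an additive constant."""
    return float(np.sum((w - modes) ** 2 / (2.0 * variances)))

if __name__ == "__main__":
    vocab = ["corn", "wheat", "rubber"]
    clues = {"corn", "wheat"}  # hypothetical expert-chosen words for "grain"
    w = np.array([1.0, 1.0, 0.0])
    for how in ("variance", "mode"):
        modes, variances = build_prior(vocab, clues, how)
        print(how, gaussian_neg_log_prior(w, modes, variances))
```

Under either setting, weights on the clue words are penalized less than under an uninformed prior, so fewer training examples are needed to move them.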

Constructing Informative Prior Distributions from Domain Knowledge in Text Classification

Aynur Dayanik, David D. Lewis, David Madigan, Vladimir Menkov, and Alexander Genkin, January 2006

Corpora

• TREC Genomics: presence or absence of certain MeSH headings

• ModApte “top 10 categories” (Wu and Srihari)

• RCV1 A-B (see next slide)

Categories. We selected a subset of the Reuters Region categories whose names exactly matched the names of geographical regions with entries in the CIA World Factbook (see below) and which had one or more positive examples in our large (23,149 document) training set. There were 189 such matches, from which we chose the 27 with names beginning with the letter A or B to work with, reserving the rest for future use.

Some experimental results

• Documents are represented by the so-called tf.idf representation.

• Prior information can be used either to change the variance or to set the mode (an offset or bias). Those results are shown in red. Lasso with no prior information is shown in black.

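Large Training Sets (3700 to 23000 examples). Corpora: ModApte, Bio Articles, RCV1-v2; each row gives the scores for one corpus.

| Lasso | Lasso, Var/TFIDF | Lasso, Mode/TFIDF | Ridge | Ridge, Var/TFIDF | Ridge, Mode/TFIDF |
| --- | --- | --- | --- | --- | --- |
| 54.2 | 55.2 | 53.3 | 26.3 | 52.2 | 41.9 |
| 84.1 | 84.6 | 83.6 | 82.9 | 83.8 | 83.1 |
| 62.9 | 70.8 | 64.5 | 42.2 | 68.9 | 62.9 |

Tiny Training Sets (5 positive examples + 5 random examples). Corpora: ModApte, RCV1-v2, Bio Articles; each row gives the scores for one corpus.

| Lasso | Lasso, Var/TFIDF | Lasso, Mode/TFIDF | Ridge | Ridge, Var/TFIDF | Ridge, Mode/TFIDF |
| --- | --- | --- | --- | --- | --- |
| 29.6 | 34.3 | 36.4 | 18.8 | 35.7 | 33.9 |
| 42.7 | 61.3 | 58.5 | 27.1 | 61.5 | 62.1 |
| 52.1 | 50.7 | 51.5 | 23.0 | 53.0 | 48.8 |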

Findings

• For lots of training data, adding domain knowledge doesn’t help much

• For little training data, it helps more, and more often.

• It is more effective when used to set the priors, than when used as “additional training data”.

Speeding Up Classification

• Completed new version of BMRclassify

– Replaces old BBRclassify and BMRclassify

• More flexible

– Can apply 1000’s of binary and polytomous classifiers simultaneously

– Allows meaningful names for features

• Inverted index to classifier suites for speed

– 25x speedup over old BMRclassify and BBRclassify
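The idea of an inverted index over a classifier suite can be sketched as follows. This is not the BMRclassify code; the data layout and function names are assumptions for illustration. Each feature maps to the list of classifiers that give it a nonzero weight, so scoring a document touches only the weights its own features can affect, rather than looping over every classifier.

```python
from collections import defaultdict
import math

def build_inverted_index(classifiers):
    """classifiers: {name: {"bias": float, "weights": {feature: weight}}}."""
    index = defaultdict(list)
    for name, model in classifiers.items():
        for feature, weight in model["weights"].items():
            if weight != 0.0:  # sparse (lasso) models leave most features out
                index[feature].append((name, weight))
    return index

def score_document(doc, classifiers, index):
    """doc: {feature: value}. Returns {classifier name: probability}."""
    scores = {name: model["bias"] for name, model in classifiers.items()}
    for feature, value in doc.items():
        for name, weight in index.get(feature, ()):
            scores[name] += weight * value
    return {name: 1.0 / (1.0 + math.exp(-s)) for name, s in scores.items()}

if __name__ == "__main__":
    suite = {  # hypothetical two-classifier suite with named features
        "grain": {"bias": -1.0, "weights": {"corn": 2.0, "wheat": 1.5}},
        "rubber": {"bias": -1.0, "weights": {"rubber": 3.0}},
    }
    index = build_inverted_index(suite)
    print(score_document({"corn": 1.0, "tariff": 1.0}, suite, index))
```

The saving grows with the number of classifiers and with their sparsity, since a document's features typically appear in only a small share of the models.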

Online & Reduced Memory Training

• Rapid updating of classifiers as new data arrives

• Use training sets too big to fit in memory

– Larger training sets, when available, give higher accuracy
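A rough picture of online training is a classifier that takes one example at a time and never stores the training set. The sketch below uses plain stochastic gradient descent on the logistic loss with no prior term; it is a stand-in chosen for brevity, not the team's algorithm, and the learning rate and function names are assumptions.

```python
import math

def online_logistic_update(weights, features, label, lr=0.1):
    """One SGD step on log(1 + exp(-label * w.x)); label is -1 or +1.

    weights is a dict updated in place; features is {feature: value}.
    """
    margin = label * sum(weights.get(f, 0.0) * v for f, v in features.items())
    margin = max(min(margin, 50.0), -50.0)  # keep exp() finite
    scale = lr * label / (1.0 + math.exp(margin))
    for f, v in features.items():
        weights[f] = weights.get(f, 0.0) + scale * v

def train_on_stream(stream, lr=0.1):
    """Consume an iterable of (features, label) pairs, holding one example at a time."""
    weights = {}
    for features, label in stream:
        online_logistic_update(weights, features, label, lr)
    return weights

if __name__ == "__main__":
    def toy_stream(repeats=200):  # hypothetical data source, e.g. lines read from disk
        for _ in range(repeats):
            yield {"corn": 1.0, "bias": 1.0}, +1
            yield {"rubber": 1.0, "bias": 1.0}, -1
    print(train_on_stream(toy_stream()))
```

Because the stream is a generator, memory use depends on the number of distinct features, not on the number of training examples.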

KDD Entity Resolution Challenges

• ER1b: Is this pair of persons, who may have been renamed, really the same person?

• ER2a: Which of these persons, in the author list, is using a pseudonym?

KDD Entity Resolution Challenges

• ER1b: Is this pair of persons, who may have been renamed, really the same person? (NO) YES

• Smith and Jones

• Smith Jones and Wesson

• ER2a: Which of these persons, in the author list, is using a pseudonym?

This one!

Conclusions drawn from KDD-1

• On ER1b, several of our submissions topped the rankings based on accuracy (the only measure used). Best: dimacs-er1b-modelavg-tdf-aaan

– Probabilities for all document pairs from 11 CLUTO methods and 1 document-similarity model were summed; the vectors included some author address information

And ….

– dimacs-er1b-modelavg-tdf-noaaan was second

– no AAAN info in the vectors.

– Third: dimacs-er1b-modelavg-binary-noaaan

– combining many models, no binary representation.

– CONCLUSION: Model averaging (alias: data fusion, combination) is better than any of the parts.
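Model averaging over pairwise probabilities can be sketched in a few lines. This is an illustration only: the input layout, the use of a mean rather than a raw sum, and the 0.5 decision threshold are assumptions, and the component models (CLUTO clusterings, document similarity) are represented simply by their output probabilities.

```python
def fuse_pair_probabilities(model_outputs):
    """model_outputs: list of {pair: P(same entity)}, one dict per model.

    Returns {pair: mean probability across models}.
    """
    pairs = model_outputs[0].keys()
    count = len(model_outputs)
    return {pair: sum(m[pair] for m in model_outputs) / count for pair in pairs}

def same_entity(fused, threshold=0.5):
    """Turn fused probabilities into yes/no decisions (threshold is hypothetical)."""
    return {pair: p >= threshold for pair, p in fused.items()}

if __name__ == "__main__":
    outputs = [  # made-up probabilities from three models
        {("a", "b"): 0.9, ("a", "c"): 0.2},
        {("a", "b"): 0.4, ("a", "c"): 0.6},
        {("a", "b"): 0.8, ("a", "c"): 0.1},
    ]
    fused = fuse_pair_probabilities(outputs)
    print(fused, same_entity(fused))
```

Dividing the sum by the number of models does not change the ranking of pairs; it only keeps the fused score on the probability scale.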

Conclusions on ER2a

• On ER2a measures were accuracy, squared error, ROC area (AUC) and cross entropy.

– Ours: dimacs-er2a-single-X; 3rd by accuracy, 4th by AUC.

– trained binary logistic regression, using binary vectors (with AAAN info).

– probability for “no replacement” = product of conditional probabilities for individual authors. Some post-processing using AAAN info.

– No information from the text itself

And …...

– Omitting the AAAN post-processing (dimacs-er2a-single) did somewhat worse (4th by accuracy and 6th by AUC).

• Every kind of information helps, even if it is there by accident.
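The "product of conditional probabilities" step for ER2a can be illustrated as below. This is a sketch under assumptions: each author's probability of being genuine is taken as already computed (for instance by a per-author logistic regression), the numbers are invented, and picking the least probable author as the suspect is a simplification added for the example.

```python
import math

def prob_no_replacement(author_probs):
    """author_probs: {author: P(author is genuine | the other listed authors)}.

    Returns the probability that no author on the list was replaced.
    """
    return math.prod(author_probs.values())

def most_suspect_author(author_probs):
    """The author with the lowest conditional probability of being genuine."""
    return min(author_probs, key=author_probs.get)

if __name__ == "__main__":
    probs = {"Smith": 0.9, "Jones": 0.8, "Wesson": 0.2}  # made-up values
    print(prob_no_replacement(probs), most_suspect_author(probs))
```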

ER2: Which authors belong? The affinity of authors to each other (naïve)

ER2: Which authors belong?

More sophisticated model

• Update the probability that y is an author of D, yielding, after some work, the formula:

[Equation: update formula for p(y | D, z); its terms include p(y | D, z), p(z), c_a, c_{u[y]}, and the author sets A and A_D]

Read more at:

• Simulated Entity Resolution by Diverse Means: DIMACS Work on the KDD Challenge of 2005. Andrei Anghelescu, Aynur Dayanik, Dmitriy Fradkin, Alex Genkin, Paul Kantor, David Lewis, David Madigan, Ilya Muchnik, and Fred Roberts. December 2005, DIMACS Technical Report 2005-42

Read more at:

• http://www.stat.rutgers.edu/~madigan/PAPERS/tc-dk.pdf

Streaming algorithms

• L1

• Good performance

• Algorithms for Sparse Linear Classifiers in the Massive Data Setting. S. Balakrishnan and D. Madigan. Journal of Machine Learning Research, submitted, 2006.

Summary of findings

• With little training data, Bayesian methods work better when they can use general knowledge about the target group

• To determine whether several “records” refer to the same “person” there is no “magic bullet”, and combining many methods is feasible and powerful

• To detect an imposter in a group, “social” methods based on combining probabilities are effective (we did not use info in the papers)
