
First International Conference of the Iranian Operations Research Society, Kish University, Bahman 1386 (January/February 2008)

A differential model for solving an optimization problem with an interval-valued objective function

Sohrab Effati 1, Morteza Pakdaman 2

Department of Mathematics, Tarbiat Moallem University of Sabzevar, Sabzevar, Iran

Abstract:

In this paper we apply a differential model (a neural network model) to solve an optimization problem with an interval-valued objective function. The differential model is constructed from the Karush-Kuhn-Tucker (KKT) optimality conditions.

1. Model definition

Suppose $\mathcal{I}$ denotes the set of all closed and bounded intervals in $\mathbb{R}$. If $a$ and $b$ are two closed and bounded intervals ($a, b \in \mathcal{I}$), we write $a = [a^L, a^U]$ and $b = [b^L, b^U]$, and we have the following operations in $\mathcal{I}$:

$$
a + b = [a^L + b^L,\; a^U + b^U], \qquad
-a = [-a^U,\; -a^L], \qquad
ka = \begin{cases} [ka^L,\; ka^U] & \text{if } k \ge 0, \\ [ka^U,\; ka^L] & \text{if } k < 0, \end{cases}
$$

where $k$ is a real number. We define the following Hausdorff metric on $\mathcal{I}$:

$$
d_H(a, b) = \max\{\, |a^L - b^L|,\; |a^U - b^U| \,\}.
$$
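The interval operations and the Hausdorff metric above can be illustrated with a minimal Python sketch. Representing an interval as a `(lower, upper)` tuple and the helper names `add`, `neg`, `scale`, and `hausdorff` are assumptions made for illustration; they do not come from the paper.

```python
# Hypothetical representation: an interval [a^L, a^U] is the tuple (a_L, a_U).

def add(a, b):
    """a + b = [a^L + b^L, a^U + b^U]."""
    return (a[0] + b[0], a[1] + b[1])

def neg(a):
    """-a = [-a^U, -a^L]."""
    return (-a[1], -a[0])

def scale(k, a):
    """ka keeps the endpoint order for k >= 0 and swaps it for k < 0."""
    return (k * a[0], k * a[1]) if k >= 0 else (k * a[1], k * a[0])

def hausdorff(a, b):
    """d_H(a, b) = max(|a^L - b^L|, |a^U - b^U|)."""
    return max(abs(a[0] - b[0]), abs(a[1] - b[1]))

a = (1.0, 3.0)
b = (-2.0, 5.0)
print(add(a, b), neg(a), scale(-2.0, a), hausdorff(a, b))
```

Swapping the endpoints for negative `k` keeps the lower endpoint no larger than the upper one, so the result is again a valid interval.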

A function $f : \mathbb{R}^n \to \mathcal{I}$ is called an interval-valued function, i.e. for each $X \in \mathbb{R}^n$, $f(X) = [f^L(X), f^U(X)]$ is a closed interval in $\mathbb{R}$.

Proposition 1.1. (see [1]) If $f$ is an interval-valued function, then $f$ is continuous at $x \in \mathbb{R}^n$ if and only if $f^L$ and $f^U$ are continuous at $x$.

Definition 1.1. Let $X$ be an open set in $\mathbb{R}$. An interval-valued function $f$ with $f(x) = [f^L(x), f^U(x)]$ is called weakly differentiable at $x_0$ if the real-valued functions $f^L$ and $f^U$ are differentiable at $x_0$.

Now we define a partial ordering on $\mathcal{I}$. If $a, b \in \mathcal{I}$, we write $a \preceq_{LU} b$ if and only if $a^L \le b^L$ and $a^U \le b^U$. We also write $a \prec_{LU} b$ if and only if $a \preceq_{LU} b$ and $a \ne b$. Following this, we call $f$ LU-convex at $x'$ if $f(\lambda x' + (1 - \lambda) x) \preceq_{LU} \lambda f(x') + (1 - \lambda) f(x)$ for each $\lambda \in (0, 1)$ and each $x \in X$.

Proposition 1.2. (see [1]) $f$ is LU-convex at $x$ if and only if $f^L$ and $f^U$ are convex at $x$.
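As a hedged illustration of the LU ordering and of LU-convexity, the following Python sketch compares intervals stored as `(lower, upper)` tuples and spot-checks the LU-convexity inequality on a finite sample. The toy functions `f_convex` and `f_concave`, the sample points, and the tolerance are assumptions chosen for the example. A sampled check can refute LU-convexity but cannot prove it.

```python
def lu_leq(a, b):
    """a is LU-less-or-equal to b: a^L <= b^L and a^U <= b^U."""
    return a[0] <= b[0] and a[1] <= b[1]

def lu_less(a, b):
    """Strict LU order: LU-less-or-equal and not the same interval."""
    return lu_leq(a, b) and a != b

def f_convex(x):
    """Toy interval-valued function [x^2, x^2 + 1] (assumption)."""
    return (x * x, x * x + 1.0)

def f_concave(x):
    """Toy interval-valued function [-x^2, -x^2 + 1] (assumption)."""
    return (-x * x, -x * x + 1.0)

def lu_convex_on_samples(f, x_prime, xs, lams, tol=1e-12):
    """Check f(l*x' + (1-l)*x) LU<= l*f(x') + (1-l)*f(x) on the samples."""
    fp = f(x_prime)
    for x in xs:
        fx = f(x)
        for lam in lams:
            left = f(lam * x_prime + (1 - lam) * x)
            # lam and 1 - lam are positive, so scaling keeps the endpoint order
            right = (lam * fp[0] + (1 - lam) * fx[0],
                     lam * fp[1] + (1 - lam) * fx[1])
            if left[0] > right[0] + tol or left[1] > right[1] + tol:
                return False
    return True

xs = [-2.0, -1.0, 0.0, 1.0, 2.0]
lams = [0.25, 0.5, 0.75]
print(lu_convex_on_samples(f_convex, 0.5, xs, lams))
print(lu_convex_on_samples(f_concave, 0.0, xs, lams))
```

Note that the LU order is only partial: the intervals `(1, 4)` and `(2, 3)` are not comparable in either direction.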

Consider the following optimization problem (with an interval-valued objective function):

$$
\begin{aligned}
\text{minimize} \quad & f(X) = f(x_1, x_2, \ldots, x_n) = [f^L(x_1, x_2, \ldots, x_n),\; f^U(x_1, x_2, \ldots, x_n)] = [f^L(X),\; f^U(X)] \\
\text{subject to} \quad & X = (x_1, x_2, \ldots, x_n) \in \Omega \subseteq \mathbb{R}^n,
\end{aligned}
\tag{1}
$$

1 Effati@sttu.ac.ir
2 Pakdaman.m@gmail.com

where $\Omega$ is the feasible set, which is a convex subset of $\mathbb{R}^n$. Problem (1) can also be formulated as follows:

$$
\begin{aligned}
\text{minimize} \quad & f(X) = f(x_1, x_2, \ldots, x_n) = [f^L(x_1, x_2, \ldots, x_n),\; f^U(x_1, x_2, \ldots, x_n)] = [f^L(X),\; f^U(X)] \\
\text{subject to} \quad & g_i(X) \le 0, \quad i = 1, 2, \ldots, m.
\end{aligned}
\tag{2}
$$

The meaning of minimization in (2) follows from the partial ordering $\preceq_{LU}$.

Definition 1.2. Let $x^*$ be a feasible solution. We say that $x^*$ is a type-1 solution of problem (2) if there exists no $x \in X$ such that $f(x) \prec_{LU} f(x^*)$.
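To make the definition concrete, here is a minimal sketch that tests the type-1 property against a finite grid of feasible points. The one-dimensional toy objective with lower endpoint $x^2$ and upper endpoint $2x^2 - 2x + 2$, the grid, and the function names are assumptions made for illustration. A grid check only approximates the definition, which quantifies over all feasible points.

```python
def f(x):
    """Toy interval-valued objective [x^2, 2x^2 - 2x + 2] (assumption)."""
    return (x * x, 2 * x * x - 2 * x + 2)

def lu_less(a, b):
    """Strict LU order on intervals stored as (lower, upper) tuples."""
    return a[0] <= b[0] and a[1] <= b[1] and a != b

def is_type1_on_grid(x_star, feasible_points):
    """True if no sampled feasible point has a strictly LU-smaller objective."""
    fx_star = f(x_star)
    return not any(lu_less(f(x), fx_star) for x in feasible_points)

# Hypothetical feasible grid: -1.0, -0.75, ..., 1.0
grid = [i * 0.25 for i in range(-4, 5)]

for x in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(x, is_type1_on_grid(x, grid))
```

In this toy problem the lower endpoint is minimized at 0 and the upper endpoint at 0.5, so no single point minimizes both. The grid points between these two minimizers are the ones that no other grid point dominates.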

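Theorem 1.1. (see [1]) Suppose that the objective function $f : \mathbb{R}^n \to \mathcal{I}$ is LU-convex and weakly differentiable at $x^*$, and that $g_i$, $i = 1, 2, \ldots, m$, satisfy the KKT assumptions at $x^*$ (see [2]). If there exist Lagrange multipliers $0 < \lambda^L, \lambda^U \in \mathbb{R}$ and $0 \le \mu_i \in \mathbb{R}$ ($i = 1, 2, \ldots, m$) such that

$$
\text{(i)} \quad \lambda^L \nabla f^L(x^*) + \lambda^U \nabla f^U(x^*) + \sum_{i=1}^{m} \mu_i \nabla g_i(x^*) = 0;
$$

$$
\text{(ii)} \quad \mu_i g_i(x^*) = 0 \quad \text{for all } i = 1, 2, \ldots, m,
$$

then $x^*$ is a type-1 solution of problem (2).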
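The two conditions of the theorem can be checked numerically at a candidate point. The sketch below does this for a toy problem that is an assumption, not taken from the paper: lower endpoint $x_1^2 + x_2^2$, upper endpoint $x_1^2 + x_2^2 + (x_1 - 1)^2 + (x_2 - 1)^2 + 1$, and the single constraint $1 - x_1 - x_2 \le 0$. The multiplier values are likewise chosen by hand for the illustration.

```python
def grad_fL(x):
    """Gradient of the lower endpoint x1^2 + x2^2."""
    return [2 * x[0], 2 * x[1]]

def grad_fU(x):
    """Gradient of the upper endpoint x1^2 + x2^2 + (x1-1)^2 + (x2-1)^2 + 1."""
    return [4 * x[0] - 2, 4 * x[1] - 2]

def g(x):
    """Constraint g(x) = 1 - x1 - x2 <= 0."""
    return 1 - x[0] - x[1]

def grad_g(x):
    return [-1.0, -1.0]

def kkt_residuals(x, lam_L, lam_U, mu):
    """Return the stationarity vector of (i) and the product mu * g(x) of (ii)."""
    gl, gu, gg = grad_fL(x), grad_fU(x), grad_g(x)
    stationarity = [lam_L * gl[j] + lam_U * gu[j] + mu * gg[j]
                    for j in range(len(x))]
    complementarity = mu * g(x)
    return stationarity, complementarity

# Candidate point and multipliers (assumed values for this toy problem)
x_star = [0.5, 0.5]
stat, comp = kkt_residuals(x_star, lam_L=1.0, lam_U=1.0, mu=1.0)
print(stat, comp, g(x_star) <= 0)
```

Both endpoint functions in this example are convex and the constraint is linear, so a feasible point at which both residuals vanish with positive $\lambda^L$, $\lambda^U$ and nonnegative $\mu$ meets the hypotheses of the theorem.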

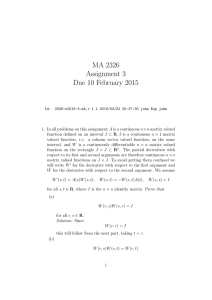

2. Solving Method

Wu (see [1]) proved the KKT optimality conditions for problem (2) (Theorem 1.1). Using these conditions, we propose the following differential model for solving problem (2):

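$$
\begin{aligned}
\frac{dx_j}{dt} &= -\left( \lambda^L \frac{\partial f^L(x)}{\partial x_j} + \lambda^U \frac{\partial f^U(x)}{\partial x_j} + \sum_{i=1}^{m} y_i \frac{\partial g_i(x)}{\partial x_j} \right), \quad j = 1, 2, \ldots, n, \\
\frac{dy_i}{dt} &= g_i(x), \quad y_i \ge 0, \quad i = 1, 2, \ldots, m.
\end{aligned}
\tag{3}
$$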
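A differential model of this kind can be explored numerically with a simple forward-Euler loop. The sketch below is an illustration under stated assumptions, not the authors' implementation: the toy problem (lower endpoint $x_1^2 + x_2^2$, upper endpoint $x_1^2 + x_2^2 + (x_1 - 1)^2 + (x_2 - 1)^2 + 1$, constraint $1 - x_1 - x_2 \le 0$), the fixed weights on the two endpoint gradients (both 1), the descent sign of the $x$-dynamics, the step size, and the clipping used to keep $y \ge 0$ are all choices made for the example.

```python
def grad_fL(x):
    """Gradient of the lower endpoint x1^2 + x2^2 (toy problem, assumption)."""
    return [2 * x[0], 2 * x[1]]

def grad_fU(x):
    """Gradient of the upper endpoint x1^2 + x2^2 + (x1-1)^2 + (x2-1)^2 + 1."""
    return [4 * x[0] - 2, 4 * x[1] - 2]

def g(x):
    """Single constraint g(x) = 1 - x1 - x2 <= 0."""
    return 1 - x[0] - x[1]

def grad_g(x):
    return [-1.0, -1.0]

def simulate(x0, y0, lam_L=1.0, lam_U=1.0, dt=0.01, steps=5000):
    """Forward-Euler integration of the x- and y-dynamics for one constraint."""
    x, y = list(x0), y0
    for _ in range(steps):
        gl, gu, gg = grad_fL(x), grad_fU(x), grad_g(x)
        # x moves against the weighted gradient of the Lagrangian-like function
        dx = [-(lam_L * gl[j] + lam_U * gu[j] + y * gg[j])
              for j in range(len(x))]
        dy = g(x)  # y grows while the constraint is violated
        x = [x[j] + dt * dx[j] for j in range(len(x))]
        y = max(0.0, y + dt * dy)  # clipping keeps y >= 0 (assumption)
    return x, y

x_final, y_final = simulate([0.0, 0.0], 0.0)
print(x_final, y_final)
```

For this toy problem the only rest point of the iteration is $x = (0.5, 0.5)$ with $y = 1$. At that point the right-hand side of the $x$-equation vanishes, which is the stationarity condition of the KKT system, and $g(x) = 0$.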

Theorem 1.2. If the dynamical system (3) converges to a stable state $x(\cdot)$ and $y(\cdot)$, then the state $x(\cdot)$ converges to the optimal solution (type-1 solution) of problem (2).

Proof. The proof is similar to that in [3].

3. Conclusions

Using the KKT optimality conditions, we proposed a differential system that converges to a type-1 solution. The proof of its convergence (the proof of Theorem 1.2) is similar to the proof of Theorem 1 in [3].

References

[1]. H. C. Wu, The Karush-Kuhn-Tucker optimality conditions in an optimization problem with interval-valued objective function, European Journal of Operational Research, 176 (2007) 46-59.

[2]. M. S. Bazaraa, H. D. Sherali, C. M. Shetty, Nonlinear Programming, Wiley, NY, 1993.

[3]. S. Effati, M. Baymani, A new nonlinear neural network for solving convex nonlinear programming problems, Applied Mathematics and Computation, 168 (2005) 1370-1379.
