

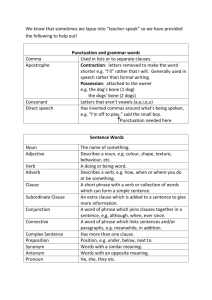

Learning Ensembles of First-Order Clauses That Optimize Precision-Recall Curves
Mark Goadrich
Computer Sciences Department, University of Wisconsin - Madison
Ph.D. Defense, August 13th, 2007

Biomedical Information Extraction
(Image courtesy of SEER Cancer Training Site)
Structured Database

Biomedical Information Extraction
http://www.geneontology.org

Biomedical Information Extraction
– NPL3 encodes a nuclear protein with an RNA recognition motif and similarities to a family of proteins involved in RNA metabolism.
– ykuD was transcribed by SigK RNA polymerase from T4 of sporulation.
– Mutations in the COL3A1 gene have been implicated as a cause of type IV Ehlers-Danlos syndrome, a disease leading to aortic rupture in early adult life.

Outline
Biomedical Information Extraction
Inductive Logic Programming
Gleaner
Extensions to Gleaner
– GleanerSRL
– Negative Salt
– F-Measure Search
– Clause Weighting (time permitting)

Inductive Logic Programming
Machine Learning
– Classify data into categories
– Divide data into train and test sets
– Generate hypotheses on the train set, then measure performance on the test set
In ILP, data are objects
– person, block, molecule, word, phrase, …
and relations between them
– grandfather, has_bond, is_member, …

Seeing Text as Relational Objects
Word: verb(…), alphanumeric(…), internal_caps(…)
Phrase: noun_phrase(…)
Relations: phrase_child(…, …), phrase_parent(…, …)
Sentence: long_sentence(…)

Protein Localization Clause
prot_loc(Protein,Location,Sentence) :-
    phrase_contains_some_alphanumeric(Protein,E),
    phrase_contains_some_internal_cap_word(Protein,E),
    phrase_next(Protein,_),
    different_phrases(Protein,Location),
    one_POS_in_phrase(Location,noun),
    phrase_contains_some_arg2_10x_word(Location,_),
    phrase_previous(Location,_),
    avg_length_sentence(Sentence).
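Aleph itself is a Prolog system. The following is only a toy Python illustration of what it means for a clause like the one above to "cover" an example. It makes three assumptions. First, background knowledge is stored as ground facts in tuples. Second, it follows Prolog's convention that terms starting with an uppercase letter or `_` are variables. Third, the clause body is satisfied if some variable binding makes every literal a known fact. The predicate names mirror the clause above, but the facts (`p1`, `p2`, `w3`, `s1`) and the helper names (`unify`, `solve`, `covers`) are hypothetical.

```python
from typing import Dict, Iterator, List, Optional, Set, Tuple

Literal = Tuple[str, ...]  # (predicate, arg1, arg2, ...)

def is_var(term: str) -> bool:
    # Prolog convention: variables start with an uppercase letter or '_'
    return term[0].isupper() or term[0] == "_"

def unify(lit: Literal, fact: Literal, subst: Dict[str, str]) -> Optional[Dict[str, str]]:
    """Extend subst so that lit matches the ground fact, or return None."""
    if lit[0] != fact[0] or len(lit) != len(fact):
        return None
    new = dict(subst)
    for term, value in zip(lit[1:], fact[1:]):
        if term == "_":  # anonymous variable: matches anything, binds nothing
            continue
        if is_var(term):
            if new.setdefault(term, value) != value:
                return None  # already bound to a different constant
        elif term != value:
            return None  # constant mismatch
    return new

def solve(body: List[Literal], facts: Set[Literal], subst: Dict[str, str]) -> Iterator[Dict[str, str]]:
    """Backtracking search for bindings that satisfy every literal in body."""
    if not body:
        yield subst
        return
    first, rest = body[0], body[1:]
    for fact in facts:
        extended = unify(first, fact, subst)
        if extended is not None:
            yield from solve(rest, facts, extended)

def covers(head: Literal, body: List[Literal], example: Literal, facts: Set[Literal]) -> bool:
    """True if the clause head :- body proves the ground example from facts."""
    start = unify(head, example, {})
    return start is not None and next(solve(body, facts, start), None) is not None

# Hypothetical background facts for one sentence s1 with phrases p1, p2
facts = {
    ("phrase_contains_some_alphanumeric", "p1", "w3"),
    ("phrase_contains_some_internal_cap_word", "p1", "w3"),
    ("different_phrases", "p1", "p2"),
    ("one_POS_in_phrase", "p2", "noun"),
}
head = ("prot_loc", "Protein", "Location", "Sentence")
body = [
    ("phrase_contains_some_alphanumeric", "Protein", "E"),
    ("phrase_contains_some_internal_cap_word", "Protein", "E"),
    ("different_phrases", "Protein", "Location"),
    ("one_POS_in_phrase", "Location", "noun"),
]
print(covers(head, body, ("prot_loc", "p1", "p2", "s1"), facts))  # True
print(covers(head, body, ("prot_loc", "p2", "p1", "s1"), facts))  # False
```

The shared variable `E` forces the first two literals to refer to the same word. This is how a first-order clause expresses relations between objects that a flat feature vector cannot state directly.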
ILP Background
Seed Example
– A positive example that our clause must cover
Bottom Clause
– All predicates which are true about the seed example
Clause search from the seed prot_loc(P,L,S):
prot_loc(P,L,S)
prot_loc(P,L,S) :- alphanumeric(P)
prot_loc(P,L,S) :- alphanumeric(P), leading_cap(L)

Clause Evaluation
Prediction vs. Actual: Positive or Negative, True or False
                 Actual Pos   Actual Neg
Predicted Pos    TP           FP
Predicted Neg    FN           TN
Focus on positive examples:
$$\text{Recall} = \frac{TP}{TP + FN} \qquad \text{Precision} = \frac{TP}{TP + FP} \qquad F_1 = \frac{2PR}{P + R}$$
The protein localization clause shown earlier scores 0.15 recall, 0.51 precision and 0.23 F1.

Aleph (Srinivasan ’03)
Aleph learns theories of clauses
– Pick a positive seed example
– Use heuristic search to find the best clause
– Pick a new seed from the uncovered positives and repeat until a threshold of positives is covered
Sequential learning is time-consuming.
Can we reduce time with ensembles? And also increase quality?

Gleaner (Goadrich et al. ’04, ’06)
Definition of Gleaner
– One who gathers grain left behind by reapers
Key Ideas of Gleaner
– Use Aleph as the underlying ILP clause engine
– Search the clause space with Rapid Random Restart (RRR)
– Keep the wide range of clauses usually discarded
– Create separate theories for diverse recall

Gleaner - Learning
– Create B bins over recall
– Generate clauses
– Record the best clause per bin
– Repeat for Seed 1, Seed 2, Seed 3, …, Seed K
[Figure: precision vs. recall, with the recall axis divided into bins]

Gleaner - Ensemble
[Figure: the clauses from bin 5 are applied to every example, positive and negative; each example receives a score, e.g., ex1: 12, ex2: 47, ex3: 55, …, ex598: 5, ex599: 14, ex600: 2, ex601: 18]

Gleaner - Ensemble
Examples are ranked by score, and precision and recall are computed at each score:
Example              Score   Precision   Recall
pos3: prot_loc(…)     55       1.00       0.05
neg28: prot_loc(…)    52       0.50       0.05
pos2: prot_loc(…)     47       0.66       0.10
…
neg4: prot_loc(…)     18       0.12       0.85
neg475: prot_loc(…)   17
pos9: prot_loc(…)     17       0.13       0.90
neg15: prot_loc(…)    16       0.12       0.90
[Figure: the resulting precision-recall curve]

Gleaner - Overlap
For each bin, take the topmost curve.
[Figure: precision-recall curves overlaid]

How to Use Gleaner (Version 1)
– Generate the tuneset curve
– User selects a recall bin (slide example: Recall = 0.50, Precision = 0.70)
– Return testset classifications ordered by their score

Gleaner Algorithm
Divide space into B bins
For K positive seed examples
– Perform RRR search with precision × recall heuristic
– Save best clause found in each bin b
For each bin b
– Combine clauses in b to form theory_b
– Find L of K threshold for theory_m which performs best in bin b on tuneset
Evaluate thresholded theories on testset

Aleph Ensembles (Dutra et al. ’02)
Compare to ensembles of theories
Ensemble Algorithm
– Use K different initial seeds
– Learn K theories containing C rules
– Rank examples by the number of theories that cover them

YPD Protein Localization
Hand-labeled dataset (Ray & Craven ’01)
– 7,245 sentences from 871 abstracts
– Examples are phrase-phrase combinations: 1,810 positive & 279,154 negative
1.6 GB of background knowledge
– Structural, Statistical, Lexical and Ontological
– In total, 200+ distinct background predicates
Performed five-fold cross-validation

Evaluation Metrics
Area Under Precision-Recall Curve (AUC-PR)
– All curves standardized to cover the full recall range
– AUC-PR averaged over 5 folds
Number of clauses considered
– Rough estimate of time

[Figure: PR Curves - 100,000 Clauses]
[Figure: Protein Localization Results]

Other Relational Datasets
Genetic Disorder (Ray & Craven ’01)
– 233 positive & 103,959 negative
Protein Interaction (Bunescu et al. ’04)
– 799 positive & 76,678 negative
Advisor
(Richardson and Domingos ’04)
– Students, Professors, Courses, Papers, etc.
– 113 positive & 2,711 negative

[Figure: Genetic Disorder Results]
[Figure: Protein Interaction Results]
[Figure: Advisor Results]

Gleaner Summary
Gleaner makes use of clauses that are not the highest-scoring ones, improving both speed and quality.
Issues with Gleaner
– Output is a PR curve, not a probability
– Redundant clauses across seeds
– L of K clause combination

Estimating Probabilities - SRL
Given highly skewed relational datasets
Produce accurate probability estimates
Gleaner only produces PR curves

GleanerSRL Algorithm (Goadrich ’07)
Divide space into B bins
For K positive seed examples
– Perform RRR search with precision × recall heuristic
– Save best clause found in each bin b
Create propositional feature vectors
Learn scores with SVM or other propositional learning algorithms
Calibrate scores into probabilities
Evaluate probabilities with cross entropy

GleanerSRL Algorithm: Learning with Gleaner
– Create B bins
– Generate clauses
– Record the best clause per bin
– Repeat for K seeds

Creating Feature Vectors
[Figure: the clauses from bin 5 turn each example into a feature vector of K Boolean and binned features, e.g., ex1: prot_loc(…) with score 12 and features 1, 1, …, 0]

[Figure: Learning Scores via SVM]

Calibrating Probabilities
Use Isotonic Regression (Zadrozny & Elkan ’03) to transform SVM scores into probabilities.
Score:         -2    -0.4   0.2   0.4   0.5   0.9   1.3   1.7   15
Class:          0     0     1     0     1     1     0     1     1
Probability:  0.00  0.00  0.50  0.50  0.66  0.66  0.66  1.00  1.00

[Figure: GleanerSRL Results for Advisor (Davis et al. ’05; Davis et al. ’07)]

[Figure: Diversity of Gleaner Clauses]

Negative Salt
Seed Example
– A positive example that our clause must cover
Salt Example
– A negative example that our clause should avoid
[Figure: seed and salt examples for prot_loc(P,L,S)]

Gleaner Algorithm with Negative Salt
Divide space into B bins
For K positive seed examples
– Select a negative salt example
– Perform RRR search with a salt-avoiding heuristic
– Save best clause found in each bin b
For each bin b
– Combine clauses in b to form theory_b
– Find L of K threshold for theory_m which performs best in bin b on tuneset
Evaluate thresholded theories on testset

[Figure: Diversity of Negative Salt]
[Figure: Effect of Salt on theory_m Choice]
[Figure: Negative Salt AUC-PR]

Gleaner Algorithm with F-Measure Search
Divide space into B bins
For K positive seed examples
– Perform RRR search with an F-Measure heuristic
– Save best clause found in each bin b
For each bin b
– Combine clauses in b to form theory_b
– Find L of K threshold for theory_m which performs best in bin b on tuneset
Evaluate thresholded theories on testset

RRR Search Heuristic
The heuristic function directs RRR search.
The F-Measure can provide direction:
$$F_\beta = \frac{(1 + \beta^2)\,P\,R}{\beta^2 P + R}$$
– Low values of β encourage precision
– High values of β encourage recall

[Figure: F0.01 Measure Search]
[Figure: F1 Measure Search]
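The scoring and calibration steps on the Gleaner - Ensemble and Calibrating Probabilities slides can be sketched in Python. This is a minimal illustration, not the thesis code, and it rests on three assumptions:

– An example's score in a bin is the number of that bin's K clauses covering it, so a threshold on the score plays the role of the L in "L of K".
– Tied examples are added to the prediction set together.
– Isotonic regression is fitted with the standard pool-adjacent-violators (PAV) algorithm.

The clause coverage sets and the function names are hypothetical. The SVM scores and classes are the ones on the calibration slide.

```python
from typing import Dict, List, Set, Tuple

def count_scores(clause_coverage: List[Set[str]], examples: List[str]) -> Dict[str, int]:
    """Score each example by how many of a bin's clauses cover it."""
    return {ex: sum(ex in covered for covered in clause_coverage) for ex in examples}

def pr_points(scores: Dict[str, int], positives: Set[str]) -> List[Tuple[int, float, float]]:
    """(threshold L, precision, recall) for each distinct score, highest first.

    An example is predicted positive when score >= L, so tied examples enter
    the prediction set together. Assumes at least one positive example.
    """
    n_pos = sum(1 for ex in scores if ex in positives)
    ranked = sorted(scores.items(), key=lambda kv: -kv[1])
    points: List[Tuple[int, float, float]] = []
    tp = fp = i = 0
    while i < len(ranked):
        threshold = ranked[i][1]
        while i < len(ranked) and ranked[i][1] == threshold:
            if ranked[i][0] in positives:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((threshold, tp / (tp + fp), tp / n_pos))
    return points

def isotonic_calibrate(scores: List[float], labels: List[int]) -> List[Tuple[float, float]]:
    """Pool-adjacent-violators fit: (score, probability) pairs sorted by score."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    blocks: List[List[float]] = []  # each block: [sum of labels, count]
    for i in order:
        blocks.append([float(labels[i]), 1.0])
        # Pool while the previous block's mean exceeds the newest block's mean
        while len(blocks) > 1 and blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]:
            total, count = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += count
    probs: List[float] = []
    for total, count in blocks:
        probs.extend([total / count] * int(count))
    return [(scores[i], p) for i, p in zip(order, probs)]

# Toy bin with K = 3 clauses (hypothetical coverage sets)
clauses = [{"pos1", "pos2", "neg1"}, {"pos1", "pos2"}, {"pos1", "neg2"}]
examples = ["pos1", "pos2", "pos3", "neg1", "neg2", "neg3"]
scores = count_scores(clauses, examples)
for L, p, r in pr_points(scores, {"pos1", "pos2", "pos3"}):
    print(f"L={L}: precision={p:.2f} recall={r:.2f}")

# SVM scores and classes from the Calibrating Probabilities slide
svm_scores = [-2, -0.4, 0.2, 0.4, 0.5, 0.9, 1.3, 1.7, 15]
classes = [0, 0, 1, 0, 1, 1, 0, 1, 1]
for s, prob in isotonic_calibrate(svm_scores, classes):
    print(f"score {s:>5}: P(pos) = {prob:.2f}")
```

On the slide's data, PAV produces the same four probability levels as the slide. The middle level is 2/3, which prints as 0.67 here where the slide shows 0.66. The sketch does not interpolate between PR points, so it does not compute AUC-PR.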
[Figure: F100 Measure Search]
[Figure: F-Measure AUC-PR Results for Genetic Disorder and Protein Localization]

Weighting Clauses
Alter the L of K combination in Gleaner
Within a Single Theory
– Cumulative weighting schemes are successful
– Precision is the highest-scoring scheme
Within Gleaner
– Precision beats Equal Weighted and Naïve Bayes
– Significant results on the genetic-disorder dataset

Weighting Clauses
The clauses from bin 5 that match an example (e.g., ex1: prot_loc(…)) are combined with weights W11, W13, W14, … using one of these schemes:
– Cumulative: ∑(precision of each matching clause), ∑(recall of each matching clause), or ∑(F1 measure of each matching clause)
– Naïve Bayes and TAN: learn a probability for the example
– Ranked List: max(precision of each matching clause)
– Weighted Vote: ave(precision of each matching clause)

Dominance Results
Statistically significant dominance in (i, j)
– Precision is never dominated
– Naïve Bayes is competitive with cumulative schemes
[Figure: Weighting Gleaner Results]

Conclusions and Future Work
Gleaner is a flexible and fast ensemble algorithm for highly skewed ILP datasets.
Other Work
– Proper interpolation of PR space (Goadrich et al. ’04, ’06)
– Relationship of PR and ROC curves (Davis and Goadrich ’06)
Future Work
– Explore Gleaner on propositional datasets
– Learn a heuristic function for diversity (Oliphant and Shavlik ’07)

Acknowledgements
USA DARPA Grant F30602-01-2-0571
USA Air Force Grant F30602-01-2-0571
USA NLM Grant 5T15LM007359-02
USA NLM Grant 1R01LM07050-01
UW Condor Group
Jude Shavlik, Louis Oliphant, David Page, Vitor Santos Costa, Ines Dutra, Soumya Ray, Marios Skounakis, Mark Craven, Burr Settles, Patricia Brennan, AnHai Doan, Jesse Davis, Frank DiMaio, Ameet Soni, Irene Ong, Laura Goadrich, all 6th Floor MSCers
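The F-measure heuristic and the clause-weighting schemes can be made concrete with a short Python sketch. It rests on three assumptions:

– It uses the standard F_β definition, (1 + β²)PR / (β²P + R).
– Each matching clause is summarized only by its (precision, recall) on a tuning set.
– The slide's scheme names map to a sum, a maximum, or a mean of those statistics.

Naïve Bayes and TAN are learned models and are not sketched. The dictionary keys, function names and clause statistics are hypothetical.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

Clause = Tuple[float, float]  # (precision, recall) measured on a tuning set

def f_beta(precision: float, recall: float, beta: float) -> float:
    """F_beta = (1 + beta^2) P R / (beta^2 P + R); beta = 1 gives F1."""
    if precision == 0 and recall == 0:
        return 0.0
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)

# Each scheme maps the clauses that match one example to a single score.
SCHEMES: Dict[str, Callable[[List[Clause]], float]] = {
    "cumulative_precision": lambda m: sum(p for p, _ in m),
    "cumulative_recall": lambda m: sum(r for _, r in m),
    "cumulative_f1": lambda m: sum(f_beta(p, r, 1.0) for p, r in m),
    "ranked_list": lambda m: max((p for p, _ in m), default=0.0),
    "weighted_vote": lambda m: mean(p for p, _ in m) if m else 0.0,
}

def score_example(matching: List[Clause], scheme: str) -> float:
    """Score an example from the (precision, recall) of its matching clauses."""
    return SCHEMES[scheme](matching)

# Hypothetical statistics for three clauses that match one example
matching = [(0.51, 0.15), (0.80, 0.05), (0.30, 0.40)]
for name in SCHEMES:
    print(name, round(score_example(matching, name), 3))

print(round(f_beta(0.51, 0.15, 1.0), 2))    # 0.23, F1 of the protein localization clause
print(round(f_beta(0.51, 0.15, 0.01), 3))   # approaches precision as beta -> 0
print(round(f_beta(0.51, 0.15, 100.0), 3))  # approaches recall as beta -> infinity
```

The last two lines show why F0.01 search favors high-precision clauses and F100 search favors high-recall clauses. Ranking examples by any scheme's score yields a PR curve in the same way as the count-based L of K score.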