dimaio.ilp04.ppt

advertisement

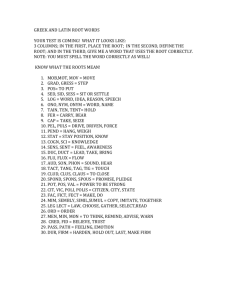

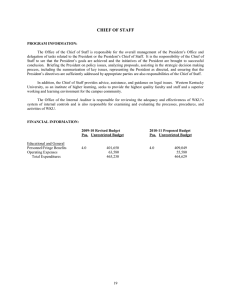

Learning an Approximation to Inductive Logic Programming Clause Evaluation
Frank DiMaio and Jude Shavlik
Computer Sciences Department, University of Wisconsin - Madison, USA
Inductive Logic Programming, 8 September 2004

Motivation
• Given
    – bottom clause ⊥
    – |E| examples
    – maximum clause length c
• ILP's runtime, assuming constant-time clause evaluation
    – O(|⊥|^c |E|) for exhaustive search
    – O(|⊥| |E|) for greedy search

Motivation
• Evaluation time of a clause on 1 example is exponential in # variables (Dantsin et al 2001)
• Many clause evaluations in datasets with long bottom clauses, long maximum clause length, or many examples
• Result: long running time

ILP Time Complexity
• Search algorithm improvements
    – Better heuristic functions, search strategy
    – Random uniform sampling (Srinivasan, 2000)
    – Stochastic search (Rückert & Kramer, 2003)

ILP Time Complexity
• Faster clause evaluations
    – Clause reordering & optimizing (Blockeel et al 2002, Santos Costa et al 2003)
    – Stochastic matching (Sebag et al, 2000)
    – Sampling the training examples
• Evaluation of a candidate still O(|E|)

Outline
• Bottom clause and ILP search space
• Learning a fast approximation to the clause evaluation function
• Using the clause evaluation function approximation to speed up ILP

Bottom Clause
Given background knowledge as facts and relations in first-order logic
[Figure: example ex2, block C on block B on block A]
    onTopOf(blockB,blockA,ex2).
    onTopOf(blockC,blockB,ex2).
    above(A,B,C) :- onTopOf(A,B,C).
    above(A,B,C) :- onTopOf(A,Z,C), above(Z,B,C).
Generate example's bottom clause (⊥) by saturating that example (Muggleton, 1995)
⊥ is the complete set of all fully ground literals connected to the example

Bottom Clause
ex2, with the facts and rules above, saturates to:
    positive(ex2) :-
        onTopOf(blockB,blockA,ex2),
        onTopOf(blockC,blockB,ex2),
        above(blockB,blockA,ex2),
        above(blockC,blockB,ex2),
        above(blockC,blockA,ex2).

Building Candidate Hypotheses
    positive(E).
    positive(E) :- onTopOf(A,B,E), above(B,C,E).
    positive(ex2) :- onTopOf(blockB,blockA,ex2), onTopOf(blockC,blockB,ex2), above(blockB,blockA,ex2), above(blockC,blockB,ex2), ...

A Faster Clause Evaluation
• Our idea: predict clause's evaluation in O(1) time (i.e., independent of number of examples)
• Use multilayer feed-forward neural network to approximately score candidate clauses
• NN inputs specify bottom clause literals selected
• There is a unique input vector for every candidate clause in the search space

Neural Network Topology
Selected literals from ⊥
    containsBlock(ex2,blockB)
    onTopOf(blockB,blockA)
    isRound(blockA)
    isRound(blockB)
Candidate Clause
    positive(A) :- containsBlock(A,B), onTopOf(B,C), isRound(B), isRound(C).
Network inputs: one per literal in ⊥ (1 if selected, 0 otherwise)
    containsBlock(ex2,blockB)   1
    onTopOf(blockB,blockA)      1
    isRed(blockA)               0
    isRound(blockA)             1
    isBlue(blockB)              0
Network inputs: number of selected literals per predicate
    count(containsBlock)        1
    count(onTopOf)              1
    count(isRed)                0
    count(isRound)              2
Network inputs: clause summary
    length                      5
    number of variables         3
    number of shared variables  3
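The three groups of network inputs on the slide above (one 0/1 input per bottom-clause literal, one count per predicate, and a few clause-level summary values) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the tuple representation of literals, the names `encode_clause`, `BOTTOM`, `HEAD`, and `SELECTED`, and the reading of "shared variable" as a variable occurring in more than one literal (head included) are all hypothetical. The sketch also assumes each distinct constant in the selected ground literals becomes one variable of the candidate clause.

```python
from collections import Counter

# Ground literals as (predicate, args) tuples, taken from the slide's example.
HEAD = ("positive", ("ex2",))
BOTTOM = [
    ("containsBlock", ("ex2", "blockB")),
    ("onTopOf", ("blockB", "blockA")),
    ("isRed", ("blockA",)),
    ("isRound", ("blockA",)),
    ("isBlue", ("blockB",)),
    ("isRound", ("blockB",)),
]
# Literals selected for: positive(A) :- containsBlock(A,B), onTopOf(B,C),
#                                       isRound(B), isRound(C).
SELECTED = [BOTTOM[0], BOTTOM[1], BOTTOM[3], BOTTOM[5]]


def encode_clause(selected, bottom=BOTTOM, head=HEAD):
    """Return the input vector for the clause built from `selected` literals."""
    chosen = set(selected)

    # Group 1: one 0/1 input per literal of the bottom clause.
    literal_bits = [1 if lit in chosen else 0 for lit in bottom]

    # Group 2: number of selected literals per predicate symbol,
    # with predicates ordered by first appearance in the bottom clause.
    predicates = list(dict.fromkeys(lit[0] for lit in bottom))
    counts = Counter(lit[0] for lit in bottom if lit in chosen)
    predicate_counts = [counts[p] for p in predicates]

    # Group 3: clause length (head included), number of variables, and
    # number of variables that occur in more than one literal (assumption).
    literals = [head] + [lit for lit in bottom if lit in chosen]
    occurrences = Counter(arg for _, args in literals for arg in set(args))
    n_shared = sum(1 for n in occurrences.values() if n > 1)
    summary = [len(literals), len(occurrences), n_shared]

    return literal_bits + predicate_counts + summary


vector = encode_clause(SELECTED)
```

For the slide's candidate clause, the last three entries of `vector` come out as length 5, 3 variables, and 3 shared variables, matching the values shown on the slide. The vector has a fixed size for a given bottom clause, which is what lets a fixed-topology network score any candidate drawn from it.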
Neural Network Topology
Inputs
    containsBlock(ex2,blockB)   1
    onTopOf(blockB,blockA)      1
    isRed(blockA)               0
    isRound(blockA)             1
    isBlue(blockB)              0
    …
    count(containsBlock)        1
    count(onTopOf)              1
    count(isRed)                0
    count(isRound)              2
    …
    length                      5
    number of variables         3
    number of shared variables  3
Outputs (Σ units)
    Predicted Positive Cover
    Predicted Negative Cover

Experiments
• Trained (clause → score) on benchmark datasets
    – Carcinogenesis
    – Mutagenesis
    – Protein Metabolism
    – Nuclear Smuggling
• Clauses generated by uniform random sampling
• Clause evaluation metric
    compression = (posCovered – negCovered – length + 1) / totalPositives
• 10-fold cross-validation learning curves

Results
[Figure: learning curves of 10-fold c.v. test-set RMS error (axis 0.00 to 0.16) against training set size (0 to 1000 clauses) for Protein Metabolism, Nuclear Smuggling, Mutagenesis, and Carcinogenesis]

Why not just use a fraction of examples?
We compare squared error of
1. estimating scores with trained network
2. estimating scores using subset of examples

Learning vs. Sampling
[Figure: RMS error (axis 0.00 to 0.15) of 10%, 25%, 50%, and 90% example sampling versus the neural net, for Protein Metabolism, Carcinogenesis, Mutagenesis, and Nuclear Smuggling]

Using the Trained Network
1. Rapidly explore search space
2. Explore network-defined surface
3. Extract concepts from trained network

Online Training Algorithm
    Begin with initial burn-in training
    When new clauses are evaluated on actual data, yielding I/O pair <C,[P,N]>
        insert <C,[P,N]> into recent_cache
        if one of top 100 clauses seen so far
            insert <C,[P,N]> sorted into best_cache
    At regular interval
        train net on recent_cache for fixed number of epochs
        train net on best_cache for fixed number of epochs

1. Rapidly explore search space
• O(1) clause evaluation tool
• Whenever a clause evaluation is needed, approximate on network
• Before expanding network-approximated clause, evaluate against real data
• Behavior depends on underlying search
    – Branch and bound – optimize order of evaluation
    – A* (Aleph's default) – ignore non-promising clauses
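The Online Training Algorithm slide above can be sketched in a few lines. This is a hedged illustration rather than the authors' code: the class name `OnlineTrainer`, the callback `train_fn(pairs, epochs)`, the bounded size of `recent_cache`, and the caller-supplied `score` used to rank clauses are all assumptions. Where the slide keeps `best_cache` as a sorted list, this sketch swaps in a min-heap, which keeps the same top-k set.

```python
import heapq
from collections import deque
from itertools import count


class OnlineTrainer:
    """Caches <clause, (pos, neg)> pairs and retrains at a regular interval."""

    def __init__(self, train_fn, top_k=100, recent_size=500,
                 interval=50, epochs=10):
        self.train_fn = train_fn      # hypothetical: train_fn(pairs, epochs)
        self.top_k = top_k            # slide: top 100 clauses seen so far
        self.recent_cache = deque(maxlen=recent_size)  # assumed bounded FIFO
        self.best_cache = []          # min-heap of (score, tie_breaker, pair)
        self.interval = interval      # retrain every `interval` evaluations
        self.epochs = epochs
        self._tie = count()           # avoids comparing pairs on equal scores
        self._seen = 0

    def record(self, clause, pos, neg, score):
        """Call whenever a clause has been evaluated on the actual data."""
        pair = (clause, (pos, neg))
        self.recent_cache.append(pair)

        # Keep the pair in best_cache only if it ranks in the top_k so far.
        entry = (score, next(self._tie), pair)
        if len(self.best_cache) < self.top_k:
            heapq.heappush(self.best_cache, entry)
        elif score > self.best_cache[0][0]:
            heapq.heapreplace(self.best_cache, entry)

        # At a regular interval, train on both caches in turn.
        self._seen += 1
        if self._seen % self.interval == 0:
            self.train_fn(list(self.recent_cache), self.epochs)
            self.train_fn([p for _, _, p in self.best_cache], self.epochs)
```

Training on `best_cache` as well as `recent_cache` keeps the network accurate on the high-scoring clauses the search cares about most, even after they have aged out of the recent window.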
1. Rapidly explore search space
[Animation, 7 frames: best-first search over the clauses below; scores marked NN are network estimates, unmarked scores come from evaluation on real data]
Clauses in the search tree
    pos(A).
    pos(A) :- f(A,B).
    pos(A) :- g(A).
    pos(A) :- f(A,B), g(A).
    pos(A) :- f(A,B), g(B).
Open list over the frames
    – pos(A). scores 0; its refinements are estimated: pos(A) :- g(A). 3.7NN, pos(A) :- f(A,B). 2.3NN
    – pos(A) :- g(A). is evaluated on real data before expansion: 2
    – pos(A) :- f(A,B). (2.3NN) is then evaluated on real data: 4
    – pos(A) :- f(A,B). becomes the current node; its refinements are estimated: pos(A) :- f(A,B), g(A). 5.7NN, pos(A) :- f(A,B), g(B). 1.6NN

2. Explore network-defined surface
• Trained network defines function over space of candidate clauses

2. Explore network-defined surface
• Explore this surface using stochastic gradient ascent
• Rapid random restarts (Zelezny et al, 2002)
    – random clause generation
    – short local search
• Use network-defined surface to make “intelligent” rapid random restarts (Boyan & Moore, 2000)

Algorithm Illustration
Alternate
1. searching network-defined surface
2. exploring clause evaluation function surface
[Figure: network approx. clause eval. fn. and clause evaluation fn., plotted over candidate clauses]
3. Extract concepts from trained net
• Extract decision tree from trained neural network (Craven & Shavlik 1995)
• Predicate invention
    – High-weight edges into single hidden unit
    – Add invented predicates to background

Biased-RRR Results
[Figure: average coverage against number of clause evaluations for biased-RRR and RRR; panels for Protein Metabolism, Carcinogenesis, and Nuclear Smuggling]

Future Work
• Implement and test other uses (#1 and #3) for utilizing trained neural network
• Look at relative ranking of network predictions rather than squared error
    – Rankprop concerned with correctly predicting ranking (Caruana et al, 1997)
• Approximation quality in phase transition? (Botta et al, 2003)

Conclusion
• Can learn to accurately estimate score of candidate clauses
• Several potential uses for speeding up ILP
• Helps scale ILP to ever larger (#ex's, search space size) datasets

Acknowledgements
• NLM Grant 1T15 LM007359-01
• US Air Force Grant F30602-01-2-0571
• NLM Grant 1R01 LM07050-01