Assessment in College Teaching

Ursula Waln, Director of Student Learning Assessment

Central New Mexico Community College

Overview

Grading, Assessment & the Purpose of these Slides

Grading and Assessment Go Hand-in-Hand

Grading

• Summarizing student performance symbolically

• Percentages correct

• Points earned

• Letter grades

• A holistic evaluation of work

• Used to communicate student success relative to criteria and/or other students

Assessment

• Analyzing student learning

• What students learned well

• What they didn’t learn so well

• The factors that influenced the learning

• A multifaceted evaluation of student progress

• Used to identify ways to improve learning

• Does not have to involve grades

The Purpose of these Slides

• These slides aim to provide an overview of three techniques instructors can use to get the most out of their course-level assessment efforts:

Classroom Assessment Techniques (CATs)

• For formative assessment

Item Analysis

• For objective evaluation

Descriptive Rubrics

• For subjective evaluation

Classroom Assessment Techniques

For Formative Assessment

A Comprehensive, Authoritative Resource

• Angelo, T. A., & Cross, K. P. (1993). Classroom assessment techniques: A handbook for college teachers (2nd ed.). San Francisco, CA: Jossey-Bass.

• Describes 50 commonly used classroom assessment techniques (CATs)

• Emphasizes the importance of having clear learning goals

• Promotes planned, intentional use to gauge student progress

• Encourages discussing results with the students

• To promote learning

• To teach students to monitor their own learning progress

• Encourages the use of insights gained to redirect instruction

• Examples of CATs are briefly described in the following 10 slides.

Prior Knowledge, Recall & Understanding

• Misconception/Preconception Check

• Having students write answers to questions designed to uncover prior knowledge or beliefs that may impede learning

• Empty Outlines

• Providing students with an empty or partially completed outline and having them fill it in

• Memory Matrix

• Giving students a table with column and row headings and having them fill in the intersecting cells with relevant details, match the categories, etc.

Skill in Analysis & Critical Thinking

• Categorizing Grid

• Giving students a table with row headings and having students match by category and write in corresponding items from a separate list

• Content, Form, and Function Outlines

• Having students outline the what, how, and why related to a concept

• Analytic Memos

• Having students write a one- or two-page analysis of a problem or issue as if they were writing to an employer, client, stakeholder, politician, etc.

Skill in Synthesis & Creative Thinking

• Approximate Analogies

• Having students complete the analogy A is to B as ___ is to ___, with A and B provided

• Concept Maps

• Having students illustrate relationships between concepts by creating a visual layout of bubbles and arrows connecting words and/or phrases

• Annotated Portfolios

• Having students create portfolios presenting a limited number of works related to the specific course, a narrative, and maybe supporting documentation

Skill in Problem Solving

• Problem Recognition Tasks

• Presenting students with a few examples of common problem types and then asking them to identify the particular type of problem each represents

• What’s the Principle?

• Presenting students with a few examples of common problem types and then asking them to state the principle that best applies to each problem

• Documented Problem Solutions

• Having students not only show their work, but also explain next to it in writing how they worked the problem out (“show and tell”)

Skill in Application & Performance

• Directed Paraphrasing

• Having students paraphrase part of a lesson for a specific audience and purpose

• Application Cards

• Handing out an index card (or slip of scratch paper) and having students write down at least one ‘real-world’ application for what they have learned

• Paper or Project Prospectus

• Having students create a brief, structured plan for a paper or project, anticipating and identifying the elements to be developed

Awareness of Attitudes & Values

• Profiles of Admirable Individuals

• Having students write a brief, focused profile of an individual – in a field related to the course – whose values, skills, or actions they admire

• Everyday Ethical Dilemmas

• Presenting students with a case study that poses an ethical dilemma – related to the course – and having them write anonymous responses

• Course-Related Self-Confidence Surveys

• Having students write responses to a few questions aimed at measuring their self-confidence in relation to a specific skill or ability

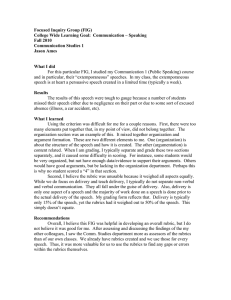

Self-Awareness as Learners

• Focused Autobiographical Sketches

• Having students write one to two pages about a single, successful learning experience in their past relevant to the learning in the course

• Interest/Knowledge/Skills Checklists

• Giving students a checklist of the course topics and/or skills and having them rate their level of interest, skill, and/or knowledge for each

• Goal Ranking and Matching

• Having students write down a few goals they hope to achieve – in relation to the course/program – and rank those goals; then comparing student goals to instructor/program goals to help students better understand what the course/program is about

Course-Related Study Skills & Behaviors

• Productive Study-Time Logs

• Having students record how much time they spend studying, when they study, and/or how productively they study

• Punctuated Lectures

• Stopping periodically during lectures and having students reflect upon and then write briefly about their listening behavior just prior and how it helped or hindered their learning

• Process Analysis

• Having students keep a record of the steps they take in carrying out an assignment and then reflect on how well their approach worked

Reactions to Instruction

• Teacher-Designed Feedback Forms

• Having students respond anonymously to 3 to 7 questions in multiple-choice, Likert scale, or short-answer formats to get course-specific feedback

• Group Instructional Feedback Technique

• Having someone else (other than the instructor) poll students on what works, what doesn’t, and what could be done to improve the course

• Classroom Assessment Quality Circles

• Involving groups of students in conducting structured, ongoing assessment of course materials, activities, and assignments and suggesting ways to improve student learning

Reactions to Class Activities & Materials

• Group-Work Evaluations

• Having students answer questions to evaluate team dynamics and learning experiences following cooperative learning activities

• Reading Rating Sheets

• Having students rate their own reading behaviors and/or the interest, relevance, etc., of a reading assignment

• Exam Evaluations

• Having students provide feedback that reflects on the degree to which an exam (and preparing for it) helped them to learn the material, how fair they think the exam is as an assessment of their learning, etc.

Item Analysis

For Objective Evaluation

Item Analysis

• Looks at frequency of correct responses (or behaviors) in connection with overall performance

• Used to examine item reliability

• How consistently a question or performance criterion discriminates between high and low performers

• Can be useful in improving validity of measures

• Can help instructors decide whether to eliminate certain items from the grade calculations

• Can reveal specific strengths and gaps in student learning

How Item Analysis Works

• Groups students by the highest, mid-range, and lowest overall scores and examines item responses by group

• Assumes that higher-scoring students have a higher probability of getting any given item correct than do lower-scoring students

• May have studied and/or practiced more and understood the material better

• May have greater test-taking savvy, less anxiety, etc.

• Produces a calculation for each item

• Do it yourself to easily calculate a group difference or discrimination index

• Use EAC Outcomes (a Blackboard plug-in made available to all CNM faculty by the Nursing program) to generate a point-biserial correlation coefficient

• Gives the instructor a way to analyze performance on each item

One Way to Do Item Analysis by Hand

Shared by Linda Suskie at the NMHEAR Conference, 2015

| Item | Tally of those in Top 27% who missed item* | Tally of those in the Middle 46% who missed item | Tally of those in the Lower 27% who missed item* | Total % Who Missed Item | Group Difference (# in Lower minus # in Top) |
|---|---|---|---|---|---|
| 1 | 0 | 22 | 17 | 34% | 17 |
| 2 | 7 | 20 | 19 | 40% | 12 |
| 3 | 3 | 1 | 2 | 5% | -1 |
| 4 | 0 | 9 | 11 | 17% | 11 |

* You can use whatever portion you want for the top and lower groups, but the two groups need to be equal in size. Using 27% is the accepted convention (Truman Kelley, 1939).
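The tallying procedure above can also be sketched in code. This is a minimal illustration, not part of the method as presented at the conference: it assumes each student's responses are available as a list of 1s (correct) and 0s (missed), and the function name `group_differences` and the sample data are hypothetical.

```python
from typing import List


def group_differences(responses: List[List[int]], portion: float = 0.27) -> List[int]:
    """Return, for each item, (# in lower group who missed) - (# in top group who missed).

    responses[s][i] is 1 if student s answered item i correctly, else 0.
    The top and lower groups are the same size (27% of students by default).
    """
    group_size = max(1, round(portion * len(responses)))
    # Rank students by total score, highest first.
    ranked = sorted(responses, key=sum, reverse=True)
    top, lower = ranked[:group_size], ranked[-group_size:]
    diffs = []
    for i in range(len(responses[0])):
        missed_top = sum(1 - row[i] for row in top)
        missed_lower = sum(1 - row[i] for row in lower)
        diffs.append(missed_lower - missed_top)
    return diffs


# Hypothetical data: 10 students, 4 items (so each group holds 3 students).
example = [
    [1, 1, 1, 1], [1, 1, 1, 1], [1, 1, 1, 0],
    [1, 1, 0, 0], [1, 0, 1, 0], [0, 1, 1, 0], [1, 0, 0, 1],
    [0, 0, 1, 0], [0, 0, 0, 1], [0, 0, 0, 0],
]
print(group_differences(example))  # [3, 3, 2, 1]
```

When several students share the score at a group boundary, which of them lands in the top or lower group is arbitrary, so results for small classes should be read with that in mind.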

Another Way to Do Item Analysis by Hand

Rasch Item Discrimination Index (D)

N=31 because the upper and lower groups each contain 31 students (115 students tested)

| Item | # in Upper Group who answered correctly (#UG) | Portion of UG who answered correctly ($p_{UG}$) | # in Lower Group who answered correctly (#LG) | Portion of LG who answered correctly ($p_{LG}$) | Discrimination Index (D) |
|---|---|---|---|---|---|
| 1 | 31 | 1.00 (100%) | 14 | 0.45 (45%) | 0.55 |
| 2 | 24 | 0.77 (77%) | 12 | 0.39 (39%) | 0.38 |
| 3 | 28 | 0.90 (90%) | 29 | 0.93 (93%) | -0.03 |
| 4 | 31 | 1.00 (100%) | 20 | 0.65 (65%) | 0.35 |

$D = p_{UG} - p_{LG}$ or $D = \frac{\#UG - \#LG}{N}$

A discrimination index of 0.4 or greater is generally regarded as high and anything less than 0.2 as low (R.L. Ebel, 1954).
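As a rough sketch, the discrimination index and the thresholds just cited can be computed from the counts for the four items above. The function names and the "moderate" label for values between 0.2 and 0.4 are assumptions made for illustration; the slide only names the high and low ranges.

```python
def discrimination_index(n_upper_correct: int, n_lower_correct: int, group_size: int) -> float:
    """D = (#UG - #LG) / N, where N is the size of each group."""
    return (n_upper_correct - n_lower_correct) / group_size


def describe(d: float) -> str:
    """Label D using the 0.4 and 0.2 cut-offs; 'moderate' is an assumed label."""
    if d >= 0.4:
        return "high"
    if d < 0.2:
        return "low"
    return "moderate"


N = 31  # students in each of the upper and lower groups
# item number -> (# correct in upper group, # correct in lower group)
counts = {1: (31, 14), 2: (24, 12), 3: (28, 29), 4: (31, 20)}

results = {item: discrimination_index(ug, lg, N) for item, (ug, lg) in counts.items()}
for item, d in results.items():
    print(f"Item {item}: D = {d:.3f} ({describe(d)})")
```

Working from the raw counts gives about 0.387 for item 2, slightly above the 0.38 obtained by subtracting the rounded portions (0.77 minus 0.39); the difference is only a rounding effect.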

The Same Thing but Less Complicated

Rasch Item Discrimination Index (D)

N in Upper and Lower Groups is 31 (27% of 115 students): $N = 0.27 \times 115 \approx 31$

| Item | # in Upper Group who answered correctly (#UG) | # in Lower Group who answered correctly (#LG) | Calculation | Discrimination Index (D) |
|---|---|---|---|---|
| 1 | 31 | 14 | (31 − 14) / 31 | 0.55 |
| 2 | 24 | 12 | (24 − 12) / 31 | 0.38 |
| 3 | 28 | 29 | (28 − 29) / 31 | -0.03 |
| 4 | 31 | 20 | (31 − 20) / 31 | 0.35 |

$D = \frac{\#UG - \#LG}{N}$

It isn’t necessary to calculate the portions of correct responses in each group if you use the formula shown here.

Example of an EAC Outcomes Report

A point-biserial correlation is the Pearson correlation between responses to a particular item and scores on the total test (with or without that item).

Correlation coefficients range from -1 to 1.

This is available to CNM faculty through Blackboard course tools.
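For readers who want to see what the coefficient measures, here is a minimal sketch of a point-biserial calculation. It is not the EAC Outcomes implementation; the function names and the five-student data set are hypothetical, and the optional `exclude_item` flag corresponds to computing the total "without that item."

```python
from math import sqrt
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Plain Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when either variable is constant")
    return cov / sqrt(var_x * var_y)


def point_biserial(item: Sequence[int], totals: Sequence[float], exclude_item: bool = False) -> float:
    """Correlate 0/1 item responses with total test scores.

    With exclude_item=True, each student's item score is first removed
    from that student's total.
    """
    if exclude_item:
        totals = [t - i for t, i in zip(totals, item)]
    return pearson(item, totals)


# Hypothetical data: five students' responses to one item and their total scores.
item_scores = [1, 1, 1, 0, 0]
total_scores = [5, 4, 3, 2, 1]
r_with = point_biserial(item_scores, total_scores)
r_without = point_biserial(item_scores, total_scores, exclude_item=True)
print(round(r_with, 3), round(r_without, 3))
```

Excluding the item from the total usually gives a somewhat lower coefficient, because the item no longer contributes to the score it is being compared against.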

Identifying Key Questions

• A key (a.k.a. signature) question is one that provides information about student learning in relation to a specific instructional objective (or student learning outcome statement).

• The item analysis methods shown in the preceding slides can help you identify and improve the reliability of key questions.

• A low level of discrimination may indicate a need to tweak the wording.

• Improving discrimination value also improves question validity.

• The more valid an assessment measure, the more useful it is in gauging student learning.

Detailed Multiple-Choice Item Analysis

• The detailed item analysis method shown on the next slide is for use with key multiple-choice items.

• This type of analysis can provide clues to the nature of students’ misunderstanding, provided:

• The item is a valid measure of the instructional objective

• Incorrect options (distractors) are written to be diagnostic (i.e., to reveal misconceptions or breakdowns in understanding)

Example of a Detailed Item Analysis

Item 2 of 4. The correct option is E. (115 students tested)

| Item Response Pattern | A | B | C | D | E | Row Total |
|---|---|---|---|---|---|---|
| Upper 27% | 2 (6.5%) | 5 (16%) | 0 | 0 | 24 (77.5%) | 31 |
| Middle 46% | 3 (6%) | 14 (26%) | 2 (4%) | 1 (2%) | 33 (62%) | 53 |
| Lower 27% | 5 (16%) | 7 (23%) | 5 (16%) | 2 (6%) | 12 (39%) | 31 |
| Grand Total | 10 (8.5%) | 26 (23%) | 7 (6%) | 3 (2.5%) | 69 (60%) | 115 |

These results suggest that distractor B might provide the greatest clue about breakdown in students’ understanding, followed by distractor A, then C.
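The same tabulation can be sketched in a few lines of code, using the counts from the item 2 example above. Ranking distractors by how many students chose them overall is one simple heuristic that happens to reproduce the ordering noted here; it is an assumption of this sketch rather than a rule stated in the slides.

```python
# Number of students in each group who chose each option for item 2.
counts = {
    "Upper": {"A": 2, "B": 5, "C": 0, "D": 0, "E": 24},
    "Middle": {"A": 3, "B": 14, "C": 2, "D": 1, "E": 33},
    "Lower": {"A": 5, "B": 7, "C": 5, "D": 2, "E": 12},
}
correct_option = "E"

# Grand total per option across the three groups.
totals = {opt: sum(group[opt] for group in counts.values()) for opt in "ABCDE"}

# Share of each group choosing each option, as a percentage of that group.
percentages = {
    name: {opt: 100 * n / sum(group.values()) for opt, n in group.items()}
    for name, group in counts.items()
}

# Distractors ordered from most to least frequently chosen.
ranked_distractors = sorted(
    (opt for opt in totals if opt != correct_option),
    key=totals.get,
    reverse=True,
)
print(totals)
print(ranked_distractors)  # ['B', 'A', 'C', 'D']
```

Comparing the `percentages` rows for the upper and lower groups can add detail; for instance, option C was chosen by no one in the upper group but by five students in the lower group.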

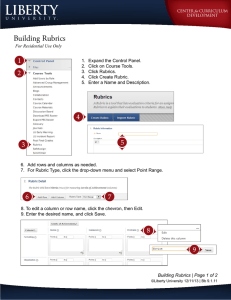

Descriptive Rubrics

For Subjective Evaluation

Rubric: Just Another Word for Scoring Guide

• A rubric is any scoring guide that lists specific criteria, such as a checklist or a rating scale.

• Checklists are used for objective evaluation (did it or did not do it).

• Rating scales are used for subjective evaluation (gradations of quality).

• Descriptive rubrics are rating scales that contain descriptions of what constitutes each level of performance.

• Maybe call them descriptive scoring guides if you don’t like the word rubric.

• Most people who talk about rubrics are referring to descriptive rubrics, not checklists or rating scales.

The Purpose of Descriptive Rubrics

• Descriptive rubrics are used to lend objectivity to evaluations that are inherently subjective, e.g.:

• Grading of artwork, papers, performances, projects, speeches, etc.

• Assessing overall student progress toward specific learning outcomes (course and/or program level)

• Monitoring developmental levels of individuals as they progress through a program (‘developmental rubrics’).

• Conducting employee performance evaluations.

• Assessing group progress toward a goal.

• When used by multiple evaluators, descriptive rubrics can minimize differences in rater thresholds (especially if normed).

Why Use Descriptive Rubrics in Class?

• In giving assignments, descriptive rubrics can help clarify the instructor’s expectations and grading criteria for students.

• Students can ask more informed questions about the assignment.

• A clear sense of what is expected can inspire students to achieve more.

• The rubric helps explain to students why they received the grade they did.

• Descriptive rubrics help instructors remain fair and consistent in their scoring of student work (more so than rating scales).

• Scoring is easier and faster when descriptions clearly distinguish levels.

• The effects of scoring fatigue (e.g., grading more generously toward the bottom of a stack due to disappointed expectations) are minimized.

Why Use Descriptive Rubrics for Assessment?

• Clearly identifying benchmark levels of performance and describing what learning looks like at each level establishes a solid framework for interpreting multiple measures of performance.

• Student performance on different types of assignments and at different points in the learning process can be interpreted for analysis using a descriptive rubric as a central reference.

• With rubrics that describe what goal achievement looks like, instructors can more readily identify and assess the strength of connections between:

• Course assignments and course goals

• Course assignments and program goals

Two Common Types of Descriptive Rubrics

Holistic

• Each level of performance has just one comprehensive description.

• Descriptions may be organized in columns or rows.

• Useful for quick and general assessment and feedback.

Analytic

• Each level of performance has descriptions for each of the performance criteria.

• Descriptions are organized in a matrix.

• Useful for detailed assessment and feedback.

Example of a Holistic Rubric

| Performance Levels | Descriptions |
|---|---|
| Proficient (10 points) | Ideas are expressed clearly and succinctly. Arguments are developed logically and with sensitivity to audience and context. Original and interesting concepts and/or unique perspectives are introduced. |
| Intermediate (6 points) | Ideas are clearly expressed but not fully developed or supported by logic and may lack originality, interest, and/or consideration of alternative points of view. |
| Emerging (3 points) | Expression of ideas is either undeveloped or significantly hindered by errors in logic, grammatical and/or mechanical errors, and/or overreliance on jargon and/or idioms. |

Example of an Analytic Rubric

Delve, Mintz, and Stewart’s (1990) Service Learning Model

| Developmental Variables | Phase 1: Exploration | Phase 2: Clarification | Phase 3: Realization | Phase 4: Activation | Phase 5: Internalization |
|---|---|---|---|---|---|
| Intervention: Mode | Group | Group (beginning to identify with group) | Group that shares focus or independently | Group that shares focus or independently | Individual |
| Intervention: Setting | Minimal community interaction: Prefers on-campus activities | Trying many types of contact | Direct contact with community | Direct contact with community: intense focus on issue or cause | Frequent and committed involvement |
| Commitment: Frequency | One Time | Several Activities or Sites | Consistently at One Site | Consistently at One Site or with one issue | Consistently at One Site or focused on particular issues |
| Commitment: Duration | Short Term | Long Term Commitment to Group | Long Term Commitment to Activity, Site, or Issue | Lifelong Commitment to Issue (beginnings of Civic Responsibility) | Lifelong Commitment to Social Justice |
| Behavior: Needs | Participate in Incentive Activities | Identify with Group Camaraderie | Commit to Activity, Site, or Issue | Advocate for Issue(s) | Promote Values in self and others |
| Behavior: Outcomes | Feeling Good | Belonging to a Group | Understanding Activity, Site, or Issue | Changing Lifestyle | Living One’s Values |
| Balance: Challenges | Becoming Involved; Concern about new environments | Choosing from Multiple Opportunities/Group Process | Confronting Diversity and Breaking from Group | Questioning Authority/Adjusting to Peer Pressure | Living Consistently with Values |
| Balance: Supports | Activities are Nonthreatening and Structured | Group Setting, Identification and Activities are Structured | Reflective-Supervisors, Coordinators, Faculty, and Other Volunteers | Reflective-Partners, Clients, and Other Volunteers | Community: Have Achieved a Considerable Inner Support System |

Another Example of an Analytic Rubric

AAC&U Ethical Reasoning VALUE Rubric

| Criterion | Capstone 4 | Milestones 3 | Milestones 2 | Benchmark 1 |
|---|---|---|---|---|
| Ethical Self-Awareness | Student discusses in detail/analyzes both core beliefs and the origins of the core beliefs and discussion has greater depth and clarity. | Student discusses in detail/analyzes both core beliefs and the origins of the core beliefs. | Student states both core beliefs and the origins of the core beliefs. | Student states either their core beliefs or articulates the origins of the core beliefs but not both. |
| Understanding Different Ethical Perspectives/Concepts | Student names the theory or theories, can present the gist of said theory or theories, and accurately explains the details of the theory or theories used. | Student can name the major theory or theories she/he uses, can present the gist of said theory or theories, and attempts to explain the details of the theory or theories used, but has some inaccuracies. | Student can name the major theory she/he uses, and is only able to present the gist of the named theory. | Student only names the major theory she/he uses. |
| Ethical Issue Recognition | Student can recognize ethical issues when presented in a complex, multilayered (gray) context AND can recognize cross-relationships among the issues. | Student can recognize ethical issues when issues are presented in a complex, multilayered (gray) context OR can grasp cross-relationships among the issues. | Student can recognize basic and obvious ethical issues and grasp (incompletely) the complexities or interrelationships among the issues. | Student can recognize basic and obvious ethical issues but fails to grasp complexity or interrelationships. |
| Application of Ethical Perspectives/Concepts | Student can independently apply ethical perspectives/concepts to an ethical question, accurately, and is able to consider full implications of the application. | Student can independently apply ethical perspectives/concepts to an ethical question, accurately, but does not consider the specific implications of the application. | Student can apply ethical perspectives/concepts to an ethical question, independently (to a new example) and the application is inaccurate. | Student can apply ethical perspectives/concepts to an ethical question with support (using examples, in a class, in a group, or a fixed-choice setting) but is unable to apply ethical perspectives/concepts independently (to a new example). |
| Evaluation of Different Ethical Perspectives/Concepts | Student states a position and can state the objections to, assumptions and implications of and can reasonably defend against the objections to, assumptions and implications of different ethical perspectives/concepts, and the student's defense is adequate and effective. | Student states a position and can state the objections to, assumptions and implications of, and respond to the objections to, assumptions and implications of different ethical perspectives/concepts, but the student's response is inadequate. | Student states a position and can state the objections to, assumptions and implications of different ethical perspectives/concepts but does not respond to them (and ultimately objections, assumptions, and implications are compartmentalized by student and do not affect student's position). | Student states a position but cannot state the objections to and assumptions and limitations of the different perspectives/concepts. |

There are No Rules for Developing Rubrics

• Form typically follows function, so how one sets up a descriptive rubric is usually determined by how one plans to use it.

• Performance levels are usually column headings but can function just as well as row headings.

• Performance levels can be arranged in ascending or descending order, and one can include as many levels as one wants.

• Descriptions can focus only on positive manifestations or include references to missing or negative characteristics.

• Some use grid lines while others do not.

Descriptive rubrics can help pull together results from multiple measures for a more comprehensive picture of student learning.

Using the Model

• To pull together multiple measures for an overall assessment of student learning:

• Take a random sample from each assignment and re-score those using the rubric (instead of the grading criteria), or rate the students as a group based on overall performance on each assignment.

• Then, combine the results, weighting their relative importance based on:

• At what stage in the learning process the results were obtained

• How well you think students understood the assignment or testing process

• How closely the learning measured relates to the instructional objectives

• Factors that could have biased the results

• Your own observations, knowledge of the situations, and professional judgment
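One way to carry out the combining step is a weighted average of rubric levels, sketched below. The measure names, rubric levels, and weights are all hypothetical; in practice the weights would come from the instructor's judgment about the factors listed above, not from a formula.

```python
from typing import List, Tuple


def weighted_rubric_level(results: List[Tuple[str, float, float]]) -> float:
    """Combine rubric results from several measures into one weighted average.

    Each entry is (measure name, average rubric level, weight). The weight
    reflects how much the instructor trusts that measure as evidence.
    """
    total_weight = sum(weight for _, _, weight in results)
    if total_weight <= 0:
        raise ValueError("at least one measure needs a positive weight")
    return sum(level * weight for _, level, weight in results) / total_weight


# Hypothetical example: later, better-aligned measures are given more weight.
measures = [
    ("early quiz", 2.0, 1.0),
    ("midterm essay", 2.5, 2.0),
    ("final project", 3.0, 3.0),
]
overall = weighted_rubric_level(measures)
print(round(overall, 2))  # 2.67
```

The number that results is only a summary; the instructor's own observations and professional judgment still determine how it is interpreted.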

“Remember that when you do assessment, whether in the department, the general education program, or at the institutional level, you are not trying to achieve the perfect research design; you are trying to gather enough data to provide a reasonable basis for action. You are looking for something to work on.”

Walvoord, B. E. (2010). Assessment clear and simple: A practical guide for institutions, departments, and general education. San Francisco, CA: Jossey-Bass.