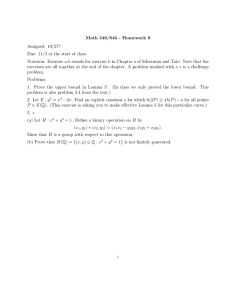

Class handout concerning Lemma 1.22 (a portion of Section 1.5)

OR-ST-MA 706

COMMENTS CONCERNING LEMMA 1.22

Lemma 1.22 Suppose that $x^0$ is a solution to Problem (P). Then $-\nabla f(x^0) \in S^*(X, x^0)$.

Many results concerning optimality conditions for nonlinear programming problems (e.g. Karush-Kuhn-Tucker conditions, Lagrange multipliers) are special cases of Lemma 1.22. For special problems, the form of $S^*(X, x^0)$ must be determined. This will be developed below.

Lemma 1.25 Suppose $g : E^n \to E^m$ is differentiable at $x^0$, suppose there exists $z \in E^n$ such that $\nabla g(x^0) z < 0$, and let $B = \{x : g(x) \le g(x^0)\}$. Then

$$S(B; x^0) = \{x \in E^n : \nabla g(x^0) x \le 0\},$$

and thus

$$S^*(B; x^0) = \{\nabla g(x^0)^T \lambda : \lambda \in E^m,\ \lambda \ge 0\}.$$

Proof:

Part 1. Let $z \in E^n$ be such that $\nabla g(x^0) z < 0$; then $\nabla g(x^0)(\delta z) < 0$ and $g(x^0 + \delta z) \le g(x^0)$ for all $\delta$ such that $0 < \delta \le \bar\delta$, for some $\bar\delta > 0$. Define $x^k = x^0 + \frac{\bar\delta}{k} z$; then $x^k \in B$ and $x^k \to x^0$ as $k \to \infty$. Let $\lambda_k = \frac{k}{\bar\delta}$; then $\lambda_k (x^k - x^0) = z$, thus $\lambda_k (x^k - x^0) \to z$. Then $z \in S(B, x^0)$ and

$$\{y : \nabla g(x^0) y < 0\} \subset S(B, x^0).$$

Now let $x$ be such that $\nabla g(x^0) x \le 0$. Then

$$\nabla g(x^0)\,[\theta z + (1-\theta) x] < 0 \quad \forall\, \theta \in (0,1) \;\Longrightarrow\; \theta z + (1-\theta) x \in S(B; x^0) \quad \forall\, \theta \in (0,1).$$

Letting $\theta \to 0$, and using the fact that $S(B; x^0)$ is closed, we have $x \in S(B; x^0)$. Thus far we have shown

$$\{x : \nabla g(x^0) x \le 0\} \subset S(B; x^0).$$

Part 2. Let $x \in S(B, x^0)$; then there exist $x^k \in B$ such that $x^k \to x^0$ and $\lambda_k \ge 0$ such that $\lambda_k (x^k - x^0) \to x$. Since $g$ is differentiable at $x^0$,

$$g(x^k) = g(x^0) + \nabla g(x^0)(x^k - x^0) + \|x^k - x^0\|\,\varepsilon(x^k; x^0),$$

where $\varepsilon(x^k; x^0) \to 0$ as $k \to \infty$. Since $x^k \in B$, $g(x^k) \le g(x^0)$, and

$$0 \ge \lim_{k \to \infty} \lambda_k \left[ g(x^k) - g(x^0) \right] = \lim_{k \to \infty} \nabla g(x^0)\, \lambda_k (x^k - x^0) = \nabla g(x^0) x.$$

Hence $S(B; x^0) \subset \{x : \nabla g(x^0) x \le 0\}$. QED

Remark: $\nabla g(x^0) z < 0$ says that the gradients $\nabla g_1(x^0), \dots, \nabla g_m(x^0)$ are in an open halfspace. In particular, this condition holds if the gradient vectors are linearly independent (i.e. if $\nabla g(x^0)$ has full rank). This follows from Lemma 1.19.

Consider Problem (P''):

$$\min_{x \in E^n} f(x) \quad \text{s.t.} \quad g_i(x) \le 0, \quad i = 1, \dots, m.$$

Notation:

$g = (g_1, \dots, g_m)^T$

$X = \{x : g(x) \le 0\}$

$I = \{i : g_i(x^0) = 0\}$

$\nabla g_I(x^0)$ is the $k \times n$ matrix whose rows are the gradients of the active constraints.

Lemma 1.22 If $x^0$ is a solution to Problem (P''), then $-\nabla f(x^0) \in S^*(X, x^0)$.

Now

$$S(X, x^0) = S(\{x : g_I(x) \le g_I(x^0) = 0\},\ x^0).$$

By Lemma 1.25, if there exists $y$ such that $\nabla g_I(x^0) y < 0$, then

$$S(X, x^0) = \{x : \nabla g_I(x^0) x \le 0\} \quad \text{and} \quad S^*(X, x^0) = \{\nabla g_I(x^0)^T \lambda : \lambda \ge 0\}.$$

So

$$-\nabla f(x^0) \in S^*(X, x^0) \;\Longrightarrow\; -\nabla f(x^0) = \nabla g_I(x^0)^T \hat\lambda, \quad \hat\lambda \ge 0,\ \hat\lambda \in E^k.$$

Define $\lambda_i = \hat\lambda_i$ for $i \in I$ and $\lambda_i = 0$ for $i \notin I$. Then

$$\nabla f(x^0) + \nabla g(x^0)^T \lambda = 0, \qquad \lambda_i g_i(x^0) = 0, \qquad \lambda \ge 0$$

(which are just the K-K-T conditions).

Remark: The multiplier corresponding to $\nabla f(x^0)$ is positive since we are assuming there exists $y$ such that $\nabla g_I(x^0) y < 0$. If no such $y$ exists, then we have only the following:

$$S(X, x^0) \subset \{x : \nabla g_I(x^0) x \le 0\} \quad \text{(see Part 2 of the proof of Lemma 1.25).}$$

This implies

$$\{x : \nabla g_I(x^0) x \le 0\}^* \subset S^*(X, x^0) \;\Longrightarrow\; \{\nabla g_I(x^0)^T \lambda : \lambda \ge 0\} \subset S^*(X, x^0).$$

If no such $y$ exists, then by Lemma 1.19 there exists a nonzero $\lambda \ge 0$ such that $\nabla g_I(x^0)^T \lambda = 0$, and one obtains the Fritz-John conditions (Theorem 1.20): there exist $\lambda_0 \ge 0$, $\lambda \ge 0$, $(\lambda_0, \lambda) \ne 0$, such that

$$\lambda_0 \nabla f(x^0) + \nabla g_I(x^0)^T \lambda = 0.$$

EQUALITY CONSTRAINTS

Consider the equality constrained problem:

$$\min_{x \in E^n} f(x) \quad \text{s.t.} \quad h_j(x) = 0, \quad j = 1, \dots, p,$$

where $f$ and the $h_j$'s are continuously differentiable, $h = (h_1, \dots, h_p)^T$, and $X = \{x \in E^n : h(x) = 0\}$.

Lemma 1.26 Let $h : E^n \to E^p$ be continuously differentiable at $x^0$, and let $C = \{x \in E^n : h(x) = h(x^0)\}$. If $\nabla h(x^0)$ has full rank, then

$$S(C; x^0) = \{x : \nabla h(x^0) x = 0\}$$

and hence

$$S^*(C; x^0) = \{\nabla h(x^0)^T \mu : \mu \in E^p\}.$$

Thus, Lemma 1.22 says $-\nabla f(x^0) \in S^*(X; x^0)$. This means that $-\nabla f(x^0) = \nabla h(x^0)^T \mu$ for some $\mu \in E^p$. In other words,

$$\nabla f(x^0) + \sum_{j=1}^{p} \mu_j \nabla h_j(x^0) = 0$$

(which is just Lagrange multipliers).

Consider the general nonlinear programming problem:

$$\min_{x \in E^n} f(x) \quad \text{s.t.} \quad g_i(x) \le 0, \quad i = 1, \dots, m; \qquad h_j(x) = 0, \quad j = 1, \dots, p,$$

where $f$, the $g_i$'s, and the $h_j$'s are continuously differentiable, and

$g = (g_1, \dots, g_m)^T$

$h = (h_1, \dots, h_p)^T$

$I = \{i : g_i(x^0) = 0\}$

$X = \{x \in E^n : g_i(x) \le 0,\ i = 1, \dots, m;\ h_j(x) = 0,\ j = 1, \dots, p\}$

Lemma 1.27 Let $v_1 : E^n \to E^m$ and $v_2 : E^n \to E^p$ be differentiable and continuously differentiable, respectively, at $x^0$. If $\nabla v_2(x^0)$ is of full rank, if there exists $y$ such that $\nabla v_1(x^0) y < 0$ and $\nabla v_2(x^0) y = 0$, and if $C = \{x : v_1(x) \le v_1(x^0),\ v_2(x) = v_2(x^0)\}$, then

$$S(C; x^0) = \{x \in E^n : \nabla v_1(x^0) x \le 0,\ \nabla v_2(x^0) x = 0\}$$

and thus

$$S^*(C; x^0) = \{\nabla v_1(x^0)^T \lambda + \nabla v_2(x^0)^T \mu : \lambda \ge 0\}.$$

Now Lemma 1.22 says that if $x^0$ solves Problem (P), then $-\nabla f(x^0) \in S^*(X; x^0)$. Note that

$$S(X, x^0) = S(\{x \in E^n : g_I(x) \le g_I(x^0) = 0,\ h(x) = h(x^0) = 0\},\ x^0).$$

By Lemma 1.27, if there exists $y \in E^n$ such that $\nabla g_I(x^0) y < 0$ and $\nabla h(x^0) y = 0$, and if $\nabla h(x^0)$ has full rank, then

$$S(X, x^0) = \{x : \nabla g_I(x^0) x \le 0,\ \nabla h(x^0) x = 0\} \quad \text{and} \quad S^*(X, x^0) = \{\nabla g_I(x^0)^T \lambda + \nabla h(x^0)^T \mu : \lambda \ge 0\}.$$

So $-\nabla f(x^0) \in S^*(X, x^0)$ implies that there exist $\hat\lambda \in E^k$, $\hat\lambda \ge 0$, and $\mu \in E^p$ such that

$$-\nabla f(x^0) = \nabla g_I(x^0)^T \hat\lambda + \nabla h(x^0)^T \mu.$$

Define $\lambda_i = \hat\lambda_i$ for $i \in I$ and $\lambda_i = 0$ for $i \notin I$; then you get the following conditions (K-K-T):

$$\nabla f(x^0) + \nabla g(x^0)^T \lambda + \nabla h(x^0)^T \mu = 0$$

$$\lambda_i g_i(x^0) = 0, \quad i = 1, \dots, m$$

$$\lambda \ge 0$$

Remark: The assumptions that there exists $y \in E^n$ such that $\nabla g_I(x^0) y < 0$ and $\nabla h(x^0) y = 0$, and that $\nabla h(x^0)$ has full rank, together comprise a constraint qualification. It holds if $\nabla g_i(x^0)$, $i \in I$, and $\nabla h_j(x^0)$, $j = 1, \dots, p$, are linearly independent.
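The K-K-T conditions for the general problem can be checked numerically at a candidate point. The following is a minimal sketch, assuming NumPy is available; the function name `kkt_multipliers`, its arguments, and the small test problem are illustrative choices and do not come from the handout. It forms the active set $I$, solves $-\nabla f(x^0) = \nabla g_I(x^0)^T \hat\lambda + \nabla h(x^0)^T \mu$ by least squares, pads $\lambda$ with zeros for inactive constraints, and tests only the sufficient constraint qualification from the final remark (linear independence of the active gradients).

```python
import numpy as np


def kkt_multipliers(grad_f, g_val, jac_g, jac_h=None, tol=1e-8):
    """Hypothetical helper: try to recover K-K-T multipliers at a candidate x0.

    grad_f : gradient of f at x0, shape (n,)
    g_val  : values g(x0), shape (m,)
    jac_g  : Jacobian of g at x0, shape (m, n)
    jac_h  : Jacobian of h at x0, shape (p, n), or None if there are no equalities
    """
    grad_f = np.asarray(grad_f, dtype=float)
    g_val = np.asarray(g_val, dtype=float)
    n, m = grad_f.size, g_val.size
    jac_g = np.asarray(jac_g, dtype=float).reshape(m, n)
    if jac_h is None:
        jac_h = np.zeros((0, n))
    jac_h = np.asarray(jac_h, dtype=float).reshape(-1, n)

    # Active set I = {i : g_i(x0) = 0}, up to a tolerance.
    active = np.flatnonzero(np.abs(g_val) <= tol)
    k = active.size

    # Rows: gradients of the active inequalities, then of the equalities.
    A = np.vstack([jac_g[active], jac_h])

    # Sufficient constraint qualification: the rows of A are linearly independent.
    independent = A.shape[0] == 0 or np.linalg.matrix_rank(A) == A.shape[0]

    # Solve A^T w = -grad_f in the least-squares sense, w = (lambda_hat, mu).
    if A.shape[0] > 0:
        w, *_ = np.linalg.lstsq(A.T, -grad_f, rcond=None)
        residual = np.linalg.norm(A.T @ w + grad_f)
    else:
        w = np.zeros(0)
        residual = np.linalg.norm(grad_f)

    # lambda_i = lambda_hat_i for i in I, and lambda_i = 0 otherwise.
    lam = np.zeros(m)
    lam[active] = w[:k]
    mu = w[k:]

    # Stationarity holds (small residual) and the inequality multipliers are nonnegative.
    satisfied = bool(residual <= 1e-6 and np.all(lam >= -tol))
    return lam, mu, satisfied, independent


# Illustrative problem (an assumption, not from the handout):
#   min (x1 - 2)^2 + (x2 - 1)^2
#   s.t. g1(x) = x1^2 - x2 <= 0,  g2(x) = x1 + x2 - 2 <= 0
x0 = np.array([1.0, 1.0])
grad_f = np.array([2 * (x0[0] - 2), 2 * (x0[1] - 1)])
g_val = np.array([x0[0] ** 2 - x0[1], x0[0] + x0[1] - 2])
jac_g = np.array([[2 * x0[0], -1.0], [1.0, 1.0]])

lam, mu, satisfied, independent = kkt_multipliers(grad_f, g_val, jac_g)
print(lam, satisfied, independent)  # lam is approximately [2/3, 2/3]
```

Both constraints are active at $x^0 = (1, 1)$ and their gradients are linearly independent, so the constraint qualification holds and the recovered multipliers are nonnegative. A failed check at some other point only says the K-K-T conditions do not hold there; it does not distinguish a non-optimal point from one where the constraint qualification fails.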