1.4 Inequality Constrained Optimization


Spring 2016 Version

A TEXT FOR NONLINEAR PROGRAMMING

Thomas W. Reiland

Statistics Department

North Carolina State University

Raleigh, NC 27695-8203

Office: (919) 515-1939

Email: reiland@ncsu.edu


Table of Contents

Chapter I: Optimality Conditions

§ 1.1 Differentiability

§ 1.2 Unconstrained Optimization

§ 1.3 Equality Constrained Optimization

§ 1.3.1 Interpretation of Lagrange Multipliers

§ 1.3.2 Second-order Conditions – Equality Constraints

§ 1.3.3 The General Case

§ 1.4 Inequality Constrained Optimization

§ 1.5 Constraint Qualifications (CQ)

§ 1.6 Second-order Optimality Conditions

§ 1.7 Constraint Qualifications and Relationships Among Constraint Qualifications

Chapter II: Convexity

§ 2.1 Convex Sets

§ 2.2 Convex Functions

§ 2.3 Subgradients and Differentiable Convex Functions

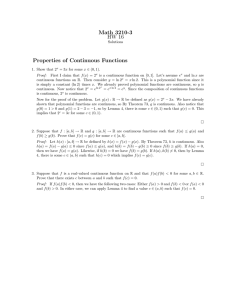

§ 1.4 Inequality Constrained Optimization

Generic Problem:

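Minimize $f(x)$, $x \in X \subset E^n$, where $f$ is continuously differentiable.

Definition 1.4.1 Let $X \subset E^n$ be nonempty and let $x^* \in \mathrm{cl}(X)$ (the closure of $X$). The set of feasible directions of $X$ at $x^*$, denoted by $D(x^*)$, is described by:

$$D(x^*) = \{ d \in E^n : x^* + \theta d \in X \ \ \forall\, \theta \in (0, \delta), \text{ for some } \delta > 0 \}$$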

At this point, the reader is encouraged to review the definitions (1.1.5 & 1.1.6) for local

minimum and global minimum points.

Consider $d \in E^n$ such that $\nabla f(x^*)^T d < 0$. At a local minimum $x^*$, these $d$'s must have an empty intersection with $D(x^*)$. Refer to the figure below.

Theorem 1.13 If $x^*$ is a local minimum of $f(x)$ subject to $x \in X$, then $F_0 \cap D(x^*) = \emptyset$, where $F_0 = \{ d \in E^n : \nabla f(x^*)^T d < 0 \}$.

Proof: Suppose there exists $d \in F_0 \cap D(x^*)$. Then there exists $\delta_1 > 0$ such that $f(x^* + \theta d) < f(x^*)$ for all $\theta \in (0, \delta_1)$. Also, there exists $\delta_2 > 0$ such that $x^* + \theta d \in X$ for all $\theta \in (0, \delta_2)$.

Let $\delta = \min(\delta_1, \delta_2)$. Then $x^* + \theta d \in X$ and $f(x^* + \theta d) < f(x^*)$ if $\theta \in (0, \delta)$.

This contradicts that $x^*$ is a local minimum. QED.

Consider Problem (P): Min $f(x)$, $x \in E^n$

s.t. $g_i(x) \ge 0, \ i = 1, \dots, m$

$h_j(x) = 0, \ j = 1, \dots, p$

where $f$, the $g_i$'s, and the $h_j$'s have continuous first partial derivatives.

Define $X = \{ x \in E^n : g_i(x) \ge 0, \ h_j(x) = 0, \ i = 1, \dots, m, \ j = 1, \dots, p \}$ as the feasible region.

Revised definitions for local/global minimum points:

Local Minimum: $x^* \in X$ is a local minimum of Problem (P) if there exists $\hat{\delta} > 0$ such that $f(x) \ge f(x^*)$ for all $x \in X \cap N_{\hat{\delta}}(x^*)$.

Global Minimum: $x^* \in X$ is a global minimum of Problem (P) if $f(x) \ge f(x^*)$ for all $x \in X$.

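Definition 1.4.2 A nonempty set $C \subset E^n$ is called a cone if $x \in C$ implies that $\alpha x \in C$ for all $\alpha \ge 0$.

If, in addition, $C$ is convex, then $C$ is called a convex cone.

Note: A set $S \subset E^n$ is convex if, for all $x_1, x_2 \in S$, $\alpha x_1 + (1 - \alpha) x_2 \in S$ for all $\alpha \in [0, 1]$.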

Example 1-26: Refer to the corresponding figures below.

(1) The set of $x = (x_1, x_2)^T \in E^2$ such that $x_1 > 0, \ x_2 > 0$, together with $x = (0, 0)^T$, is a convex cone (not closed).

(2) Any linear subspace $L$ of $E^n$ is a convex cone.

(3) Given a collection $x_1, \dots, x_N \in E^n$, all the nonnegative linear combinations $x = \alpha_1 x_1 + \alpha_2 x_2 + \dots + \alpha_N x_N$, $\alpha_k \ge 0$, form a convex cone.

e.g. (3a), (3b): cones generated by two vectors $x_1$ and $x_2$, as drawn in the figure.

(4) This is a cone but it is not convex.

[Figure: panels (1), (3a), (3b), and (4), each drawn in the $(x_1, x_2)$ plane.]

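Lemma 1.14 A cone $C \subset E^n$ is convex if and only if $x + y \in C$ for all $x, y \in C$.

Proof:

($\Rightarrow$) If $C$ is convex and $x, y \in C$, then $z = \tfrac{1}{2} x + \tfrac{1}{2} y \in C$, and since $C$ is a cone, $2z = x + y \in C$.

($\Leftarrow$) Suppose $x, y \in C$ and $0 \le \alpha \le 1$; then $(1 - \alpha) x, \ \alpha y \in C$, and $(1 - \alpha) x + \alpha y \in C$ since the sum of two vectors in $C$ is again in $C$.

$\therefore$ $C$ is convex.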
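The role of closure under addition can be checked on a small case. The sketch below uses a hypothetical cone that is not taken from the text (the union of the two nonnegative coordinate axes in $E^2$): it is closed under nonnegative scaling, yet the sum of two of its members leaves the set, so by the lemma it cannot be convex.

```python
def in_C(x):
    """Hypothetical cone: union of the two nonnegative coordinate axes in E^2."""
    return (x[0] >= 0 and x[1] == 0) or (x[0] == 0 and x[1] >= 0)

x, y = (1.0, 0.0), (0.0, 1.0)

# Cone property: every nonnegative multiple of a member stays in C.
scaled_ok = all(
    in_C((a * x[0], a * x[1])) and in_C((a * y[0], a * y[1]))
    for a in (0.0, 0.5, 2.0, 10.0)
)

# Lemma 1.14 test: is the sum x + y still in C?
s = (x[0] + y[0], x[1] + y[1])

# Direct convexity test on the midpoint, for comparison.
mid = (0.5 * x[0] + 0.5 * y[0], 0.5 * x[1] + 0.5 * y[1])

print(scaled_ok, in_C(s), in_C(mid))
```

Both the sum and the midpoint fall outside the set, which agrees with the equivalence stated in the lemma.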

Remark: Every point $x$ in a neighborhood of $x^*$ can be written as $x = x^* + z$, where $z \ne 0$ if and only if $x \ne x^*$ (since $z = x - x^*$).

Definition 1.4.3 The cone of feasible directions at $x^*$, denoted $D(x^*)$, is as follows:

$$D(x^*) = \{ z \in E^n : x^* + \theta z \in X, \ 0 \le \theta \le \delta, \text{ for some } \delta > 0 \}$$

$D(x^*)$ is in fact a cone, though not necessarily a convex cone, and it is important in many algorithms. For now, it holds our interest because if $x^*$ is a local minimum and $z \in D(x^*)$, then $f(x^* + \theta z) \ge f(x^*)$ for sufficiently small $\theta$. Our goal is to characterize $D(x^*)$ in terms of the constraint functions $g_i$ and $h_j$.

Definition 1.4.4 $Z^1(x^*)$ is the linearizing cone of the feasible region evaluated at $x^*$:

$$Z^1(x^*) = \{ z \in E^n : z^T \nabla g_i(x^*) \ge 0, \ i \in I(x^*), \ \ z^T \nabla h_j(x^*) = 0, \ j = 1, \dots, p \}$$

where $I(x^*)$ is the index set of the active inequality constraints:

$$I(x^*) = \{ i : g_i(x^*) = 0 \}$$

Define $Z^2(x^*) = \{ z \in E^n : z^T \nabla f(x^*) < 0 \}$.

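Remark: $Z^1(x^*)$ is a closed convex cone. Suppose $z_1, z_2 \in Z^1(x^*)$. Then $(z_1 + z_2)^T \nabla g_i(x^*) = z_1^T \nabla g_i(x^*) + z_2^T \nabla g_i(x^*) \ge 0, \ i \in I(x^*)$, and $(z_1 + z_2)^T \nabla h_j(x^*) = 0, \ j = 1, \dots, p$.

Lemma 1.15 $D(x^*) \subseteq Z^1(x^*)$

Proof: Suppose $z^T \nabla g_k(x^*) < 0$ for some $k \in I(x^*)$, where $z \in D(x^*)$; in other words, suppose there exists a $z \in D(x^*)$ with $z \notin Z^1(x^*)$.

Then $g_k(x^* + \theta z) = g_k(x^*) + \theta z^T \nabla g_k(x^*) + \theta \|z\| \, \varepsilon(x^* + \theta z; x^*)$, where $\varepsilon(x^* + \theta z; x^*) \to 0$ as $\theta \to 0$. If $\theta$ is small enough, then $z^T \nabla g_k(x^*) + \|z\| \, \varepsilon(x^* + \theta z; x^*) < 0$.

Since $g_k(x^*) = 0$, we have $g_k(x^* + \theta z) < 0$, which contradicts $z \in D(x^*)$.

Therefore, $z^T \nabla g_i(x^*) \ge 0, \ i \in I(x^*)$.

Similarly, we can show $z^T \nabla h_j(x^*) = 0, \ j = 1, \dots, p$, for all $z \in D(x^*)$.

Remark: Since $Z^1(x^*)$ is closed, in fact we have $\mathrm{cl}\, D(x^*) \subseteq Z^1(x^*)$. QED.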

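Lemma 1.16 If $z \in Z^2(x^*)$, then there exists a point $x = x^* + \theta z$ sufficiently close to $x^*$ such that $f(x) < f(x^*)$.

Proof:

$$z^T \nabla f(x^*) = \lim_{\theta \to 0^+} \frac{f(x^* + \theta z) - f(x^*)}{\theta} < 0$$

$\Rightarrow f(x^* + \theta z) - f(x^*) < 0$ for $\theta > 0$ sufficiently small. QED.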
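The descent property in Lemma 1.16 can be observed numerically. The following is a minimal sketch under stated assumptions: the objective `f`, its gradient `grad_f`, the base point, and the direction `z` are all hypothetical choices made for illustration and do not come from the text.

```python
# Hypothetical smooth objective (not from the text) and its gradient.
def f(x):
    return (x[0] - 1.0) ** 2 + 2.0 * (x[1] + 0.5) ** 2

def grad_f(x):
    return (2.0 * (x[0] - 1.0), 4.0 * (x[1] + 0.5))

def step(x, z, theta):
    """Return the point x + theta * z."""
    return (x[0] + theta * z[0], x[1] + theta * z[1])

x_star = (0.0, 0.0)   # arbitrary base point playing the role of x*
z = (1.0, -1.0)       # candidate direction

g = grad_f(x_star)
slope = z[0] * g[0] + z[1] * g[1]   # z^T grad f(x*); negative means z is in Z^2(x*)

# Change in f along z for a few shrinking step sizes theta.
changes = [f(step(x_star, z, t)) - f(x_star) for t in (1e-1, 1e-2, 1e-3)]

print(slope, changes)
```

Here the directional derivative is negative and every sampled step lowers the objective, as the lemma predicts for small enough $\theta$.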

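Example 1-27: Min $f(x) = -x_1$, $x \in E^2$

s.t. $g_1(x) = (1 - x_1)^3 - x_2 \ge 0$ (1)

$g_2(x) = x_1 \ge 0$ (2)

$g_3(x) = x_2 \ge 0$ (3)

$x^* = (1, 0)^T$ is feasible with $I(x^*) = \{1, 3\}$.

$$\nabla f(x^*) = \begin{pmatrix} -1 \\ 0 \end{pmatrix}, \qquad \nabla g_1(x^*) = \begin{pmatrix} 0 \\ -1 \end{pmatrix}, \qquad \nabla g_3(x^*) = \begin{pmatrix} 0 \\ 1 \end{pmatrix}$$

$Z^1(x^*) = \{ z \in E^2 : z^T \nabla g_i(x^*) \ge 0, \ i = 1, 3 \} = \{ z \in E^2 : -z_2 \ge 0, \ z_2 \ge 0 \} = \{ z \in E^2 : z_2 = 0 \}$

$Z^2(x^*) = \{ z \in E^2 : z^T \nabla f(x^*) < 0 \} = \{ z \in E^2 : -z_1 < 0 \} = \{ z \in E^2 : z_1 > 0 \}$

$D(x^*) = \{ z \in E^2 : x^* + \theta z \in X, \ 0 \le \theta \le \delta, \text{ for some } \delta > 0 \}$

Substituting $x^* + \theta z = (1 + \theta z_1, \ \theta z_2)^T$ into the constraints gives

(1) $(-\theta z_1)^3 - \theta z_2 \ge 0$

(2) $1 + \theta z_1 \ge 0$

(3) $\theta z_2 \ge 0$

(3) $\Rightarrow z_2 \ge 0$

(1) and (3) $\Rightarrow (-\theta z_1)^3 \ge 0 \Rightarrow z_1 \le 0$

Evaluating the multiple cases for $z_1, z_2$ leads to the final result below, which is easily verified graphically using the figure above. The reader is encouraged to verify the result analytically as an exercise.

$$D(x^*) = \{ z \in E^2 : z_1 \le 0, \ z_2 = 0 \}$$

Note that $D(x^*) \subset Z^1(x^*)$.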
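The gap between $D(x^*)$ and $Z^1(x^*)$ in Example 1-27 can be probed numerically. The sketch below assumes the problem data read as $g_1(x) = (1 - x_1)^3 - x_2 \ge 0$, $g_2(x) = x_1 \ge 0$, $g_3(x) = x_2 \ge 0$ with $x^* = (1, 0)^T$. The helper names and the sampled step sizes are illustrative choices, and the sampled test for $D(x^*)$ is only a heuristic check, not a proof of membership.

```python
X_STAR = (1.0, 0.0)
ACTIVE_GRADS = ((0.0, -1.0), (0.0, 1.0))   # grad g1(x*), grad g3(x*)

def feasible(x):
    """Feasibility for Example 1-27 (constraints written as g_i(x) >= 0)."""
    g1 = (1.0 - x[0]) ** 3 - x[1]
    return g1 >= 0.0 and x[0] >= 0.0 and x[1] >= 0.0

def in_Z1(z):
    """Linearizing cone: z^T grad g_i(x*) >= 0 for the active constraints."""
    return all(z[0] * g[0] + z[1] * g[1] >= 0.0 for g in ACTIVE_GRADS)

def in_D_sampled(z, thetas=(1e-1, 1e-2, 1e-3, 1e-4)):
    """Heuristic: x* + theta z must be feasible at every sampled step size."""
    return all(
        feasible((X_STAR[0] + t * z[0], X_STAR[1] + t * z[1])) for t in thetas
    )

for z in [(1.0, 0.0), (-1.0, 0.0), (-1.0, 1.0)]:
    print(z, "in Z1:", in_Z1(z), "| in D (sampled):", in_D_sampled(z))
```

The direction $z = (1, 0)^T$ passes the linearized test but immediately violates constraint (1), so it lies in $Z^1(x^*)$ and not in $D(x^*)$, while $z = (-1, 0)^T$ lies in both.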

Lemma 1.17 Farkas' Lemma: Let $A$ be an $m \times n$ matrix; let $c \in E^n$. Then exactly one of the following two systems has a solution.

System 1: $Ax \ge 0$ and $c^T x < 0$ for some $x \in E^n$

System 2: $A^T y = c, \ y \ge 0$ for some $y \in E^m$

Remark: The following is an equivalent statement:

$c^T x \ge 0$ for all $x$ satisfying $Ax \ge 0$ if and only if there exists $y \ge 0$, $y \in E^m$, such that $A^T y = c$.

Example 1-28: Geometric Interpretation of Lemma 1.17

Let m = 4, n = 2. Define ai = rows of A, i = 1,…,4

System 2 has a solution if c lies in the convex cone generated by the rows of A.

System 1 has a solution if the closed convex cone $\{ x : Ax \ge 0 \}$ has a nonempty intersection with the open half-space $\{ x : c^T x < 0 \}$.
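The two alternatives can be explored for the case $m = 4$, $n = 2$ with a short script. This is a sketch under stated assumptions: the rows of $A$ and the vectors $c$ are hypothetical, the System 2 test relies on the fact that in $E^2$ a point of a finitely generated cone is a nonnegative combination of at most two generators, and the System 1 search is a coarse scan over unit directions (a heuristic that could miss very thin cones).

```python
import math

def in_cone_2d(rows, c, tol=1e-9):
    """System 2 for n = 2: is c = A^T y for some y >= 0?"""
    if abs(c[0]) <= tol and abs(c[1]) <= tol:
        return True                       # y = 0 works
    for i, a in enumerate(rows):
        for b in rows[i + 1:]:
            det = a[0] * b[1] - a[1] * b[0]
            if abs(det) <= tol:
                continue                  # parallel pair, covered by the loop below
            # Solve ya * a + yb * b = c by Cramer's rule.
            ya = (c[0] * b[1] - c[1] * b[0]) / det
            yb = (a[0] * c[1] - a[1] * c[0]) / det
            if ya >= -tol and yb >= -tol:
                return True
    for a in rows:                        # c a nonnegative multiple of one row
        cross = a[0] * c[1] - a[1] * c[0]
        dot = a[0] * c[0] + a[1] * c[1]
        if abs(cross) <= tol and dot > 0:
            return True
    return False

def system1_witness(rows, c, steps=3600, tol=1e-9, margin=1e-6):
    """Heuristic scan for a unit vector x with Ax >= 0 and c^T x < 0."""
    for k in range(steps):
        t = 2.0 * math.pi * k / steps
        x = (math.cos(t), math.sin(t))
        if all(a[0] * x[0] + a[1] * x[1] >= -tol for a in rows) \
                and c[0] * x[0] + c[1] * x[1] < -margin:
            return x
    return None

A_rows = [(1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (2.0, 1.0)]   # hypothetical rows a_i
for c in [(1.0, 2.0), (-1.0, 0.0)]:
    print(c, "System 2:", in_cone_2d(A_rows, c),
          "| System 1 witness:", system1_witness(A_rows, c))
```

For the first choice of $c$ only System 2 is solvable, and for the second only System 1, in line with the "exactly one" claim of the lemma.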

Definition 1.4.5 Define the Lagrangian associated with Problem (P) as

$$L(x, \lambda, \mu) = f(x) - \sum_{i=1}^{m} \lambda_i g_i(x) - \sum_{j=1}^{p} \mu_j h_j(x)$$

Theorem 1.18 Suppose that $x^* \in X$. Then $Z^1(x^*) \cap Z^2(x^*) = \emptyset$ if and only if there exist $\lambda \in E^m$, $\mu \in E^p$ such that:

(i) $\nabla_x L(x^*, \lambda, \mu) = \nabla f(x^*) - \sum_{i=1}^{m} \lambda_i \nabla g_i(x^*) - \sum_{j=1}^{p} \mu_j \nabla h_j(x^*) = 0$

(ii) $\lambda_i g_i(x^*) = 0, \ i = 1, \dots, m$ (Complementary slackness)

(iii) $\lambda \ge 0$

These conditions are natural candidates to become the desired extension of the necessary conditions for equality constraints. They can become necessary conditions for Problem (P) if we can guarantee that $Z^1(x^*) \cap Z^2(x^*) = \emptyset$ at $x^*$, a local solution to (P). But $Z^1(x^*) \cap Z^2(x^*) = \emptyset$ is a geometric optimality condition; we would prefer algebraic optimality conditions.

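Proof:

$Z^1(x^*) \ne \emptyset$ since $0 \in Z^1(x^*)$.

$Z^1(x^*) \cap Z^2(x^*) = \emptyset$ if and only if for every $z$ satisfying

(1) $z^T \nabla g_i(x^*) \ge 0, \ i \in I(x^*)$

(2) $z^T \nabla h_j(x^*) = 0, \ j = 1, \dots, p$

we have

(3) $z^T \nabla f(x^*) \ge 0$

(2) is equivalent to

(4) $z^T \nabla h_j(x^*) \ge 0, \ j = 1, \dots, p$

(5) $-z^T \nabla h_j(x^*) \ge 0, \ j = 1, \dots, p$

From Lemma 1.17, (3) holds for all $z$ satisfying (1), (4), (5) if and only if there exist $\lambda \ge 0, \ \mu^1 \ge 0, \ \mu^2 \ge 0$ such that

$$\nabla f(x^*) = \sum_{i \in I(x^*)} \lambda_i \nabla g_i(x^*) + \sum_{j=1}^{p} (\mu_j^1 - \mu_j^2) \nabla h_j(x^*)$$

Here $\nabla g_i(x^*)^T, \ \nabla h_j(x^*)^T, \ -\nabla h_j(x^*)^T$ are the rows of $A$ and $\nabla f(x^*) = c$ in reference to Lemma 1.17.

Let $\lambda_i = 0$ for $i \notin I(x^*)$ and $\mu = \mu^1 - \mu^2$, and conclude that $Z^1(x^*) \cap Z^2(x^*) = \emptyset$ if and only if (i) – (iii) hold. QED.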

Example 1-27 Continued: We found $Z^1(x^*) = \{ z \in E^2 : z_2 = 0 \}$ and $Z^2(x^*) = \{ z \in E^2 : z_1 > 0 \}$.

$Z^1(x^*) \cap Z^2(x^*) \ne \emptyset$, e.g. $z = (3, 0)^T \in Z^1(x^*) \cap Z^2(x^*)$.

So there are no $\lambda$ that satisfy (i) – (iii) even though $x^* = (1, 0)^T$ is the optimal solution.

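Example 1-28: Min $f(x) = (x_1 - 3)^2 + (x_2 - 2)^2$

s.t. $g_1(x) = -x_1^2 - x_2^2 + 5 \ge 0$

$g_2(x) = -x_1 - x_2 + 3 \ge 0$

$g_3(x) = x_1 \ge 0$

$g_4(x) = x_2 \ge 0$

Let $x^* = (9/5, \ 6/5)^T$. Then $I(x^*) = \{2\}$, $\nabla g_2(x^*) = (-1, -1)^T$, and $\nabla f(x^*) = (-12/5, \ -8/5)^T$.

$Z^1(x^*) = \{ z \in E^2 : z^T \nabla g_2(x^*) \ge 0 \} = \{ z \in E^2 : -z_1 - z_2 \ge 0 \} = \{ z \in E^2 : z_1 + z_2 \le 0 \}$

$Z^2(x^*) = \{ z \in E^2 : z^T \nabla f(x^*) < 0 \} = \{ z \in E^2 : -\tfrac{12}{5} z_1 - \tfrac{8}{5} z_2 < 0 \}$

$Z^1(x^*) \cap Z^2(x^*) \ne \emptyset$, e.g. $(5, -5)^T \in Z^1(x^*) \cap Z^2(x^*)$.

No $\lambda$ exist that satisfy (i) – (iii) at $x^*$.

Let $x^* = (2, 1)^T$; $I(x^*) = \{1, 2\}$.

$\nabla g_1(x^*) = (-4, -2)^T, \qquad \nabla g_2(x^*) = (-1, -1)^T, \qquad \nabla f(x^*) = (-2, -2)^T$

$Z^1(x^*) = \{ z \in E^2 : z^T \nabla g_i(x^*) \ge 0, \ i = 1, 2 \} = \{ z \in E^2 : -4 z_1 - 2 z_2 \ge 0, \ -z_1 - z_2 \ge 0 \} = \{ z \in E^2 : z_2 \le -2 z_1, \ z_2 \le -z_1 \}$

$Z^2(x^*) = \{ z \in E^2 : z^T \nabla f(x^*) < 0 \} = \{ z \in E^2 : -2 z_1 - 2 z_2 < 0 \} = \{ z \in E^2 : z_2 > -z_1 \}$

The graph below illustrates this scenario (not including the line $z_2 = -z_1$).

$Z^1(x^*) \cap Z^2(x^*) = \emptyset$; so there exist $\lambda \in E^4$ such that (i) – (iii) are satisfied.

In the graph below, note that $(2, 1)^T$ is optimal and that $\lambda_1 = \lambda_3 = \lambda_4 = 0$ and $\lambda_2 = 2$.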
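Conditions (i) – (iii) for this example can be checked mechanically. The sketch below assumes the problem data read as $f(x) = (x_1 - 3)^2 + (x_2 - 2)^2$ with $g_1(x) = 5 - x_1^2 - x_2^2$, $g_2(x) = 3 - x_1 - x_2$, $g_3(x) = x_1$, $g_4(x) = x_2$, all required to be $\ge 0$, which is consistent with the gradients quoted in the example. The function names are illustrative only.

```python
def grad_f(x):
    return (2.0 * (x[0] - 3.0), 2.0 * (x[1] - 2.0))

def g(x):
    """Constraint values, each required to be >= 0."""
    return (5.0 - x[0] ** 2 - x[1] ** 2, 3.0 - x[0] - x[1], x[0], x[1])

def grad_g(x):
    return ((-2.0 * x[0], -2.0 * x[1]), (-1.0, -1.0), (1.0, 0.0), (0.0, 1.0))

def kkt_holds(x, lam, tol=1e-9):
    gf, gv, gg = grad_f(x), g(x), grad_g(x)
    # (i) stationarity: grad f(x) - sum_i lam_i grad g_i(x) = 0
    residual = [gf[k] - sum(l * d[k] for l, d in zip(lam, gg)) for k in range(2)]
    stationarity = all(abs(r) <= tol for r in residual)
    # (ii) complementary slackness: lam_i g_i(x) = 0
    slackness = all(abs(l * v) <= tol for l, v in zip(lam, gv))
    # (iii) nonnegative multipliers
    nonneg = all(l >= -tol for l in lam)
    return stationarity and slackness and nonneg

# At (2, 1) the multipliers quoted in the example satisfy (i)-(iii).
print(kkt_holds((2.0, 1.0), (0.0, 2.0, 0.0, 0.0)))

# At (9/5, 6/5) only g2 is active, so only lam_2 may be nonzero; matching the
# first gradient component needs lam_2 = 12/5, the second needs lam_2 = 8/5.
print(kkt_holds((1.8, 1.2), (0.0, 2.4, 0.0, 0.0)),
      kkt_holds((1.8, 1.2), (0.0, 1.6, 0.0, 0.0)))
```

The second check makes the failure at $(9/5, 6/5)^T$ concrete: no single value of $\lambda_2$ can match both components of $\nabla f(x^*)$.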

Recall that we've said (i) – (iii) can become necessary conditions for a local optimal point to Problem (P) if we can guarantee $Z^1(x^*) \cap Z^2(x^*) = \emptyset$ at the local optimal point $x^*$. It is possible to derive "weak" necessary conditions for optimality without requiring $Z^1(x^*) \cap Z^2(x^*) = \emptyset$ at the solution by introducing a multiplier for the objective function.

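Definition 1.4.6 Define the Weak Lagrangian $\tilde{L}$ associated with Problem (P) as the following:

$$\tilde{L}(x, \lambda, \mu) = \lambda_0 f(x) - \sum_{i=1}^{m} \lambda_i g_i(x) - \sum_{j=1}^{p} \mu_j h_j(x), \qquad \tilde{L} : E^n \times E^{m+1} \times E^p \to E$$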

Lemma 1.19 Theorem of the Alternative:

Let $A$ be an $m \times n$ matrix. Then either there exists $x \in E^n$ such that

(1) $Ax < 0$

or there exists $u \in E^m$, where $u \ge 0$ and $u \ne 0$, such that

(2) $u^T A = 0$

but never both.

Example 1-29: The figure below illustrates Lemma 1.19, depicting $a_i$ as the rows of the matrix $A$ with $m = 3$ and $n = 2$.

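Proof of Lemma 1.19:

Suppose there exist $x$ and $u$ such that (1) and (2) are satisfied. Since $u \ge 0$, $u \ne 0$, and $Ax < 0$, we get $u^T A x < 0$, while (2) gives $u^T A x = 0$, which is a contradiction.

Suppose now there does not exist $x$ satisfying (1). This means that for any $x \in E^n$ we cannot find a negative number $w$ satisfying the following:

$$\sum_{j=1}^{n} a_{ij} x_j \le w, \quad i = 1, \dots, m$$

Letting $z = (w, x^T)^T$, $c = (1, 0^T)^T$ where $0 \in E^n$, and $\tilde{A} = [\, e \ \ {-A} \,]$ of size $m \times (n+1)$ with $e = (1, \dots, 1)^T \in E^m$, the system $\tilde{A} z \ge 0$, $c^T z < 0$ has no solution. Invoking Farkas' Lemma (1.17), we conclude that there exists $u \in E^m$, $u \ge 0$, such that

$$\sum_{i=1}^{m} u_i = 1, \qquad \sum_{i=1}^{m} u_i a_{ij} = 0, \quad j = 1, \dots, n$$

Therefore, $u$ solves (2). QED.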

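Theorem 1.20 Fritz-John Conditions

Suppose that $f$, $g_i$, $i = 1, \dots, m$, and $h_j$, $j = 1, \dots, p$, have continuous first partial derivatives on an open set containing $X$. If $x^*$ is a solution to Problem (P), then there exist $\lambda \in E^{m+1}$ and $\mu \in E^p$ such that the following hold:

(iv) $\nabla_x \tilde{L}(x^*, \lambda, \mu) = \lambda_0 \nabla f(x^*) - \sum_{i=1}^{m} \lambda_i \nabla g_i(x^*) - \sum_{j=1}^{p} \mu_j \nabla h_j(x^*) = 0$

(v) $\lambda_i g_i(x^*) = 0, \ i = 1, \dots, m$

(vi) $(\lambda, \mu) \ne 0, \ \lambda \ge 0$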

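Proof: (only for $\ge$ constraints; the proof for equality constraints is similar)

Modified Problem: $\min f(x)$, $x \in E^n$

s.t. $g_i(x) \ge 0, \ i = 1, \dots, m$

We must prove the existence of a $\lambda \in E^{m+1}$ satisfying the following three conditions:

(iv)' $\lambda_0 \nabla f(x^*) - \sum_{i=1}^{m} \lambda_i \nabla g_i(x^*) = 0$

(v)' $\lambda_i g_i(x^*) = 0, \ i = 1, \dots, m$

(vi)' $\lambda \ge 0, \ \lambda \ne 0$

Case 1: If $g_i(x^*) > 0$ for all $i$, then $I(x^*) = \emptyset$. Choose $\lambda_0 = 1, \ \lambda_1 = \lambda_2 = \dots = \lambda_m = 0$. Since $x^*$ is then an interior local minimum, $\nabla f(x^*) = 0$, and (iv)' – (vi)' hold.

Case 2: Suppose $I(x^*) \ne \emptyset$. Then for every $z \in E^n$ satisfying

(1) $z^T \nabla g_i(x^*) > 0, \ i \in I(x^*)$

we cannot also have

(2) $z^T \nabla f(x^*) < 0$

This is true since if there exists $z$ satisfying (1) and (2), then by (1) there exists $\delta_1 > 0$ such that if $0 < \theta \le \delta_1$, $x = x^* + \theta z$ satisfies $g_i(x) \ge 0, \ i = 1, \dots, m$ (i.e. $x$ is feasible). Also, since (2) holds, there exists $\delta_2 > 0$ such that $f(x) < f(x^*)$ for $x = x^* + \theta z$, $0 < \theta \le \delta_2$.

Let $\delta = \min\{\delta_1, \delta_2\}$; then for $x = x^* + \theta z$, $\theta \in (0, \delta)$, we have $f(x) < f(x^*)$ and $g_i(x) \ge 0$, contradicting that $x^*$ is a local minimum. Thus the system (1) and (2) has no solution.

Let

$$A = \begin{pmatrix} \nabla f(x^*)^T \\ -\nabla g_i(x^*)^T, \ i \in I(x^*) \end{pmatrix}$$

so that (1) and (2) together read $Az < 0$. By Lemma 1.19 there exist $\lambda_0$ and $\lambda_i$, $i \in I(x^*)$, all nonnegative and not all zero, such that

$$\lambda_0 \nabla f(x^*) - \sum_{i \in I(x^*)} \lambda_i \nabla g_i(x^*) = 0.$$

Letting $\lambda_i = 0$ for $i \notin I(x^*)$, we get $\lambda_0 \nabla f(x^*) - \sum_{i=1}^{m} \lambda_i \nabla g_i(x^*) = 0$ and $\lambda_i g_i(x^*) = 0, \ i = 1, \dots, m$. QED.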

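Example 1-30: Min $f(x) = -x_1$

s.t. $g_1(x) = (1 - x_1)^3 - x_2 \ge 0$

$g_2(x) = x_1 \ge 0$

$g_3(x) = x_2 \ge 0$

At $x^* = (1, 0)^T$, $\lambda_0 = 0, \ \lambda_1 = 1, \ \lambda_2 = 0, \ \lambda_3 = 1$ satisfies the Fritz-John conditions, where

$$\nabla f(x^*) = \begin{pmatrix} -1 \\ 0 \end{pmatrix}, \qquad \nabla g_1(x^*) = \begin{pmatrix} 0 \\ -1 \end{pmatrix}, \qquad \nabla g_3(x^*) = \begin{pmatrix} 0 \\ 1 \end{pmatrix}$$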

Example 1-30 illustrates the weakness of the Fritz-John conditions. With $\lambda_0 = 0$, conditions (iv) – (vi) are satisfied at $(1, 0)$ for any differentiable objective function, whether it has a local minimum at that point or not.
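The insensitivity to the objective can be seen by evaluating the left-hand side of condition (iv) for several objective gradients. This sketch assumes the active-constraint gradients at $(1, 0)$ are $\nabla g_1 = (0, -1)^T$ and $\nabla g_3 = (0, 1)^T$, as in the example. The three trial gradients are hypothetical stand-ins for "any differentiable objective".

```python
GRAD_G1 = (0.0, -1.0)   # gradient of g1 at (1, 0)
GRAD_G3 = (0.0, 1.0)    # gradient of g3 at (1, 0)
LAM0, LAM1, LAM3 = 0.0, 1.0, 1.0   # Fritz-John multipliers (lambda_2 = 0)

def fj_residual(grad_f):
    """lambda_0 grad f - lambda_1 grad g1 - lambda_3 grad g3 at (1, 0)."""
    return tuple(
        LAM0 * grad_f[k] - LAM1 * GRAD_G1[k] - LAM3 * GRAD_G3[k]
        for k in range(2)
    )

# Hypothetical objective gradients at (1, 0); only the first is from the example.
trial_gradients = [(-1.0, 0.0), (5.0, -7.0), (0.0, 3.0)]
residuals = [fj_residual(gf) for gf in trial_gradients]
print(residuals)
```

Every residual vanishes because $\lambda_0 = 0$ removes $\nabla f$ from the equation, so the conditions carry no information about the objective at this point.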

Remark: Problem (P) includes both inequality ($\ge$) and equality ($=$) constraints because, although equality-inequality problems can be converted to problems having only one type of constraint, this increases the number of variables or the number of constraints (which can weaken the results).

$g(x) \ge 0 \iff g(x) - y^2 = 0$

$h(x) = 0 \iff h(x) \ge 0, \ -h(x) \ge 0$

Suppose we rewrite each equality ($=$) constraint in Problem (P) as

$h_j(x) = g_{m+j}(x) \ge 0, \ j = 1, \dots, p$

$-h_j(x) = g_{m+p+j}(x) \ge 0, \ j = 1, \dots, p$

and choose $\lambda_0 = \lambda_1 = \dots = \lambda_m = 0$ and $\lambda_{m+1} = \lambda_{m+2} = \dots = \lambda_{m+2p} = 1$. Then (iv) – (vi) are satisfied for every feasible $x$.
