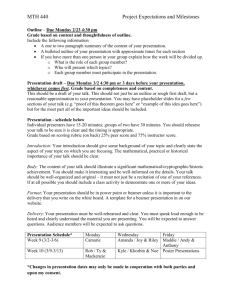

L22-sb-pipelineII-ca..


inst.eecs.berkeley.edu/~cs61c
CS61C : Machine Structures
Lecture #22 CPU Design: Pipelining to Improve Performance II
2007-8-1
Scott Beamer, Instructor
CS61C L22 CPU Design : Pipelining to Improve Performance II (1) Beamer, Summer 2007 © UCB

Review: Processor Pipelining (1/2)
• “Pipeline registers” are added to the datapath/controller to neatly divide the single cycle processor into “pipeline stages”.
• Optimal Pipeline
  • Each stage is executing part of an instruction each clock cycle.
  • One inst. finishes during each clock cycle.
  • On average, execute far more quickly.
• What makes this work well?
  • Similarities between instructions allow us to use same stages for all instructions (generally).
  • Each stage takes about the same amount of time as all others: little wasted time.

Review: Pipeline (2/2)
• Pipelining is a BIG IDEA
  • widely used concept
• What makes it less than perfect?
  • Structural hazards: Conflicts for resources. Suppose we had only one cache? Need more HW resources
  • Control hazards: Branch instructions affect which instructions come next. Delayed branch
  • Data hazards: Data flow between instructions. Forwarding

Graphical Pipeline Representation
(In Reg, right half highlight read, left half write)
[Figure: pipeline diagram, time (clock cycles) across, instruction order down: Load, Add, Store, Sub, Or; each instruction passes through I$, Reg, ALU, D$, Reg]

Control Hazard: Branching (1/8)
[Figure: pipeline diagram, time (clock cycles) across, instruction order down: beq, Instr 1, Instr 2, Instr 3, Instr 4; each instruction passes through I$, Reg, ALU, D$, Reg]
Where do we do the compare for the branch?
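The pipeline diagrams above can be reproduced as a small text table, which also makes it easy to see what is in flight while a branch is being compared. This is a minimal sketch, assuming an ideal five-stage pipeline with no stalls and the stage labels used in the figures; `pipeline_table` and `in_flight` are illustrative names, not part of the lecture.

```python
# Stage labels as drawn in the slides: fetch, decode, execute, memory, write-back.
STAGES = ["I$", "Reg", "ALU", "D$", "Reg"]

def pipeline_table(instrs):
    """Return one row per instruction listing its stage in each clock cycle.

    Assumes an ideal pipeline: one instruction is fetched per cycle, no stalls.
    """
    n_cycles = len(instrs) + len(STAGES) - 1
    rows = []
    for i, _ in enumerate(instrs):
        row = [""] * n_cycles
        for s, stage in enumerate(STAGES):
            row[i + s] = stage  # instruction i is in stage s during cycle i + s
        rows.append(row)
    return rows

def in_flight(instrs, cycle):
    """Map each instruction active in `cycle` (0-based) to its 0-based stage index."""
    return {
        name: cycle - i
        for i, name in enumerate(instrs)
        if 0 <= cycle - i < len(STAGES)
    }

instrs = ["beq", "Instr 1", "Instr 2", "Instr 3", "Instr 4"]
for name, row in zip(instrs, pipeline_table(instrs)):
    print(f"{name:8}" + "".join(f"{cell:5}" for cell in row))

# While beq sits in the ALU stage (cycle 2), two later instructions
# have already entered the pipeline.
print(in_flight(instrs, 2))
```

In cycle 2 the branch is in stage index 2 (ALU) while Instr 1 and Instr 2 are in decode and fetch, which is why the stage chosen for the compare determines how many following instructions are fetched before the outcome is known.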
Control Hazard: Branching (2/8)
• We had put branch decision-making hardware in ALU stage
  • therefore two more instructions after the branch will always be fetched, whether or not the branch is taken
• Desired functionality of a branch
  • if we do not take the branch, don’t waste any time and continue executing normally
  • if we take the branch, don’t execute any instructions after the branch, just go to the desired label

Control Hazard: Branching (3/8)
• Initial Solution: Stall until decision is made
  • insert “no-op” instructions (those that accomplish nothing, just take time) or hold up the fetch of the next instruction (for 2 cycles).
• Drawback: branches take 3 clock cycles each (assuming comparator is put in ALU stage)

Control Hazard: Branching (4/8)
• Optimization #1:
  • insert special branch comparator in Stage 2
  • as soon as instruction is decoded (Opcode identifies it as a branch), immediately make a decision and set the new value of the PC
• Benefit: since branch is complete in Stage 2, only one unnecessary instruction is fetched, so only one no-op is needed
• Side Note: This means that branches are idle in Stages 3, 4 and 5.

Control Hazard: Branching (5/8)
[Figure: pipeline diagram, time (clock cycles) across, instruction order down: beq, Instr 1, Instr 2, Instr 3, Instr 4; each instruction passes through I$, Reg, ALU, D$, Reg]
Branch comparator moved to Decode stage.

Control Hazard: Branching (6a/8)
• User inserting no-op instruction
[Figure: pipeline diagram, time (clock cycles) across, with add, beq, a nop drawn as a bubble in every stage, and lw]
• Impact: 2 clock cycles per branch instruction, so slow

Control Hazard: Branching (6b/8)
• Controller inserting a single bubble
[Figure: pipeline diagram, time (clock cycles) across, with add, beq, and lw; a single bubble is inserted after the branch]
• Impact: 2 clock cycles per branch instruction, so slow

Control Hazard: Branching (7/8)
• Optimization #2: Redefine branches
  • Old definition: if we take the branch, none of the instructions after the branch get executed by accident
  • New definition: whether or not we take the branch, the single instruction immediately following the branch gets executed (called the branch-delay slot)
• The term “Delayed Branch” means we always execute inst after branch
• This optimization is used on the MIPS

Control Hazard: Branching (8/8)
• Notes on Branch-Delay Slot
  • Worst-Case Scenario: can always put a no-op in the branch-delay slot
  • Better Case: can find an instruction preceding the branch which can be placed in the branch-delay slot without affecting flow of the program
    • re-ordering instructions is a common method of speeding up programs
    • compiler must be very smart in order to find instructions to do this
    • usually can find such an instruction at least 50% of the time
    • Jumps also have a delay slot…

Example: Nondelayed vs. Delayed Branch

Nondelayed Branch
      or   $8, $9, $10
      add  $1, $2, $3
      sub  $4, $5, $6
      beq  $1, $4, Exit
      xor  $10, $1, $11
Exit:

Delayed Branch
      add  $1, $2, $3
      sub  $4, $5, $6
      beq  $1, $4, Exit
      or   $8, $9, $10
      xor  $10, $1, $11
Exit:

Out-of-Order Laundry: Don’t Wait
[Figure: laundry timeline from 6 PM to 2 AM in 30-minute steps, tasks A through F in task order, with a bubble]
A depends on D; rest continue; need more resources to allow out-of-order

Superscalar Laundry: Parallel per stage
[Figure: laundry timeline from 6 PM to 2 AM in 30-minute steps, tasks A through F in task order, labeled (light clothing), (dark clothing), (very dirty clothing), (light clothing), (dark clothing), (very dirty clothing)]
More resources, HW to match mix of parallel tasks?

Superscalar Laundry: Mismatch Mix
[Figure: laundry timeline from 6 PM to 2 AM in 30-minute steps, tasks A through D in task order, labeled (light clothing), (light clothing), (dark clothing), (light clothing)]
Task mix underutilizes extra resources

Real-world pipelining problem
• You’re the manager of a HUGE assembly plant to build computers.
[Figure: Box entering the main pipeline]
• Main pipeline
  • 10 minutes/pipeline stage
  • 60 stages
  • Latency: 10hr
Problem: need to run 2 hr test before done..help!

Real-world pipelining problem solution 1
• You remember: “a pipeline frequency is limited by its slowest stage”, so…
[Figure: Box entering the main pipeline]
• Main pipeline
  • 2 hours/pipeline stage (was 10 minutes)
  • 60 stages
  • Latency: 120hr (was 10hr)
Problem: need to run 2 hr test before done..help!

Real-world pipelining problem solution 2
• Create a sub-pipeline!
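The numbers in the assembly-plant example can be checked with a few lines of arithmetic. This is a rough sketch, assuming every stage takes the same time and that the 2-hour test is a single step that must accept a new unit at the main line's 10-minute rate; the function names are illustrative, not from the lecture.

```python
def pipeline_stats(n_stages, stage_minutes):
    """Latency (hours) and throughput (units/hour) when all stages take equal time."""
    latency_hours = n_stages * stage_minutes / 60
    units_per_hour = 60 / stage_minutes
    return latency_hours, units_per_hour

def parallel_slots(long_step_minutes, stage_minutes):
    """Units that must be inside the long step at once to keep up with the main line."""
    return -(-long_step_minutes // stage_minutes)  # ceiling division on integers

print(pipeline_stats(60, 10))    # original line: 10 hr latency, 6 units/hour
print(pipeline_stats(60, 120))   # solution 1: every stage slowed to 2 hours
print(parallel_slots(120, 10))   # solution 2: slots needed in the test sub-pipeline
```

Slowing every stage to the 2-hour test time multiplies latency by 12 and cuts throughput to one unit every two hours, whereas giving the test its own 12-slot sub-pipeline leaves the main line at one unit every 10 minutes. The slot count matches the slide's figure, which labels the sub-pipeline with 12 CPUs.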
[Figure: Box feeding the main pipeline (10 minutes/pipeline stage, 60 stages), with a sub-pipeline for the 2hr test (12 CPUs in this pipeline)]

Peer Instruction (1/2)
Assume 1 instr/clock, delayed branch, 5 stage pipeline, forwarding, interlock on unresolved load hazards (after 10^3 loops, so pipeline full)

Loop: lw    $t0, 0($s1)
      addu  $t0, $t0, $s2
      sw    $t0, 0($s1)
      addiu $s1, $s1, -4
      bne   $s1, $zero, Loop
      nop

• How many pipeline stages (clock cycles) per loop iteration to execute this code?
1 2 3 4 5 6 7 8 9 10

Peer Instruction Answer (1/2)
• Assume 1 instr/clock, delayed branch, 5 stage pipeline, forwarding, interlock on unresolved load hazards. 10^3 iterations, so pipeline full.

Loop: 1. lw    $t0, 0($s1)
      2. (data hazard so stall)
      3. addu  $t0, $t0, $s2
      4. sw    $t0, 0($s1)
      5. addiu $s1, $s1, -4
      6. (!= in DCD)
      7. bne   $s1, $zero, Loop
      8. nop   (delayed branch so exec. nop)

• How many pipeline stages (clock cycles) per loop iteration to execute this code?
1 2 3 4 5 6 7 8 9 10

Peer Instruction (2/2)
Assume 1 instr/clock, delayed branch, 5 stage pipeline, forwarding, interlock on unresolved load hazards (after 10^3 loops, so pipeline full). Rewrite this code to reduce pipeline stages (clock cycles) per loop to as few as possible.

Loop: lw    $t0, 0($s1)
      addu  $t0, $t0, $s2
      sw    $t0, 0($s1)
      addiu $s1, $s1, -4
      bne   $s1, $zero, Loop
      nop

• How many pipeline stages (clock cycles) per loop iteration to execute this code?
1 2 3 4 5 6 7 8 9 10

Peer Instruction (2/2) How long to execute?
• Rewrite this code to reduce clock cycles per loop to as few as possible:

Loop: 1. lw    $t0, 0($s1)
      2. addiu $s1, $s1, -4
      3. addu  $t0, $t0, $s2
      4. bne   $s1, $zero, Loop
      5. sw    $t0, +4($s1)

(no hazard since extra cycle)
(modified sw to put past addiu)

• How many pipeline stages (clock cycles) per loop iteration to execute your revised code? (assume pipeline is full)
1 2 3 4 5 6 7 8 9 10

“And in Early Conclusion..”
• Pipeline challenge is hazards
  • Forwarding helps w/many data hazards
  • Delayed branch helps with control hazard in 5 stage pipeline
  • Load delay slot / interlock necessary
• More aggressive performance:
  • Superscalar
  • Out-of-order execution

Administrivia
• Assignments
  • HW7 due 8/2
  • Proj3 due 8/5
• Midterm Regrades due Today
• Logisim in lab is now 2.1.6
  • java -jar ~cs61c/bin/logisim
• Valerie’s OH on Thursday moved to 1011 for this week

Why Doesn’t It Work?
• DO NOT MESS WITH THE CLOCK
• Crafty veterans may do it very rarely and carefully
• Doing so will cause unpredictable and hard to track errors
• Following slides are from CS 150 Lecture by Prof. Katz

Cascading Edge-triggered Flip-Flops
• Shift register
  • New value goes into first stage
  • While previous value of first stage goes into second stage
  • Consider setup/hold/propagation delays (prop must be > hold)
[Figure: two cascaded D flip-flops sharing CLK, IN to Q0 to Q1 (OUT); timing diagram for input 100 showing IN, Q0, Q1, CLK]

Cascading Edge-triggered Flip-Flops
• Shift register
  • New value goes into first stage
  • While previous value of first stage goes into second stage
  • Consider setup/hold/propagation delays (prop must be > hold)
[Figure: the same two cascaded D flip-flops, with a Delay on CLK producing Clk1 for the second flip-flop; timing diagram for input 100 showing IN, Q0, Q1, CLK, Clk1]

Clock Skew
• The problem
  • Correct behavior assumes next state of all storage elements determined by all storage elements at the same time
  • Difficult in high-performance systems because time for clock to arrive at flip-flop is comparable to delays through logic (and will soon become greater than logic delay)
  • Effect of skew on cascaded flip-flops:
[Figure: timing diagram for input 100 showing In, Q0, Q1, CLK, CLK1]
CLK1 is a delayed version of CLK
original state: IN = 0, Q0 = 1, Q1 = 1
due to skew, next state becomes: Q0 = 0, Q1 = 0, and not Q0 = 0, Q1 = 1

Why Gating of Clocks is Bad!
[Figure: GOOD: register with an LD input, clocked directly by Clk. BAD: register clocked by gatedClk, which is derived from Clk and LD]
Do NOT Mess With Clock Signals!

Why Gating of Clocks is Bad!
LD generated by FSM shortly after rising edge of CLK
[Figure: timing diagram showing Clk, LD, gatedClk]
Runt pulse plays HAVOC with register internals!
NASTY HACK: delay LD through negative edge triggered FF to ensure that it won’t change during next positive edge event
[Figure: timing diagram showing Clk, LDn, gatedClk]
Clk skew PLUS LD delayed by half clock cycle … What is the effect on your register transfers?
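The clock-skew example from the cascaded flip-flop slides can be checked with a tiny behavioral model. This is a minimal sketch, assuming the skew on CLK1 is larger than the first flip-flop's propagation delay, so the second flip-flop samples Q0 after it has already changed; `shift_step` is an illustrative name, not from the lecture.

```python
def shift_step(inp, q0, q1, skewed=False):
    """Apply one clock edge to a two-stage shift register; return the new (q0, q1)."""
    new_q0 = inp  # the first flip-flop samples IN on CLK
    if skewed:
        # Assumption: CLK1 arrives after Q0 has settled to its new value,
        # so the second flip-flop captures the NEW Q0.
        new_q1 = new_q0
    else:
        # Both flip-flops see the edge together, so the second captures the OLD Q0.
        new_q1 = q0
    return new_q0, new_q1

# Original state from the slide: IN = 0, Q0 = 1, Q1 = 1
print(shift_step(0, 1, 1))               # correct shift
print(shift_step(0, 1, 1, skewed=True))  # value races through both stages
```

Without skew the register shifts to Q0 = 0, Q1 = 1; with skew the new input reaches both stages in a single edge, giving Q0 = 0, Q1 = 0 as the slide states.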
Do NOT Mess With Clock Signals!

The Big Picture
[Figure: block diagram of a Computer]
• Processor (active)
  • Control (“brain”)
  • Datapath (“brawn”)
• Memory (passive) (where programs, data live when running)
• Devices
  • Input: Keyboard, Mouse
  • Disk, Network
  • Output: Display, Printer

Memory Hierarchy
Storage in computer systems:
• Processor
  • holds data in register file (~100 Bytes)
  • Registers accessed on nanosecond timescale
• Memory (we’ll call “main memory”)
  • More capacity than registers (~Gbytes)
  • Access time ~50-100 ns
  • Hundreds of clock cycles per memory access?!
• Disk
  • HUGE capacity (virtually limitless)
  • VERY slow: runs ~milliseconds

Motivation: Why We Use Caches (written $)
[Figure: Performance (log scale, 1 to 1000) versus year, 1980 to 2000. CPU curve: µProc 60%/yr. DRAM curve: DRAM 7%/yr. Processor-Memory Performance Gap: (grows 50% / year)]
• 1989 first Intel CPU with cache on chip
• 1998 Pentium III has two levels of cache on chip

Memory Caching
• Mismatch between processor and memory speeds leads us to add a new level: a memory cache
• Implemented with same IC processing technology as the CPU (usually integrated on same chip): faster but more expensive than DRAM memory.
• Cache is a copy of a subset of main memory.
• Most processors have separate caches for instructions and data.

Memory Hierarchy
[Figure: Processor at the top, then Level 1, Level 2, Level 3, ..., Level n, running from Higher to Lower Levels in memory hierarchy. Labels: Increasing Distance from Proc., Decreasing speed; Size of memory at each level]
As we move to deeper levels the latency goes up and price per bit goes down.

Memory Hierarchy
• If level closer to Processor, it is:
  • smaller
  • faster
  • subset of lower levels (contains most recently used data)
• Lowest Level (usually disk) contains all available data (or does it go beyond the disk?)
• Memory Hierarchy presents the processor with the illusion of a very large very fast memory.
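Two figures quoted in the memory slides above can be sanity-checked with quick arithmetic: the roughly 50% per year processor-memory gap, and the "hundreds of clock cycles" per main-memory access. This is a rough sketch; the 2 GHz and 3 GHz clock rates are assumed values chosen only for illustration and do not come from the lecture, and the function names are likewise illustrative.

```python
def gap_growth(cpu_rate, dram_rate):
    """Yearly growth of the CPU/DRAM performance ratio, given each yearly improvement."""
    return (1 + cpu_rate) / (1 + dram_rate) - 1

def cycles_per_access(access_ns, clock_ghz):
    """Clock cycles that elapse during one memory access (ns times cycles per ns)."""
    return access_ns * clock_ghz

# 60%/yr processor improvement against 7%/yr DRAM improvement
print(f"gap grows about {gap_growth(0.60, 0.07):.1%} per year")

# 50-100 ns access time at assumed clock rates of 2 GHz and 3 GHz
print(cycles_per_access(50, 2.0), cycles_per_access(100, 3.0))
```

The ratio of the two growth rates comes out just under 50% per year, consistent with the chart's label, and a 50-100 ns access spans on the order of 100 to 300 cycles at the assumed clock rates.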