John Lazzaro Dave Patterson


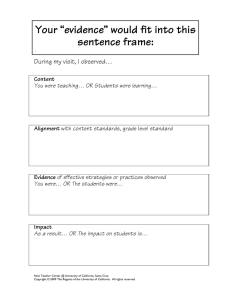

CS152 – Computer Architecture and Engineering
Lecture 18 – ECC, RAID, Bandwidth vs. Latency
2004-10-28
John Lazzaro (www.cs.berkeley.edu/~lazzaro)
Dave Patterson (www.cs.berkeley.edu/~patterson)
www-inst.eecs.berkeley.edu/~cs152/

CS 152 L18 Disks, RAID, BW (1) Fall 2004 © UC Regents

Review
• Buses are an important technique for building large-scale systems
  – Their speed is critically dependent on factors such as length, number of devices, etc.
  – Critically limited by capacitance
• Direct Memory Access (DMA) allows fast, burst transfer into processor’s memory:
  – Processor’s memory acts like a slave
  – Probably requires some form of cache coherence so that DMA’ed memory can be invalidated from cache
• Networks and switches popular for LAN, WAN
• Networks and switches starting to replace buses on desktop, even inside chips

Review: ATA cables
• Serial ATA, rounded parallel ATA, ribbon parallel ATA cables
• Serial ATA: 40 inches max, vs. 18 inches for parallel ATA
• Serial ATA cables are thin

Outline
• ECC
• RAID: Old School & Update
• Latency vs. Bandwidth (if time permits)

Error-Detecting Codes
• Computer memories can make errors occasionally
• To guard against errors, some memories use error-detecting codes or error-correcting codes (ECC) => extra bits are added to each memory word
• When a word is read out of memory, the extra bits are checked to see if an error has occurred and, if using ECC, to correct it
• Data + extra bits are called “code words”

Error-Detecting Codes
• Given 2 code words, can determine how many corresponding bits differ.
• To determine how many bits differ, just compute the bitwise Boolean EXCLUSIVE OR of the two codewords, and count the number of 1 bits in the result
• The number of bit positions in which two codewords differ is called the Hamming distance
• If two code words are a Hamming distance d apart, it will require d single-bit errors to convert one into the other

Error-Detecting Codes
For example, the code words 11110001 and 00110000 are a Hamming distance 3 apart because it takes 3 single-bit errors to convert one into the other.

      11110001
XOR   00110000
      --------
      11000001    three 1s = Hamming distance 3

Error-Detecting Codes
• As a simple example of an error-detecting code, consider a code in which a single parity bit is appended to the data.
• The parity bit is chosen so that the number of 1 bits in the codeword is even (or odd).
• E.g., if even parity, parity bit for 11110001 is 1.
• Such a parity code has Hamming distance 2, since any single-bit error produces a codeword with the wrong parity
• It takes 2 single-bit errors to go from a valid codeword to another valid codeword => detect single-bit errors.
• Whenever a word containing the wrong parity is read from memory, an error condition is signaled.
• The program cannot continue, but at least no incorrect results are computed.

Error-Correcting Codes
• A Hamming distance of 2k + 1 is required to be able to correct k errors in any data word
• As a simple example of an error-correcting code, consider a code with only four valid code words: 0000000000, 0000011111, 1111100000, and 1111111111
• This code has a distance 5, which means that it can correct double errors.
• If the codeword 0000000111 arrives, the receiver knows that the original must have been 0000011111 (if there was no more than a double error).
  If, however, a triple error changes 0000000000 into 0000000111, the error cannot be corrected (the receiver would wrongly decode it as 0000011111).

Hamming Codes
• How many parity bits are needed?
• m parity bits can code $2^m - 1 - m$ info bits

  Info bits   Parity bits
  < 5         3
  < 12        4
  < 27        5
  < 58        6
  < 121       7

• How to correct a single error (SEC) and detect 2 errors (DED)?
• How many “SEC/DED” bits for 64 bits of data?

Administrivia - HW 3, Lab 4
Lab 4 is next: Plan by Thur for TA, Meet with TA Friday, Final Monday

ECC Hamming Code
• Hamming coding is a coding method for detecting and correcting errors.
  – ECC: Hamming distance between 2 coded words must be ≥ 3
• Number bits from right, starting with 1
• All bits whose bit number is a power of 2 are parity bits
• We use EVEN PARITY in this example
• This example shows 4 data bits (D = data bit, P = parity bit):

  7 6 5 4 3 2 1
  D D D P D P P    7-BIT CODEWORD
  D - D - D - P    (EVEN PARITY)
  D D - - D P -    (EVEN PARITY)
  D D D P - - -    (EVEN PARITY)

• Bit 1 checks parity over all bit positions whose binary number contains a 1 (positions 1, 3, 5, 7)
• Bit 2 checks all bit positions whose binary number contains a 2 (positions 2, 3, 6, 7)
• Bit 4 checks all bit positions whose binary number contains a 4 (positions 4, 5, 6, 7)
• Etc.

Example: Hamming Code
Example: The message 1101 would be sent as 1100110, since:

  7 6 5 4 3 2 1
  1 1 0 0 1 1 0    7-BIT CODEWORD
  1 - 0 - 1 - 0    (EVEN PARITY)
  1 1 - - 1 1 -    (EVEN PARITY)
  1 1 0 0 - - -    (EVEN PARITY)

For each group: if the number of 1s among the data bits is even, then Parity = 0, else Parity = 1.

Let us consider the case where an error is caused by the channel:

  transmitted message 1100110  ------------>  received message 1110110
  BIT:                7654321                 BIT:             7654321

Example: Hamming Code.
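As a rough illustration of the bit numbering and even-parity rule used in this example, the Python sketch below encodes 4 data bits into a 7-bit codeword and computes the "syndrome" formed by the failing parity checks. The function names are hypothetical (they do not come from the lecture), and bit strings are assumed to be written with bit 7 first, as on the slides.

```python
def hamming74_encode(data: str) -> str:
    """Encode 4 data bits (for positions 7, 6, 5, 3) as a 7-bit codeword, bit 7 first."""
    d7, d6, d5, d3 = (int(b) for b in data)
    p1 = d7 ^ d5 ^ d3  # even parity over positions 1, 3, 5, 7
    p2 = d7 ^ d6 ^ d3  # even parity over positions 2, 3, 6, 7
    p4 = d7 ^ d6 ^ d5  # even parity over positions 4, 5, 6, 7
    return "".join(str(b) for b in (d7, d6, d5, p4, d3, p2, p1))


def hamming74_syndrome(word: str) -> int:
    """Return 0 if all three parity checks pass, else the number of the bad bit."""
    # Map bit position (7 down to 1) to the received bit value.
    bits = {7 - i: int(b) for i, b in enumerate(word)}
    syndrome = 0
    for check in (1, 2, 4):
        parity = 0
        for position, bit in bits.items():
            if position & check:  # this position is covered by parity bit `check`
                parity ^= bit
        if parity:  # odd number of 1s: the even-parity check fails
            syndrome |= check
    return syndrome


def hamming74_correct(word: str) -> str:
    """Flip the bit named by the syndrome (assumes at most one bit is in error)."""
    syndrome = hamming74_syndrome(word)
    if syndrome == 0:
        return word
    index = 7 - syndrome  # string index of bit number `syndrome`
    flipped = "1" if word[index] == "0" else "0"
    return word[:index] + flipped + word[index + 1:]
```

Under these assumptions, `hamming74_encode("1101")` gives `1100110`, and the syndrome of the received word `1110110` is 5, the number of the flipped bit. The sketch corrects single-bit errors only; a double error would be miscorrected.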
  transmitted message 1100110  ------------>  received message 1110110
  BIT:                7654321                 BIT:             7654321

The above error (in bit 5) can be corrected by examining which of the three parity bits was affected by the bad bit:

  7 6 5 4 3 2 1
  1 1 1 0 1 1 0    7-BIT CODEWORD
  1 - 1 - 1 - 0    (EVEN PARITY) NOT!  1
  1 1 - - 1 1 -    (EVEN PARITY) OK!   0
  1 1 1 0 - - -    (EVEN PARITY) NOT!  1

Reading the check results from the bit-4 check down to the bit-1 check, the bad parity bits labeled 101 point directly to the bad bit, since 101 binary equals 5.

Will Hamming Code detect and correct errors on parity bits? Yes!

  transmitted message 1100110  ------------>  received message 1100111
  BIT:                7654321                 BIT:             7654321

The above error in a parity bit (bit 1) can be corrected by examining as below:

  7 6 5 4 3 2 1
  1 1 0 0 1 1 1    7-BIT CODEWORD
  1 - 0 - 1 - 1    (EVEN PARITY) NOT!  1
  1 1 - - 1 1 -    (EVEN PARITY) OK!   0
  1 1 0 0 - - -    (EVEN PARITY) OK!   0

The bad parity bits labeled 001 point directly to the bad bit, since 001 binary equals 1. In this example the error in parity bit 1 is detected and can be corrected by flipping it to a 0.

RAID Beginnings
• We had worked on 3 generations of Reduced Instruction Set Computer (RISC) processors, 1980 – 1987
• Our expectation: I/O will become a performance bottleneck if it doesn’t get faster
• Randy Katz gets Macintosh with disk alongside
• “Use PC disks to build fast I/O to keep pace with RISC?”

Redundant Array of Inexpensive Disks (1987-93)
• Hard to explain ideas, given past disk array efforts
• Paper to educate, differentiate?
• RAID paper spread like virus
• Products from Compaq, EMC, IBM, …
• RAID I
  – Sun 4/280, 128 MB of DRAM
  – 4 dual-string SCSI controllers
  – 28 5.25” 340 MB disks + SW
• RAID II
  – Gbit/s net + 144 3.5” 320 MB disks
  – 1st Network Attached Storage
• Ousterhout: Log Structured File Sys.
  widely used (NetApp)
• Today RAID ~ $25B industry; 80% of server disks in RAID
• 1998 IEEE Storage Award
Students: Peter Chen, Ann Chervenak, Garth Gibson, Ed Lee, Ethan Miller, Mary Baker, John Hartman, Kim Keeton, Mendel Rosenblum, Ken Shirriff, …

Latency Lags Bandwidth
• Over last 20 to 25 years, for network, disk, DRAM, MPU, Latency Lags Bandwidth:
  – Bandwidth improved 120X to 2200X
  – But latency improved only 4X to 20X
• Look at examples, reasons for it
[Plot: Relative BW Improvement (log scale, 1 to 10000) vs. Relative Latency Improvement (log scale, 1 to 100); reference line: Latency improvement = Bandwidth improvement]

Disks: Archaic (Nostalgic) v. Modern (Newfangled)
CDC Wren I, 1983:
• 3600 RPM
• 0.03 GBytes capacity
• Tracks/Inch: 800
• Bits/Inch: 9550
• Three 5.25” platters
• Bandwidth: 0.6 MBytes/sec
• Latency: 48.3 ms
• Cache: none
Seagate 373453, 2003:
• 15000 RPM (4X)
• 73.4 GBytes (2500X)
• Tracks/Inch: 64000 (80X)
• Bits/Inch: 533,000 (60X)
• Four 2.5” platters (in 3.5” form factor)
• Bandwidth: 86 MBytes/sec (140X)
• Latency: 5.7 ms (8X)
• Cache: 8 MBytes

Latency Lags Bandwidth (for last ~20 years)
• Performance Milestones
• Disk: 3600, 5400, 7200, 10000, 15000 RPM (8x, 143x)
(latency = simple operation w/o contention; BW = best-case)
[Plot: Relative BW Improvement (log scale, 1 to 10000) vs. Relative Latency Improvement (log scale, 1 to 100); curve: Disk; reference line: Latency improvement = Bandwidth improvement]

Memory: Archaic (Nostalgic) v.
Modern (Newfangled)
1980 DRAM (asynchronous):
• 0.06 Mbits/chip
• 64,000 xtors, 35 mm²
• 16-bit data bus per module, 16 pins/chip
• 13 Mbytes/sec
• Latency: 225 ns
• (no block transfer)
2000 Double Data Rate Synchronous (clocked) DRAM:
• 256 Mbits/chip (4000X)
• 256,000,000 xtors, 204 mm²
• 64-bit data bus per DIMM, 66 pins/chip (4X)
• 1600 Mbytes/sec (120X)
• Latency: 52 ns (4X)
• Block transfers (page mode)

Latency Lags Bandwidth (last ~20 years)
• Performance Milestones
• Memory Module: 16-bit plain DRAM, Page Mode DRAM, 32b, 64b, SDRAM, DDR SDRAM (4x, 120x)
• Disk: 3600, 5400, 7200, 10000, 15000 RPM (8x, 143x)
(latency = simple operation w/o contention; BW = best-case)
[Plot: Relative BW Improvement (log scale, 1 to 10000) vs. Relative Latency Improvement (log scale, 1 to 100); curves: Memory, Disk; reference line: Latency improvement = Bandwidth improvement]

LANs: Archaic (Nostalgic) v. Modern (Newfangled)
Ethernet 802.3:
• Year of Standard: 1978
• 10 Mbits/s link speed
• Latency: 3000 µsec
• Shared media
• Coaxial cable
Ethernet 802.3ae:
• Year of Standard: 2003
• 10,000 Mbits/s (1000X) link speed
• Latency: 190 µsec (15X)
• Switched media
• Category 5 copper wire
[Figure: Coaxial cable: plastic covering, braided outer conductor, insulator, copper core. Twisted pair: copper, 1 mm thick, twisted to avoid antenna effect; "Cat 5" is 4 twisted pairs in a bundle]

Latency Lags Bandwidth (last ~20 years)
• Performance Milestones
• Ethernet: 10Mb, 100Mb, 1000Mb, 10000 Mb/s (16x, 1000x)
• Memory Module: 16-bit plain DRAM, Page Mode DRAM, 32b, 64b, SDRAM, DDR SDRAM (4x, 120x)
• Disk: 3600, 5400, 7200, 10000, 15000 RPM (8x, 143x)
(latency = simple operation w/o contention; BW = best-case)
[Plot: Relative BW Improvement (log scale, 1 to 10000) vs. Relative Latency Improvement (log scale, 1 to 100); curves: Network, Memory, Disk; reference line: Latency improvement = Bandwidth improvement]

CPUs: Archaic (Nostalgic) v. Modern (Newfangled)
1982 Intel 80286:
• 12.5 MHz
• 2 MIPS (peak)
• Latency 320 ns
• 134,000 xtors, 47 mm²
• 16-bit data bus, 68 pins
• Microcode interpreter, separate FPU chip
• (no caches)
2001 Intel Pentium 4:
• 1500 MHz (120X)
• 4500 MIPS (peak) (2250X)
• Latency 15 ns (20X)
• 42,000,000 xtors, 217 mm²
• 64-bit data bus, 423 pins
• 3-way superscalar, dynamic translation to RISC, superpipelined (22 stage), out-of-order execution
• On-chip 8KB data cache, 96KB instruction trace cache, 256KB L2 cache

Latency Lags Bandwidth (last ~20 years)
• Performance Milestones
• Processor: ‘286, ‘386, ‘486, Pentium, Pentium Pro, Pentium 4 (21x, 2250x)
• Ethernet: 10Mb, 100Mb, 1000Mb, 10000 Mb/s (16x, 1000x)
• Memory Module: 16-bit plain DRAM, Page Mode DRAM, 32b, 64b, SDRAM, DDR SDRAM (4x, 120x)
• Disk: 3600, 5400, 7200, 10000, 15000 RPM (8x, 143x)
• Note: Processor biggest, Memory smallest
(latency = simple operation w/o contention; BW = best-case)
[Plot: Relative BW Improvement (log scale, 1 to 10000) vs. Relative Latency Improvement (log scale, 1 to 100); curves: Processor, Network, Memory, Disk; reference line: Latency improvement = Bandwidth improvement]

Annual Improvement per Technology

                                                      CPU    DRAM   LAN    Disk
  Annual Bandwidth Improvement (all milestones)       1.50   1.27   1.39   1.28
  Annual Latency Improvement (all milestones)         1.17   1.07   1.12   1.11

• Again, CPU fastest change, DRAM slowest
• But what about recent BW, Latency change?

                                                      CPU    DRAM   LAN    Disk
  Annual Bandwidth Improvement (last 3 milestones)    1.55   1.30   1.78   1.29
  Annual Latency Improvement (last 3 milestones)      1.22   1.06   1.13   1.09

• How to summarize BW vs. Latency change?

Towards a Rule of Thumb
• How long for Bandwidth to Double?

                                                            CPU   DRAM  LAN   Disk
  Time for Bandwidth to Double (Years, all milestones)      1.7   2.9   2.1   2.8

• How much does Latency Improve in that time?

                                                                            CPU   DRAM  LAN   Disk
  Latency Improvement in Time for Bandwidth to Double (all milestones)      1.3   1.2   1.3   1.3

• But what about recently?

                                                                            CPU   DRAM  LAN   Disk
  Time for Bandwidth to Double (Years, last 3 milestones)                   1.6   2.7   1.2   2.7
  Latency Improvement in Time for Bandwidth to Double (last 3 milestones)   1.4   1.2   1.2   1.3

• Despite faster LAN, all 1.2X to 1.4X

Rule of Thumb for Latency Lagging BW
• In the time that bandwidth doubles, latency improves by no more than a factor of 1.2 to 1.4
• Stated alternatively: Bandwidth improves by more than the square of the improvement in Latency (and capacity improves faster than bandwidth)

6 Reasons Latency Lags Bandwidth
1. Moore’s Law helps BW more than latency
• Faster transistors, more transistors, more pins help Bandwidth
  – MPU Transistors: 0.130 vs. 42 M xtors (300X)
  – DRAM Transistors: 0.064 vs. 256 M xtors (4000X)
  – MPU Pins: 68 vs. 423 pins (6X)
  – DRAM Pins: 16 vs. 66 pins (4X)
• Smaller, faster transistors but communicate over (relatively) longer lines: limits latency
  – Feature size: 1.5 to 3 vs. 0.18 micron (8X, 17X)
  – MPU Die Size: 47 vs. 217 mm² (sqrt of ratio: 2X)
  – DRAM Die Size: 35 vs. 204 mm² (sqrt of ratio: 2X)

6 Reasons Latency Lags Bandwidth (cont’d)
2. Distance limits latency
• Size of DRAM block => long bit and word lines => most of DRAM access time
• Speed of light and computers on network
• 1. & 2. explain linear latency vs. square BW?
3. Bandwidth easier to sell (“bigger=better”)
• E.g., 10 Gbits/s Ethernet (“10 Gig”) vs. 10 µsec latency Ethernet
• 4400 MB/s DIMM (“PC4400”) vs. 50 ns latency
• Even if just marketing, customers now trained
• Since bandwidth sells, more resources thrown at bandwidth, which further tips the balance

6 Reasons Latency Lags Bandwidth (cont’d)
4. Latency helps BW, but not vice versa
• Spinning disk faster improves both bandwidth and rotational latency
  – 3600 RPM => 15000 RPM = 4.2X
  – Average rotational latency: 8.3 ms => 2.0 ms
  – Things being equal, also helps BW by 4.2X
• Lower DRAM latency => more accesses/second (higher bandwidth)
• Higher linear density helps disk BW (and capacity), but not disk latency
  – 9,550 BPI => 533,000 BPI => 60X in BW

6 Reasons Latency Lags Bandwidth (cont’d)
5. Bandwidth hurts latency
• Queues help Bandwidth, hurt Latency (Queuing Theory)
• Adding chips to widen a memory module increases Bandwidth, but higher fan-out on address lines may increase Latency
6. Operating System overhead hurts Latency more than Bandwidth
• Long messages amortize overhead; overhead is a bigger part of short messages

3 Ways to Cope with Latency Lags Bandwidth
“If a problem has no solution, it may not be a problem, but a fact--not to be solved, but to be coped with over time” – Shimon Peres (“Peres’s Law”)
1. Caching (Leveraging Capacity)
• Processor caches, file cache, disk cache
2. Replication (Leveraging Capacity)
• Read from nearest head in RAID, from nearest site in content distribution
3. Prediction (Leveraging Bandwidth)
• Branches + Prefetching: disk, caches

HW BW Example: Micro Massively Parallel Processor (mMPP)
• Intel 4004 (1971): 4-bit processor, 2312 transistors, 0.4 MHz, 10 micron PMOS, 11 mm² chip
• RISC II (1983): 32-bit, 5 stage pipeline, 40,760 transistors, 3 MHz, 3 micron NMOS, 60 mm² chip
  – 4004 shrinks to ~ 1 mm² at 3 micron
• 250 mm² chip, 0.090 micron CMOS = 2312 RISC IIs + Icache + Dcache
  – RISC II shrinks to ~ 0.05 mm² at 0.09 micron
  – Caches via DRAM or 1 transistor SRAM (www.t-ram.com)
  – Proximity Communication via capacitive coupling at > 1 TB/s (Ivan Sutherland@Sun)
• Processor = new transistor?
• Cost of Ownership, Dependability, Security v. Cost/Perf. => mMPP

Too Optimistic so Far (it’s even worse)?
• Optimistic: Cache, Replication, Prefetch get more popular to cope with imbalance
• Pessimistic: These 3 already fully deployed, so must find next set of tricks to cope; hard!
• It’s even worse: bandwidth gains multiplied by replicated components => parallelism
  – simultaneous communication in switched LAN
  – multiple disks in a disk array
  – multiple memory modules in a large memory
  – multiple processors in a cluster or SMP

Conclusion: Latency Lags Bandwidth
• For disk, LAN, memory, and MPU, in the time that bandwidth doubles, latency improves by no more than 1.2X to 1.4X
  – BW improves by square of latency improvement
• Innovations may yield one-time latency reduction, but unrelenting BW improvement
• If everything improves at the same rate, then nothing really changes
  – When rates vary, require real innovation
• HW and SW developers should innovate assuming Latency Lags Bandwidth
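
The rule of thumb can be checked against the annual improvement rates quoted in the "Annual Improvement per Technology" table. The Python sketch below assumes steady compound growth, so a bandwidth growth rate g per year doubles bandwidth in ln 2 / ln g years; the function and dictionary names are hypothetical, and only the all-milestones rates from the slides are used.

```python
import math

# (annual bandwidth improvement, annual latency improvement), all milestones,
# copied from the "Annual Improvement per Technology" table.
ANNUAL_IMPROVEMENT = {
    "CPU": (1.50, 1.17),
    "DRAM": (1.27, 1.07),
    "LAN": (1.39, 1.12),
    "Disk": (1.28, 1.11),
}


def doubling_time_years(annual_bw_gain: float) -> float:
    """Years for bandwidth to double, assuming steady compound growth."""
    return math.log(2) / math.log(annual_bw_gain)


def latency_gain_while_bw_doubles(annual_bw_gain: float,
                                  annual_latency_gain: float) -> float:
    """Latency improvement accumulated over one bandwidth-doubling period."""
    return annual_latency_gain ** doubling_time_years(annual_bw_gain)


def rule_of_thumb_table() -> dict:
    """Map each technology to (doubling time in years, latency gain), rounded."""
    table = {}
    for name, (bw_gain, latency_gain) in ANNUAL_IMPROVEMENT.items():
        years = doubling_time_years(bw_gain)
        gain = latency_gain_while_bw_doubles(bw_gain, latency_gain)
        table[name] = (round(years, 1), round(gain, 1))
    return table


if __name__ == "__main__":
    for name, (years, gain) in rule_of_thumb_table().items():
        print(f"{name}: bandwidth doubles in {years} years; "
              f"latency improves {gain}X in that time")
```

Rounded to one decimal place, the results agree with the all-milestones rows of the "Towards a Rule of Thumb" slide. Since every latency gain stays at or below roughly 1.4, and 1.4 squared is about 2, this is also consistent with the statement that bandwidth improves by more than the square of the latency improvement.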