Object Recognition with Informative Features and Linear Classification
Authors: Vidal-Naquet & Ullman

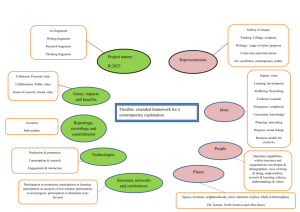

Presenter: David Bradley

[Figure: image fragments vs. wavelets]
• Image fragments make good features – especially when training data is limited
• Image fragments contain more information than wavelets – allows for simpler classifiers
• Information theory framework for feature selection
[Figure label: Intermediate complexity]

What’s in a feature?
• You and your favorite learning algorithm settle down for a nice game of 20 questions
• Except that, since it is a learning algorithm, it can’t talk, so the game really becomes 20 answers: 10110010110000111001
• Have you asked the right questions?
• What information are you really giving it?
• How easy will it be for it to say “Aha, you are thinking of the side view of a car!”?

“Pseudo-Inverse”
• In general, reconstructing the image from the features gives a good intuition for what information they provide

Wavelet coefficients
• Each coefficient asks the question “how much is the current block of pixels like my wavelet pattern?”
• This set of wavelets can entirely represent a 2x2 pixel block:
[Figure: wavelet set for a 2x2 pixel block]
• So if you give your learning algorithm all of the wavelet coefficients then you have given it all of the information it could possibly need, right?

Sometimes wavelets work well
• Viola and Jones Face Detector
• Trained on 24x24 pixel windows
• Cascade Structure (32 classifiers total):
  – Initial 2-feature classifier rejects 60% of non-faces
  – Second, 5-feature classifier rejects 80% of non-faces
[Figure: Initial 2-feature Classifier]

But they can require a lot of training data to use correctly
• Rest of the Viola and Jones Face Detector
  – Three 20-feature classifiers
  – Two 50-feature classifiers
  – Twenty 200-feature classifiers
• In the later stages it is tough to learn what combinations of wavelet questions to ask.
• Surely there must be an easier way…

Image fragments
• Represent the opposite extreme
• Wavelets are basic image building blocks.
• Fragments are highly specific to the patterns they come from
• Present in the image if cross-correlation > threshold
• Ideally if one could label all possible images (and search them quickly):
  – Use whole images as fragments
  – All vision problems become easy
  – Just look for the match

Dealing with the non-ideal world
• Want to find fragments that:
  – Generalize well
  – Are specific to the class
  – Add information that other fragments haven’t already given us.
• What metric should we use to find the best fragments?

Information Theory Review
• Entropy: the minimum number of bits required to encode a signal
• Shannon entropy: H(X) = -Σ_x p(x) log2 p(x)
• Conditional entropy: H(C|F) = Σ_f p(f) H(C | F = f)
• Mutual information: I(C, F) = H(C) – H(C|F), where H(C) is the class entropy, H(C|F) is the conditional entropy, and F is the feature
• High mutual information means that knowing the feature value reduces the number of bits needed to encode the class

Picking features with Mutual Information
• Not practical to exhaustively search for the combination of features with the highest mutual information.
• Instead do a greedy search: at each step, add the feature whose minimum pair-wise information gain with respect to the features already chosen is the highest.

Picking features with Mutual Information
[Figure: candidate variables X1, X2, X3, X4]
• Pick the most pairwise independent variable
• Low pair-wise information gain indicates variables are dependent

Features picked for cars
[Figure]

The Details
• Image Database
  – 573 14x21 pixel car side-view images
    • Cars occupied approx 10x15 pixels
  – 461 14x21 pixel non-car images
• 4 classifiers were trained for 20 cross-validation iterations to generate results
  – 200 car and 200 non-car images in the training set
  – 100 car images to extract fragments from

Features
• Extracted 59200 fragments from the first 100 images
  – 4x4 to 10x14 pixel image patches
  – Taken from the 10x15 pixel region containing the car.
• Location restricted to a 5x5 area around original location
• Used 2 scales of wavelets from the 10x15 region
• Selected 168 features total

Classifiers
• Linear SVM
• Tree Augmented Network (TAN)
  – Models feature’s class dependency and biggest pairwise feature dependency
  – Quadratic decision surface in feature space

Occasional information loss due to overfitting

More Information About Fragments
• Torralba et al. Sharing Visual Features for Multiclass and Multiview Object Detection. CVPR 2004.
  – http://web.mit.edu/torralba/www/extendedCVPR2004.pdf
• ICCV Short Course (great matlab demo)
  – http://people.csail.mit.edu/torralba/iccv2005/

Objections
• Wavelet features chosen are very weak
  – Images were very low resolution, maybe too low-res for more complicated wavelets
• Data set is too easy
  – Side-views of cars have low intra-class variability
  – Cars and faces have very stable and predictable appearances
  – not hard enough to stress the fragment + linear SVM classifier, so TAN shows no improvement.
• Didn’t compare fragments against successful wavelet application
  – Schneiderman & Kanade car detector
• Do the fragment-based classifiers effectively get 100 more training images?
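
Sketch: greedy feature selection with mutual information
The greedy rule from the "Picking features with Mutual Information" slide can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: it assumes binary features (fragment present / absent) stored as integer columns, estimates probabilities by simple counting, and reads "pair-wise information gain" as I(C; f, s) - I(C; s) for a candidate f and an already chosen feature s. The function names (`entropy`, `mutual_information`, `greedy_select`) and the toy data are hypothetical.

```python
import numpy as np


def entropy(values):
    """Shannon entropy in bits of a discrete 1-D array."""
    _, counts = np.unique(values, return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log2(p)).sum())


def mutual_information(c, f):
    """I(C, F) = H(C) - H(C|F) for discrete arrays c (class) and f (feature)."""
    h_c_given_f = 0.0
    for value in np.unique(f):
        mask = f == value
        # p(F = value) * H(C | F = value)
        h_c_given_f += mask.mean() * entropy(c[mask])
    return entropy(c) - h_c_given_f


def greedy_select(features, c, k):
    """Pick k column indices from a (n_samples, n_features) 0/1 integer array.

    Assumed reading of the slide: start with the single most informative
    feature, then repeatedly add the candidate whose minimum gain
    I(C; f, s) - I(C; s) over the already selected s is the highest.
    """
    n_features = features.shape[1]
    single = [mutual_information(c, features[:, j]) for j in range(n_features)]
    selected = [int(np.argmax(single))]
    while len(selected) < k:
        best_j, best_gain = None, -np.inf
        for j in range(n_features):
            if j in selected:
                continue
            # Encode the pair (f_j, f_s) as one variable with values 0..3.
            gain = min(
                mutual_information(c, 2 * features[:, j] + features[:, s]) - single[s]
                for s in selected
            )
            if gain > best_gain:
                best_j, best_gain = j, gain
        selected.append(best_j)
    return selected


# Toy data (hypothetical): column 1 duplicates column 0, while column 2
# fires on the one positive example that column 0 misses.
c = np.array([1, 1, 1, 1, 0, 0, 0, 0])
X = np.array([
    [1, 1, 0],
    [1, 1, 0],
    [1, 1, 0],
    [0, 0, 1],
    [0, 0, 0],
    [0, 0, 0],
    [0, 0, 0],
    [0, 0, 0],
])
print(greedy_select(X, c, 2))
```

On the toy data the duplicate column adds nothing once column 0 is chosen, so the weaker but complementary column 2 is picked second. That is the behaviour the slide describes: a low pair-wise gain flags a dependent feature.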