
CALIFORNIA STATE UNIVERSITY, NORTHRIDGE

RIDGE REGRESSION:

AN EXAMINATION

OF THE BIASING PARAMETER

A thesis submitted in partial satisfaction of the requirements for the degree of Master of Science in Health Science,

Biostatistics & Epidemiology

by

John R. C. Odencrantz

January, 1979

The Thesis of John Odencrantz is approved:

Madison

Bernard Hanes, Committee Chairman

California State University, Northridge


ACKNOWLEDGMENTS

I would like to thank the members of my committee for their comments and suggestions. In particular, I wish to thank Dr. Bernard Hanes for his support and encouragement, without which this thesis would not have been possible.

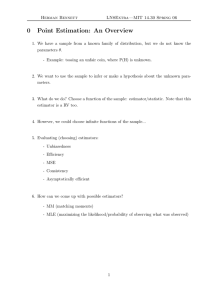

TABLE OF CONTENTS

APPROVAL

ACKNOWLEDGMENTS

ABSTRACT

Chapter

1 INTRODUCTION

    BACKGROUND

    PURPOSE

2 REVIEW OF THE LITERATURE

3 OPTIMIZATION AND GENERALIZED RIDGE REGRESSION

    THE CRITERIA FOR OPTIMIZATION

    DERIVING AN OPTIMUM FOR GENERALIZED RIDGE REGRESSION

    ESTIMATING THE RIDGE OPTIMUM

    AN ALTERNATIVE OPTIMUM FOR GENERALIZED RIDGE REGRESSION

4 OPTIMIZING THE ORDINARY RIDGE ESTIMATOR

    RIDGE SOLUTIONS FOR THE $E(L_1^2)$ CRITERION

    THE $E(L_1^2)$ SOLUTION OF HOCKING, SPEED, AND LYNN

    MALLOWS' $E(L_2^2)$ RIDGE SOLUTION

    THE LAWLESS-WANG ESTIMATOR

    THE McDONALD-GALARNEAU ESTIMATOR

    OBENCHAIN'S ESTIMATOR FOR ORDINARY RIDGE REGRESSION

    A REVIEW AND EVALUATION OF THE ORDINARY RIDGE SOLUTIONS

5 CONCLUSIONS

BIBLIOGRAPHY

APPENDIX I

APPENDIX II

APPENDIX III

APPENDIX IV

APPENDIX V

APPENDIX VI

APPENDIX VII

ABSTRACT

RIDGE REGRESSION:

AN EXAMINATION OF THE

BIASING PARAMETER

by

John R. C. Odencrantz

Master of Science in Health Science

Biostatistics and Epidemiology

Ridge regression is an alternative to least squares for highly collinear systems of predictor variables. It differs from least squares in having a biasing parameter, k, added to the main diagonal of the X'X matrix. A number of rules for choosing k have been proposed, all of which give different solutions. Fundamental in applying ridge regression is deciding which rule to use.

This thesis examines some of the proposed methods of choosing the biasing parameter. The two types of ridge regression, generalized ridge and ordinary ridge, are considered separately. Derivations are given for the different solutions, with stress on the intent and underlying assumptions of each. To permit an evaluation of relative performance, the results of Wichern and Churchill's (1978) simulation study are included.

The generalized ridge solutions are derived: those of Hoerl and Kennard (1970) and Hemmerle and Brantle (1978). In its original form, the solution of Hoerl and Kennard was iterative. Hemmerle's (1975) reduction of the Hoerl-Kennard iteration to a single step is included, as is a simpler and more intuitive way of achieving the same result.

Several proposed solutions to the ordinary form of ridge regression are given. One of these (Mallows, 1973) is shown to be incorrect in its final algebraic form, and a numerical approach is suggested instead. An appendix of numerical examples is added.

Finally, some background results relating to ridge regression are included. Among these are a derivation of ordinary ridge regression from a theorem in quadratic response surfaces and a presentation of Marquardt's (1970) "fractional rank" estimator, a technique closely related to ridge regression.

Chapter 1

INTRODUCTION

Background

The standard model for multiple linear regression is

$$y = X\beta + e, \qquad (1.1)$$

where $X$ is an $(n \times p)$ matrix of predictor variables, $y$ is an $(n \times 1)$ vector of responses, $e$ is an $(n \times 1)$ error vector such that $E(e) = 0$ and $E(ee') = \sigma^2 I_n$, where $\sigma^2$ is an unknown constant, and $\beta$ is a $(p \times 1)$ vector of unknown regression coefficients.

The usual solution for (1.1) is the Gaussian least squares estimator

$$\hat{\beta} = (X'X)^{-1}X'y, \qquad (1.2)$$

where $\hat{\beta}$ is $(p \times 1)$ and $E(\hat{\beta}) = \beta$.

Multiple regression is among the most popular

tools for the analysis of health data.

Typically such

data are extensive and involve many survey variables,

some of them highly correlated.

Thus regression models

which attempt to make full use of the available information will often be multicollinear.

This leads to difficulties: $\hat{\beta}$ is so unstable for multicollinear data that even minor perturbations of the data can change the solution drastically (Hoerl, 1962). The mean square error is likely to be unreasonably large and the $\hat{\beta}$ vector tends to have a much greater norm than the $\beta$ vector it is estimating (Hoerl and Kennard, 1970A).

Under conditions of multicollinearity the

investigator often chooses to drop variables from the

model.

Popular statistical methods of doing this include

stepwise techniques (Efroymson, 1960), calculation of all

possible subsets (Garside, 1971), and regression on principal components (Massy, 1965).

Of these, stepwise methods are the easiest in

terms of computation and interpretation, and are included

in most statistical packages.

However, stepwise methods

are of little use for multicollinear data.

Their intended

function is to eliminate variables with no predicting

power from orthogonal systems of predictor variables.

Principal components regression and selection of

a best predictor subset out of all possible subsets may

both yield satisfactory results subject to the selection

criterion.

Principal components regression (Appendix I)

requires interpretational effort, but its structural simplicity has much to recommend it.

This is especially true

in very large systems where the computation of all possible subsets becomes impractical.

If the purpose of the regression model is to predict one variable from a set of other variables, dropping predictors makes sense for multicollinear data.

A

subset of predictor variables will specify the response

variable almost as precisely as will the total set.

The problem is that regression, especially in

areas such as epidemiology, is likely to have as its true

intent the explaining of some effect in terms of other

observables.

Since the relationships between the various

predictors are seldom completely understood (otherwise

multicollinearity could be avoided), some loss of explanatory information is bound to accompany reductions in the

model.

The ridge estimator of Hoerl and Kennard (1970A&B) is another method of handling multicollinearity. Variables may still be dropped (Hoerl and Kennard, 1970B; McDonald and Schwing, 1973), but the emphasis is on transforming the estimators to achieve greater stability and smaller mean square error. The ridge estimator is given by

$$\beta^* = (X'X + kI)^{-1}X'y, \qquad (1.2)$$

where $I$ is a $(p \times p)$ identity matrix, $k$ is a constant, $\beta^*$ is $(p \times 1)$, and $X$ and $y$ are the same as in the least squares estimator.

The relationship between $\hat{\beta}$, the least squares solution, and $\beta^*$, the ridge solution, is

$$\beta^* = (I + k(X'X)^{-1})^{-1}\hat{\beta} \qquad (1.3)$$

(Hoerl and Kennard, 1970A).

Since $\hat{\beta}$ is unbiased, it follows that the ridge solution is biased for $k \neq 0$. Usually k, the biasing parameter, is chosen to minimize the mean square error of $\beta^*$.
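As an illustration of how the biasing parameter shrinks the estimator, the following minimal sketch computes the least squares and ridge estimates for two highly correlated predictors. The correlation values, the choice k = 0.05, and the helper names `solve_2x2` and `ridge_2x2` are assumptions made for this example only; they are not taken from the thesis.

```python
def solve_2x2(a, b):
    """Solve the 2x2 linear system a x = b by Cramer's rule."""
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    return [(b[0] * a[1][1] - a[0][1] * b[1]) / det,
            (a[0][0] * b[1] - a[1][0] * b[0]) / det]


def ridge_2x2(xtx, xty, k):
    """Ridge estimate (X'X + kI)^{-1} X'y for two predictors; k = 0 gives least squares."""
    shifted = [[xtx[0][0] + k, xtx[0][1]],
               [xtx[1][0], xtx[1][1] + k]]
    return solve_2x2(shifted, xty)


def sq_norm(b):
    """Squared length b'b of a coefficient vector."""
    return sum(v * v for v in b)


# Hypothetical correlation-form inputs: two predictors correlated at 0.99.
xtx = [[1.0, 0.99], [0.99, 1.0]]
xty = [0.80, 0.75]

ols = ridge_2x2(xtx, xty, 0.0)      # least squares: large coefficients of opposite sign
ridge = ridge_2x2(xtx, xty, 0.05)   # ridge: much shorter coefficient vector

print("least squares:", ols, "squared norm:", sq_norm(ols))
print("ridge k=0.05: ", ridge, "squared norm:", sq_norm(ridge))
```

Even a small k reduces the length of the coefficient vector considerably here, which is the behavior the ridge estimator is intended to produce for ill-conditioned data.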

In practice, much of ridge regression centers

around estimating the best biasing parameter.

Hoerl and

Kennard considered this problem in their 1970 papers, and

several authors have proposed solutions since then.

Purpose

The purpose of this thesis is to review the rules

currently proposed for determining the biasing parameter.

The theoretical basis for each rule will be given, and the results of a simulation study comparing some of the estimators (Wichern and Churchill, 1978) will be presented.

Solutions considered are those of Hoerl and Kennard (1970A), Mallows (1973), Hemmerle (1975), Hoerl, et al. (1975), McDonald and Galarneau (1975), Hoerl and Kennard (1976), Lawless and Wang (1976), Hocking, et al. (1977), Hemmerle and Brantle (1978), and Obenchain (1978).

Chapter 2

REVIEW OF THE LITERATURE

The effects of multicollinearity are well known, and have been detailed by Farrar and Glauber (1967), Hoerl and Kennard (1970A), Snee (1973), and Mason, et al. (1975). Several authors have also pointed out the prevalence of multicollinearity in real data, and examples may be found in McDonald and Schwing (1973) and in Gorman and Toman (1966).

Computationally, the problem posed by redundant variables is a fundamental one: it is impossible to invert a singular matrix. It is true that in most cases collinearity does not imply true singularity, but for practical purposes nearly singular matrices may produce useless answers.

Hoerl (1962) suggested the application of response surface methodology (Box and Wilson, 1951; Hoerl, 1959) to ill-conditioned regression problems. His ridge estimator was a solution to the Lagrangian problem of minimizing the residual sum of squares for a given estimator norm (Appendix II). It differed from Gaussian regression in having a biasing parameter, k, added to the main diagonal of the correlation matrix. The only restriction on k was that it be positive, a result of theoretical considerations proposed earlier by Hoerl (1959) and later proven by Draper (1963).

This was the basis of ridge regression, but, as

Hoerl remarked in the same (1962) paper, the theory was

incomplete.

In particular, no proof had been offered

that the ridge estimator was a good one in terms of mean

square error.

Hoerl's (1964) review of ridge analysis

did not include ridge regression except in a comment that

more work was needed.

A systematic development of the method appeared

later in two papers (Hoerl and Kennard, 1970A&B), and

included the following:

(1) rederivation of the ridge estimator, showing it to be of minimum length for a given residual sum of squares (Appendix II),

(2) proof that there exists some ridge estimator having a smaller mean square error than the corresponding least-squares estimator,

(3) a description of a "canonical" form of ridge regression involving transformed variables,

(4) an algorithm for finding a best (in the mean square error sense) estimator for the canonical (generalized) form of ridge regression, and

(5) the graphical ridge trace.

Thus the 1970 papers of Hoerl and Kennard presented both the theoretical basis for ridge regression and considerable extensions of the technique. The methodology used by McDonald and Schwing (1973) in studying air pollution was precisely that given in the second of the Hoerl-Kennard papers.

Since the appearance of ridge regression, there

has been interest in its relationship to other biased

estimators.

Marquardt (1970) showed that a number of properties are shared by ridge regression and his "fractional rank" (Appendix I) generalization of the principal components estimator. Goldstein and Smith (1974), extending the work of Lindley and Smith (1972), found that the ridge solution actually approximates the fractional rank solution.

Assessments of the relative power of ridge and other estimators have been made by Mayer and Wilke (1973) and by Hocking, et al. (1976). Mayer and Wilke derived ordinary ridge estimators and shrunken estimators (Stein, 1960; Sclove, 1968) as minimum norm (for a given residual sum of squares) estimators in the class of linear transforms of least squares estimators. They found that shrunken estimators had minimum variance among those studied.

Hocking, et al. carried the generalization still further, finding a class of estimators that included principal components estimators and generalized ridge regression as well as shrunken estimators and ordinary ridge regression. They concluded the generalized ridge was most effective at minimizing the mean square error.

The determination of an optimal biasing parameter

was considered by Hoerl and Kennard in their fundamental

work.

Their solution was iterative and involved only the

generalized ridge estimator.

Hemmerle (1975) found that

iteration was not necessary, and Hemmerle and Brantle

(1978) developed an alternative solution, again restricted

to generalized ridge regression.

Biasing parameters for the ordinary ridge estimator have been considered by Mallows (1973), Farebrother (1975), Hoerl, et al. (1975), Hoerl and Kennard (1976), Lawless and Wang (1976), McDonald and Galarneau (1975), Hocking, et al. (1976), Obenchain (1978), and Wichern and Churchill (1978).

In the following sections, some of the solutions

introduced above will be examined in detail.

Chapter 3

OPTIMIZATION AND GENERALIZED RIDGE REGRESSION

The Criteria for Optimization

The simplest form of the ridge estimator is

$$\beta^* = (X'X + kI)^{-1}X'y, \qquad (3.1)$$

where $X$ is an $(n \times p)$ matrix of n observations on p predictor variables, $y$ is an $(n \times 1)$ vector of observations on the response variable, $k$ is a constant, $I$ is a $(p \times p)$ identity matrix, and $\beta^*$ is a $(p \times 1)$ vector of estimators.

The k in (3.1) can in theory take on any positive value; therefore (3.1) has infinitely many possible solutions.

Since these will not all be equally useful to a

researcher using ridge regression, some means of choosing

a value for k is needed.

This in turn means that the

criteria by which a solution is considered to be a good

one must be established.

Hoerl (1962) and, later, Hoerl and Kennard

(1970A&B) favored stability as a criterion.

Stability, in the sense of Hoerl and Kennard, meant the extent to which changes in k affect $\beta^*$; an estimator is stable or unstable in this sense depending on whether the absolute values of the individual terms of $d\beta^*/dk$ are small or large. The values which $\beta^*$ takes on as k changes are referred to as the ridge trace.

Vinod (1976) objected to this concept of

stability because a strict application of it to any

problem would lead to the conclusion that the optimal k

has an infinitely large value.

He proposed a modified ridge trace with the k axis replaced by an m-axis defined as

$$m = p - \sum_{i=1}^{p} \lambda_i/(\lambda_i + k), \qquad (3.2)$$

where p is as before the number of independent variables and $\lambda_i$ is the ith eigenvalue of X'X. The advantage of this modification is that the point of maximal stability for each term $d(\beta^*)_i/dm$ is at some m which corresponds to a finite k.

Since $d\beta^*/dm$ is a vector, it is of no immediate use as a test statistic. Vinod "scalarized" it through a statistic he termed the Index of Stability of Relative Magnitudes (ISRM):

$$\mathrm{ISRM} = \sum_{i=1}^{p}\left(\frac{p\,(\lambda_i/(\lambda_i+k))^2}{\bar{S}\lambda_i} - 1\right)^2, \qquad (3.3)$$

where

$$\bar{S} = \sum_{i=1}^{p} \lambda_i/(\lambda_i+k)^2.$$

The ISRM is zero for orthogonal predictor systems, nonzero for nonorthogonal systems, and large in absolute value for seriously nonorthogonal systems. To some extent, it indicates how much a given model resembles an orthogonal system.
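The two quantities in (3.2) and (3.3) depend only on the eigenvalues of X'X and on k, so they are easy to tabulate. The sketch below is a minimal illustration under that reading of the formulas; the eigenvalue sets and the function names `m_scale` and `isrm` are hypothetical choices made for this example.

```python
def m_scale(lams, k):
    """Vinod's m-axis value (3.2): m = p - sum(lam_i / (lam_i + k))."""
    return len(lams) - sum(lam / (lam + k) for lam in lams)


def isrm(lams, k):
    """Index of Stability of Relative Magnitudes (3.3) for eigenvalues lams."""
    p = len(lams)
    s_bar = sum(lam / (lam + k) ** 2 for lam in lams)
    return sum((p * (lam / (lam + k)) ** 2 / (s_bar * lam) - 1.0) ** 2
               for lam in lams)


orthogonal = [1.0, 1.0, 1.0]    # eigenvalues of an identity correlation matrix
collinear = [2.7, 0.25, 0.05]   # hypothetical ill-conditioned eigenvalues (sum = p = 3)

for k in (0.0, 0.05, 0.5):
    print(k, m_scale(collinear, k), isrm(orthogonal, k), isrm(collinear, k))
```

For the orthogonal system every term of the sum vanishes, so the index is zero at any k, while the collinear system gives a strictly positive value.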

Various considerations of stability or sensitivity

in the estimator have been closely associated with ridge

regression from the inception of the technique.

However,

stability, whether as defined by Hoerl (1962), Hoerl and

Kennard (1970A), or Vinod (1976) cannot be satisfactorily

equated with any statistical concept outside ridge regression.

For this reason, there is interest in finding

other criteria by which a ridge solution can be considered

optimal.

A widely accepted basis for evaluating estimators

is their mean square error.

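In the case of ridge regression, investigators have considered both the ordinary mean square error defined as

$$L_1^2 = (\beta^* - \beta)'(\beta^* - \beta), \qquad (3.4)$$

where $\beta^*$ is the ridge estimator and $\beta$ is equal to the expected value of the least-squares estimator, and the criterion of Stein (1960), defined as

$$L_2^2 = (\beta^* - \beta)'X'X(\beta^* - \beta). \qquad (3.5)$$

The best ridge estimators in the mean square error sense are those which minimize one of the expected values

$$E(L_1^2) = E((\beta^* - \beta)'(\beta^* - \beta)) \qquad (3.6)$$

or

$$E(L_2^2) = E((\beta^* - \beta)'X'X(\beta^* - \beta)). \qquad (3.7)$$

With some algebra (Appendix III), (3.6) can be expressed either as

$$E(L_1^2) = \sigma^2\,\mathrm{Trace}((X'X + kI)^{-1}X'X(X'X + kI)^{-1}) + k^2\beta'(X'X + kI)^{-2}\beta \qquad (3.8)$$

or as

$$E(L_1^2) = \sum_{i=1}^{p} (\sigma^2\lambda_i + k^2\alpha_i^2)/(\lambda_i + k)^2, \qquad (3.9)$$

where $\sigma^2$ is the residual mean square error and $\alpha_i$ is the ith term of $\alpha = Q'\beta$, $Q$ being the matrix of eigenvectors of X'X such that $Q'X'XQ = \Lambda$, the matrix of eigenvalues.

Similarly, (3.7) can be written either as

$$E(L_2^2) = \sigma^2\,\mathrm{Trace}(X'X(X'X + kI)^{-1}X'X(X'X + kI)^{-1}) + k^2\beta'(X'X + kI)^{-1}X'X(X'X + kI)^{-1}\beta \qquad (3.10)$$

or as

$$E(L_2^2) = \sum_{i=1}^{p} (\sigma^2\lambda_i^2 + \lambda_i k^2\alpha_i^2)/(\lambda_i + k)^2. \qquad (3.11)$$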
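The transformed forms (3.9) and (3.11) can be evaluated directly once the eigenvalues, the transformed coefficients, and the error variance are specified. The following sketch assumes hypothetical values for all three (in practice they are unknown) and shows how both criteria change with k; the function names are illustrative only.

```python
def expected_l1_sq(lams, alphas, sigma2, k):
    """E(L1^2) as in (3.9): sum of (sigma^2*lam_i + k^2*alpha_i^2) / (lam_i + k)^2."""
    return sum((sigma2 * lam + k * k * a * a) / (lam + k) ** 2
               for lam, a in zip(lams, alphas))


def expected_l2_sq(lams, alphas, sigma2, k):
    """E(L2^2) as in (3.11): each term of (3.9) weighted by lam_i."""
    return sum(lam * (sigma2 * lam + k * k * a * a) / (lam + k) ** 2
               for lam, a in zip(lams, alphas))


# Hypothetical two-predictor system with one small eigenvalue.
lams = [1.9, 0.1]
alphas = [1.0, 0.5]
sigma2 = 0.1

for k in (0.0, 0.05, 0.2, 1.0):
    print(k, expected_l1_sq(lams, alphas, sigma2, k),
          expected_l2_sq(lams, alphas, sigma2, k))
```

At k = 0 the two functions reduce to the least squares values, $\sigma^2\sum 1/\lambda_i$ and $p\sigma^2$ respectively, which gives a simple check on the formulas.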

Deriving an Optimum for Generalized Ridge Regression

A comparison of (3.8) and (3.10) with (3.9) and (3.11) shows that (3.9) and (3.11) are algebraically simpler than the other two. For this reason, it is the practice among researchers investigating optima for ridge regression to use these simpler, transformed forms. The X matrix of predictor variables is transformed by postmultiplying it by Q (see Appendix III), and the product XQ is then substituted into (3.1) in place of X. The resulting estimator is

$$\alpha^* = (Q'X'XQ + kI)^{-1}Q'X'y \qquad (3.12)$$

or, equivalently,

$$\alpha^* = (\Lambda + kI)^{-1}Q'X'y, \qquad (3.12)$$

where $\Lambda$ is the diagonal matrix of the eigenvalues of X'X. Let $\hat{\alpha}$ be the value of $\alpha^*$ which corresponds to $k = 0$, and let $\alpha = E(\hat{\alpha})$. Then the relationships between $\alpha$ and $\beta$, $\hat{\alpha}$ and $\hat{\beta}$, and $\alpha^*$ and $\beta^*$ are $\alpha = Q'\beta$, $\hat{\alpha} = Q'\hat{\beta}$, and $\alpha^* = Q'\beta^*$. This is a more general case of principal components regression (Appendix I).

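The optima for $E(L_1^2)$ and $E(L_2^2)$ are found by differentiating (3.9) and (3.11) with respect to k and setting the results equal to zero. Thus

$$\frac{1}{2}\frac{dE(L_1^2)}{dk} = \sum_{i=1}^{p} (\lambda_i k\alpha_i^2 - \lambda_i\sigma^2)/(\lambda_i + k)^3 = 0 \qquad (3.13)$$

and

$$\frac{1}{2}\frac{dE(L_2^2)}{dk} = \sum_{i=1}^{p} (\lambda_i^2 k\alpha_i^2 - \lambda_i^2\sigma^2)/(\lambda_i + k)^3 = 0 \qquad (3.14)$$

determine the optima.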

Although (3.13) and (3.14) optimize k on the basis of the $L_1^2$ and $L_2^2$ criteria, they are not analytic solutions for k, nor can they be solved analytically for the general case. The only way to find a general analytic solution would be to solve each term of (3.13) and (3.14) separately for k, which would mean that in general the $k_i$ for the ith term would not be the same as the $k_j$ for the jth term.

Let $K$ be a diagonal matrix whose ith nonzero entry, $k_i$, is positive but not necessarily the same as its jth nonzero entry, $k_j$. The generalized ridge estimator is

$$\alpha^* = (\Lambda + K)^{-1}Q'X'y. \qquad (3.15)$$

The generalized ridge estimator differs from the ordinary ridge estimator in two respects. First, the requirement that $k_i = k_j$ satisfies the conditions for a Lagrangian system which minimizes the norm of a ridge estimator for a given residual sum of squares (Appendix II). The generalized ridge estimator is thus not of minimum norm. Secondly, it is not generally the case that $Q'KQ = K$. Therefore, it is not true that

$$Q\alpha^* = Q(\Lambda + K)^{-1}Q'X'y \qquad (3.16)$$

is the same as

$$Q\alpha^* = Q(\Lambda + Q'KQ)^{-1}Q'X'y. \qquad (3.17)$$

This means that the generalized ridge estimator which optimizes $E((\alpha^* - \alpha)'(\alpha^* - \alpha))$ will not in general optimize $E((Q\alpha^* - Q\alpha)'(Q\alpha^* - Q\alpha))$. (See Appendix III.) Consequently, the $E(L_1^2)$ and $E(L_2^2)$ criteria are defined, for the generalized ridge estimator, to be

$$E(L_1^2) = E((\alpha^* - \alpha)'(\alpha^* - \alpha)) \qquad (3.18)$$

and

$$E(L_2^2) = E((\alpha^* - \alpha)'\Lambda(\alpha^* - \alpha)). \qquad (3.19)$$

In practice, the same definitions are used in ordinary ridge regression, as well. The reason for this is that the data are rescaled so that X'X is a correlation matrix before undergoing a principal components transformation. Transforming the rescaled variates is not a linear transformation of the original data.

To solve (3.18) and (3.19) we have, from (3.13) and (3.14),

$$(\lambda_i k_i\alpha_i^2 - \lambda_i\sigma^2) = 0 \qquad (3.20)$$

and

$$(\lambda_i^2 k_i\alpha_i^2 - \lambda_i^2\sigma^2) = 0. \qquad (3.21)$$

For both, the solution is

$$k_i = \sigma^2/\alpha_i^2. \qquad (3.22)$$
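The optimum in (3.22) can be checked numerically on a single term of the transformed mean square error. The sketch below assumes hypothetical values for $\lambda_i$, $\alpha_i$, and $\sigma^2$, and compares the term at $k_i = \sigma^2/\alpha_i^2$ with its value at nearby points; `component_mse` is an illustrative name.

```python
def component_mse(lam, alpha, sigma2, k):
    """One term of the transformed mean square error: (sigma^2*lam + k^2*alpha^2) / (lam + k)^2."""
    return (sigma2 * lam + k * k * alpha * alpha) / (lam + k) ** 2


# Hypothetical values for a single ill-conditioned component.
lam, alpha, sigma2 = 0.1, 0.5, 0.1

k_opt = sigma2 / alpha ** 2  # the optimum k_i = sigma^2 / alpha_i^2
at_opt = component_mse(lam, alpha, sigma2, k_opt)

print("k_opt =", k_opt, "term at optimum =", at_opt)
for factor in (0.5, 0.9, 1.1, 2.0):
    print(factor * k_opt, component_mse(lam, alpha, sigma2, factor * k_opt))
```

Substituting $k_i = \sigma^2/\alpha_i^2$ into the term gives $\sigma^2\alpha_i^2/(\lambda_i\alpha_i^2 + \sigma^2)$, which is always smaller than the least squares value $\sigma^2/\lambda_i$.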


Estimating the Ridge Optimum

Equation (3.22) is expressed in terms of unknown parameters and therefore has to be estimated. The obvious thing would be to replace $\sigma^2$ by $\hat{\sigma}^2$ and $\alpha_i^2$ by $\hat{\alpha}_i^2$, the least squares estimates, but ill-conditioning will tend to make $\hat{\alpha}_i^2$ larger than $\alpha_i^2$.

For a more satisfactory solution, Hoerl and Kennard (1970A) suggested the following iterative procedure:

(1) Estimate $k_i$ using $k_i = \hat{\sigma}^2/\hat{\alpha}_i^2$.

(2) Compute $\alpha_i^* = (\lambda_i + k_i)^{-1}(Q'X'y)_i$.

(3) Compute $k_i = \hat{\sigma}^2/(\alpha_i^*)^2$.

(4) Repeat (2) and (3) until $\alpha_i^*$ and $k_i$ stabilize, i.e., until the iteration no longer changes them.
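The four steps above can be sketched for a single component as follows. This is a minimal illustration, not the authors' program: the stopping tolerance, the divergence cutoff of 1e12, the numerical inputs, and the function name `hoerl_kennard_iterate` are all assumptions made for the example.

```python
def hoerl_kennard_iterate(lam, c, sigma2_hat, tol=1e-12, max_iter=1000):
    """Iterate steps (1)-(4) for one component.

    lam        -- eigenvalue lambda_i
    c          -- ith element of Q'X'y (assumed nonzero)
    sigma2_hat -- least squares estimate of the error variance
    Returns (k_i, alpha_i*) on convergence, or None if k_i grows without bound.
    """
    alpha_star = c / lam                      # least squares value (k_i = 0)
    k = sigma2_hat / alpha_star ** 2          # step (1)
    for _ in range(max_iter):
        alpha_star = c / (lam + k)            # step (2)
        k_new = sigma2_hat / alpha_star ** 2  # step (3)
        if abs(k_new - k) <= tol * max(1.0, abs(k)):
            return k_new, alpha_star          # step (4): values have stabilized
        k = k_new
        if k > 1e12:                          # arbitrary cutoff for divergence
            return None
    return None


# Hypothetical component for which the iteration settles down.
converged = hoerl_kennard_iterate(lam=0.5, c=1.0, sigma2_hat=0.2)
# Hypothetical component for which k_i increases indefinitely.
diverged = hoerl_kennard_iterate(lam=0.1, c=0.05, sigma2_hat=0.2)

print("converged:", converged)
print("diverged: ", diverged)
```

The second call illustrates that the iteration need not converge; in that case $k_i$ grows and $\alpha_i^*$ is driven toward zero.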

Note that the process does not attempt to reestimate $\hat{\sigma}^2$. The reason for this is that, unconditionally, the maximum likelihood estimate of $\sigma^2$ is $\hat{\sigma}^2$, and because the obvious re-estimation of $\hat{\sigma}^2$ around $\alpha^*$ will always exceed the least squares estimate. (A brief discussion of estimating $\sigma^2$ around the ridge estimator may be found in Obenchain (1978).)

As it happens, the convergence points of $k_i$ and $\alpha_i^*$ can be determined without actually iterating. Hemmerle (1975) first proved this (Appendix IV). Rather than his algebraic proof, a more intuitive approach is presented here.

If the iteration converges somewhere, then

$$\alpha_i^* = (\lambda_i + k_i)^{-1}(Q'X'y)_i \qquad (3.23)$$

and

$$k_i = \hat{\sigma}^2/(\alpha_i^*)^2 \qquad (3.24)$$

must have the same values for $k_i$ and $\alpha_i^*$ at the convergence point. The simplest way to find this point is to determine where they do have the same values. Squaring (3.23) and eliminating $(\alpha_i^*)^2$ from both equations gives

$$\hat{\sigma}^2 k_i^2 + (2\lambda_i\hat{\sigma}^2 - (Q'X'y)_i^2)k_i + \lambda_i^2\hat{\sigma}^2 = 0. \qquad (3.25)$$

From the quadratic formula, the solution to this is

$$k_i = \frac{(Q'X'y)_i^2 - 2\lambda_i\hat{\sigma}^2 \pm \sqrt{(Q'X'y)_i^4 - 4\lambda_i\hat{\sigma}^2(Q'X'y)_i^2}}{2\hat{\sigma}^2}, \qquad (3.26)$$

which has two possible values. To see which is correct, consider the curves defined by

$$C_1:\ (\alpha_i^*)^2 = \hat{\sigma}^2/k_i, \quad k_i > 0 \qquad (3.27)$$

and

$$C_2:\ (\alpha_i^*)^2 = (\lambda_i + k_i)^{-2}(Q'X'y)_i^2, \quad k_i > 0. \qquad (3.28)$$

The following are true:

(1) As $k_i$ approaches zero, $C_1 > C_2$, and

(2) as $k_i$ goes to infinity, $C_1 > C_2$.

Figure A illustrates the case where $\hat{\sigma}^2\lambda_i^{-1}\hat{\alpha}_i^{-2} < 1/4$. There are two points of intersection, designated k' and k", between $C_1$ and $C_2$. For this case the following is also true:

(3) for $k' < k_i < k''$, $C_1 < C_2$.

The second derivative with respect to k of the difference between the inverses of $C_1$ and $C_2$ is a constant. Along with relationships (1) and (2), this implies (3).

The Hoerl-Kennard iterative procedure can be expressed as two recursive formulas:

$$k_i := \hat{\sigma}^2/(\alpha_i^*)^2 \qquad (3.29)$$

and

$$(\alpha_i^*)^2 := (\lambda_i + k_i)^{-2}(Q'X'y)_i^2, \qquad (3.30)$$

where the ":=" indicates a computation rather than an identity. Then (3.29) represents a horizontal movement from $C_2$ to $C_1$, while (3.30) represents a vertical movement from $C_1$ to $C_2$. Note that this is true for all positive $k_i$.

This iterative process specified by (3.29) and (3.30) can be initiated at any positive $k_i$. If it is initiated precisely at k' or k" there will of course be no change with iteration. If the starting point is at some $k_i < k''$ there will be convergence to k', either from the left or from the right. Initial values greater than k" will cause $k_i$ to increase indefinitely.

Although (3.26) has two possible solutions, only one of the two is associated with convergence. This implies that the minus sign should always be chosen in (3.26).
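The closed form with the minus sign can be compared against a direct iteration of the two recursive formulas. The sketch below assumes hypothetical values for $\lambda_i$, $(Q'X'y)_i$, and $\hat{\sigma}^2$; the function name `convergence_point` and the fixed count of 200 iterations are choices made for illustration.

```python
import math


def convergence_point(lam, c, sigma2_hat):
    """Smaller root of sigma^2 k^2 + (2 lam sigma^2 - c^2) k + lam^2 sigma^2 = 0.

    c is the ith element of Q'X'y. Returns None when the discriminant is
    negative, i.e. when the two curves do not intersect.
    """
    disc = c ** 4 - 4.0 * lam * sigma2_hat * c ** 2
    if disc < 0.0:
        return None
    return (c ** 2 - 2.0 * lam * sigma2_hat - math.sqrt(disc)) / (2.0 * sigma2_hat)


# Hypothetical component: sigma^2 / (lam * alpha_hat^2) = 0.1, below 1/4.
lam, c, sigma2_hat = 0.5, 1.0, 0.2
k_closed = convergence_point(lam, c, sigma2_hat)

# Direct iteration of the two recursive formulas, started at the least squares estimate.
k_iter = sigma2_hat / (c / lam) ** 2
for _ in range(200):
    alpha_star_sq = c ** 2 / (lam + k_iter) ** 2
    k_iter = sigma2_hat / alpha_star_sq

print("closed form:", k_closed)
print("iterated:   ", k_iter)
print("no intersection:", convergence_point(0.1, 0.05, 0.2))
```

Under these assumed inputs the iterated value and the closed form agree, which is the single-step reduction described in the text.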

Figure B shows the case where $\hat{\sigma}^2\lambda_i^{-1}\hat{\alpha}_i^{-2} = 1/4$. Convergence is from the left only, and $C_1$ and $C_2$ intersect in only one point.

For Figure C, $\hat{\sigma}^2\lambda_i^{-1}\hat{\alpha}_i^{-2} > 1/4$ and there is no solution. If an iteration is attempted, $k_i$ increases indefinitely and $\alpha_i^*$ goes to zero.

Appendix IV shows the equivalence of these results with those of Hemmerle.

Figure A: Hoerl-Kennard iteration on the ith term, $\alpha_i^*$, of a generalized ridge estimator. $\hat{\sigma}^2\lambda_i^{-1}\hat{\alpha}_i^{-2} < 1/4$.

Figure B: Hoerl-Kennard iteration on the ith term, $\alpha_i^*$, of a generalized ridge estimator. $\hat{\sigma}^2\lambda_i^{-1}\hat{\alpha}_i^{-2} = 1/4$.

Figure C: Hoerl-Kennard iteration on the ith term, $\alpha_i^*$, of a generalized ridge estimator. $\hat{\sigma}^2\lambda_i^{-1}\hat{\alpha}_i^{-2} > 1/4$.

An Alternative Optimum for Generalized Ridge Regression

The iteration of Hoerl and Kennard is based on the idea of finding a theoretical optimum ($k_i = \sigma^2/\alpha_i^2$) and then estimating that optimum. An alternative method, given by Hemmerle and Brantle (1978), is to find an estimator for the optimization criterion and then optimize the estimator.

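The following derivation is based on the paper of Hemmerle and Brantle (1978): From (3.9),

$$E((\alpha^* - \alpha)'(\alpha^* - \alpha)) = \sum_{i=1}^{p} (\lambda_i\sigma^2 + k_i^2\alpha_i^2)/(\lambda_i + k_i)^2. \qquad (3.31)$$

Since $\alpha_i^* = (\lambda_i/(\lambda_i + k_i))\hat{\alpha}_i$, then

$$E(\alpha_i^* - \hat{\alpha}_i)^2 = E((1 - \lambda_i/(\lambda_i + k_i))\hat{\alpha}_i)^2 = (k_i/(\lambda_i + k_i))^2(\sigma^2/\lambda_i + \alpha_i^2);$$

therefore

$$E((\alpha^* - \hat{\alpha})'(\alpha^* - \hat{\alpha})) = \sum_{i=1}^{p} k_i^2(\sigma^2/\lambda_i + \alpha_i^2)/(\lambda_i + k_i)^2. \qquad (3.32)$$

Combining (3.32) and (3.31),

$$E((\alpha^* - \alpha)'(\alpha^* - \alpha)) = E((\alpha^* - \hat{\alpha})'(\alpha^* - \hat{\alpha})) + \sigma^2\sum_{i=1}^{p} (\lambda_i - k_i)/(\lambda_i(\lambda_i + k_i)). \qquad (3.33)$$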

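Recalling that the $\lambda_i$ are diagonal elements of $\Lambda$, $E(L_1^2)$ can be estimated by

$$\hat{L}_1^2 = (\alpha^* - \hat{\alpha})'(\alpha^* - \hat{\alpha}) + \hat{\sigma}^2\,\mathrm{Trace}(\Lambda^{-1}(\Lambda - K)(\Lambda + K)^{-1}). \qquad (3.34)$$

Similarly,

$$E((\alpha^* - \alpha)'\Lambda(\alpha^* - \alpha)) = E((\alpha^* - \hat{\alpha})'\Lambda(\alpha^* - \hat{\alpha})) + \sigma^2\,\mathrm{Trace}((\Lambda - K)(\Lambda + K)^{-1})$$

so that

$$\hat{L}_2^2 = (\alpha^* - \hat{\alpha})'\Lambda(\alpha^* - \hat{\alpha}) + \hat{\sigma}^2\,\mathrm{Trace}((\Lambda - K)(\Lambda + K)^{-1}) \qquad (3.35)$$

is an unbiased estimator of $E(L_2^2)$.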

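Define

$$v_i = \lambda_i/(\lambda_i + k_i) \qquad (3.36)$$

so that $\alpha_i^* = \hat{\alpha}_i v_i$. The ith component, $M_i$, of (3.34) may be written as

$$M_i = \hat{\alpha}_i^2(1 - v_i)^2 + (\hat{\sigma}^2/\lambda_i)(2v_i - 1), \qquad (3.37)$$

where

$$\hat{L}_1^2 = \sum_{i=1}^{p} M_i$$

and

$$\hat{L}_2^2 = \sum_{i=1}^{p} \lambda_i M_i.$$

To minimize $\hat{L}_1^2$ and $\hat{L}_2^2$, differentiate $M_i$ with respect to $v_i$:

$$dM_i/dv_i = -2\hat{\alpha}_i^2(1 - v_i) + 2\hat{\sigma}^2/\lambda_i = 0 \qquad (3.38)$$

or, equivalently,

$$v_i = 1 - \hat{\sigma}^2/(\lambda_i\hat{\alpha}_i^2). \qquad (3.39)$$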

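Since $v_i$ as defined in (3.36) must lie between zero and one, it follows that (3.39) cannot be used for optimization if $\hat{\sigma}^2/(\lambda_i\hat{\alpha}_i^2) > 1$. However, note that $M_i$ is quadratic for $v_i$ and so increases monotonically as $v_i$ moves away from the minimum point. Since the object is to minimize $M_i$, it follows that $v_i$ should be as close as possible to the optimum point. Hence the solution is

$$v_i = \begin{cases} 1 - \hat{\sigma}^2/(\lambda_i\hat{\alpha}_i^2), & \hat{\sigma}^2/(\lambda_i\hat{\alpha}_i^2) \le 1 \\ 0, & \hat{\sigma}^2/(\lambda_i\hat{\alpha}_i^2) > 1 \end{cases} \qquad (3.40)$$

which corresponds to

$$\alpha_i^* = \begin{cases} \hat{\alpha}_i(1 - \hat{\sigma}^2/(\lambda_i\hat{\alpha}_i^2)), & \hat{\sigma}^2/(\lambda_i\hat{\alpha}_i^2) \le 1 \\ 0, & \hat{\sigma}^2/(\lambda_i\hat{\alpha}_i^2) > 1. \end{cases} \qquad (3.41)$$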
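The rule in (3.40) and (3.41) amounts to shrinking each least squares component by a factor that is truncated at zero. A minimal sketch, with hypothetical inputs and the illustrative name `hemmerle_brantle`, might read:

```python
def hemmerle_brantle(alpha_hat, lam, sigma2_hat):
    """Return (v_i, alpha_i*) from the truncated shrinkage rule for one component.

    alpha_hat  -- least squares estimate of the transformed coefficient (nonzero)
    lam        -- corresponding eigenvalue of X'X
    sigma2_hat -- least squares estimate of the error variance
    """
    ratio = sigma2_hat / (lam * alpha_hat ** 2)
    v = 1.0 - ratio if ratio <= 1.0 else 0.0   # shrinkage factor, truncated at zero
    return v, alpha_hat * v                    # alpha_i* = alpha_hat_i * v_i


# Hypothetical well-determined component: ratio = 0.1, mild shrinkage.
print(hemmerle_brantle(2.0, 0.5, 0.2))
# Hypothetical poorly determined component: ratio = 8, estimate set to zero.
print(hemmerle_brantle(0.5, 0.1, 0.2))
```

Unlike the Hoerl-Kennard iteration, this rule requires no iteration and always returns a value, with the poorly determined components simply dropped.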

The case where $\hat{\sigma}^2/(\lambda_i\hat{\alpha}_i^2) > 1$ is similar to the case where $\alpha_i^*$ is constrained for other reasons, such as taking into account prior information about $\alpha_i$. Assuming the constraint excludes the optimum from the permissible solution region, $\alpha_i^*$ will lie on the boundary. For example, if $\alpha_i^*$ is constrained so that $\alpha_i^* \ge A$ and if $\hat{\alpha}_i(1 - \hat{\sigma}^2/(\lambda_i\hat{\alpha}_i^2)) < A$, then the solution will be $\alpha_i^* = A$.

In practice, it is unlikely that constraints will be applied directly to the $\alpha_i^*$, but it is quite possible that the $\beta_i^*$ will be constrained, since there could easily be prior information about the $\beta_i$ (recall that the $\beta_i$ are related to the nontransformed predictor variables).

Optimization when the $\beta_i^*$ are constrained is difficult, however, because inequalities become complicated under linear transformations. A constraint on one $\beta_i^*$ will transform into constraints on several $\alpha_i^*$, with the possible solutions for any one variable partially dependent on what solutions are chosen for the other variables. Hemmerle and Brantle (1978) considered this problem and proposed a quadratic programming algorithm as a solution. The details are given in their paper.

Chapter 4

OPTIMIZING THE ORDINARY RIDGE ESTIMATOR

The preceding chapter motivated the generalized ridge estimator through the impossibility of obtaining algebraic solutions to (3.13) or (3.14). Nonetheless, the ordinary ridge estimator is considered useful for certain types of problems, so optimizing it is of some interest. A number of solutions have been proposed, and some of them will be considered in this chapter.

Ridge Solutions for the $E(L_1^2)$ Criterion

The condition for minimizing $E(L_1^2)$ is given by equation (3.13).

An algebraic solution for (3.13) does not exist, but it is possible to solve it numerically. The obvious choice would be Newton-Raphson iteration. Recall that (3.13) is

$$f(k) = \sum_{i=1}^{p} (\lambda_i k\alpha_i^2 - \lambda_i\sigma^2)/(\lambda_i + k)^3 = 0.$$

Then

$$f'(k) = \sum_{i=1}^{p} (3\sigma^2\lambda_i + \lambda_i\alpha_i^2(\lambda_i - 2k))/(\lambda_i + k)^4, \qquad (4.1)$$

and the solution is

$$k_{j+1} = k_j - f(k_j)/f'(k_j), \qquad (4.2)$$

where $k_j$ is the value of k at the jth iteration and $k_1 = 0$. Iteration continues until convergence is achieved.
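A minimal sketch of this Newton-Raphson iteration is given below. It treats the eigenvalues, the transformed coefficients, and the error variance as known, which they are not in practice; the numerical values, the tolerance, and the function names are assumptions made for illustration.

```python
def f(lams, alphas, sigma2, k):
    """Left side of the optimality condition: sum of lam_i (k alpha_i^2 - sigma^2) / (lam_i + k)^3."""
    return sum(lam * (k * a * a - sigma2) / (lam + k) ** 3
               for lam, a in zip(lams, alphas))


def f_prime(lams, alphas, sigma2, k):
    """Derivative of f with respect to k, as in (4.1)."""
    return sum((3.0 * sigma2 * lam + lam * a * a * (lam - 2.0 * k)) / (lam + k) ** 4
               for lam, a in zip(lams, alphas))


def newton_raphson_k(lams, alphas, sigma2, k_start=0.0, tol=1e-12, max_iter=100):
    """Iterate k <- k - f(k)/f'(k) from k_start until the step is below tol."""
    k = k_start
    for _ in range(max_iter):
        step = f(lams, alphas, sigma2, k) / f_prime(lams, alphas, sigma2, k)
        k -= step
        if abs(step) < tol:
            return k
    raise RuntimeError("Newton-Raphson did not converge")


# Hypothetical two-predictor system with one small eigenvalue.
lams = [1.9, 0.1]
alphas = [1.0, 0.5]
sigma2 = 0.1

k_opt = newton_raphson_k(lams, alphas, sigma2)
print("k =", k_opt, "f(k) =", f(lams, alphas, sigma2, k_opt))
```

For these inputs the root lies between $\sigma^2/\alpha_{max}^2$ and $\sigma^2/\alpha_{min}^2$. Starting values far from the root are not guaranteed to converge, since f'(k) changes sign for large k.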

The difficulty with this solution is that it is time-consuming. Since the $\alpha_i$ must be estimated, and since $\hat{\alpha}_i$ has too large an absolute value for ill-conditioned data, the Newton-Raphson iteration must be nested within the framework of a Hoerl-Kennard iteration, which naturally involves a great deal of computation. For this reason the solution has not been widely used. Dempster, et al. (1977) have raised other objections to its use, but not in sufficient detail to permit an evaluation of their merit.

In contrast to this doubly iterative procedure, a solution proposed by Wichern and Churchill (1978) is extremely simple but admittedly not optimal. As was pointed out in Hoerl and Kennard (1970A), (3.13) is negative for $k < \sigma^2/(\alpha_{max})^2$, where $|\alpha_{max}|$ is the largest of the $|\alpha_i|$. Since this is so, and since (3.13) is negative from $k = 0$ up to the point of minimization, a solution based on $k = \sigma^2/(\alpha_{max})^2$ will have a smaller mean square error than the least squares solution. This suggests

$$k = \hat{\sigma}^2/(\hat{\alpha}_{max})^2 \qquad (4.3)$$

as a possible biasing parameter. Since the mean square error will be minimized by $\sigma^2/(\alpha_{max})^2 < k < \sigma^2/(\alpha_{min})^2$, (4.3) is somewhat conservative, meaning that it does not produce as much bias as would be theoretically optimal.

Wichern and Churchill attribute (4.3) to Hoerl

and Kennard, although Hoerl and Kennard did not actually

suggest it as an estimator.

To avoid confusion, it will

be referred to here as the Hoerl-Kennard conservative

estimator.

Hoerl, Kennard, and Baldwin (1975) found an algebraic solution to (3.13) by assuming, somewhat unrealistically, that X'X is an identity matrix. In that case $\lambda_i = 1$ for all i, so (3.13) gives

$$k\sum_{i=1}^{p}\alpha_i^2 - p\sigma^2 = 0, \qquad (4.4)$$

and then

$$k = p\sigma^2/\sum_{i=1}^{p}\alpha_i^2 = p\sigma^2/\beta'\beta. \qquad (4.5)$$

Another way to obtain this result, also given in Hoerl, et al. (1975), is to use the harmonic mean of the optimal $k_i$ given by equation (3.22), i.e., $k_i = \sigma^2/\alpha_i^2$. If $k_h$ is the harmonic mean, then

$$1/k_h = (1/p)\sum_{i=1}^{p} 1/k_i = (1/p)\sum_{i=1}^{p} \alpha_i^2/\sigma^2 = (1/(p\sigma^2))\sum_{i=1}^{p}\alpha_i^2 = \beta'\beta/(p\sigma^2). \qquad (4.6)$$

Therefore, $k_h = p\sigma^2/\beta'\beta$.
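The equality between the closed form $p\sigma^2/\sum\alpha_i^2$ and the harmonic mean of the individual optima $\sigma^2/\alpha_i^2$ can be confirmed numerically. The sketch below uses hypothetical values for the $\alpha_i$ and $\sigma^2$; the function names are illustrative.

```python
def hkb_k(alphas, sigma2):
    """Closed form k = p * sigma^2 / sum(alpha_i^2)."""
    return len(alphas) * sigma2 / sum(a * a for a in alphas)


def harmonic_mean(values):
    """Harmonic mean of a list of positive numbers."""
    return len(values) / sum(1.0 / v for v in values)


# Hypothetical transformed coefficients and error variance.
alphas = [1.0, 0.5]
sigma2 = 0.1

k_individual = [sigma2 / (a * a) for a in alphas]  # per-component optima sigma^2 / alpha_i^2

print("closed form:  ", hkb_k(alphas, sigma2))
print("harmonic mean:", harmonic_mean(k_individual))
```

Because $\alpha'\alpha = \beta'\beta$ for an orthogonal Q, the same value is obtained whether the sum of squares is taken over the transformed or the original coefficients.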

Hoerl, et al. proposed that the least-squares estimator should be used for $\beta'\beta$ in (4.4). Later, Hoerl and Kennard (1976) noted that, since $\hat{\beta}'\hat{\beta}$ is not a good estimate of $\beta'\beta$ when the data are ill-conditioned, an iterative process similar to their earlier one (1970A) should be used.

The $E(L_1^2)$ Solution of Hocking, Speed, and Lynn

Hocking, et al. (1977) considered a class of estimators expressible as

$$\alpha^* = B\hat\alpha \tag{4.7}$$

where $\hat\alpha$ is, as before, the transformed least-squares linear estimator, and where $B$ is a (p×p) diagonal matrix. For ridge regression, the ith element of $B$ is

$$b_i = (1 + k_i/\lambda_i)^{-1}, \tag{4.8}$$

which is equivalent to

$$k_i = \lambda_i(1-b_i)/b_i. \tag{4.9}$$

For ordinary ridge regression, $k_i = k$ for every $i$. In other words,

$$\lambda_i(1-b_i)/b_i = \lambda_p(1-b_p)/b_p, \qquad i = 1,\ldots,p-1. \tag{4.10}$$

There is thus a constant ratio between $\lambda_i$ and $b_i/(1-b_i)$.

From this, Hocking, et al. (1977) suggested that the $k_i$ could be combined through a least-squares formula, i.e.,

$$k = \left(\sum_{i=1}^{p}\frac{\lambda_i b_i}{1-b_i}\right)\Big/\left(\sum_{i=1}^{p}\frac{b_i^2}{(1-b_i)^2}\right). \tag{4.11}$$

This is derived from setting $\lambda_i$ as the dependent variable and $b_i/(1-b_i)$ as the independent variable. It would be equally feasible to do the reverse, obtaining

$$k = \left(\sum_{i=1}^{p}\frac{\lambda_i b_i}{1-b_i}\right)\Big/\sum_{i=1}^{p}\lambda_i^2. \tag{4.12}$$

It is necessary to determine values for the $b_i$ before (4.11) can be evaluated. Hocking, et al. followed the procedure of Hoerl, et al. (1975) and used the individual optima of (3.22): $k_i = \sigma^2/\alpha_i^2$. This can also be given as $k_i = \lambda_i\sigma^2/\lambda_i\alpha_i^2$, so, in accord with (4.9),

$$(1-b_i)/b_i = \sigma^2/(\lambda_i\alpha_i^2). \tag{4.13}$$

Hence (4.11) and (4.12) become, respectively,

$$k = \left(\sum_{i=1}^{p}\lambda_i^2\alpha_i^2/\sigma^2\right)\Big/\left(\sum_{i=1}^{p}\lambda_i^2\alpha_i^4/\sigma^4\right) = \sigma^2\left(\sum_{i=1}^{p}\lambda_i^2\alpha_i^2\right)\Big/\left(\sum_{i=1}^{p}\lambda_i^2\alpha_i^4\right) \tag{4.14}$$

and

$$k = \frac{1}{\sigma^2}\sum_{i=1}^{p}\lambda_i^2\alpha_i^2\Big/\sum_{i=1}^{p}\lambda_i^2. \tag{4.15}$$

Least-squares estimates could be used for the $\alpha_i$; alternatively an iterative process similar to those of Hoerl and Kennard could be used.
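As a check on the algebra leading from (4.11) to (4.14), the sketch below evaluates $k$ both ways: directly from the closed form, and by first forming the $b_i$ from the individual optima $k_i = \sigma^2/\alpha_i^2$. The eigenvalues, coefficients, and function names are illustrative assumptions, not values or code from Hocking, et al.

```python
def hocking_k(lam, alpha, sigma2):
    """Closed form: sigma^2 * sum(lam^2 alpha^2) / sum(lam^2 alpha^4)."""
    num = sum(l * l * a * a for l, a in zip(lam, alpha))
    den = sum(l * l * a ** 4 for l, a in zip(lam, alpha))
    return sigma2 * num / den


def hocking_k_from_b(lam, alpha, sigma2):
    """Same quantity via b_i = (1 + k_i/lam_i)^-1 with k_i = sigma^2/alpha_i^2."""
    b = [1.0 / (1.0 + (sigma2 / (a * a)) / l) for l, a in zip(lam, alpha)]
    x = [bi / (1.0 - bi) for bi in b]          # regressor b_i / (1 - b_i)
    return sum(l * xi for l, xi in zip(lam, x)) / sum(xi * xi for xi in x)


lam = [1.8, 1.0, 0.2]      # illustrative eigenvalues of X'X (assumed)
alpha = [2.0, -1.0, 0.5]   # illustrative canonical coefficients (assumed)
sigma2 = 0.25              # illustrative error variance (assumed)

k_closed = hocking_k(lam, alpha, sigma2)
k_via_b = hocking_k_from_b(lam, alpha, sigma2)
print(k_closed, k_via_b)
```

When all the $\alpha_i$ are equal, every individual optimum coincides and the combined value reduces to $\sigma^2/\alpha^2$, which is a convenient sanity check.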

Mallows' $E(L_2^2)$ Ridge Solution

Some of the results for the $E(L_1^2)$ criterion can be extended to $E(L_2^2)$: the Newton-Raphson technique is applicable to (3.23), for example, and the results of Hocking, et al. can apply to either criterion. A more interesting approach is that of Mallows (1973).

Mallows' solution begins with the "scaled summed

mean squared error," defined as

(4.16)

It should be noticed that $J_k$ differs from $L_2^2$ only by the constant $1/\sigma^2$.

From Appendix III,

(4.17)

where

(4.18)

and

(4.19)

The residual sum of squares is

(4.20)

from which

$$E(RSS_k) = \sigma^2V^*_k + B_k \tag{4.21}$$

where

(4.22)

The estimator of E(Jk) is thus

(4.23)

which is to be minimized.

Since the residual sum of

squares about the least-squares model is constant it can

be disregarded in the minimization.

Let

(4.24)

be the variable to be minimized. From (4.20), (4.23), and (4.24),

(4.26)

Recall that $Q$ was defined earlier as the matrix of eigenvectors such that $Q'X'XQ = \Lambda$, the diagonal matrix of eigenvalues $\lambda_i$ of $X'X$. Transforming (4.26) by $Q$ and letting $z = Q'X'y$, the quantity to be minimized becomes

$$\frac{1}{\hat\sigma^2}\sum_{i=1}^{p}\lambda_i\left(\frac{z_i}{\lambda_i+k}-\frac{z_i}{\lambda_i}\right)^2 + 2\sum_{i=1}^{p}\frac{\lambda_i}{\lambda_i+k}. \tag{4.27}$$

To minimize this with respect to k,

(4.28)

for which Mallows' solution is

(l+k)/k

(4.29)

and then

( 4. 30)

This solution does not seem to be correct. However, (4.28) can be solved numerically (Appendix V).
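One simple numerical route is a grid search over $k$. The sketch below is only an illustration and is not the procedure of Appendix V: it assumes the objective is the sum of a scaled lack-of-fit term, $(1/\hat\sigma^2)\sum\lambda_i(z_i/(\lambda_i+k) - z_i/\lambda_i)^2$, and a penalty term, $2\sum\lambda_i/(\lambda_i+k)$, and it uses made-up values for $\lambda_i$, $z_i$, and $\hat\sigma^2$. The function names, grid limit, and step count are hypothetical.

```python
def mallows_objective(k, lam, z, sigma2):
    """Scaled lack of fit relative to least squares plus twice the trace term."""
    fit = sum(l * (zi / (l + k) - zi / l) ** 2 for l, zi in zip(lam, z)) / sigma2
    trace = 2.0 * sum(l / (l + k) for l in lam)
    return fit + trace


def minimize_on_grid(lam, z, sigma2, k_max=1.0, steps=1000):
    """Return the grid point in [0, k_max] with the smallest objective value."""
    grid = [k_max * j / steps for j in range(steps + 1)]
    return min(grid, key=lambda k: mallows_objective(k, lam, z, sigma2))


lam = [1.8, 1.0, 0.2]   # illustrative eigenvalues of X'X (assumed)
z = [1.8, 1.0, 0.3]     # illustrative elements of Q'X'y (assumed)
sigma2_hat = 0.1        # illustrative variance estimate (assumed)

k_best = minimize_on_grid(lam, z, sigma2_hat)
print(k_best, mallows_objective(k_best, lam, z, sigma2_hat))
```

At $k = 0$ the lack-of-fit term vanishes and the objective equals $2p$; because the penalty term decreases as soon as $k$ leaves zero while the lack of fit grows only quadratically, the minimizing $k$ is strictly positive.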

Mallows' solution is somewhat similar to the

generalized ridge solution of Hemmerle and Brantle (1978)

in that it optimizes an estimator rather than estimating

a theoretical optimum.

The Lawless-Wang Estimator

Lawless and Wang (1976) derive a solution for

ordinary ridge regression as follows:

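Defining $\alpha$ as before to be the transformation of $\beta$, i.e., $\alpha = Q'\beta$, suppose $\alpha$ is a multivariate normal random variable with a distribution defined by

$$\alpha \sim N_p(0, \sigma_\alpha^2 I_p), \tag{4.31}$$

where $p$ is the dimensionality of $\beta$. The Bayesian estimator for $\alpha$ is $\alpha^*$, where

$$\alpha^*_i = \frac{\lambda_i}{\lambda_i + \sigma^2/\sigma_\alpha^2}\,\hat\alpha_i, \qquad i = 1,\ldots,p \tag{4.32}$$

(Goldstein and Smith, 1975). To estimate $\sigma^2/\sigma_\alpha^2$, first notice that if $\alpha \sim N_p(0, \sigma_\alpha^2 I_p)$, then

$$\hat\alpha \sim N_p(0, \sigma_\alpha^2 I_p + \sigma^2\Lambda^{-1})$$

and

$$E\left(\sum_{i=1}^{p}\lambda_i\hat\alpha_i^2/\sigma^2\right) = p + \left(\sum_{i=1}^{p}\lambda_i\right)\sigma_\alpha^2/\sigma^2.$$

Since $X'X$ is in correlation form, $\sum\lambda_i = p$.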

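Therefore,

$$E\left(\sum_{i=1}^{p}\lambda_i\hat\alpha_i^2/p\sigma^2 - 1\right) = \sigma_\alpha^2/\sigma^2.$$

Since $\sigma_\alpha^2$ is expected to be much greater than $\sigma^2$, $\sigma_\alpha^2/\sigma^2$ can be estimated by $\sum\lambda_i\hat\alpha_i^2/p\sigma^2$. The Lawless-Wang optimum is thus

$$k = p\sigma^2\Big/\sum_{i=1}^{p}\lambda_i\hat\alpha_i^2. \tag{4.33}$$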
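A minimal sketch of this computation follows, taking the optimum to be $k = p\sigma^2/\sum\lambda_i\hat\alpha_i^2$, the reciprocal of the estimate of $\sigma_\alpha^2/\sigma^2$ described above. The input values and the function name are illustrative assumptions.

```python
def lawless_wang_k(lam, alpha_hat, sigma2):
    """k = p * sigma^2 / sum(lam_i * alpha_hat_i^2)."""
    p = len(lam)
    return p * sigma2 / sum(l * a * a for l, a in zip(lam, alpha_hat))


lam = [1.8, 1.0, 0.2]         # eigenvalues of a correlation-form X'X; they sum to p
alpha_hat = [1.0, 1.0, 1.5]   # illustrative least-squares canonical coefficients
sigma2 = 0.1                  # illustrative error variance (assumed)

k_lw = lawless_wang_k(lam, alpha_hat, sigma2)
shrinkage = [l / (l + k_lw) for l in lam]   # factors applied to each alpha_hat_i
print(k_lw, shrinkage)
```

The shrinkage factors show the usual ridge pattern: the component with the smallest eigenvalue is pulled toward zero the most. When every $\lambda_i$ equals one, the formula coincides with the Hoerl, Kennard, and Baldwin value $p\sigma^2/\sum\hat\alpha_i^2$.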

The McDonald-Galarneau Estimator

In contrast to the estimators discussed above,

the estimator of McDonald and Galarneau (1975) is not

based on considerations of mean square error.

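Instead, it is intended to find the solution whose norm is as close to the norm of $\beta$ as possible. To determine this solution, note that

$$E(\hat\beta'\hat\beta) = \beta'\beta + \sigma^2\sum_{i=1}^{p}1/\lambda_i$$

or, equivalently,

$$\beta'\beta = E(\hat\beta'\hat\beta) - \sigma^2\sum_{i=1}^{p}1/\lambda_i.$$

Thus, an estimator of $\beta'\beta$ is

$$\hat\beta'\hat\beta - \hat\sigma^2\sum_{i=1}^{p}1/\lambda_i. \tag{4.34}$$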

Equation (4.34) cannot be solved algebraically for the general case, and McDonald and Galarneau suggested a trial-and-error process involving 201 values for the interval (0,1). Iteration would be a more efficient means, however, and the Newton-Raphson method will work for this problem. Specifically, the problem is to find $k$ such that

(4.35)

or, equivalently,

(4.36)

Iterate as follows:

$$k_{j+1} = k_j - f(k_j)/f'(k_j)$$

where

and

$$f'(k_j) = \sum_{i=1}^{p}\left\{2(\lambda_i+k_j)^{-3}(X'y)_i^2\right\},$$

$k_j$ being the value of $k$ at the jth iteration.
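The iteration can be sketched as follows. This is an illustration under stated assumptions rather than the exact scheme above: it takes $f(k)$ to be the squared length of the ridge estimator, $\sum z_i^2/(\lambda_i+k)^2$ with $z = Q'X'y$, minus the target $\hat\beta'\hat\beta - \hat\sigma^2\sum 1/\lambda_i$, so that $f'(k)$ is negative. Reversing the sign of $f$ reverses the sign of $f'$ and leaves the Newton-Raphson step unchanged. The function names, tolerance, and data are hypothetical.

```python
def ridge_norm_sq(k, lam, z):
    """Squared length of the ridge estimator in canonical coordinates."""
    return sum((zi / (l + k)) ** 2 for l, zi in zip(lam, z))


def mcdonald_galarneau_k(lam, z, sigma2, tol=1e-12, max_iter=100):
    """Newton-Raphson search for k whose ridge norm matches the target."""
    target = ridge_norm_sq(0.0, lam, z) - sigma2 * sum(1.0 / l for l in lam)
    if target <= 0.0:
        return None   # no finite k can match a non-positive target
    k = 0.0           # start at least squares; f(0) > 0 and f is convex
    for _ in range(max_iter):
        f = ridge_norm_sq(k, lam, z) - target
        f_prime = -2.0 * sum(zi * zi / (l + k) ** 3 for l, zi in zip(lam, z))
        step = f / f_prime
        k -= step
        if abs(step) < tol:
            break
    return k


lam = [1.8, 1.0, 0.2]   # illustrative eigenvalues of X'X (assumed)
z = [1.8, 1.0, 0.3]     # illustrative elements of Q'X'y (assumed)
sigma2_hat = 0.1        # illustrative variance estimate (assumed)

k_mg = mcdonald_galarneau_k(lam, z, sigma2_hat)
print(k_mg)
```

Starting from $k = 0$ is a deliberate choice: with this definition $f$ is decreasing and convex for $k \ge 0$, so the iterates approach the root from below without overshooting.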

Obenchain's Estimator for Ordinary Ridge Regression

Suppose it is assumed that a nearly exact estimate of $\beta$ lies somewhere along the ridge trace, but that it is unclear just where. The method of Obenchain (1978) permits a $\beta^*$ to be determined for any desired probability level, say, $f$, that $\beta^*$ is a better estimate than $\hat\beta$, the least squares estimator. Consider a Scheffe confidence ellipsoid (Scheffe, 1961) about the least squares estimator. Searle (1971) gives as an f-level confidence region about $\hat\beta$

$$(\beta-\hat\beta)'X'X(\beta-\hat\beta) \le p\hat\sigma^2F(p,n-p-1;f), \tag{4.37}$$

where $p$ is the number of independent variables, $n$ is the number of observations, and $F(p,n-p-1;f)$ is the value of the F distribution with $p$ and $(n-p-1)$ degrees of freedom, having a probability of $f$. If $\hat\beta$ is the least-squares estimator, this ellipsoid covers the true unknown value of $\beta$ with probability $1-f$.

The ridge trace is a subset of points in the $\beta$-space. It may be visualized as a path running from the least-squares estimator (where $k = 0$) to the point $\beta = 0$ (where $k$ is infinite). Assuming it intersects the boundary of the confidence ellipsoid, it will do so at only one point. This point Obenchain chooses as his estimator. Expressed as a formula, his solution is: choose $\beta^*$ such that

$$(\beta^*-\hat\beta)'X'X(\beta^*-\hat\beta) = p\hat\sigma^2F(p,n-p-1;f). \tag{4.38}$$

Obenchain's solution must be interpreted with a certain amount of caution. The F value is not the probability that $\beta^*$ is a better estimate of $\beta$ than is $\hat\beta$, because points outside the confidence ellipsoid may very well be closer to $\hat\beta$ than to $\beta^*$ (see Figure D).

Figure D: Obenchain's method: ridge trace and accompanying 100(1-f)% confidence region for a two-variable example. (Adapted from Obenchain, 1978)

Further, if it is assumed that $\beta$ lies somewhere along the ridge trace, the F value is still not the probability that $\beta^*$ is a better estimate than is $\hat\beta$. The reason is that a distribution (in this case the F) which holds for a space will not generally hold for a one-dimensional path through that space.

In practice, it is easiest to evaluate (4.38) by choosing an arbitrary $\beta^*$ and then determining the associated F value. This fact, as well as the fact that a satisfactory probability would be difficult to set without some prior knowledge of the associated $k$, makes it difficult to view Obenchain's estimator as a point solution. It appears instead to be an additional means of examining the ridge trace.
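The practical procedure just described can be sketched as follows. The code assumes the work is done in canonical coordinates, where $X'X = Q\Lambda Q'$ turns the quadratic form on the left of (4.38) into $\sum\lambda_i(\alpha^*_i - \hat\alpha_i)^2$ with $\alpha^*_i = \lambda_i\hat\alpha_i/(\lambda_i+k)$. The inputs and the function name are illustrative assumptions; converting the resulting F value to a probability would still require an F table or a statistics library, which is not shown.

```python
def obenchain_f_value(k, lam, alpha_hat, sigma2_hat):
    """F value at which the ridge estimate for this k meets the ellipsoid boundary."""
    p = len(lam)
    quad = sum(l * (l * a / (l + k) - a) ** 2 for l, a in zip(lam, alpha_hat))
    return quad / (p * sigma2_hat)


lam = [1.8, 1.0, 0.2]         # illustrative eigenvalues of X'X (assumed)
alpha_hat = [1.0, 1.0, 1.5]   # illustrative least-squares canonical coefficients
sigma2_hat = 0.1              # illustrative variance estimate (assumed)

trial_k = [0.0, 0.05, 0.1, 0.5]
f_values = [obenchain_f_value(k, lam, alpha_hat, sigma2_hat) for k in trial_k]
for k, f_val in zip(trial_k, f_values):
    print(k, f_val)
```

The F value is zero at $k = 0$ and grows as the estimate moves along the trace away from least squares, which is why a single crossing of the ellipsoid boundary is expected.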

Vinod's Estimator for Ordinary Ridge Regression

Vinod's estimator for k was presented in the

preceding chapter.

To repeat it here, it is as follows:

Find a $k$ which minimizes

$$\sum_{i=1}^{p}\left(\frac{p\,(\lambda_i/(\lambda_i+k))^2}{\bar s\,\lambda_i} - 1\right)^2 \tag{4.39}$$

where

$$\bar s = \sum_{i=1}^{p}\frac{\lambda_i}{(\lambda_i+k)^2}.$$

This cannot be solved algebraically, and so must be estimated. Wichern and Churchill (1978) examined a range of values and chose the most satisfactory solution. Assuming there was only one local minimum, Newton-Raphson iteration would work, since (4.39) can be differentiated with respect to $k$. The result is a bit complicated, but a convergence scheme is more efficient than examining a set of values and choosing the smallest one.
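The range-of-values approach can be sketched as a grid search. The code assumes the criterion is $\sum_i\{p\lambda_i/((\lambda_i+k)^2\bar s) - 1\}^2$ with $\bar s = \sum_i\lambda_i/(\lambda_i+k)^2$; the eigenvalues, grid limit, step count, and function names are illustrative assumptions and are not taken from Vinod or from Wichern and Churchill.

```python
def vinod_isrm(k, lam):
    """Stability criterion: squared deviations of the scaled terms from one."""
    p = len(lam)
    s_bar = sum(l / (l + k) ** 2 for l in lam)
    return sum((p * l / ((l + k) ** 2 * s_bar) - 1.0) ** 2 for l in lam)


def minimize_isrm_on_grid(lam, k_max=1.0, steps=1000):
    """Return the grid point in [0, k_max] with the smallest criterion value."""
    grid = [k_max * j / steps for j in range(steps + 1)]
    return min(grid, key=lambda k: vinod_isrm(k, lam))


lam = [1.8, 1.0, 0.2]   # illustrative eigenvalues of X'X (assumed)
k_vinod = minimize_isrm_on_grid(lam)
print(k_vinod, vinod_isrm(k_vinod, lam))
```

When all eigenvalues are equal, every term equals one and the criterion is zero for any $k$, which matches the idea that an orthogonal design needs no stabilizing.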

A Review and Evaluation of the Ordinary Ridge Solutions

Because of the number of solutions for ordinary

ridge regression and the length of some of the derivations, a quick overview would be helpful.

Most of the estimators presented above are intended to minimize the mean square error, either in a classical or Bayesian sense. The exceptions to this are Vinod's (1975), which is based on stability, McDonald and Galarneau's (1975), which is based on estimator norm, and Obenchain's (1978), which is based on confidence intervals.

Of the estimators based on the classical mean square error ($E(L_1^2)$), the Hoerl-Kennard theoretical optimum and the Hoerl-Kennard conservative estimate were derivable without requiring further assumptions. The Hoerl-Kennard conservative solution is intended to be better than the least-squares solution but does not minimize the mean square error.

The solutions of Hoerl, et al. (1975), Hoerl and Kennard (1976), and Hocking, et al. (1977) are intended to minimize the mean square error. However, they are all arbitrary to a certain degree, or else require additional assumptions such as the assumption by Hoerl, et al. that the predictor variables are uncorrelated.

The solutions of Lawless and Wang (1976) and Mallows (1973) are optimal in a Bayesian sense. The Bayesian assumption for Lawless and Wang's solution was given in its derivation, while that of Mallows' solution is implicit in the $E(L_2^2)$ criterion itself. Stein (1960) and Efron and Morris (1973) detail this Bayesian approach.

The question of how well these estimators perform in practice has been considered by McDonald and Galarneau (1975), Hemmerle and Brantle (1978) and Wichern and Churchill (1978). All three studies used the simulation method of McDonald and Galarneau (1975).

Wichern and Churchill's study was the most thorough and comprehensive, comparing the least squares estimator with five ridge solutions. Of these five, the McDonald-Galarneau estimator and the Hoerl-Kennard conservative estimator were the most consistent in reducing the mean square error associated with the least squares solution. The other ridge estimators considered were those of Hoerl, et al. (1975), Lawless and Wang (1976), and Vinod (1975). All three were quite variable in terms of performance, especially Vinod's. Appendix VII presents the results of the Wichern-Churchill study in more detail.

Chapter 5

CONCLUSIONS

As was indicated in Chapter 1, the linear least squares estimator

$$\hat\beta = (X'X)^{-1}X'y$$

suffers from a number of drawbacks when the predictor variables $X_i$ are collinear. These drawbacks include instability for minor changes in data, $\hat\beta_i$ whose absolute values are too large, and a large mean square error. Yamamura (1977) reviews and discusses these problems.

Among the techniques which can be used to deal with collinearity is ridge regression, a term which actually refers to two closely related estimators. These are the ordinary ridge estimator

$$\beta^* = (X'X + kI)^{-1}X'y$$

and the generalized ridge estimator

$$\beta^* = Q(\Lambda + K)^{-1}Q'X'y$$

where $\Lambda$ is the matrix of eigenvalues of $X'X$, $Q$ is the matrix of associated eigenvectors, and $K$ is a diagonal matrix whose nonzero terms $k_i$ are positive but not necessarily equal.

An important problem in ridge regression is choosing the biasing parameter ($k$ for ordinary ridge, $k_i$ for generalized ridge).

In the case of generalized ridge this comes down to a choice between two possible solutions: one based on estimating an optimum and the other based on optimizing an estimator. There is no reason to consider either of these superior in general, though the second is more conservative and may be better for extremely large variances.

Ordinary ridge regression has a known theoretical optimum, but it is difficult to solve. As a result, there is interest in finding other solutions, often based on combinations of the optimal $k_i$ from the generalized ridge estimates.

Due to the arbitrary nature of these combinations, it is hard to justify them or evaluate them theoretically; therefore comparisons based on simulation are of considerable importance. Some work of this sort has already been done, but no estimator evaluated so far has been consistently superior to the others, and it is likely that a consistently superior ordinary ridge solution will not be found. The current feeling seems to be that more than one solution should be examined when ridge regression is used.

BIBLIOGRAPHY

1. Anderson, T. W. Introduction to Multivariate Statistical Analysis. New York: John Wiley and Sons, 1958.

2. Box, G. E. P. and Wilson, K. B. "On the Experimental Attainment of Optimum Conditions." Journal of the Royal Statistical Society, Series B. 13: 1-45, 1951.

3. Brown, P. J. "Centering and Scaling in Ridge Regression." Technometrics. 19: 35-36, 1977.

4. Dempster, A. P., Schatzoff, M., and Wermuth, N. "A Simulation Study of Alternatives to Ordinary Least Squares." Journal of the American Statistical Association. 72: 77-90, 1977.

5. Draper, N. R. "Ridge Analysis of Response Surfaces." Technometrics. 5: 469-479, 1963.

6. Efron, B. and Morris, C. "Stein's Rule and Its Competitors--An Empirical Bayes Approach." Journal of the American Statistical Association. 68: 117-130, 1973.

7. Efroymson, M. A. "Multiple Regression Analysis." Mathematical Methods for Digital Computers, Vol. 1. A. Ralston (ed), New York: John Wiley and Sons, 1960, pp. 191-203.

8. Farrar, D. E. and Glauber, R. R. "Multicollinearity in Regression Analysis: the Problem Revisited." The Review of Economics and Statistics. 49: 92-107, 1967.

9. Garside, M. J. "The Best Subset in Multiple Regression Analysis." Applied Statistics. 14: 196-200, 1965.

10. Goldstein, M. and Smith, A. F. M. "Ridge-Type Estimators for Regression Analysis." Journal of the Royal Statistical Society, Series B. 36: 284-291, 1974.

11. Hemmerle, W. J. "An Explicit Solution for Generalized Ridge Regression." Technometrics. 17: 309-314, 1975.

12. Hemmerle, W. J. and Brantle, T. F. "Explicit and Constrained Generalized Ridge Estimators." Technometrics. 20: 109-120, 1978.

13. Hocking, R. R., Speed, F. M., and Lynn, M. J. "A Class of Biased Estimators in Linear Regression." Technometrics. 18: 425-438, 1976.

14. Hoerl, A. E. "Optimum Solution of Many Variables Equations." Chemical Engineering Progress. 55: 69-78, 1976.

15. Hoerl, A. E. "Applications of Ridge Analysis to Regression Problems." Chemical Engineering Progress. 58: 54-59, 1962.

16. Hoerl, A. E. and Kennard, R. W. "Ridge Regression: Biased Estimation for Nonorthogonal Problems." Technometrics. 12: 55-67, 1970A.

17. Hoerl, A. E. and Kennard, R. W. "Ridge Regression: Application to Nonorthogonal Problems." Technometrics. 12: 69-82, 1970B.

18. Hoerl, A. E. and Kennard, R. W. "Ridge Regression: Iterative Estimation of the Biasing Parameter." Communications in Statistics. 4: 105-123, 1975.

19. Hoerl, A. E., Kennard, R. W., and Baldwin, K. F. "Ridge Regression: Some Simulations." Communications in Statistics. 4: 105-123, 1975.

20. Hotelling, H. "Analysis of a Complex of Statistical Variables Into Principal Components." Journal of Educational Psychology. 24: 417-441; 498-520, 1933.

21. Hotelling, H. "Simplified Calculation of Principal Components." Psychometrika. 1: 27-35, 1936.

22. Kempthorne, O. Discussion on a paper by D. V. Lindley and A. F. M. Smith. Journal of the Royal Statistical Society, Series B. 34: 33-36, 1972.

23. Lawless, J. F. and Wang, P. "A Simulation Study of Ridge and Other Estimators." Communications in Statistics. 5: 307-323, 1976.

24. Lindley, D. V. and Smith, A. F. M. "Bayes Estimators for the Linear Model" (with discussion). Journal of the Royal Statistical Society, Series B. 34: 1-41, 1972.

25. Mallows, C. P. "Some Comments on Cp." Technometrics. 15: 661-675, 1973.

26. Marquardt, D. W. "Generalized Inverses, Ridge Regression, Biased Linear Estimation, and Nonlinear Estimation." Technometrics. 12: 591-611, 1970.

27. Mason, R. L., Gunst, R. F., and Weber, J. T. "Regression Analysis and Problems with Multicollinearity." Communications in Statistics. 4: 277-292, 1975.

28. Massy, W. F. "Principal Components Regression in Exploratory Statistical Research." Journal of the American Statistical Association. 60: 234-256, 1965.

29. Mayer, L. S. and Wilke, T. A. "On Biased Estimation in Linear Models." Technometrics. 15: 497-508, 1973.

30. McDonald, G. C. and Galarneau, D. J. "A Monte Carlo Evaluation of Some Ridge-Type Estimators." Journal of the American Statistical Association. 70: 407-416, 1975.

31. McDonald, G. C. and Schwing, R. C. "Instabilities of Regression Estimates Relating Air Pollution to Mortality." Technometrics. 15: 463-481, 1973.

32. Morrison, D. F. Multivariate Statistical Methods. New York: McGraw-Hill, 1967.

33. Newhouse, J. P. and Oman, S. D. "An Evaluation of Ridge Estimators." Rand Report No. R-716-PR: 1-28, 1971.

34. Obenchain, R. L. "Classical F-Tests and Confidence Regions for Ridge Regression." Technometrics. 19: 429-439, 1972.

35. Pearson, K. "On Lines and Planes of Closest Fit to Systems of Points in Space." Philosophical Magazine, Series 6. 2: 559-572, 1901.

36. Rao, C. R. Linear Statistical Inference and Its Applications, 2nd ed. New York: John Wiley and Sons, 1970, pp. 294-305.

37. Scheffe, H. The Analysis of Variance. New York: John Wiley and Sons, 1959.

38. Sclove, S. L. "Improved Estimators for Coefficients in Linear Regression." Journal of the American Statistical Association. 63: 597-606, 1968.

39. Searle, S. R. Linear Models. New York: John Wiley and Sons, 1971, pp. 100-116.

40. Silvey, S. D. "Multicollinearity and Imprecise Estimation." Journal of the Royal Statistical Society, Series B. 31: 539-552, 1969.

41. Snee, R. "Some Aspects of Nonorthogonal Data Analysis." Journal of Quality Technology. 5: 67-79, 1973.

42. Stein, C. "Inadmissibility of the Usual Estimator for the Mean of a Multivariate Normal Distribution." Proceedings of the Third Berkeley Symposium on Mathematical Statistics and Probability, Vol. 1. Berkeley: University of California Press, 1956, pp. 197-206.

43. Stein, C. "Multiple Regression." Contributions to Probability and Statistics. I. Olkin (ed). Stanford: Stanford University Press, 1960, pp. 424-443.

44. Wichern, D. W. and Churchill, G. A. "A Comparison of Ridge Estimators." Technometrics. 20: 301-311, 1978.

45. Yamamura, A. M. "Ridge Regression: An Answer to Multicollinearity." Unpublished Master's Thesis. California State University, Northridge, 1977.

APPENDIX I

PRINCIPAL COMPONENTS REGRESSION

Let $X$ be an (n×p) matrix of n observations on p variables standardized so that $X'X$ is a correlation matrix. Suppose one wishes to find an (n×1) vector which accounts for as much sample variance as possible. An algebraic statement of the problem is the following: find a linear compound

$$Y_1 = q_{11}X_1 + \cdots + q_{p1}X_p = Xq_1 \tag{1}$$

such that the sample variance

$$s_{Y_1}^2 = \sum_{i}\sum_{j}q_{i1}q_{j1}s_{ij} = q_1'X'Xq_1 \tag{2}$$

is maximized subject to $q_1'q_1 = 1$. For this problem $Y_1$ is (n×1), $X_i$ is the ith column of $X$, $q_1$ is a (p×1) vector, and $s_{ij}$ is the (i,j)th element of $X'X$, i.e., $s_{ij}$ is the sample covariance of $X_i$ and $X_j$.

The solution of the problem is to use the Lagrange multiplier $\lambda_1$:

$$\frac{\partial}{\partial q_1}\left[q_1'X'Xq_1 - \lambda_1(q_1'q_1 - 1)\right] = 2(X'X - \lambda_1 I)q_1, \tag{3}$$

where $I$ is the identity matrix. To maximize, set (3) to zero, which gives p simultaneous equations

$$(X'X - \lambda_1 I)q_1 = 0. \tag{4}$$

The system of equations given by (4) is solved by choosing $\lambda_1$ such that

$$|X'X - \lambda_1 I| = 0. \tag{5}$$

It follows that $\lambda_1$ is an eigenvalue of $X'X$. Premultiplying (4) by $q_1'$,

$$q_1'X'Xq_1 - \lambda_1q_1'q_1 = 0, \tag{6}$$

and, recalling that $q_1'q_1 = 1$,

$$\lambda_1 = q_1'X'Xq_1 = s_{Y_1}^2. \tag{7}$$

Therefore, $q_1$ is the eigenvector associated with $\lambda_1$, and the magnitude of the sample variance $s_{Y_1}^2$ is given by $\lambda_1$. Since the intention is to maximize $s_{Y_1}^2$, $\lambda_1$ is the largest eigenvalue of $X'X$.

The first principal component accounts for the maximum possible variance in the observations. Proceeding inductively, the second principal component accounts for as much of the remaining variance as possible. Algebraically, the problem is: find the linear compound

$$Y_2 = q_{12}X_1 + \cdots + q_{p2}X_p = Xq_2 \tag{8}$$

such that the sample variance

$$s_{Y_2}^2 = \sum_{i}\sum_{j}q_{i2}q_{j2}s_{ij} = q_2'X'Xq_2 \tag{9}$$

is maximized subject to the constraints $q_2'q_2 = 1$ and $q_1'q_2 = 0$. The first of these is as before a standardization of length, while the second constrains $q_2$ to be orthogonal to $q_1$. Again a Lagrangian system is used:

$$\frac{\partial}{\partial q_2}\left[q_2'X'Xq_2 - \lambda_2(q_2'q_2 - 1) + \lambda_3q_1'q_2\right] = 2(X'X - \lambda_2 I)q_2 + \lambda_3q_1, \tag{10}$$

where $\lambda_2$ and $\lambda_3$ are the multipliers. Premultiplying by $q_1'$ and setting to zero,

$$2q_1'X'Xq_2 + \lambda_3 = 0. \tag{11}$$

Similar premultiplication of (4) by $q_2'$ implies that

$$q_2'X'Xq_1 = 0 \tag{12}$$

and hence $\lambda_3 = 0$. The second component thus satisfies

$$(X'X - \lambda_2 I)q_2 = 0$$

and is solved by

$$|X'X - \lambda_2 I| = 0. \tag{13}$$

Further, premultiplying (10) by $q_2'$ and recalling that $\lambda_3 = 0$,

$$\lambda_2 = q_2'X'Xq_2 = s_{Y_2}^2. \tag{14}$$

Since $s_{Y_2}^2$ is to be maximized subject to the fact that $s_{Y_1}^2$ has already been accounted for by $\lambda_1$, it follows that $\lambda_2$ is the second largest eigenvalue of $X'X$, and that $q_2$ is the associated eigenvector. The third principal component will similarly be determined by the eigenvector associated with the third largest eigenvalue and so forth.
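These properties are easy to verify in the two-variable case, where the eigenvalues and eigenvectors of a correlation matrix have a closed form. The sketch below is an illustration under that assumption; the correlation value and the helper name are made up.

```python
import math

r = 0.9                        # illustrative correlation between two predictors
R = [[1.0, r], [r, 1.0]]       # X'X in correlation form

# Closed-form eigenpairs of a 2x2 correlation matrix (valid for 0 < r < 1).
lam = [1.0 + r, 1.0 - r]
q = [[1 / math.sqrt(2), 1 / math.sqrt(2)],
     [1 / math.sqrt(2), -1 / math.sqrt(2)]]


def quad(u, M, v):
    """Return u' M v for 2-vectors u and v and a 2x2 matrix M."""
    return sum(u[i] * M[i][j] * v[j] for i in range(2) for j in range(2))


var_1 = quad(q[0], R, q[0])    # variance carried by the first component
var_2 = quad(q[1], R, q[1])    # variance carried by the second component
cross = quad(q[0], R, q[1])    # cross term between the two components
print(var_1, var_2, cross)
```

The two variances reproduce the eigenvalues $1+r$ and $1-r$, and the cross term vanishes, as the orthogonality argument requires.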

Principal components are orthogonal to each other and, beginning with the first component, each accounts for as much as possible of the variance that has not been accounted for by the preceding components. Geometrically, they correspond with the principal axes of the data ellipsoid determined by $X$.

Principal components regression is linear regression which uses the principal components as independent variables. Let $y$ be an (n×1) vector of observations on a response variable. Then principal components regression can be written as

$$\hat\alpha = (Q'X'XQ)^{-1}Q'X'y \tag{15}$$

where $Q$ is the (p×p) matrix whose ith column is equal to $q_i$, the ith eigenvector of $X'X$. Another way of writing (15) is

$$\hat\alpha = \Lambda^{-1}Q'X'y \tag{16}$$

where $\Lambda$ is the (p×p) diagonal matrix whose ith diagonal entry is $\lambda_i$, the ith eigenvalue of $X'X$. Note that (16) may be transformed into the ordinary least squares solution by premultiplying it by $Q$, i.e.,

$$\hat\beta = Q\Lambda^{-1}Q'X'y, \tag{17}$$

since $(X'X)^{-1} = Q\Lambda^{-1}Q'$.

It may happen that some of the eigenvalues are very nearly zero and should therefore be eliminated from the model, since they account for almost none of the predictor variance. Remembering that the eigenvalues are in order of decreasing size, $\Lambda$ can be partitioned as follows:

$$\Lambda = \begin{pmatrix}\Lambda_r & 0\\ 0 & \Lambda_{p-r}\end{pmatrix} \tag{18}$$

where the last (p-r) eigenvalues are assumed to be zero. We also partition $Q$:

$$Q = (Q_r \;\; Q_{p-r}) \tag{19}$$

where the last (p-r) eigenvectors correspond with the zero-valued eigenvalues.

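Since $\Lambda_{p-r}$ is by assumption a zero matrix, a generalized inverse of $(X'X)$ is

$$(X'X)_r^{-1} = Q_r\Lambda_r^{-1}Q_r' \tag{20}$$

(Marquardt, 1970). This may also be written

$$(X'X)_r^{-1} = \sum_{i=1}^{r}(1/\lambda_i)\,q_iq_i', \tag{21}$$

where $q_i$ is as before the ith eigenvector in $Q$. To see that (21) is correct, recall that $q_{ij}$ is the jth term of the ith eigenvector. Then

$$(X'X)_r^{-1} = Q_r\Lambda_r^{-1}Q_r' \tag{22}$$

where

$$Q_r = \begin{pmatrix} q_{11} & q_{21} & \cdots & q_{r1}\\ q_{12} & q_{22} & \cdots & q_{r2}\\ \vdots & \vdots & & \vdots\\ q_{1p} & q_{2p} & \cdots & q_{rp}\end{pmatrix} \quad\text{and}\quad \Lambda_r^{-1} = \begin{pmatrix} 1/\lambda_1 & 0 & \cdots & 0\\ 0 & 1/\lambda_2 & \cdots & 0\\ \vdots & \vdots & \ddots & \vdots\\ 0 & 0 & \cdots & 1/\lambda_r\end{pmatrix}.$$

$$(X'X)_r^{-1} = \begin{pmatrix} \sum_{i=1}^{r}q_{i1}^2(1/\lambda_i) & \sum_{i=1}^{r}q_{i1}q_{i2}(1/\lambda_i) & \cdots & \sum_{i=1}^{r}q_{i1}q_{ip}(1/\lambda_i)\\ \sum_{i=1}^{r}q_{i2}q_{i1}(1/\lambda_i) & \sum_{i=1}^{r}q_{i2}^2(1/\lambda_i) & \cdots & \sum_{i=1}^{r}q_{i2}q_{ip}(1/\lambda_i)\\ \vdots & \vdots & & \vdots\\ \sum_{i=1}^{r}q_{ip}q_{i1}(1/\lambda_i) & \sum_{i=1}^{r}q_{ip}q_{i2}(1/\lambda_i) & \cdots & \sum_{i=1}^{r}q_{ip}^2(1/\lambda_i)\end{pmatrix} \tag{23}$$

$$= \sum_{i=1}^{r}(1/\lambda_i)\begin{pmatrix} q_{i1}^2 & q_{i1}q_{i2} & \cdots & q_{i1}q_{ip}\\ q_{i2}q_{i1} & q_{i2}^2 & \cdots & q_{i2}q_{ip}\\ \vdots & \vdots & & \vdots\\ q_{ip}q_{i1} & q_{ip}q_{i2} & \cdots & q_{ip}^2\end{pmatrix} \tag{24}$$

$$= \sum_{i=1}^{r}(1/\lambda_i)\,q_iq_i'.$$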

The estimator based on the first r eigenvalues would thus be

$$\hat\beta = Q_r\Lambda_r^{-1}Q_r'X'y. \tag{25}$$
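The estimator can be illustrated with two predictors, for which the eigenpairs of the correlation matrix are known in closed form. This is a minimal sketch: the correlation, the vector standing in for $X'y$, and the function name are illustrative assumptions.

```python
import math

r = 0.9                                   # illustrative predictor correlation
lam = [1.0 + r, 1.0 - r]                  # eigenvalues, largest first
q = [[1 / math.sqrt(2), 1 / math.sqrt(2)],
     [1 / math.sqrt(2), -1 / math.sqrt(2)]]
xty = [0.8, 0.7]                          # illustrative X'y (assumed)


def pcr_estimate(lam, q, xty, rank):
    """Sum of (1/lam_i) q_i (q_i' X'y) over the first `rank` eigenpairs."""
    beta = [0.0] * len(xty)
    for lam_i, q_i in list(zip(lam, q))[:rank]:
        proj = sum(a * b for a, b in zip(q_i, xty))   # q_i' X'y
        for j in range(len(beta)):
            beta[j] += q_i[j] * proj / lam_i
    return beta


beta_full = pcr_estimate(lam, q, xty, rank=2)   # keeps every component
beta_pc1 = pcr_estimate(lam, q, xty, rank=1)    # drops the small eigenvalue
print(beta_full, beta_pc1)
```

With both components retained the result is the ordinary least squares solution; dropping the component with eigenvalue $1-r$ removes the large, opposite-signed contribution that near-collinearity produces.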

In practice, it would not be likely that the last (p-r) eigenvalues would be precisely zero. This means that some method of deciding whether or not a particular eigenvalue is zero is needed. Specifically, some constant c should be set so that $X'X$ may be said to be of rank r if the first r components account for all but some small fraction of the variance, i.e., if

(26)

The advantage to (25) as an estimator is chiefly its simplicity. Since the eigenvectors are all orthogonal to each other, they can be eliminated from the model through a simple stepwise procedure. Also (26) facilitates the decision of how many variables should be dropped.

Marquardt (1970) considers the possibility of using "fractional ranks." His suggestion is to eliminate those eigenvalues which are definitely considered to be zero and to reduce the inflation caused by inverting the smaller nonzero eigenvalues by adding a small constant to the denominator of each term in (21).

To detail how this works, suppose that the rank of $X'X$ is considered to be greater than r but less than r+1. Specifically, suppose it is set to r+f, where f is some number between zero and one. Marquardt's suggested estimator is

(27)

where, from (21) and (26),

$$\sum_{i=1}^{r}\lambda_i + f\lambda_{r+1} = \sum_{i=1}^{r}(\lambda_i + k), \tag{28}$$

or, equivalently,

$$k = f\lambda_{r+1}/r. \tag{29}$$
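A small sketch of (29) follows, using made-up eigenvalues and a hypothetical function name. It also checks that spreading $f\lambda_{r+1}$ evenly over the r retained eigenvalues leaves their total equal to $\sum_{i\le r}\lambda_i + f\lambda_{r+1}$.

```python
def fractional_rank_k(lam, r, f):
    """k = f * lambda_{r+1} / r, with lam sorted in decreasing order."""
    return f * lam[r] / r   # lam[r] is lambda_{r+1} under zero-based indexing


lam = [2.5, 1.2, 0.25, 0.05]   # illustrative eigenvalues, largest first (assumed)
r, f = 2, 0.4                  # assumed rank r + f = 2.4

k_frac = fractional_rank_k(lam, r, f)
inflated_total = sum(l + k_frac for l in lam[:r])
print(k_frac, inflated_total)
```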

Marquardt's fractional rank estimator may be seen as a compromise between principal components regression and ordinary ridge regression, with part of the effects of multicollinearity being eliminated through one technique and part through the other. All the same, it is not clear, despite (28), that the concept of "fractional ranks" is in itself particularly meaningful. To illustrate this, consider the case where f is approximately equal to one. The result could very well be two dissimilar estimators for models whose rank was in theory almost identical, using (27) for one estimate and (25) for the other.

APPENDIX II

RIDGE ANALYSIS AND RIDGE REGRESSION

A frequently occurring problem in multivariate analysis is to find the stationary values of a function $f(x_1,\ldots,x_p)$ of p variables $x_1,\ldots,x_p$ subject to restrictions on the $x_i$ such as $g_j(x_1,\ldots,x_p) = 0$ $(j = 1,\ldots,n)$. The method by which the problem is solved is that of Lagrange multipliers. Letting

$$F = f - \sum_{j=1}^{n}\lambda_jg_j, \tag{1}$$

where $\lambda_1,\ldots,\lambda_n$ are unknowns, differentiate (1) with respect to each $x_i$ and set the results equal to zero. This yields p equations

$$\partial F/\partial x_i = \partial f/\partial x_i - \sum_{j=1}^{n}\lambda_j\,\partial g_j/\partial x_i = 0 \qquad (i = 1,\ldots,p). \tag{2}$$

Additionally,

$$g_j = 0 \qquad (j = 1,\ldots,n). \tag{3}$$

Suppose $a = (a_1,\ldots,a_p)$ is a solution of (2) and (3) after eliminating the $\lambda_j$.

Let

$$M(x) = M(x_1,\ldots,x_p) = \left[\frac{\partial^2F}{\partial x_i\,\partial x_j}\right] \tag{4}$$

be the matrix of second order partial derivatives, and let $M(a) = M(a_1,\ldots,a_p)$ be the resulting matrix after the solution $(a_1,\ldots,a_p)$ has been substituted into (4). Then if $M(a)$ is

(a) positive definite, i.e., $y'My > 0$,

(b) negative definite, i.e., $y'My < 0$,

where $y' = (y_1,\ldots,y_p)$ is any nonzero (1×p) real vector, the function $f(x_1,\ldots,x_p)$ achieves

(a) a local minimum at $x = a$,

(b) a local maximum at $x = a$,

respectively. To see this, suppose $F$ is expanded as a Taylor series of partial derivatives about $a$ and that $h$ represents a vector $(h_1,\ldots,h_p)'$ of increments $h_i$. Then, recalling that the first partial derivatives are zero at $x = a$,

$$F(a+h) = F(a) + \tfrac{1}{2}h'M(a)h + o(h^3), \tag{5}$$

where $o(h^3)$ represents the higher order terms of the series. It is a feature of Taylor series that the effects of the higher order terms can be made arbitrarily small if the increments $h_i$ are small enough. Since the first order term is equal to zero, that means that the only term to be considered is the second order term $\tfrac{1}{2}h'M(a)h$, which is greater than zero if $M(a)$ is positive definite. This implies that, for all small $h$, $F(a+h) > F(a)$. Therefore, if $h$ is such that the restrictions $g_j = 0$ are fulfilled,

$$f(a+h) > f(a), \tag{6}$$

and $f(a)$ is a local minimum. Similar reasoning holds for the case where $M(a)$ is negative definite.

To apply this to quadratic surfaces, consider the second order response surface in p variables $b_1,\ldots,b_p$ given by

(7)

where the point $(0,\ldots,0)$ is the origin of measurement for $b_1,\ldots,b_p$. The problem is to find the stationary points of $y$ on a sphere centered on the origin and having radius $R$, in other words, to find the stationary points of $y$ subject to

$$g = \sum_{i=1}^{p}b_i^2 - R^2 = 0. \tag{8}$$

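Using the method of Lagrange multipliers, set $F = y - \lambda g$, where $\lambda$ is the multiplier. Then, taking the first derivatives with respect to the $b_i$, rearranging, and dividing by 2, this gives (see equation (2))

$$\begin{aligned}
(s_{11}-\lambda)b_1 + \tfrac{1}{2}s_{12}b_2 + \cdots + \tfrac{1}{2}s_{1p}b_p &= -\tfrac{1}{2}s_1\\
\tfrac{1}{2}s_{12}b_1 + (s_{22}-\lambda)b_2 + \cdots + \tfrac{1}{2}s_{2p}b_p &= -\tfrac{1}{2}s_2\\
&\;\;\vdots\\
\tfrac{1}{2}s_{1p}b_1 + \tfrac{1}{2}s_{2p}b_2 + \cdots + (s_{pp}-\lambda)b_p &= -\tfrac{1}{2}s_p
\end{aligned} \tag{9}$$

or, in matrix notation,

$$(S - \lambda I)B = -\tfrac{1}{2}s, \tag{10}$$

where

$$S = \begin{pmatrix} s_{11} & \tfrac{1}{2}s_{12} & \cdots & \tfrac{1}{2}s_{1p}\\ \tfrac{1}{2}s_{12} & s_{22} & \cdots & \tfrac{1}{2}s_{2p}\\ \vdots & \vdots & & \vdots\\ \tfrac{1}{2}s_{1p} & \tfrac{1}{2}s_{2p} & \cdots & s_{pp}\end{pmatrix}, \quad s = \begin{pmatrix}s_1\\ s_2\\ \vdots\\ s_p\end{pmatrix}, \quad B = \begin{pmatrix}b_1\\ b_2\\ \vdots\\ b_p\end{pmatrix}. \tag{11}$$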

One method of finding $B$ would be to solve (8) and (9) for $b_1,\ldots,b_p$, and $\lambda$. Sometimes, however, the value of $R$ is not of great importance, in which case it is possible to regard $R$ as variable and $\lambda$ as fixed. Thus $\lambda$ can be inserted directly into (9), which can then be solved for the $b_i$'s, after which $R$ can be computed from (8).
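The fixed-multiplier route can be sketched for two variables. The code assumes the stationarity equations take the matrix form $(S - \lambda I)B = -\tfrac{1}{2}s$ and that the sphere constraint is $b_1^2 + b_2^2 = R^2$; the coefficient values and the function name are illustrative assumptions.

```python
import math


def solve_fixed_multiplier(S, s, lam):
    """Solve (S - lam*I) B = -s/2 for a 2x2 S by Cramer's rule; return B and R."""
    a, b = S[0][0] - lam, S[0][1]
    c, d = S[1][0], S[1][1] - lam
    e, f = -0.5 * s[0], -0.5 * s[1]
    det = a * d - b * c
    if det == 0.0:
        raise ValueError("lam is an eigenvalue of S; the system is singular")
    b1 = (e * d - b * f) / det
    b2 = (a * f - c * e) / det
    radius = math.sqrt(b1 * b1 + b2 * b2)   # radius of the sphere through B
    return [b1, b2], radius


S = [[2.0, 0.5], [0.5, 1.0]]   # s_11 and s_22 on the diagonal, s_12/2 off it
s = [1.0, -1.0]                # linear coefficients s_1 and s_2 (assumed)

B, R = solve_fixed_multiplier(S, s, lam=0.0)
print(B, R)
```

Repeating the call over a range of multiplier values traces out the stationary point as a function of the radius, which is the idea behind treating $R$ as the variable quantity.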

Suppose that, for some $R$, there is a multiplier $\lambda^*$ which gives as a solution to (9) the vector $B^*$, and that $\lambda^* < d_i$, all i, where the $d_i$ are the eigenvalues of $S$. Eigenvalues are the roots of the equation

$$|S - dI| = 0, \tag{12}$$

and, if $\lambda^* < d_i$, all i, then, for an arbitrary nonzero (p×1) vector $z$,

$$z'M(B^*)z = z'(S - \lambda^*I)z = z'Sz - \lambda^*z'z \ge z'z(d_{\min} - \lambda^*), \tag{13}$$

where $d_{\min}$ is the smallest of the $d_i$, so that $d_{\min} - \lambda^* > 0$. Therefore, $M(B^*)$ is positive definite and $y = f(B^*)$ is a local minimum.

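To derive ridge regression from this, let

$$X = \begin{pmatrix} x_{11} & x_{12} & \cdots & x_{1p}\\ x_{21} & x_{22} & \cdots & x_{2p}\\ \vdots & \vdots & & \vdots\\ x_{n1} & x_{n2} & \cdots & x_{np}\end{pmatrix}, \quad y = \begin{pmatrix}y_1\\ y_2\\ \vdots\\ y_n\end{pmatrix}, \quad B = \begin{pmatrix}b_1\\ b_2\\ \vdots\\ b_p\end{pmatrix}. \tag{14}$$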