or the orks with y: Integrating assing f

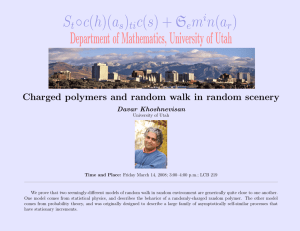

advertisement

University of Utah

School of Computing

Message-Passing for the 21st Century: Integrating User-Level Networks with SMT

Mike Parker, Al Davis, Wilson Hsieh

School of Computing, University of Utah

{map, ald, wilson}@cs.utah.edu

http://www.cs.utah.edu/{~map,~ald,~wilson}


Introduction

• Message-passing

-In context of SMT system

-Whole system approach

-Consider user software down to hardware

• Motivation

-Frequencies and capacitance => point-to-point (buses are dead)

-I/O architectures and CPU interconnects are becoming networks

• Expose point-to-point links to software

-Message-passing interface for I/O and CPU communication

• Deliver notification directly to user


System Architecture

[Figure: CPUs, disks, fb, and net (to the LAN) attached to the System Network.]


Goals

• Avoid OS overhead

-Mainly cache misses

-Scales at DRAM speeds!

• General-purpose architecture

-Modify (almost) existing architectures

• General-purpose OS

-General-purpose scheduling algorithms (no gang scheduling)

-Arbitrary user-level message handlers

-Support existing communication models

-Support existing programming models

Anatomy of a Message

• Sun Ultra 1, Solaris 2.5.1

-119 µs interrupt latency (~17500 cycles @ 147 MHz)

-380 L2-cache misses @ 270 ns / miss

-103 µs or 87% in cache misses

[Figure: message timeline from send() on the Local Node to receive() on the Remote Node, with segments for User Code, Kernel Code, Cache & TLB Overheads, NI, and Wire and Switch, the interrupt and notification points, and the Overhead and End-to-End Latency spans marked.]
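The figures on this slide can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted on the slide; the function and constant names are illustrative, not from the talk. Unrounded, the cache-miss time comes out near 102.6 µs, which is the slide's 103 µs and roughly 86 to 87% of the interrupt latency.

```python
# Figures quoted on the slide (Sun Ultra 1, Solaris 2.5.1).
INTERRUPT_LATENCY_US = 119.0   # measured interrupt latency
CLOCK_MHZ = 147.0              # CPU clock
L2_MISSES = 380                # L2-cache misses on the interrupt path
MISS_PENALTY_NS = 270.0        # cost of one L2 miss


def cache_miss_time_us(misses: int, penalty_ns: float) -> float:
    """Total time spent in cache misses, in microseconds."""
    return misses * penalty_ns / 1000.0


def latency_cycles(latency_us: float, clock_mhz: float) -> float:
    """Latency expressed in CPU cycles (microseconds * MHz = cycles)."""
    return latency_us * clock_mhz


miss_us = cache_miss_time_us(L2_MISSES, MISS_PENALTY_NS)
miss_fraction = miss_us / INTERRUPT_LATENCY_US
cycles = latency_cycles(INTERRUPT_LATENCY_US, CLOCK_MHZ)

print(f"cache-miss time: {miss_us:.1f} us")
print(f"share of interrupt latency: {miss_fraction:.1%}")
print(f"interrupt latency: {cycles:.0f} cycles")
```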


Architecture

[Figure: architecture block diagram. Labels: SMT Core, L1 Cache, L2 Cache, NI, Message Cache, Memory Controller, System Network.]


Architecture

• SMT

-Overlaps computation with communication

-Hides/tolerates message overhead

-Hides/tolerates message latency

[Figure: architecture block diagram.]


Architecture

• User accessible system network interface

-Avoid OS overhead

• Efficient protocol

-Hardware can do receive without software help

[Figure: architecture block diagram.]
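One way hardware can complete a receive without software help is a sender-based protocol, which the deck names on the M-Machine comparison slide. The toy model below is a sketch under stated assumptions, not the authors' design: the `ReceiverNI` class, its `grant` and `deliver` methods, and the per-sender window layout are all hypothetical. It shows the idea that the sender picks the destination offset, so the receiving NI only needs a bounds check before writing into user memory.

```python
class ReceiverNI:
    """Toy receiver-side NI for a sender-based protocol (all names hypothetical)."""

    def __init__(self, user_memory_size: int):
        # Stand-in for user memory that the NI may write directly.
        self.user_memory = bytearray(user_memory_size)
        # sender id -> (base, length) of the window that sender may write.
        self.windows = {}

    def grant(self, sender: int, base: int, length: int) -> None:
        """Set up once, off the fast path: give a sender its own window."""
        if base < 0 or length < 0 or base + length > len(self.user_memory):
            raise ValueError("window outside user memory")
        self.windows[sender] = (base, length)

    def deliver(self, sender: int, offset: int, payload: bytes) -> bool:
        """Fast path: bounds-check, then write straight into user memory."""
        window = self.windows.get(sender)
        if window is None:
            return False  # unknown sender: reject (assumed policy)
        base, length = window
        if offset < 0 or offset + len(payload) > length:
            return False  # write would leave the sender's window
        start = base + offset
        self.user_memory[start:start + len(payload)] = payload
        return True


ni = ReceiverNI(64)
ni.grant(sender=1, base=16, length=8)
accepted = ni.deliver(sender=1, offset=2, payload=b"hi")
rejected = ni.deliver(sender=1, offset=7, payload=b"hi")
print(accepted, rejected, bytes(ni.user_memory[18:20]))
```

Because the receiver never has to choose a buffer at arrival time, no handler has to run before the data is placed.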


Architecture

• Message cache (Receives)

-Cache incoming messages (victim cache to L2)

-Supply data to CPU quickly on demand

-Avoids polluting L2 cache

-Avoids wasting system bus bandwidth

[Figure: architecture block diagram.]
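The receive-side role of the message cache can be illustrated with a small software model. This is a minimal sketch under assumptions: the `MessageCache` class, its FIFO spill policy, and the dictionary standing in for DRAM are hypothetical and are not taken from the talk. It only shows the behavior the bullets describe, where incoming lines are held next to the CPU and supplied on demand, and reach memory only when the buffer overflows.

```python
from collections import OrderedDict


class MessageCache:
    """Toy model: a small FIFO buffer of incoming message lines beside the L2."""

    def __init__(self, capacity_lines: int):
        self.capacity = capacity_lines
        self.lines = OrderedDict()   # address -> data, oldest first
        self.memory = {}             # stand-in for DRAM
        self.spills = 0              # lines written to memory on overflow

    def receive(self, addr: int, data: bytes) -> None:
        """NI deposits an incoming line; the oldest line spills if full."""
        if addr not in self.lines and len(self.lines) >= self.capacity:
            old_addr, old_data = self.lines.popitem(last=False)
            self.memory[old_addr] = old_data
            self.spills += 1
        self.lines[addr] = data

    def load(self, addr: int):
        """CPU load: returns (data, source) without touching the L2."""
        if addr in self.lines:
            return self.lines[addr], "message-cache"
        return self.memory.get(addr), "memory"


mc = MessageCache(capacity_lines=2)
mc.receive(0x100, b"a")
mc.receive(0x140, b"b")
first = mc.load(0x100)       # served from the message cache
mc.receive(0x180, b"c")      # buffer full: line 0x100 spills to memory
second = mc.load(0x100)      # now served from memory
print(first, second, mc.spills)
```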


Architecture

• Message cache (Sends)

-Staging area for outgoing messages

-Message composition area for PIO transfers

[Figure: architecture block diagram.]


Notification Mechanisms

• HW lock table

-Extend Tullsen’s thread synchronization table

-Allow control by external events

• Asynchronous Branch

-Legacy interrupt style

-Don’t change to kernel mode

-Notify OS if correct process not running

• Schedule new thread

-Preset context

-Notify OS when SMT can’t take new thread
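The fallback rules on this slide can be written out as a small decision function. The sketch below is an interpretation, not the authors' hardware logic: the `Mechanism`, `Action`, and `SmtState` names are hypothetical, and treating a lock-table notification as an unconditional lock release is an assumption. The two OS fallbacks (wrong process running, no free SMT context) come directly from the bullets.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Mechanism(Enum):
    LOCK_TABLE = auto()
    ASYNC_BRANCH = auto()
    NEW_THREAD = auto()


class Action(Enum):
    RELEASE_LOCK = auto()           # external event releases a lock-table entry
    BRANCH_IN_USER_MODE = auto()    # redirect a running thread, no kernel mode
    START_HANDLER_THREAD = auto()   # start a thread in a preset context
    NOTIFY_OS = auto()              # hardware cannot deliver; fall back to the OS


@dataclass
class SmtState:
    running_pids: set = field(default_factory=set)  # processes on the SMT now
    free_contexts: int = 0                          # unused hardware contexts


def dispatch(mechanism: Mechanism, target_pid: int, smt: SmtState) -> Action:
    """Choose what happens when a message arrives for target_pid."""
    if mechanism is Mechanism.LOCK_TABLE:
        return Action.RELEASE_LOCK  # assumed: always handled in hardware
    if mechanism is Mechanism.ASYNC_BRANCH:
        if target_pid in smt.running_pids:
            return Action.BRANCH_IN_USER_MODE
        return Action.NOTIFY_OS     # correct process not running
    if mechanism is Mechanism.NEW_THREAD:
        if smt.free_contexts > 0:
            return Action.START_HANDLER_THREAD
        return Action.NOTIFY_OS     # SMT can't take a new thread
    raise ValueError(f"unknown mechanism: {mechanism}")


smt = SmtState(running_pids={7}, free_contexts=0)
print(dispatch(Mechanism.ASYNC_BRANCH, 7, smt))
print(dispatch(Mechanism.NEW_THREAD, 7, smt))
```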

End Result

[Figure: two message timelines, each running from send() on the Local Node to the Remote Node with End-to-End Latency marked. One shows User Code, Kernel Code, Cache & TLB Overheads, NI, Wire and Switch, interrupt, notification, receive(), and Overhead segments; the other shows only User Code, NI, Wire and Switch, and notification.]

End Result

• Difference

-Overlapping computation with computation

-Overlapping communication with computation

-Reduces and hides overhead

[Figure: message timeline from send() on the Local Node through User Code, NI, and Wire and Switch to notification on the Remote Node, with End-to-End Latency marked.]


Architecture Summary

• Combine

-SMT

-User-level system network interface

-Efficient zero-copy protocol

-User-level notifications

• Keep general-purpose OS / programming model

-General-purpose scheduling (no gang scheduling, etc.)

-Maintain Unix-level process protection

-Libraries can support conventional communication styles


Related Work

• Placing NI close to CPU

-Flash, Shrimp, Alewife, Tempest, Avalanche - on system bus

-J-Machine & M-Machine - on processor chip

• User-level networks

-U-Net, Shrimp, M-Machine,...

• Efficient protocols

-Active Messages

-Sender-based - Hamlyn, Avalanche

• Threaded MP machines

-Alewife, J-Machine, & M-Machine


M-Machine

• Similarities

-Threaded execution

-User-level network interface

-Avoid OS where possible

• Distinguishing features of our architecture

-Messages received directly into user memory (Sender-Based Protocol)

-Message cache avoids polluting L2 cache

-Message handlers need not be “trusted”

-Modifications to “existing” architecture


Simulator

• Extending L-RSIM (RSIM based)

-Accurate cache, memory bus, MMC, I/O bus, and device models

-Runs extensive BSD-based kernel

-Unmodified Solaris binaries

• Look at tomorrow’s architecture

-Will model 2-8 thread SMTs

-2-4 GHz

-32k - 128k L1

-4M - 16M L2

-4Gb/s - 32Gb/s system network


Another View

• Expose interrupts to user

• Interrupts expensive due to legacy

-Used to be infrequent

-OS overheads scale at DRAM speeds

• Used here in context of message arrival

-I/O is network attached

• User-level network interface & user-level interrupt
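The point that OS overheads scale at DRAM speeds can be made concrete with a rough projection. The sketch below is a back-of-the-envelope estimate under a loud assumption: the 380 misses and 270 ns miss penalty from the Anatomy of a Message slide are held fixed while the clock rises to the 2-4 GHz range from the Simulator slide. Real systems would change both figures; only the trend is of interest, and `miss_cycles` is an illustrative name.

```python
# Assumed unchanged from the Sun Ultra 1 measurement (an assumption, not data).
MISSES = 380
MISS_PENALTY_NS = 270.0


def miss_cycles(clock_ghz: float) -> float:
    """CPU cycles lost to cache misses on one interrupt (ns * GHz = cycles)."""
    return MISSES * MISS_PENALTY_NS * clock_ghz


for ghz in (0.147, 2.0, 4.0):
    print(f"{ghz:5.3f} GHz: {miss_cycles(ghz):9.0f} cycles lost to misses")
```

The time lost stays the same in nanoseconds, so the cost in cycles grows in direct proportion to the clock rate.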


Questions?

{map,ald,wilson}@cs.utah.edu

http://www.cs.utah.edu/{~map,~ald,~wilson}