Cassandra DHT-based storage system

Vaibhav Shankar, Graduate Student, Indiana University Bloomington

Abstract— Cassandra is a structured distributed storage

system initially developed by Facebook when relational

database solutions proved too slow. It has since evolved into a

very scalable open source project managed by the Apache

software foundation. In this paper, we describe the motivation

behind the project, its detailed design and some real-world case

studies of Cassandra's performance. We end the survey with a

section on the limitations and further work planned on this

project.

Index Terms— cassandra, database, distributed hash table,

distributed systems

1. Introduction

Cassandra[1] is a distributed data storage system developed
initially by Facebook engineers to facilitate large scale
distributed search in a network whose resources fluctuate
rapidly. It has since been adopted by the Apache foundation
in an effort to promote development by a wider community.
Relational databases, largely due to their tight structures,
tend to scale badly when used as large scale distributed
stores. Cassandra is an effort to manage distributed data
stores effectively for maximum performance.

This paper is structured as follows. Section 2 gives a brief
history of the related work that led to Cassandra's
development. Section 3 describes the implementation of
Cassandra, with a look at the data model and structures that
make it different from conventional data stores. Section 4
presents a case study of Facebook's Inbox search and how
Cassandra provides rapid search in a data store of that size.
Sections 5 and 6 describe the limitations of the system and
the future work planned for the project.

2. Related Work

Distributing data for performance, availability and

durability has been widely studied in the file system and

database communities. P2P storage systems such as

Bittorrent[2] were among the proponents of this technology. P2P

systems, however, support typically flat namespaces, a

concept extended by distributed file systems which typically

support hierarchical namespaces. Systems like Ficus[3] and

Coda[4] replicate files for high availability at the expense of

consistency. Update conflicts are typically managed using

specialized conflict resolution procedures. The Google File

System (GFS)[5] is another distributed file system built for

hosting the state of Google's internal applications. GFS uses a
simple design inspired by typical static file systems: a
single master server (playing a role comparable to UNIX
inodes) hosts all the metadata, while the data is split into
chunks and stored on chunk servers. The GFS master has since
been made fault tolerant using the Chubby abstraction.

Bayou[6] is a distributed relational database system that

allows disconnected operations and provides eventual data

consistency. Among these systems, Bayou, Coda and Ficus

allow disconnected operations and are resilient to issues such

as network partitions and outages. These systems differ on

their conflict resolution procedures. All of them, however,

guarantee eventual consistency. Similar to these systems,

Dynamo[7] allows read and write operations to continue even

during network partitions and resolves update conflicts using

different conflict resolution mechanisms, some client driven.

Traditional replicated relational database systems focus on

the problem of guaranteeing strong consistency of replicated

data. Although strong consistency provides the application

writer a convenient programming model, these systems are

limited in scalability and availability [8]. These systems are

not capable of handling network partitions because they

typically provide strong consistency guarantees. Cassandra

tries to address these issues at the cost of a customizable

eventual consistency guarantee. As we will demonstrate in

the following sections, the speed and scale obtained by

Cassandra more than make up for the overhead of eventual

consistency.

3. Technical Specifications

This section is divided into three parts: the first describes
a generic DHT system, the second goes over the data model of
Cassandra and how it differs from conventional relational
database systems, and the last describes how read and write
operations work in this data storage system.

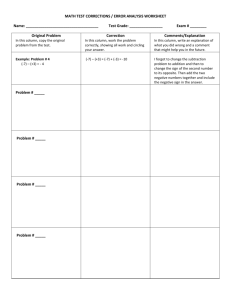

3.1 Distributed Hash Tables

Distributed hash tables are a very well studied distributed data

structure used extensively by peer-to-peer systems and later

adopted by almost all large scale distributed data stores. We

describe distributed hash tables as a simplified concept with 'nodes'

representing systems in the distributed system participating in

storage of data. Figure 1 shows the basic structure of a chord based

DHT.

The Chord based DHT works as follows. First, a good

hashing algorithm such as SHA-1 is used to translate keys to

a 160-bit address space. This address space is then divided

equally into a reduced node space which defines the

maximum number of participating nodes. This number is

typically a power of 2. Now, every node is assigned an ID by

applying this hashing algorithm to its IP address (or other

unique identification). Every file (or data object) which
needs to be stored is then given an ID, which is hashed using
the same scheme. The file (or data object) is then stored on
the first node which succeeds the hashed location.
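The placement rule described above can be sketched in a few lines of Python. This is only an illustration of successor lookup on a SHA-1 identifier ring, not Cassandra's or Chord's actual code; the names `sha1_id`, `Ring` and `successor`, and the sample IP addresses, are assumptions made for the example.

```python
import bisect
import hashlib


def sha1_id(value: str) -> int:
    """Map a string to a point in the 160-bit SHA-1 address space."""
    return int.from_bytes(hashlib.sha1(value.encode("utf-8")).digest(), "big")


class Ring:
    """Toy identifier ring: each key lives on the first node that succeeds it."""

    def __init__(self, node_addresses):
        # Node IDs come from hashing an address, as in the text.
        self._nodes = sorted((sha1_id(addr), addr) for addr in node_addresses)
        self._ids = [node_id for node_id, _ in self._nodes]

    def successor(self, key: str) -> str:
        """Return the address of the first node at or after hash(key)."""
        index = bisect.bisect_left(self._ids, sha1_id(key))
        # Wrap around to the first node when the key hashes past the last one.
        return self._nodes[index % len(self._nodes)][1]


addresses = ["10.0.0.1", "10.0.0.2", "10.0.0.3", "10.0.0.4"]  # hypothetical nodes
ring = Ring(addresses)
owner = ring.successor("mccv")
```

This sketch scans a sorted list of all node IDs, so every node would need to know the whole ring. Avoiding that requirement is the purpose of the more elaborate lookup schemes used in practice.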

Fig 1: Chord based DHT

Several improvements have been made over years of
distributed systems research. More complex schemes involving
finger tables for faster lookup of the destination node are
now available, which make DHTs very fast and favorable for
managing distributed sharing.

3.2 Cassandra data model

At the core, Cassandra uses a Chord based DHT to look up
data. However, its data model is significantly different
from conventional relational databases and is described in
detail here. We build the description of the model from the
bottom up, starting with atomic data structures and building
up to the whole database.

Columns

The column is the lowest/smallest increment of data. It's a
tuple (triplet) that contains a name, a value and a timestamp.

Here's the interface definition of a Column:

struct Column {
  1: binary name,
  2: binary value,
  3: i64 timestamp,
}

name represents metadata about the column (e.g. 'email') and
value represents the value of the corresponding property (e.g.
'foo@bar.org'). The timestamp field is used to resolve
conflicts in favor of the latest copy and is generally
provided by the client. This does necessitate that the
participating nodes in the cluster be clock synchronized.

Column Families

A column family is a container for columns, analogous to the
table in a relational system. A column family holds an
ordered list of columns, which are referenced by the column
name.

Column families have a configurable ordering (sort) applied
to the columns within each row. Out of the box ordering
implementations include ASCII, UTF-8, Long, and UUID
(lexical or time). However, APIs are provided for writing
one's own orderings as well for more complex structures.
Note that unlike conventional relational database models,
there is no relation between one column family and another
per se. Concepts such as foreign keys and table joins are not
implicitly provided (or required) by Cassandra's model.

Rows

In Cassandra, each column family is stored in a separate file,
and the file is sorted in row (i.e. key) major order. As
mentioned before, a row does not tie related column families
together: Cassandra maintains no information about related
column families. Instead, related columns that are accessed
together should be kept within the same column family.

The row key determines which machine the data is stored on.
This key is used by the hashing algorithm to determine the ID
of the node on which the row is stored. Thus, for each key
you can have data from multiple column families associated
with it.

A JSON representation of the row key -> column families ->
column structure is:

{
  "mccv": {
    "Users": {
      "emailAddress": {"name": "emailAddress", "value": "foo@bar.com"},
      "webSite": {"name": "webSite", "value": "http://bar.com"}
    },
    "Stats": {
      "visits": {"name": "visits", "value": "243"}
    }
  },
  "user2": {
    "Users": {
      "emailAddress": {"name": "emailAddress", "value": "user2@bar.com"},
      "twitter": {"name": "twitter", "value": "user2"}
    }
  }
}

Note that the key "mccv" identifies data in two different

column families, "Users" and "Stats". This does not imply

that data from these column families is related. The

semantics of having data for the same key in two different

column families is entirely up to the application. Also note

that within the "Users" column family, "mccv" and "user2"

have different column names defined. This is perfectly valid

in Cassandra. In fact there may be a virtually unlimited set of

column names defined, which leads to fairly common use of

the column name as a piece of runtime populated data. This

differs a lot from conventional RDBMSs, which have rigidly
structured tables with exactly the same columns in every
row. As far as application programming

goes, Cassandra gives us more freedom to clearly express

data, much as modern day XML based systems do.
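To make the column triplet, timestamp-based conflict resolution and sparse rows concrete, here is a minimal Python sketch. It is not Cassandra's implementation: the helper names `reconcile` and `insert`, the integer timestamps and the tie-breaking rule (a tie favors the incoming write) are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Column:
    """Mirror of the (name, value, timestamp) triplet from the text."""
    name: bytes
    value: bytes
    timestamp: int  # supplied by the client


def reconcile(incoming: Column, current: Column) -> Column:
    """Keep the copy with the newer timestamp (assumed: ties favor incoming)."""
    return incoming if incoming.timestamp >= current.timestamp else current


# One column family: row key -> {column name -> Column}.
# Rows are sparse, so two rows need not share any column names.
users = {}


def insert(column_family: dict, row_key: str, column: Column) -> None:
    row = column_family.setdefault(row_key, {})
    current = row.get(column.name)
    row[column.name] = column if current is None else reconcile(column, current)


insert(users, "mccv", Column(b"emailAddress", b"foo@bar.com", 1))
insert(users, "mccv", Column(b"webSite", b"http://bar.com", 1))
insert(users, "user2", Column(b"twitter", b"user2", 1))
# A write carrying an older timestamp loses against the stored copy.
insert(users, "mccv", Column(b"emailAddress", b"old@bar.com", 0))
```

After these calls the "mccv" row still holds foo@bar.com, and the "user2" row carries only a "twitter" column, which mirrors the differing column names in the JSON example.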

Super Columns

So far we've covered "normal" columns and rows. Cassandra

also supports super columns: columns whose values are

columns; that is, a super column is a (sorted) associative

array of columns. This is perhaps one of the most powerful

concepts used by applications using Cassandra.

One can thus think of columns and super columns in terms of

maps: A row in a regular column family is basically a sorted

map of column names to column values; a row in a super

column family is a sorted map of super column names to

maps of column names to column values.

A JSON description of this layout:

{
  "mccv": {
    "Tags": {
      "cassandra": {
        "incubator": {"incubator": "http://incubator.apache.org/cassandra/"},
        "jira": {"jira": "http://issues.apache.org/jira/browse/CASSANDRA"}
      },
      "thrift": {
        "jira": {"jira": "http://issues.apache.org/jira/browse/THRIFT"}
      }
    }
  }
}

Here the column family is "Tags". We have two super

columns defined here, "cassandra" and "thrift". Within these

we have specific named bookmarks, each of which is a

column.

Just like normal columns, super columns are sparse: each row

may contain as many or as few as it likes; Cassandra imposes

no restrictions.
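The "map of maps" view of a super column family can be sketched with nested Python dictionaries. Plain dicts are not sorted maps, so the hypothetical helper `sorted_items` imitates the ordering on iteration. The column values are simplified to plain strings, and `flatten` is likewise an illustrative name rather than part of any Cassandra API.

```python
def sorted_items(mapping):
    """Iterate a dict in key order, imitating a sorted map."""
    return sorted(mapping.items())


# row key -> super column name -> column name -> column value
tags = {
    "mccv": {
        "thrift": {"jira": "http://issues.apache.org/jira/browse/THRIFT"},
        "cassandra": {
            "jira": "http://issues.apache.org/jira/browse/CASSANDRA",
            "incubator": "http://incubator.apache.org/cassandra/",
        },
    }
}


def flatten(row):
    """Yield (super column, column, value) triples in sorted order."""
    for super_name, columns in sorted_items(row):
        for name, value in sorted_items(columns):
            yield super_name, name, value


triples = list(flatten(tags["mccv"]))
```

Iterating the row visits the "cassandra" super column before "thrift", and "incubator" before "jira" within it, whatever the insertion order was.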

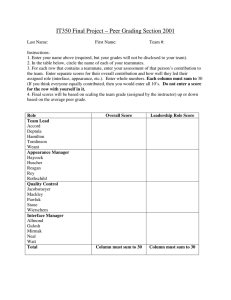

3.3 Reading and Writing to data store

Cassandra employs a very flexible mechanism to ensure

consistency of reads and writes. Figure 2 shows the basic

architecture of the storage system.

Fig 2: Cassandra I/O architecture

We describe writes first, though the options for reads are
more or less the same. A write request can arrive at any node
in the cluster. First, the information is written to a commit
log; the write operation does not return until the commit log
is written. After this, the data is added to an in-memory data
structure called a 'memtable' on the node where the data is
written. When the memtable becomes full, it is flushed to
disk as a file termed an 'SSTable', a structure very similar
to the one used by Google's Bigtable. Unlike Bigtable, which
keeps its SSTables in GFS, Cassandra relies on the node's
local file system for persistence [1].

Replication options are also provided, each of which
determines the level of consistency (and to some extent the
performance) of the system. Suppose we want M copies of the
data to be present in the storage system. Cassandra provides
options wherein we can a) send the request and hope for the
best (zero acks), b) wait for one successful response, c) wait
for a quorum of floor(M/2) + 1 responses or d) wait for all M
responses. Typically, a quorum is preferred, as it provides a
good balance of consistency and speed.
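The write path and the four acknowledgement options can be sketched as follows. This is a toy, single-process model under stated assumptions: the names `Consistency`, `required_acks`, `Node` and `memtable_limit` are invented for the example, the commit log is an in-memory list standing in for a file, and a flush is triggered by entry count rather than by memory size.

```python
from enum import Enum


class Consistency(Enum):
    ZERO = "zero"      # fire and forget
    ONE = "one"        # one successful response
    QUORUM = "quorum"  # floor(M/2) + 1 responses
    ALL = "all"        # every replica


def required_acks(level: Consistency, replicas: int) -> int:
    """Number of replica acknowledgements to wait for, given M replicas."""
    if level is Consistency.ZERO:
        return 0
    if level is Consistency.ONE:
        return 1
    if level is Consistency.QUORUM:
        return replicas // 2 + 1
    return replicas


class Node:
    """Toy write path: commit log first, then memtable, then flush."""

    def __init__(self, memtable_limit: int = 2):
        self.commit_log = []  # stands in for the append-only log on disk
        self.memtable = {}
        self.sstables = []    # each flush yields one immutable, key-sorted table
        self.memtable_limit = memtable_limit

    def write(self, key: str, value: str) -> None:
        self.commit_log.append((key, value))  # logged before anything else
        self.memtable[key] = value
        if len(self.memtable) >= self.memtable_limit:
            # Flush: freeze the memtable as a sorted table and start afresh.
            self.sstables.append(tuple(sorted(self.memtable.items())))
            self.memtable = {}


node = Node()
for k, v in [("b", "2"), ("a", "1"), ("c", "3")]:
    node.write(k, v)
```

With three replicas a quorum write waits for two acknowledgements, so any two quorums overlap in at least one replica. That overlap is why the quorum setting is a common compromise between consistency and latency.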

SSTables use bloom filters to check whether a key exists
before searching the whole file on disk; this comes in handy
when we perform reads and don't find the data in the
memtable. Reads tend to be a little slower than writes,
mostly because writes are sequential appends and so incur no
seek overhead when writing to disk, whereas a read may have
to look in several SSTables.
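A bloom filter answers "definitely absent" or "possibly present", which lets a read skip tables that cannot contain the key. The sketch below is a minimal illustration, not Cassandra's filter: the class `BloomFilter`, the function `read`, the filter size and the salted SHA-1 hashing scheme are all assumptions made for the example.

```python
import hashlib


class BloomFilter:
    def __init__(self, num_bits: int = 1024, num_hashes: int = 3):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray(num_bits)  # one byte per bit, for clarity

    def _positions(self, key: str):
        # Derive several bit positions by salting a SHA-1 hash with a seed.
        for seed in range(self.num_hashes):
            digest = hashlib.sha1(f"{seed}:{key}".encode("utf-8")).digest()
            yield int.from_bytes(digest, "big") % self.num_bits

    def add(self, key: str) -> None:
        for pos in self._positions(key):
            self.bits[pos] = 1

    def might_contain(self, key: str) -> bool:
        """False means definitely absent; True means possibly present."""
        return all(self.bits[pos] for pos in self._positions(key))


def read(key, memtable, sstables):
    """Check the memtable, then each (filter, table) pair, newest first."""
    if key in memtable:
        return memtable[key]
    for bloom, table in sstables:
        # Only pay for the table lookup when the filter cannot rule the key out.
        if bloom.might_contain(key) and key in table:
            return table[key]
    return None


table = {"mccv": "foo@bar.com"}
bloom = BloomFilter()
for k in table:
    bloom.add(k)
```

A filter can report a false positive but never a false negative, so the read path stays correct and only occasionally does an unnecessary lookup.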

4. Case study – Facebook Inbox search

Facebook Inbox Search is a Facebook internal feature that
maintains a per user index of all messages that have been
exchanged between the sender and the recipients of the
message. There are two kinds of search features: (a) term
search and (b) interactions, where, given the name of a
person, the system returns all messages that the user might
have ever sent to or received from that person. The schema
consists of two column families. For query (a) the user id is
the key and the words that make up the message become the
super columns. Individual message identifiers of the messages
that contain the word become the columns within the super
column. For query (b) the user id is again the key and the
recipients' ids are the super columns. For each of these
super columns the individual message identifiers are the
columns. In order to make the searches fast, Cassandra
provides certain hooks for intelligent caching of data. For
instance, when a user clicks into the search bar an
asynchronous message is sent to the Cassandra cluster to
prime the buffer cache with that user's index. This way, when
the actual search query is executed the search results are
likely to already be in memory. The system currently stores
more than 50 TB of data on a 150 node cluster. Some latency
figures taken from the original paper [1] are listed here:

Latency Stat    Search Interactions    Term Search
Min             7.69ms                 7.78ms
Median          15.69ms                18.27ms
Max             26.13ms                44.41ms

5. Limitations

• All data for a single row must fit (on disk) on a single
machine in the cluster. Because row keys alone are used to
determine the nodes responsible for replicating their data,
the amount of data associated with a single key has this
upper bound.

• A single column value may not be larger than 2GB; this
number is hardcoded and unlikely to change.

• Cassandra has two levels of indexes: key and column. But in
super column families there is a third level of subcolumns;
these are not indexed, and any request for a subcolumn
deserializes all the subcolumns in that supercolumn.

6. Conclusion

Cassandra is a storage system providing scalability, high
performance, and wide applicability. This survey and its
sources show that Cassandra can support a very high update
throughput while delivering low latency. Future work involves
adding compression, the ability to support atomicity across
keys, and secondary index support.

7. Acknowledgment

I would like to thank Dr. Judy Qiu for her guidance and
assistance in choosing and finding relevant material on the
Cassandra project. I would further like to thank all the
Associate Instructors and fellow students in the B534
Distributed Systems class at IU, Bloomington for their
valuable discussion and insight into this survey.

References

[1] Lakshman, Malik. Cassandra: A Decentralized Structured
Storage System.

[2] Cohen, Bram. Bittorrent, a new p2p app.

[3] Peter Reiher, John Heidemann, David Ratner, Greg
Skinner, and Gerald Popek. Resolving file conflicts in the
Ficus file system.

[4] Mahadev Satyanarayanan, James J. Kistler, Puneet Kumar,
Maria E. Okasaki, Ellen H. Siegel, and David C. Steere. Coda:
A highly available file system for a distributed workstation
environment.

[5] Sanjay Ghemawat, Howard Gobioff, and Shun-Tak Leung. The
Google file system.

[6] D. B. Terry, M. M. Theimer, Karin Petersen, A. J. Demers,
M. J. Spreitzer, and C. H. Hauser. Managing update conflicts
in Bayou, a weakly connected replicated storage system.

[7] Giuseppe de Candia, Deniz Hastorun, Madan Jampani,
Gunavardhan Kakulapati, Alex Pilchin, Swaminathan
Sivasubramanian, Peter Vosshall, and Werner Vogels. Dynamo:
Amazon's highly available key-value store.

[8] Jim Gray and Pat Helland. The dangers of replication and
a solution.