
Adaptive annealing: a near-optimal connection between sampling and counting

Daniel Štefankovič

(University of Rochester)

Santosh Vempala

Eric Vigoda

(Georgia Tech)

Counting

independent sets

spanning trees

matchings

perfect matchings

k-colorings

Compute the number of

independent sets

(hard-core gas model)

independent set = subset S of vertices of a graph,

no two in S are neighbors

# independent sets = 7

independent set = subset S of vertices

no two in S are neighbors

# independent sets = 5598861

independent set = subset S of vertices

no two in S are neighbors

graph G → # independent sets in G

#P-complete

#P-complete even for 3-regular graphs

(Dyer, Greenhill, 1997)

graph G → # independent sets in G

?

approximation

randomization

We would like to know Q

Goal: random variable

Y

such that

P( (1-ε)Q ≤ Y ≤ (1+ε)Q ) ≥ 1-δ

“Y gives (1±ε)-estimate”

(approx) counting ⇔ sampling

Valleau,Card’72 (physical chemistry),

Babai’79 (for matchings and colorings),

Jerrum,Valiant,V.Vazirani’86

the outcome of the JVV reduction:

random variables: X1 X2 ... Xt

such that

1) $\mathrm{E}[X_1 X_2 \cdots X_t]$ = “WANTED”

2) the Xi are easy to estimate: squared coefficient of variation (SCV) $\dfrac{\mathrm{V}[X_i]}{\mathrm{E}[X_i]^2} = O(1)$


Theorem (Dyer-Frieze’91)

$O(t^2/\varepsilon^2)$ samples ($O(t/\varepsilon^2)$ from each $X_i$) give

1±ε estimator of “WANTED” with prob≥3/4

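JVV for independent sets

GOAL: given a graph G, estimate the number of independent sets of G

# independent sets = 1 / P(∅), where P(∅) is the probability that a uniformly random independent set of G is empty

By the chain rule P(A∩B)=P(A)P(B|A), P(∅) is a product of conditional probabilities, estimated by X1, X2, X3, X4 [the pictures inside each P( ) are not recoverable]

Xi ∈ [0,1] and E[Xi] ≥ ½ ⇒ $\dfrac{\mathrm{V}[X_i]}{\mathrm{E}[X_i]^2} = O(1)$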

Self-reducibility for independent sets

[Figure sequence: on the example graph with 7 independent sets, each conditional probability is a ratio of counts (5/7, then 3/5, ...); inverting and multiplying the ratios telescopes, 7/5 · 5/3 · 3/2 · ... = 7]

JVV: If we have a sampler oracle:

graph G → SAMPLER ORACLE → random independent set of G

then FPRAS using $O(n^2)$ samples.


ŠVV: If we have a sampler oracle:

β, graph G → SAMPLER ORACLE → set from gas-model Gibbs at β

then FPRAS using $O^*(n)$ samples.

Application – independent sets

O*( |V| ) samples suffice for counting

Cost per sample (Vigoda’01,Dyer-Greenhill’01)

time = O*( |V| ) for graphs of degree ≤ 4.

Total running time: $O^*(|V|^2)$.

Other applications (total running time)

matchings: $O^*(n^2 m)$ (using Jerrum, Sinclair’89)

spin systems: Ising model: $O^*(n^2)$ for $\beta < \beta_C$ (using Marinelli, Olivieri’95)

k-colorings: $O^*(n^2)$ for $k > 2\Delta$ (using Jerrum’95)

easy = hot

hard = cold

[Figure: Ω drawn in layers by Hamiltonian value 4, 2, 1, 0]

Big set = Ω

Hamiltonian H : Ω → {0,...,n}

Goal: estimate $|H^{-1}(0)|$

$|H^{-1}(0)| = \mathrm{E}[X_1] \cdots \mathrm{E}[X_t]$

Distributions between hot and cold

β = inverse temperature

β = 0 ⇒ hot ⇒ uniform on Ω

β = ∞ ⇒ cold ⇒ uniform on $H^{-1}(0)$

μβ (x) ∝ exp(-H(x)β)

(Gibbs distributions)

Distributions between hot and cold

μβ (x) ∝ exp(-H(x)β)

$\mu_\beta(x) = \dfrac{\exp(-H(x)\beta)}{Z(\beta)}$

Normalizing factor = partition function

$Z(\beta) = \sum_{x\in\Omega} \exp(-H(x)\beta)$

have: $Z(0) = |\Omega|$

want: $Z(\infty) = |H^{-1}(0)|$

Assumption:

we have a sampler oracle for μβ

$\mu_\beta(x) = \dfrac{\exp(-H(x)\beta)}{Z(\beta)}$

graph G, β → SAMPLER ORACLE → subset of V from μβ

W ∼ μβ

X = exp(H(W)(β - α))

can obtain the following ratio:

$\mathrm{E}[X] = \sum_{s\in\Omega} \mu_\beta(s)\,X(s) = \dfrac{Z(\alpha)}{Z(\beta)}$
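The ratio identity can be checked by brute force on a small instance. The sketch below assumes the hard-core setting with Ω = all vertex subsets and H(S) = number of edges inside S, and uses the 4-cycle as a toy graph; the helper names (`level_counts`, `Z`, `expected_X`) are hypothetical and exact summation stands in for the sampler oracle.

```python
import math

def level_counts(num_vertices, edges):
    """a[k] = number of vertex subsets S with exactly k edges inside S."""
    a = [0] * (len(edges) + 1)
    for mask in range(1 << num_vertices):
        k = sum(1 for u, v in edges if (mask >> u) & 1 and (mask >> v) & 1)
        a[k] += 1
    return a

def Z(a, beta):
    """Partition function Z(beta) = sum_k a_k exp(-beta k)."""
    return a[0] + sum(ak * math.exp(-beta * k) for k, ak in enumerate(a) if k >= 1)

def expected_X(a, beta, alpha):
    """E[X] for X = exp(H(W)(beta - alpha)), W ~ mu_beta, by exact summation."""
    zb = Z(a, beta)
    return sum(ak * math.exp(-beta * k) / zb * math.exp(k * (beta - alpha))
               for k, ak in enumerate(a))

# 4-cycle: 16 subsets in total, 7 of them independent (H = 0)
a = level_counts(4, [(0, 1), (1, 2), (2, 3), (3, 0)])
print(a)
print(expected_X(a, 0.5, 1.2), Z(a, 1.2) / Z(a, 0.5))
```

The two printed numbers on the last line agree up to floating-point error, which is the statement E[X] = Z(α)/Z(β) for this instance.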

Our goal restated

Partition function $Z(\beta) = \sum_{x\in\Omega} \exp(-H(x)\beta)$

Goal: estimate $Z(\infty) = |H^{-1}(0)|$

$Z(\infty) = \dfrac{Z(\beta_1)}{Z(\beta_0)} \cdot \dfrac{Z(\beta_2)}{Z(\beta_1)} \cdots \dfrac{Z(\beta_t)}{Z(\beta_{t-1})} \cdot Z(0)$

Cooling schedule:

$\beta_0 = 0 < \beta_1 < \beta_2 < \cdots < \beta_t = \infty$
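A minimal sketch of the telescoping estimator, under stated assumptions: the level counts `a` (those of the 4-cycle, hard-coded here) are used only to simulate the sampler oracle, the schedule is picked by hand, and all function names are hypothetical. Each ratio Z(βi)/Z(βi-1) is estimated by averaging X = exp(H(W)(βi-1 - βi)) over samples W from μ at βi-1.

```python
import math
import random

def Z(a, beta):
    return a[0] + sum(ak * math.exp(-beta * k) for k, ak in enumerate(a) if k >= 1)

def sample_H(a, beta, rng):
    """Draw H(W) for W ~ mu_beta (finite beta); stands in for the sampler oracle."""
    weights = [a[0]] + [ak * math.exp(-beta * k) for k, ak in enumerate(a) if k >= 1]
    return rng.choices(range(len(a)), weights=weights)[0]

def estimate_ratio(a, beta, alpha, samples, rng):
    """Sample mean of X = exp(H(W)(beta - alpha)); estimates Z(alpha)/Z(beta)."""
    total = 0.0
    for _ in range(samples):
        k = sample_H(a, beta, rng)
        if k == 0:
            total += 1.0                      # X = 1 whenever H(W) = 0
        elif alpha != math.inf:
            total += math.exp(k * (beta - alpha))
        # for alpha = infinity, X = 0 whenever H(W) > 0
    return total / samples

def estimate_Z_inf(a, schedule, samples, rng):
    """Z(0) times the product of the estimated ratios along the schedule."""
    est = Z(a, 0.0)                           # Z(0) = |Omega| is known
    for beta, alpha in zip(schedule, schedule[1:]):
        est *= estimate_ratio(a, beta, alpha, samples, rng)
    return est

a = [7, 4, 4, 0, 1]                           # level counts of the 4-cycle
schedule = [0.0, 0.5, 1.0, 2.0, math.inf]     # hand-picked cooling schedule
print(estimate_Z_inf(a, schedule, 4000, random.Random(0)))
```

The printed value should be close to 7, the number of independent sets of the 4-cycle.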

How to choose the cooling schedule?

minimize length, while satisfying

$\dfrac{\mathrm{V}[X_i]}{\mathrm{E}[X_i]^2} = O(1)$, where $\mathrm{E}[X_i] = \dfrac{Z(\beta_i)}{Z(\beta_{i-1})}$

Parameters: A and n

$Z(\beta) = \sum_{x\in\Omega} \exp(-H(x)\beta)$

Z(0) = A

H:Ω → {0,...,n}

$Z(\beta) = \sum_{k=0}^{n} a_k e^{-\beta k}$, where $a_k = |H^{-1}(k)|$

Parameters

Z(0) = A

H:Ω → {0,...,n}

                      A         n
independent sets      $2^V$     E
matchings             ≈ V!      V
perfect matchings     V!        V
k-colorings           $k^V$     E

Previous cooling schedules

Z(0) = A

H:Ω → {0,...,n}

β0 = 0 < β1 < β 2 < ... < βt = ∞

“Safe steps”

β → β + 1/n

β → β (1 + 1/ln A) (Bezáková, Štefankovič, Vigoda, V.Vazirani’06)

ln A → ∞

Cooling schedules of length

O( n ln A)

O( (ln n) (ln A) ) (Bezáková, Štefankovič, Vigoda, V.Vazirani’06)
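As an illustration of the O(n ln A) schedule, the sketch below uses only the additive safe step β → β + 1/n until β reaches ln A, followed by the jump ln A → ∞. The function names are hypothetical, and the level counts of the 4-cycle (H = number of edges inside the subset) serve as a toy instance on which the second-moment ratio E[X²]/E[X]² = Z(2α-β)Z(β)/Z(α)² of each step can be evaluated exactly.

```python
import math

def Z(a, beta):
    return a[0] + sum(ak * math.exp(-beta * k) for k, ak in enumerate(a) if k >= 1)

def additive_schedule(n, ln_A):
    """0, 1/n, 2/n, ... until ln A is reached, then one jump to infinity."""
    steps = math.ceil(n * ln_A)
    return [i / n for i in range(steps + 1)] + [math.inf]

def second_moment_ratio(a, beta, alpha):
    """E[X^2]/E[X]^2 for the step beta -> alpha."""
    if alpha == math.inf:
        return Z(a, beta) / Z(a, math.inf)
    return Z(a, 2 * alpha - beta) * Z(a, beta) / Z(a, alpha) ** 2

a = [7, 4, 4, 0, 1]                 # 4-cycle: A = 16, n = 4
n, ln_A = len(a) - 1, math.log(sum(a))
schedule = additive_schedule(n, ln_A)
worst = max(second_moment_ratio(a, b, al) for b, al in zip(schedule, schedule[1:]))
print(len(schedule), worst)
```

For the additive steps the ratio is at most e², because Z is decreasing and Z(β + 1/n) ≥ Z(β)/e when H ≤ n; the final jump is cheap once β ≥ ln A.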

No better fixed schedule possible

Z(0) = A

H:Ω → {0,...,n}

A schedule that works for all

$Z_a(\beta) = \dfrac{A}{1+a}\,\bigl(1 + a\,e^{-\beta n}\bigr)$ (with a∈[0,A-1])

has LENGTH ≥ Ω( (ln n)(ln A) )

Parameters

Z(0) = A

H:Ω → {0,...,n}

Our main result:

can get adaptive schedule of length $O^*((\ln A)^{1/2})$

Previously:

non-adaptive schedules of length $\Omega^*(\ln A)$

Related work

can get adaptive schedule of length $O^*((\ln A)^{1/2})$

Lovász-Vempala

Volume of convex bodies in $O^*(n^4)$

schedule of length $O(n^{1/2})$

(non-adaptive cooling schedule)

Existential part

Lemma:

for every partition function there exists a cooling schedule of length $O^*((\ln A)^{1/2})$

(“can get adaptive schedule” is weakened here to “there exists”)

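Express SCV using partition function

(going from β to α)

W ∼ μβ

X = exp(H(W)(β - α))

$\mathrm{E}[X] = \dfrac{Z(\alpha)}{Z(\beta)}$

$\dfrac{\mathrm{E}[X^2]}{\mathrm{E}[X]^2} = \dfrac{Z(2\alpha-\beta)\,Z(\beta)}{Z(\alpha)^2} \le C$

[Figure: the points β, α, 2α-β on the graph of f(γ)=ln Z(γ)]

Proof: taking logarithms, the condition reads $\dfrac{f(2\alpha-\beta) + f(\beta)}{2} - f(\alpha) \le C' = (\ln C)/2$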
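For reference, the second-moment identity follows directly from the definition of μβ. The short derivation below uses only the symbols already defined on the slides.

```latex
\begin{align*}
\mathrm{E}[X]
  &= \sum_{x\in\Omega} \frac{e^{-\beta H(x)}}{Z(\beta)}\, e^{H(x)(\beta-\alpha)}
   = \frac{1}{Z(\beta)} \sum_{x\in\Omega} e^{-\alpha H(x)}
   = \frac{Z(\alpha)}{Z(\beta)}, \\
\mathrm{E}[X^2]
  &= \sum_{x\in\Omega} \frac{e^{-\beta H(x)}}{Z(\beta)}\, e^{2H(x)(\beta-\alpha)}
   = \frac{1}{Z(\beta)} \sum_{x\in\Omega} e^{-(2\alpha-\beta) H(x)}
   = \frac{Z(2\alpha-\beta)}{Z(\beta)}, \\
\frac{\mathrm{E}[X^2]}{\mathrm{E}[X]^2}
  &= \frac{Z(2\alpha-\beta)}{Z(\beta)} \cdot \frac{Z(\beta)^2}{Z(\alpha)^2}
   = \frac{Z(2\alpha-\beta)\, Z(\beta)}{Z(\alpha)^2}.
\end{align*}
```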

f(γ)=ln Z(γ)

Proof:

f is decreasing

f is convex

f’(0) ≥ –n

f(0) ≤ ln A

either f or f’ changes a lot

Let K:=Δf

Δ(ln |f’|) ≥ 1/K

f:[a,b] → R, convex, decreasing

can be “approximated” using $\sqrt{(f(a)-f(b))\,\ln\dfrac{f'(a)}{f'(b)}}$ segments

Technicality: getting to 2α-β

Proof:

[Figure: the points β, α, 2α-β together with schedule points βi, βi+1, βi+2, βi+3]

ln ln A extra steps

Existential → Algorithmic

there exists a schedule of length $O^*((\ln A)^{1/2})$

→ can get adaptive schedule of length $O^*((\ln A)^{1/2})$

Algorithmic construction

Our main result:

using a sampler oracle for μβ

$\mu_\beta(x) = \dfrac{\exp(-H(x)\beta)}{Z(\beta)}$

we can construct a cooling schedule of length

$\le 38\,(\ln A)^{1/2}(\ln\ln A)(\ln n)$

Total number of oracle calls

$\le 10^7\,(\ln A)\,(\ln\ln A + \ln n)^7\,\ln(1/\delta)$

Algorithmic construction

current inverse temperature β

ideally move to α such that

$B_1 \le \dfrac{\mathrm{E}[X^2]}{\mathrm{E}[X]^2} \le B_2$, where $\mathrm{E}[X] = \dfrac{Z(\alpha)}{Z(\beta)}$

upper bound B2: X is “easy to estimate”

lower bound B1: we make progress (assuming B1>1)

need to construct a “feeler” for this:

$\dfrac{\mathrm{E}[X^2]}{\mathrm{E}[X]^2} = \dfrac{Z(\beta)}{Z(\alpha)} \cdot \dfrac{Z(2\alpha-\beta)}{Z(\alpha)}$

bad “feeler”
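The ideal move can be illustrated when Z is known exactly. The sketch below is an idealized stand-in, not the algorithm of the talk: it replaces the feeler by exact evaluation of Z(2α-β)Z(β)/Z(α)² and, from each β, bisects for the largest α that keeps the ratio at most B2. The toy instance a_k = C(40, k), i.e. Z(β) = (1 + e^{-β})^40, and all names are assumptions made for illustration.

```python
import math

def Z(a, beta):
    return a[0] + sum(ak * math.exp(-beta * k) for k, ak in enumerate(a) if k >= 1)

def ratio(a, beta, alpha):
    """E[X^2]/E[X]^2 = Z(2 alpha - beta) Z(beta) / Z(alpha)^2 for the step beta -> alpha."""
    if alpha == math.inf:
        return Z(a, beta) / Z(a, math.inf)
    return Z(a, 2 * alpha - beta) * Z(a, beta) / Z(a, alpha) ** 2

def greedy_schedule(a, B2, tol=1e-9):
    """From each beta, move to (nearly) the largest alpha with ratio <= B2."""
    schedule = [0.0]
    while True:
        beta = schedule[-1]
        if ratio(a, beta, math.inf) <= B2:    # can jump straight to infinity
            schedule.append(math.inf)
            return schedule
        lo, hi = beta, beta + 1.0
        while ratio(a, beta, hi) <= B2:       # grow hi until the bound is violated
            hi = beta + 2 * (hi - beta)
        while hi - lo > tol:                  # bisection; lo always satisfies the bound
            mid = (lo + hi) / 2
            if ratio(a, beta, mid) <= B2:
                lo = mid
            else:
                hi = mid
        schedule.append(lo)

a = [math.comb(40, k) for k in range(41)]     # toy instance, A = 2**40, n = 40
schedule = greedy_schedule(a, B2=math.e)
print(len(schedule), schedule[:4])
```

Bisection is valid here because, for convex f = ln Z, the ratio is non-decreasing in α for fixed β.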

Rough estimator for Z(β)/Z(α)

$Z(\beta) = \sum_{k=0}^{n} a_k e^{-\beta k}$

For W ∼ μβ we have $P(H(W)=k) = \dfrac{a_k e^{-\beta k}}{Z(\beta)}$

For U ∼ μα we have $P(H(U)=k) = \dfrac{a_k e^{-\alpha k}}{Z(\alpha)}$

If H(X)=k likely at both α, β ⇒ rough estimator

$\dfrac{P(H(U)=k)}{P(H(W)=k)}\, e^{k(\alpha-\beta)} = \dfrac{Z(\beta)}{Z(\alpha)}$

Rough estimator for Z(β)/Z(α)

For W ∼ μβ we have $P(H(W)\in[c,d]) = \dfrac{\sum_{k=c}^{d} a_k e^{-\beta k}}{Z(\beta)}$

If |α-β|⋅ |d-c| ≤ 1 then

$\dfrac{1}{e}\,\dfrac{Z(\beta)}{Z(\alpha)} \le \dfrac{P(H(U)\in[c,d])}{P(H(W)\in[c,d])}\, e^{c(\alpha-\beta)} \le e\,\dfrac{Z(\beta)}{Z(\alpha)}$

We also need P(H(U) ∈ [c,d]) and P(H(W) ∈ [c,d]) to be large.
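The interval bound can be checked numerically. The sketch below assumes a toy partition function with a_k = C(40, k) and uses exact interval masses in place of the empirical frequencies one would get from the sampler oracle; `interval_mass` and `rough_ratio` are hypothetical names.

```python
import math

def Z(a, beta):
    return a[0] + sum(ak * math.exp(-beta * k) for k, ak in enumerate(a) if k >= 1)

def interval_mass(a, beta, c, d):
    """P(H(W) in [c, d]) for W ~ mu_beta."""
    return sum(a[k] * math.exp(-beta * k) for k in range(c, d + 1)) / Z(a, beta)

def rough_ratio(a, beta, alpha, c, d):
    """Interval-based rough estimate of Z(beta)/Z(alpha)."""
    masses = interval_mass(a, alpha, c, d) / interval_mass(a, beta, c, d)
    return masses * math.exp(c * (alpha - beta))

a = [math.comb(40, k) for k in range(41)]    # toy instance
beta, alpha, c, d = 0.3, 0.5, 10, 15         # |alpha - beta| * |d - c| is about 1
est = rough_ratio(a, beta, alpha, c, d)
true = Z(a, beta) / Z(a, alpha)
print(est / true)                            # lies between 1/e and e
```

In practice the two interval masses are estimated from samples, which is why both need to be large.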

Split {0,1,...,n} into h ≤ 4(ln n) ln A intervals

[0],[1],[2],...,[c,c(1+1/ ln A)],...

for any inverse temperature β there exists an interval I with P(H(W)∈ I) ≥ 1/(8h)

We say that I is HEAVY for β
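A possible way to build such intervals and locate a heavy one is sketched below. Rounding the right endpoint down to an integer is an assumption (the slide only gives [c, c(1+1/ln A)]), the exact masses stand in for sampled frequencies, and the function names are hypothetical.

```python
import math

def split_into_intervals(n, ln_A):
    """Partition {0,...,n} into blocks [c, floor(c (1 + 1/ln A))]; singletons for small c."""
    intervals, c = [], 0
    while c <= n:
        d = min(n, math.floor(c * (1 + 1 / ln_A)))
        intervals.append((c, d))
        c = d + 1
    return intervals

def heaviest_interval(a, beta, intervals):
    """Interval with the largest mass P(H(W) in I) under mu_beta, and that mass."""
    z = sum(ak * math.exp(-beta * k) for k, ak in enumerate(a))
    masses = [sum(a[k] * math.exp(-beta * k) for k in range(c, d + 1)) / z
              for c, d in intervals]
    best = max(range(len(intervals)), key=masses.__getitem__)
    return intervals[best], masses[best]

a = [math.comb(40, k) for k in range(41)]    # toy instance: n = 40, ln A = 40 ln 2
intervals = split_into_intervals(40, 40 * math.log(2))
interval, mass = heaviest_interval(a, 0.7, intervals)
print(len(intervals), interval, mass)
```

By pigeonhole the heaviest interval always has mass at least 1/h; the weaker threshold 1/(8h) on the slide leaves room for sampling error.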

Algorithm

repeat

find an interval I which is heavy for the current inverse temperature β

see how far I is heavy (until some β*)

use the interval I for the feeler $\dfrac{Z(\beta)}{Z(\alpha)} \cdot \dfrac{Z(2\alpha-\beta)}{Z(\alpha)}$

either

* make progress, or

* eliminate the interval I, or

* make a “long move”

if we have sampler oracles for μβ

then we can get adaptive schedule of length $t = O^*((\ln A)^{1/2})$

independent sets: $O^*(n^2)$ (using Vigoda’01, Dyer-Greenhill’01)

matchings: $O^*(n^2 m)$ (using Jerrum, Sinclair’89)

spin systems: Ising model: $O^*(n^2)$ for $\beta < \beta_C$ (using Marinelli, Olivieri’95)

k-colorings: $O^*(n^2)$ for $k > 2\Delta$ (using Jerrum’95)

Appendix – proof of:

1) $\mathrm{E}[X_1 X_2 \cdots X_t]$ = “WANTED”

2) the Xi are easy to estimate: $\dfrac{\mathrm{V}[X_i]}{\mathrm{E}[X_i]^2} = O(1)$

Theorem (Dyer-Frieze’91)

$O(t^2/\varepsilon^2)$ samples ($O(t/\varepsilon^2)$ from each $X_i$) give

1±ε estimator of “WANTED” with prob≥3/4

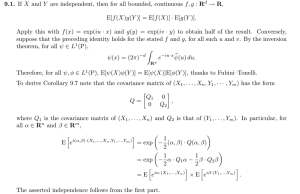

The Bienaymé-Chebyshev inequality

Let X1,...,Xn,X be independent, identically distributed random variables, Q=E[X]. Let

$Y = \dfrac{X_1 + X_2 + \cdots + X_n}{n}$

Then

$P(\,Y \text{ gives } (1\pm\varepsilon)\text{-estimate of } Q\,) \ge 1 - \dfrac{\mathrm{V}[Y]}{\mathrm{E}[Y]^2}\,\dfrac{1}{\varepsilon^2} = 1 - \dfrac{\mathrm{V}[X]}{\mathrm{E}[X]^2}\,\dfrac{1}{n\,\varepsilon^2}$

squared coefficient of variation (SCV): $\dfrac{\mathrm{V}[Y]}{\mathrm{E}[Y]^2} = \dfrac{1}{n}\,\dfrac{\mathrm{V}[X]}{\mathrm{E}[X]^2}$

Chernoff’s bound

Let X1,...,Xn,X be independent, identically distributed random variables, 0 ≤ X ≤ 1, Q=E[X]. Let

$Y = \dfrac{X_1 + X_2 + \cdots + X_n}{n}$

Then

$P(\,Y \text{ gives } (1\pm\varepsilon)\text{-estimate of } Q\,) \ge 1 - e^{-\varepsilon^2\, n\, \mathrm{E}[X]/3}$

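Number of samples for a (1±ε)-estimate with probability ≥ 1-δ:

Bienaymé-Chebyshev: $n = \dfrac{\mathrm{V}[X]}{\mathrm{E}[X]^2}\,\dfrac{1}{\varepsilon^2}\,\dfrac{1}{\delta}$

Bienaymé-Chebyshev, 0≤X≤1: $n = \dfrac{1}{\mathrm{E}[X]}\,\dfrac{1}{\varepsilon^2}\,\dfrac{1}{\delta}$

Chernoff, 0≤X≤1: $n = \dfrac{1}{\mathrm{E}[X]}\,\dfrac{3}{\varepsilon^2}\,\ln(1/\delta)$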

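Median “boosting trick”

$n = \dfrac{1}{\mathrm{E}[X]}\,\dfrac{4}{\varepsilon^2}$, $Y = \dfrac{X_1 + X_2 + \cdots + X_n}{n}$

$P(\,Y \in [(1-\varepsilon)Q,\ (1+\varepsilon)Q]\,) \ge 3/4$

Median trick – repeat 2T times

$P(\,Y \in [(1-\varepsilon)Q,\ (1+\varepsilon)Q]\,) \ge 3/4$

⇒ $P(\,> T \text{ out of } 2T \text{ in the interval}\,) \ge 1 - e^{-T/4}$

⇒ $P(\,\text{median is in the interval}\,) \ge 1 - e^{-T/4}$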
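The median trick is short to write down. In the sketch below `sample` is any zero-argument callable returning one draw of X in [0, 1]; the batch size follows n = (1/E[X])(4/ε²) with E[X] replaced by a known lower bound, which is an assumption since E[X] itself is what is being estimated. All function names are hypothetical.

```python
import math
import random
import statistics

def mean_estimate(sample, n):
    """Average of n draws of X."""
    return sum(sample() for _ in range(n)) / n

def median_of_means(sample, n, T):
    """Median of 2T independent n-sample means."""
    return statistics.median(mean_estimate(sample, n) for _ in range(2 * T))

def batch_size(mean_lower_bound, eps):
    """n = (1/E[X]) * 4/eps^2, using a lower bound on E[X]."""
    return math.ceil(4 / (mean_lower_bound * eps ** 2))

def repetitions(delta):
    """Smallest integer T with exp(-T/4) <= delta."""
    return math.ceil(4 * math.log(1 / delta))

rng = random.Random(0)
coin = lambda: 1.0 if rng.random() < 0.6 else 0.0   # X in {0, 1} with E[X] = 0.6
n, T = batch_size(0.5, 0.1), repetitions(0.01)
print(n, T, median_of_means(coin, n, T))
```

The total number of samples is 2T·n, which matches the (32/ε²) ln(1/δ) scaling obtained by combining the two bounds.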

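Bienaymé-Chebyshev + median trick, 0≤X≤1: $n = \dfrac{1}{\mathrm{E}[X]}\,\dfrac{32}{\varepsilon^2}\,\ln(1/\delta)$

Bienaymé-Chebyshev + median trick: $n = \dfrac{\mathrm{V}[X]}{\mathrm{E}[X]^2}\,\dfrac{32}{\varepsilon^2}\,\ln(1/\delta)$

Chernoff, 0≤X≤1: $n = \dfrac{1}{\mathrm{E}[X]}\,\dfrac{3}{\varepsilon^2}\,\ln(1/\delta)$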


How precise do the Xi have to be?

First attempt – Chernoff’s bound

Main idea:

$\left(1\pm\dfrac{\varepsilon}{t}\right)\left(1\pm\dfrac{\varepsilon}{t}\right)\left(1\pm\dfrac{\varepsilon}{t}\right)\cdots\left(1\pm\dfrac{\varepsilon}{t}\right) \approx 1\pm\varepsilon$

$n = \Theta\!\left(\dfrac{1}{\mathrm{E}[X]}\,\dfrac{1}{\varepsilon^2}\,\ln(1/\delta)\right)$

each term $\Omega(t^2)$ samples ⇒ $\Omega(t^3)$ total

How precise do the Xi have to be?

Bienaymé-Chebyshev is better (Dyer-Frieze’1991)

X=X1 X2 ... Xt

GOAL: SCV(X) ≤ $\varepsilon^2/4$ (squared coefficient of variation)

$P(\,X \text{ gives } (1\pm\varepsilon)\text{-estimate}\,) \ge 1 - \dfrac{\mathrm{V}[X]}{\mathrm{E}[X]^2}\,\dfrac{1}{\varepsilon^2}$

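How precise do the Xi have to be?

Bienaymé-Chebyshev is better (Dyer-Frieze’1991)

Main idea:

$\mathrm{SCV}(X_i) \le \dfrac{\varepsilon^2/4}{t} \;\Rightarrow\; \mathrm{SCV}(X) \lesssim \varepsilon^2/4$

$\mathrm{SCV}(X) = (1+\mathrm{SCV}(X_1)) \cdots (1+\mathrm{SCV}(X_t)) - 1$

$\mathrm{SCV}(X) = \dfrac{\mathrm{V}[X]}{\mathrm{E}[X]^2} = \dfrac{\mathrm{E}[X^2]}{\mathrm{E}[X]^2} - 1$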
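The product formula for the SCV of independent factors can be verified exactly on small finite distributions. The three distributions below are arbitrary illustrative choices, and exact rational arithmetic avoids any rounding.

```python
from fractions import Fraction
from itertools import product

def scv(dist):
    """Squared coefficient of variation of a finite distribution {value: probability}."""
    m1 = sum(p * x for x, p in dist.items())
    m2 = sum(p * x * x for x, p in dist.items())
    return m2 / (m1 * m1) - 1

def product_distribution(dists):
    """Distribution of X1 * X2 * ... * Xt for independent Xi."""
    out = {}
    for combo in product(*(d.items() for d in dists)):
        value, prob = Fraction(1), Fraction(1)
        for x, p in combo:
            value *= x
            prob *= p
        out[value] = out.get(value, 0) + prob
    return out

half = Fraction(1, 2)
dists = [
    {Fraction(1): half, Fraction(2): half},
    {Fraction(1): Fraction(1, 3), Fraction(3): Fraction(2, 3)},
    {Fraction(2): half, Fraction(5): half},
]
lhs = scv(product_distribution(dists))
rhs = Fraction(1)
for d in dists:
    rhs *= 1 + scv(d)
rhs -= 1
print(lhs, rhs)
```

Both printed fractions are identical, since independence gives E[X²] = ∏ E[Xi²] and E[X] = ∏ E[Xi].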

X = X1 X2 ... Xt

each term $O(t/\varepsilon^2)$ samples ⇒ $O(t^2/\varepsilon^2)$ total
