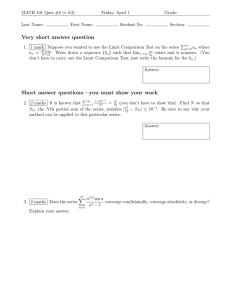

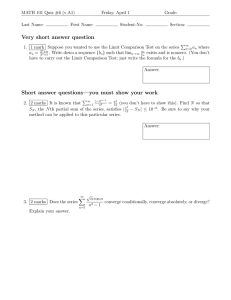

2.160 System Identification, Estimation, and Learning

Lecture Notes No. 13

March 22, 2006

8. Neural Networks

8.1 Physiological Background

Neuro-physiology

• A human brain has approximately 14 billion neurons and 50 different kinds of neurons. … uniform

• Massively-parallel, distributed processing

Very different from a computer (a Turing machine)

Image removed due to copyright reasons.

McCulloch and Pitts, Neuron Model 1943

Donald Hebb, Hebbian Rule, 1949

…Synapse reinforcement learning

Rosenblatt, 1959

…The perceptron convergence theorem

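[Figure: neuron model. The inputs $x_1, x_2, \ldots, x_i, \ldots, x_n$ pass through synapses with weights $w_1, w_2, \ldots, w_i, \ldots, w_n$, are summed ($\sum$), and pass through the function $f$ to give the output $y$.]

The Hebbian Rule

When input $x_i$ is fired and output $y$ is fired, the $i$-th synapse $w_i$ is reinforced: the electric conductivity increases at $w_i$.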

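[Figure: the weighted sum $z$ passes through the output function $g$ to give the predicted output $\hat{y} = g(z)$, which is compared with the correct output $y$. $g$ is the logistic function, or sigmoid function.]

$$z = \sum_{i=1}^{n} w_i x_i \tag{1}$$

Error: $e = \hat{y} - y = g(z) - y$

Gradient Descent method

$$\Delta w_i = -\rho \cdot \operatorname{grad}_{w_i} e^2 = -\rho\, 2e\, \frac{\partial e}{\partial w_i} \tag{2}$$

$\rho$ : learning rate

$$\therefore\ \Delta w_i = -2\rho\, g'\, e\, x_i \tag{3}$$

Here $g' = dg/dz$, and for the logistic function

$$g(z) = \frac{1}{1 + e^{-z}} \tag{4}$$

Rule: $\Delta w_i \propto (\text{Input } x_i) \cdot (\text{Error})$ … Supervised Learning

Replacing $e$ by $\hat{y}$ yields the Hebbian Rule: $\Delta w_i \propto (\text{Input } x_i) \cdot (\text{output } \hat{y})$ … Unsupervised Learning.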
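The gradient-descent update of equations (1) to (3) can be sketched in a few lines. This is only an illustration under stated assumptions: the function names, the single training pair, and the learning rate are hypothetical, and the identity $g'(z) = g(z)(1 - g(z))$ for the logistic function is a standard fact that the notes do not state.

```python
import math

def logistic(z):
    """Logistic output function g(z) = 1 / (1 + exp(-z)), equation (4)."""
    return 1.0 / (1.0 + math.exp(-z))

def squared_error(w, x, y):
    """Squared error e^2 for one training pair, with e = g(z) - y."""
    z = sum(wi * xi for wi, xi in zip(w, x))
    return (logistic(z) - y) ** 2

def delta_rule_step(w, x, y, rho):
    """One update Delta w_i = -2 * rho * g' * e * x_i, equation (3)."""
    z = sum(wi * xi for wi, xi in zip(w, x))      # equation (1)
    y_hat = logistic(z)
    e = y_hat - y                                 # error
    g_prime = y_hat * (1.0 - y_hat)               # assumed identity for g'
    return [wi - 2.0 * rho * g_prime * e * xi for wi, xi in zip(w, x)]

# Hypothetical training pair and learning rate, chosen only for illustration.
w = [0.0, 0.0]
x, y = [1.0, 0.5], 1.0
first_error = squared_error(w, x, y)
for _ in range(200):
    w = delta_rule_step(w, x, y, rho=0.5)
last_error = squared_error(w, x, y)
```

Each weight moves in proportion to its own input $x_i$ times the error, which is the supervised rule stated above.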

8.2 Stochastic Approximation

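Consider a linear output function for $\hat{y} = g(z)$:

$$\hat{y} = \sum_{i=1}^{n} w_i x_i \tag{5}$$

Given $N$ sample data (training data)

$$\{(y^j, x_1^j, \ldots, x_n^j) \mid j = 1, \ldots, N\}$$

find $w_1, \ldots, w_n$ that minimize

$$J_N = \frac{1}{N} \sum_{j=1}^{N} (\hat{y}^j - y^j)^2 \tag{6}$$

$$\Delta w_i = -\rho \cdot \operatorname{grad}_{w_i} J_N = -\rho\, \frac{2}{N} \sum_{j=1}^{N} (\hat{y}^j - y^j) \frac{\partial \hat{y}^j}{\partial w_i} \tag{7}$$

This method requires storing the gradient term $(\hat{y}^j - y^j)\, \partial \hat{y}^j / \partial w_i$ for all the sample data $j = 1, \ldots, N$. It is a type of batch processing.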
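As a rough sketch of the batch method, the code below accumulates the gradient of $J_N$ over all $N$ samples before changing any weight. The function name, the three noise-free samples, and the learning rate are assumptions made for illustration; for the linear unit (5), $\partial \hat{y}^j / \partial w_i = x_i^j$.

```python
def batch_update(w, data, rho):
    """One batch step: w_i <- w_i - rho * dJ_N/dw_i for the linear unit (5).

    data is a list of (y, x) pairs, following the ordering (y^j, x_1^j, ..., x_n^j).
    """
    n_samples = len(data)
    grad = [0.0] * len(w)
    for y, x in data:
        y_hat = sum(wi * xi for wi, xi in zip(w, x))
        for i, xi in enumerate(x):
            # d(y_hat)/dw_i = x_i for a linear output function
            grad[i] += 2.0 * (y_hat - y) * xi / n_samples
    return [wi - rho * gi for wi, gi in zip(w, grad)]

# Hypothetical samples generated by y = 2*x1 + 3*x2 (no noise).
data = [(2.0, [1.0, 0.0]), (3.0, [0.0, 1.0]), (5.0, [1.0, 1.0])]
w = [0.0, 0.0]
for _ in range(500):
    w = batch_update(w, data, rho=0.1)
```

The weights change only once per pass over the data, which is why the per-sample gradient terms have to be held until the pass is complete.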

A simpler method is to update the weights by $\Delta w_i$ every time a training datum is presented:

$$\Delta w_i[k] = \rho\, \delta[k]\, x_i[k] \quad \text{for the } k\text{-th presentation} \tag{8}$$

$$\text{where } \delta[k] = y[k] - \sum_{l=1}^{n} w_l[k]\, x_l[k] \tag{9}$$

Here $y[k]$ is the correct output for the training data presented at the $k$-th time, and $\sum_l w_l[k]\, x_l[k]$ is the predicted output based on the weights $w_i[k]$ for the training data presented at the $k$-th time.

Learning procedure

Present all the $N$ training data $(x[1], y[1]), \ldots, (x[N], y[N])$ in any sequence, and repeat the $N$ presentations, called an "epoch", many times … Recycling.

[Figure: the $N$ presentations are repeated as epoch 1, epoch 2, epoch 3, epoch 4, …, epoch $p$.]

This procedure is called the Widrow-Hoff algorithm.
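The learning procedure can be sketched as two nested loops: an outer loop over epochs and an inner loop that applies updates (8) and (9) after each presentation. The function name, the noise-free data, and the constant learning rate are illustrative assumptions; the data are chosen so that $\min J_N = 0$.

```python
def widrow_hoff(data, n_weights, rho, epochs):
    """Per-presentation weight updates repeated over several epochs.

    data is a list of (x, y) pairs, following (x[k], y[k]) in the notes.
    """
    w = [0.0] * n_weights
    for _ in range(epochs):                      # one epoch = N presentations
        for x, y in data:
            delta = y - sum(wl * xl for wl, xl in zip(w, x))      # (9)
            w = [wi + rho * delta * xi for wi, xi in zip(w, x)]   # (8)
    return w

# Hypothetical samples generated by y = 2*x1 + 3*x2 (no noise).
data = [([1.0, 0.0], 2.0), ([0.0, 1.0], 3.0), ([1.0, 1.0], 5.0)]
w = widrow_hoff(data, n_weights=2, rho=0.1, epochs=300)
```

Unlike the batch method, nothing is stored between presentations: each sample changes the weights immediately and is then discarded until the next epoch.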

Convergence: As the recycling is repeated infinitely many times, does the weight vector converge to the optimal one, $\arg\min_{w} J_N(w_1, \ldots, w_n)$? … Consistency

If a constant learning rate $\rho > 0$ is used, this does not converge, unless $\min J_N = 0$.

[Figure: left, the cost $J_N$ over W-space (axes $w_1$, $w_2$) with the weights moving from the starting point $W_0$ toward the minimum point; right, the learning rate $\rho_k$ plotted against $k$, e.g. $\rho_k = \text{constant}/k$.]

If the learning rate is varied, e.g. $\rho_k = \dfrac{\text{constant}}{k}$, convergence can be guaranteed.

This is a special case of the Method of Stochastic Approximation.

Expected Loss Function

$$E[L(w)] = \int L(x, y \mid w)\, p(x)\, dx \tag{10}$$

$$L(x, y \mid w) = \frac{1}{2} \left( y - \hat{y}(x \mid w) \right)^2 \tag{11}$$

The stochastic approximation procedure for minimizing this expected loss function with respect to the weights $w_1, \ldots, w_n$ is

$$w_i[k+1] = w_i[k] - \rho[k]\, \frac{\partial}{\partial w_i} L(x[k], y[k] \mid w[k]) \tag{12}$$

where $x[k]$ is the training data presented at the $k$-th iteration. This estimate is proved consistent if the learning rate $\rho[k]$ satisfies:

(13)

1) $\lim_{k \to \infty} \rho[k] = 0$

2) $\lim_{k \to \infty} \sum_{i=1}^{k} \rho[i] = +\infty$. This condition prevents all the weights from converging so fast that error will remain forever uncorrected.

3) $\lim_{k \to \infty} \sum_{i=1}^{k} \rho[i]^2 < \infty$. This condition ensures that random fluctuations are eventually suppressed.

$$\lim_{k \to \infty} E\left[ (w_i[k] - w_{i0})^2 \right] = 0 \tag{14}$$

The estimated weights converge to their optimal values with probability 1.

Robbins and Monro, 1951
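As a worked check, the three learning-rate conditions can be verified for the schedule $\rho[k] = c/k$ with a constant $c > 0$. The symbol $c$ is introduced here only for illustration; the series results used are the standard ones for the harmonic series and for $\sum 1/i^2$.

```latex
\begin{align*}
\text{1)}\quad & \lim_{k\to\infty} \frac{c}{k} = 0, \\
\text{2)}\quad & \lim_{k\to\infty} \sum_{i=1}^{k} \frac{c}{i} = +\infty
  \quad \text{(the harmonic series diverges)}, \\
\text{3)}\quad & \lim_{k\to\infty} \sum_{i=1}^{k} \frac{c^2}{i^2} = \frac{c^2 \pi^2}{6} < \infty .
\end{align*}
% A constant rate \rho[k] = \rho > 0 satisfies 2) but violates 1) and 3):
% \lim \rho = \rho \neq 0 and \sum_{i=1}^{k} \rho^2 = k\rho^2 \to \infty.
```

The same check shows why a constant rate is not covered by the consistency result: the sum of squares grows without bound, so the random fluctuations are never suppressed.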

This stochastic approximation method in general needs more presentations of data, i.e. the convergence process is slower than batch processing. But the computation is very simple.
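A minimal sketch of update (12) with a decaying learning rate is given below for the simplest possible case: a single weight $w$, a constant input $x = 1$, and the loss $L = \frac{1}{2}(y - w)^2$. The function name, the sample values, and the choice $\rho[k] = 1/k$ are assumptions for illustration. In this special case the update reproduces the running average of the samples seen so far.

```python
def stochastic_approximation(samples, w0=0.0):
    """Apply w[k+1] = w[k] - rho[k] * dL/dw with rho[k] = 1/k.

    Assumes a single weight, input x = 1, and L = 0.5 * (y - w)^2.
    Returns the weight after each presentation.
    """
    w = w0
    history = []
    for k, y in enumerate(samples, start=1):
        rho = 1.0 / k                 # decaying learning rate
        grad = -(y - w)               # dL/dw for L = 0.5 * (y - w)^2
        w = w - rho * grad
        history.append(w)
    return history

# Hypothetical samples; the starting weight is deliberately far from the data.
samples = [2.0, 4.0, 6.0, 8.0]
history = stochastic_approximation(samples, w0=5.0)
```

Because $\rho[1] = 1$, the first presentation overwrites the initial weight, and every later step blends in the new sample with weight $1/k$, so `history` tracks the sample means 2, 3, 4, 5 up to floating-point rounding. Each step costs one multiplication and one addition, which illustrates how simple the computation is.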

8.3 Multi-Layer Perceptrons

The Exclusive OR Problem

| Input $x_1$ | Input $x_2$ | Output $y$ |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |

Can a single neural unit (perceptron) with weights $w_1, w_2, w_3$ produce the XOR truth table?

No, it cannot.

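[Figure: a single unit with inputs $x_1$, $x_2$ and a constant input 1, a summation giving $z$, and the output function $g(z)$; alongside it, the $x_1$-$x_2$ plane with the Class 0 and Class 1 points.]

$$z = w_1 x_1 + w_2 x_2 + w_3 \tag{15}$$

$$g(z) = \begin{cases} 1 & z > 0 \\ 0 & z \le 0 \end{cases} \tag{16}$$

Set $z = 0$; then $0 = w_1 x_1 + w_2 x_2 + w_3$ represents a straight line in the $x_1$-$x_2$ plane.

Class 0 and class 1 cannot be separated by a straight line. … Not linearly separable.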
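The claim that no single unit reproduces XOR can be checked directly by writing out what equations (15) and (16) require at the four input patterns. The short derivation below is a sketch added for illustration; it uses only the truth table and the threshold rule.

```latex
\begin{align*}
(x_1,x_2)=(0,0),\ y=0: &\quad w_3 \le 0 \\
(x_1,x_2)=(0,1),\ y=1: &\quad w_2 + w_3 > 0 \\
(x_1,x_2)=(1,0),\ y=1: &\quad w_1 + w_3 > 0 \\
(x_1,x_2)=(1,1),\ y=0: &\quad w_1 + w_2 + w_3 \le 0
\end{align*}
% Adding the second and third inequalities gives
%   w_1 + w_2 + 2 w_3 > 0, i.e. w_1 + w_2 + w_3 > -w_3 \ge 0,
% where the last step uses the first inequality (w_3 \le 0).
% This contradicts the fourth inequality, so no w_1, w_2, w_3 exist.
```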

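Consider a nonlinear function in lieu of (15):

$$z = f(x_1, x_2) = x_1 + x_2 - 2 x_1 x_2 - \frac{1}{3} \tag{17}$$

$f(0,0) = -\dfrac{1}{3}$ and $f(1,1) = -\dfrac{1}{3}$, so $z < 0$: Class 0

$f(1,0) = f(0,1) = \dfrac{2}{3} > 0$, so $z > 0$: Class 1

[Figure: the $x_1$-$x_2$ plane divided into the regions $z > 0$ and $z < 0$.]

Next, replace $x_1 x_2$ by a new variable $x_3$:

$$z = x_1 + x_2 - 2 x_3 - \frac{1}{3} \tag{18}$$

This is a linear function of $x_1$, $x_2$, $x_3$: Linearly Separable.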
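The values of (17) and the equivalence with (18) when $x_3 = x_1 x_2$ can be checked numerically. The sketch below uses exact fractions to avoid rounding; the function and variable names are illustrative only.

```python
from fractions import Fraction

def f(x1, x2):
    """Nonlinear function (17): x1 + x2 - 2*x1*x2 - 1/3."""
    return x1 + x2 - 2 * x1 * x2 - Fraction(1, 3)

def z_linear(x1, x2, x3):
    """Linear function (18) of the augmented input (x1, x2, x3)."""
    return x1 + x2 - 2 * x3 - Fraction(1, 3)

# XOR truth table: (x1, x2) -> y
xor_table = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

# Class 1 if z > 0, Class 0 otherwise, as in the threshold rule (16).
classes = {p: int(f(*p) > 0) for p in xor_table}

# With x3 = x1 * x2, (18) gives the same z as (17) at every pattern.
same_z = all(z_linear(x1, x2, x1 * x2) == f(x1, x2) for x1, x2 in xor_table)
```

The nonlinearity has been moved out of $z$ and into the extra input $x_3$, which is what makes the augmented problem linearly separable.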

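Hidden Units

• Augment the original input patterns

• Decode the input and generate an internal representation

[Figure: left, the $(x_1, x_2, x_3)$ space with the plane $z = 0$ separating the regions $z > 0$ and $z < 0$; right, a network in which a hidden unit ($\sum$) computes $x_3$ from $x_1$ and $x_2$, and an output unit ($\sum$) computes $y$ from $x_1$, $x_2$ and $x_3$. Labels recoverable from the figure: weights $+1$, $+1$ and bias $-1.5$ on the hidden unit; weights $+1$, $+1$, $-2$ and bias $-1/3$ on the output unit.]

Hidden Unit: not directly visible from the output.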
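A hidden unit that generates $x_3$ can be sketched as a second threshold unit feeding the output unit. The weights below are read from the labels that survive from the figure ($+1$, $+1$, $-1.5$ for the hidden unit and $+1$, $+1$, $-2$, $-1/3$ for the output unit); how those labels attach to the connections is an assumption, as are the function names.

```python
def g(z):
    """Threshold output function (16): 1 if z > 0, else 0."""
    return 1 if z > 0 else 0

def hidden_unit(x1, x2):
    """Assumed hidden unit: x3 = g(x1 + x2 - 1.5), equal to x1*x2 on binary inputs."""
    return g(1.0 * x1 + 1.0 * x2 - 1.5)

def xor_network(x1, x2):
    """Output unit applied to the augmented input (x1, x2, x3), as in (18)."""
    x3 = hidden_unit(x1, x2)
    z = 1.0 * x1 + 1.0 * x2 - 2.0 * x3 - 1.0 / 3.0
    return g(z)

# Evaluate the network on all four input patterns.
outputs = {(x1, x2): xor_network(x1, x2) for x1 in (0, 1) for x2 in (0, 1)}
```

The hidden unit fires only when both inputs are 1, so it supplies the product term that a single unit could not represent, and the output unit then only has to separate the augmented patterns with a plane.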

Extending this argument, we arrive at a multi-layer network having multiple hidden

layers between input and output layers.

Multi-Layer Perceptron

[Figure: a network with layers 0, 1, 2, …, $m$, …, $M$. Layer 0 is the input layer, layer $M$ is the output layer, and the layers in between are hidden layers.]