Homework Assignment 5 - SOLUTIONS Due Monday, November 9, 2015

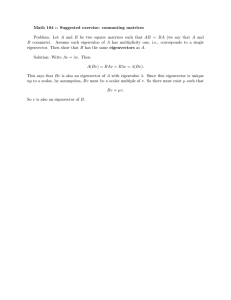

advertisement

CSCI 510/EENG 510

Image and Multidimensional Signal Processing

Fall 2015


Notes: Please email me your solutions for these problems (in order) as a single Word or PDF document. If you do a problem on paper by hand, please scan it in and paste it into the document (although I would prefer it typed!).

1. (20 pts) Do Principal Components Analysis (PCA) on the pixels of the RGB image “pears.png” from the Matlab image processing toolbox and show how it can be represented with two values per pixel. Give:

a. The 3 eigenvalues and 3 eigenvectors for this image.

b. Show the color RGB image reconstructed from the top two principal components.

c. Plot the reconstructed pixels in RGB space.

Solution:

We first convert the image to a collection of (R,G,B) vectors. The image is 3-dimensional, of size (height, width, depth), where depth is the number of bands (3). We convert it to a 2-dimensional array of size (height*width, depth), so that each row represents an (R,G,B) pixel.

We can do this using Matlab’s “reshape” function. According to the help page for this function, “reshape(X,M,N) returns the M-by-N matrix whose elements are taken columnwise from X.”
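To make the column-major ordering concrete, here is a small pure-Python sketch (the function names are hypothetical, not Matlab functions) that flattens an image the same way reshape(RGB, [], 3) does and then undoes it. With 0-based indices, pixel (r, c) lands in row r + c·height; in Matlab's 1-based indexing this is row r + (c − 1)·height.

```python
def image_to_rows(img):
    """Flatten an H x W x 3 image (nested lists) into an (H*W) x 3 list of
    pixels, walking down each column in turn (column-major order), which is
    the order Matlab's reshape(RGB, [], 3) uses."""
    height, width = len(img), len(img[0])
    return [list(img[r][c]) for c in range(width) for r in range(height)]


def rows_to_image(rows, height, width):
    """Inverse of image_to_rows: pixel (r, c) comes from row r + c*height
    (0-based), like reshape(X(:,k), height, width) applied to each band."""
    return [[list(rows[r + c * height]) for c in range(width)]
            for r in range(height)]


# A 2 x 3 test "image" whose pixel (r, c) stores (r, c, 0), so positions are visible.
img = [[(r, c, 0) for c in range(3)] for r in range(2)]
X = image_to_rows(img)
print(X[:3])  # [[0, 0, 0], [1, 0, 0], [0, 1, 0]]: column 0 first, then column 1
assert rows_to_image(X, 2, 3) == [[list(p) for p in row] for row in img]
```

The round trip is exact because both functions use the same index mapping, which is why the Matlab code can rebuild Y1, Y2, Y3 and the reconstructed image with reshape(..., height, width).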

(a) We compute the mean and covariance of the collection of vectors. The principal components are the eigenvectors of the covariance matrix. The Matlab code:

% HW5, problem 1

% Do principal component analysis on an RGB image

clear all

close all

RGB = im2double(imread('pears.png'));

% Convert 3-dimensional array to 2D, where each row is a pixel (RGB)

X = reshape(RGB, [], 3);

N = size(X,1); % N is the number of pixels

% Get mean and covariance

mx = mean(X);

Cx = cov(X);

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Get eigenvalues and eigenvectors of Cx.

% Produces V,D such that Cx*V = V*D.

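% So the eigenvectors are the columns of V. The code below assumes eig

% returns the eigenvalues of the symmetric matrix Cx in ascending order, so

% the largest eigenvalue is D(3,3) and its eigenvector is V(:,3).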

[V,D] = eig(Cx);

e1 = V(:,3);

disp('Eigenvector e1:'), disp(e1);

e2 = V(:,2);

disp('Eigenvector e2:'), disp(e2);

e3 = V(:,1);

disp('Eigenvector e3:'), disp(e3);

d1 = D(3,3);


disp('Eigenvalue d1:'), disp(d1);

d2 = D(2,2);

disp('Eigenvalue d2:'), disp(d2);

d3 = D(1,1);

disp('Eigenvalue d3:'), disp(d3);

The resulting values:

| Component | Eigenvalue | Eigenvector (R, G, B) |
|---|---|---|
| 1 | d1 = 0.0679 | e1 = (0.6533, 0.6041, 0.4564) |
| 2 | d2 = 0.0033 | e2 = (-0.6798, 0.2026, 0.7049) |
| 3 | d3 = 5.3952e-04 | e3 = (-0.3333, 0.7708, -0.5430) |
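For readers without Matlab, here is a minimal pure-Python sketch of part (a): subtract the mean, form the covariance with the same N − 1 normalization as Matlab's cov, and diagonalize it. The cyclic Jacobi rotation method is swapped in for eig (it is a standard method for small symmetric matrices, not necessarily what Matlab uses internally), and the eigenpairs are sorted explicitly into descending order instead of relying on the order eig returns. The function names and the tiny pixel list are hypothetical; the sketch does not read pears.png.

```python
import math


def mean_and_cov(pixels):
    """Mean vector and sample covariance (divide by N-1, like Matlab's cov)."""
    n, d = len(pixels), len(pixels[0])
    mx = [sum(p[k] for p in pixels) / n for k in range(d)]
    cx = [[sum((p[i] - mx[i]) * (p[j] - mx[j]) for p in pixels) / (n - 1)
           for j in range(d)] for i in range(d)]
    return mx, cx


def jacobi_eigh(a, sweeps=50):
    """Eigen-decomposition of a small symmetric matrix by cyclic Jacobi
    rotations. Returns (values, vectors) sorted by decreasing eigenvalue;
    vectors[k] is the unit eigenvector for values[k], i.e. row k of A."""
    n = len(a)
    a = [row[:] for row in a]
    v = [[float(i == j) for j in range(n)] for i in range(n)]
    for _ in range(sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < 1e-30:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                # Rotation angle chosen so that entry (p, q) becomes zero.
                theta = (a[q][q] - a[p][p]) / (2.0 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.hypot(theta, 1.0))
                c = 1.0 / math.hypot(t, 1.0)
                s = t * c
                for k in range(n):  # A <- A J (columns p and q)
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # A <- J^T A (rows p and q)
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
                for k in range(n):  # V <- V J accumulates the eigenvectors
                    vkp, vkq = v[k][p], v[k][q]
                    v[k][p], v[k][q] = c * vkp - s * vkq, s * vkp + c * vkq
    order = sorted(range(n), key=lambda k: a[k][k], reverse=True)
    values = [a[k][k] for k in order]
    vectors = [[v[i][k] for i in range(n)] for k in order]
    return values, vectors


# Hypothetical RGB pixels in [0, 1] (not read from pears.png).
pixels = [[0.20, 0.30, 0.10], [0.40, 0.50, 0.20],
          [0.60, 0.60, 0.40], [0.30, 0.50, 0.20]]
mx, cx = mean_and_cov(pixels)
d, A = jacobi_eigh(cx)   # d[0] >= d[1] >= d[2]; A[0] plays the role of e1
print(d)
```

Stacking the sorted eigenvectors as rows gives the matrix A used in part (b); each eigenvector is only defined up to sign, so a different solver may flip the signs shown in the table.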

(b) We next transform the input vectors using the equation y = A(x - mx), where mx is the mean of the vectors and A is the matrix formed from the eigenvectors of Cx, such that the 1st row of A is the eigenvector corresponding to the largest eigenvalue, the 2nd row is the eigenvector corresponding to the second largest eigenvalue, etc. The Matlab code:

% Construct matrix A such that the 1st row of A is the eigenvector

% corresponding to the largest eigenvalue, the 2nd row is the eigenvector

% corresponding to the second largest eigenvalue, etc.

A = [e1'; e2'; e3'];

% Project input vectors x onto eigenvectors. For each (column) vector x,

% we will use the equation y = A*(x - mx).

% To explain the Matlab commands below:

%   X is our (N,3) array of vectors; each row is a vector.

%   mx is the mean of the vectors, size (1,3).

%   We first subtract off the mean using X - repmat(mx,N,1).

%   We then transpose that result so that each vector is a column.

%   We then apply our transform A to each column.

Y = A*(X - repmat(mx,N,1))';

% Y has size 3xN

% Display y vectors as images

[height,width,depth] = size(RGB);

Y1 = reshape(Y(1,:), height, width);

Y2 = reshape(Y(2,:), height, width);

Y3 = reshape(Y(3,:), height, width);

figure;


subplot(1,3,1), imshow(Y1,[]);

subplot(1,3,2), imshow(Y2,[]);

subplot(1,3,3), imshow(Y3,[]);

The Y1,Y2,Y3 vectors, displayed as images:

Next we reconstruct the original vectors from only the top two principal components, using the equation $x_r = A^T y + m_x$ with only the first two rows of $A$ and of $y$. The Matlab code:

% Reconstruct image using only Y1 and Y2. For each (column) vector y,

% we will use the equation x = A'*y + mx.

% To explain the Matlab commands below:

%   Y is our (3,N) array of vectors, where each column is a vector.

%   A(1:2,:) is the first two rows of A.

%   Y(1:2,:) is the first two rows of Y.

%   A(1:2,:)' * Y(1:2,:) produces the reconstructed, still mean-removed,

%   vectors (3xN); we then transpose that to make an array of size Nx3,

%   and add the mean.

Xr = ( A(1:2,:)' * Y(1:2,:) )' + repmat(mx,N,1);

% Xr has size Nx3

% Display reconstructed image

Ir(:,:,1) = reshape(Xr(:,1), height, width);

Ir(:,:,2) = reshape(Xr(:,2), height, width);

Ir(:,:,3) = reshape(Xr(:,3), height, width);

figure, imshow(Ir);

The original image and the reconstructed image:

[Figure panels: Original; Reconstructed]


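For this image, the reconstructed image is a very good approximation to the original image, probably because it consists mostly of shades of green. We can predict the squared error of the reconstructed image: the mean squared error per pixel (summed over R, G, and B) equals the sum of the eigenvalues corresponding to the principal components we discarded (exactly so when the total squared error is divided by N − 1, matching the normalization used by cov). For this image, that is only the third eigenvalue, d3 ≈ 5.4e-04, which is less than 1% of the total variance d1 + d2 + d3 ≈ 0.0717.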
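The error prediction can be checked numerically. The pure-Python sketch below builds a small synthetic data set whose principal axes are known by construction (the orthonormal matrix A, the mean MX, and the coefficients are made up, not taken from pears.png), applies y = A(x - mx), reconstructs from the top k components with x_r = A^T y + m_x, and compares the squared error with the discarded eigenvalue. The coefficient columns are zero-mean and mutually orthogonal, so the rows of A are exactly the eigenvectors of the sample covariance and no eigen-solver is needed here.

```python
import math

S2, S3, S6 = math.sqrt(2.0), math.sqrt(3.0), math.sqrt(6.0)
# Hypothetical orthonormal principal axes (rows), largest variance first.
A = [[1 / S3, 1 / S3, 1 / S3],
     [1 / S2, -1 / S2, 0.0],
     [1 / S6, 1 / S6, -2 / S6]]
MX = [0.5, 0.4, 0.3]  # hypothetical mean color


def project(x, mx, a):
    """y = A (x - mx): coordinates of x along each principal axis."""
    d = [xi - mi for xi, mi in zip(x, mx)]
    return [sum(row[j] * d[j] for j in range(len(d))) for row in a]


def reconstruct(y, mx, a, k):
    """x_r = A_k^T y_k + mx, using only the first k principal components."""
    return [mx[j] + sum(a[i][j] * y[i] for i in range(k)) for j in range(len(mx))]


# Synthetic coefficients: zero-mean, mutually orthogonal columns whose
# variances (12, 4/3, 0.16/3) decrease from axis 1 to axis 3.
coeffs = [[3.0, 1.0, 0.2], [3.0, -1.0, -0.2], [-3.0, 1.0, -0.2], [-3.0, -1.0, 0.2]]
pixels = [reconstruct(y, MX, A, 3) for y in coeffs]  # x = A^T y + mx

n = len(pixels)
lam3 = sum(y[2] ** 2 for y in coeffs) / (n - 1)  # eigenvalue of the discarded axis
sq_err = 0.0
for x in pixels:
    xr = reconstruct(project(x, MX, A), MX, A, 2)  # keep the top two components
    sq_err += sum((xi - ri) ** 2 for xi, ri in zip(x, xr))
print(sq_err / (n - 1), lam3)  # the two numbers agree (about 0.0533)
```

Dividing the total squared error by N instead of N − 1 would give a slightly smaller number; for an image with hundreds of thousands of pixels the difference is negligible.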

2. (20 pts) (This is exercise 6.15 in the Gonzalez and Woods textbook.) Consider the following image composed of solid color squares. For discussing your answer, choose a gray scale consisting of eight shades of gray, 0 through 7, where 0 is black and 7 is white. Suppose that the image is converted to HSI color space.

a. Sketch the hue image and provide the values in the image.

b. Sketch the saturation image and provide the values in the image.

c. Sketch the intensity image and provide the values in the image.

If you cannot assign a specific value to a point in the resulting image, explain why.

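[Figure: image composed of eight solid color squares: red, green, blue, magenta, cyan, yellow, white, and black]

Solution: The original image is the one shown in the problem statement above.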

We use equations 6.2-2 through 6.2-4 to convert RGB to HSI. The following is from an Excel spreadsheet:

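| Color | R | G | B | theta (deg) | H | S | I | Scaled H | Scaled S | Scaled I |
|---|---|---|---|---|---|---|---|---|---|---|
| red | 1 | 0 | 0 | 0.00 | 0.00 | 1.00 | 0.33 | 0 | 7 | 2 |
| green | 0 | 1 | 0 | 120.00 | 0.33 | 1.00 | 0.33 | 2 | 7 | 2 |
| blue | 0 | 0 | 1 | 120.00 | 0.67 | 1.00 | 0.33 | 5 | 7 | 2 |
| magenta | 1 | 0 | 1 | 60.00 | 0.83 | 1.00 | 0.67 | 6 | 7 | 5 |
| cyan | 0 | 1 | 1 | 180.00 | 0.50 | 1.00 | 0.67 | 4 | 7 | 5 |
| yellow | 1 | 1 | 0 | 60.00 | 0.17 | 1.00 | 0.67 | 1 | 7 | 5 |
| white | 1 | 1 | 1 | #DIV/0! | #DIV/0! | 0.00 | 1.00 | #DIV/0! | 0 | 7 |

(H is theta or 360° − theta, divided by 360°; the scaled columns map H, S, and I onto the gray levels 0 through 7.)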

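The hue, saturation, and intensity images are shown below, from left to right (figure not reproduced). Note that the hue for white (or any shade of gray) is undefined, because the numerator and the denominator of the expression for theta are both zero when R = G = B. Black is not in the spreadsheet, but the same reasoning applies to it: its hue is undefined, its saturation is also undefined (S involves 0/0 when R = G = B = 0), and its intensity is 0.

The scaled values of these regions are given in the last three columns of the table above.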
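The spreadsheet values can be reproduced with a short pure-Python sketch of the textbook conversion: theta = arccos{½[(R−G)+(R−B)] / [(R−G)² + (R−B)(G−B)]^½}, H = theta if B ≤ G and 360° − theta otherwise, S = 1 − 3·min(R,G,B)/(R+G+B), and I = (R+G+B)/3. The function names, the use of None for an undefined value, and the round-half-up scaling to 8 gray levels are assumptions made for illustration.

```python
import math


def rgb_to_hsi(r, g, b):
    """Convert RGB in [0, 1] to (H, S, I), with H normalized to [0, 1].

    Returns None for a component that is undefined: H when R = G = B (the
    theta expression is 0/0), and also S for black (R + G + B = 0)."""
    total = r + g + b
    i = total / 3.0
    s = None if total == 0 else 1.0 - 3.0 * min(r, g, b) / total
    num = 0.5 * ((r - g) + (r - b))
    den = math.sqrt((r - g) ** 2 + (r - b) * (g - b))
    if den == 0:
        h = None  # any shade of gray, including white and black
    else:
        # Clamp so rounding can never push the ratio outside [-1, 1].
        theta = math.degrees(math.acos(max(-1.0, min(1.0, num / den))))
        h = (theta if b <= g else 360.0 - theta) / 360.0
    return h, s, i


def to_gray_levels(value, levels=8):
    """Scale a value in [0, 1] to the gray levels 0..levels-1 (round half up)."""
    return None if value is None else int(math.floor(value * (levels - 1) + 0.5))


for name, rgb in [("red", (1, 0, 0)), ("cyan", (0, 1, 1)), ("white", (1, 1, 1))]:
    print(name, [to_gray_levels(v) for v in rgb_to_hsi(*rgb)])
```

For red this gives [0, 7, 2], matching the scaled columns of the table, and for white the hue comes back as None, which is the sketch's way of saying "undefined".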

3. (20 pts) In class you trained a decision tree to classify two types of shapes (“plus” and “hearts”), and applied the tree to classify an unknown shape. Another type of classifier is the “minimum distance” classifier, which chooses the class with the minimum distance to the class center. Apply this classifier to the unknown shape, and find which class it belongs to. Use the Mahalanobis distance as described in the lecture notes, and give the value of the distance to the closest class. Use the same features that we used in class (i.e., “area” and “solidity”). Although Matlab has a function to compute Mahalanobis distance, don’t use that; compute it yourself instead.

Solution: The Mahalanobis distance from a vector $x$ to the (mean) class center $x_c$ is

$$d_m = \sqrt{(x - x_c)^T C^{-1} (x - x_c)}$$

where $C$ is the covariance matrix of the class,

$$C = E\left[(x - x_c)(x - x_c)^T\right] \quad \text{or} \quad C_{ij} = \frac{1}{N} \sum_{k=1}^{N} (x_{k,i} - \mu_i)(x_{k,j} - \mu_j)$$

with $\mu = x_c$ the class mean and the sum running over the $N$ training vectors of the class. (Matlab's cov, used in the code below, divides by $N - 1$ instead of $N$.)

Using the Matlab code below, I get:

d1 (distance to the “hearts” class) is 5.75

d2 (distance to the “plus” class) is 0.86

So the shape should be classified as a “plus”.

% HW5 problem 3 - minimum distance classifier

clear all

close all

% Read in images with training data

% Invert so that symbols are 1's and background is 0's

I1 = ~imread('hearts.bmp');

I2 = ~imread('plus.bmp');

[L1,n1] = bwlabel(I1);

[L2,n2] = bwlabel(I2);

% Extract a vector of features for each symbol,

% using the features returned by "regionprops"

props1 = regionprops(L1, 'all');

props2 = regionprops(L2, 'all');

% Create a set of feature vectors, one row per symbol. The elements of

% each feature vector are area and solidity.

X1(:,1) = cat(1, props1(:).Area);

X1(:,2) = cat(1, props1(:).Solidity);

X2(:,1) = cat(1, props2(:).Area);

X2(:,2) = cat(1, props2(:).Solidity);

m1 = mean(X1);    % means

m2 = mean(X2);

C1 = cov(X1);     % covariances

C2 = cov(X2);

% Read the test image

I3 = ~imread('test.bmp');

[L3,n3] = bwlabel(I3);

if n3 ~= 1

    fprintf('Hey! Expected exactly one object, but found %d!\n', n3);

end

% Extract a vector of features for the symbol,

% using the features returned by "regionprops"

props3 = regionprops(L3, 'all');

Xtest(1) = props3(1).Area;

Xtest(2) = props3(1).Solidity;

% Find the Mahalanobis distance to each class center

d1 = sqrt( (Xtest-m1) * inv(C1) * (Xtest-m1)' )

d2 = sqrt( (Xtest-m2) * inv(C2) * (Xtest-m2)' )

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Let's plot the training vectors and their means

hold on

plot(X1(:,1), X1(:,2), '+r');

plot(X2(:,1), X2(:,2), '+g');

plot(m1(1), m1(2), 'or');

plot(m2(1), m2(2), 'og');

xlabel('Area'), ylabel('Solidity');

% Plot the contours of equal probability density on a grid of feature values

Xmin = min( cat(1,X1,X2) );

Xmax = max( cat(1,X1,X2) );

dX = (Xmax-Xmin)/50;

[f1,f2] = meshgrid(Xmin(1):dX(1):Xmax(1), Xmin(2):dX(2):Xmax(2));

% "hearts" class: 2-D Gaussian density with mean m1 and covariance C1

Cinv = inv(C1);

detCsqrt = sqrt(det(C1));

for i=1:size(f1,1)

    for j=1:size(f1,2)

        x = [f1(i,j) f2(i,j)];

        fX(i,j) = (1/(2*pi*detCsqrt)) * exp( -0.5*(x-m1)*Cinv*(x-m1)' );

    end

end

fmax = 1/(2*pi*detCsqrt);

contour(f1, f2, fX, 'LevelList', [0.4*fmax 0.6*fmax 0.8*fmax]);

% "plus" class: 2-D Gaussian density with mean m2 and covariance C2

Cinv = inv(C2);

detCsqrt = sqrt(det(C2));

for i=1:size(f1,1)

    for j=1:size(f1,2)

        x = [f1(i,j) f2(i,j)];

        fX(i,j) = (1/(2*pi*detCsqrt)) * exp( -0.5*(x-m2)*Cinv*(x-m2)' );

    end

end

fmax = 1/(2*pi*detCsqrt);

contour(f1, f2, fX, 'LevelList', [0.4*fmax 0.6*fmax 0.8*fmax]);

% Plot the new shape on the feature space

plot(Xtest(1), Xtest(2), '+b');

The plot below confirms that the test shape (the blue “+”) is closer to the “plus” class center (the green “o”) than to the “hearts” class center (the red “o”). Contour lines are drawn at 0.4, 0.6, and 0.8 of the maximum probability density of each class.


[Figure: training vectors, class means, equal-probability contours, and the test shape (blue “+”) in the feature space; horizontal axis Area (about 3000 to 11000), vertical axis Solidity (about 0.45 to 0.95)]
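The contour levels have a direct interpretation in terms of the distance. For the bivariate Gaussian used in the plotting code, f(x) = exp(−d²/2)/(2π√det C), where d is the Mahalanobis distance to the class mean, so f/fmax = exp(−d²/2) and the contour at a fraction p of the maximum is the ellipse d = √(−2 ln p). A small Python sketch (the function name is hypothetical) converting the three levels:

```python
import math


def level_to_mahalanobis(p):
    """Mahalanobis distance of the contour on which a 2-D Gaussian density
    equals p times its peak value (0 < p <= 1)."""
    return math.sqrt(-2.0 * math.log(p))


for p in (0.4, 0.6, 0.8):
    print(f"{p:.1f} * fmax  ->  d = {level_to_mahalanobis(p):.3f}")
```

The 0.4, 0.6, and 0.8 contours therefore lie at d ≈ 1.35, 1.01, and 0.67. With d2 = 0.86, the test shape sits between the 0.8 and 0.6 contours of the “plus” class, while d1 = 5.75 places it far outside all three “hearts” contours.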

Note: another way to do this is with Matlab's function "mahal", which is in the statistics toolbox. This returns the squared distance, so you have to take the square root:

sqrt(mahal(Xtest, X1))

sqrt(mahal(Xtest, X2))
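The distance computation itself is small enough to write out by hand, as the problem requires. Below is a pure-Python sketch for two features: it estimates each class mean and covariance (N − 1 normalization, like cov), inverts the 2×2 covariance in closed form, and picks the class with the smaller Mahalanobis distance. The function names and the feature values in the usage example are hypothetical placeholders, not measurements of hearts.bmp or plus.bmp.

```python
import math


def mean_cov_2d(samples):
    """Mean and sample covariance (N-1 normalization, like Matlab's cov)
    of a list of 2-D feature vectors such as [area, solidity]."""
    n = len(samples)
    m = [sum(s[k] for s in samples) / n for k in (0, 1)]
    c = [[sum((s[i] - m[i]) * (s[j] - m[j]) for s in samples) / (n - 1)
          for j in (0, 1)] for i in (0, 1)]
    return m, c


def mahalanobis_2d(x, m, c):
    """sqrt((x - m) C^-1 (x - m)^T) using the closed-form 2x2 inverse."""
    det = c[0][0] * c[1][1] - c[0][1] * c[1][0]
    if det == 0:
        raise ValueError("covariance matrix is singular")
    inv = [[c[1][1] / det, -c[0][1] / det],
           [-c[1][0] / det, c[0][0] / det]]
    d = [x[0] - m[0], x[1] - m[1]]
    d2 = sum(d[i] * inv[i][j] * d[j] for i in (0, 1) for j in (0, 1))
    return math.sqrt(d2)  # d2 alone is the squared distance that mahal returns


def classify(x, classes):
    """Minimum-distance rule: name of the class whose center is closest."""
    return min(classes, key=lambda name: mahalanobis_2d(x, *classes[name]))


# Hypothetical [area, solidity] training vectors, for illustration only.
hearts = mean_cov_2d([[5000, 0.60], [5600, 0.62], [6100, 0.58], [5400, 0.65]])
plus = mean_cov_2d([[8000, 0.90], [8600, 0.88], [9100, 0.92], [8300, 0.86]])
print(classify([8500, 0.89], {"hearts": hearts, "plus": plus}))  # plus
```

Because each class uses its own covariance, the very different scales of area (thousands) and solidity (below 1) do not let area dominate the distance, which is the main reason to prefer Mahalanobis over plain Euclidean distance here.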

4. (20 pts) Below is a small region of an image consisting of a noisy step edge. (The original image had value=30 on the left of the step, and value=10 to the right of the step.) It was corrupted by additive Gaussian noise with zero mean and variance = 6.5.

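| row | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 32 | 28 | 29 | 30 | 27 | 13 | 14 | 16 | 12 | 7 |
| 2 | 25 | 27 | 32 | 31 | 28 | 11 | 7 | 12 | 6 | 11 |
| 3 | 28 | 28 | [30] | 33 | 31 | [10] | 11 | [8] | 14 | 12 |
| 4 | 31 | 32 | 33 | 32 | 27 | 11 | 12 | 12 | 6 | 12 |
| 5 | 32 | 30 | 29 | 29 | 31 | 13 | 9 | 12 | 13 | 10 |

(Values in brackets are the boxed points: (row, column) = (3,3), (3,6), and (3,8).)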

At the three points indicated by the boxes, compute the value of the adaptive filter shown in Equation 5.3-12 in the textbook. (The size of the filter is 5x5.)

(Note: if you compute the variance by dividing by N-1 instead of N, you get a slightly different result. The code below uses Matlab's var, which divides by N-1.)

Solution:

The noisy image looks like the figure below, with the points of interest marked with red boxes:


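The equation for the adaptive filter is

$$\hat{f}(x,y) = g(x,y) - \frac{\sigma_\eta^2}{\sigma_L^2}\left[g(x,y) - m_L\right]$$

where $\sigma_\eta^2$ is the noise variance (6.5 here), and $m_L$ and $\sigma_L^2$ are the local mean and local variance of $g$ in the window. We compute the local mean and variance in a 5x5 window surrounding each of the three points of interest. You can do this by hand or write a small piece of Matlab code.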

% Input data: the noisy step edge, 5 rows x 10 columns

g = [32 28 29 30 27 13 14 16 12 7; 25 27 32 31 28 11 7 12 6 11; 28 28 30 33 31 10 11 8 14 12; 31 32 33 32 27 11 12 12 6 12; 32 30 29 29 31 13 9 12 13 10];

vN = 6.5;    % noise variance, given in the problem

n = 2;       % the window is g(r-n:r+n, c-n:c+n), i.e. 5x5

pts = [3 3; 3 8; 3 6];    % points (r,c) about which to compute

for k = 1:size(pts,1)

    r = pts(k,1); c = pts(k,2);

    gsub = g(r-n:r+n, c-n:c+n);

    mL = mean2(gsub);      % local mean

    vL = var(gsub(:));     % local variance (var divides by N-1)

    f = g(r,c) - (vN/vL)*(g(r,c) - mL);

    fprintf('At point (r,c)=(%d,%d), local mean = %f, local var = %f, filter value = %f\n', r, c, mL, vL, f);

end

The results are:

At point (r,c)=(3,3), local mean = 29.800000, local var = 4.666667, filter value = 29.721429

At point (r,c)=(3,8), local mean = 10.960000, local var = 6.623333, filter value = 10.904882

At point (r,c)=(3,6), local mean = 18.800000, local var = 90.250000, filter value = 10.633795


These results show the filter behaving as intended. Where the local variance is comparable to the estimated noise variance (i.e., on the left and right of the step edge), the filter outputs a value close to the local mean. Where the local variance is much higher than the estimated noise variance (i.e., where the window overlaps the step edge), the filter outputs a value close to the original value at that point, so the edge is preserved.

As described in section 5.3.3, it is assumed that $\sigma_\eta^2 \le \sigma_L^2$, although due to the random nature of the local noise, this condition can be violated in places. It is violated here at (3,3), where $\sigma_L^2 \approx 4.67 < 6.5$. This might cause problems such as the filter returning negative intensity values. The book suggests two approaches for dealing with this: (1) test the ratio $\sigma_\eta^2 / \sigma_L^2$ and set it to 1 if it is greater than 1, or (2) apply the filter as shown and just rescale the intensity values at the end to eliminate negative values.

In our example, the filter does not generate any negative values. However, if you took approach #1, you could change the statement that computes the filter to:

f = g(r,c) - min(1.0, vN/vL)*(g(r,c) - mL);

In this case, you would get the following output:

At point (r,c)=(3,3), local mean = 29.800000, local var = 4.666667, filter value = 29.800000

At point (r,c)=(3,8), local mean = 10.960000, local var = 6.623333, filter value = 10.904882

At point (r,c)=(3,6), local mean = 18.800000, local var = 90.250000, filter value = 10.633795
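For readers who want to check these numbers outside Matlab, here is a pure-Python sketch of the same computation using the standard-library statistics module (whose variance, like Matlab's var, divides by N − 1). The 5×10 array is the noisy step edge from the problem, in the same layout as the Matlab matrix g; the function name and the clamp flag (which implements approach #1) are hypothetical.

```python
import statistics

# Noisy step edge from the problem (5 rows x 10 columns).
G = [[32, 28, 29, 30, 27, 13, 14, 16, 12, 7],
     [25, 27, 32, 31, 28, 11, 7, 12, 6, 11],
     [28, 28, 30, 33, 31, 10, 11, 8, 14, 12],
     [31, 32, 33, 32, 27, 11, 12, 12, 6, 12],
     [32, 30, 29, 29, 31, 13, 9, 12, 13, 10]]
NOISE_VAR = 6.5


def adaptive_filter(g, r, c, noise_var, n=2, clamp=False):
    """Adaptive local noise-reduction filter at 1-based (r, c) using a
    (2n+1) x (2n+1) window: f = g - (noise_var / local_var) * (g - local_mean).
    The local variance uses the N-1 divisor. With clamp=True the ratio is
    limited to 1 (approach #1). Returns (local_mean, local_var, f)."""
    window = [g[i][j] for i in range(r - 1 - n, r + n)
              for j in range(c - 1 - n, c + n)]
    m_l = statistics.mean(window)
    v_l = statistics.variance(window)  # sample variance, divisor N-1
    ratio = noise_var / v_l
    if clamp:
        ratio = min(1.0, ratio)
    center = g[r - 1][c - 1]
    return m_l, v_l, center - ratio * (center - m_l)


for r, c in [(3, 3), (3, 8), (3, 6)]:
    m_l, v_l, f = adaptive_filter(G, r, c, NOISE_VAR)
    print(f"At point (r,c)=({r},{c}), local mean = {m_l:f}, "
          f"local var = {v_l:f}, filter value = {f:f}")
```

Passing clamp=True changes only the result at (3,3), the one point where the local variance is smaller than the noise variance.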

5. (20 pts) Give a summary of your progress so far on your final project. Describe your approach, any findings or results obtained so far, and any problems that you are encountering. Points will be given based on how much progress has been made, and how specific the description is.
