A Nearly-linear-time Spectral Algorithm for Balanced Graph Partitioning Lorenzo Orecchia

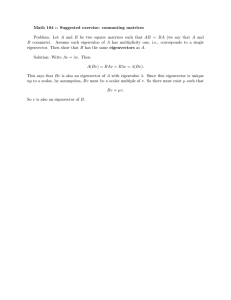

A Nearly-linear-time Spectral Algorithm

for Balanced Graph Partitioning

Lorenzo Orecchia

(MIT)

Joint work with

Nisheeth Vishnoi (MSR India) and Sushant Sachdeva (Princeton)

Spectral Algorithms for Graph Problems

What are they?

Spectral algorithms see the input graph as a linear operator

[Figure: adjacency matrix of a graph representing similarities between music artists. Image by David Gleich.]

Algorithmic primitives:

- matrix multiplication,

- eigenvector decomposition,

- Gaussian elimination

Spectral Algorithms for Graph Problems

Why are they useful …

… in practice?

• Simple to implement

• Can exploit very efficient linear algebra routines

• Perform provably well for many problems

… in theory?

• Many new interesting results based on spectral techniques:

Laplacian Solvers, Sparsification, s-t Maxflow, etc.

• Geometric viewpoint

• Connections to Markov Chains and Probability Theory

Spectral Algorithms for Graph Partitioning

Spectral algorithms are widely used in many graph-partitioning applications:

clustering, image segmentation, community-detection, etc.

CLASSICAL VIEW:

- Based on Cheeger’s Inequality

- Sweep cuts of eigenvectors reveal sparse cuts in the graph

NEW TREND:

- Random walk vectors replace eigenvectors:

• Fast Algorithms for Graph Partitioning

• Local Graph Partitioning

• Real Network Analysis

- Different random walks: PageRank, Heat-Kernel, etc.

Why Random Walks? A Practitioner’s View

Advantages of Random Walks:

1) Quick approximation to eigenvector in massive graphs

$A$ = adjacency matrix

$D$ = diagonal degree matrix

$W = AD^{-1}$ = natural random walk matrix

$L = D - A$ = Laplacian matrix

The second eigenvector of the Laplacian can be computed by iterating $W$:

For random $y_0$ s.t. $y_0^T D^{-1} \mathbf{1} = 0$, compute $D^{-1} W^t y_0$.

In the limit,

$$x_2(L) = \lim_{t \to \infty} \frac{D^{-1} W^t y_0}{\|W^t y_0\|_{D^{-1}}}.$$

Heuristic: For massive graphs, pick $t$ as large as computationally affordable.
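The iteration above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the speaker's implementation: it uses a regular graph (so the stationary direction is the all-ones vector), and it iterates the lazy walk $(I + W)/2$ instead of $W$ so that negative eigenvalues cannot dominate. The function names are hypothetical.

```python
import numpy as np

def second_eigenvector_by_walk(A, t, seed=0):
    """Approximate x_2 with t steps of a (lazy) random walk from a random start."""
    n = A.shape[0]
    deg = A.sum(axis=0)
    W = A / deg                       # W = A D^{-1}: column j is divided by deg[j]
    W_lazy = 0.5 * (np.eye(n) + W)    # assumption: lazy walk, so no negative eigenvalues
    rng = np.random.default_rng(seed)
    y = rng.standard_normal(n)
    y -= y.mean()                     # regular graph: removes the stationary component
    for _ in range(t):
        y = W_lazy @ y
        y /= np.linalg.norm(y)        # rescaling only; the direction is unchanged
    return y / deg                    # D^{-1} W^t y_0, up to scaling

def rayleigh_quotient(A, x):
    """x^T L x / x^T D x, the generalized Rayleigh quotient of the Laplacian."""
    deg = A.sum(axis=0)
    L = np.diag(deg) - A
    return (x @ L @ x) / (x @ (deg * x))

# Cycle on 20 vertices (2-regular).
n = 20
A = np.zeros((n, n))
for i in range(n):
    A[i, (i + 1) % n] = A[(i + 1) % n, i] = 1.0
x = second_eigenvector_by_walk(A, t=400)
```

On this cycle the Rayleigh quotient of `x` should approach $1 - \cos(2\pi/n)$, the second generalized eigenvalue; a smaller `t` gives a cruder but cheaper estimate, which is the trade-off the heuristic describes.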

Why Random Walks? A Practitioner’s View

Advantages of Random Walks:

1) Quick approximation to eigenvector in massive graphs

2) Statistically robust analogue of eigenvector

Real-world graphs are noisy.

[Figure: GROUND-TRUTH GRAPH, then NOISY MEASUREMENT, then INPUT GRAPH]

GOAL: estimate eigenvector of ground-truth graph.

OBSERVATION: eigenvector of input graph can have very large variance, as it can be very sensitive to noise.

RANDOM-WALK VECTORS provide more stable estimates.

This Talk

QUESTION:

Why random-walk vectors in the design of fast algorithms?

ANSWER: Stable, regularized version of the eigenvector

GOALS OF THIS TALK:

1) Showcase how these ideas apply to balanced graph partitioning

2) Show optimization perspective on why random walks arise

Problem Definition and Overview of Results

Partitioning Graphs - Conductance

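Undirected unweighted $G = (V, E)$, $|V| = n$, $|E| = m$

Conductance of $S \subseteq V$:

$$\phi(S) = \frac{|E(S, \bar{S})|}{\min\{\mathrm{vol}(S), \mathrm{vol}(\bar{S})\}}$$

[Figure: example set $S$ with $\mathrm{vol}(S) = |E(S, V)| = 8$ and $|E(S, \bar{S})| = 4$, so $\phi(S) = \frac{1}{2}$.]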
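The definition can be checked on a toy graph. The sketch below is illustrative only: it assumes a symmetric 0/1 adjacency matrix without self-loops and takes $\mathrm{vol}(S)$ to be the sum of degrees in $S$; the graph (two triangles joined by one edge) is a made-up example, not the one in the slide's figure.

```python
import numpy as np

def conductance(A, S):
    """phi(S) = |E(S, S_bar)| / min(vol(S), vol(S_bar)) for a symmetric 0/1 matrix A."""
    n = A.shape[0]
    in_S = np.zeros(n, dtype=bool)
    in_S[list(S)] = True
    deg = A.sum(axis=1)
    cut = A[in_S][:, ~in_S].sum()     # edges with exactly one endpoint in S
    vol_S, vol_rest = deg[in_S].sum(), deg[~in_S].sum()
    return cut / min(vol_S, vol_rest)

# Two triangles {0,1,2} and {3,4,5} joined by the single edge (2,3).
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
A = np.zeros((6, 6))
for i, j in edges:
    A[i, j] = A[j, i] = 1.0
phi = conductance(A, {0, 1, 2})       # cut = 1, vol(S) = 2 + 2 + 3 = 7
```

Here the triangle $\{0,1,2\}$ has one crossing edge and volume 7 on each side, so `phi` is $1/7$.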

Partitioning Graphs – Balanced Cut

NP-HARD DECISION PROBLEM

Does $G$ have a $b$-balanced cut of conductance $< \gamma$?

[Figure: a cut $S$ with $\phi(S) < \gamma$ and $\frac{1}{2} > \frac{\mathrm{vol}(S)}{\mathrm{vol}(V)} > b$.]

• Important primitive for many recursive algorithms.

• Applications to clustering and graph decomposition.

Fast Approximation Algorithms

DECISION PROBLEM

Does $G$ have a $b$-balanced cut of conductance $< \gamma$?

| Algorithm | Method | Distinguishes $\geq \gamma$ and | Running Time |
|---|---|---|---|
| Recursive Eigenvector | Spectral | $O(\sqrt{\gamma})$ | $\tilde{O}(mn)$ |
| [Leighton, Rao ‘88] | Flow | $O(\gamma \log n)$ | $\tilde{O}(m^{3/2})$ [AK’07, OSVV’08] |
| [Arora, Rao, Vazirani ‘04] | SDP (Flow + Spectral) | $O(\gamma \sqrt{\log n})$ | $\tilde{O}(m^{3/2})$ [Sherman ‘09] |
| [Madry ’10] | SDP (Flow + Spectral) | $\mathrm{polylog}(n)$ | $\tilde{O}(m^{1+\epsilon})$ |

Approximation Algorithms

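Does $G$ have a $b$-balanced cut of conductance $< \gamma$?

| Algorithm | Method | Distinguishes $\geq \gamma$ and | Running Time |
|---|---|---|---|
| Recursive Eigenvector | Spectral | $O(\sqrt{\gamma})$ | $\tilde{O}(mn)$ |
| [Spielman, Teng ‘04] | Local Random Walks | $O\left(\sqrt{\gamma \log^3 n}\right)$ | $\tilde{O}\left(\frac{m}{\gamma^2}\right)$ |
| [Andersen, Chung, Lang ‘07] | Local Random Walks | $O\left(\sqrt{\gamma \log n}\right)$ | $\tilde{O}\left(\frac{m}{\gamma}\right)$ |
| [Andersen, Peres ‘09] | Local Random Walks | $O\left(\sqrt{\gamma \log n}\right)$ | $\tilde{O}\left(\frac{m}{\sqrt{\gamma}}\right)$ |
| OUR ALGORITHM | Random Walks | $O(\sqrt{\gamma})$ | $\tilde{O}(m)$ |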

Recursive Eigenvector Algorithm

INPUT: $(G, b, \gamma)$. DECISION: does there exist a $b$-balanced $S$ with $\phi(S) < \gamma$?

• Compute the slowest-mixing eigenvector of $G$ and the corresponding Laplacian eigenvalue $\lambda_2$.

• If $\lambda_2 \geq \gamma$, output NO. Otherwise, sweep the eigenvector to find $S_1$ such that $\phi(S_1) \leq O(\sqrt{\gamma})$.

• If $S_1$ is $(b/2)$-balanced, output $S_1$. Otherwise, consider the graph $G_1$ induced by $G$ on $V \setminus S_1$, with self-loops replacing the edges going to $S_1$.

• Recurse on $G_1$.

• If the cut $\cup S_i$ becomes $(b/2)$-balanced, output $\cup S_i$.

[Figure: cuts $S_1, S_2, S_3, S_4$ are removed in turn; the remaining graph satisfies $\lambda_2(G_5) \geq \gamma$.]

LARGE INDUCED EXPANDER = NO-CERTIFICATE

RUNNING TIME: $\tilde{O}(mn)$, i.e. $\tilde{O}(m)$ per iteration, $O(n)$ iterations.
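The "sweep the eigenvector" step can be sketched as follows. This is a minimal illustration, assuming a symmetric adjacency matrix with no self-loops and positive degrees; `sweep_cut` is a hypothetical helper name, and the example graph (two triangles joined by an edge) is made up for the demonstration.

```python
import numpy as np

def sweep_cut(A, x):
    """Return (best_set, best_phi) over the prefix cuts of vertices sorted by x."""
    n = A.shape[0]
    deg = A.sum(axis=1)
    total_vol = deg.sum()
    order = np.argsort(x)
    in_S = np.zeros(n, dtype=bool)
    vol_S, cut = 0.0, 0.0
    best_phi, best_k = np.inf, 0
    for k, v in enumerate(order[:-1]):        # proper prefixes only
        # Moving v into S: its edges into S stop being cut, the others start being cut.
        to_S = A[v, in_S].sum()
        cut += deg[v] - 2.0 * to_S
        vol_S += deg[v]
        in_S[v] = True
        phi = cut / min(vol_S, total_vol - vol_S)
        if phi < best_phi:
            best_phi, best_k = phi, k + 1
    return set(order[:best_k].tolist()), best_phi

# Two triangles {0,1,2} and {3,4,5} joined by the single edge (2,3).
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
A = np.zeros((6, 6))
for i, j in edges:
    A[i, j] = A[j, i] = 1.0

# Second eigenvector of the normalized Laplacian, rescaled by D^{-1/2}.
deg = A.sum(axis=1)
L_norm = np.eye(6) - A / np.sqrt(np.outer(deg, deg))
_, U = np.linalg.eigh(L_norm)
x2 = U[:, 1] / np.sqrt(deg)
S1, phi1 = sweep_cut(A, x2)
```

The incremental update keeps the whole sweep at one pass over the sorted vertices; on this graph the best prefix is one of the two triangles, with conductance $1/7$.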

Recursive Eigenvector: The Worst Case

[Figure: an EXPANDER attached to $\Omega(n)$ nearly-disconnected components $S_1, S_2, S_3, \dots$ of varying conductance.]

NB: Recursive Eigenvector eliminates one component per iteration.

$\Omega(n)$ iterations are necessary. Each iteration requires $\Omega(m)$ time, for $\Omega(mn)$ time in total.

GOAL: Eliminate unbalanced low-conductance cuts faster.

Our Algorithm: Contributions

| Algorithm | Method | Distinguishes $\geq \gamma$ and | Time |
|---|---|---|---|
| Recursive Eigenvector | Eigenvector | $O(\sqrt{\gamma})$ | $\tilde{O}(mn)$ |
| OUR ALGORITHM | Random Walks | $O(\sqrt{\gamma})$ | $\tilde{O}(m)$ |

MAIN FEATURES:

• Compute $O(\log n)$ global heat-kernel random-walk vectors at each iteration

• Unbalanced cuts are removed in $O(\log n)$ iterations

• Method to compute heat-kernel vectors, i.e. $e^{-tL} v$, in time $\tilde{O}(m)$

TECHNICAL COMPONENTS:

1) New iterative algorithm with a simple random walk interpretation

2) Novel analysis of Lanczos methods for computing heat-kernel vectors

Why Random Walks

GOAL: Eliminate unbalanced cuts of low conductance efficiently

• The graph eigenvector may be correlated with only one sparse unbalanced cut. (SINGLE VECTOR, SINGLE CUT)

• Consider the Heat-Kernel random-walk matrix $H_G^\tau$ for $\tau = \log n / \gamma$. (VECTOR EMBEDDING, MULTIPLE CUTS)

$H_G^\tau e_i$ is the probability vector for the random walk started at vertex $i$.

[Figure: embedding of the vectors $H_G^\tau e_i$, $H_G^\tau e_j$. Long vectors are slow-mixing random walks. Highlighted: unbalanced cuts of conductance $< \sqrt{\gamma}$.]

Heat-Kernel Random Walk: A Regularization View

Background: Heat-Kernel Random Walk

For simplicity, take $G$ to be $d$-regular.

• The Heat-Kernel Random Walk is a Continuous-Time Markov Chain over $V$, modeling the diffusion of heat along the edges of $G$.

• Transitions take place in continuous time $t$, with an exponential distribution.

$$\frac{\partial p(t)}{\partial t} = -\frac{L}{d}\, p(t), \qquad p(t) = e^{-\frac{t}{d} L}\, p(0) =: H_G^t\, p(0) \quad \text{(notation)}$$

• The Heat Kernel can be interpreted as a Poisson distribution over the number of steps of the natural random walk $W = AD^{-1}$:

$$e^{-\frac{t}{d} L} = e^{-t} \sum_{k=0}^{\infty} \frac{t^k}{k!} W^k$$

• In practice, can replace Heat-Kernel with natural random walk $W^t$.
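The Poisson interpretation can be checked numerically. The sketch below is an illustration on a small 2-regular graph, assuming a dense eigendecomposition is affordable; the function names are hypothetical, and the series is truncated at a fixed number of terms.

```python
import math
import numpy as np

def heat_kernel(A, d, t):
    """H^t = exp(-(t/d) L) for a d-regular graph, via the eigendecomposition of L = dI - A."""
    L = d * np.eye(A.shape[0]) - A
    lam, V = np.linalg.eigh(L)
    return (V * np.exp(-t * lam / d)) @ V.T

def poisson_mixture(A, d, t, terms=60):
    """e^{-t} * sum_{k=0}^{terms-1} t^k / k! * W^k with W = A / d."""
    n = A.shape[0]
    W = A / d
    total = np.zeros((n, n))
    Wk = np.eye(n)                    # W^0
    for k in range(terms):
        total += (t ** k / math.factorial(k)) * Wk
        Wk = Wk @ W
    return math.exp(-t) * total

# 2-regular example: a cycle on 8 vertices.
n, d, t = 8, 2, 3.0
A = np.zeros((n, n))
for i in range(n):
    A[i, (i + 1) % n] = A[(i + 1) % n, i] = 1.0
H = heat_kernel(A, d, t)
P = poisson_mixture(A, d, t)
```

The two matrices agree up to rounding because $L/d = I - W$ on a $d$-regular graph, so $e^{-t(I - W)} = e^{-t} e^{tW}$; each column of `H` is a probability vector.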

Regularized Spectral Optimization

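Eigenvector Program

$$\min\ \frac{1}{d}\, x^T L x \quad \text{s.t.} \quad \|x\|_2 = 1, \quad x^T \mathbf{1} = 0$$

SDP Formulation

$$\min\ \frac{1}{d}\, L \bullet X \quad \text{s.t.} \quad I \bullet X = 1, \quad \mathbf{1}\mathbf{1}^T \bullet X = 0, \quad X \succeq 0$$

Programs have same optimum. Take optimal solution $X^* = x^* (x^*)^T$.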

Regularized Spectral Optimization

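SDP Formulation

$$\min\ \frac{1}{d}\, L \bullet X \quad \text{s.t.} \quad I \bullet X = 1, \quad \mathbf{1}\mathbf{1}^T \bullet X = 0, \quad X \succeq 0$$

Density Matrix: $X \succeq 0$ with $I \bullet X = 1$.

Eigenvector decomposition of $X$:

$$X = \sum_i p_i v_i v_i^T, \qquad \forall i:\ p_i \geq 0, \qquad \sum_i p_i = 1, \qquad \forall i:\ v_i^T \mathbf{1} = 0.$$

Eigenvalues of $X$ define a probability distribution.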

Regularized Spectral Optimization

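SDP Formulation

$$\min\ \frac{1}{d}\, L \bullet X \quad \text{s.t.} \quad I \bullet X = 1, \quad J \bullet X = 0, \quad X \succeq 0$$

where $J = \mathbf{1}\mathbf{1}^T$.

Density Matrix: eigenvalues of $X$ define a probability distribution.

[Figure: for $X^* = x^* (x^*)^T$ this is the TRIVIAL DISTRIBUTION, with probability 1 on $x^*$ and 0 elsewhere.]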

Regularized Spectral Optimization

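Regularized SDP

$$\min\ \frac{1}{d}\, L \bullet X + \eta \cdot F(X) \quad \text{s.t.} \quad I \bullet X = 1, \quad J \bullet X = 0, \quad X \succeq 0$$

Regularizer $F$, parameter $\eta$.

The regularizer $F$ forces the distribution of eigenvalues of $X$ to be non-trivial.

[Figure: REGULARIZATION moves the optimum from $X^* = x^* (x^*)^T$ to $X^* = \sum_i p_i v_i v_i^T$, with weight $1 - \epsilon$ on $x^*$ and weights $\epsilon_1, \epsilon_2, \dots$ on the other eigenvectors.]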

Regularizers

Regularizers are SDP versions of common regularizers:

• von Neumann Entropy: $F_H(X) = \mathrm{Tr}(X \log X) = \sum_i p_i \log p_i$

• $p$-Norm, $p > 1$: $F_p(X) = \frac{1}{p} \|X\|_p^p = \frac{1}{p} \mathrm{Tr}(X^p) = \frac{1}{p} \sum_i p_i^p$

• And more, e.g. log-determinant.

Our Main Result

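Regularized SDP

$$\min\ \frac{1}{d}\, L \bullet X + \eta \cdot F(X) \quad \text{s.t.} \quad I \bullet X = 1, \quad J \bullet X = 0, \quad X \succeq 0$$

RESULT:

Explicit correspondence between regularizers and random walks

| REGULARIZER | OPTIMAL SOLUTION OF REGULARIZED PROGRAM |
|---|---|
| $F = F_H$ (Entropy) | $X^\star \propto H_G^t$, where $t$ depends on $\eta$ |
| … | …, where $\alpha$ depends on $\eta$ |
| $F = F_p$ ($p$-Norm) | $X^\star \propto T_q^{\frac{1}{p-1}}$, where $q$ depends on $\eta$ |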
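For the entropy row, the correspondence can be seen from a short first-order calculation. This is only a sketch under assumptions that the slides do not spell out: $G$ is $d$-regular, everything is restricted to the subspace orthogonal to $\mathbf{1}$ (so $J \bullet X = 0$ holds automatically), and the constraint $X \succeq 0$ is inactive at the optimum. Here $\mu$ is the Lagrange multiplier of the trace constraint.

$$\mathcal{L}(X, \mu) = \frac{1}{d}\, L \bullet X + \eta\, \mathrm{Tr}(X \log X) + \mu\, (I \bullet X - 1)$$

$$\nabla_X \mathcal{L} = \frac{1}{d} L + \eta\, (\log X + I) + \mu I = 0 \quad \Longrightarrow \quad \log X = -\frac{1}{\eta d} L - \left(1 + \frac{\mu}{\eta}\right) I$$

$$X^\star = \frac{e^{-\frac{1}{\eta d} L}}{\mathrm{Tr}\, e^{-\frac{1}{\eta d} L}} \ \propto\ e^{-\frac{t}{d} L} = H_G^t \qquad \text{with } t = \frac{1}{\eta}.$$

Under these assumptions a small $\eta$ (weak regularization) corresponds to a long walk, which concentrates $X^\star$ on the bottom eigenvector, while a large $\eta$ corresponds to a short walk and a flatter eigenvalue distribution.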

Discussion: Vector vs Density Matrix

Variable is density matrix, not vector

Q: Can we produce a single vector?

A: Density matrix $X$ describes a distribution over vectors. Assuming the distribution is Gaussian, sample a vector

$$x = X^{1/2} u, \quad \text{where } u \sim N(0, I_{n-1}).$$

For example, the vector $x = H_G^{t/2} u$ (random walk on a random seed) is a sample from the solution of an entropy-regularized problem.

PART 1

The New Iterative Procedure

Our Algorithm for Balanced Cut

IDEA BEHIND OUR ALGORITHM:

Replace eigenvector in recursive eigenvector algorithm with Heat-Kernel random walk $H_G^\tau$ for $\tau = \log n / \gamma$ (chosen to emphasize cuts of conductance $\approx \gamma$).

Consider the embedding $\{v_i\}$ given by $H_G^\tau$:

$$v_i = H_G^\tau e_i$$

[Figure: the vectors $v_i$ around $\vec{1}/n$. Stationary distribution is uniform as $G$ is regular.]

MIXING:

Define the total deviation from stationary for a set $S \subseteq V$ for walk $H_G^\tau$:

$$\Psi(H_G^\tau, S) = \sum_{i \in S} \|v_i - \vec{1}/n\|^2$$

FUNDAMENTAL QUANTITY TO UNDERSTAND CUTS IN G
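The potential $\Psi$ is easy to compute directly on a small graph. The sketch below is illustrative only: it builds $H_G^\tau$ by a dense eigendecomposition on a 2-regular cycle, which is not how the talk computes it at scale, and the value of `gamma` and the helper names are made up for the example.

```python
import numpy as np

def heat_kernel(A, d, tau):
    """H^tau = exp(-(tau/d) L) for a d-regular graph with L = dI - A."""
    lam, V = np.linalg.eigh(d * np.eye(A.shape[0]) - A)
    return (V * np.exp(-tau * lam / d)) @ V.T

def total_deviation(H, S):
    """Psi(H, S) = sum over i in S of ||H e_i - 1/n||^2, where H e_i is column i of H."""
    n = H.shape[0]
    cols = H[:, sorted(S)]
    return float(((cols - 1.0 / n) ** 2).sum())

# Cycle on 12 vertices (2-regular), with tau = log(n) / gamma for an illustrative gamma.
n, d, gamma = 12, 2, 0.5
A = np.zeros((n, n))
for i in range(n):
    A[i, (i + 1) % n] = A[(i + 1) % n, i] = 1.0
tau = np.log(n) / gamma
H = heat_kernel(A, d, tau)
psi_V = total_deviation(H, range(n))
psi_S = total_deviation(H, {0, 1, 2})
```

Because $H$ is symmetric with top eigenvector $\vec{1}/\sqrt{n}$, the total `psi_V` equals $\sum_{k \geq 2} e^{-2\tau\lambda_k/d}$, which ties the potential to the spectrum of $L$.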

Our Algorithm: Case Analysis

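Recall: $\tau = \log n / \gamma$, $v_i = H_G^\tau e_i$, and

$$\Psi(H_G^\tau, S) = \sum_{i \in S} \|H_G^\tau e_i - \vec{1}/n\|^2$$

CASE 1: Random walks have mixed. ALL VECTORS ARE SHORT:

$$\Psi(H_G^\tau, V) \leq \frac{1}{\mathrm{poly}(n)}$$

By definition of $\tau$, this gives $\lambda_2 \geq \Omega(\gamma)$, and hence $\phi_G \geq \Omega(\gamma)$.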

Our Algorithm

Recall: $\tau = \log n / \gamma$, $v_i = H_G^\tau e_i$, and

$$\Psi(H_G^\tau, S) = \sum_{i \in S} \|H_G^\tau e_i - \vec{1}/n\|^2$$

CASE 2: Random walks have not mixed

$$\Psi(H_G^\tau, V) > \frac{1}{\mathrm{poly}(n)}$$

We can either find an $\Omega(b)$-balanced cut with conductance $\leq O(\sqrt{\gamma})$ (RANDOM PROJECTION + SWEEP CUT),

OR a ball cut (BALL ROUNDING) yields $S_1$ such that $\phi(S_1) \leq O(\sqrt{\gamma})$ and

$$\Psi(H_G^\tau, S_1) \geq \frac{1}{2} \Psi(H_G^\tau, V).$$

Our Algorithm: Iteration

Recall: $\tau = \log n / \gamma$ and

$$\Psi(H_G^\tau, S) = \sum_{i \in S} \|H_G^\tau e_i - \vec{1}/n\|^2$$

CASE 2: We found an unbalanced cut $S_1$ with $\phi(S_1) \leq O(\sqrt{\gamma})$ and

$$\Psi(H_G^\tau, S_1) \geq \frac{1}{2} \Psi(H_G^\tau, V).$$

Modify $G = G^{(1)}$ by adding edges across $(S_1, \bar{S}_1)$ to construct $G^{(2)}$.

Analogous to removing unbalanced cut $S_1$ in Recursive Eigenvector algorithm.

Our Algorithm: Modifying G

CASE 2: We found an unbalanced cut $S_1$ with $\phi(S_1) \leq O(\sqrt{\gamma})$ and

$$\Psi(H_G^\tau, S_1) \geq \frac{1}{2} \Psi(H_G^\tau, V).$$

Modify $G = G^{(1)}$ by adding edges across $(S_1, \bar{S}_1)$ to construct $G^{(2)}$:

$$G^{(t+1)} = G^{(t)} + \gamma \sum_{i \in S_t} \mathrm{Star}_i$$

where $\mathrm{Star}_i$ is the star graph rooted at vertex $i$.

The random walk can now escape $S_1$ more easily.
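The effect of the star update on the potential can be observed on a toy example. The sketch below makes several assumptions that are not in the slides: the star edges have unit weight, the walk on the modified graph is still $e^{-(\tau/d) L}$ with the original $d$, and the cut $S$ is simply chosen by hand as an arc of a cycle. The helper names are hypothetical.

```python
import numpy as np

def star_laplacian(n, i):
    """Laplacian of the star rooted at i: unit-weight edges from i to every other vertex."""
    L = np.zeros((n, n))
    L[i, :] = -1.0
    L[:, i] = -1.0
    L[np.arange(n), np.arange(n)] += 1.0      # each leaf gets degree 1
    L[i, i] = n - 1.0                         # the root has degree n - 1
    return L

def total_deviation(L, d, tau):
    """Psi(H, V) for H = exp(-(tau/d) L): squared distance of all columns from 1/n."""
    n = L.shape[0]
    lam, V = np.linalg.eigh(L)
    H = (V * np.exp(-tau * lam / d)) @ V.T
    return float(((H - 1.0 / n) ** 2).sum())

# Cycle on 12 vertices; S is an arc of three vertices chosen by hand.
n, d, gamma = 12, 2, 0.5
A = np.zeros((n, n))
for i in range(n):
    A[i, (i + 1) % n] = A[(i + 1) % n, i] = 1.0
L = d * np.eye(n) - A
tau = np.log(n) / gamma
S = [0, 1, 2]
L_next = L + gamma * sum(star_laplacian(n, i) for i in S)   # G^(t+1) = G^(t) + gamma * stars
psi_before = total_deviation(L, d, tau)
psi_after = total_deviation(L_next, d, tau)
```

Adding the stars can only raise the Laplacian eigenvalues, so `psi_after` is smaller than `psi_before`; how much smaller is what the potential-reduction argument quantifies.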

Our Algorithm: Iteration

Recall: $\tau = \log n / \gamma$ and

$$\Psi(H_G^\tau, S) = \sum_{i \in S} \|H_G^\tau e_i - \vec{1}/n\|^2$$

CASE 2: We found an unbalanced cut $S_1$ with $\phi(S_1) \leq O(\sqrt{\gamma})$ and

$$\Psi(H_G^\tau, S_1) \geq \frac{1}{2} \Psi(H_G^\tau, V).$$

Modify $G = G^{(1)}$ by adding edges across $(S_1, \bar{S}_1)$ to construct $G^{(2)}$.

POTENTIAL REDUCTION:

$$\Psi(H^\tau_{G^{(t+1)}}, V) \leq \Psi(H^\tau_{G^{(t)}}, V) - \frac{1}{2} \Psi(H^\tau_{G^{(t)}}, S_t) \leq \frac{3}{4} \Psi(H^\tau_{G^{(t)}}, V)$$

CRUCIAL APPLICATION OF STABILITY OF RANDOM WALK

Potential Reduction

IN SUMMARY:

At every step $t$ of the recursion, we either

1. Produce an $\Omega(b)$-balanced cut of the required conductance, OR

2. Find that $\Psi(H_G^\tau, V) \leq \frac{1}{\mathrm{poly}(n)}$, OR

3. Find an unbalanced cut $S_t$ of the required conductance, such that for the graph $G^{(t+1)}$, modified to have increased edges from $S_t$,

$$\Psi(H^\tau_{G^{(t+1)}}, V) \leq \frac{3}{4} \Psi(H^\tau_{G^{(t)}}, V).$$

After $T = O(\log n)$ iterations, if no low-conductance $\Omega(b)$-balanced cut is found:

$$\Psi(H^\tau_{G^{(T)}}, V) \leq \frac{1}{\mathrm{poly}(n)}$$

From this guarantee, using the definition of $G^{(T)}$, we derive an SDP-certificate that no $b$-balanced cut of conductance $O(\gamma)$ exists in $G$.

NB: Only $O(\log n)$ iterations to remove unbalanced cuts.
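The $O(\log n)$ iteration count follows from the $3/4$ contraction by a one-line calculation. The sketch below assumes that outcome 3 occurs in every iteration and uses the crude starting bound $\Psi \leq n$, which holds because each $v_i$ is a probability vector; the exponent $c$ stands for whatever polynomial the analysis requires.

$$\Psi(H^\tau_{G^{(1)}}, V) = \sum_{i \in V} \left( \|v_i\|^2 - \frac{1}{n} \right) \leq n$$

$$\Psi(H^\tau_{G^{(T)}}, V) \leq \left(\frac{3}{4}\right)^{T-1} n \leq n^{-c} \quad \Longleftrightarrow \quad T - 1 \geq \frac{(c+1) \ln n}{\ln(4/3)} \approx 3.48\, (c+1) \ln n$$

So a constant-factor drop per iteration is enough to reach the $1/\mathrm{poly}(n)$ threshold after $O(\log n)$ iterations.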

Heat-Kernel and Certificates

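• If no balanced cut of the required conductance is found, our potential analysis yields:

$$\Psi(H^\tau_{G^{(T)}}, V) \leq \frac{1}{\mathrm{poly}(n)}$$

$$L + \gamma \sum_{j=1}^{T-1} \sum_{i \in S_j} L(\mathrm{Star}_i) \succeq \gamma L(K_V)$$

Modified graph has $\lambda_2 \geq \gamma$.

CLAIM: This is a certificate that no balanced cut of conductance $< \gamma$ existed in $G$.

[Figure: a balanced cut $T$ overlapping the removed set $\cup S_j$.]

$$\phi(T) \geq \gamma - \gamma \frac{|\cup S_j|}{|T|} \geq \gamma - \gamma \frac{b/2}{b} \geq \gamma / 2$$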

Comparison with Recursive Eigenvector

RECURSIVE EIGENVECTOR:

We can only bound number of iterations by volume of graph removed. $\Omega(n)$ iterations possible.

OUR ALGORITHM:

Use variance of random walk as potential. Only $O(\log n)$ iterations necessary.

$$\Psi(P, V) = \sum_{i \in V} \|P e_i - \vec{1}/n\|^2$$

RIGHT SPECTRAL NOTION OF POTENTIAL

Comparison with Recursive Eigenvector

Recall worst-case example for Recursive Eigenvector:

[Figure: the EXPANDER with its attached components, handled in one iteration of our algorithm.]

Running Time

• Our Algorithm runs in $O(\log n)$ iterations.

• In one iteration, we perform some simple computation (projection, sweep cut) on the vector embedding $H^\tau_{G^{(t)}}$. This takes time $\tilde{O}(md)$, where $d$ is the dimension of the embedding.

• Can use Johnson-Lindenstrauss to obtain $d = O(\log n)$.

• Hence, we only need to compute $O(\log^2 n)$ matrix-vector products $H^\tau_{G^{(t)}} u$.

• We show how to perform one such product in time $\tilde{O}(m)$ for all $\tau$.

• OBSTACLE: $\tau$, the mean number of steps in the Heat-Kernel random walk, is $\Omega(n^2)$ for path.

PART 2

Matrix Exponential Computation

Computation of Matrix Exponential

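GOAL: For symmetric diagonally-dominant $A$, with sparsity $m$, compute vector $v$ such that

$$\|e^{-A} u - v\| \leq \frac{1}{\mathrm{poly}(n)}$$

in time $\tilde{O}(m)$.

KNOWN:

Lanczos method: $\tilde{O}(\sqrt{\|A\|})$ matrix-vector multiplications by $A$.

For $A = \frac{\log n}{\gamma} L$ we have $\|A\| = \tilde{O}\left(\frac{1}{\gamma}\right)$, so the running time is $\tilde{O}\left(\frac{m}{\sqrt{\gamma}}\right)$.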

Review of Lanczos Method

IDEA: Perform $k$ matrix-vector multiplications

$$R_k = \mathrm{Span}\{u, Au, A^2 u, A^3 u, A^4 u, \dots, A^k u\} \quad (k\text{-Krylov subspace})$$

Compute orthonormal basis $Q_k$ for $R_k$ (as the columns of a matrix), consider $A$ restricted to $R_k$:

$$T_k = Q_k^T A Q_k$$

As $k$ grows, $T_k$ is a better approximation to $A$.

For many functions $f$, even for small $k$,

$$Q_k f(T_k) Q_k^T \approx f(A)$$

True if $f$ is close to a polynomial of degree $< k$.
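The Krylov recipe can be sketched for $f(x) = e^{-x}$ as follows. This is an illustration under assumptions, not the analysis from the talk: it orthogonalizes every new vector against the whole basis (in exact arithmetic this spans the same subspace as the three-term Lanczos recurrence), it forms $T_k$ densely, and it assumes no breakdown (the new direction never vanishes). The function name and the test matrix are made up for the example.

```python
import numpy as np

def krylov_expm_multiply(A, u, k):
    """Approximate exp(-A) u from the Krylov subspace span{u, Au, ..., A^k u}."""
    n = u.shape[0]
    Q = np.zeros((n, k + 1))
    Q[:, 0] = u / np.linalg.norm(u)
    for j in range(k):
        w = A @ Q[:, j]
        for _ in range(2):                        # Gram-Schmidt, applied twice for stability
            w -= Q[:, : j + 1] @ (Q[:, : j + 1].T @ w)
        Q[:, j + 1] = w / np.linalg.norm(w)       # assumes w is not (numerically) zero
    T = Q.T @ A @ Q                               # A restricted to the subspace
    T = 0.5 * (T + T.T)                           # symmetrize away rounding noise
    theta, S = np.linalg.eigh(T)
    f_T_e1 = S @ (np.exp(-theta) * S[0, :])       # f(T) e_1 with f(x) = exp(-x)
    return np.linalg.norm(u) * (Q @ f_T_e1)       # Q f(T) Q^T u, since Q^T u = ||u|| e_1

# Example: twice the Laplacian of a path on 40 vertices (eigenvalues in [0, 8)).
n = 40
path = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
path[0, 0] = path[-1, -1] = 1.0
A = 2.0 * path
u = np.random.default_rng(0).standard_normal(n)
v = krylov_expm_multiply(A, u, k=15)
```

With only 15 multiplications by `A`, `v` already matches $e^{-A} u$ closely on this example, in line with the remark that a low-degree polynomial approximation of $f$ is what matters.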

Lanczos Method and Approximation

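CLAIM: There exists a polynomial $p$ such that

$$\sup_{x \in (0, \|A\|)} |p(x) - e^{-x}| \leq \frac{1}{\mathrm{poly}(n)}$$

and $p$ has degree $\tilde{O}(\sqrt{\|A\|})$.

This gives $\tilde{O}(\sqrt{\|A\|}) = \tilde{O}\left(\frac{1}{\sqrt{\gamma}}\right)$ matrix-vector multiplications by $A$.

OPTIMAL for this approach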

Lanczos Method II: Inverse Iteration

IDEA: Apply Lanczos method to

$$B = \left(I + \frac{A}{k}\right)^{-1}$$

$k$-Krylov subspace:

$$R_k = \mathrm{Span}\{u, Bu, B^2 u, B^3 u, B^4 u, \dots, B^k u\}$$

APPROX: $k$-degree rational function approximation to $e^{-x}$

CLAIM: There exists a polynomial $p$ such that

$$\sup_{x \in (0, \|A\|)} \left| \frac{p(x)}{(1 + x/k)^k} - e^{-x} \right| \leq \frac{1}{\mathrm{poly}(n)}$$

and $p$ has degree $k = O(\log n)$.

CLAIM: $k = O(\log n)$ matrix-vector multiplications by $B$

RUNNING TIME: Multiplication by $B$ is an SDD linear system: $\tilde{O}(m)$ time per multiplication, $\tilde{O}(m)$ total running time.
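The inverse-iteration variant can be sketched in the same style. The code below is an illustration under assumptions: a dense `np.linalg.solve` stands in for the nearly-linear-time SDD solver, the solves are treated as exact (the talk's analysis handles approximate solves), and the helper name and test matrix are made up. It uses the identity $e^{-x} = \exp(-k(1/b - 1))$ for $b = (1 + x/k)^{-1}$.

```python
import numpy as np

def inverse_krylov_expm_multiply(A, u, k):
    """Approximate exp(-A) u from the Krylov subspace of B = (I + A/k)^{-1}."""
    n = u.shape[0]
    M = np.eye(n) + A / k                         # B = M^{-1}
    Q = np.zeros((n, k + 1))
    Q[:, 0] = u / np.linalg.norm(u)
    for j in range(k):
        w = np.linalg.solve(M, Q[:, j])           # one multiplication by B
        for _ in range(2):                        # Gram-Schmidt, applied twice for stability
            w -= Q[:, : j + 1] @ (Q[:, : j + 1].T @ w)
        Q[:, j + 1] = w / np.linalg.norm(w)       # assumes w is not (numerically) zero
    T = Q.T @ np.linalg.solve(M, Q)               # B restricted to the subspace
    T = 0.5 * (T + T.T)
    theta, S = np.linalg.eigh(T)
    # exp(-x) as a function of b = 1 / (1 + x/k), i.e. x = k (1/b - 1).
    f_theta = np.exp(-k * (1.0 / theta - 1.0))
    return np.linalg.norm(u) * (Q @ (S @ (f_theta * S[0, :])))

# Example: 20 times the Laplacian of a path on 40 vertices (norm just under 80).
n = 40
path = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
path[0, 0] = path[-1, -1] = 1.0
A = 20.0 * path
u = np.random.default_rng(0).standard_normal(n)
v = inverse_krylov_expm_multiply(A, u, k=20)
```

The number of solves here does not grow with $\|A\|$ the way the number of multiplications by $A$ does in the plain Krylov method, which is the point of switching from $A$ to $B$.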

Lanczos Method II: Conclusion

GENERAL RESULT: compute $v$ such that $\|e^{-A} u - v\| \leq \delta$.

• For general SDD matrix $A$, running time is

$$\tilde{O}\big((m + n) \cdot \log(2 + \|A\|) \cdot \mathrm{polylog}(1/\delta)\big)$$

CONTRIBUTION: Analysis has a number of obstacles

• Linear solver is approximate: error propagation in Lanczos

• Loss of symmetry

Conclusion

NOVEL ALGORITHMIC CONTRIBUTIONS

• Balanced-Cut Algorithm using Random Walks in time $\tilde{O}(m)$

• Computing the Heat-Kernel vectors in time $\tilde{O}(m)$

MAIN IDEA

Random walks provide a very useful stable analogue of the graph eigenvector via regularization

OPEN QUESTION

More applications of this idea?

Applications beyond design of fast algorithms?

A Different Interpretation

THEOREM:

Suppose eigenvector $x$ yields an unbalanced cut $S$ of low conductance and no balanced cut of the required conductance.

[Figure: the entries of $x$ on a line through 0, with $\sum_i d_i x_i = 0$, showing $S$ and $V - S$.]

Then,

$$\sum_{i \in S} d_i x_i^2 \geq \frac{1}{2} \sum_{i \in V} d_i x_i^2.$$

In words, $S$ contains most of the variance of the eigenvector.

QUESTION: Does this mean the graph induced by $G$ on $V - S$ is much closer to having conductance at least $\gamma$?

ANSWER: NO. $x$ may contain little or no information about $G$ on $V - S$. Next eigenvector may be only infinitesimally larger.

CONCLUSION: To make significant progress, we need an analogue of the eigenvector that captures sparse

Theorems for Our Algorithm

THEOREM 1: (WALKS HAVE NOT MIXED)

$$\Psi(P^{(t)}, V) > \frac{1}{\mathrm{poly}(n)} \quad \Longrightarrow \quad \text{can find cut of conductance } O(\sqrt{\gamma})$$

Proof: Recall that

$$P^{(t)} = e^{-\tau Q^{(t)}}, \qquad \tau = \log n / \gamma, \qquad \Psi(P, V) = \sum_{i \in V} \|P e_i - \vec{1}/n\|^2.$$

Use the definition of $\tau$. The spectrum of $P^{(t)}$ implies that

$$\sum_{ij \in E} \|P^{(t)} e_i - P^{(t)} e_j\|^2 \leq O(\gamma) \cdot \Psi(P^{(t)}, V)$$

(EDGE LENGTH on the left, TOTAL VARIANCE on the right).

Hence, by a random projection of the embedding $\{P e_i\}$, followed by a sweep cut, we can recover the required cut.

SDP ROUNDING TECHNIQUE

Theorems for Our Algorithm

THEOREM 2: (WALKS HAVE MIXED)

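$$\Psi(P^{(t)}, V) \leq \frac{1}{\mathrm{poly}(n)} \quad \Longrightarrow \quad \text{no } \Omega(b)\text{-balanced cut of conductance } O(\gamma)$$

Proof: Consider $S = \cup S_i$. Notice that $S$ is unbalanced.

Assumption is equivalent to

$$L(K_V) \bullet e^{-\tau L - O(\log n) \sum_{i \in S} L(S_i)} \leq \frac{1}{\mathrm{poly}(n)}.$$

By taking logs,

$$L + O(\gamma) \sum_{i \in S} L(S_i) \succeq \Omega(\gamma)\, L(K_V).$$

SDP DUAL CERTIFICATE

This is a certificate that no $\Omega(1)$-balanced cut of conductance $O(\gamma)$ exists, as evaluating the quadratic form for a vector representing a balanced cut $U$ yields

$$\phi(U) \geq \Omega(\gamma) - \frac{\mathrm{vol}(S)}{\mathrm{vol}(U)}\, O(\gamma) \geq \Omega(\gamma)$$

as long as $S$ is sufficiently unbalanced.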

SDP Interpretation

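$$\mathbb{E}_{\{i,j\} \in E_G} \|v_i - v_j\|^2 \leq \gamma \quad \text{(SHORT EDGES)}$$

$$\mathbb{E}_{\{i,j\} \in V \times V} \|v_i - v_j\|^2 = \frac{1}{2m} \quad \text{(FIXED VARIANCE)}$$

$$\forall i \in V: \quad \mathbb{E}_{j \in V} \|v_i - v_j\|^2 \leq \frac{1}{b} \cdot \frac{1}{2m} \quad \text{(LENGTH OF STAR EDGES)}$$

[Figure: the embedding lies within a SHORT RADIUS around 0.]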