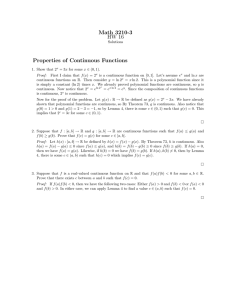

Probability Theory

September 27, 2012

1 Basic Probability

Definition: Algebra

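If $S$ is a set, then a collection $\mathcal{A}$ of subsets of $S$ is an algebra if

• $S \in \mathcal{A}$

• $A \in \mathcal{A} \implies A^c \in \mathcal{A}$

• $A_1, \dots, A_n \in \mathcal{A} \implies \bigcup_{i=1}^n A_i \in \mathcal{A}$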

Definition: σ-Algebra

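If $S$ is a set, then a collection $\mathcal{F}$ of subsets of $S$ is a σ-algebra if

• $S \in \mathcal{F}$

• $A \in \mathcal{F} \implies A^c \in \mathcal{F}$

• $\{A_i\}_{i=1}^\infty \subseteq \mathcal{F} \implies \bigcup_{i=1}^\infty A_i \in \mathcal{F}$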

Lemma:

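The following are basic properties of a σ-algebra $\mathcal{F}$:

• $\emptyset \in \mathcal{F}$

• $\{A_i\}_{i=1}^\infty \subseteq \mathcal{F} \implies \bigcap_{i=1}^\infty A_i \in \mathcal{F}$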

Lemma:

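If $S$ is a non-empty set and $\mathcal{G} \subseteq \mathcal{P}(S)$,

then there exists $\mathcal{F} := \sigma(\mathcal{G})$, the smallest σ-algebra containing $\mathcal{G}$.

Proof:

We know that $\mathcal{P}(S)$ is a σ-algebra containing $\mathcal{G}$, so the family

$\Gamma := \{\mathcal{A} : \mathcal{A} \text{ is a σ-algebra on } S,\ \mathcal{G} \subseteq \mathcal{A}\}$

is non-empty.

Claim: $\mathcal{F} = \bigcap_{\mathcal{A} \in \Gamma} \mathcal{A}$

If this is a σ-algebra, it is clearly the smallest one containing $\mathcal{G}$ by definition of the intersection.

• $S \in \mathcal{A}\ \forall \mathcal{A} \in \Gamma$ since these are σ-algebras, hence $S \in \bigcap_{\mathcal{A} \in \Gamma} \mathcal{A}$

• Let $A \in \mathcal{F}$; then $A \in \mathcal{A}\ \forall \mathcal{A} \in \Gamma$, hence $A^c \in \mathcal{A}\ \forall \mathcal{A} \in \Gamma$, hence $A^c \in \mathcal{F}$

• Let $\{A_i\}_{i=1}^\infty \subseteq \mathcal{F}$; then $\{A_i\}_{i=1}^\infty \subseteq \mathcal{A}\ \forall \mathcal{A} \in \Gamma$, hence $\bigcup_{i=1}^\infty A_i \in \mathcal{A}\ \forall \mathcal{A} \in \Gamma$, hence $\bigcup_{i=1}^\infty A_i \in \mathcal{F}$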


Remark:

From the above lemma we say that F is the σ-algebra generated by G and that G is the generator of F
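On a finite set the generated σ-algebra can be computed directly, which may help make the intersection argument concrete. The following is a minimal sketch, not part of the original notes: the function name `generate_sigma_algebra` and the example generator are illustrative assumptions, and on a finite set closure under finite unions already gives closure under countable unions.

```python
from itertools import combinations

def generate_sigma_algebra(S, G):
    """Smallest family of subsets of the finite set S that contains G and is
    closed under complement and union (hypothetical helper for illustration)."""
    S = frozenset(S)
    family = {frozenset(), S} | {frozenset(g) for g in G}
    changed = True
    while changed:
        changed = False
        current = list(family)
        # close under complements
        for A in current:
            comp = S - A
            if comp not in family:
                family.add(comp)
                changed = True
        # close under pairwise unions
        for A, B in combinations(current, 2):
            union = A | B
            if union not in family:
                family.add(union)
                changed = True
    return family

S = {1, 2, 3, 4}
F = generate_sigma_algebra(S, [{1}, {1, 2}])
print(sorted(sorted(A) for A in F))
```

Here the generator separates the points 1 and 2 but never separates 3 from 4, so the resulting σ-algebra consists of all unions of the blocks {1}, {2}, {3, 4}.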

Definition: Borel σ-Algebra

Let $S = \mathbb{R}^n$ and $\mathcal{G} := \{\prod_{i=1}^n [a_i, b_i] : a_i \le b_i,\ a_i, b_i \in \mathbb{Q}\}$

Then the Borel σ-algebra is $\mathcal{B}(\mathbb{R}^n) = \sigma(\mathcal{G})$

Remark:

If S is a topological space then B(S) is the σ-algebra generated by open sets of S

Definition: Measurable Space

(S, F) is a measurable space where

• S is a set

• F is a σ-algebra

Definition: Measurable Set

A is measurable if A ∈ F where F is a σ-algebra

Definition: Additive

If $S$ is a set and $\mathcal{A}$ is an algebra on $S$, then

$\mu : \mathcal{A} \to [0, \infty]$

is additive if $F, G \in \mathcal{A}$, $F \cap G = \emptyset \implies \mu(F \cup G) = \mu(F) + \mu(G)$

Definition: σ-Additive

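If $S$ is a set and $\mathcal{A}$ is an algebra on $S$, then

$\mu : \mathcal{A} \to [0, \infty]$

is σ-additive if $\{A_i\}_{i=1}^\infty \subseteq \mathcal{A}$, $\bigcup_{i=1}^\infty A_i \in \mathcal{A}$, $A_i \cap A_j = \emptyset\ \forall i \ne j \implies \mu\left(\bigcup_{i=1}^\infty A_i\right) = \sum_{i=1}^\infty \mu(A_i)$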

Definition: Measure

If $(S, \mathcal{F})$ is a measurable space, then $\mu : \mathcal{F} \to [0, \infty]$ is a measure if $\mu(\emptyset) = 0$ and $\mu$ is σ-additive

Definition: Finite Measure

A measure µ on measurable space (S, F) is finite if µ(S) < ∞

Definition: σ-Finite Measure

A measure $\mu$ on a measurable space $(S, \mathcal{F})$ is σ-finite if

$\exists \{A_i\}_{i=1}^\infty \subseteq \mathcal{F}$ s.t.

• $\mu(A_i) < \infty\ \forall i$

• $\bigcup_{i=1}^\infty A_i = S$

Definition: Probability Measure

A measure µ on S is a probability measure if µ(S) = 1

Definition: Measure Space

A measure space is (S, F, µ) where S is a set, F is a σ-algebra on S and µ is a measure on (S, F)

Definition: Probability Space

A measure space (S, F, µ) is a probability space if µ is a probability measure

Definition: π-System

A family Y of subsets of set S is a π-system if Y is closed under intersection

i.e. A, B ∈ Y =⇒ A ∩ B ∈ Y

Definition: Dynkin System

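If $S$ is a set and $\mathcal{D}$ is a collection of subsets of $S$, then $\mathcal{D}$ is a Dynkin system on $S$ if

• $S \in \mathcal{D}$

• $A, B \in \mathcal{D}$, $A \subseteq B \implies B \setminus A \in \mathcal{D}$

• $\{A_i\}_{i=1}^\infty \subseteq \mathcal{D}$, $A_i \cap A_j = \emptyset\ \forall i \ne j \implies \bigcup_{i=1}^\infty A_i \in \mathcal{D}$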

Remark:

We denote by $d(\mathcal{G})$ the smallest Dynkin system containing $\mathcal{G}$, which exists by the same intersection argument as for $\sigma(\mathcal{G})$

Lemma:

If G is a π-system then σ(G) = d(G)


Proof:

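Every σ-algebra is also a Dynkin system, hence $d(\mathcal{G}) \subseteq \sigma(\mathcal{G})$ is trivial.

Hence it remains to show that $d(\mathcal{G})$ is a σ-algebra, since then $\sigma(\mathcal{G}) \subseteq d(\mathcal{G})$.

• Clearly $S \in d(\mathcal{G})$ by definition

• Suppose $A \in d(\mathcal{G})$; since $S \in d(\mathcal{G})$ and $A \subseteq S$ we get $A^c = S \setminus A \in d(\mathcal{G})$

• We want to show that $d(\mathcal{G})$ is a π-system.

Define $\mathcal{D}_1 := \{A \subseteq S : A \cap B \in d(\mathcal{G})\ \forall B \in \mathcal{G}\}$

This is a Dynkin system, and $\mathcal{G} \subseteq \mathcal{D}_1$ because $\mathcal{G}$ is a π-system,

hence $d(\mathcal{G}) \subseteq \mathcal{D}_1$

Define $\mathcal{D}_2 := \{A \subseteq S : A \cap B \in d(\mathcal{G})\ \forall B \in d(\mathcal{G})\}$

This is a Dynkin system, and $\mathcal{G} \subseteq \mathcal{D}_2$ because $d(\mathcal{G}) \subseteq \mathcal{D}_1$,

hence $d(\mathcal{G}) \subseteq \mathcal{D}_2$

hence $d(\mathcal{G})$ is closed under intersection, i.e. it is a π-system

• Let $\{A_i\}_{i=1}^\infty \subseteq d(\mathcal{G})$; we need to show that $\bigcup_{i=1}^\infty A_i \in d(\mathcal{G})$

Define $B_i = A_i \setminus \bigcup_{j=1}^{i-1} A_j \in d(\mathcal{G})\ \forall i$, using closure under complements and finite intersections

$\bigcup_{i=1}^\infty A_i = \bigcup_{i=1}^\infty B_i \in d(\mathcal{G})$

since this is a disjoint union

hence indeed $d(\mathcal{G})$ is a σ-algebra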

Theorem:

Let (S, F, µ) be a probability space and suppose F = σ(G)

for some π-system $\mathcal{G} \subseteq \mathcal{P}(S)$

If ν is another probability measure on (S, F) s.t.

µ(A) = ν(A) ∀A ∈ G

then µ = ν

Proof:

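Write

$\mathcal{D} = \{A \in \mathcal{F} : \mu(A) = \nu(A)\}$

This is a Dynkin system with $\mathcal{G} \subseteq \mathcal{D}$, hence

$d(\mathcal{G}) \subseteq \mathcal{D}$

Hence by the previous lemma $\mathcal{F} = \sigma(\mathcal{G}) = d(\mathcal{G}) \subseteq \mathcal{D} \subseteq \mathcal{F}$, so $\mathcal{D} = \mathcal{F}$ and $\mu = \nu$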

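Definition: Content

$\mu$ is a content on $(S, \mathcal{A})$ if

• $\mu(\emptyset) = 0$

• $\mu(A \cup B) = \mu(A) + \mu(B)\ \forall A, B \in \mathcal{A} : A \cap B = \emptyset$

Theorem: Carathéodory's Theorem

If $\mu$ is a σ-additive content on $(S, \mathcal{A})$, where $\mathcal{A}$ is an algebra on $S$, then $\mu$ extends to a measure on $(S, \sigma(\mathcal{A}))$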

Lemma: Cantor

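If $\{K_n\}_{n=1}^\infty$ are compact sets in a metric space s.t.

$K_n \ne \emptyset\ \forall n$ and

$K_{n+1} \subseteq K_n\ \forall n$

then

$\bigcap_{n=1}^\infty K_n \ne \emptyset$

Proof:

Choose $x_n \in K_n$ for each $n \in \mathbb{N}$, which is possible since $K_n \ne \emptyset$

Notice that $\{x_n\}_{n=r}^\infty \subseteq K_r$ since the $K_n$ are decreasing

By compactness of $K_1$, $\exists \{x_{n_k}\}_{k=1}^\infty$, $x_0 \in K_1$ s.t.

$\lim_{k \to \infty} x_{n_k} = x_0$

For every $r$ we have $\{x_{n_k}\}_{k=r}^\infty \subseteq K_r$ (as $n_k \ge k \ge r$), and $K_r$ is closed,

hence $x_0 \in K_r\ \forall r$, hence $x_0 \in \bigcap_{n=1}^\infty K_n$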

Lemma:

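If $\{A_n\}_{n=1}^\infty \subseteq \mathcal{A}$ and $\mu$ is a σ-additive content s.t.

• $\mathcal{A} = a(\{\mathrm{Int}(a, b) : -\infty \le a \le b \le \infty\})$, the algebra generated by these intervals, where

$\mathrm{Int}(a, b) = \begin{cases} (a, b] & b < \infty \\ (a, \infty) & b = \infty \end{cases}$

• $A_{n+1} \subseteq A_n$

• $\lim_{n \to \infty} A_n = \emptyset$

• $\mu(A_n) < \infty\ \forall n$

then

$\lim_{n \to \infty} \mu(A_n) = 0$

Proof:

By contradiction assume that $\lim_{n \to \infty} \mu(A_n) = \delta > 0$ (the limit exists since $\mu(A_n)$ is decreasing)

then $\mu(A_n) \ge \delta\ \forall n \in \mathbb{N}$

We can choose sets $\{F_n\}_{n=1}^\infty \subseteq \mathcal{A}$ s.t.

• $\overline{F_n} \subseteq A_n$ for each $n$

• $\mu(A_n) - \mu(F_n) \le 2^{-n} \delta$

• $F_{n+1} \subseteq F_n$

then we have that

$\mu(F_n) \ge \mu(A_n) - \delta 2^{-n} \ge \delta - \delta 2^{-n} \ge \delta/2$

hence $F_n \ne \emptyset$

moreover $\overline{F_n} \ne \emptyset$

$\overline{F_n}$ is compact, so by the previous lemma

$\bigcap_{n=1}^\infty \overline{F_n} \ne \emptyset$

but

$\bigcap_{n=1}^\infty \overline{F_n} \subseteq \bigcap_{n=1}^\infty A_n$, so $\bigcap_{n=1}^\infty A_n \ne \emptyset$

which is a contradiction to

$\bigcap_{n=1}^\infty A_n = \lim_{n \to \infty} A_n = \emptyset$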

Proposition:

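The Lebesgue measure $L$ on $(\mathbb{R}, \mathcal{A})$, where

$\mathcal{A} = a(\{\mathrm{Int}(a, b) : -\infty \le a \le b \le \infty\})$

extends to a measure on $(\mathbb{R}, \sigma(\mathcal{A})) = (\mathbb{R}, \mathcal{B}(\mathbb{R}))$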

Proof:

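By Carathéodory's theorem it suffices to show that $L$ is σ-additive,

i.e. $\forall \{A_i\}_{i=1}^\infty \subseteq \mathcal{A}$ pairwise disjoint s.t. $\bigcup_{i=1}^\infty A_i \in \mathcal{A}$ we have that

$L\left(\bigcup_{i=1}^\infty A_i\right) = \sum_{i=1}^\infty L(A_i)$

Write $\mathcal{A}_{finite} := \{A \in \mathcal{A} : L(A) < \infty\}$

Suppose $\{A_i\}_{i=1}^\infty \subseteq \mathcal{A}_{finite}$ are pairwise disjoint s.t. $A = \bigcup_{i=1}^\infty A_i \in \mathcal{A}_{finite}$

Define $B_n := \bigcup_{i=1}^n A_i$; then by additivity

$L(A) = L(A \setminus B_n) + \sum_{i=1}^n L(A_i)$

The sets $A \setminus B_n$ decrease to $\emptyset$, so by the previous lemma $\lim_{n \to \infty} L(A \setminus B_n) = 0$

hence

$L\left(\bigcup_{i=1}^\infty A_i\right) = \sum_{i=1}^\infty L(A_i)$

So it remains to show this holds for $A \in \mathcal{A} \setminus \mathcal{A}_{finite}$

Suppose $A = (-\infty, a]$

Let $\{A_i\}_{i=1}^\infty \subseteq \mathcal{A}$ be pairwise disjoint s.t. $\bigcup_{i=1}^\infty A_i = A$

If some $A_k$ has infinite measure, e.g. $A_k = (-\infty, a_k]$ for some $a_k$, then since $L \ge 0$ (it is a content)

$\infty = L(A_k) \le \sum_{i=1}^\infty L(A_i)$

$\infty = L(A_k) \le L\left(\bigcup_{i=1}^\infty A_i\right)$

so both sides are infinite. Otherwise every $A_i$ has finite measure, and applying the finite case to $(-m, a] = \bigcup_{i=1}^\infty (A_i \cap (-m, a])$ gives $a + m \le \sum_{i=1}^\infty L(A_i)$ for every $m$, so again both sides are infinite.

Hence indeed the proposition holds.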

Definition: Measurable Function

If $(S, \mathcal{F}), (S', \mathcal{F}')$ are measurable spaces then

$f : S \to S'$ is $(\mathcal{F}, \mathcal{F}')$-measurable if

$\forall A' \in \mathcal{F}'$: $f^{-1}(A') \in \mathcal{F}$

Lemma:

If $(S, \mathcal{F}), (S', \mathcal{F}')$ are measurable spaces and

$\mathcal{F}' = \sigma(\mathcal{G})$, then it is sufficient that

$f^{-1}(A) \in \mathcal{F}\ \forall A \in \mathcal{G}$

for $f : S \to S'$ to be measurable.

Corollary:

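Let $(S, \mathcal{F})$ be a measurable space and $f : S \to \mathbb{R}$;

if $\{f \le a\} \in \mathcal{F}\ \forall a \in \mathbb{R}$ then $f$ is $(\mathcal{F}, \mathcal{B}(\mathbb{R}))$-measurable.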

Proof:

By the previous lemma take G = {(−∞, a] : a ∈ R} which generates B(R)

Lemma:

If $\{h_i\}_{i=1}^\infty$ are measurable and $\alpha, \beta \in \mathbb{R}$ then

• $h_1 h_2$

• $\alpha h_1 + \beta h_2$

• $h_1 \circ h_2$

• $\inf_i h_i$

• $\liminf_i h_i$

• $\limsup_i h_i$

are measurable when well defined.

Corollary:

Let S be a topological space with σ-algebra B(S)

If f : S → R is continuous then it is Borel measurable.

Proof:

Open sets generate $\mathcal{B}(\mathbb{R})$

Since the preimage of an open set under a continuous function is open, $f^{-1}(A)$ is open for every open $A$, hence lies in the σ-algebra generated by the open sets of $S$. The claim then follows from the lemma on generators above.

Definition: Random Variable

If $(\Omega, \mathcal{F}, \mathbb{P})$ is a probability space and $(S, \mathcal{F}')$ is a measurable space then

$X : \Omega \to S$ is a random variable if it is a measurable mapping,

i.e.

$\forall A' \in \mathcal{F}'$: $X^{-1}(A') \in \mathcal{F}$

Definition: Push Forward Measure

If $X : \Omega \to S$ is a random variable on $(\Omega, \mathcal{F}, \mathbb{P})$ then the distribution of $X$ is the probability measure

$\mathbb{P}_X(A) = \mathbb{P}(\{X \in A\})\ \forall A \in \mathcal{F}'$

Definition: Cumulative Distribution Function

For a real-valued random variable $X$ define

$F_X(x) = \mathbb{P}_X((-\infty, x]) = \mathbb{P}(\{X \le x\})$

Lemma:

Suppose F = FX for some random variable X then

• F : R → [0, 1] is non decreasing

• F is right-continuous

• limx→−∞ F (x) = 0

• limx→∞ F (x) = 1

Lemma:

If F : R → [0, 1] s.t.

• F : R → [0, 1] is non decreasing

• F is right-continuous

• limx→−∞ F (x) = 0

• limx→∞ F (x) = 1

then ∃(Ω, F, P) and random variable X s.t. FX = F
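One standard way to realise such an $X$ (an assumption here, since the notes do not give the construction) is to take $\Omega = (0, 1)$ with Lebesgue measure and $X(u) = \inf\{x : F(x) \ge u\}$. The sketch below checks this numerically for the exponential distribution function, which is an illustrative choice; the names `cdf`, `quantile` and `empirical_cdf` are hypothetical.

```python
import math

def cdf(x):
    """Target distribution function: exponential with rate 1 (illustrative choice)."""
    return 1.0 - math.exp(-x) if x > 0 else 0.0

def quantile(u):
    """X(u) = inf{x : F(x) >= u} for u in (0, 1); closed form for this F."""
    return -math.log(1.0 - u)

def empirical_cdf(x, n=10_000):
    """Lebesgue measure of {u in (0, 1) : X(u) <= x}, approximated on a uniform grid."""
    hits = sum(1 for k in range(1, n) if quantile(k / n) <= x)
    return hits / n

for x in (0.5, 1.0, 2.0):
    print(x, round(cdf(x), 4), round(empirical_cdf(x), 4))
```

The two printed columns agree up to the grid resolution, which reflects the identity $\{u : X(u) \le x\} = (0, F(x)]$.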

Definition: Product σ-Algebra

If $(S_i, \mathcal{F}_i)_{i=1}^n$ are measurable spaces then the product σ-algebra on these is

$\bigotimes_{i=1}^n \mathcal{F}_i = \sigma(\{A_1 \times \dots \times A_n : A_i \in \mathcal{F}_i\})$

Lemma:

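If $(\Omega, \mathcal{F}), (S_i, \mathcal{F}_i)_{i=1}^n$ are measurable spaces then

$\{X_i : \Omega \to S_i\}_{i=1}^n$ are all random variables

iff

$Z := (X_1, \dots, X_n) : \Omega \to S_1 \times \dots \times S_n$ is $(\mathcal{F}, \bigotimes_{i=1}^n \mathcal{F}_i)$-measurable

Proof:

Suppose $Z$ is a random variable

Let $\pi_i : S_1 \times \dots \times S_n \to S_i$ be the $i$th canonical projection

then $X_i = \pi_i \circ Z$

Compositions of measurable functions are measurable, so it is sufficient that the projections $\pi_i$ are measurable,

which is true since for $A \in \mathcal{F}_i$

$\pi_i^{-1}(A) = S_1 \times \dots \times S_{i-1} \times A \times S_{i+1} \times \dots \times S_n \in \bigotimes_{i=1}^n \mathcal{F}_i$

Conversely suppose $\{X_i\}_{i=1}^n$ are random variables and let $A = A_1 \times \dots \times A_n$ with $A_i \in \mathcal{F}_i$

$Z^{-1}(A) = \{\omega : Z(\omega) \in A\}$

$= \{\omega : X_i(\omega) \in A_i\ \forall i\}$

$= \{\omega : \omega \in X_i^{-1}(A_i)\ \forall i\}$

$= \bigcap_{i=1}^n X_i^{-1}(A_i) \in \mathcal{F}$

Such rectangles generate $\bigotimes_{i=1}^n \mathcal{F}_i$, so $Z$ is measurable by the lemma on generators.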

Definition: Joint Distribution

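If $X_i : (\Omega, \mathcal{F}) \to (S_i, \mathcal{F}_i)$, $i = 1, \dots, n$,

are random variables for measurable spaces $(\Omega, \mathcal{F}), (S_i, \mathcal{F}_i)_{i=1}^n$

then the distribution of $Z = (X_1, \dots, X_n) : (\Omega, \mathcal{F}) \to (S_1 \times \dots \times S_n, \bigotimes_{i=1}^n \mathcal{F}_i)$

is called the joint distribution of $(X_1, \dots, X_n)$; for $A = A_1 \times \dots \times A_n$ with $A_i \in \mathcal{F}_i$:

$\mathbb{P}_Z(A) = \mathbb{P}(\{Z \in A\}) = \mathbb{P}\left(\bigcap_{i=1}^n \{X_i \in A_i\}\right)$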

2 Independence

Definition: Independence

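Let $(\Omega, \mathcal{F}, \mathbb{P})$ be a probability space; then

• Sub-σ-algebras $\{\mathcal{F}_i\}_{i=1}^n$ are independent if,

whenever $i_1, \dots, i_k \in \{1, \dots, n\}$ are distinct and $A_{i_j} \in \mathcal{F}_{i_j}$, then

$\mathbb{P}\left(\bigcap_{j=1}^k A_{i_j}\right) = \prod_{j=1}^k \mathbb{P}(A_{i_j})$

• Random variables $\{X_i\}_{i=1}^n$ are independent if

the sub-σ-algebras $\{\sigma(X_i)\}_{i=1}^n$ are independent,

where $\sigma(X_i) = \sigma(\{X_i^{-1}(A) : A \in \mathcal{F}_i\})$

• Events $\{E_i\}_{i=1}^n \subseteq \mathcal{F}$ are independent if

the sub-σ-algebras $\{\sigma(E_i)\}_{i=1}^n$ are independent,

where $\sigma(E_i) = \{\emptyset, \Omega, E_i, \Omega \setminus E_i\}$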

Lemma:

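Suppose $\mathcal{G}, \mathcal{H}$ are sub-σ-algebras of $\mathcal{F}$ on the probability space $(\Omega, \mathcal{F}, \mathbb{P})$

s.t. $\mathcal{G} = \sigma(\mathcal{I})$, $\mathcal{H} = \sigma(\mathcal{J})$

for π-systems $\mathcal{I}, \mathcal{J}$

then $\mathcal{G}, \mathcal{H}$ are independent iff

$\mathbb{P}(I \cap J) = \mathbb{P}(I)\mathbb{P}(J)\ \forall I \in \mathcal{I}, J \in \mathcal{J}$

Proof:

Independence $\implies \mathbb{P}(I \cap J) = \mathbb{P}(I)\mathbb{P}(J)\ \forall I \in \mathcal{I}, J \in \mathcal{J}$

is trivial since $\mathcal{I} \subseteq \mathcal{G}$ and $\mathcal{J} \subseteq \mathcal{H}$

Conversely, fix $I \in \mathcal{I}$ and define $\mu(A) = \mathbb{P}(A \cap I)$, $\nu(A) = \mathbb{P}(A)\mathbb{P}(I)\ \forall A \in \mathcal{H}$

Clearly these are finite measures on $(\Omega, \mathcal{H})$ with the same total mass $\mathbb{P}(I)$, and by the assumed property they coincide on the π-system $\mathcal{J}$; hence (after normalising when $\mathbb{P}(I) > 0$) by the uniqueness theorem they coincide on $\mathcal{H} = \sigma(\mathcal{J})$

Next fix $H \in \mathcal{H}$ and define $\eta(A) = \mathbb{P}(A \cap H)$, $\kappa(A) = \mathbb{P}(A)\mathbb{P}(H)\ \forall A \in \mathcal{G}$

Clearly these are measures, and by the previous step they coincide on $\mathcal{I}$, hence on $\mathcal{G} = \sigma(\mathcal{I})$

So indeed we have independence.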

Corollary:

Let (Ω, F, P) be a probability space and X, Y : Ω → R be random variables.

If

P({X ≤ a} ∩ {Y ≤ b}) = P(X ≤ a)P(Y ≤ b)

∀a, b ∈ R

then X, Y are independent.

Proof:

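$\{(-\infty, a] : a \in \mathbb{R}\}$ is a π-system with $\sigma(\{(-\infty, a] : a \in \mathbb{R}\}) = \mathcal{B}(\mathbb{R})$, so the events $\{X \le a\}$ and $\{Y \le b\}$ form π-systems generating $\sigma(X)$ and $\sigma(Y)$; hence this holds by the previous lemma.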

Corollary:

X, Y are independent iff

FX (a)FY (b) = P(X ≤ a)P(Y ≤ b)

= P({X ≤ a} ∩ {Y ≤ b})

= FX,Y (a, b)

∀a, b ∈ R

Lemma:

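Let $(\Omega_i, \mathcal{F}_i, \mu_i)_{i=1,2}$ be σ-finite measure spaces; then

$\exists!\ \mu = \mu_1 \otimes \mu_2$, a measure on $(\Omega_1 \times \Omega_2, \mathcal{F}_1 \otimes \mathcal{F}_2)$, which is σ-finite and satisfies

$\mu(A_1 \times A_2) = \mu_1(A_1)\mu_2(A_2)\ \forall A_i \in \mathcal{F}_i$, $i = 1, 2$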

Corollary:

Random variables X, Y with joint distribution PX,Y are independent iff

$\mathbb{P}_{X,Y} = \mathbb{P}_X \otimes \mathbb{P}_Y$

Definition: Tail-σ Algebras

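If $\{\mathcal{F}_i\}_{i=1}^\infty$ is a sequence of σ-algebras then the tail σ-algebra is defined as

$\mathcal{T} = \bigcap_{n=1}^\infty \sigma\left(\bigcup_{k=n}^\infty \mathcal{F}_k\right)$

If $\{X_i\}_{i=1}^\infty$ is a sequence of random variables then the tail σ-algebra is defined as

$\mathcal{T} = \bigcap_{n=1}^\infty \sigma\left(\bigcup_{k=n}^\infty \sigma(X_k)\right)$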

Definition: Deterministic

A random variable $X$ is deterministic if $\exists c \in [-\infty, \infty]$ s.t. $\mathbb{P}(X = c) = 1$

Definition: P-Trivial

$\mathcal{T}$ is $\mathbb{P}$-trivial if

• $A \in \mathcal{T} \implies \mathbb{P}(A) \in \{0, 1\}$

• If $X$ is a $\mathcal{T}$-measurable random variable then $X$ is deterministic.

Lemma:

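Let $\mu$ be a measure on a measurable space $(\Omega, \mathcal{F})$ and $\{A_i\}_{i=1}^\infty \subseteq \mathcal{F}$

• If $A_i \subseteq A_{i+1}\ \forall i$ then

$\mu(\lim_{i \to \infty} A_i) = \lim_{i \to \infty} \mu(A_i)$, where $\lim_{i \to \infty} A_i = \bigcup_{i=1}^\infty A_i$

• If $A_i \supseteq A_{i+1}\ \forall i$ and $\mu(A_1) < \infty$ then

$\mu(\lim_{i \to \infty} A_i) = \lim_{i \to \infty} \mu(A_i)$, where $\lim_{i \to \infty} A_i = \bigcap_{i=1}^\infty A_i$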


Theorem: Kolmogorov

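Suppose that $\{\mathcal{F}_i\}_{i=1}^\infty$ is an independent sequence of σ-algebras; then the associated tail σ-algebra $\mathcal{T}$ is $\mathbb{P}$-trivial

Proof:

$\mathbb{P}(A) \in \{0, 1\} \iff \mathbb{P}(A)^2 = \mathbb{P}(A)$

$\iff \mathbb{P}(A)\mathbb{P}(A) = \mathbb{P}(A \cap A)$

$\iff A \perp A$

Let $\mathcal{T}_n = \sigma\left(\bigcup_{k=n+1}^\infty \mathcal{F}_k\right)$

and $\mathcal{T} = \bigcap_{n=1}^\infty \mathcal{T}_n$

$\mathcal{H}_n := \sigma\left(\bigcup_{k=1}^n \mathcal{F}_k\right)$

$\{\mathcal{F}_k\}_{k=1}^\infty$ are independent, hence

$\mathcal{H}_n \perp \mathcal{T}_n$, hence

$\mathcal{H}_n \perp \mathcal{T}$ since $\mathcal{T} \subseteq \mathcal{T}_n$

therefore $A \in \mathcal{T}$ is independent from every $\mathcal{H}_n$

$\bigcup_{n=1}^\infty \mathcal{H}_n$ is a π-system, hence by the lemma on π-systems this is sufficient for

$A \perp \sigma\left(\bigcup_{n=1}^\infty \mathcal{H}_n\right) = \sigma\left(\bigcup_{k=1}^\infty \mathcal{F}_k\right) = \mathcal{T}_0 \supseteq \mathcal{T}$

hence $A \perp A$

Now let $X$ be a $\mathcal{T}$-measurable random variable. For $x \in \mathbb{R}$ we have $\{X \le x\} \in \mathcal{T}$, so by the first part of the theorem $\mathbb{P}(X \le x) \in \{0, 1\}$

Define $c := \sup\{x : \mathbb{P}(X \le x) = 0\}$

If $c = -\infty$ then $\mathbb{P}(X = -\infty) = 1$

If $c = \infty$ then $\mathbb{P}(X = \infty) = 1$

If $|c| < \infty$ then

$\mathbb{P}(X \le c - \frac{1}{n}) = 0\ \forall n \in \mathbb{N}$

$\mathbb{P}(X \le c + \frac{1}{n}) = 1\ \forall n \in \mathbb{N}$

hence by the previous lemma

$\mathbb{P}\left(\bigcup_{n=1}^\infty \{X \le c - \frac{1}{n}\}\right) = \mathbb{P}(X < c) = 0$

$\mathbb{P}\left(\bigcap_{n=1}^\infty \{X \le c + \frac{1}{n}\}\right) = \mathbb{P}(X \le c) = 1$

$\mathbb{P}(X = c) = \mathbb{P}(X \le c) - \mathbb{P}(X < c) = 1$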

Definition: Limsup

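Let $(\Omega, \mathcal{F}, \mathbb{P})$ be a probability space with $\{A_n\}_{n=1}^\infty \subseteq \mathcal{F}$; then

$\{A_n \text{ i.o.}\} = \bigcap_{m=1}^\infty \bigcup_{n \ge m} A_n$

$= \{\omega : \forall m\ \exists n \ge m \text{ s.t. } \omega \in A_n\}$

$= \limsup_n A_n$

Definition: Liminf

Let $(\Omega, \mathcal{F}, \mathbb{P})$ be a probability space with $\{A_n\}_{n=1}^\infty \subseteq \mathcal{F}$; then

$\{A_n \text{ eventually}\} = \bigcup_{m=1}^\infty \bigcap_{n \ge m} A_n$

$= \{\omega : \exists m \text{ s.t. } \forall n \ge m,\ \omega \in A_n\}$

$= \liminf_n A_n$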
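For a periodic sequence of sets the two definitions can be evaluated exactly, because every tail $\{A_n\}_{n \ge m}$ already contains one full period. The following is a small sketch under that assumption; the sequence `A` and the helper names are illustrative and not from the notes.

```python
from functools import reduce

PERIOD = 2

def A(n):
    """Illustrative periodic sequence: A_n alternates between {1, 2} and {2, 3}."""
    return {1, 2} if n % 2 == 0 else {2, 3}

def tail_union(m):
    # union over n >= m; one period suffices because the sequence repeats
    return reduce(set.union, (A(n) for n in range(m, m + PERIOD)), set())

def tail_intersection(m):
    # intersection over n >= m; again one period suffices
    return reduce(set.intersection, (A(n) for n in range(m, m + PERIOD)))

# tails with m = 1..PERIOD cover all distinct tails of a periodic sequence
limsup_A = reduce(set.intersection, (tail_union(m) for m in range(1, PERIOD + 1)))
liminf_A = reduce(set.union, (tail_intersection(m) for m in range(1, PERIOD + 1)), set())
print(limsup_A, liminf_A)
```

The point 2 lies in every $A_n$, so it is in the liminf, while 1 and 3 occur infinitely often without occurring eventually, so they lie only in the limsup.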

Lemma: Fatou

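Let $(\Omega, \mathcal{F}, \mu)$ be a measure space and $\{A_n\}_{n=1}^\infty \subseteq \mathcal{F}$; then

• $\mu(\liminf_n A_n) \le \liminf_n \mu(A_n)$

• If $\mu(\Omega) < \infty$ then $\mu(\limsup_n A_n) \ge \limsup_n \mu(A_n)$

Proof:

• Define $Z_m := \bigcap_{n \ge m} A_n$; then $Z_m$ is increasing in $m$ and $\mu(Z_m) \le \mu(A_n)\ \forall n \ge m$

$\mu(\liminf_n A_n) = \mu\left(\bigcup_{m=1}^\infty Z_m\right)$

$= \lim_{m \to \infty} \mu(Z_m)$

$\le \lim_{m \to \infty} \inf_{n \ge m} \mu(A_n)$

$= \liminf_n \mu(A_n)$

• $\mu(\Omega) - \mu(\limsup_n A_n) = \mu(\Omega \setminus \limsup_n A_n)$

$= \mu(\liminf_n (\Omega \setminus A_n))$

$\le \liminf_n \mu(\Omega \setminus A_n)$

$= \mu(\Omega) - \limsup_n \mu(A_n)$

Since $\mu(\Omega) < \infty$ we have that

$\mu(\limsup_n A_n) \ge \limsup_n \mu(A_n)$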

Lemma: Borel-Cantelli

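Let $(\Omega, \mathcal{F}, \mathbb{P})$ be a probability space and $\{A_n\}_{n=1}^\infty \subseteq \mathcal{F}$;

then

• If $\sum_{n=1}^\infty \mathbb{P}(A_n) < \infty$ then $\mathbb{P}(A_n \text{ i.o.}) = 0$

• If $\{A_n\}_{n=1}^\infty$ are independent and $\sum_{n=1}^\infty \mathbb{P}(A_n) = \infty$ then $\mathbb{P}(A_n \text{ i.o.}) = 1$

Proof:

• Define $G_m := \bigcup_{n \ge m} A_n$

then for $k \in \mathbb{N}$ we have $\bigcap_{m=1}^\infty G_m \subseteq G_k$

$\mathbb{P}(A_n \text{ i.o.}) = \mathbb{P}(\limsup_n A_n)$

$= \mathbb{P}\left(\bigcap_{m=1}^\infty G_m\right)$

$\le \mathbb{P}(G_k) = \mathbb{P}\left(\bigcup_{n \ge k} A_n\right)$

$\le \sum_{n=k}^\infty \mathbb{P}(A_n)\ \forall k$

and $\lim_{k \to \infty} \sum_{n=k}^\infty \mathbb{P}(A_n) = 0$, being the tail of a convergent series

• $\{A_i\}_{i=1}^\infty$ independent $\implies \{A_i^c\}_{i=1}^\infty$ are independent.

$\mathbb{P}\left(\bigcap_{n=m}^r A_n^c\right) = \prod_{n=m}^r \mathbb{P}(A_n^c)$ by independence

$= \prod_{n=m}^r (1 - \mathbb{P}(A_n))$

$\le \prod_{n=m}^r e^{-\mathbb{P}(A_n)}$

$= e^{-\sum_{n=m}^r \mathbb{P}(A_n)}$

$\lim_{r \to \infty} e^{-\sum_{n=m}^r \mathbb{P}(A_n)} = 0$

hence we have that $\mathbb{P}\left(\bigcap_{n=m}^\infty A_n^c\right) = 0$

$(\limsup_n A_n)^c = \bigcup_{m=1}^\infty \bigcap_{n \ge m} A_n^c$

is a countable union of null sets, hence is itself a null set, hence

$\mathbb{P}(\limsup_n A_n) = 1$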

Corollary:

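If $\{A_n\}_{n=1}^\infty$ are independent then $\mathbb{P}(A_n \text{ i.o.}) \in \{0, 1\}$

Corollary:

If we write $\mathcal{F}_n = \sigma(A_n)$ then $\{A_n \text{ i.o.}\}$ is a tail event, i.e. it belongs to $\mathcal{T} = \bigcap_{n=1}^\infty \sigma\left(\bigcup_{i=n}^\infty \mathcal{F}_i\right)$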

Lemma: Existence Of Independent Random Variables

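Let $(\Omega_i, \mathcal{F}_i, \mathbb{P}_i)$, $i = 1, 2$, be probability spaces with respective random variables $X_1, X_2$ which have distributions $\mathbb{P}_{X_1}, \mathbb{P}_{X_2}$

If we set $\Omega = \Omega_1 \times \Omega_2$, $\mathcal{F} = \mathcal{F}_1 \otimes \mathcal{F}_2$, $\mathbb{P} = \mathbb{P}_1 \otimes \mathbb{P}_2$

and for $\omega = (\omega_1, \omega_2) \in \Omega$ define

$\tilde{X}_1(\omega) = X_1(\omega_1)$

$\tilde{X}_2(\omega) = X_2(\omega_2)$

then $\tilde{X}_1, \tilde{X}_2$ are defined on $(\Omega, \mathcal{F}, \mathbb{P})$, have distributions $\mathbb{P}_{X_1}, \mathbb{P}_{X_2}$ and are independent.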

3 Expectation And Convergence

Definition: Simple Function

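A function $f : S \to \mathbb{R}$ on a measure space $(S, \mathcal{F}, \mu)$ is simple if

$\exists \{\alpha_i\}_{i=1}^n \subseteq [0, \infty)$ and a partition $\{A_i\}_{i=1}^n \subseteq \mathcal{F}$ of $S$ s.t.

$f = \sum_{i=1}^n \alpha_i 1_{A_i}$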

Definition: Integration Of Simple Functions

If $f : S \to \mathbb{R}$ is simple with $f = \sum_{i=1}^n \alpha_i 1_{A_i}$

then

$\int f\, d\mu = \sum_{i=1}^n \alpha_i \mu(A_i)$

Definition: Integration Of Positive Measurable Functions

If $f : S \to [0, \infty]$ is measurable

then

$\int f\, d\mu = \sup\left\{\int g\, d\mu : g \le f,\ g \text{ simple}\right\}$

Definition: µ-Integrable

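$f : S \to \mathbb{R}$ is µ-integrable if it is measurable and

$\int |f|\, d\mu < \infty$

Definition: Integration Of µ-Integrable Functions

If $f : S \to \mathbb{R}$ is µ-integrable

then

$\int f\, d\mu = \int f^+\, d\mu - \int f^-\, d\mu$, where $f^+ = \max(f, 0)$ and $f^- = \max(-f, 0)$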

Remark

We denote the space of µ-integrable functions as $L^1(\mu) = L^1(S, \mathcal{F}, \mu)$

Lemma:

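The following properties hold for $L^1(\mu)$

• $f, g \in L^1(\mu)$, $\alpha, \beta \in \mathbb{R} \implies$

$\int (\alpha f + \beta g)\, d\mu = \alpha \int f\, d\mu + \beta \int g\, d\mu$

• $0 \le f \in L^1(\mu) \implies$

$\int f\, d\mu \ge 0$

• (Monotone convergence) $\{f_n\}_{n=1}^\infty$, $f$ measurable s.t. $\lim_{n \to \infty} f_n = f$ and $0 \le f_n \le f_{n+1}\ \forall n \implies$

$\lim_{n \to \infty} \int f_n\, d\mu = \int f\, d\mu$

• For $f \ge 0$: $f = 0$ a.e. $\iff$

$\int f\, d\mu = 0$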

Theorem: Fubini's Theorem

Let $(S_1 \times S_2, \mathcal{F}_1 \otimes \mathcal{F}_2, \mu_1 \otimes \mu_2)$ be a product of σ-finite measure spaces and $f \in L^1(\mu_1 \otimes \mu_2)$

then, writing $\mu = \mu_1 \otimes \mu_2$,

$\int \int f(s_1, s_2)\, d\mu_1(s_1)\, d\mu_2(s_2) = \int f(s)\, d\mu(s) = \int \int f(s_1, s_2)\, d\mu_2(s_2)\, d\mu_1(s_1)$

Moreover it is sufficient that $f \ge 0$ is measurable for the above integral equality to hold.

Definition: Expectation

If $X$ is a r.v. on a probability space $(\Omega, \mathcal{F}, \mathbb{P})$ with $X \in L^1(\mathbb{P})$ then

$\mathbb{E}[X] := \int X\, d\mathbb{P}$

Lemma:

If X is a r.v. on (Ω, F, P) with distribution PX and h : R → R is Borel measurable then

• $h \circ X \in L^1(\mathbb{P}) \iff h \in L^1(\mathbb{P}_X)$

• $\mathbb{E}[h \circ X] = \int h \circ X\, d\mathbb{P} = \int h\, d\mathbb{P}_X$

Corollary:

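$\mathbb{E}[X] = \int z\, d\mathbb{P}_X(z) = \int X\, d\mathbb{P}$

Proof:

We prove the lemma above. Take $h = 1_B$ for $B \in \mathcal{B}(\mathbb{R})$; then

$\mathbb{E}[1_B \circ X] = \int 1_B \circ X\, d\mathbb{P}$

$= \int 1_{X^{-1}(B)}\, d\mathbb{P}$

$= \mathbb{P}(X^{-1}(B))$

$= \mathbb{P}(X \in B)$

$= \mathbb{P}_X(B)$

$= \int 1_B\, d\mathbb{P}_X$

This holds for all indicators, hence by linearity it holds for all simple functions, therefore by the monotone convergence theorem it holds for all positive measurable functions, and finally (splitting $h = h^+ - h^-$) for all integrable functions.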

Lemma:

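If $X, Y \in L^1$ are independent r.vs on a probability space $(\Omega, \mathcal{F}, \mathbb{P})$ then

$\mathbb{E}[XY] = \mathbb{E}[X]\mathbb{E}[Y]$

Proof:

$\mathbb{E}[XY] = \int xy\, d\mathbb{P}_{X,Y}(x, y)$

$= \int \int xy\, d\mathbb{P}_X(x)\, d\mathbb{P}_Y(y)$ since $\mathbb{P}_{X,Y} = \mathbb{P}_X \otimes \mathbb{P}_Y$ by independence

$= \int y \left(\int x\, d\mathbb{P}_X(x)\right) d\mathbb{P}_Y(y)$ by Fubini

$= \int x\, d\mathbb{P}_X(x) \int y\, d\mathbb{P}_Y(y)$

$= \mathbb{E}[X]\mathbb{E}[Y]$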
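The product formula can be checked exactly on a small product space. The sketch below assumes two fair dice with the product measure on $\{1, \dots, 6\}^2$, an illustrative setting that is not part of the notes, and contrasts it with the dependent pair $(X, X)$.

```python
from fractions import Fraction
from itertools import product

faces = range(1, 7)
p = Fraction(1, 36)  # product measure: each pair (a, b) has mass 1/36

def expect(f):
    """Expectation of f(X, Y) under the product measure."""
    return sum(p * f(a, b) for a, b in product(faces, faces))

E_X = expect(lambda a, b: a)
E_Y = expect(lambda a, b: b)
E_XY = expect(lambda a, b: a * b)   # independent coordinates
E_XX = expect(lambda a, b: a * a)   # dependent pair (X, X) for contrast

print(E_XY, E_X * E_Y, E_XX)
```

For the independent coordinates the two values agree, while for the dependent pair $\mathbb{E}[X \cdot X]$ differs from $\mathbb{E}[X]^2$ by the variance.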


Remark:

If X is a r.v on (Ω, F, P) and A ∈ F then we use the following notation:

$\mathbb{E}[X; A] := \mathbb{E}[X 1_A] = \int X 1_A\, d\mathbb{P}$

Lemma: Markov’s Inequality

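If $X$ is a random variable, $\varepsilon > 0$ and $g : \mathbb{R} \to [0, \infty]$ is Borel measurable and non-decreasing then

• $\varepsilon\, \mathbb{P}(g(X) > \varepsilon) \le \mathbb{E}[g(X)]$

• $g(\varepsilon)\, \mathbb{P}(X > \varepsilon) \le \mathbb{E}[g(X)]$

Proof:

$g \circ X$ is measurable and non-negative by properties of measurable functions, hence

• $\mathbb{E}[g(X)] = \int g(X)\, d\mathbb{P}$

$\ge \int g(X) 1_{\{g(X) > \varepsilon\}}\, d\mathbb{P}$

$\ge \int \varepsilon 1_{\{g(X) > \varepsilon\}}\, d\mathbb{P}$

$= \varepsilon \int 1_{\{g(X) > \varepsilon\}}\, d\mathbb{P}$

$= \varepsilon\, \mathbb{P}(g(X) > \varepsilon)$

• $\mathbb{E}[g(X)] = \int g(X)\, d\mathbb{P}$

$\ge \int g(X) 1_{\{X > \varepsilon\}}\, d\mathbb{P}$

$\ge \int g(\varepsilon) 1_{\{X > \varepsilon\}}\, d\mathbb{P}$ since $g$ is non-decreasing

$= g(\varepsilon) \int 1_{\{X > \varepsilon\}}\, d\mathbb{P}$

$= g(\varepsilon)\, \mathbb{P}(X > \varepsilon)$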

Corollary: Chebyshev’s Inequality

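If $X$ is a r.v. with finite variance then

$\mathbb{P}(|X - \mathbb{E}[X]| > \varepsilon) \le \frac{\mathrm{Var}[X]}{\varepsilon^2}\ \forall \varepsilon > 0$

Proof:

$\mathbb{P}(|X - \mathbb{E}[X]| > \varepsilon) = \mathbb{P}((X - \mathbb{E}[X])^2 > \varepsilon^2)$

$\le \frac{\mathbb{E}[(X - \mathbb{E}[X])^2]}{\varepsilon^2}$ by Markov's inequality

$= \frac{\mathrm{Var}[X]}{\varepsilon^2}$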
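Both inequalities can be verified exactly for a finite distribution. The following sketch assumes a fair die (an illustrative choice) and takes $g(x) = \max(x, 0)$ in Markov's inequality, so that $g(X) = X$ because the die is non-negative; the helper names are hypothetical.

```python
from fractions import Fraction

outcomes = {k: Fraction(1, 6) for k in range(1, 7)}  # fair die: value -> probability

def expect(f):
    return sum(p * f(x) for x, p in outcomes.items())

def prob(event):
    return sum(p for x, p in outcomes.items() if event(x))

mean = expect(lambda x: x)
var = expect(lambda x: (x - mean) ** 2)

for eps in (Fraction(1), Fraction(2), Fraction(5, 2)):
    markov_lhs = eps * prob(lambda x: x > eps)        # eps * P(X > eps)
    cheb_lhs = prob(lambda x: abs(x - mean) > eps)    # P(|X - E[X]| > eps)
    print(eps, markov_lhs <= mean, cheb_lhs <= var / eps**2)
```

Exact rational arithmetic avoids any floating-point doubt about whether a bound holds; for this distribution neither bound is tight.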

Theorem:

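If $\{X_i\}_{i=1}^n$ are independent random variables each with mean $\mu$ and variance $\sigma^2$ then for $\varepsilon > 0$

$\mathbb{P}\left(\left|\sum_{i=1}^n \frac{X_i}{n} - \mu\right| > \varepsilon\right) \le \frac{\sigma^2}{n\varepsilon^2}$

Proof:

Define $Z = \sum_{i=1}^n \frac{X_i}{n}$; then

$\mathbb{E}[Z] = \mu$

$\mathrm{Var}[Z] = \sigma^2/n$ by independence

hence by Chebyshev's inequality this clearly holds.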

Lemma: Jensen’s Inequality

If X is a random variable with E[|X|] < ∞ s.t. f is convex on an interval containing the range of X and

E[|f ◦ X|] < ∞ then

f (E[X]) ≤ E[f (X)]

Proof:

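Suppose that $X$ is simple, so let $X = \sum_{i=1}^n a_i 1_{A_i}$ with $\{A_i\}_{i=1}^n$ a partition of $\Omega$

$f(\mathbb{E}[X]) = f\left(\sum_{i=1}^n a_i \mathbb{P}(A_i)\right)$

$\le \sum_{i=1}^n \mathbb{P}(A_i) f(a_i)$ by convexity

$= \mathbb{E}[f(X)]$

Suppose that $X$ is a positive r.v.; then $\exists \{X_n\}_{n=1}^\infty$ simple r.vs which are increasing and converge to $X$ a.s.

then

$f(\mathbb{E}[X]) = \lim_{n \to \infty} f(\mathbb{E}[X_n])$

$\le \lim_{n \to \infty} \mathbb{E}[f(X_n)]$

$= \mathbb{E}[f(X)]$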
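The simple-function step of the proof is just a finite convex combination, so it can be checked exactly. This sketch assumes a fair die and the convex function $f(x) = x^2$; both are illustrative choices and not from the notes.

```python
from fractions import Fraction

values = range(1, 7)
weight = Fraction(1, 6)  # P(A_i) for the partition into single outcomes of a fair die

def f(x):
    """A convex function on the range of X."""
    return x * x

mean = sum(weight * a for a in values)
lhs = f(mean)                               # f(E[X])
rhs = sum(weight * f(a) for a in values)    # E[f(X)]
print(lhs, rhs, lhs <= rhs)
```

For this choice of $f$ the gap between the two sides is exactly the variance of $X$.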

4 Convergence

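Definition: Almost Sure Convergence

If $\{X_n\}_{n=1}^\infty$ is a sequence of r.vs on $(\Omega, \mathcal{F}, \mathbb{P})$ then $X_n$ converges almost surely (a.s.) to a r.v. $X$ on $(\Omega, \mathcal{F}, \mathbb{P})$ if

$\mathbb{P}(\{\omega \in \Omega : \lim_{n \to \infty} X_n(\omega) = X(\omega)\}) = 1$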

Remark:

Almost sure limits are unique only up to equality a.s.

Corollary:

X = Y a.s. iff

P({ω ∈ Ω : X(ω) = Y (ω)}) = 1

Lemma:

$\{X_n\}_{n=1}^\infty$ converges to $X$ a.s. iff

$\mathbb{P}(|X_n - X| > \varepsilon \text{ i.o.}) = 0\ \forall \varepsilon > 0$

Corollary: Stability Properties

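• If $\{X_n\}_{n=1}^\infty$ are r.vs s.t. $X_n \to X$ a.s. and $g : \mathbb{R} \to \mathbb{R}$ is continuous then $g(X_n) \to g(X)$ a.s.

• If $\{X_n\}_{n=1}^\infty, \{Y_n\}_{n=1}^\infty$ are r.vs s.t. $X_n \to X$ a.s. and $Y_n \to Y$ a.s. with $\alpha, \beta \in \mathbb{R}$ then $\alpha X_n + \beta Y_n \to \alpha X + \beta Y$ a.s.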

Definition: Convergence In Probability

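If $\{X_n\}_{n=1}^\infty$, $X$ are r.vs on $(\Omega, \mathcal{F}, \mathbb{P})$ then $X_n \to X$ in probability if $\forall \varepsilon > 0$

$\lim_{n \to \infty} \mathbb{P}(|X_n - X| > \varepsilon) = 0$

Definition: Cauchy Convergence In Probability

If $\{X_n\}_{n=1}^\infty$ are r.vs on $(\Omega, \mathcal{F}, \mathbb{P})$ then $\{X_n\}$ is Cauchy in probability if $\forall \varepsilon > 0$

$\lim_{n,m \to \infty} \mathbb{P}(|X_n - X_m| > \varepsilon) = 0$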

Lemma:

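Let $\{X_n\}_{n=1}^\infty$, $X$ be r.vs on $(\Omega, \mathcal{F}, \mathbb{P})$

If $X_n \to X$ a.s. then $X_n \to X$ in probability

Proof:

$X_n \to X$ a.s. implies $\mathbb{P}(|X_n - X| > \varepsilon \text{ i.o.}) = 0\ \forall \varepsilon > 0$

$\mathbb{P}(|X_n - X| > \varepsilon) \le \mathbb{P}(\sup_{k \ge n} |X_k - X| > \varepsilon)$

hence we have

$\lim_{n \to \infty} \mathbb{P}(|X_n - X| > \varepsilon) \le \lim_{n \to \infty} \mathbb{P}(\sup_{k \ge n} |X_k - X| > \varepsilon)$

$= \mathbb{P}\left(\bigcap_{n=1}^\infty \{\sup_{k \ge n} |X_k - X| > \varepsilon\}\right)$ since these events are decreasing in $n$

$= \mathbb{P}(|X_n - X| > \varepsilon \text{ i.o.})$

$= 0$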
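The converse of this lemma fails in general. A classical counterexample (not stated in the notes, so treat it as an added illustration) is the "typewriter" sequence on $\Omega = [0, 1)$ with Lebesgue measure: for $n = 2^m + k$ with $0 \le k < 2^m$ let $X_n = 1_{[k/2^m, (k+1)/2^m)}$. The sketch below computes $\mathbb{P}(X_n \ne 0)$ and counts how often a fixed $\omega$ is hit.

```python
from fractions import Fraction

def interval(n):
    """Support [a, b) of X_n = 1_[a, b) for n = 2**m + k with 0 <= k < 2**m."""
    m = n.bit_length() - 1
    k = n - 2**m
    return Fraction(k, 2**m), Fraction(k + 1, 2**m)

def X(n, omega):
    a, b = interval(n)
    return 1 if a <= omega < b else 0

def prob_nonzero(n):
    a, b = interval(n)
    return b - a  # Lebesgue measure of {X_n = 1}

omega = Fraction(1, 3)
hits = [n for n in range(1, 2**10) if X(n, omega) == 1]
print(prob_nonzero(1000), len(hits))
```

The probability of a non-zero value shrinks like $2^{-m}$, so $X_n \to 0$ in probability, yet every block $2^m \le n < 2^{m+1}$ contains exactly one index with $X_n(\omega) = 1$, so $X_n(\omega)$ does not converge for any $\omega$.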

Lemma:

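Let $\{X_n\}_{n=1}^\infty$ be r.vs on $(\Omega, \mathcal{F}, \mathbb{P})$

$X_n \to X$ in probability for some r.v. $X$ iff $\{X_n\}$ is Cauchy in probability.

Proof:

Suppose $X_n \to X$ in probability.

then

$|X_n - X_m| \le |X_n - X| + |X - X_m|$

$\{|X_n - X_m| > \varepsilon\} \subseteq \{|X_n - X| > \varepsilon/2\} \cup \{|X - X_m| > \varepsilon/2\}$

$\mathbb{P}(|X_n - X_m| > \varepsilon) \le \mathbb{P}(|X_n - X| > \varepsilon/2) + \mathbb{P}(|X - X_m| > \varepsilon/2)$

$\lim_{n,m \to \infty} \mathbb{P}(|X_n - X_m| > \varepsilon) \le \lim_{n,m \to \infty} \left[\mathbb{P}(|X_n - X| > \varepsilon/2) + \mathbb{P}(|X - X_m| > \varepsilon/2)\right] = 0$

Now suppose $\{X_n\}$ is Cauchy in probability

For any $k \ge 1$ choose $\varepsilon = 2^{-k}$

$\exists m_k$ s.t. $\forall n > m \ge m_k$ we have that

$\mathbb{P}(|X_n - X_m| > 2^{-k}) < 2^{-k}$

Set $n_1 = m_1$ and recursively define $n_{k+1} = \max\{n_k + 1, m_{k+1}\}$

Define $X'_k := X_{n_k}$

Define $A_k := \{|X'_{k+1} - X'_k| > 2^{-k}\}$

then $\sum_{k=1}^\infty \mathbb{P}(A_k) < \infty$, since $n_{k+1} > n_k \ge m_k$ gives $\mathbb{P}(A_k) < 2^{-k}$

then by Borel-Cantelli there is a set $A$ with $\mathbb{P}(A) = 0$ s.t. $\forall \omega \in \Omega \setminus A$ there exists $K_0 = K_0(\omega)$ with

$|X'_{k+1}(\omega) - X'_k(\omega)| \le 2^{-k}\ \forall k \ge K_0$

hence $\forall \omega \in \Omega \setminus A$

$\lim_{n \to \infty} \sup_{m > n} |X'_m - X'_n| \le \lim_{n \to \infty} \sum_{k=n}^\infty |X'_{k+1} - X'_k|$

$\le \lim_{n \to \infty} \sum_{k=n}^\infty 2^{-k}$

$= 0$

hence

$\mathbb{P}(\limsup_n X'_n = \liminf_n X'_n,\ |\liminf_n X'_n| < \infty) = 1$

Define $X := \liminf_n X'_n$; then $X_{n_k} \to X$ a.s.

By the previous lemma we have that $X_{n_k} \to X$ in probability

hence $\forall \varepsilon > 0$

$\lim_{k \to \infty} \mathbb{P}(|X_k - X| > \varepsilon) \le \lim_{k \to \infty} \left(\mathbb{P}(|X_k - X_{n_k}| > \varepsilon/2) + \mathbb{P}(|X_{n_k} - X| > \varepsilon/2)\right) = 0$

where the first term vanishes by the Cauchy property, as $n_k \ge k$.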

Lemma:

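Let $\{X_n\}_{n=1}^\infty$, $X$ be random variables on $(\Omega, \mathcal{F}, \mathbb{P})$

If $\sum_{n=1}^\infty \mathbb{P}(|X_n - X| > \varepsilon) < \infty\ \forall \varepsilon > 0$ then

$X_n \to X$ a.s.

Proof:

By Borel-Cantelli $\mathbb{P}(|X_n - X| > \varepsilon \text{ i.o.}) = 0\ \forall \varepsilon > 0$, which is equivalent to $X_n \to X$ a.s. by the lemma above.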

Lemma:

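If $\{X_n\}_{n=1}^\infty$, $X$ are r.vs then

$X_n \to X$ in probability iff

every subsequence $\{X_{n_k}\}_{k=1}^\infty$ has a further subsequence $\{X_{n_{k_r}}\}_{r=1}^\infty$ s.t. $X_{n_{k_r}} \to X$ a.s.

Proof:

Suppose $X_n \to X$ in probability and let $\{X_{n_k}\}$ be a subsequence

For each $r \in \mathbb{N}$ find $n_{k_r}$ (with $k_r$ increasing in $r$) s.t. $\mathbb{P}(|X_{n_{k_r}} - X| > 1/r) < 2^{-r}$

then

$\sum_{r=1}^\infty \mathbb{P}(|X_{n_{k_r}} - X| > 1/r) < \sum_{r=1}^\infty 2^{-r} < \infty$

moreover $\forall \varepsilon > 0$

$\sum_{r > 1/\varepsilon} \mathbb{P}(|X_{n_{k_r}} - X| > \varepsilon) \le \sum_{r > 1/\varepsilon} \mathbb{P}(|X_{n_{k_r}} - X| > 1/r)$

$\le \sum_{r > 1/\varepsilon} 2^{-r}$

$< \infty$

hence by the previous lemma the sequence $\{X_{n_{k_r}}\}$ converges to $X$ a.s.

Conversely assume that every subsequence has a further subsequence converging to $X$ a.s.

Fix $\varepsilon > 0$

$b_n := \mathbb{P}(|X_n - X| > \varepsilon) \in [0, 1]$, which is compact, hence

$\exists b_{n_k}$ a converging subsequence

By our assumption $X_{n_k}$ has a subsequence $X_{n_{k_r}} \to X$ a.s.

$\lim_{r \to \infty} b_{n_{k_r}} = \lim_{r \to \infty} \mathbb{P}(|X_{n_{k_r}} - X| > \varepsilon) = 0$

since a.s. convergence implies convergence in probability.

Hence since $b_{n_k}$ is convergent we must have that $\lim_{k \to \infty} b_{n_k} = 0$

Hence every subsequence of $b_n$ which converges does so to 0, and since $b_n$ is bounded this gives $b_n \to 0$, i.e. $X_n \to X$ in probability.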

Theorem: The Weak Law Of Large Numbers

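If $\{X_n\}_{n=1}^\infty$ are i.i.d. r.vs with $X_i \in L^1(\Omega, \mathcal{F}, \mathbb{P})$ then

$\lim_{n \to \infty} \sum_{k=1}^n \frac{X_k}{n} = \mathbb{E}[X_1]$ in probability

Proof:

Write

$X^*_k := X_k 1_{\{|X_k| \le k^{1/4}\}}$

$X'_k := X_k 1_{\{|X_k| > k^{1/4}\}}$

$Y^*_n := \sum_{k=1}^n \frac{X^*_k - \mathbb{E}[X^*_k]}{n}$

$Y'_n := \sum_{k=1}^n \frac{X'_k - \mathbb{E}[X'_k]}{n}$

Since $Y^*_n + Y'_n = \sum_{k=1}^n \frac{X_k - \mathbb{E}[X_k]}{n}$, it suffices to show that $Y^*_n, Y'_n \to 0$ in probability.

$\mathrm{Var}[X^*_k] \le \mathbb{E}[(X^*_k)^2] \le k^{1/2}$

$\mathrm{Var}[Y^*_n] = \sum_{k=1}^n \frac{\mathrm{Var}[X^*_k]}{n^2}$ by independence

$\le \sum_{k=1}^n \frac{k^{1/2}}{n^2}$

$\le \sum_{k=1}^n \frac{n^{1/2}}{n^2}$

$= n^{-1/2}$

By Chebyshev's inequality

$\mathbb{P}(|Y^*_n| > \varepsilon) \le \frac{\mathrm{Var}[Y^*_n]}{\varepsilon^2}$

but

$\lim_{n \to \infty} \frac{\mathrm{Var}[Y^*_n]}{\varepsilon^2} = 0$

hence $Y^*_n \to 0$ in probability

$\mathbb{E}[|X'_k|] = \mathbb{E}[|X_1| 1_{\{|X_1| > k^{1/4}\}}] \to 0$

by MCT applied to $|X_1| 1_{\{|X_1| \le k^{1/4}\}} \uparrow |X_1|$

hence by Markov's inequality

$\mathbb{P}(|Y'_n| \ge \varepsilon) \le \frac{\mathbb{E}[|Y'_n|]}{\varepsilon} \le \frac{2}{n} \sum_{k=1}^n \frac{\mathbb{E}[|X'_k|]}{\varepsilon}$

$\lim_{n \to \infty} \mathbb{P}(|Y'_n| \ge \varepsilon) = 0$, since averages of a sequence tending to 0 also tend to 0

hence $Y'_n \to 0$ in probability.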

Theorem: The Strong Law Of Large Numbers

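Let $\{X_n\}_{n=1}^\infty$ be independent r.vs s.t.

• $\mathbb{E}[|X_n|^2] < \infty\ \forall n$

• $v := \sup_n \mathrm{Var}[X_n] < \infty$

then

$\sum_{k=1}^n \frac{X_k - \mathbb{E}[X_k]}{n} \to 0$ a.s.

Proof:

WLOG assume $\mathbb{E}[X_k] = 0$

Define $S_n = \sum_{i=1}^n \frac{X_i}{n}$

Notice that $S_n \to 0$ in probability, so we want a subsequence $S_{n_k} \to 0$ a.s.

$\mathbb{P}(|S_{n^2}| > \varepsilon) \le \frac{\mathrm{Var}[S_{n^2}]}{\varepsilon^2} \le \frac{v}{n^2 \varepsilon^2}$

hence

$\sum_{n=1}^\infty \mathbb{P}(|S_{n^2}| > \varepsilon) < \infty\ \forall \varepsilon > 0$

so we have that $S_{n^2} \to 0$ a.s.

For $m \in \mathbb{N}$ let $n = n_m$ s.t.

$n^2 \le m < (n + 1)^2$

Write $y_k = k S_k = \sum_{i=1}^k X_i$

$\mathbb{P}(|y_m - y_{n^2}| > \varepsilon n^2) \le \varepsilon^{-2} n^{-4}\, \mathrm{Var}\left[\sum_{i=n^2+1}^m X_i\right]$

$\le \varepsilon^{-2} n^{-4} v (m - n^2)$

$\sum_{m=1}^\infty \mathbb{P}(|y_m - y_{n_m^2}| > \varepsilon n_m^2) \le \frac{v}{\varepsilon^2} \sum_{n=1}^\infty \sum_{m=n^2}^{(n+1)^2 - 1} \frac{m - n^2}{n^4}$

$= \frac{v}{\varepsilon^2} \sum_{n=1}^\infty \sum_{k=1}^{2n} \frac{k}{n^4}$

$= \frac{v}{\varepsilon^2} \sum_{n=1}^\infty \frac{2n(2n + 1)}{2n^4}$

$\le \frac{v}{\varepsilon^2} \sum_{n=1}^\infty \frac{3}{n^2}$

$< \infty$

Hence by Borel-Cantelli $|y_m - y_{n_m^2}| / n_m^2 \to 0$ a.s. Since the intersection of two sets of full measure is also a set of full measure,

we get that

$|S_m| = |y_m / m| \le |y_m / n^2| \le \frac{|y_m - y_{n^2}|}{n^2} + |S_{n^2}| \to 0$ a.s.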

Definition: Absolute Moment

Let X be a random variable on probability space (Ω, F, P)

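then for $p \in [1, \infty)$ the $p$th absolute moment of $X$ is defined as

$\mathbb{E}[|X|^p] = \int |X|^p\, d\mathbb{P}$

Definition: Lp Space

$\mathcal{L}^p(\Omega, \mathcal{F}, \mathbb{P}) := \{X : \Omega \to \mathbb{R} : X \text{ measurable},\ \mathbb{E}[|X|^p] < \infty\}$

Definition: Lp Norm

The $L^p$ norm is defined as

$\|X\|_p := \mathbb{E}[|X|^p]^{1/p}$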

Lemma: Hölder's Inequality

For $p, q \in (1, \infty)$ s.t. $\frac{1}{p} + \frac{1}{q} = 1$

we have that for $X \in L^p$, $Y \in L^q$

$\mathbb{E}[|XY|] \le \|X\|_p \|Y\|_q$

moreover this holds for $p = 1$, $q = \infty$ where

$\|Y\|_\infty := \inf\{s \ge 0 : \mathbb{P}(|Y| > s) = 0\}$

Lemma:

For p ∈ [1, ∞], X, Y ∈ Lp we have

||X + Y ||p ≤ ||X||p + ||Y ||p

Corollary:

||X||p = 0 =⇒ X = 0 a.s.

Definition: Quotient Space

Define $N_0 = \{X \in \mathcal{L}^p : X = 0\ \mathbb{P}\text{-a.s.}\}$

then the quotient space of $\mathcal{L}^p$ is

$L^p = \mathcal{L}^p / N_0$

Definition: Equivalence Class

We denote the equivalence class of X ∈ Lp to be

[X] := {Y : X = Y a.s.}

Definition: Convergence in Lp

Let $p \in [1, \infty]$ and $\{X_n\}_{n=1}^\infty, X \in L^p$

then

$X_n \to X$ in $L^p$

if

$\lim_{n \to \infty} \|X_n - X\|_p = 0$

Definition: Cauchy Sequence

$\{X_n\}_{n=1}^\infty$ is a Cauchy sequence in $L^p$ if

$\forall \varepsilon > 0\ \exists N \in \mathbb{N}$ s.t. $\forall n, m \ge N$ we have that

$\|X_n - X_m\|_p < \varepsilon$

Theorem:

For $p \in [1, \infty]$ let $\{X_n\}_{n=1}^\infty \subseteq L^p$ be a Cauchy sequence in $L^p$; then

$\exists X \in L^p$ s.t. $X_n \to X$ in $L^p$

Lemma:

If 1 ≤ s ≤ r then

Xn → X in Lr =⇒ Xn → X in Ls

Proof:

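For $s = 1$, $r = 2$

$\mathbb{E}[|X_n - X|] = \int |X_n - X|\, d\mathbb{P}$

$= \int |X_n - X| \cdot 1\, d\mathbb{P}$

$\le \left(\int |X_n - X|^2\, d\mathbb{P}\right)^{1/2} \left(\int 1^2\, d\mathbb{P}\right)^{1/2}$ by the Cauchy-Schwarz inequality

$= \mathbb{E}[|X_n - X|^2]^{1/2}$

$\lim_{n \to \infty} \mathbb{E}[|X_n - X|^2] = 0 \implies \lim_{n \to \infty} \mathbb{E}[|X_n - X|^2]^{1/2} = 0$

hence indeed the lemma holds for $s = 1$, $r = 2$. The general case follows in the same way from Hölder's inequality applied to $|X_n - X|^s \cdot 1$ with exponent $r/s$.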

Lemma:

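Let $\{X_n\}_{n=1}^\infty$, $X$ be r.vs s.t. $X_n \to X$ in $L^p$ for $p \ge 1$; then

$X_n \to X$ in probability.

Proof:

Let $\varepsilon > 0$

$\mathbb{P}(|X_n - X| > \varepsilon) = \mathbb{P}(|X_n - X|^p > \varepsilon^p)$

$\le \frac{\mathbb{E}[|X_n - X|^p]}{\varepsilon^p}$ by Markov's inequality

$\lim_{n \to \infty} \frac{\mathbb{E}[|X_n - X|^p]}{\varepsilon^p} = 0$ by convergence in $L^p$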

Lemma:

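A random variable $X$ on $(\Omega, \mathcal{F}, \mathbb{P})$ belongs to $L^1(\Omega, \mathcal{F}, \mathbb{P})$ iff

$\lim_{k \to \infty} \int_{\{|X| > k\}} |X|\, d\mathbb{P} = 0$

Definition: Uniformly Integrable

A set of random variables $\mathcal{C}$ on $(\Omega, \mathcal{F}, \mathbb{P})$ is uniformly integrable if

$\lim_{k \to \infty} \sup_{X \in \mathcal{C}} \int_{\{|X| > k\}} |X|\, d\mathbb{P} = 0$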
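The supremum in the definition matters: a family can be bounded in $L^1$ without being uniformly integrable. The sketch below compares two illustrative families on $[0, 1]$ with Lebesgue measure (neither is from the notes): $X_n = n 1_{[0, 1/n]}$ and the bounded family $X_n = 2 \cdot 1_{[0, 1/n]}$. The index set is truncated at `N`, which is an assumption made only so that the supremum is computable.

```python
from fractions import Fraction

def tail_expectation(value, mass, k):
    """E[|X|; |X| > k] for X taking `value` with probability `mass` and 0 otherwise."""
    return value * mass if value > k else Fraction(0)

def sup_tail(family, k):
    """Supremum over the (finite, truncated) family of the tail expectations at level k."""
    return max(tail_expectation(v, p, k) for v, p in family)

N = 1000  # truncation of the index set, for illustration only
spiky = [(Fraction(n), Fraction(1, n)) for n in range(1, N + 1)]    # X_n = n on a set of mass 1/n
bounded = [(Fraction(2), Fraction(1, n)) for n in range(1, N + 1)]  # X_n = 2 on a set of mass 1/n

for k in (1, 10, 100):
    print(k, sup_tail(spiky, k), sup_tail(bounded, k))
```

For the first family every $X_n$ with $n > k$ still carries tail mass 1, so the supremum does not decay as $k$ grows (as long as $k < N$ in the truncated version), whereas for the bounded family it is 0 once $k \ge 2$.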

Theorem:

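If $X, \{X_n\}_{n=1}^\infty \subseteq L^1(\Omega, \mathcal{F}, \mathbb{P})$ then

$X_n \to X$ in $L^1$ iff

• $X_n \to X$ in probability

• $\{X_n\}_{n=1}^\infty$ is uniformly integrable.

Proof:

$X_n \to X$ in $L^1 \implies X_n \to X$ in probability by a previous lemma.

Moreover, if $X_n \to X$ in $L^1$ then $\{X_n\}$ is uniformly integrable: we have

$\int_{\{|X_n| > k\}} |X_n|\, d\mathbb{P} \le \mathbb{E}[|X_n - X|] + \int_{\{|X_n| > k\}} |X|\, d\mathbb{P}$

where the first term is small for all $n \ge N$, and the second is small uniformly in $n$ for large $k$ because $\sup_n \mathbb{P}(|X_n| > k) \le \sup_n \mathbb{E}[|X_n|]/k \to 0$ and $X \in L^1$ (absolute continuity of the integral). The finitely many $X_n$ with $n < N$ are handled by the previous lemma.

Assume that $X_n \to X$ in probability and $\{X_n\}_{n=1}^\infty$ is uniformly integrable.

For $k > 0$ define

$\varphi_k(x) = \begin{cases} k & x > k \\ x & x \in [-k, k] \\ -k & x < -k \end{cases}$

then we have that

$\mathbb{E}[|X_n - X|] \le \mathbb{E}[|X_n - \varphi_k(X_n)|] + \mathbb{E}[|\varphi_k(X_n) - \varphi_k(X)|] + \mathbb{E}[|\varphi_k(X) - X|]$

Notice that

$|\varphi_k(X_n) - X_n| = 1_{\{|X_n| > k\}} \big|k - |X_n|\big| \le 2|X_n| 1_{\{|X_n| > k\}}$

Hence let $\varepsilon > 0$; then by uniform integrability we have that

$\exists k_1 \in [0, \infty)$ s.t. $\forall k \ge k_1$

$\sup_n \int_{\{|X_n| > k\}} |X_n|\, d\mathbb{P} \le \varepsilon/6$

hence $\forall n$ we have that $k \ge k_1 \implies$

$\mathbb{E}[|X_n - \varphi_k(X_n)|] \le \sup_n 2 \int_{\{|X_n| > k\}} |X_n|\, d\mathbb{P} \le \varepsilon/3$

Since $X \in L^1$ we have that

$\lim_{k \to \infty} \int_{\{|X| > k\}} |X|\, d\mathbb{P} = 0$

hence $\exists k_2 \in [0, \infty)$ s.t. $\forall k > k_2$

$\mathbb{E}[|X - \varphi_k(X)|] \le \varepsilon/3$

Choose $k_0 > \max\{k_1, k_2\}$

$\mathbb{P}(|\varphi_{k_0}(X_n) - \varphi_{k_0}(X)| > \varepsilon) \le \mathbb{P}(|X_n - X| > \varepsilon)$ since $\varphi_{k_0}$ is 1-Lipschitz

hence since $X_n \to X$ in probability we have that $\varphi_{k_0}(X_n) \to \varphi_{k_0}(X)$ in probability

Since $|\varphi_{k_0}| \le k_0$, the Dominated Convergence Theorem (which also holds under convergence in probability) gives

$\exists N \in \mathbb{N}$ s.t. $\forall n \ge N$ we have that

$\mathbb{E}[|\varphi_{k_0}(X_n) - \varphi_{k_0}(X)|] < \varepsilon/3$

hence indeed for $k = k_0$ and $n \ge N$ we have that

$\mathbb{E}[|X_n - X|] \le \mathbb{E}[|X_n - \varphi_k(X_n)|] + \mathbb{E}[|\varphi_k(X_n) - \varphi_k(X)|] + \mathbb{E}[|\varphi_k(X) - X|] \le \varepsilon$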

Definition: Probability Set

We define Prob(R) to be the set of probability measures on (R, B(R))

Definition: Continuous Bounded Functions

We define Cb (R) to be the set of continuous bounded functions f : R → R

Definition: Weak Convergence

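• If $\mu, \{\mu_n\}_{n=1}^\infty \subseteq \mathrm{Prob}(\mathbb{R})$ then

$\mu_n \to \mu$ weakly if

$\lim_{n \to \infty} \int f\, d\mu_n = \int f\, d\mu\ \forall f \in C_b(\mathbb{R})$

• If $F, \{F_n\}_{n=1}^\infty$ are distribution functions then

$F_n \to F$ weakly if $\mu_n \to \mu$ weakly,

where $\mu, \{\mu_n\}_{n=1}^\infty$ are the associated probability measures

$F(x) = \mu((-\infty, x])$

• If $X, \{X_n\}_{n=1}^\infty$ are random variables then

$X_n \to X$ weakly if $\mathbb{P}_{X_n} \to \mathbb{P}_X$ weakly,

where $\mathbb{P}_X(A) = \mathbb{P}(X \in A)$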

Definition: Convergence In Distribution

• If $F, \{F_n\}_{n=1}^\infty$ are distribution functions then

$F_n \to F$ in distribution if for all continuity points $x$ of $F$

$\lim_{n \to \infty} F_n(x) = F(x)$

• If $X, \{X_n\}_{n=1}^\infty$ are random variables then

$X_n \to X$ in distribution if $F_{X_n} \to F_X$ in distribution,

where $F_X(x) = \mathbb{P}(X \le x)$
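The restriction to continuity points is essential. As an added illustration (not from the notes), take the constants $X_n = 1/n$ and $X = 0$: the distribution functions converge everywhere except at $x = 0$, which is exactly the discontinuity of $F_X$. The function names below are hypothetical.

```python
from fractions import Fraction

def F_n(n, x):
    """Distribution function of the constant random variable X_n = 1/n."""
    return 1 if x >= Fraction(1, n) else 0

def F(x):
    """Distribution function of X = 0; its only discontinuity is at x = 0."""
    return 1 if x >= 0 else 0

for x in (Fraction(-1, 10), Fraction(0), Fraction(1, 10)):
    tail = [F_n(n, x) for n in range(1000, 1005)]
    print(x, tail, F(x))
```

At $x = 0$ every $F_n$ equals 0 while $F(0) = 1$, yet $X_n \to X$ in distribution because 0 is not a continuity point of $F$.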

Theorem:

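If $\{F_n\}_{n=1}^\infty$, $F$ are distribution functions of probability measures $\{\mu_n\}_{n=1}^\infty$, $\mu$ then

$F_n \to F$ weakly iff $F_n \to F$ in distribution.

Proof:

Suppose $F_n \to F$ weakly

Fix $x \in \mathbb{R}$ s.t. $F$ is continuous at $x$ and let $\delta > 0$

Define

$h(y) = \begin{cases} 1 & y \le x \\ 1 - \frac{y - x}{\delta} & y \in (x, x + \delta) \\ 0 & y \ge x + \delta \end{cases}$

and

$g(y) = \begin{cases} 1 & y \le x - \delta \\ \frac{x - y}{\delta} & y \in (x - \delta, x) \\ 0 & y \ge x \end{cases}$

then since $F_n(x) = \int 1_{(-\infty, x]}\, d\mu_n$ we have that

$\int g\, d\mu_n \le F_n(x) \le \int h\, d\mu_n$

$\limsup_n F_n(x) \le \limsup_n \int h\, d\mu_n = \int h\, d\mu \le F(x + \delta)$

$\liminf_n F_n(x) \ge \liminf_n \int g\, d\mu_n = \int g\, d\mu \ge F(x - \delta)$

by weak convergence, since $h, g \in C_b(\mathbb{R})$

By continuity of $F$ at $x$ we have that

$\lim_{\delta \to 0} F(x + \delta) = F(x) = \lim_{\delta \to 0} F(x - \delta)$

hence

$\lim_{n \to \infty} F_n(x) = F(x)$

hence $F_n \to F$ in distribution.

Suppose $F_n \to F$ in distribution.

then

$\lim_{n \to \infty} \int 1_{(-\infty, x]}\, d\mu_n = \int 1_{(-\infty, x]}\, d\mu$ for all continuity points $x$ of $F$

Since the continuity points of $F$ are dense, any continuous bounded function can be approximated by linear combinations of indicator functions of intervals whose endpoints are continuity points; by linearity of the integral we indeed have that $F_n \to F$ weakly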

Lemma:

Let $\{X_n\}_{n=1}^\infty$, $X$ be random variables on $(\Omega, \mathcal{F}, \mathbb{P})$; if $X_n \to X$ in probability then $X_n \to X$ weakly

Proof:

There are two different proofs of this. In both, let $f \in C_b(\mathbb{R})$.

• Since $X_n \to X$ in probability and $f$ is continuous we have that $f(X_n) \to f(X)$ in probability.

$f(X_n)$ is bounded by the properties of $f$, hence by the dominated convergence theorem

$\lim_{n \to \infty} \int f(X_n)\, d\mathbb{P} = \int f(X)\, d\mathbb{P}$

hence indeed $X_n \to X$ weakly.

• Since $f$ is bounded we know that $\exists K > 0$ s.t. $|f(X_n)| \le K\ \forall n$, hence

$\lim_{k \to \infty} \sup_n \int_{\{|f(X_n)| > k\}} |f(X_n)|\, d\mathbb{P} = 0$

so $\{f(X_n)\}$ is uniformly integrable.

Since $f$ is continuous and $X_n \to X$ in probability we have that $f(X_n) \to f(X)$ in probability.

This along with uniform integrability implies that $f(X_n) \to f(X)$ in $L^1$,

i.e. $\lim_{n \to \infty} \mathbb{E}[|f(X_n) - f(X)|] = 0$

hence indeed we have weak convergence.

Corollary:

Let $\{X_n\}_{n=1}^\infty$, $X$ be random variables on $(\Omega, \mathcal{F}, \mathbb{P})$; if $X_n \to X$ a.s. then $X_n \to X$ weakly

Definition: Conditional Probability

If (Ω, F, P) is a probability space with A, B ∈ F

then the conditional probability of $B$ given that $A$ has occurred (where $\mathbb{P}(A) > 0$) is

$\mathbb{P}(B|A) = \frac{\mathbb{P}(A \cap B)}{\mathbb{P}(A)}$

Definition: Conditional Expectation

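If $(\Omega, \mathcal{F}, \mathbb{P})$ is a probability space and $A \in \mathcal{F}$ has strictly positive probability

then the conditional expectation of a r.v. $X \in L^1$ given that $A$ has occurred is

$\mathbb{E}[X|A] = \frac{1}{\mathbb{P}(A)} \int_A X\, d\mathbb{P}$

Moreover if $\mathcal{F}_0 = \sigma(\mathcal{G})$ for a countable partition $\mathcal{G} = \{A_i\}_{i=1}^\infty$ of $\Omega$ into measurable sets

then

$\mathbb{E}[X|\mathcal{F}_0] = \sum_{i : \mathbb{P}(A_i) > 0} \frac{1_{A_i}}{\mathbb{P}(A_i)} \int_{A_i} X\, d\mathbb{P}$

is a random variable.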

Theorem:

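Let $X \in L^1(\Omega, \mathcal{F}, \mathbb{P})$, $\mathcal{F}_0 = \sigma(\mathcal{G})$ s.t. $\mathcal{G} = \{A_i\}_{i=1}^\infty$ is a countable partition of $\Omega$

Then $\mathbb{E}[X|\mathcal{F}_0]$ has the following properties:

• $\mathbb{E}[X|\mathcal{F}_0]$ is $\mathcal{F}_0$-measurable.

• $\forall A \in \mathcal{F}_0$ we have that

$\mathbb{E}[X 1_A] = \mathbb{E}[1_A \mathbb{E}[X|\mathcal{F}_0]]$

Proof:

• This is trivial since $\frac{\mathbb{E}[X; A_i]}{\mathbb{P}(A_i)}$ is constant, $1_{A_i}$ are measurable since $A_i \in \mathcal{F}_0$, and a countable sum of measurable functions is measurable.

• Let $A \in \mathcal{G}$ with $\mathbb{P}(A) > 0$

$\mathbb{E}[1_A \mathbb{E}[X|\mathcal{F}_0]] = \mathbb{E}\left[1_A \sum_{i : \mathbb{P}(A_i) > 0} \frac{1_{A_i}}{\mathbb{P}(A_i)} \mathbb{E}[X; A_i]\right]$

$= \mathbb{E}\left[\frac{1_A}{\mathbb{P}(A)} \mathbb{E}[X; A]\right]$ since $\{A_i\}_{i=1}^\infty$ form a partition

$= \frac{\mathbb{E}[1_A] \mathbb{E}[X; A]}{\mathbb{P}(A)}$

$= \mathbb{E}[X; A]$

$= \mathbb{E}[X 1_A]$

This extends to all $A \in \mathcal{F}_0$, since every element of $\mathcal{F}_0$ is a countable disjoint union of elements of $\mathcal{G}$. If $\mathbb{P}(A) = 0$ then $X 1_A = 0$ and $\mathbb{E}[X|\mathcal{F}_0] 1_A = 0$ a.s., hence both sides of the equation are zero.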

Corollary:

Taking $A = \Omega$ in the second property we have that

E[X] = E[E[X|F0 ]]
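On a finite probability space the partition formula can be evaluated directly. The following sketch assumes a fair die, the variable $X(\omega) = \omega^2$ and the partition $\{1, 2\}, \{3, 4, 5\}, \{6\}$; all three are illustrative choices, and the helper names `cond_exp` and `expect` are hypothetical.

```python
from fractions import Fraction

omega = range(1, 7)                       # outcomes of a fair die
P = {w: Fraction(1, 6) for w in omega}
X = {w: w * w for w in omega}             # X(w) = w^2, an arbitrary integrable variable
partition = [{1, 2}, {3, 4, 5}, {6}]      # generates the sub-sigma-algebra F_0

def cond_exp(X, partition):
    """E[X | F_0] as a function on omega: constant on each block of positive mass."""
    result = {}
    for block in partition:
        mass = sum(P[w] for w in block)
        if mass > 0:
            avg = sum(X[w] * P[w] for w in block) / mass
            for w in block:
                result[w] = avg
    return result

def expect(Y, A=omega):
    """E[Y; A], i.e. the integral of Y over the event A."""
    return sum(Y[w] * P[w] for w in A)

Y = cond_exp(X, partition)
print(Y[1], Y[3], Y[6], expect(X), expect(Y))
```

The conditional expectation is the block average of $X$, and integrating it over $\Omega$, or over any union of blocks such as $\{1, 2, 6\}$, reproduces the corresponding integral of $X$.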

Definition: Version Of Conditional Expectation

A random variable X0 ∈ L1 (Ω, F, P) is called a version of E[X|F0 ] if

• X0 is F0 measurable

• ∀A ∈ F0 we have

E[1A X] = E[1A X0 ]

Theorem:

Let X1 , X2 ∈ L1 (Ω, F, P) then

•

E[X1 + X2 |F0 ] = E[X1 |F0 ] + E[X2 |F0 ]

P a.s.

• ∀c ∈ R

E[cX|F0 ] = cE[X|F0 ]

P a.s.

Proof:

For any choice of versions E[Xi |F0 ] we have that E[X1 |F0 ] + E[X2 |F0 ] is F0 measurable, hence for A ∈ F0

E[1A (E[X1 |F0 ] + E[X2 |F0 ])] = E[1A E[X1 |F0 ] + 1A E[X2 |F0 ]]

= E[1A E[X1 |F0 ]] + E[1A E[X2 |F0 ]]    by linearity of expectation

= E[1A X1 ] + E[1A X2 ]

= E[1A (X1 + X2 )]

= E[1A E[X1 + X2 |F0 ]]

Theorem:

Let X1 , X2 ∈ L1 (Ω, F, P) s.t. X1 ≤ X2

then

E[X1 |F0 ] ≤ E[X2 |F0 ]    P a.s.

Proof:

Let B0 := {ω ∈ Ω : E[X1 |F0 ] > E[X2 |F0 ]} ∈ F0 we want to show that P(B0 ) = 0

0 ≤ E[1B0 (E[X1 |F0 ] − E[X2 |F0 ])]

= E[1B0 (X1 − X2 )]

≤0

hence P(B0 ) = 0 as required.

Theorem:

If Z, W are versions of the conditional expectation E[X|F0 ] for some X ∈ L1 then Z = W P a.s.

Proof:

Z, W are F0 -measurable so write

A0 := {Z > W } ∈ F0

then we have

E[1A0 (Z − W )] = E[1A0 Z] − E[1A0 W ]

= E[1A0 X] − E[1A0 X]

= 0

since 1A0 (Z − W ) ≥ 0 we have that 1A0 (Z − W ) = 0 a.s.

but since Z > W on A0 it must be that 1A0 = 0 a.s.

hence P(A0 ) = 0

this holds similarly for A1 := {W > Z}

P(Z ≠ W ) = P({Z > W } ∪ {Z < W })

≤ P(A0 ) + P(A1 )

=0

Definition: Respective Density

If µ, ν are measures on a measurable space (Ω, F) then ν has a density with respect to µ if

∃f : Ω → [0, ∞) that is F-measurable s.t.

∀A ∈ F we have

$$\nu(A) = \int_A f\,d\mu$$

Theorem: Radon-Nikodym

If µ, ν are finite measures on measurable space (Ω, F) then the following are equivalent:

• µ(A) = 0 =⇒ ν(A) = 0

• ν has density w.r.t. µ

Lemma:

If 0 ≤ X ∈ L1 and F0 ⊆ F is a sub-σ-algebra then take

µ := P|F0 and

ν : F0 → [0, ∞) s.t. ∀A ∈ F0

$$\nu(A) = \int_A X\,dP$$

then ν(A) = 0 whenever A is µ-null, hence by Radon-Nikodym ν has density g w.r.t. µ

Lemma:

If g is the density of ν w.r.t. µ where

$$\nu(A) := \int_A X\,dP$$

then g is a version of the conditional expectation E[X|F0 ]

Proof:

let A0 ∈ F0

$$
\begin{aligned}
E[g 1_{A_0}] &= \int_{A_0} g\,dP \\
&= \int_{A_0} g\,d\mu \\
&= \int 1_{A_0}\,d\nu \\
&= \int 1_{A_0} X\,dP \\
&= E[1_{A_0} X]
\end{aligned}
$$

Corollary:

If X ∈ L1 then X = X+ − X− and if

g+ , g− are versions of E[X+ |F0 ], E[X− |F0 ] then by linearity

E[X|F0 ] = g+ − g−

Theorem:

If X ∈ L1 (Ω, F, P) and F0 ⊆ F1 ⊆ F are sub-σ-algebras then

X0 := E[E[X|F1 ]|F0 ] = E[X|F0 ]

Proof:

We want to show that E[X0 1A0 ] = E[X1A0 ] ∀A0 ∈ F0

E[X0 1A0 ] = E[1A0 E[E[X|F1 ]|F0 ]]

= E[1A0 E[X|F1 ]]    since A0 ∈ F0

= E[X1A0 ]    since A0 ∈ F1
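
The tower property can be checked exactly on a small finite space with two nested partitions. The sketch below is only an illustration under assumed data: the die space, X(w) = w², the two partitions and the helper `cond_exp` are all hypothetical choices.

```python
from fractions import Fraction

omega = [1, 2, 3, 4, 5, 6]
prob = {w: Fraction(1, 6) for w in omega}
X = {w: Fraction(w * w) for w in omega}

fine = [{1, 2}, {3, 4}, {5, 6}]    # generates F1
coarse = [{1, 2, 3, 4}, {5, 6}]    # generates F0; each cell is a union of F1 cells

def cond_exp(f, cells):
    """Conditional expectation given the sigma-algebra generated by a finite partition."""
    out = {}
    for cell in cells:
        p = sum(prob[w] for w in cell)
        value = sum(f[w] * prob[w] for w in cell) / p
        for w in cell:
            out[w] = value
    return out

inner = cond_exp(X, fine)          # E[X|F1]
tower = cond_exp(inner, coarse)    # E[E[X|F1]|F0]
direct = cond_exp(X, coarse)       # E[X|F0]
```

In this example `tower` and `direct` agree pointwise, as the theorem predicts for F0 ⊆ F1.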

Theorem:

If X ∈ L1 (Ω, F, P) then

E[X|F0 ] ∈ L1 (Ω, F, P)

Proof:

Define A0 := {E[X|F0 ] ≥ 0} ∈ F0

then

E[E[X|F0 ]+ ] = E[E[X|F0 ]1A0 ]

= E[X1A0 ]

since X ∈ L1

<∞

this holds similarly for E[E[X|F0 ]− ]

hence this holds for E[X|F0 ] by linearity.

Theorem:

Let (Ω, F, P) be a probability space with F0 ⊂ F a sub-σ-algebra and X ∈ L1 (Ω, F, P) where F0 ⊥ σ(X)

then

E[X|F0 ] = E[X]    P a.s.

Proof:

E[X] is F0 measurable since constant so let A ∈ F0

E[1A E[X]] = E[X]E[1A ]

since E[X] is constant

= E[1A X]

by independence

= E[1A E[X|F0 ]]

Theorem: MCT For Conditional Expectations

Let $\{X_n\}_{n=1}^{\infty}$ ⊂ L1 be an increasing sequence s.t. Xn → X P-a.s. for some X ∈ L1

then

E[Xn |F0 ] → E[X|F0 ]

both P-a.s. and in L1

Proof:

Since E[Xn |F0 ] is an increasing sequence of random variables ∃Z which is an F0 measurable r.v. s.t.

E[Xn |F0 ] → Z P-a.s.

For A ∈ F0 we have that

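$$
\begin{aligned}
E[Z 1_A] &= E\big[\lim_{n\to\infty} E[X_n|\mathcal{F}_0]\, 1_A\big] \\
&= \lim_{n\to\infty} E\big[E[X_n|\mathcal{F}_0]\, 1_A\big] && \text{by monotone convergence of } E \\
&= \lim_{n\to\infty} E[X_n 1_A] \\
&= E[X 1_A] && \text{by MCT}
\end{aligned}
$$

hence Z = E[X|F0 ] P-a.s.

Moreover, P a.s.

0 ≤ E[X|F0 ] − E[Xn |F0 ] ≤ E[X|F0 ] − E[X1 |F0 ] ∈ L1

hence by the dominated convergence theorem

$$\lim_{n\to\infty} E\big[|E[X|\mathcal{F}_0] - E[X_n|\mathcal{F}_0]|\big] = 0$$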

Theorem: DCT For Conditional Expectations

Let $\{X_n\}_{n=1}^{\infty}$ ⊂ L1 converge to X P-a.s.

If ∃Z ∈ L1 s.t. ∀n ∈ N we have that |Xn | ≤ Z P-a.s. then

• X ∈ L1

• E[Xn |F0 ] → E[X|F0 ] P-a.s.

• E[Xn |F0 ] → E[X|F0 ] in L1

Proof:

• X ∈ L1 since |Xn | ≤ Z P-a.s. ∀n =⇒ |X| ≤ Z P-a.s.

• Define Un := infm≥n Xm , which is an increasing sequence,

and Vn := supm≥n Xm , which is a decreasing sequence,

then Un , Vn → X P-a.s.

moreover

−Z ≤ Un ≤ Xn ≤ Vn ≤ Z

by MCT for conditional expectations we have that

E[Un |F0 ] → E[X|F0 ]

E[Vn |F0 ] → E[X|F0 ]

both P-a.s. and in L1

and by monotonicity of conditional expectations we have that

E[Un |F0 ] ≤ E[Xn |F0 ] ≤ E[Vn |F0 ]

so indeed E[Xn |F0 ] → E[X|F0 ] in L1 and P-a.s.

Theorem:

Let X ∈ L1 (Ω, F, P) and Y be F0 measurable s.t. XY ∈ L1 then

E[XY |F0 ] = Y E[X|F0 ]

Proof:

Suppose Y = 1A for some A ∈ F0

then take B ∈ F0

E[1B Y E[X|F0 ]] = E[1B∩A E[X|F0 ]]

since A ∩ B ∈ F0

= E[1B∩A X]

= E[1B Y X]

= E[1B E[XY |F0 ]]

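hence the theorem holds for indicator functions.

Suppose $Y = \sum_{n=1}^{\infty} \alpha_n 1_{A_n}$ with An ∈ F0

$$
\begin{aligned}
E[XY|\mathcal{F}_0] &= E\Big[\sum_{n=1}^{\infty} \alpha_n 1_{A_n} X \,\Big|\, \mathcal{F}_0\Big] \\
&= \sum_{n=1}^{\infty} \alpha_n E[1_{A_n} X|\mathcal{F}_0] && \text{by linearity} \\
&= \sum_{n=1}^{\infty} \alpha_n 1_{A_n} E[X|\mathcal{F}_0] && \text{by the first part of the theorem} \\
&= Y E[X|\mathcal{F}_0]
\end{aligned}
$$

hence the theorem holds for simple functions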

Suppose Y is positive

let $\{Y_n\}_{n=1}^{\infty}$ be an increasing sequence of simple functions s.t. Yn → Y

|Yn X| ≤ |Y X| ∈ L1

and Yn X → Y X

hence by DCT for conditional expectations we have that

Yn E[X|F0 ] = E[Yn X|F0 ] → E[Y X|F0 ]    P-a.s.

moreover

Yn E[X|F0 ] → Y E[X|F0 ]    P-a.s.

hence the theorem holds for positive functions.

For the general case write Y = Y+ − Y−

E[XY |F0 ] = E[X(Y+ − Y− )|F0 ]

= E[XY+ |F0 ] − E[XY− |F0 ]

= Y+ E[X|F0 ] − Y− E[X|F0 ]

= Y E[X|F0 ]

Lemma:

If X ∈ L1 , F0 ⊆ F is a sub-σ algebra then

||E[X|F0 ]||1 ≤ ||X||1

Proof:

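$$
\begin{aligned}
\|E[X|\mathcal{F}_0]\|_1 &= \int |E[X|\mathcal{F}_0]|\,dP \\
&= \int E[X|\mathcal{F}_0]_+\,dP + \int E[X|\mathcal{F}_0]_-\,dP \\
&= \int E[X|\mathcal{F}_0]\, 1_{\{E[X|\mathcal{F}_0]>0\}}\,dP - \int E[X|\mathcal{F}_0]\, 1_{\{E[X|\mathcal{F}_0]<0\}}\,dP \\
&= E[X 1_{\{E[X|\mathcal{F}_0]>0\}}] - E[X 1_{\{E[X|\mathcal{F}_0]<0\}}] \\
&\le E[|X|] \\
&= \int |X|\,dP \\
&= \|X\|_1
\end{aligned}
$$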

Theorem: Conditional Jensen’s Inequality

Let I ⊂ R be an open interval, ϕ : I → R be convex and X ∈ L1 a r.v. with X : Ω → I s.t. ϕ ◦ X ∈ L1

then

E[ϕ ◦ X|F0 ] ≥ ϕ(E[X|F0 ])

Proof:

From the proof of Jensen's inequality (the supporting line of a convex function, with D− ϕ the left derivative) we have that

ϕ(X) ≥ ϕ(E[X|F0 ]) + D− ϕ(E[X|F0 ])(X − E[X|F0 ])

let An = {ω ∈ Ω : |D− ϕ(E[X|F0 ])| ≤ n} ∈ F0

then, since 1An is F0 measurable,

1An E[ϕ(X)|F0 ] = E[1An ϕ(X)|F0 ]

≥ E[1An ϕ(E[X|F0 ])|F0 ] + E[1An D− ϕ(E[X|F0 ])(X − E[X|F0 ])|F0 ]

= 1An ϕ(E[X|F0 ]) + 1An D− ϕ(E[X|F0 ])E[X − E[X|F0 ]|F0 ]

= 1An ϕ(E[X|F0 ]) + 1An D− ϕ(E[X|F0 ])(E[X|F0 ] − E[X|F0 ])

= 1An ϕ(E[X|F0 ])

this holds for all n and An increases to Ω, hence the inequality holds on Ω

Theorem:

Let p ≥ 1, X ∈ Lp then

||E[X|F0 ]||p ≤ ||X||p

Proof:

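Write ϕ(x) = |x|^p , which is convex, hence by the conditional Jensen's inequality

$$
\begin{aligned}
\|E[X|\mathcal{F}_0]\|_p^p &= \int |E[X|\mathcal{F}_0]|^p\,dP \\
&= \int \varphi(E[X|\mathcal{F}_0])\,dP \\
&\le \int E[\varphi(X)|\mathcal{F}_0]\,dP \\
&= \int E[|X|^p\,|\,\mathcal{F}_0]\,dP \\
&= E[|X|^p] = \|X\|_p^p
\end{aligned}
$$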
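
The contraction property of conditional expectation can be seen numerically on a finite space. The following is a minimal sketch under assumed data (a centred fair die and a two-cell partition, both hypothetical); it compares E[|E[X|F0 ]|^p ] with E[|X|^p ], i.e. the p-th powers of the two norms, for p = 1, 2, 3.

```python
from fractions import Fraction

omega = [1, 2, 3, 4, 5, 6]
prob = {w: Fraction(1, 6) for w in omega}
X = {w: Fraction(2 * w - 7, 2) for w in omega}   # centred die: -5/2, ..., 5/2
cells = [{1, 2, 3, 4}, {5, 6}]                   # partition generating F0

def cond_exp(f, cells):
    """Conditional expectation given the sigma-algebra generated by a finite partition."""
    out = {}
    for cell in cells:
        p = sum(prob[w] for w in cell)
        value = sum(f[w] * prob[w] for w in cell) / p
        for w in cell:
            out[w] = value
    return out

def moment(f, p):
    """E[|f|^p], the p-th power of the L^p norm of f."""
    return sum(abs(f[w]) ** p * prob[w] for w in omega)

ce = cond_exp(X, cells)
# For each p: (E|E[X|F0]|^p, E|X|^p); the first entry should not exceed the second.
moments = {p: (moment(ce, p), moment(X, p)) for p in (1, 2, 3)}
```

Comparing p-th powers avoids taking roots, so the check stays in exact rational arithmetic.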

Corollary:

If (Ω, F), (Ω′, F′) are measurable spaces,

Y : (Ω, F) → (Ω′, F′) is F − F′ measurable and

Z : Ω → R then

Z is σ(Y ) measurable iff

∃g : (Ω′, F′) → (R, B(R)) measurable s.t. Z = g ◦ Y

Proof:

Clearly if ∃g : (Ω′, F′) → (R, B(R)) s.t. Z = g ◦ Y then Z is σ(Y ) measurable since the composition of measurable functions is measurable.

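Suppose Z is simple, i.e.

$$Z = \sum_{k=1}^{n} \alpha_k 1_{A_k} \quad \text{for } \{A_k\}_{k=1}^{n} \subset \sigma(Y)$$

notice that σ(Y ) = {Y⁻¹(A′) : A′ ∈ F′}

hence Ak = Y⁻¹(A′k ) for some A′k ∈ F′

so

$$
\begin{aligned}
Z &= \sum_{k=1}^{n} \alpha_k 1_{A_k} \\
&= \sum_{k=1}^{n} \alpha_k 1_{Y^{-1}(A'_k)} \\
&= \sum_{k=1}^{n} \alpha_k (1_{A'_k} \circ Y) \\
&= \Big(\sum_{k=1}^{n} \alpha_k 1_{A'_k}\Big) \circ Y \\
&= g \circ Y
\end{aligned}
$$

where clearly g is F′ − B(R) measurable since $\{A'_k\}_{k=1}^{n} \subset \mathcal{F}'$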

Suppose Z is positive.

then ∃ $\{Z_n\}_{n=1}^{\infty}$ simple increasing functions s.t. limn→∞ Zn = Z

then ∃ $\{g_n\}_{n=1}^{\infty}$ : (Ω′, F′) → (R, B(R)) s.t. Zn = gn ◦ Y

then g := supn gn is clearly F′ − B(R) measurable and Z = g ◦ Y

For general Z write Z = Z+ − Z−

then ∃g+ , g− : (Ω′, F′) → (R, B(R)) s.t. Z+ = g+ ◦ Y, Z− = g− ◦ Y

so write g = g+ − g−

hence

Z = (g+ − g− ) ◦ Y = g ◦ Y

Definition: Factorised Conditional Expectation

f : (R, B(R)) → (R, B(R)) is a FCE of the r.v. X given the r.v. Y : Ω → R if

f ◦ Y is a version of the conditional expectation of X given Y

i.e.

E[X|Y ] = E[X|σ(Y )] = f (Y )

Corollary:

Let Y : (Ω, F) → (R, B(R)) and X ∈ L1 (Ω, F, P)

then

∃f FCE of X given Y and ∀A ∈ B(R)

$$\int_A f\,dP_Y = E[1_{Y^{-1}(A)} X]$$

Proof:

By the previous lemma

write Z = E[X|Y ] which is σ(Y ) measurable hence indeed

∃f FCE of X given Y

moreover

$$
\begin{aligned}
\int_A f\,dP_Y &= \int 1_{Y^{-1}(A)} X\,dP \\
&= E[1_{Y^{-1}(A)} X]
\end{aligned}
$$

Remark:

E[X|Y = y] is unique PY -a.s.

Theorem:

Let Y : (Ω, F) → (R, B(R)) and X ∈ L1 (Ω, F, P)

If λ, µ are σ-finite measures on (R, B(R)) s.t. (X, Y ) has density g w.r.t. λ ⊗ µ

then

$$f(y) := \frac{\int_{\mathbb{R}} x\,g(x,y)\,d\lambda(x)}{\int_{\mathbb{R}} g(x,y)\,d\lambda(x)}$$

is an FCE of X given Y

Proof:

recall that

$$P((X,Y) \in A_1 \times A_2) = \int_{A_1 \times A_2} g(x,y)\,d(\lambda\otimes\mu)(x,y)$$

$$E[h(X,Y)] = \int_{\mathbb{R}^2} h(x,y)\,g(x,y)\,d(\lambda\otimes\mu)(x,y)$$

Suppose f takes the required form, hence clearly it is Borel measurable.

Let A ∈ B(R)

$$
\begin{aligned}
E[X 1_{Y^{-1}(A)}] &= \int x\,1_A(y)\,g(x,y)\,d(\lambda\otimes\mu)(x,y) \\
&= \int 1_A(y) \int x\,g(x,y)\,d\lambda(x)\,d\mu(y) && \text{by Fubini} \\
&= \int 1_A(y) f(y) \int g(x,y)\,d\lambda(x)\,d\mu(y) \\
&= \int 1_A(y) f(y)\,g(x,y)\,d(\lambda\otimes\mu)(x,y) \\
&= E[1_A(Y) f(Y)]
\end{aligned}
$$

hence indeed f (Y ) = E[X|Y ]

5

Martingales

Definition: Filtration

If (Ω, F) is a measurable space then sub-σ-algebras $\{\mathcal{F}_i\}_{i=1}^{\infty}$ of F are a filtration if Fi ⊆ Fi+1 ⊆ F ∀i

Definition: Adapted

A sequence of random variables $\{X_i\}_{i=1}^{\infty}$ is called adapted to $\{\mathcal{F}_i\}_{i=1}^{\infty}$ if

Xn is Fn -measurable ∀n

Definition: Martingale

If (Ω, F, P) is a probability space and $\{\mathcal{F}_n\}_{n=1}^{\infty}$ is a filtration of (Ω, F) then

$\{X_n\}_{n=1}^{\infty}$ is a martingale if:

• E[|Xn |] < ∞ ∀n

• $\{X_n\}_{n=1}^{\infty}$ is adapted to $\{\mathcal{F}_n\}_{n=1}^{\infty}$

• E[Xn+1 |Fn ] = Xn ∀n

Remark:

If $\{X_n\}_{n=1}^{\infty}$ has the first two properties of a martingale but

E[Xn+1 |Fn ] ≥ Xn then $\{X_n\}_{n=1}^{\infty}$ is a sub-martingale

E[Xn+1 |Fn ] ≤ Xn then $\{X_n\}_{n=1}^{\infty}$ is a super-martingale

Lemma:

If $\{X_n\}_{n=1}^{\infty}$ is a martingale w.r.t. $\{\mathcal{F}_n\}_{n=1}^{\infty}$ then ∀m ≥ 1

E[Xn+m |Fn ] = Xn

Proof:

E[Xn+m |Fn ] = E[E[Xn+m |Fn+m−1 ]|Fn ]    by the tower property, since Fn ⊆ Fn+m−1

= E[Xn+m−1 |Fn ]

= . . . = E[Xn+1 |Fn ]    repeating the same step

= Xn
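
The multi-step martingale property can be verified by exact enumeration for the simple symmetric random walk, with Fn generated by the first n steps. This is a sketch under assumptions: the walk, the horizon `N` and the helper names are illustrative choices, not taken from the notes.

```python
from fractions import Fraction
from itertools import product

N = 5  # hypothetical horizon
paths = list(product([-1, 1], repeat=N))  # all 2^N step sequences, equally likely

def X(path, n):
    """X_n = sum of the first n steps."""
    return sum(path[:n])

def cond_exp_X(n_future, n, prefix):
    """E[X_{n_future} | first n steps == prefix]: average over the equally likely continuations."""
    matching = [p for p in paths if p[:n] == prefix]
    return Fraction(sum(X(p, n_future) for p in matching), len(matching))

# Check E[X_{n+m} | F_n] = X_n on every atom of F_n, for every admissible n and m.
checks = [
    cond_exp_X(n + m, n, prefix) == X(prefix, n)
    for n in range(1, N)
    for m in range(1, N - n + 1)
    for prefix in product([-1, 1], repeat=n)
]
```

Every entry of `checks` should be `True`, since Fn is generated by the finitely many prefixes of length n.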

Theorem:

If $\{X_n\}_{n=1}^{\infty}$ ⊂ L2 is a martingale w.r.t. $\{\mathcal{F}_n\}_{n=1}^{\infty}$ and r ≤ s ≤ t

then

• E[Xr (Xs − Xr )|Fr ] = 0

• E[Xr (Xt − Xs )|Fs ] = 0

• E[(Xt − Xs )(Xs − Xr )|Fs ] = 0

• E[Xr (Xs − Xr )] = 0

• E[Xr (Xt − Xs )] = 0

• E[(Xt − Xs )(Xs − Xr )] = 0

Proof:

•

E[Xr (Xs − Xr )|Fr ] = E[Xr Xs − Xr2 |Fr ]

= E[Xr Xs |Fr ] − E[Xr2 |Fr ]

= X r E[Xs |Fr ] − Xr2

=0

•

E[Xr (Xt − Xs )|Fs ] = E[Xr Xt − Xr Xs |Fs ]

= E[Xr Xt |Fs ] − E[Xr Xs |Fs ]

= X r E[Xt |Fs ] − X r Xs

=0

•

E[(Xt − Xs )(Xs − Xr )|Fs ] = E[Xt Xs − Xs2 − Xt Xr + Xs Xr |Fs ]

= E[Xt Xs |Fs ] − E[Xs2 |Fs ] − E[Xt Xr |Fs ] + E[Xs Xr |Fs ]

= Xs E[Xt |Fs ] − Xs2 − Xr E[Xt |Fs ] + Xs Xr

= Xs2 − Xs2 − Xr Xs + Xs Xr

=0

•

E[Xr (Xs − Xr )] = E[E[Xr (Xs − Xr )|Fr ]]

= E[0]

=0

E[Xr (Xt − Xs )] = E[E[Xr (Xt − Xs )|Fs ]]

= E[0]

=0

E[(Xt − Xs )(Xs − Xr )] = E[E[(Xt − Xs )(Xs − Xr )|Fs ]]

= E[0]

=0

Definition: $L^n$ Bounded

A set of r.vs C is $L^n$ bounded if

$$\sup_{X\in C} E[|X|^n] < \infty$$

Theorem:

Every L2 bounded martingale $\{X_n\}_{n=1}^{\infty}$ w.r.t. $\{\mathcal{F}_n\}_{n=1}^{\infty}$ converges in L2

i.e. ∃Y ∈ L2 s.t. $\lim_{n\to\infty} E[|X_n - Y|^2] = 0$

Proof:

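L2 is complete, hence every Cauchy sequence converges, so it suffices to show that the martingale is Cauchy in L2. Let c be a constant with supn E[Xn² ] ≤ c.

$$
\begin{aligned}
\sum_{k=1}^{n-1} E[(X_{k+1}-X_k)^2] &= \sum_{k=1}^{n-1} E[X_{k+1}^2 + X_k^2 - 2X_kX_{k+1}] \\
&= E[(X_n - X_1)^2] && \text{by a telescoping sum, orthogonality of increments} \\
&\le 2c && \text{since } X_n \text{ is } L^2 \text{ bounded}
\end{aligned}
$$

this yields

$$
\begin{aligned}
\lim_{k\to\infty} \sup_{m\ge n\ge k} E[(X_m - X_n)^2] &= \lim_{k\to\infty} \sup_{m\ge n\ge k} \sum_{l=n}^{m-1} E[(X_{l+1}-X_l)^2] \\
&\le \lim_{k\to\infty} \sum_{l=k}^{\infty} E[(X_{l+1}-X_l)^2] \\
&= 0
\end{aligned}
$$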

Remark:

The previous theorem also gives us that Y ∈ L2 (Ω, F∞ , P)

where $\mathcal{F}_\infty := \sigma\left(\bigcup_{k=1}^{\infty} \mathcal{F}_k\right)$

Theorem: Levy

Let X ∈ L2 (Ω, F, P) and $\{\mathcal{F}_k\}_{k=1}^{\infty}$ a filtration

define Xn := E[X|Fn ]

then Xn → E[X|F∞ ] in L2

Proof:

We have that Xn converges in L2 so let Xn → Y

We want to show that E[Y 1A ] = E[X1A ]

∀A ∈ Fn

let A ∈ Fn and m ≥ n then notice that

E[Xm 1A ] = E[E[X|Fm ]1A ]

= E[X1A ]

since Xm → Y in L2 we have that

$$\lim_{m\to\infty} |E[X_m 1_A - Y 1_A]| \le \lim_{m\to\infty} \big(E[(X_m - Y)^2]\,E[1_A]\big)^{1/2} = 0$$

by the Cauchy-Schwarz inequality

hence indeed we have that E[X1A ] = E[Y 1A ] ∀A ∈ Fn

We need this ∀A ∈ F∞


define

D := {A ∈ F∞ : E[X1A ] = E[Y 1A ]}

We want to show that D is a Dynkin system.

Ω ∈ D holds trivially since Ω ∈ Fn ∀n

Let A ∈ D

E[Y 1Ac ] = E[Y 1Ω ] − E[Y 1A ]

= E[X1Ω ] − E[X1A ]

= E[X1Ac ]

as required.

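Suppose $\{A_i\}_{i=1}^{\infty}$ ⊂ D are disjoint and $A := \bigcup_{i=1}^{\infty} A_i$

$$
\begin{aligned}
E[Y 1_A] &= E\Big[Y \lim_{n\to\infty} \sum_{k=1}^{n} 1_{A_k}\Big] \\
&= \lim_{n\to\infty} E\Big[Y \sum_{k=1}^{n} 1_{A_k}\Big] && \text{by DCT} \\
&= \lim_{n\to\infty} \sum_{k=1}^{n} E[Y 1_{A_k}] \\
&= \lim_{n\to\infty} \sum_{k=1}^{n} E[X 1_{A_k}] \\
&= \lim_{n\to\infty} E\Big[X \sum_{k=1}^{n} 1_{A_k}\Big] \\
&= E\Big[X \lim_{n\to\infty} \sum_{k=1}^{n} 1_{A_k}\Big] \\
&= E[X 1_A]
\end{aligned}
$$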

Since D is a Dynkin system, if I ⊂ D is a π-system then σ(I) ⊂ D

$I := \bigcup_{n=1}^{\infty} \mathcal{F}_n$ ⊂ D is a π-system since

if $\{A_i\}_{i=1}^{n}$ ⊂ I then ∀i ∃ki s.t. Ai ∈ Fki

hence since finite ∃K = maxi {ki }

then since $\{\mathcal{F}_n\}_{n=1}^{\infty}$ is a filtration Ai ∈ FK ∀i

hence since FK is a σ-algebra $\bigcap_{i=1}^{n} A_i$ ∈ FK ⊂ I

thus F∞ = σ(I) ⊆ D ⊆ F∞

Lemma: DOOB

Let $\{\mathcal{F}_n\}_{n=1}^{\infty}$ be a filtration of (Ω, F, P) and $\{X_n\}_{n=1}^{\infty}$ ⊂ L2 an adapted sequence of $\{\mathcal{F}_n\}_{n=1}^{\infty}$ then

• ∃ $\{A_n\}_{n=1}^{\infty}$ ⊂ L1 and martingale $\{M_n\}_{n=1}^{\infty}$ ⊂ L1 with

– A1 = 0

– An+1 is Fn -measurable

– Xn = Mn + An

which is a P-a.s. unique decomposition

• For Xn = Mn + An above we have that

$\{X_n\}_{n=1}^{\infty}$ is a sub-martingale iff $\{A_n\}_{n=1}^{\infty}$ is increasing P-a.s.

Proof:

• If we construct the sequence recursively then the sequence will be P-a.s. unique

A1 = 0 =⇒ M1 = X1

Xn+1 = Mn+1 + An+1

E[Xn+1 |Fn ] = E[Mn+1 |Fn ] + E[An+1 |Fn ]

= Mn + An+1    since An+1 is Fn -measurable

so An+1 = E[Xn+1 |Fn ] − Mn , which is clearly Fn measurable.

E[Mn+1 |Fn ] = E[Xn+1 − An+1 |Fn ]

= E[Xn+1 |Fn ] − E[An+1 |Fn ]

= An+1 + Mn − An+1

= Mn

hence $\{M_n\}_{n=1}^{\infty}$ is a martingale.

•

An+1 − An = E[Xn+1 |Fn ] − Mn − An

= E[Xn+1 |Fn ] − Xn

which is positive iff Xn ≤ E[Xn+1 |Fn ]

i.e. {Xn }∞

n=1 is a sub-martingale.
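
The recursive construction in the proof can be carried out explicitly. The sketch below, under the assumption that Xn is the square of a simple symmetric random walk (a hypothetical example of a sub-martingale), computes A and M path by path using A1 = 0 and An+1 = An + E[Xn+1 |Fn ] − Xn .

```python
from fractions import Fraction
from itertools import product

N = 4  # hypothetical horizon
paths = list(product([-1, 1], repeat=N))

def X(path, n):
    """X_n = S_n^2, where S_n is the sum of the first n steps."""
    return sum(path[:n]) ** 2

def cond_exp_next(path, n):
    """E[X_{n+1} | F_n] evaluated on the given path (average over continuations)."""
    matching = [p for p in paths if p[:n] == path[:n]]
    return Fraction(sum(X(p, n + 1) for p in matching), len(matching))

def doob(path):
    """Return (M_1..M_N, A_1..A_N) from A_1 = 0 and A_{n+1} = A_n + E[X_{n+1}|F_n] - X_n."""
    A = [Fraction(0)]
    for n in range(1, N):
        A.append(A[-1] + cond_exp_next(path, n) - X(path, n))
    M = [X(path, n) - A[n - 1] for n in range(1, N + 1)]
    return M, A

M, A = doob((1, 1, 1, 1))
```

For this walk E[Xn+1 |Fn ] = Xn + 1, so the predictable part is An = n − 1 on every path, which is increasing as expected for a sub-martingale.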

Definition: Stopping Time

Let $\{\mathcal{F}_n\}_{n=1}^{\infty}$ be a filtration on (Ω, F, P) and let

T : Ω → N ∪ {∞} be a random variable

then T is a stopping time if

{T ≤ n} ∈ Fn ∀n

Lemma:

Let (Ω, F, P) be a probability space with stopping times T, S on filtration {Fn }∞

n=1

then T ∩ S := min(T, S), T ∪ S := max(T, S) are stopping times.

Proof:

Clearly T ∩ S, T ∪ S are r.vs

{T ∩ S ≤ n} = {T ≤ n} ∪ {S ≤ n}

{T ∪ S ≤ n} = {T ≤ n} ∩ {S ≤ n}

Since T, S are stopping times {T ≤ n}, {S ≤ n} ∈ Fn

hence {T ∩ S ≤ n}, {T ∪ S ≤ n} ∈ Fn

Lemma:

Let (Ω, F, P) be a probability space with stopping time T on filtration $\{\mathcal{F}_n\}_{n=1}^{\infty}$

then the set of events which are observable up to time T

FT := {A ∈ F : A ∩ {T ≤ n} ∈ Fn ∀n}

is a σ-algebra.

Lemma:

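Let (Ω, F, P) be a probability space with stopping time T on filtration $\{\mathcal{F}_n\}_{n=1}^{\infty}$

If $\{X_n\}_{n=1}^{\infty}$ is adapted to $\{\mathcal{F}_n\}_{n=1}^{\infty}$ then

$$X_T(\omega) := \begin{cases} X_{T(\omega)}(\omega) & T(\omega) < \infty \\ 0 & \text{otherwise} \end{cases}$$

is FT measurable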

Proof:

Let Xn : (Ω, F) → (Ω0 , F 0 )

and let A ∈ F 0

$$\{X_T \in A\} = \{0 \in A,\ T = \infty\} \cup \bigcup_{n=1}^{\infty} \big(\{X_n \in A\} \cap \{T = n\}\big) \in \mathcal{F}$$

hence XT is a random variable

Moreover

{XT ∈ A} ∩ {T = n} = {Xn ∈ A} ∩ {T = n} ∈ Fn

since Xn adapted and T is a stopping time

hence {XT ∈ A} ∈ FT

Lemma:

Let $\{X_n\}_{n=1}^{\infty}$ be a martingale on $\{\mathcal{F}_n\}_{n=1}^{\infty}$ and T a stopping time s.t. T ≤ m a.s.

then E[Xm |FT ] = XT P-a.s.

Proof:

XT is FT measurable

for k ∈ N|[1,m] and A ∈ FT

E[XT 1A 1T =k ] = E[Xk 1A∩{T =k} ]

= E[E[Xm |Fk ]1A∩{T =k} ]

= E[E[Xm 1A∩{T =k} |Fk ]]

= E[Xm 1A∩{T =k} ]

$$
\begin{aligned}
E[X_T 1_A] &= \sum_{k=1}^{m} E[X_T 1_A 1_{T=k}] \\
&= \sum_{k=1}^{m} E[X_m 1_A 1_{T=k}] \\
&= E[X_m 1_A]
\end{aligned}
$$

hence XT = E[Xm |FT ]

Lemma:

Let S ≤ T be stopping times on $\{\mathcal{F}_n\}_{n=1}^{\infty}$ then

FS ⊆ FT

Proposition: Optional Stopping

Let S ≤ T be two bounded stopping times on $\{\mathcal{F}_n\}_{n=1}^{\infty}$

and $\{X_n\}_{n=1}^{\infty}$ be a martingale on the same filtration.

then E[XT |FS ] = XS P-a.s.

Proof:

T ≤ m hence

E[XT |FS ] = E[E[Xm |FT ]|FS ]

= E[Xm |FS ]

= XS
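
A small exact computation illustrates the proposition. The sketch assumes a simple symmetric random walk, the bounded stopping time T = min(first n with Xn ≥ 2, N) and the constant stopping time S ≡ 1, so that FS is generated by the first step; all of these choices and names are hypothetical.

```python
from fractions import Fraction
from itertools import product

N = 6  # hypothetical horizon, so T <= N
paths = list(product([-1, 1], repeat=N))

def X(path, n):
    """X_n = sum of the first n steps (a martingale)."""
    return sum(path[:n])

def T(path):
    """First n with X_n >= 2, capped at N; {T <= n} depends only on the first n steps."""
    for n in range(1, N + 1):
        if X(path, n) >= 2:
            return n
    return N

def cond_exp_XT(first_step):
    """E[X_T | first step], i.e. E[X_T | F_S] for the constant stopping time S = 1."""
    matching = [p for p in paths if p[0] == first_step]
    return Fraction(sum(X(p, T(p)) for p in matching), len(matching))

mean_XT = Fraction(sum(X(p, T(p)) for p in paths), len(paths))
```

Since S ≤ T are both bounded, the proposition gives E[XT |FS ] = XS = X1 , so `cond_exp_XT(s)` should return `s` for each first step, and `mean_XT` should equal E[X1 ] = 0.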


Proposition:

Let S ≤ T be P-a.s. finite stopping times and $\{X_n\}_{n=1}^{\infty}$ a sub-martingale

then

E[XT |FS ] ≥ XS

Proposition:

Let S ≤ T be P-a.s. finite stopping times and $\{X_n\}_{n=1}^{\infty}$ a super-martingale

then

E[XT |FS ] ≤ XS

Theorem: First DOOB Inequality

Let $\{X_n\}_{n=1}^{\infty}$ be a sub-martingale, $\bar{X}_n := \max_{i\le n} X_i$ and a > 0 then

$$a\,P(\bar{X}_n \ge a) \le E[X_n 1_{\{\bar{X}_n \ge a\}}]$$

Proof:

Let T = inf {n ∈ N : Xn ≥ a}

which is a stopping time.

Then

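$$\{\bar{X}_n \ge a\} = \{T \le n\} = \{X_{T\cap n} \ge a\}$$

hence, using {T ≤ n} ∈ FT ∩n ,

$$
\begin{aligned}
a\,P(\bar{X}_n \ge a) &= a\,P(X_{T\cap n} \ge a) \\
&= a\,E[1_{\{T\le n\}}] \\
&= E[a 1_{\{T\le n\}}] \\
&\le E[X_{T\cap n} 1_{\{T\le n\}}] \\
&\le E\big[E[X_n|\mathcal{F}_{T\cap n}]\, 1_{\{T\le n\}}\big] \\
&= E\big[E[X_n 1_{\{T\le n\}}|\mathcal{F}_{T\cap n}]\big] \\
&= E[X_n 1_{\{T\le n\}}]
\end{aligned}
$$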
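
Both sides of the first Doob inequality can be computed exactly for a concrete sub-martingale. The sketch below assumes Xn = |Sn | for a simple symmetric random walk Sn (a non-negative sub-martingale); the horizon and the helper `doob_sides` are illustrative names only.

```python
from fractions import Fraction
from itertools import product

N = 6  # hypothetical horizon
paths = list(product([-1, 1], repeat=N))
p = Fraction(1, len(paths))  # probability of each path

def X(path, n):
    """X_n = |S_n|, a non-negative sub-martingale."""
    return abs(sum(path[:n]))

def doob_sides(a, n):
    """Return (a * P(max_{i<=n} X_i >= a), E[X_n 1{max_{i<=n} X_i >= a}])."""
    hit = [w for w in paths if max(X(w, i) for i in range(1, n + 1)) >= a]
    lhs = a * len(hit) * p
    rhs = sum(X(w, n) for w in hit) * p
    return lhs, rhs

lhs, rhs = doob_sides(2, 6)
```

The inequality asserts `lhs <= rhs` for every level a > 0 and every time n up to the horizon.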

Corollary:

If $\{X_n\}_{n=1}^{\infty}$ is a non-negative sub-martingale then ∀a ≥ 0, p ≥ 1

$$a^p\,P(\bar{X}_n \ge a) \le E[X_n^p]$$

Theorem: Second DOOB Inequality

If $\{X_n\}_{n=1}^{\infty}$ is a non-negative sub-martingale and p > 1 then

$$E[\bar{X}_n^p] \le \Big(\frac{p}{p-1}\Big)^p E[X_n^p]$$

Definition: Crosses

The sequence $\{X_n\}_{n=1}^{\infty}$ crosses the interval [a, b] if ∃i, j s.t. Xi < a < b < Xj

Lemma:

Let $\{X_n\}_{n=1}^{\infty}$ be a real sequence which crosses any rational interval only finitely often.

Then $\{X_n\}_{n=1}^{\infty}$ converges in R ∪ {±∞}

Proof:

Suppose liminfn Xn < limsupn Xn

then ∃a, b ∈ Q s.t. liminfn Xn < a < b < limsupn Xn

then $\{X_n\}_{n=1}^{\infty}$ crosses [a, b] infinitely often, a contradiction.

Definition: Up-Crossing

If $\{X_n\}_{n=1}^{\infty}$ is an adapted sequence of $\{\mathcal{F}_n\}_{n=1}^{\infty}$ and a < b then

define T0 := 0 and recursively

Sk := inf {n ≥ Tk−1 : Xn ≤ a}

Tk := inf {n ≥ Sk : Xn ≥ b}

Then $\{X_n\}_{n=S_k}^{T_k}$ is the kth up-crossing of [a, b]

Remark:

We write the number of up-crossings of [a, b] by time n as

$$U_n^{a,b} := \sum_{k=1}^{n} 1_{\{T_k \le n\}}$$
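
The recursive definition of the times Sk and Tk translates into a single pass over a finite path. The function below is a minimal sketch of that count; the name `upcrossings` and the sample path in the test are assumptions for illustration.

```python
def upcrossings(xs, a, b):
    """Number of completed up-crossings of [a, b] by the finite sequence xs (requires a < b)."""
    count = 0
    below = False  # True once the path has been <= a since the last completed crossing (time S_k reached)
    for x in xs:
        if not below and x <= a:
            below = True          # this index plays the role of S_k
        elif below and x >= b:
            count += 1            # this index plays the role of T_k
            below = False
    return count
```

For a path observed up to time n, `upcrossings(path[:n], a, b)` plays the role of the count of k with Tk ≤ n.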

Lemma:

Let $\{X_n\}_{n=1}^{\infty}$ be a martingale then

$$E[U_n^{a,b}] \le \frac{E[(X_n - a)_-]}{b-a}$$

Proof:

Let

$$Z := \sum_{k=1}^{\infty} (X_{T_k\cap n} - X_{S_k\cap n})$$

then

E[(XTk ∩n − XSk ∩n )] = E[XTk ∩n ] − E[XSk ∩n ]

= E[E[XTk ∩n |FSk ∩n ]] − E[XSk ∩n ]

= E[XSk ∩n ] − E[XSk ∩n ]

=0

E[Z] = 0

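Suppose $U_n^{a,b} = m$ then

$$Z \ge m(b-a) + (X_n - X_{S_{m+1}\cap n})$$

since X completes m up-crossings of [a, b] and there could be one uncompleted crossing.

If Sm+1 ≤ n then XSm+1 ≤ a, otherwise the last term is 0, so in both cases $X_n - X_{S_{m+1}\cap n} \ge -(X_n - a)_-$ hence

$$Z \ge \sum_{m=0}^{\infty} 1_{\{U_n^{a,b}=m\}} \big(m(b-a) - (X_n - a)_-\big)$$

$$
\begin{aligned}
0 = E[Z] &\ge \sum_{m=0}^{\infty} P(U_n^{a,b} = m)\,m(b-a) - E[(X_n - a)_-] \\
&= E[U_n^{a,b}](b-a) - E[(X_n - a)_-]
\end{aligned}
$$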

hence we have the required result.

Theorem: Martingale Convergence Theorem

Let $\{X_n\}_{n=1}^{\infty}$ be a martingale s.t. supn E[(Xn )− ] < ∞

then X∞ := limn→∞ Xn exists P-a.s.

moreover X∞ is F∞ measurable and X∞ ∈ L1

Proof:

By the previous lemma

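$$E[U_n^{a,b}] \le \frac{E[(X_n - a)_-]}{b-a} \le \frac{\sup_n E[(X_n)_-] + |a|}{b-a} < \infty$$

by monotone convergence of expectations we have, for $U_\infty^{a,b} := \lim_{n\to\infty} U_n^{a,b}$,

$$E[U_\infty^{a,b}] < \infty$$

but $U_\infty^{a,b} \ge 0$ hence $U_\infty^{a,b} < \infty$ P-a.s.

Since a, b ∈ Q there are only a countable number of intervals hence

∃N ∈ F s.t. P(N ) = 0

and ∀a < b ∈ Q, ∀ω ∈ Ω \ N

we have that $U_\infty^{a,b}(\omega) < \infty$

moreover limn→∞ Xn (ω) exists in R ∪ {±∞} ∀ω ∈ Ω \ N

Y := limn→∞ Xn is F∞ measurable since $\{X_n\}_{n=1}^{\infty}$ is adapted to $\{\mathcal{F}_n\}_{n=1}^{\infty}$

$$
\begin{aligned}
E[Y_-] &= E[\liminf (X_n)_-] \\
&\le \liminf E[(X_n)_-] && \text{by Fatou's lemma} \\
&\le \sup_n E[(X_n)_-] < \infty
\end{aligned}
$$

$$
\begin{aligned}
E[Y_+] &= E[\liminf (X_n)_+] \\
&\le \liminf E[(X_n)_+] && \text{by Fatou's lemma} \\
&= \liminf \big(E[X_n] + E[(X_n)_-]\big) \\
&\le E[X_1] + \sup_n E[(X_n)_-] < \infty && \text{since } E[X_n] = E[X_1] \text{ for a martingale}
\end{aligned}
$$

hence E[|Y |] < ∞ therefore Y ∈ L1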

Remark:

• The upcrossing lemma and martingale convergence theorem remain true for super martingales

• In general we do not have L1 convergence

Theorem: Convergence For Uniformly Integrable Martingales

Suppose that $\{X_n\}_{n=1}^{\infty}$ is a uniformly integrable super-martingale.

Then Xn converges P-a.s. and in L1 (Ω, F, P) to some r.v. X∞ ∈ L1 (Ω, F∞ , P)

and E[X∞ |Fn ] ≤ Xn

Proof:

For a super-martingale $\{X_n\}_{n=1}^{\infty}$ by uniform integrability

$$\lim_{k\to\infty} \sup_n \int_{\{|X_n|>k\}} |X_n|\,dP = 0$$

so for k large enough

$$
\begin{aligned}
\int (X_n)_-\,dP &\le \int |X_n|\,dP \\
&= \int_{\{|X_n|>k\}} |X_n|\,dP + \int_{\{|X_n|\le k\}} |X_n|\,dP \\
&\le \int_{\{|X_n|>k\}} |X_n|\,dP + k \int_{\{|X_n|\le k\}} dP \\
&\le \sup_n \int_{\{|X_n|>k\}} |X_n|\,dP + k\,P(|X_n| \le k) \\
&< \infty
\end{aligned}
$$

uniformly in n. Then by the martingale convergence theorem limn→∞ Xn = X∞ P-a.s.

and since a.s. convergence and uniform integrability ensure L1 convergence we have convergence in L1 (Ω, F, P)

i.e. limn→∞ ||X∞ − Xn ||1 = 0

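Moreover for fixed m and n ≥ m

||E[X∞ |Fm ] − E[Xn |Fm ]||1 = ||E[X∞ − Xn |Fm ]||1

≤ ||X∞ − Xn ||1

hence

$$\lim_{n\to\infty} \|E[X_\infty|\mathcal{F}_m] - E[X_n|\mathcal{F}_m]\|_1 = 0$$

and since E[Xn |Fm ] ≤ Xm for n ≥ m we get E[X∞ |Fm ] ≤ Xm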

Corollary:

Suppose that $\{X_n\}_{n=1}^{\infty}$ is a uniformly integrable sub-martingale.

Then Xn converges P-a.s. and in L1 (Ω, F, P) to some r.v. X∞ ∈ L1 (Ω, F∞ , P)

and E[X∞ |Fn ] ≥ Xn

Corollary:

Suppose that $\{X_n\}_{n=1}^{\infty}$ is a uniformly integrable martingale.

Then Xn converges P-a.s. and in L1 (Ω, F, P) to some r.v. X∞ ∈ L1 (Ω, F∞ , P)

and E[X∞ |Fn ] = Xn

Theorem: Backwards Martingale Theorem

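Let (Ω, F, P) be a probability space and {G−n : n ∈ N} sub-σ-algebras of F s.t.

$$\mathcal{G}_{-\infty} := \bigcap_{k\in\mathbb{N}} \mathcal{G}_{-k} \subseteq \mathcal{G}_{-(n+1)} \subseteq \mathcal{G}_{-n} \subseteq \mathcal{G}_{-1}$$

For X ∈ L1 (Ω, F, P) define

X−n := E[X|G−n ]

then limn→∞ X−n exists P-a.s. and in L1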

Theorem: Strong Law Of Large Numbers

Let $\{X_i\}_{i=1}^{\infty}$ be i.i.d r.vs with E[|Xi |] < ∞, µ = E[Xi ] and $S_n = \sum_{i=1}^{n} X_i$

then Sn /n → µ P-a.s.

Proof:

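Define $\mathcal{G}_{-n} := \sigma(\{S_i\}_{i=n}^{\infty})$ and $\mathcal{G}_{-\infty} = \bigcap_{n=1}^{\infty} \mathcal{G}_{-n}$

then E[X1 |G−n ] = E[X1 |Sn ] = Sn /n

since $\mathcal{G}_{-n} = \sigma(S_n, \{X_i\}_{i=n+1}^{\infty})$ and independence of $\{X_i\}_{i=1}^{\infty}$

hence by the backwards martingale theorem

$$L := \lim_{n\to\infty} \frac{S_n}{n}$$

exists P-a.s. and in L1

moreover ∀k

$$L = \limsup_n \frac{\sum_{i=1}^{n} X_i}{n} = \limsup_n \frac{\sum_{i=k}^{n+k} X_i}{n}$$

is measurable w.r.t. $\tau_k = \sigma(\{X_i\}_{i=k}^{\infty})$

hence is measurable w.r.t. $\tau = \bigcap_{k=1}^{\infty} \tau_k$

hence by Kolmogorov's zero-one law ∃c s.t. P(L = c) = 1

moreover µ = E[Sn /n] and limn→∞ E[Sn /n] = E[L] = c

hence indeed µ must be the limit.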
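
A simulation cannot prove almost sure convergence, but it can illustrate the statement along one sample path. The sketch below assumes i.i.d. Uniform(0, 1) variables (so µ = 1/2) and a fixed seed; the function name and the sample sizes are hypothetical choices.

```python
import random

def running_mean(n, seed=0):
    """S_n / n for n i.i.d. Uniform(0, 1) draws (mu = 1/2) from a seeded generator."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        total += rng.random()
    return total / n

# Sample means along one simulated path at increasing n.
means = [running_mean(n) for n in (100, 10_000, 100_000)]
```

Because the generator is reseeded identically on each call, the three values are sample means of nested prefixes of the same path, and the later entries are expected to sit closer to 1/2.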

Theorem: Central Limit Theorem

Let $\{X_k\}_{k=1}^{\infty}$ ⊂ L2 be i.i.d random variables

denote µ := E[Xi ], ν := V ar[Xi ]

then

$$S_n := \sum_{k=1}^{n} \frac{X_k - \mu}{\sqrt{\nu n}} \to N(0,1) \quad \text{weakly}$$

Proof:

WLOG let µ = 0, ν = 1 since otherwise we can use the transformation Xk = µ + √ν Zk where Zk has mean 0 and variance 1, then

$$\sum_{k=1}^{n} \frac{X_k - \mu}{\sqrt{\nu n}} = \sum_{k=1}^{n} \frac{Z_k}{\sqrt{n}}$$

hence indeed it suffices to show for µ = 0, ν = 1

We need to show that ∀f ∈ Cb (R)

$$\lim_{n\to\infty} E[f(S_n)] = \int f(x)\,\frac{e^{-x^2/2}}{\sqrt{2\pi}}\,dx$$

however any f ∈ Cb (R) can be approximated by some function in Cb (R) which has bounded and uniformly continuous first and second derivatives, so we assume that f itself has this property.

Let $\{Y_k\}_{k=1}^{\infty}$ be i.i.d. N (0, 1) and independent of $\{X_k\}_{k=1}^{\infty}$

then it suffices to show that for

$$T_n := \sum_{k=1}^{n} \frac{Y_k}{\sqrt{n}}$$

we have that

$$\lim_{n\to\infty} E[f(S_n) - f(T_n)] = 0$$

denote $X_{k,n} = X_k/\sqrt{n}$, $Y_{k,n} = Y_k/\sqrt{n}$

and define

$$W_{k,n} = \sum_{j=1}^{k-1} Y_{j,n} + \sum_{j=k+1}^{n} X_{j,n}$$

Then

$$f(S_n) - f(T_n) = \sum_{k=1}^{n} \big(f(W_{k,n} + X_{k,n}) - f(W_{k,n} + Y_{k,n})\big)$$

by a telescoping sum, hence by Taylor's theorem we have

$$f(W_{k,n} + X_{k,n}) = f(W_{k,n}) + f'(W_{k,n})X_{k,n} + \tfrac{1}{2} f''(W_{k,n})X_{k,n}^2 + R_{X_{k,n}}$$

$$f(W_{k,n} + Y_{k,n}) = f(W_{k,n}) + f'(W_{k,n})Y_{k,n} + \tfrac{1}{2} f''(W_{k,n})Y_{k,n}^2 + R_{Y_{k,n}}$$

$$f(W_{k,n} + X_{k,n}) - f(W_{k,n} + Y_{k,n}) = f'(W_{k,n})(X_{k,n} - Y_{k,n}) + \tfrac{1}{2} f''(W_{k,n})(X_{k,n}^2 - Y_{k,n}^2) + R_{X_{k,n}} - R_{Y_{k,n}}$$

$$\big|E[f(W_{k,n} + X_{k,n}) - f(W_{k,n} + Y_{k,n})]\big| \le \big|E[f'(W_{k,n})(X_{k,n} - Y_{k,n})]\big| + \big|E[f''(W_{k,n})(X_{k,n}^2 - Y_{k,n}^2)/2]\big| + E[|R_{X_{k,n}}| + |R_{Y_{k,n}}|]$$

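also from Taylor's theorem we have

$$|R_{X_{k,n}}| \le X_{k,n}^2 \|f''\|_\infty$$

so by uniform continuity we have that

∀ε > 0 ∃δ > 0 s.t.

$$|R_{X_{k,n}}| \le X_{k,n}^2\,\varepsilon \quad \forall |X_{k,n}| < \delta$$

thus

$$|R_{X_{k,n}}| \le X_{k,n}^2\,\varepsilon\,1_{\{|X_{k,n}|<\delta\}} + X_{k,n}^2 \|f''\|_\infty 1_{\{|X_{k,n}|\ge\delta\}}$$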


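moreover

$$
\begin{aligned}
E\Big[\sum_{k=1}^{n} |R_{X_{k,n}}| + |R_{Y_{k,n}}|\Big]
&\le \sum_{k=1}^{n} E\big[X_{k,n}^2(\varepsilon 1_{\{|X_{k,n}|<\delta\}} + \|f''\|_\infty 1_{\{|X_{k,n}|\ge\delta\}}) + Y_{k,n}^2(\varepsilon 1_{\{|Y_{k,n}|<\delta\}} + \|f''\|_\infty 1_{\{|Y_{k,n}|\ge\delta\}})\big] \\
&= \frac{1}{n}\sum_{k=1}^{n} E\big[X_k^2(\varepsilon 1_{\{|X_{k,n}|<\delta\}} + \|f''\|_\infty 1_{\{|X_{k,n}|\ge\delta\}}) + Y_k^2(\varepsilon 1_{\{|Y_{k,n}|<\delta\}} + \|f''\|_\infty 1_{\{|Y_{k,n}|\ge\delta\}})\big] \\
&\le 2\varepsilon E[X_1^2] + \frac{1}{n}\Big(\sum_{k=1}^{n} E[X_k^2 \|f''\|_\infty 1_{\{|X_{k,n}|\ge\delta\}}] + \sum_{k=1}^{n} E[Y_k^2 \|f''\|_\infty 1_{\{|Y_{k,n}|\ge\delta\}}]\Big) \\
&= 2\varepsilon E[X_1^2] + \|f''\|_\infty \big(E[X_1^2 1_{\{|X_1|\ge\delta\sqrt{n}\}}] + E[Y_1^2 1_{\{|Y_1|\ge\delta\sqrt{n}\}}]\big) \\
&\to_{n\to\infty} 2\varepsilon E[X_1^2]
\end{aligned}
$$

by the dominated convergence theorem, and since ε > 0 was arbitrary the limit is 0.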

It remains to show that the first two terms are null

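$$E[f'(W_{k,n})X_{k,n}] = E[f'(W_{k,n})]\,E[X_{k,n}] = 0 \quad \text{by independence}$$

$$E[f'(W_{k,n})Y_{k,n}] = E[f'(W_{k,n})]\,E[Y_{k,n}] = 0 \quad \text{by independence}$$

$$
\begin{aligned}
E[f''(W_{k,n})(X_{k,n}^2 - Y_{k,n}^2)] &= E[f''(W_{k,n})]\,E[X_{k,n}^2 - Y_{k,n}^2] \\
&= E[f''(W_{k,n})]\Big(\frac{1}{n} - \frac{1}{n}\Big) \\
&= 0
\end{aligned}
$$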

hence indeed the theorem holds.
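
As a numerical illustration of the theorem (not of the proof technique), one can compare the empirical distribution function of the standardised sum with the standard normal one. The sketch assumes i.i.d. Uniform(0, 1) summands (µ = 1/2, ν = 1/12), a fixed seed and hypothetical sample sizes; the normal distribution function is written via `math.erf`.

```python
import math
import random

def standardized_sum(n, rng):
    """S_n = sum_k (X_k - mu) / sqrt(nu * n) for n i.i.d. Uniform(0, 1) draws."""
    mu, var = 0.5, 1.0 / 12.0
    return sum((rng.random() - mu) / math.sqrt(var * n) for _ in range(n))

def empirical_cdf_at(x, n=30, samples=20000, seed=0):
    """Fraction of simulated S_n values that are <= x."""
    rng = random.Random(seed)
    return sum(standardized_sum(n, rng) <= x for _ in range(samples)) / samples

def normal_cdf(x):
    """Distribution function of N(0, 1)."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

gap = abs(empirical_cdf_at(1.0) - normal_cdf(1.0))
```

Weak convergence to N (0, 1) implies convergence of the distribution functions at every point, so `gap` is expected to be small, up to Monte Carlo error.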
