6 Uninformative Mathematical Economic Models

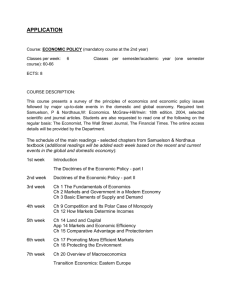
