MEMS and Caching for File Systems Andy Wang COP 5611

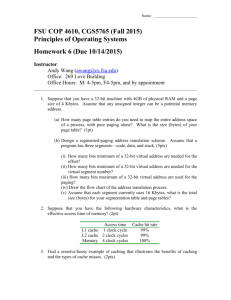

MEMS and Caching for File Systems
Andy Wang
COP 5611 Advanced Operating Systems

MEMS
- MicroElectroMechanical Systems
- 10 Gbytes of data in the size of a penny
- 100 MB – 1 GB/sec bandwidth
- Access times 10x faster than today’s drives
- ~100x less power than low-power HDs
- Integrate storage, RAM, and processing on the same die
  - The drive is the computer
- Cost less than $10
- [CMU PDL Lab]

MEMS-Based Storage
- [Figure labels: actuators, read/write tips, magnetic media]

MEMS-Based Storage (side view)
- [Figure labels: media, read/write tips]
- Bits stored underneath each tip

MEMS-Based Storage
- [Figure: media sled, with X and Y axes]

MEMS-Based Storage
- [Figure: four springs around the sled]

MEMS-Based Storage
- [Figure: four anchors]
- Anchors attach the springs to the chip

MEMS-Based Storage
- Sled is free to move

MEMS-Based Storage
- Springs pull sled toward center

MEMS-Based Storage
- [Figure: four actuators]
- Actuators pull sled in both dimensions

MEMS-Based Storage
- [Figure: probe tips]
- Probe tips are fixed

MEMS-Based Storage
- One probe tip per square
- Each tip accesses data at the same relative position
- Sled only moves over the area of a single square

MEMS-Based Management
- Similar to disk-based scheme
- Dominated by transfer time
- Challenges:
  - Broken tips
  - Slow erase cycle
    - ~seconds/block
- [Figure labels: a cylinder group, track]

Caching for File Systems
- Conventional role of caching
  - Performance improvement
  - Assumptions:
    - Locality
    - Scarcity of RAM
- Shifting role of caching
  - Shaping disk access patterns
  - Assumptions:
    - Locality
    - Abundance of RAM

Performance Improvement
- Essentially all file systems rely on caching to achieve acceptable performance
- Goal is to make the FS run at
  memory speed, even though most of the data is on disk

Issues in I/O Buffer Caching
- Cache size
- Cache replacement policy
- Cache write handling
- Cache-to-process data handling

Cache Size
- The bigger, the fewer the cache misses
- More data to keep in sync with disk

What if…
- RAM size = disk size?
- What are some implications in terms of disk layouts?
  - Memory dump?
  - LFS layout?

What if…
- RAM is big enough to cache all hot files
- What are some implications in terms of disk layouts?
  - Optimized for the remaining files

Cache Replacement Policy
- LRU works fairly well
  - Can use a “stack of pointers” to keep track of LRU info cheaply
  - Need to watch out for cache pollution
- LFU doesn’t work well because a block may get lots of hits, then not be used
  - So, it takes a long time to get it out

What is the optimal policy?
- MIN: replace the page that will not be used for the longest time

Hmm…
- What if your goal is to save power?
- Option 1: MIN replacement
  - RAM will cache the hottest data items
  - Disks will achieve maximum idleness

Hmm…
- What if you have multiple disks?
  - And access patterns are skewed
- [Figure: access patterns]

Better Off Caching Cold Disks
- Spin down cold disks
- [Figure: access patterns]

Handling Writes to Cached Blocks
- Write-through cache: updates propagate through the various levels of caches immediately
- Write-back cache: updates are delayed to amortize the cost of propagation

What if…
- Multiple levels of caching with different speeds and sizes?
- What are some tricky performance behaviors?

History’s Mystery
- Puzzling Conquest microbenchmark numbers…
- Geoff Kuenning: “If Conquest is slower than ext2fs, I will toss you off of the balcony…”

With me hanging off a balcony…
- Original large-file microbenchmark: one 1-MB file (Conquest in-core file)
- [Figure: bar chart of throughput in MB/sec (0 to 700) for seq write, seq read, rand write, rand read, and a second seq read; file systems: SGI XFS, reiserfs, ext2fs, ramfs, Conquest]

Odd Microbenchmark Numbers
- Why are random reads slower than sequential reads?
- [Figure: bar chart of throughput in MB/sec (0 to 700) for seq write, seq read, rand write, rand read, and a second seq read; file systems: SGI XFS, reiserfs, ext2fs, ramfs, Conquest]

Odd Microbenchmark Numbers
- Why are RAM-based FSes slower than disk-based FSes?
- [Figure: bar chart of throughput in MB/sec (0 to 700), same axes and file systems]

A Series of Hypotheses
- Warm-up effect?
  - Bad initial states? Maybe
  - Why do RAM-based systems warm up slower? No
- Pentium III streaming I/O option? No

Effects of L2 Cache Footprints
- [Figure: two panels, large L2 cache footprint vs. small L2 cache footprint; each shows the footprint while writing a file sequentially to the file end and then reading the same file sequentially; labels: footprint, file end, read file, flush]

LFS Sprite Microbenchmarks
- Modified large-file microbenchmark: ten 1-MB files (in-core files)
- [Figure: bar chart of throughput in MB/sec (0 to 700) for seq write, seq read, rand write, rand read, and a second seq read; file systems: SGI XFS, reiserfs, ext2fs, ramfs, Conquest]

What if…
- Multiple levels of caching with similar characteristics? (via network)

A Cache Miss
- Multiple levels of caching with similar characteristics? (via network)
- Why cache the same data twice?

What if…
- A network of caches?

Cache-to-Process Data Handling
- Data in buffer is destined for a user process (or came from one, on writes)
- But buffers are in system space
- How to get the data to the user space?
  - Copy it
  - Virtual memory techniques
  - Use DMA in the first place
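
Looking back at the Cache Replacement Policy slides, a small simulation can make the gap between LRU and the optimal MIN policy concrete. The sketch below is only an illustration, not code from the lecture: the function names `lru_misses` and `min_misses`, the toy trace, and the cache capacity are all assumptions. MIN needs the whole future trace, so it serves as an offline yardstick rather than something a real file system can run.

```python
from collections import OrderedDict


def lru_misses(trace, capacity):
    """Count misses for an LRU cache holding `capacity` blocks."""
    cache = OrderedDict()  # keys ordered from least to most recently used
    misses = 0
    for block in trace:
        if block in cache:
            cache.move_to_end(block)  # hit: mark as most recently used
        else:
            misses += 1
            if len(cache) >= capacity:
                cache.popitem(last=False)  # evict the least recently used
            cache[block] = True
    return misses


def min_misses(trace, capacity):
    """Count misses for MIN: evict the block whose next use is farthest away."""
    cache = set()
    misses = 0
    for i, block in enumerate(trace):
        if block in cache:
            continue  # hit: MIN keeps no recency state
        misses += 1
        if len(cache) >= capacity:
            future = trace[i + 1:]

            def next_use(b):
                # Blocks never referenced again are the best victims.
                return future.index(b) if b in future else float("inf")

            cache.remove(max(cache, key=next_use))
        cache.add(block)
    return misses


if __name__ == "__main__":
    # Hypothetical trace: a hot pair (1, 2) interleaved with one-time blocks.
    trace = [1, 2, 3, 1, 2, 4, 1, 2, 5, 1, 2, 6]
    print("LRU misses:", lru_misses(trace, 2))
    print("MIN misses:", min_misses(trace, 2))
```

With a two-block cache, the one-time blocks in this trace keep pushing the hot pair out of LRU, so LRU misses on all 12 references, while MIN keeps block 1 resident and misses 9 times. This is a small instance of the cache pollution the slides warn about.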