Distributed File Systems Andy Wang Operating Systems COP 4610 / CGS 5765


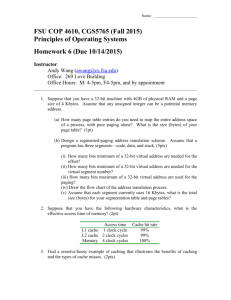

Distributed File System
* Provides transparent access to files stored on a remote disk
* Recurrent themes of design issues
  * Failure handling
  * Performance optimizations
  * Cache consistency

No Client Caching
* Use RPC to forward every file system request to the remote server
  * open, seek, read, write
* [Diagram: the server caches X; Client A issues a read and Client B a write; both client caches are empty]
+ Server always has a consistent view of the file system
- Poor performance
- Server is a single point of failure

Network File System (NFS)
* Uses client caching to reduce network load
* Built on top of RPC
* [Diagram: the server, Client A, and Client B each cache X]
+ Performance better than no caching
- Has to handle failures
- Has to handle consistency

Failure Modes
* If the server crashes
  * Uncommitted data in memory are lost
  * Current file positions may be lost
  * The client may ask the server to perform unacknowledged operations again
* If a client crashes
  * Modified data in the client cache may be lost

NFS Failure Handling
1. Write-through caching
2. Stateless protocol: the server keeps no state about the client
   * A single read is equivalent to open, seek, read, close
   * No server recovery after a failure
3. Idempotent operations: repeated operations get the same result
   * No static variables
4. Transparent failures to clients
   * Two options
     * The client waits until the server comes back
     * The client can return an error to the user application
       * Do you check the return value of close?
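
To illustrate why the stateless, idempotent design above tolerates retried requests, here is a minimal Python sketch. It is not NFS code: `StatelessServer`, `StatefulServer`, `write_at`, and `read_at` are hypothetical names for an in-memory toy, assumed only for illustration. Each stateless request carries the file name and offset, so repeating it after a lost acknowledgment leaves the same result; the stateful variant keeps a server-side file position, so a retry changes the outcome.

```python
class StatelessServer:
    """Hypothetical toy server: every request names the file and the offset."""

    def __init__(self):
        self.files = {}  # path -> bytearray holding the file contents

    def write_at(self, path, offset, data):
        buf = self.files.setdefault(path, bytearray())
        if len(buf) < offset:
            buf.extend(b"\0" * (offset - len(buf)))  # pad any gap with zeros
        buf[offset:offset + len(data)] = data  # same bytes land in the same place
        return len(data)

    def read_at(self, path, offset, length):
        return bytes(self.files.get(path, b"")[offset:offset + length])


class StatefulServer:
    """Contrast case: the server remembers the current file position."""

    def __init__(self):
        self.data = bytearray()
        self.position = 0  # server-side state that a retry silently advances

    def write(self, data):
        self.data[self.position:self.position + len(data)] = data
        self.position += len(data)
        return len(data)


# The client sends the same request twice because the first reply was lost.
stateless = StatelessServer()
for _ in range(2):
    stateless.write_at("/f", 0, b"hello")

stateful = StatefulServer()
for _ in range(2):
    stateful.write(b"hello")

print(stateless.read_at("/f", 0, 10))  # contents unchanged by the retry
print(bytes(stateful.data))            # the retry wrote the data a second time
```

The stateless server also has nothing to rebuild after a crash, which matches the "no server recovery" point on the slide.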

NFS Weak Consistency Protocol
* A write updates the server immediately
* Other clients poll the server periodically for changes
* No guarantees for multiple writers

NFS Summary
+ Simple and highly portable
- May become inconsistent sometimes
  * Does not happen very often

Andrew File System (AFS)
* Developed at CMU
* Design principles
  * Files are cached on each client’s disks
    * NFS caches only in clients’ memory
  * Callbacks: the server records who has a copy of each file
  * Write-back cache on file close: the server then notifies all clients that hold an old copy
  * Session semantics: updates are only visible on close

AFS Illustrated
[Animation frames; the marks that distinguished old and new versions of X did not survive extraction. The recoverable sequence:]
1. Initially only the server caches X.
2. Client A reads X: the server adds client A to the callback list of X, and client A caches X.
3. Client B reads X: the server adds client B to the callback list of X, and client B caches X.
4. Client A writes X in its own cache.
5. Client A closes X, and the server cache is updated.
6. Client B closes X.
7. Client B opens X.

AFS Failure Handling
* If the server crashes, it asks all clients to reconstruct the callback states

AFS vs. NFS
* AFS
  * Less server load due to clients’ disk caches
  * Server is not involved for read-only files
* Both AFS and NFS
  * Server is a performance bottleneck
  * Single point of failure

Serverless Network File Service (xFS)
* Idea: construct a file system as a parallel program and exploit the high-speed LAN
* Four major pieces
  * Cooperative caching
  * Write-ownership cache coherence
  * Software RAID
  * Distributed control

Cooperative Caching
* Uses remote memory to avoid going to disk
  * On a cache miss, check the local memory and remote memory before checking the disk
  * Before discarding the last cached memory copy, send the content to remote memory if possible
* [Animation frames: Client A caches X; Client C issues read X and ends up with its own copy of X. One frame also shows X at Client B, whose role is unclear from the extracted text.]

Write-Ownership Cache Coherence
* Declares a client to be an owner of the file at writes
  * No one else can have a copy
* [Animation frames, as far as the extracted labels allow:]
  1. Client A caches X as owner, read-write.
  2. Another client issues read X; client A’s copy becomes read-only and the reader receives a read-only copy.
  3. A reader issues write X; client A’s copy is dropped and the writer (Client C in the final frame) holds X as owner, read-write.

Other components
* Software RAID
  * Stripe data redundantly over multiple disks
* Distributed control
  * File system managers are spread across all machines

xFS Summary
* Built on small, unreliable components
* Data, metadata, and control can live on any machine
* If one machine goes down, everything else continues to work
* When machines are added, xFS starts to use their resources
- Complexity and associated performance degradation
- Hard to upgrade software while keeping everything running
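
The write-ownership rule from the xFS slides (a writer becomes the sole owner and no one else may keep a copy) can be sketched as a small bookkeeping class. This is an illustrative Python toy, not xFS code: `OwnershipManager` and its methods are hypothetical names, and downgrading the owner to read-only when another client reads is an assumption drawn from the animation frames, not from a stated protocol.

```python
class OwnershipManager:
    """Hypothetical tracker of which clients hold a file, and in which mode."""

    def __init__(self):
        self.owner = {}    # file name -> client holding it read-write
        self.readers = {}  # file name -> set of clients with read-only copies

    def read(self, client, name):
        if self.owner.get(name) == client:
            return  # the owner may read its own copy without any change
        readers = self.readers.setdefault(name, set())
        previous_owner = self.owner.pop(name, None)
        if previous_owner is not None:
            readers.add(previous_owner)  # assumed: owner is downgraded to read-only
        readers.add(client)

    def write(self, client, name):
        self.readers[name] = set()  # invalidate every other cached copy
        self.owner[name] = client   # the writer becomes the sole owner

    def holders(self, name):
        modes = {c: "read-only" for c in self.readers.get(name, set())}
        if name in self.owner:
            modes[self.owner[name]] = "owner, read-write"
        return modes


manager = OwnershipManager()
manager.write("A", "X")
print(manager.holders("X"))  # A is the owner
manager.read("C", "X")
print(manager.holders("X"))  # A and C both hold read-only copies
manager.write("C", "X")
print(manager.holders("X"))  # only C remains, as owner
```

A real system would also move the data and handle a manager crash; the sketch tracks only who may hold a copy.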