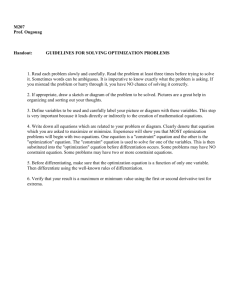

Reliability-Based Optimization Using Evolutionary Algorithms