Matthew: Effect or Fable? Working Paper 12-049

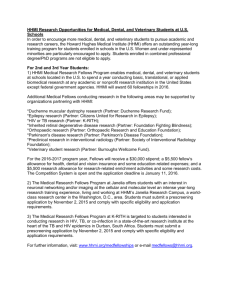
