Penn Institute for Economic Research

Department of Economics

University of Pennsylvania

3718 Locust Walk

Philadelphia, PA 19104-6297

pier@econ.upenn.edu

http://www.econ.upenn.edu/pier

PIER Working Paper 08-006

“Bootstrap Tests of Stochastic Dominance with Asymptotic

Similarity on the Boundary”

by

Oliver Linton, Kyungchul Song and Yoon-Jae Whang

http://ssrn.com/abstract=1097564

Bootstrap Tests of Stochastic Dominance with Asymptotic

Similarity on the Boundary

Oliver Linton1 , Kyungchul Song2 and Yoon-Jae Whang3

London School of Economics, University of Pennsylvania and Seoul National University

February 19, 2008

Abstract

We propose a new method of testing stochastic dominance which improves on

existing tests based on bootstrap or subsampling. Our test requires estimation of

the contact sets between the marginal distributions. Our tests have asymptotic

sizes that are exactly equal to the nominal level uniformly over the boundary

points of the null hypothesis and are therefore valid over the whole null hypothesis. We also allow the prospects to be indexed by infinite as well as finite

dimensional unknown parameters, so that the variables may be residuals from

nonparametric and semiparametric models. Our simulation results show that

our tests are indeed more powerful than the existing subsampling and recentered

bootstrap.

Key words and Phrases: Set estimation; Size of test; Unbiasedness; Similarity;

Bootstrap; Subsampling.

JEL Classifications: C12, C14, C52.

1 Introduction

There has been a growth of interest in testing stochastic dominance relations among variables such as investment strategies and income distributions. The ordering by stochastic dominance covers a large class of utility functions and hence is more useful in general than the notions of partial orderings that are specific to a certain class of utility functions. However, the main difficulty in testing stochastic dominance lies in the complexity that arises in computing approximate critical values of the test. A stochastic dominance relation is a weak inequality relation between distribution functions or integral transforms of distribution functions. Unlike nonparametric or semiparametric tests that are based on the equality of functions, the convergence of test statistics of Kolmogorov-Smirnov type or Cramér-von Mises type is not uniform over the probabilities under the null hypothesis. Discontinuity of convergence arises precisely between the "interior points" of the null hypothesis and the "boundary points" of the null hypothesis, where boundary points indicate those probabilities under which all pairs of the distribution functions meet at at least one point in the interior of the support and interior points indicate probabilities under which at least one pair of the distribution functions do not meet at any point in the interior of the support. In general, the boundary points do not coincide with the least favorable subset of the null hypothesis, i.e., the set of probabilities under which all the pairs of competing distribution functions are equal. As noted by Linton, Maasoumi, and Whang (2005), henceforth LMW, the usual approach of recentered bootstrap or wild bootstrap does not provide tests with asymptotically valid sizes uniformly over the probabilities under the null hypothesis; the asymptotic sizes of the tests are exact only on the least favorable points and invalid for other points under the null hypothesis. LMW proposed a subsampling method to obtain tests with exact asymptotic sizes over the boundary points and asymptotically valid for each probability under the null hypothesis.

1 Department of Economics, London School of Economics, Houghton Street, London WC2A 2AE, United Kingdom. E-mail address: o.linton@lse.ac.uk. Thanks to the ESRC and Leverhulme foundations for financial support.

2 Department of Economics, University of Pennsylvania, 528 McNeil Building, 3718 Locust Walk, Philadelphia, Pennsylvania 19104-6297. Email: kysong@sas.upenn.edu

3 Department of Economics, Seoul National University, Seoul 151-742, Korea. E-mail address: whang@snu.ac.kr. Thanks to the Korea Research Foundation for financial support.

This paper proposes a bootstrap-based test that has asymptotically valid size uniformly

under the null hypothesis and asymptotically exact size on the boundary points. The method

is based on bootstrap test statistics that are constructed to mimic the phenomenon of discontinuous convergence by using the estimated boundary points. This requires the estimation of

a certain set, which we call the "contact set"; set estimation is a topic of considerable recent interest

in microeconometrics, see for example Moon and Schorfheide (2006), Chernozhukov, Hong,

and Tamer (2007), and Fan and Park (2007). Our method of bootstrap has the following

remarkable properties. First, it is asymptotically similar on the boundary. Second, more

importantly, the bootstrap method provides asymptotically exact sizes. Furthermore, we

can make the rejection probability under the null hypothesis converge to the true rejection

probability only slightly less fast than the asymptotic approximation. This implies that the

convergence of rejection probability can be made faster than that of subsampling.

The proposed test of stochastic dominance admits variables that contain unknown finite-dimensional and infinite-dimensional parameters as long as those parameters have consistent

estimators. Hence the proposed test can be used to test stochastic dominance relations

among variables in the form of regression errors from the semiparametric regression models

such as single index restrictions or partially parametric regressions. For example, one can

detect the influence of a certain factor upon the stochastic dominance relation by comparing

test results with and without the factor of interest while controlling for other factors. In

some situations, it is desirable to control for publicly observed variables. It is a potential

concern for the researcher in such cases that the result of testing may rely sensitively on

the regression specification chosen to control certain factors stochastically. Semiparametric

specification of the regression can be useful in this situation.

We perform Monte Carlo simulations that compare three methods: recentered bootstrap,

subsampling method, and the bootstrap on the boundary domain proposed in this paper.

We verify the superior performance of our method.

Testing stochastic dominance has drawn attention in the literature over the last decade.

McFadden (1986), Anderson (1996) and Davidson and Duclos (2000) are among the early

works that considered testing stochastic dominance. Barrett and Donald (2003) proposed

a consistent bootstrap test that has an asymptotically exact size on the least favorable

points for the special case where the prospects are mutually independent. LMW suggested a

subsampling method that has asymptotically valid size on the boundary points and applies

to variables that contain unknown finite-dimensional parameters and allows the prospects

to be mutually dependent.

In Section 2, we define the null hypothesis of stochastic dominance and introduce notations. In Section 3, we suggest test statistics and develop asymptotic theory both under the

null hypothesis and local alternatives. Section 4 is devoted to the bootstrap procedure, explaining the method of obtaining bootstrap test statistics and establishing their asymptotic

properties. In Section 5, we describe Monte Carlo simulation studies and discuss results from

them. Section 6 concludes. All the technical proofs are relegated to the appendix.

2 Stochastic Dominance

2.1 The Null Hypothesis

Let $\{X_k\}_{k=1}^{K}$ be a set of continuous outcome variables which may, for example, represent different points in time or different regions. Let $F_k(x)$ be the distribution function of the $k$-th random variable $X_k$. Let $D_k^{(1)}(x) = F_k(x)$ and

$$D_k^{(s)}(x) = \int_{-\infty}^{x} D_k^{(s-1)}(t)\,dt.$$

Then we say that $X_1$ stochastically dominates $X_2$ at order $s$ if $D_1^{(s)}(x) \le D_2^{(s)}(x)$ for all $x$ with strict inequality for some $x$. This is equivalent to an ordering of expected utility over a certain class of utility functions $U_s$, see LMW.

Let $D_{kl}^{(s)}(x) = D_k^{(s)}(x) - D_l^{(s)}(x)$ and define

$$d_s^{*} = \min_{k \neq l} \sup_{x \in \mathcal{X}} D_{kl}^{(s)}(x)$$

and

$$c_s^{*} = \min_{k \neq l} \int_{\mathcal{X}} \left\{ \max\left\{ D_{kl}^{(s)}(x), 0 \right\} \right\}^{2} dx,$$

where $\mathcal{X}$ denotes a set contained in the union of the supports of $X_k$, $k = 1, 2, \ldots, K$. We do not assume that the set $\mathcal{X}$ is bounded. The null hypothesis that this paper focuses on takes the following form:

$$H_{0,A} : d_s^{*} \le 0 \quad \text{vs.} \quad H_{1,A} : d_s^{*} > 0. \qquad (1)$$

The null hypothesis represents the presence of a stochastic dominance relationship between a pair of variables in $\{X_k\}_{k=1}^{K}$. The alternative hypothesis corresponds to no such incidence. Alternatively, we might also consider the following form of the null and alternative hypotheses:

$$H_{0,B} : c_s^{*} = 0 \quad \text{vs.} \quad H_{1,B} : c_s^{*} > 0. \qquad (2)$$

Obviously the null hypothesis $d_s^{*} \le 0$ implies the null hypothesis $c_s^{*} = 0$. Conversely, when $c_s^{*} = 0$, $D_{kl}^{(s)}(x) \le 0$, $\mu$-a.e., for some pair $(k, l)$, where $\mu$ denotes the Lebesgue measure on $\mathcal{X}$. Therefore, if the $D_k^{(s)}$'s are continuous functions, the null hypotheses in (1) and (2) are equivalent, but in general, the null hypothesis in (1) is stronger than that in (2).

Of crucial importance in the sequel are the "contact" sets. These are denoted by $B_{kl}^{(s)}$, the subset of $\mathcal{X}$ on which $D_k^{(s)}(x)$ and $D_l^{(s)}(x)$ are equal, i.e.,

$$B_{kl}^{(s)} = \{x \in \mathcal{X} : D_{kl}^{(s)}(x) = 0\}. \qquad (3)$$

These sets can be empty, can contain a countable number of isolated points, or can be a countable union of disjoint intervals. The limiting distributions of our test statistics depend only on these subsets of $\mathcal{X}$.

2.2 Test Statistics and Asymptotic Theory

In many cases of practical interest the outcome variable may be the residual from some model. This arises in particular when data are limited and one may want to use a model to adjust for systematic differences. Common practice is to cut the data into subsets, say of families with two children, and then make comparisons across homogeneous populations. When data are limited this can be difficult and a modelling approach can overcome this difficulty. We suppose that the variables $X_k$ depend on an unknown finite dimensional parameter $\theta_0 \in \mathbb{R}^{d_\Theta}$ and on an infinite dimensional parameter $\tau_0 \in \mathcal{T}$ (here, $\mathcal{T}$ is a totally bounded class of functions with respect to a certain metric), so that we write $X_k = X_k(\theta_0, \tau_0)$. For example, the variable $X_k$ may be the residual from the partially parametric regression $X_k(\theta_0, \tau_0) = Y_k - Z_{1k}^{\top}\theta_0 - \tau_0(Z_{2k})$ or the single index framework $X_k(\theta_0, \tau_0) = Y_k - \tau_0(Z_{1k}^{\top}\theta_0)$. Generically we let $X_k(\theta, \tau)$ be specified as $X_k(\theta, \tau) = \varphi_k(W; \theta, \tau)$ where $\varphi_k(\cdot; \theta, \tau)$ is a real-valued function known up to the parameter $(\theta, \tau) \in \Theta \times \mathcal{T}$. In the example of $X_k(\theta_0, \tau_0) = Y_k - \tau_0(Z_{1k}^{\top}\theta_0)$, $W$ denotes the vector of $Y_k$ and $Z_{1k}$, $k = 1, \ldots, K$. This set up is more general than in LMW who allowed only linear regression.

We next specify further the precise properties that we require of the data generating process. Let $B_{\Theta \times \mathcal{T}}(\delta) = \{(\theta, \tau) \in \Theta \times \mathcal{T} : \|\theta - \theta_0\| < \delta, \ \sup_{P \in \mathcal{P}} \|\tau - \tau_0\|_{P,2} < \delta\}$, where the norm $\|\cdot\|_{P,2}$ denotes the usual $L_2(P)$-norm. We introduce a bounded weight function $q(x)$ (see Assumption 3(ii)) and define $h_x^{(s)}(\varphi) = (x - \varphi)^{s-1} 1\{\varphi \le x\} q(x)$. Let $N_{[]}(\varepsilon, \mathcal{T}, \|\cdot\|_{P,r})$ denote the bracketing number of $\mathcal{T}$ with respect to the $L_r(P)$-norm, i.e. the smallest number of $\varepsilon$-brackets that are needed to cover the space $\mathcal{T}$ (e.g. van der Vaart and Wellner (1996)). The conditions in Assumptions 1 and 2 below are concerned with the data generating process of $W$ and the map $\varphi_k$. Let $\mathcal{P}$ be the collection of all the potential distributions of $W$ that satisfy the conditions in Assumptions 1-3 below.

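Assumption 1 : (i) $\{W_i\}_{i=1}^{N}$ is a random sample.

(ii) $\sup_{P \in \mathcal{P}} \log N_{[]}(\varepsilon, \mathcal{T}, \|\cdot\|_{P,2}) \le C\varepsilon^{-d}$ for some $d \in (0, 1]$.

(iii) For some $\delta > 0$ and $s > 0$, $\sup_{P \in \mathcal{P}} E_P\left[\sup_{(\theta,\tau) \in B_{\Theta \times \mathcal{T}}(\delta)} |\varphi_k(W; \theta, \tau)|^{2((s-1) \vee 1) + \delta}\right] < \infty$.

(iv) For some $\delta > 0$, there exists a functional $\Gamma_{k,P}(x)[\theta - \theta_0, \tau - \tau_0]$ of $(\theta - \theta_0, \tau - \tau_0)$, $(\theta, \tau) \in B_{\Theta \times \mathcal{T}}(\delta)$, such that

$$\left| E_P\left[h_x^{(s)}(\varphi_k(W; \theta, \tau))\right] - E_P\left[h_x^{(s)}(\varphi_k(W; \theta_0, \tau_0))\right] - \Gamma_{k,P}(x)[\theta - \theta_0, \tau - \tau_0] \right| \le C_1 \|\theta - \theta_0\|^{2} + C_2 \|\tau - \tau_0\|_{P,2}^{2}$$

with constants $C_1$ and $C_2$ that do not depend on $P$, and for each $\varepsilon > 0$

$$\limsup_{N \ge 1} \sup_{P \in \mathcal{P}} P\left\{ \sup_{x \in \mathcal{X}} \left| \sqrt{N}\,\Gamma_{k,P}(x)[\hat{\theta} - \theta_0, \hat{\tau} - \tau_0] - \frac{1}{\sqrt{N}} \sum_{i=1}^{N} \psi_{x,k,P}(W_i) \right| > \varepsilon \right\} = 0, \qquad (4)$$

where $\psi_{x,k,P}(\cdot)$ satisfies that there exist $\eta, C > 0$ and $s_1 \in (0, 1]$ such that for all $x \in \mathcal{X}$, $E_P\left[\psi_{x,k,P}(W_i)\right] = 0$, $\sup_{P \in \mathcal{P}} \left\| \sup_{x \in \mathcal{X}} \|\psi_{x,k,P}\| \right\|_{P,2+\eta} < \infty$, and

$$\sup_{P \in \mathcal{P}} E_P\left[ \sup_{x_2 \in \mathcal{X} : |x - x_2| \le \varepsilon} \left| \psi_{x,k,P}(W_i) - \psi_{x_2,k,P}(W_i) \right|^{2} \right] \le C\varepsilon^{2 s_1}, \quad \text{for all } \varepsilon > 0.$$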

The bracketing entropy condition for $\mathcal{T}$ in (ii) is satisfied by many classes of functions. For example, when $\mathcal{T}$ is a Hölder class of smoothness $\alpha$ with the common domain of $\tau(\cdot) \in \mathcal{T}$ that is convex and bounded in the $d_T$-dimensional Euclidean space with $d_T/\alpha \in (0, 1]$ (e.g. Corollary 2.7.2 in van der Vaart and Wellner (1996)), the bracketing entropy condition holds. The condition is also satisfied when $\mathcal{T}$ is contained in a class of uniformly bounded functions of bounded variation. Condition (iii) is a moment condition with local uniform boundedness. The moment condition is widely used in the literature of semiparametric inferences. In the example of single-index restrictions where $Y_k = \tau_0(Z_{1k}^{\top}\theta_0) + \varepsilon_k$, we can write $\varphi(w; \theta, \tau) = \tau_0(z_{1k}^{\top}\theta_0) - \tau(z_{1k}^{\top}\theta) + \varepsilon_k$. If $\tau$ is uniformly bounded in the neighborhood of $\tau_0$ in $L_2(P)$, the moment condition is immediately satisfied when $E[|\varepsilon_i|^{2((s-1) \vee 1) + \delta}] < \infty$. Or if $\tau$ is Lipschitz continuous with a uniformly bounded Lipschitz coefficient and $|\tau_0(v) - \tau(v)| \le C|v|^{p}$, $p \ge 1$, and $E[\|Z_{1k}\|^{p \vee (2((s-1) \vee 1) + \delta)}] < \infty$, then the moment condition holds. We may check this condition for other semiparametric specifications in a similar manner. Condition

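(iv) is concerned with the pathwise differentiability of the functional $\int h_x^{(s)}(\varphi_k(W; \theta, \tau))\,dP$ in $(\theta, \tau) \in B_{\Theta \times \mathcal{T}}(\delta)$. Let $\varphi_1 = \varphi(W; \theta, \tau)$ and $\varphi_2 = \varphi(W; \theta_0, \tau_0)$. Then we can write

$$h_x^{(s)}(\varphi_1) - h_x^{(s)}(\varphi_2) = q(x)\left[ \left\{ (x - \varphi_1)^{s-1} - (x - \varphi_2)^{s-1} \right\} 1\{\varphi_2 \le x\} + (x - \varphi_1)^{s-1} \left\{ 1\{\varphi_1 \le x\} - 1\{\varphi_2 \le x\} \right\} \right].$$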

Hence the pathwise differentiability is immediate when ϕ(w; θ, τ ) is continuously differentiable in (θ, τ ) and W is continuous and has a bounded density. The condition in (4) indicates

that the functional Γk,P at the estimators has an asymptotic linear representation. This condition can be established using the standard method of expanding the functional in terms of

the estimators, θ̂ and τ̂ , and using the asymptotic linear representation of these estimators.

This asymptotic linear representation for these estimators is available in many semiparametric models. Since our procedure does not make use of its particular characteristic beyond

the condition in (4), we keep this condition at a high level for the sake of brevity.

Assumption 2 : (i) $\varphi(W; \theta_0, \tau_0)$ is absolutely continuous with bounded density.

(ii) Condition (A) below holds when $s = 1$, and Condition (B), when $s > 1$.

(A) There exist $\delta, C > 0$ and a subvector $W_1$ of $W$ such that (a) the conditional density of $\varphi(W; \theta, \tau)$ given $W_1$ is bounded uniformly over $(\theta, \tau) \in B_{\Theta \times \mathcal{T}}(\delta)$, (b) for each $(\theta, \tau)$ and $(\theta', \tau') \in B_{\Theta \times \mathcal{T}}(\delta)$, $\varphi_k(W; \theta, \tau) - \varphi_k(W; \theta', \tau')$ is measurable with respect to the $\sigma$-field of $W_1$, and (c) for each $(\theta_1, \tau_1) \in B_{\Theta \times \mathcal{T}}(\delta)$ and for each $\varepsilon > 0$,

$$\sup_{P \in \mathcal{P}} \sup_{w_1} E_P\left[ \sup_{(\theta_2, \tau_2) \in B_{\Theta \times \mathcal{T}}(\varepsilon)} |\varphi_k(W; \theta_1, \tau_1) - \varphi_k(W; \theta_2, \tau_2)|^{2} \,\Big|\, W_1 = w_1 \right] \le C\varepsilon^{2 s_2} \qquad (5)$$

for some $s_2 \in (d, 1]$ with $d$ in Assumption 1(ii), where the supremum over $w_1$ runs in the support of $W_1$.

(B) There exist $\delta, C > 0$ such that Condition (c) above is satisfied.

Assumption 2(ii) contains two different conditions that are suited to each case of $s = 1$ or $s > 1$. This different treatment is due to the nature of the function $h_x^{(s)}(\varphi) = (x - \varphi)^{s-1} 1\{\varphi \le x\} q(x)$ that is discontinuous in $\varphi$ when $s = 1$ and smooth in $\varphi$ when $s > 1$. Condition (A) can be viewed as a generalization of the set up of LMW. Condition (A)(a) is analogous to Assumption 1(iii) of LMW. Condition (ii)(A)(b) is satisfied by many semiparametric models. For example, in the case of a partially parametric specification, $X_k(\theta_0, \tau_0) = Y_k - Z_{1k}^{\top}\theta_0 - \tau_0(Z_{2k})$, we take $W = (Y, Z_1, Z_2)$ and $W_1 = (Z_1, Z_2)$. In the case of single index restrictions, $X_k(\theta_0, \tau_0) = Y_k - \tau_0(Z_k^{\top}\theta_0)$, $W = (Y, Z)$ and $W_1 = Z$. The condition in (5) requires the function $\varphi(W; \theta, \tau)$ to be (conditionally) locally uniformly $L_2(P)$-continuous in $(\theta, \tau) \in B_{\Theta \times \mathcal{T}}(\delta)$ uniformly over $P \in \mathcal{P}$. Sufficient conditions and discussions can be found in Chen, Linton, and van Keilegom (2003). We can weaken this condition to the unconditional version when we consider only the case $s > 1$.

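We now turn to the definition of the test statistics. Let $\bar{F}_{kN}(x, \theta, \tau) = \frac{1}{N} \sum_{i=1}^{N} 1\{X_{ki}(\theta, \tau) \le x\}$ and

$$\bar{D}_{kl}^{(s)}(x, \theta, \tau) = \bar{D}_k^{(s)}(x, \theta, \tau) - \bar{D}_l^{(s)}(x, \theta, \tau) \quad \text{for } s \ge 1,$$

where $\bar{D}_k^{(1)}(x, \theta, \tau) = \bar{F}_{kN}(x, \theta, \tau)$ and $\bar{D}_k^{(s)}(x, \theta, \tau)$ is defined through the following recursive relation:

$$\bar{D}_k^{(s)}(x, \theta, \tau) = \int_{-\infty}^{x} \bar{D}_k^{(s-1)}(t, \theta, \tau)\,dt \quad \text{for } s \ge 2. \qquad (6)$$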

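The test statistics we consider are based on the weighted empirical analogues of $d_s^{*}$ and $c_s^{*}$, namely,

$$D_N^{(s)} = \min_{k \neq l} \sup_{x \in \mathcal{X}} q(x) \sqrt{N}\, \bar{D}_{kl}^{(s)}(x, \hat{\theta}, \hat{\tau})$$

and

$$C_N^{(s)} = \min_{k \neq l} \int_{\mathcal{X}} \left\{ \max\left\{ q(x) \sqrt{N}\, \bar{D}_{kl}^{(s)}(x, \hat{\theta}, \hat{\tau}), 0 \right\} \right\}^{2} dx.$$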

Horváth, Kokoszka, and Zitikis (2006) showed that in the case of the Kolmogorov-Smirnov functional with $s \ge 3$, it is necessary to employ a weight function $q(x)$ such that $q(x) \to 0$ as $x \to \infty$ in order to obtain nondegenerate asymptotics. The numerical integration in (6) can be cumbersome in practice. Integrating by parts, we have an alternative form

$$\bar{D}_k^{(s)}(x, \theta, \tau) = \frac{1}{N (s-1)!} \sum_{i=1}^{N} (x - X_{ki}(\theta, \tau))^{s-1} 1\{X_{ki}(\theta, \tau) \le x\}.$$
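As a rough illustration of the closed form above, the following Python sketch evaluates the empirical integrated distribution function and a grid approximation of the Kolmogorov-Smirnov type statistic. It assumes the residuals have already been computed, approximates the supremum over $\mathcal{X}$ by a maximum over a user-supplied grid, and defaults to $q(x) = 1$; the function names `d_bar` and `ks_statistic` are hypothetical and not from the paper.

```python
import math

def d_bar(x, sample, s):
    """Closed form: (1 / (N (s-1)!)) * sum_i (x - X_i)^(s-1) * 1{X_i <= x}."""
    n = len(sample)
    total = sum((x - xi) ** (s - 1) for xi in sample if xi <= x)
    return total / (n * math.factorial(s - 1))

def ks_statistic(samples, grid, s, q=lambda x: 1.0):
    """Grid approximation of min_{k != l} sup_x q(x) sqrt(N) [D_k - D_l](x).

    `samples` is a list of K equally sized samples; the grid replaces the
    supremum over the (possibly unbounded) set X, which is an assumption
    of this sketch.
    """
    n = len(samples[0])
    best = math.inf
    for k, xk in enumerate(samples):
        for l, xl in enumerate(samples):
            if k == l:
                continue
            sup_kl = max(
                q(x) * math.sqrt(n) * (d_bar(x, xk, s) - d_bar(x, xl, s))
                for x in grid
            )
            best = min(best, sup_kl)
    return best
```

Because the minimum runs over ordered pairs, a small value of the statistic indicates that at least one prospect appears to dominate another.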

Since $\bar{D}_k^{(s)}$ and $D_k^{(s)}$ are obtained by applying a linear operator to $\bar{F}_{kN}$ and $F_k$, the estimated function $\bar{D}_k^{(s)}$ is an unbiased estimator for $D_k^{(s)}$. Regarding the estimators $\hat{\theta}$ and $\hat{\tau}$ and the choice of the weight function $q$, we assume the following.

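Assumption 3 : (i) $\|\hat{\theta} - \theta_0\| = o_P(N^{-1/4})$, $\|\hat{\tau} - \tau_0\|_{P,2} = o_P(N^{-1/4})$ uniformly in $P \in \mathcal{P}$, and $\sup_{P \in \mathcal{P}} P\{\hat{\tau} \in \mathcal{T}\} \to 1$ as $N \to \infty$.

(ii) $\sup_{x \in \mathcal{X}} \left(1 + |x|^{(s-1) \vee (1+\delta)}\right) q(x) < \infty$, for some $\delta > 0$ and for $q$, a nonnegative, first order continuously differentiable function on $\mathcal{X}$ with a bounded derivative.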

When θ̂ is a solution from the M- estimation problem, its rate of convergence can be

obtained by following the procedure of Theorem 3.2.5 of van der Vaart and Wellner (1996).

The uniformity in P in this case can be ensured by combining the uniform (in P ) oscillation

behavior of the population objective function and the associated empirical processes. For

instance, one may employ a uniform oscillation exponential bound for empirical processes

established by Giné (1997), Theorem 6.1. The rate of convergence for the nonparametric

component τ̂ can also be established by controlling the bias and variance part uniformly in

P. In order to control the variance part, one may employ the framework of Giné and Zinn

(1991) and establish the central limit theorem that is uniform in P . The sufficient conditions

for the last condition in (i) can be checked, for example, from the results of Andrews (1994).

Condition (ii) is very convenient and is fulfilled by an appropriate choice of the weight

function. The use of the weight function is convenient as X is allowed to be unbounded.

Condition (ii) is stronger than that of Horváth, Kokoszka, and Zitikis (2006) who, under a set-up simpler than this paper, imposed that $\sup_{x \in \mathcal{X}} \left(1 + (\max(x, 0))^{(s-2) \vee 1}\right) q(x) < \infty$. Note

that the condition in (ii) implies that when X is a bounded set, we may simply take q(x) = 1.

When s = 1 so that our focus is on the first order stochastic dominance relation, we may

transform the variable Xki (θ, τ ) into one that has a bounded support by taking a smooth

strictly monotone transform. After this transformation, we can simply take q(x) = 1.

The first result below is concerned with the convergence in distribution of $D_N^{(s)}$ and $C_N^{(s)}$ under the null hypothesis uniformly in a subset of $\mathcal{P}$. As for $h_x^{(s)}$ defined prior to Assumption 1, we define $h_{kl,i}^{\Delta}(x) = h_x^{(s)}(X_{ki}) - h_x^{(s)}(X_{li})$ and $\psi_{kl,i}^{\Delta}(x) = \psi_{x,k,P}(W_i) - \psi_{x,l,P}(W_i)$. Let $\nu_{kl}^{(s)}(\cdot)$ be a Gaussian process on $\mathcal{X}$ with covariance kernel given by

$$C_P(x_1, x_2) = \mathrm{Cov}_P\left( h_{kl,i}^{\Delta}(x_1) + \psi_{kl,i}^{\Delta}(x_1), \ h_{kl,i}^{\Delta}(x_2) + \psi_{kl,i}^{\Delta}(x_2) \right),$$

where $\mathrm{Cov}_P$ denotes the covariance under $P$. The asymptotic critical values are based on this Gaussian process. By convention, we assume that the supremum over an empty set is equal to $-\infty$.

Let $\mathcal{P}_0$ be the set of probabilities under the null hypothesis and let $\mathcal{P}_{00}^{KS}$ be the set of probabilities in $\mathcal{P}_0$ such that the contact set $B_{kl}^{(s)}$ is nonempty for all pairs $k \neq l$.$^4$ The set of probabilities $\mathcal{P}_{00}^{KS}$ represents the "boundary" of the null hypothesis. In other words, the boundary points are the probabilities under which the contact set $B_{kl}^{(s)}$ is nonempty for all $k \neq l$. We define the set of boundary points differently for Cramér-von Mises tests, $C_N^{(s)}$. Let $\mathcal{P}_{00}^{CM}$ be the set of probabilities in $\mathcal{P}_0$ such that for each $k \neq l$, the contact set $B_{kl}^{(s)}$ has a positive Lebesgue measure. Hence, in general $\mathcal{P}_{00}^{CM} \subset \mathcal{P}_{00}^{KS}$.

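Theorem 1 : Suppose that Assumptions 1-3 hold. Then under the null hypothesis, as $N \to \infty$,

$$D_N^{(s)} \to_D \begin{cases} \min_{k \neq l} \sup_{x \in B_{kl}^{(s)}} \left( \nu_{kl}^{(s)}(x) \right), & \text{if } P \in \mathcal{P}_{00}^{KS} \\ -\infty, & \text{if } P \in \mathcal{P}_0 \setminus \mathcal{P}_{00}^{KS} \end{cases}$$

and

$$C_N^{(s)} \to_D \begin{cases} \min_{k \neq l} \int_{B_{kl}^{(s)}} \max\{\nu_{kl}^{(s)}(x), 0\}^{2}\,dx, & \text{if } P \in \mathcal{P}_{00}^{CM} \\ 0, & \text{if } P \in \mathcal{P}_0 \setminus \mathcal{P}_{00}^{CM}. \end{cases}$$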

Under a fixed probability $P \in \mathcal{P}_{00}^{KS}$ on the boundary, the test statistic is asymptotically tight uniformly over $P \in \mathcal{P}_{00}^{KS}$. But under a fixed probability $P \in \mathcal{P}_0 \setminus \mathcal{P}_{00}^{KS}$, we have $\sup_{x \in \mathcal{X}} D_{kl}^{(s)}(x) < 0$ for some $k, l$, and hence the test statistics diverge to $-\infty$. The limiting distribution of the test statistic $D_N^{(s)}$ exhibits discontinuity; the statistics are not asymptotically tight at each $P \in \mathcal{P}_0 \setminus \mathcal{P}_{00}^{KS}$, while they are so at each $P \in \mathcal{P}_{00}^{KS}$. This phenomenon of discontinuity arises often in moment inequality models (e.g. Moon and Schorfheide (2006), Chernozhukov, Hong, and Tamer (2007), and Andrews and Guggenberger (2006)).

As for $C_N^{(s)}$, similar discontinuity arises in the limiting distribution. When $P \in \mathcal{P}_{00}^{CM}$, $C_N^{(s)}$ has a nondegenerate limiting distribution. However, when $P \in \mathcal{P}_0 \setminus \mathcal{P}_{00}^{CM}$, the limiting distribution becomes degenerate at zero. We introduce the following definition of a test having an asymptotically exact size.

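Definition 1 : (i) A test $\varphi_\alpha$ with a nominal level $\alpha$ is said to have an asymptotically exact size if there exists a nonempty subset $\mathcal{P}_{00} \subset \mathcal{P}_0$ such that:

$$\limsup_{N \to \infty} \sup_{P \in \mathcal{P}_0} E_P \varphi_\alpha \le \alpha,$$

$$\limsup_{N \to \infty} \sup_{P \in \mathcal{P}_{00}} E_P \varphi_\alpha = \alpha. \qquad (7)$$

(ii) When a test $\varphi_\alpha$ satisfies (7), we say that the test is asymptotically similar on $\mathcal{P}_{00}$.

4 Hence, that $P \in \mathcal{P}_{00}^{KS}$ implies $d_s^{*} = 0$ because $\mathcal{P}_{00}^{KS} \subset \mathcal{P}_0$. However, the converse is not true, in particular when $\mathcal{X}$ is unbounded.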

(s)

The Gaussian process ν kl in Theorem 1 depends on the unknown elements of the null

hypothesis, precluding the use of first order asymptotic critical values in practice. Barrett

and Donald (2003) suggested a bootstrap procedure and LMW, a subsampling approach.

Both studies have not paid attention to the issue of uniformity in the convergence of tests.

In order to deal with uniformity, we separate P0 into the boundary points and the interior

points and deal with the asymptotic theory of the test statistic separately. The proof of Theorem 1 establishes the weak convergence of $q(\cdot) \sqrt{N} \{\bar{D}_{kl}^{(s)}(\cdot, \hat{\theta}, \hat{\tau}) - D_{kl}^{(s)}(\cdot, \theta_0, \tau_0)\}$ to $\nu_{kl}^{(s)}(\cdot)$ uniformly

over P ∈ P. This result is obtained by showing that the class of functions indexing the

empirical process is a uniform Donsker class (Giné and Zinn (1991)). The remaining step is

to deal with the limiting behavior of the test statistic uniformly over the interior points.

One might consider a typical bootstrap procedure for this situation. The difficulty for

the bootstrap method tests of stochastic dominance lies mainly in the fact that it is hard to

impose the null hypothesis upon the test. There have been approaches that consider only the least favorable subset of the models of the null hypothesis, as in the following:

$$F_1(x) = \cdots = F_K(x) \quad \text{for all } x \in \mathcal{X}. \qquad (8)$$

This leads to the problem of asymptotic nonsimilarity in the sense that when the true data generating process lies away from the least favorable subset of the models of the null hypothesis, and yet still lies at the boundary points, the bootstrap sizes become misleading even in large samples. LMW call this phenomenon asymptotic nonsimilarity on the boundary. Bootstrap procedures that employ the usual recentering implicitly impose restrictions that do not hold outside the least favorable set and hence are asymptotically biased against such probabilities outside the set.

To illustrate heuristically why a test that uses critical values from the least favorable case of a composite null hypothesis can be asymptotically biased, let us consider a simple example in the finite dimensional case. Suppose that the observations $\{X_i = (X_{1i}, X_{2i}) : i = 1, \ldots, N\}$ are mutually independently and identically distributed with unknown mean $\mu = (\mu_1, \mu_2)$ and known variance $\Sigma = \mathrm{diag}(1, 1)$. Let the hypotheses of interest be given by:

$$H_0 : \mu_1 \le 0 \text{ and } \mu_2 \le 0 \quad \text{vs.} \quad H_1 : \mu_1 > 0 \text{ or } \mu_2 > 0.$$

These hypotheses are equivalent to:

$$H_0 : d^{*} \le 0 \quad \text{vs.} \quad H_1 : d^{*} > 0, \qquad (9)$$

where $d^{*} = \max\{\mu_1, \mu_2\}$. The "boundary" of the null hypothesis is given by $B_{BD} = \{(\mu_1, \mu_2) : \mu_1 \le 0 \text{ and } \mu_2 \le 0\} \cap \{(\mu_1, \mu_2) : \mu_1 = 0 \text{ or } \mu_2 = 0\}$, while the "least favorable case (LFC)" is given by $B_{LF} = \{(\mu_1, \mu_2) : \mu_1 = 0 \text{ and } \mu_2 = 0\} \subset B_{BD}$. To test (9), one may consider the following t-statistic:

$$T_N = \max\{N^{1/2} \bar{X}_1, N^{1/2} \bar{X}_2\},$$

where $\bar{X}_k = \sum_{i=1}^{N} X_{ki}/N$. Then, the asymptotic null distribution of $T_N$ is non-degenerate provided the true $\mu$ lies on the boundary $B_{BD}$, but the distribution depends on the location of $\mu$. That is, we have

$$T_N \to_d \begin{cases} \max\{Z_1, Z_2\} & \text{if } \mu = (0, 0) \\ Z_1 & \text{if } \mu_1 = 0, \ \mu_2 < 0 \\ Z_2 & \text{if } \mu_1 < 0, \ \mu_2 = 0, \end{cases}$$

where Z1 and Z2 are mutually independent standard normal random variables. On the other

hand, TN diverges to −∞ under the "interior" of the null hypothesis. Suppose that zα∗

satisfies P (max{Z1 , Z2 } > zα∗ ) = α for α ∈ (0, 1). Then, the test based on the least favorable

case is asymptotically non-similar on the boundary because, for example, limN→∞ P (TN >

zα∗ |μ = (0, 0)) 6=limN→∞ P (TN > zα∗ |μ = (0, −1)). Now consider the following sequence of

local alternatives: for δ > 0,

δ

HN : μ1 = √ and μ2 < 0.

N

d

Then, under HN , it is easy to see that TN → N(δ, 1). However, the test based on the LFC

critical value may be biased against this local alternatives, because limN→∞ P (TN > zα∗ ) =

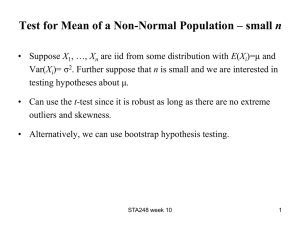

P (N(δ, 1) > zα∗ ) < α for some values of δ. To see this, in Figure 1, we draw a c.d.f.’s of

max{Z1 , Z2 } and N(δ, 1) for δ = 0.0, 0.2, and 1.5. Clearly, the distribution of max{Z1 , Z2 }

first-order stochastic dominates that of N(0.2, 1), i.e., TN is asymptotically biased against

HN for δ = 0.2.

11

Figure 1. Shows that TN is asymptocally biased against HN for δ = 0.2.
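The bias in this two-dimensional example can be checked numerically. The sketch below uses the fact that $P(\max\{Z_1, Z_2\} \le z) = \Phi(z)^2$ for independent standard normals, so that $z_\alpha^{*} = \Phi^{-1}(\sqrt{1 - \alpha})$, and then evaluates the limiting rejection probability $P(N(\delta, 1) > z_\alpha^{*})$. The function names are hypothetical and the choice $\alpha = 0.05$ in the usage note is only an illustration.

```python
from statistics import NormalDist

def lfc_critical_value(alpha):
    """Solve P(max{Z1, Z2} > z) = alpha using P(max <= z) = Phi(z)^2."""
    return NormalDist().inv_cdf((1.0 - alpha) ** 0.5)

def limiting_rejection_prob(delta, alpha):
    """P(N(delta, 1) > z*_alpha): the limit of P(T_N > z*_alpha) under H_N."""
    z_star = lfc_critical_value(alpha)
    return 1.0 - NormalDist(mu=delta, sigma=1.0).cdf(z_star)
```

For instance, `limiting_rejection_prob(0.2, 0.05)` falls below 0.05, which is the bias against $H_N$ described above, whereas `limiting_rejection_prob(1.5, 0.05)` exceeds 0.05.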

3 Bootstrap Procedure

In this section we discuss our method for obtaining asymptotically valid critical values. We propose the wild bootstrap procedure as follows. Let

$$X_{ki}(\theta, \tau) = Y_{ki} - g(Z_{ki}; \theta, \tau),$$

for some function $g$. Among the necessary identification conditions for $\theta_0$ and $\tau_0$ is the condition that $E[Y_{ki} - g(Z_{ki}; \theta, \tau) \mid Z_{ki}] = 0$. Let $X_i(\theta, \tau)$ be a $K$-dimensional vector whose $k$-th entry is given by $X_{ki}(\theta, \tau)$. Let $(\hat{X}_i)_{i=1}^{N} = (X_i(\hat{\theta}, \hat{\tau}))_{i=1}^{N}$. Following the wild bootstrap procedure (Härdle and Mammen (1993)), we draw $\{(\varepsilon_{i,b}^{*})_{i=1}^{N}\}_{b=1}^{B}$ such that $E^{*}[\varepsilon_{ki,b}^{*} \mid Z_{ki}] = 0$ and $E^{*}[\varepsilon_{ki,b}^{*2} \mid Z_{ki}] = \hat{X}_{ki}^{2}$, where $E^{*}[\cdot \mid Z_{ki}]$ denotes the expectation under the bootstrap distribution conditional on $Z_{ki}$ and $\varepsilon_{ki,b}^{*}$ is the $k$-th element of $\varepsilon_{i,b}^{*}$. Let $Y_{ki,b}^{*} = g(Z_{ki}; \hat{\theta}, \hat{\tau}) + \varepsilon_{ki,b}^{*}$, $k = 1, \ldots, K$, and take $W_{i,b}^{*} = (Y_{ki,b}^{*}, Z_{ki})_{k=1}^{K}$. Using this bootstrap sample, construct estimators $\hat{\theta}_b^{*}$ and $\hat{\tau}_b^{*}$, for each $b = 1, \ldots, B$. Then, we define $X_{ki,b}^{*} = Y_{ki,b}^{*} - g(Z_{ki}; \hat{\theta}_b^{*}, \hat{\tau}_b^{*})$.
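One simple way to satisfy the two moment conditions on $\varepsilon_{ki,b}^{*}$ is to multiply each residual by an independent Rademacher variable taking the values $\pm 1$ with equal probability. This is an assumed choice for illustration only; the paper does not prescribe a particular multiplier distribution, and the function names below are hypothetical. The sketch handles a single prospect $k$ and takes the fitted values $g(Z_{ki}; \hat{\theta}, \hat{\tau})$ as given.

```python
import random

def wild_bootstrap_errors(residuals, rng):
    """Draw eps*_i = residual_i * v_i with Rademacher v_i (assumed multiplier).

    Conditional on the data, E*[eps*_i] = 0 and E*[eps*_i ** 2] = residual_i ** 2.
    """
    return [r * rng.choice((-1.0, 1.0)) for r in residuals]

def wild_bootstrap_outcomes(fitted, residuals, rng):
    """Form Y*_i = g(Z_i; theta_hat, tau_hat) + eps*_i from fitted values."""
    eps_star = wild_bootstrap_errors(residuals, rng)
    return [g + e for g, e in zip(fitted, eps_star)]
```

Re-estimating $(\theta, \tau)$ on each bootstrap sample and recomputing the residuals then gives $X_{ki,b}^{*}$; that step depends on the estimator used and is not sketched here.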

Now, we introduce the following bootstrap empirical process

$$\bar{D}_{kl,b}^{(s)*}(x) = \frac{1}{N (s-1)!} \sum_{i=1}^{N} \left\{ h_x(X_{ki,b}^{*}) - h_x(X_{li,b}^{*}) - E_N\left[ h_x(\hat{X}_{ki}) - h_x(\hat{X}_{li}) \right] \right\}, \quad b = 1, 2, \ldots, B,$$

where $E_N$ denotes the expectation with respect to the empirical measure of $\{\hat{X}_i\}_{i=1}^{N}$. The quantity $\bar{D}_{kl,b}^{(s)*}(x)$ denotes the bootstrap counterpart of $\bar{D}_{kl}^{(s)}(x)$. Given a sequence $c_N \to 0$ and $c_N \sqrt{N} \to \infty$, we construct the estimated contact set:

$$\hat{B}_{kl}^{(s)} = \left\{ x \in \mathcal{X} : q(x) |\bar{D}_{kl}^{(s)}(x)| < c_N \right\}. \qquad (10)$$
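On a finite grid, the estimated contact set in (10) reduces to a simple filter. The sketch below assumes that the empirical difference $\bar{D}_{kl}^{(s)}$ and the weight $q$ are supplied as callables and that the grid stands in for $\mathcal{X}$; the function name is hypothetical, and the tuning sequence (for example $c_N = N^{-1/3}$, which satisfies both rate conditions) is an illustrative assumption rather than a recommendation from the paper.

```python
def estimated_contact_set(grid, d_bar_kl, q, c_n):
    """Return the grid points x with q(x) * |D_bar_kl(x)| < c_n.

    An empty list corresponds to an empty estimated contact set.
    """
    return [x for x in grid if q(x) * abs(d_bar_kl(x)) < c_n]
```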

As for the weight function $q(x)$, we may consider the following type of function. For $z_1 < z_2$ and for a constant $a > 0$, we set

$$q(x) = \begin{cases} 1 & \text{if } x \in [z_1, z_2] \\ a / (a + |x - z_2|^{(s-1) \vee 1}) & \text{if } x > z_2 \\ a / (a + |x - z_1|^{(s-1) \vee 1}) & \text{if } x < z_1. \end{cases}$$

This is a modification of the weighting function considered by Horváth, Kokoszka, and Zitikis (2006).
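The piecewise weight function above translates directly into code. The following is a minimal sketch; the factory name `make_weight` is hypothetical, and the values of $z_1$, $z_2$ and $a$ are left to the user.

```python
def make_weight(z1, z2, a, s):
    """Build q(x): equal to 1 on [z1, z2], decaying polynomially outside."""
    if not (z1 < z2 and a > 0):
        raise ValueError("require z1 < z2 and a > 0")
    power = max(s - 1, 1)  # the exponent (s-1) v 1

    def q(x):
        if x > z2:
            return a / (a + abs(x - z2) ** power)
        if x < z1:
            return a / (a + abs(x - z1) ** power)
        return 1.0

    return q
```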

We propose the Kolmogorov-Smirnov type bootstrap test statistic as

$$D_{N,b}^{(s)*} = \begin{cases} \min_{k \neq l} \sup_{x \in \hat{B}_{kl}^{(s)}} \sqrt{N} q(x) \bar{D}_{kl,b}^{(s)*}(x), & \text{if } \hat{B}_{kl}^{(s)} \neq \emptyset \text{ for all } k \neq l \\ \pi_N, & \text{if } \hat{B}_{kl}^{(s)} = \emptyset \text{ for some } k \neq l, \end{cases} \qquad (11)$$

where $\pi_N$ is a positive sequence such that $\pi_N \to \infty$. From this, we obtain the bootstrap critical values, $c_{\alpha,N,B}^{*KS} = \inf\{t : B^{-1} \sum_{b=1}^{B} 1\{D_{N,b}^{(s)*} \le t\} \ge 1 - \alpha\}$, yielding an $\alpha$-level bootstrap test: $\varphi_\alpha^{KS} \triangleq 1\{D_N^{(s)} > c_{\alpha,N,B}^{*KS}\}$. The sequence $\pi_N$ governs the asymptotic behavior of the size of the test. When $\pi_N$ increases faster, the size of the test converges to zero faster in the interior points $P \in \mathcal{P}_0 \setminus \mathcal{P}_{00}^{KS}$.
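Given the $B$ bootstrap statistics, the critical value is the smallest $t$ at which their empirical distribution function reaches $1 - \alpha$. The sketch below computes it from the sorted bootstrap draws and applies the rejection rule; the function names are hypothetical, and the draws are assumed to have been computed already (including any entries set to $\pi_N$).

```python
def bootstrap_critical_value(boot_stats, alpha):
    """inf{t : B^{-1} * #{b : stat_b <= t} >= 1 - alpha}."""
    if not boot_stats or not 0.0 < alpha < 1.0:
        raise ValueError("need at least one draw and alpha in (0, 1)")
    b = len(boot_stats)
    for rank, t in enumerate(sorted(boot_stats), start=1):
        # rank / b is the empirical c.d.f. of the draws evaluated at t
        if rank / b >= 1.0 - alpha:
            return t
    return max(boot_stats)  # not reached for alpha in (0, 1)

def bootstrap_test(stat, boot_stats, alpha):
    """Reject (True) when the sample statistic exceeds the critical value."""
    return stat > bootstrap_critical_value(boot_stats, alpha)
```

The same two functions apply unchanged to Cramér-von Mises type statistics, since only the bootstrap draws differ.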

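Likewise, we can define the Cramér-von Mises type bootstrap test statistic as

$$C_{N,b}^{(s)*} = \begin{cases} \min_{k \neq l} \int_{\hat{B}_{kl}^{(s)}} \left\{ \max\left\{ q(x) \sqrt{N}\, \bar{D}_{kl,b}^{(s)*}(x), 0 \right\} \right\}^{2} dx, & \text{if } \hat{B}_{kl}^{(s)} \neq \emptyset \text{ for all } k \neq l \\ \pi_N, & \text{if } \hat{B}_{kl}^{(s)} = \emptyset \text{ for some } k \neq l, \end{cases} \qquad (12)$$

where $\pi_N$ is a positive sequence such that $\pi_N \to \infty$. The bootstrap critical values are obtained by $c_{\alpha,N,B}^{*CM} = \inf\{t : B^{-1} \sum_{b=1}^{B} 1\{C_{N,b}^{(s)*} \le t\} \ge 1 - \alpha\}$, yielding an $\alpha$-level bootstrap test: $\varphi_\alpha^{CM} \triangleq 1\{C_N^{(s)} > c_{\alpha,N,B}^{*CM}\}$.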

The main step we need to justify the bootstrap procedure uniformly in $P \in \mathcal{P}$ under this general semiparametric environment is to establish the bootstrap uniform central limit theorem for the empirical processes in the test statistic, where uniformity holds over probability measures in $\mathcal{P}$. The uniform central limit theorem for empirical processes has been developed by Giné and Zinn (1991) and Sheehy and Wellner (1992) through the characterization of uniform Donsker classes.

The bootstrap central limit theorem for empirical processes was established by Giné and Zinn (1990) who considered a nonparametric bootstrap procedure. See also the lecture notes on the bootstrap by Giné (1997). We cannot directly use these results because, under the bootstrap distribution, each sample $\{W_{i,b}^{*}\}_{i=1}^{N}$ is non-identically distributed both in the case of the wild bootstrap and in the case of the residual-based bootstrap. In the Appendix, we show how we can make the bootstrap CLT result of Giné (1997) accommodate this case by slightly modifying his result.$^5$

This paper's bootstrap procedure does not depend on a specific estimation method for $\theta_0$ and $\tau_0$. Due to this generality of our framework, we introduce the following additional assumptions about the bootstrap sample $W_{i,b}^{*}$, the bootstrap estimators $(\hat{\theta}^{*}, \hat{\tau}^{*})$, and the function $g(z; \theta, \tau)$ introduced at the beginning of this section. Let $\mathcal{G}_N$ be the $\sigma$-field generated by $\{W_i\}_{i=1}^{N}$.

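Assumption 4: (i) For arbitrary $\varepsilon > 0$,

\[
P\left\{ \|\hat\theta^{*} - \hat\theta\| + \|\hat\tau^{*} - \hat\tau\|_{P,2} > N^{-1/4}\varepsilon \,\middle|\, \mathcal{G}_N \right\} \to_P 0, \text{ uniformly in } P \in \mathcal{P}.
\]

(ii) For $\psi_{x,k,P}(\cdot)$ in Assumption 1(iv), $E[\psi_{x,k,P}(W_i) \mid Z_{ki}] = 0$, and for some $r_N \to 0$ with $\limsup_{N\to\infty} r_N/c_N < \infty$,

\[
P\left\{ \sup_{x \in \mathcal{X}} \left| \sqrt{N}\,\hat\Gamma_{k,P}(x) - \frac{1}{\sqrt{N}} \sum_{i=1}^{N} \psi_{x,k,P}(W^{*}_{i,b}) \right| > r_N \varepsilon \,\middle|\, \mathcal{G}_N \right\} \to_P 0
\]

uniformly in $P \in \mathcal{P}$ for any $\varepsilon > 0$, where $\hat\Gamma_{k,P}(x) = E_N[h^{(s)}_x(\varphi_k(W^{*}_i; \hat\theta^{*}, \hat\tau^{*}))] - E_N[h^{(s)}_x(\varphi_k(W^{*}_i; \hat\theta, \hat\tau))]$ and $E^{*}[\psi_{x,k,P}(W^{*}_{i,b}) \mid Z_{ki}] = 0$.

(iii) There exists $C$ such that for each $(\theta_1, \tau_1) \in B_{\Theta\times\mathcal{T}}(\delta)$ and for each $\varepsilon > 0$,

\[
\sup_{z \in \mathcal{Z}_k}\ \sup_{(\theta_2,\tau_2) \in B_{\Theta\times\mathcal{T}}(\varepsilon)} |g(z;\theta_1,\tau_1) - g(z;\theta_2,\tau_2)| \le C\varepsilon^{2 s_2}, \qquad (13)
\]

for some $s_2 \in (d, 1]$, where $\mathcal{Z}_k$ is the support of $Z_{ki}$.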

(iv) For each $k \neq l$, there exist $\delta > 0$ and constants $c_{kl} > 0$ and $p_{kl} \ge 1$ such that for each $x, x' \in \mathcal{X}$,

\[
\left| q(x)|D^{(s)}_{kl}(x)| - q(x')|D^{(s)}_{kl}(x')| \right| \ge c_{kl} \left\{ E\left[ h^{(s)}_x(X_{ki}) - h^{(s)}_x(X_{li}) - \{ h^{(s)}_{x'}(X_{ki}) - h^{(s)}_{x'}(X_{li}) \} \right]^{2} \wedge \delta \right\}^{p_{kl}/2}.
\]

5 For a weak convergence of residual-based bootstrap process in a regression model, see Koul and Lahiri (1994). For a parametric bootstrap procedure for goodness-of-fit tests, see, among others, Andrews (1997). See also Härdle and Mammen (1993) and Whang (2000) for a wild-bootstrap procedure that contains finite-dimensional estimators.

Condition (i) requires that the bootstrap estimators should be consistent at a rate faster than $N^{-1/4}$ uniformly over $P \in \mathcal{P}$. The bootstrap validity of M-estimators is established by Arcones and Giné (1992). Infinite dimensional Z-estimators are dealt with by Wellner and Zhan (1996). See also Abrevaya and Huang (2005) for a bootstrap inconsistency result for a case where the estimators are not asymptotically linear. Condition (iii) implies the condition in (5) by choosing $W_{1i} = Z_{ki}$ and can be weakened to the locally uniform $L_2$-continuity (e.g. Chen, Linton, and van Keilegom (2003)) when we confine our attention to the case $s > 1$. Condition (iv) is similar to Condition C.2 in Chernozhukov, Hong, and Tamer (2007) and is used to control the convergence rate of the estimated set with respect to an appropriate norm. This condition is needed only for the convergence rate of the rejection probability.

The wild bootstrap procedure is introduced to ensure Condition (ii), which assumes the bootstrap analogue of the asymptotic linearity of $\sqrt{N}\,\Gamma_{k,P}(x)[\hat\theta^{*} - \hat\theta, \hat\tau^{*} - \hat\tau]$ with the same influence function $\psi_{x,P}$. In the case of parametric regression specification: $X_{ki}(\theta_0) = Y_{ki} - g(Z_{ki};\theta_0)$ without the nonparametric function $\tau_0$, the parameter $\theta_0$ is typically identified by the condition $E[X_{ki}(\theta_0)] = 0$ and in this case, we may use the residual-based bootstrap procedure by replacing $\varepsilon^{*}_{ki,b}$ by those resampled from $\{\hat X_i - \bar X_N\}_{i=1}^{N}$ where $\bar X_N = \frac{1}{N}\sum_{i=1}^{N} X_i(\hat\theta, \hat\tau)$. Then we need Condition (ii) with the centered influence function $\frac{1}{\sqrt{N}}\sum_{i=1}^{N}\{\psi_{x,k,P}(W^{*}_{i,b}) - E_N \psi_{x,k,P}(\hat W_i)\}$ where $\hat W_i = (\hat Y_i, Z_i)$ and $\hat Y_{ki} = g(Z_{ki};\hat\theta) + X_{ki}(\theta_0)$. (See, e.g., Koul and Lahiri (1994).)

One of the important conditions that are needed in this case is the uniform oscillation

behavior of the bootstrap empirical process, as established in the proof of Theorem 2.2 of

Giné (1997). We slightly modify his result so that empirical processes constructed from the

residual-based bootstrap procedure are accommodated. This latter result of Giné is also

crucial for our establishing the bootstrap central limit theorem that is uniform in P.

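Theorem 2: Suppose that the conditions of Theorem 1 and Assumption 4 hold and that $c_N \to 0$ and $c_N\sqrt{N} \to \infty$. Then the following holds.

(i) Uniformly over $P \in \mathcal{P}_0$,

\[
P\{ D^{(s)}_N > c^{*KS}_{\alpha,\infty} \} \le \alpha + O_P\!\left(c_N\sqrt{-\log c_N}\right), \text{ and}
\]
\[
P\{ C^{(s)}_N > c^{*CM}_{\alpha,\infty} \} \le \alpha + O_P\!\left(c_N\sqrt{-\log c_N}\right).
\]

(ii) Furthermore, suppose that for all $P \in \mathcal{P}_{00}^{KS}$, the distribution of $\min_{k\neq l}\sup_{x \in B^{(s)}_{kl}} (\nu^{(s)}_{kl}(x))$ is continuous, and for all $P \in \mathcal{P}_{00}^{CM}$, the distribution of $\min_{k\neq l}\int_{B^{(s)}_{kl}} \max\{\nu^{(s)}_{kl}(x), 0\}^{2}\,dx$ is continuous. Then

\[
P\{ D^{(s)}_N > c^{*KS}_{\alpha,\infty} \} = \alpha + O_P\!\left(c_N\sqrt{-\log c_N}\right) \text{ uniformly over } P \in \mathcal{P}_{00}^{KS}, \text{ and}
\]
\[
P\{ C^{(s)}_N > c^{*CM}_{\alpha,\infty} \} = \alpha + O_P\!\left(c_N\sqrt{-\log c_N}\right) \text{ uniformly over } P \in \mathcal{P}_{00}^{CM}.
\]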

The first result says that the bootstrap tests have asymptotically correct sizes. The second result tells us that the bootstrap test is asymptotically similar on the boundary. The second result combined with the first result establishes that the bootstrap test has exact asymptotic size equal to $\alpha$. The result is uniform over $P \in \mathcal{P}_0$. The caveats of the absolute continuity of the limiting distributions are necessary because in certain cases the distribution can be discrete or even degenerate at one point. For example, consider the case where we have $K = 2$, $s = 1$, and $\mathcal{X} = [0,1]$, which is the common support for $X_1$ and $X_2$ whose distribution functions meet only at 0 and 1 within $\mathcal{X}$. Then, the Gaussian process $\nu^{(s)}_{kl}(\cdot)$ is a variant of a Brownian bridge process taking values 0 at the end points 0 and 1, and hence $\min_{k\neq l}\sup_{x\in B^{(s)}_{kl}}(\nu^{(s)}_{kl}(x))$ is a discrete random variable degenerate at zero. In this case, the asymptotic size is zero, violating the equality in (ii).

The limit behavior of the bootstrap test statistic mimics the discontinuity in Theorem 1. Both the original and bootstrap test statistics diverge when the data generating process is away from the boundary points. However, the direction of divergence is opposite. When the bootstrap distribution is away from "the boundary points," i.e., if $\hat B^{(s)}_{kl} = \emptyset$ for some $k \neq l$, the bootstrap test statistic diverges to $\infty$. However, outside the boundary points, the original test statistic diverges to $-\infty$. This opposite direction is imposed to keep the asymptotic size of the bootstrap test below $\alpha$ uniformly over all the probabilities $P \in \mathcal{P}_0\setminus\mathcal{P}_{00}^{KS}$.

Our requirement for the sequence $\pi_N$ to increase to infinity is necessary for our results that are uniform in $P$. To see this, consider the following:

\[
\sqrt{N}\,q(x)\bar D^{(s)}_{kl}(x,\hat\theta,\hat\tau) = \sqrt{N}\,q(x)\left\{ \bar D^{(s)}_{kl}(x,\hat\theta,\hat\tau) - D^{(s)}_{kl}(x) \right\} + \sqrt{N}\,q(x) D^{(s)}_{kl}(x). \qquad (14)
\]

The first component on the right-hand side is uniformly asymptotically tight and its tail probability goes to zero only when we increase the cut-off value to infinity. The behavior of the last term depends on the sequence of probabilities $P_N \in \mathcal{P}_0\setminus\mathcal{P}_{00}^{KS}$. The worst case arises when $P_N$ converges to a boundary point so fast that for some $k \neq l$, $\sup_{x\in\mathcal{X}}\sqrt{N}\,q(x)D^{(s)}_{kl}(x)$ also converges to zero. Considering uniformity in $P$, we should take this case into account. Therefore, $\pi_N$ should increase to infinity in order to control the tail probability of the supremum of the combined quantities in (14) appropriately. On the other hand, for only pointwise results for each $P \in \mathcal{P}_0\setminus\mathcal{P}_{00}^{KS}$, it suffices to set $\pi_N$ to be any nonnegative number.

It is worth noting that the convergence of the rejection probability on the boundary points is slightly slower than $c_N$. Since we can take $c_N$ to converge to zero faster than $N^{-1/3}$, the convergence of the rejection probability can be made faster than that of the subsampling-based test.6
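To make the rate in Theorem 2 concrete, the following Python sketch tabulates the order term $c_N\sqrt{-\log c_N}$ and the product $c_N\sqrt{N}$ for a tuning sequence of the form $c_N = cN^{-r}$. The default rate r = 1/3 and the constant c = 0.5 used in the usage line are illustrative assumptions, and the helper names are hypothetical.

```python
import math


def contact_set_bandwidth(N, c, rate=1.0 / 3.0):
    """Tuning sequence c_N = c * N^(-rate); rate = 1/3 is an assumed default."""
    return c * N ** (-rate)


def rejection_rate_order(c_N):
    """Order term c_N * sqrt(-log c_N); requires 0 < c_N < 1."""
    return c_N * math.sqrt(-math.log(c_N))


def rate_table(sample_sizes, c):
    """Rows of (N, c_N, c_N * sqrt(N), c_N * sqrt(-log c_N))."""
    rows = []
    for N in sample_sizes:
        c_N = contact_set_bandwidth(N, c)
        rows.append((N, c_N, c_N * math.sqrt(N), rejection_rate_order(c_N)))
    return rows
```

Calling `rate_table([50, 500, 1000], c=0.5)` shows the two requirements of the theorem moving in the expected directions: $c_N$ and the order term shrink with N while $c_N\sqrt{N}$ grows.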

Suppose that the null hypotheses in (1) and (2) are equivalent. Let us compare the asymptotic similarity property of two tests $D^{(s)}_N$ and $C^{(s)}_N$ that use bootstrap critical values as proposed above. In general $\mathcal{P}_{00}^{CM} \subset \mathcal{P}_{00}^{KS}$. Hence the Kolmogorov-Smirnov tests are asymptotically similar over a wider set of probabilities than the Cramér-von Mises type tests. Such a comparison is relevant when for all $k \neq l$, the contact sets $B^{(s)}_{kl}$ are not empty and for some $k \neq l$, $B^{(s)}_{kl}$ is a finite set, as would happen, for example, when $D^{(s)}_k$ and $D^{(s)}_l$ are in contact on a finite set of points and $D^{(s)}_k(x) \le D^{(s)}_l(x)$. In that case, the Kolmogorov-Smirnov test has an exact asymptotic size of $\alpha$ while the asymptotic size of the Cramér-von Mises test is zero.

The result of Theorem 2 allows for a wide range of choices for the sequence $\pi_N$ in (11) and (12) in the bootstrap test statistic. In practice, we may consider two extreme cases $\pi_N = \log(N)$ and $\pi_N = \infty$, and see the robustness of the results. The latter case with $\pi_N = \infty$ is tantamount to the rule of setting the test $\varphi = 0$ (i.e. do not reject the null) when the contact set $\hat B$ is empty.
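The role of the sequence in (12) can be sketched in Python as follows. This is an illustration under stated assumptions, not the authors' code: the bootstrap differences and estimated contact sets are assumed to be already evaluated on a common equally spaced grid, the integral is approximated by a Riemann sum with spacing `dx`, and the function name and dictionary layout are hypothetical.

```python
import math

import numpy as np


def cvm_bootstrap_stat(boot_diffs, contact_masks, q, dx, N, pi_N):
    """
    Grid version of the Cramer-von Mises bootstrap statistic in (12).

    boot_diffs    : dict mapping a pair (k, l) to the bootstrap difference on the grid
    contact_masks : dict mapping (k, l) to a boolean array marking the estimated contact set
    q             : weight function evaluated on the grid
    dx            : grid spacing (assumed Riemann-sum approximation of the integral)
    pi_N          : value returned when some estimated contact set is empty
    """
    # Second branch of (12): some estimated contact set is empty.
    if any(not mask.any() for mask in contact_masks.values()):
        return pi_N
    values = []
    for pair, diff in boot_diffs.items():
        mask = contact_masks[pair]
        integrand = np.maximum(q[mask] * diff[mask], 0.0) ** 2
        values.append(N * integrand.sum() * dx)
    # First branch of (12): minimum over ordered pairs k != l.
    return min(values)
```

Passing `pi_N=math.log(N)` or `pi_N=math.inf` gives the two extreme choices mentioned in the text; with `math.inf` the draw can never fall below any finite threshold, which matches the "do not reject" rule.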

4 Asymptotic Power Properties

In this section, we establish asymptotic power properties of the bootstrap test. First, we

consider consistency of the test.

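Theorem 3: Suppose that the conditions of Theorem 2 hold and that we are under a fixed alternative $P \in \mathcal{P}\setminus\mathcal{P}_0$. Then,

\[
\lim_{N\to\infty} P\{ D^{(s)}_N > c^{*KS}_{\alpha,\infty} \} = 1 \quad \text{and} \quad \lim_{N\to\infty} P\{ C^{(s)}_N > c^{*CM}_{\alpha,\infty} \} = 1.
\]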

Therefore, the bootstrap test is consistent against all types of alternatives. This property is

shared by other tests of LMW and Barrett and Donald (2003) for example.

Let us turn to asymptotic local power properties. We consider a sequence of probabilities $P_N \in \mathcal{P}\setminus\mathcal{P}_0$ and denote $D^{(s)}_{k,N}(x)$ to be $D^{(s)}_k(x)$ under $P_N$. That is, $D^{(s)}_{k,N}(x) = E_{P_N} h^{(s)}_x(\varphi_k(W;\theta_0,\tau_0))$ using the notation of $h^{(s)}_x$ and the specification of $X_k$ in a previous section, where $E_{P_N}$ denotes the expectation under $P_N$. We confine our attention to $\{P_N\}$ such that for each $k \in \{1,\ldots,K\}$, there exist functions $H^{(s)}_k(\cdot)$ and $\delta^{KS(s)}_k(\cdot)$ such that

\[
D^{KS(s)}_{k,N}(x) = H^{(s)}_k(x) + \delta^{KS(s)}_k(x)/\sqrt{N} \qquad (15)
\]

as $N \to \infty$.

6 The slower convergence rate for the bootstrap test than the asymptotic approximation is due to the presence of the estimation error in the contact set $\hat B^{(s)}_{kl}$.

In the case of Cramér-von Mises type tests, we consider the following local alternatives: for the functions $H^{(s)}_k(\cdot)$ in (15), we assume that

\[
D^{CM(s)}_{k,N}(x) = H^{(s)}_k(x) + \delta^{CM(s)}_k(x)/\sqrt{N}. \qquad (16)
\]

We assume the following for the functions $H^{(s)}_k(\cdot)$, $\delta^{KS(s)}_k(\cdot)$ and $\delta^{CM(s)}_k(\cdot)$.

Assumption 5: (i) $C_{kl} = \{x \in \mathcal{X} : H^{(s)}_k(x) - H^{(s)}_l(x) = 0\}$ is nonempty for all $k \neq l$.

(ii) $\min_{k\neq l}\sup_{x\in\mathcal{X}} (H^{(s)}_k(x) - H^{(s)}_l(x)) \le 0$.

(iii) $\sup_{x\in C_{kl}} (\delta^{KS(s)}_k(x) - \delta^{KS(s)}_l(x)) > 0$ and $\int_{C_{kl}} (\max\{(\delta^{CM(s)}_k(x) - \delta^{CM(s)}_l(x)), 0\})^{2}\,dx > 0$, for all $k \neq l$.

Assumption 5 comprises conditions for the sequences in (15) and (16) to constitute non-void local alternatives. Note that when $H^{(s)}_k(x) = E_P h^{(s)}_x(\varphi_k(W;\theta_0,\tau_0))$ for some $P \in \mathcal{P}$, these conditions for $H^{(s)}_k$ imply that $P \in \mathcal{P}_0$, that is, the probability associated with $H^{(s)}_k$ belongs to the null hypothesis, in particular, the boundary points.7 The conditions for $\delta^{KS(s)}_k(x)$ and $\delta^{CM(s)}_k(x)$ indicate that for each $N$, the probability $P_N$ belongs to the alternative hypothesis $\mathcal{P}\setminus\mathcal{P}_0$. Therefore, the sequence $P_N$ represents local alternatives that converge to the null hypothesis (in particular, to the boundary points $\mathcal{P}_{00}^{KS}$) maintaining the convergence of $D^{KS(s)}_{k,N}(x)$ to $H^{(s)}_k(x)$ at the rate of $\sqrt{N}$ in the direction of $\delta^{KS(s)}_k(x)$. The following theorem shows that the tests are asymptotically unbiased against such sequences of local alternatives.

Theorem 4: Suppose that the conditions of Theorem 2 and Assumption 5 hold.

(i) Under the local alternatives $P_N \in \mathcal{P}\setminus\mathcal{P}_0$ satisfying the condition in (15),

\[
\lim_{N\to\infty} P_N\{ D^{(s)}_N > c^{*KS}_{\alpha,\infty} \} \ge \alpha.
\]

(ii) Under the local alternatives $P_N \in \mathcal{P}\setminus\mathcal{P}_0$ satisfying the condition in (16),

\[
\lim_{N\to\infty} P_N\{ C^{(s)}_N > c^{*CM}_{\alpha,\infty} \} \ge \alpha.
\]

7 Since the distributions are continuous, the contact sets are nonempty under the alternatives. Therefore, any local alternatives that converge to the null hypothesis will have a limit in the set of boundary points.

Similarly as in LMW, the asymptotic unbiasedness of the test follows from Anderson's Lemma (Anderson (1952)).8 It is interesting to compare the asymptotic power of our test with that of the bootstrap procedure that is based on the least favorable set of the null hypothesis. Using the same bootstrap sample $\{X^{*}_{ki,b} : k = 1,\ldots,K\}_{i=1}^{n}$, $b = 1,\ldots,B$, this bootstrap procedure alternatively considers the following bootstrap test statistics

\[
D^{(s)*LF}_{N,b} = \min_{k\neq l}\sup_{x\in\mathcal{X}} \sqrt{N}\,q(x)\bar D^{(s)*}_{kl,b}(x) \quad \text{and} \quad
C^{(s)*LF}_{N,b} = N\min_{k\neq l}\int_{\mathcal{X}} \max\{ q(x)\bar D^{(s)*}_{kl,b}(x),\, 0 \}^{2}\,dx. \qquad (17)
\]

Let the bootstrap critical values with $B \to \infty$ be denoted by $c^{*KS-LF}_{\alpha,\infty}$ and $c^{*CM-LF}_{\alpha,\infty}$ respectively. The results of this paper easily imply that this bootstrap procedure is inferior to the procedure that this paper proposes. For brevity, we state the result for $D^{(s)*LF}_{N,b}$. We can obtain a similar result for $C^{(s)*LF}_{N,b}$.

Corollary 5: Suppose that the conditions of Theorem 4 hold. Under the local alternatives $P_N \in \mathcal{P}\setminus\mathcal{P}_0$ satisfying the condition in (15),

\[
\lim_{N\to\infty} P_N\{ D^{(s)}_N > c^{*KS}_{\alpha,\infty} \} \ge \lim_{N\to\infty} P_N\{ D^{(s)}_N > c^{*KS-LF}_{\alpha,\infty} \}.
\]

Furthermore, assume that for all $k \neq l$, the union of the closures of the contact sets $C_{kl}$ is a proper subset of the interior of $\mathcal{X}$ and the Gaussian processes $\nu^{(s)}_{kl}(x)$ in Theorem 1 satisfy that $E[\{\sup_{x\in C_{kl}}\nu^{(s)}_{kl}(x)\}^{2}] > 0$. Then the inequality above is strict.

The result of Corollary 5 is remarkable in that the bootstrap test of this paper weakly dominates the bootstrap in (17) regardless of the Pitman local alternative directions. Furthermore, when the union of the closures of the contact sets is a proper subset of the interior of $\mathcal{X}$, our test strictly dominates the bootstrap in (17) uniformly over the Pitman local alternatives. However, this latter condition for contact sets can be strong when the number $K$ of prospects is large. In this case, a full characterization of the case for strict dominance appears to be complicated. The result of Corollary 5 is based on the nonsimilarity of the bootstrap tests in (17) on the boundary. In fact, Corollary 5 implies that the test based on the bootstrap procedure using (17) is inadmissible. This result is related to Hansen (2003)'s finding in an environment of finite-dimensional composite hypothesis testing that a test that is not asymptotically similar on the boundary is asymptotically inadmissible.

8 Anderson's Lemma does not apply to one-sided Kolmogorov-Smirnov type tests. Song (2008) demonstrates that there exists a class of $\sqrt{n}$-converging Pitman local alternatives against which one-sided Kolmogorov-Smirnov type tests are asymptotically biased. Also see the discussion at the end of Section 2.

5 Monte Carlo Experiments

5.1 Simulation Designs

In this section, we examine the finite sample performance of our tests using Monte Carlo

simulations. In particular, we compare our procedure with the subsampling method and

recentered bootstrap method which were suggested by LMW.

For a fair comparison of the simulation results, the simulation designs we consider are exactly the same as those considered by LMW: the Burr distributions also examined by Tse and Zhang (2004), the lognormal distributions also considered by Barrett and Donald (2003) and the exchangeable normal processes of Klecan et al. (1991).

We first describe the details of the simulation designs. We consider five different designs from the Burr Type XII distribution, $B(\alpha,\beta)$, which is a two-parameter family with cdf defined by:

\[
F(x) = 1 - (1 + x^{\alpha})^{-\beta}, \quad x \ge 0.
\]

The parameters and the population values of $d^{*}_1$, $d^{*}_2$ of the Burr designs are given below.

Design   X1             X2             d*_1           d*_2
1a       B(4.7, 0.55)   B(4.7, 0.55)   0.0000 (FSD)   0.0000 (SSD)
1b       B(2.0, 0.65)   B(2.0, 0.65)   0.0000 (FSD)   0.0000 (SSD)
1c       B(4.7, 0.55)   B(2.0, 0.65)   0.1395         0.0784
1d       B(4.6, 0.55)   B(2.0, 0.65)   0.1368         0.0773
1e       B(4.5, 0.55)   B(2.0, 0.65)   0.1340         0.0761
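Because the Burr Type XII cdf has a closed-form inverse, $F^{-1}(u) = \{(1-u)^{-1/\beta} - 1\}^{1/\alpha}$, draws for these designs can be generated by inverse-transform sampling. The sketch below is one way to do this in Python; the paper does not state how its draws were generated, and the function names are hypothetical.

```python
import numpy as np


def burr_cdf(x, alpha, beta):
    """Burr Type XII cdf F(x) = 1 - (1 + x^alpha)^(-beta) for x >= 0."""
    x = np.asarray(x, dtype=float)
    return 1.0 - (1.0 + x ** alpha) ** (-beta)


def burr_quantile(u, alpha, beta):
    """Inverse of the cdf: solves u = 1 - (1 + x^alpha)^(-beta) for x, 0 <= u < 1."""
    u = np.asarray(u, dtype=float)
    return ((1.0 - u) ** (-1.0 / beta) - 1.0) ** (1.0 / alpha)


def sample_burr(n, alpha, beta, rng):
    """Draw n observations by applying the quantile function to uniforms on [0, 1)."""
    return burr_quantile(rng.uniform(size=n), alpha, beta)
```

For example, `sample_burr(500, 4.7, 0.55, np.random.default_rng(0))` would produce one sample of size 500 from the first marginal of design 1a.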

On the other hand, we consider four different designs from the lognormal distribution $LN(\mu_k, \sigma_k^{2})$. That is, we consider the distributions of $X_k = \exp(\mu_k + \sigma_k Z_k)$, where $Z_k$ are i.i.d. $N(0,1)$. The parameters and the population values of $d^{*}_1$, $d^{*}_2$ of the lognormal designs are given below.

Design   X1                X2                d*_1           d*_2
2a       LN(0.85, 0.6^2)   LN(0.85, 0.6^2)   0.0000 (FSD)   0.0000 (SSD)
2b       LN(0.85, 0.6^2)   LN(0.7, 0.5^2)    0.0000 (FSD)   0.0000 (SSD)
2c       LN(0.85, 0.6^2)   LN(1.2, 0.2^2)    0.0834         0.0000 (SSD)
2d       LN(0.85, 0.6^2)   LN(0.2, 0.1^2)    0.0609         0.0122

To define the multivariate normal designs, we let

\[
X_{ki} = (1-\lambda)\left[ \alpha_k + \beta_k\left( \sqrt{\rho}\,Z_{0i} + \sqrt{1-\rho}\,Z_{ki} \right) \right] + \lambda X_{k,i-1},
\]

where $(Z_{0i}, Z_{1i}, Z_{2i})$ are i.i.d. standard normal random variables, mutually independent. The parameters $\lambda = \rho = 0.1$ determine the mutual correlation of $X_{1i}$ and $X_{2i}$ and their autocorrelation. The parameters $\alpha_k$, $\beta_k$ are actually the mean and standard deviation of the marginal distributions of $X_{1i}$ and $X_{2i}$. The marginals and the true values of the multivariate normal designs are:

Design   X1         X2          d*_1           d*_2
3a       N(0, 1)    N(−1, 16)   0.1981         0.0000 (SSD)
3b       N(0, 16)   N(1, 16)    0.0000 (FSD)   0.0000 (SSD)
3c       N(0, 1)    N(1, 16)    0.1981         0.5967
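The recursion defining the multivariate normal designs can be simulated directly. The following Python sketch is illustrative only: the starting value $X_{k,0} = \alpha_k$ is an assumption (the text does not specify initialization or burn-in), and the function name is hypothetical.

```python
import numpy as np


def simulate_normal_design(N, alphas, betas, lam=0.1, rho=0.1, rng=None):
    """
    Simulate X_{ki} = (1 - lam) * [alpha_k + beta_k * (sqrt(rho) Z_{0i}
    + sqrt(1 - rho) Z_{ki})] + lam * X_{k,i-1} for i = 1..N and k = 1..K.
    Returns an (N, K) array.
    """
    rng = np.random.default_rng() if rng is None else rng
    alphas = np.asarray(alphas, dtype=float)
    betas = np.asarray(betas, dtype=float)
    K = alphas.size
    z0 = rng.standard_normal(N)        # common shock Z_{0i}
    zk = rng.standard_normal((N, K))   # prospect-specific shocks Z_{ki}
    shocks = np.sqrt(rho) * z0[:, None] + np.sqrt(1.0 - rho) * zk
    innov = alphas + betas * shocks
    X = np.empty((N, K))
    prev = alphas.copy()               # assumed starting value X_{k,0} = alpha_k
    for i in range(N):
        prev = (1.0 - lam) * innov[i] + lam * prev
        X[i] = prev
    return X
```

Under the reading that the second argument of N(·, ·) in the table is a variance, design 3a would correspond to `alphas=[0, -1]` and `betas=[1, 4]`.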

In computing the suprema in $D^{(s)}_N$ we took a maximum over an equally spaced grid of size $n$ on the range of the pooled empirical distribution. To compute the integral for $C^{(2)}_N$, we took the sum over the same grid points. We chose a total of 5 different subsamples for each sample size $N \in \{50, 500, 1000\}$. We took an equally spaced grid of subsample sizes: for $N = 50$, the subsample sizes are $\{20, 25, \ldots, 45\}$; for $N = 500$ the subsample sizes are $\{50, 100, \ldots, 250\}$; for $N = 1000$ the subsample sizes are $\{100, 200, \ldots, 500\}$.9 This grid of subsamples is then used to compute the mean of the critical values from the grid, which had the best overall performance among the automatic methods considered by LMW. We used a total of 200 bootstrap repetitions in each case. In computing the suprema and integral in each subsample and bootstrap test statistic, we took the same grid of points as was used in the original test statistic.

To estimate the contact set

\[
\hat B^{(s)}_{kl} = \left\{ x \in \mathcal{X} : |\bar D^{(s)}_{kl}(x)| < c_N \right\},
\]

we took the tuning parameter to be $c_N = cN^{-1/3}$, where $c \in \{0.25, 0.50, 0.75\}$ for $s = 1$ and $c \in \{2.0, 3.0, 4.0\}$ for $s = 2$. To compute the test statistics and the contact set, we took the weight function $q(x) = 1$ for all $x \in \mathcal{X}$, i.e., no weighting. In each experiment, the number of replications was 1,000.
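The grid computations described above can be sketched in Python for the simplest case. The sketch assumes s = 1, K = 2, equal sample sizes, q(x) = 1, and that the estimated difference is the difference of the two empirical cdfs; it also approximates the integral by a Riemann sum with the grid spacing. These choices and the function names are illustrative assumptions rather than the authors' implementation.

```python
import numpy as np


def ecdf_on_grid(sample, grid):
    """Empirical cdf of `sample` evaluated at each grid point."""
    sample = np.sort(np.asarray(sample, dtype=float))
    return np.searchsorted(sample, grid, side="right") / sample.size


def contact_set_and_stats(x1, x2, c, n_grid=100):
    """Estimated contact set and KS / CvM statistics for s = 1, K = 2, q(x) = 1."""
    x1 = np.asarray(x1, dtype=float)
    x2 = np.asarray(x2, dtype=float)
    N = x1.size                                   # equal sample sizes assumed
    pooled = np.concatenate([x1, x2])
    grid = np.linspace(pooled.min(), pooled.max(), n_grid)
    dx = grid[1] - grid[0]
    d12 = ecdf_on_grid(x1, grid) - ecdf_on_grid(x2, grid)
    c_N = c * N ** (-1.0 / 3.0)                   # tuning parameter c_N = c N^(-1/3)
    contact = np.abs(d12) < c_N                   # same set for (1, 2) and (2, 1)
    diffs = {(1, 2): d12, (2, 1): -d12}
    ks = min(np.sqrt(N) * d.max() for d in diffs.values())
    cvm = min(N * (np.maximum(d, 0.0) ** 2).sum() * dx for d in diffs.values())
    return grid, contact, ks, cvm
```

The boolean array `contact` can then be used to restrict the supremum or the sum in each bootstrap draw to the estimated contact set.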

9

LMW considered a finer grid, i.e., 20, of different subsample sizes but their simulation results are very

close to ours.

5.2 Simulation Results

Tables 1F - 3S present the rejection probabilities for the tests with nominal size 5%. The

simulation standard error is approximately 0.007.
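The quoted standard error is consistent with the binomial formula for an estimated rejection frequency, evaluated at the nominal level $p = 0.05$ with $R = 1{,}000$ replications (assuming independent replications):

\[
\sqrt{\frac{p(1-p)}{R}} = \sqrt{\frac{0.05 \times 0.95}{1000}} \approx 0.0069.
\]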

The overall impression is that all the methods considered work reasonably well in samples above 500. The full-sample methods work better than the subsample method under the null hypothesis in small samples. Our procedure is always more powerful than the recentered bootstrap method. In cases (1c, 1d, 1e, 2d below) where the subsample method is better than the recentered bootstrap, our procedure is strictly more powerful than the subsample method. The performance of our procedure depends on the choice of the tuning parameter $c_N$. If it takes larger values, then the rejection probabilities get closer to those of the recentered bootstrap, but the rejection probabilities increase as $c_N$ decreases. This is consistent with our theory because the rejection probability is a monotonically non-increasing function of $c_N$. In a reasonable range of the tuning parameter, our procedure strictly dominates the subsample and recentered bootstrap methods in terms of power without sacrificing size in all of the designs we considered.

The first two designs in Tables 1F and 1S are to evaluate the size performance, especially

in the least favorable case of the equality of two distributions, while the other three are to

evaluate the power performance. The subsample method tends to over-reject under the null

when N = 50 but the size distortions are negligible when N ≥ 500. Our procedure has a

good size performance over all tuning parameters we considered in Table 1F, but the case 1b

in Table 1S shows that it tends to over-reject when the tuning parameter is chosen to be too

small. The last three designs (cases 1c, 1d, and 1e in Tables 1F and 1S) are quite conclusive. For moderate and large samples ($N \ge 500$), the subsample method is more powerful than the recentered bootstrap method. However, in these cases, our procedure strictly dominates the subsample method uniformly over all values of $c_N$ we considered. This is so even in small samples ($N = 50$). These remarkable results forcefully demonstrate the power of our procedure.

Tables 2F and 2S give the rejection probabilities under the lognormal designs. The results

under the least favorable case 2a are similar to those of 1a and 1b. The design 2b (in Table

2F) is quite instructive because it corresponds to the boundary case under which the two

distributions "kiss" at points in the interior of the support. The recentered bootstrap tends to under-reject the null hypothesis and performs very differently from the least favorable case, while our procedure tends to have correct size for a suitable value of $c_N$. The design 2c in Table 2F shows that, in small samples ($N = 50$), the full-sample method is more powerful than the subsample method. In design 2d (Tables 2F and 2S), the subsample method is more powerful than the recentered bootstrap method for all sample sizes, but again our procedure dominates the subsample method.

Tables 3F and 3S consider the multivariate normal designs. The designs 3a (Table 3S) and 3b (Tables 3F and 3S) are to evaluate the size characteristics of the tests. The latter

designs correspond to the "interior" of the null hypothesis and the rejection probabilities tend

to zero as the sample size increases. This is consistent with our theory. The other designs are

to evaluate the power performance. They show that the subsample method is less powerful

than the recentered bootstrap and the latter is again dominated by our procedure.

Between the Kolmogorov-Smirnov and Cramér-von Mises type tests, the power performance depends on the alternatives. In designs 1c, 1d, 1e, and 2d, the Kolmogorov-Smirnov type tests are more powerful, while in designs 2c, 3a, and 3c, the Cramér-von Mises type tests are more powerful.

6 Conclusion

This paper proposes a new method for testing stochastic dominance that improves on the

existing methods. Specifically, our tests have asymptotic sizes that are exactly correct uniformly over the entire null hypothesis. In addition, we have extended the domain of applicability of our tests to a more general class of situations where the outcome variable is

the residual from some semiparametric model. Our simulation study demonstrates that our

method works better than existing methods for quite modest sample sizes.

Our setting throughout has been i.i.d. data, but many time series applications call for the

treatment of dependent data. In that case, one may have to use a block bootstrap algorithm

in place of our wild bootstrap method. We expect, however, that similar results will obtain

in that case.

7 Appendix: Mathematical Proofs

Throughout the proof, C denotes a constant that can assume different values in different places.

7.1 Proofs of the Main Results

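Lemma A1: Let $V(x) = xq(x)$ and $\tilde V(x) = |D_{kl}(x)|q(x)$, $x \in \mathcal{X}$, and introduce pseudo metrics $d_V(x,x') = |V(x) - V(x')|$ and $d_{\tilde V}(x,x') = |\tilde V(x) - \tilde V(x')|$. Then,

\[
\log N_{[]}(\varepsilon, \mathcal{X}, d_V) \le C\left\{ \log h^{-1}(C/\varepsilon) - \log(\varepsilon) \right\} \quad \text{and}
\]
\[
\log N_{[]}(\varepsilon, \mathcal{X}, d_{\tilde V}) \le C\left\{ \log h^{-1}(C/\varepsilon) - \log(\varepsilon) \right\},
\]

where $h(\cdot)$ is an arbitrary strictly increasing function.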

Proof of Lemma A1: Fix $\varepsilon > 0$. Note that $|V|$ is uniformly bounded by Assumption 3(ii) and hence for some $C > 0$, $V(x) < C/h(x)$ for all $x > 1$ where $h(x)$ is an increasing function. Put $x = h^{-1}(2C/\varepsilon)$ where $h^{-1}(y) = \inf\{x \in \mathcal{X} : h(x) \ge y\}$, so that we obtain

\[
V(h^{-1}(2C/\varepsilon)) < \varepsilon/2.
\]

Then, we can choose $L(\varepsilon) \le Ch^{-1}(2C/\varepsilon)$ such that $L(\varepsilon) \ge 1$ and $\sup_{|x|\ge L(\varepsilon)} V(x) < \varepsilon/2$. Partition $[-L(\varepsilon), L(\varepsilon)]$ into intervals $\{\mathcal{X}_m\}_{m=1}^{N_L}$ of length $\varepsilon$ with the number of intervals $N_{L(\varepsilon)}$ not greater than $2L(\varepsilon)/\varepsilon$. Let $\mathcal{X}_0 = \mathcal{X}\setminus[-L(\varepsilon), L(\varepsilon)]$. Then $(\cup_{m\ge1}\mathcal{X}_m)\cup\mathcal{X}_0$ constitutes the partition of $\mathcal{X}$. In terms of $d_V$, the diameter of the set $\mathcal{X}_0$ is bounded by $\varepsilon$ because $\sup_{x,x'\in\mathcal{X}_0}|V(x) - V(x')| \le \varepsilon/2 + \varepsilon/2 = \varepsilon$, and the diameter of $\mathcal{X}_m$, $m \ge 1$, is also bounded by $C\varepsilon$ because $d_V(x,x') = |V(x) - V(x')| \le C|x - x'| \le C\varepsilon$ for all $x, x' \in \mathcal{X}_m$. The second inequality uses the fact that $q(x)$ is Lipschitz continuous. Therefore, we have $N(\varepsilon,\mathcal{X},d_V) \le 2L(\varepsilon)/\varepsilon + 1 \le Ch^{-1}(2C/\varepsilon)/\varepsilon + 1$. Hence $\log N(\varepsilon,\mathcal{X},d_V) \le C\log h^{-1}(C/\varepsilon) - \log(\varepsilon)$.

We can obtain the same result for $d_{\tilde V}$ precisely in the same manner. Note that the properties of $V(x)$ that we used are that it is uniformly bounded and that $|V(x) - V(x')| \le C|x - x'|$. By Assumption 2(i), $\tilde V(x)$ also satisfies these properties.

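Lemma A2: Let $\mathcal{F} = \{ h^{(s)}_x(\varphi(\cdot;\theta,\tau)) : (x,\theta,\tau) \in \mathcal{X}\times B_{\Theta\times\mathcal{T}}(\delta) \}$. Then,

\[
\sup_{P\in\mathcal{P}} \log N_{[]}(\varepsilon, \mathcal{F}, \|\cdot\|_{P,2}) \le C\varepsilon^{-2d/s_2} + C\log\varepsilon, \text{ if } s = 1, \text{ and}
\]
\[
\sup_{P\in\mathcal{P}} \log N_{[]}(\varepsilon, \mathcal{F}, \|\cdot\|_{P,2}) \le C\varepsilon^{-d/s_2} + C/\varepsilon, \text{ if } s > 1.
\]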

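Proof of Lemma A2: Let $\mathcal{H} = \{h^{(s)}_x : x \in \mathbb{R}\}$ and $\gamma_x(y) = 1\{y \le x\}$. Observe that the function $(x - \varphi)^{s-1}\gamma_x(\varphi)$ is monotone decreasing in $\varphi$ for all $x, z$. Define $\Phi = \{\varphi(\cdot;\theta,\tau) : (\theta,\tau) \in \Theta\times\mathcal{T}\}$. Then by the local uniform $L_p$-continuity condition in Assumption 2(i)(c), we have

\[
\log N_{[]}(\varepsilon, \Phi, \|\cdot\|_{P,2}) \le \log N_{[]}(C\varepsilon^{1/s_2}, \Theta\times\mathcal{T}, \|\cdot\|_{P,2}) \le C\varepsilon^{-d/s_2}. \qquad (18)
\]

Choose $\varepsilon$-brackets $(\varphi_j, \Delta_{1,j})_{j=1}^{N_1}$ of $\Phi$ such that $\int \Delta^{2}_{1,j}\,dP \le \varepsilon^{2}$. For each $j$, let $Q_{j,P}$ be the distribution of $\varphi_j(W)$ where $W$ is distributed as $P$.

Suppose $s = 1$. In Lemma A1, we take $h(x) = (\log x)^{s_2/d}$ and obtain $\log N(\varepsilon,\mathcal{X},d_V) \le C\varepsilon^{-d/s_2} - C\log(\varepsilon)$. Fix $\varepsilon > 0$ and choose the partition $\mathcal{X} = \cup_{m=0}^{N_L}\mathcal{X}_m$ as above. Let $x_m$ be the center of $\mathcal{X}_m$, $m \ge 1$, and let $x_0$ be any point in $\mathcal{X}_0$. Write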

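\[
\begin{aligned}
&\sup_{\varphi\in\Phi_j}\sup_{x\in\mathcal{X}_m} \left| \gamma_x(\varphi(w))q(x) - \gamma_{x_m}(\varphi_j(w))q(x_m) \right| \\
&\quad\le \sup_{\varphi\in\Phi_j}\sup_{x\in\mathcal{X}_m} \left| \gamma_x(\varphi(w))q(x) - \gamma_{x_m}(\varphi_j(w))q(x) \right| \\
&\qquad + \sup_{\varphi\in\Phi_j}\sup_{x\in\mathcal{X}_m} \left| \gamma_{x_m}(\varphi_j(w))q(x) - \gamma_{x_m}(\varphi_j(w))q(x_m) \right| = \Delta^{*}_{m,j}(w), \text{ say.}
\end{aligned}
\]

Then,

\[
\begin{aligned}
&E\left[ \sup_{\varphi\in\Phi_j}\sup_{x\in\mathcal{X}_m} \left| \gamma_x(\varphi(W)) - \gamma_{x_m}(\varphi_j(W)) \right|^{2} q(x)^{2} \,\middle|\, W_1 \right] \\
&\quad\le E\left[ \sup_{x\in\mathcal{X}_m} 1\{ x - \Delta_{1,j}(W_1) - |x - x_m| \le \varphi_j(W) \le x + \Delta_{1,j}(W_1) + |x - x_m| \}\, q(x)^{2} \,\middle|\, W_1 \right] \\
&\quad\le \sup_{x\in\mathcal{X}_0} q(x)^{2}\, 1\{m = 0\} + C\{\varepsilon + \Delta_{1,j}(W_1)\}\, 1\{m \ge 1\} \\
&\quad\le C\varepsilon^{2}\, 1\{m = 0\} + C\{\varepsilon + \Delta_{1,j}(W_1)\}\, 1\{m \ge 1\} \le C\varepsilon + C\Delta_{1,j}(W_1).
\end{aligned}
\qquad (19)
\]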

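The second inequality is obtained by splitting the supremum into the case $m = 0$ and the case $m \ge 1$. The third and fourth inequalities follow because $\sup_{x\in\mathcal{X}_0}|q(x)|^{2} \le \sup_{|x|>L(\varepsilon)}|x|^{2}|q(x)|^{2} \le \varepsilon^{2}/4$. The last inequality follows by subsuming $C\varepsilon^{2}$ into $C\varepsilon$. Similarly we can show that

\[
E\left[ \sup_{\varphi\in\Phi_j}\sup_{x\in\mathcal{X}_m} \left| \gamma_{x_m}(\varphi_j(W))q(x) - \gamma_{x_m}(\varphi_j(W))q(x_k) \right|^{2} \,\middle|\, W_1 \right] \le C|q(x) - q(x_k)|^{2} \le C\varepsilon^{2}.
\]

We conclude that $\|\Delta^{*}_{k,j}\|_{P,2} \le C\varepsilon^{1/2}$. Hence

\[
\sup_{P\in\mathcal{P}} \log N_{[]}(C\varepsilon^{1/2}, \mathcal{F}, L_p(P)) \le \log N_{[]}(\varepsilon, \Phi, L_p(P)) + \log N_{[]}(\varepsilon, \mathcal{X}, d_V) \le C\varepsilon^{-d/s_2} - C\log\varepsilon.
\]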

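Suppose $s > 1$. Then $(x - \varphi)^{s-1}\gamma_x(\varphi)q(x)$ is infinitely many times continuously differentiable in $\varphi$ with bounded derivatives. Choose $\varepsilon$-brackets $(\varphi_j, \Delta_{1,j})_{j=1}^{N_1}$ of $\Phi$ as before. Then for each $j$, we choose also $\varepsilon$-brackets $(h_{k,j}, \Delta_{2,k,j})_{k=1}^{N_2(j)}$ such that for all $h \in \mathcal{H}$, $|h - h_{k,j}| \le \Delta_{2,k,j}$ and $\int \Delta^{p}_{2,k,j}\,dQ_{j,P} \le \varepsilon^{p}$. Since $\mathcal{H}$ is monotone increasing and uniformly bounded, we can take $N_2(j) \le N_{[]}(\varepsilon, \mathcal{H}, L_p(Q_{j,P})) \le C/\varepsilon$ by Birman and Solomjak (1967). Now, observe that

\[
\begin{aligned}
\left| h(\varphi(w)) - h_{k,j}(\varphi_j(w)) \right| &\le \left| h(\varphi(w)) - h(\varphi_j(w)) \right| + \left| h(\varphi_j(w)) - h_{k,j}(\varphi_j(w)) \right| \\
&\le \left| h(\varphi_j(w) - \Delta_{1,j}(w)) - h(\varphi_j(w) + \Delta_{1,j}(w)) \right| + \Delta_{2,k,j}(\varphi_j(w)) \\
&\le C\Delta_{1,j}(w) + \Delta_{2,k,j}(\varphi_j(w)).
\end{aligned}
\]

The last inequality is due to the fact that $h$ is Lipschitz with a uniformly bounded coefficient. Take

\[
\Delta^{*}_{k,j}(w) = C\Delta_{1,j}(w) + \Delta_{2,k,j}(\varphi_j(w))
\]

and note that $\int (\Delta^{*}_{k,j})^{2}\,dP \le C\varepsilon$. Therefore, $\log N_{[]}(\varepsilon, \mathcal{F}, \|\cdot\|_{P,2}) \le \log N_{[]}(C\varepsilon, \Phi, \|\cdot\|_{P,2}) + C/\varepsilon$. Observe that the constants above do not depend on the choice of the measure $P$. By (18), we obtain the wanted results.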

Proof of Theorem 1: Consider the following empirical process

\[
v^{(s)}_{kN}(f) = \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N} \{ f(W_i) - E[f(W_i)] \}, \quad f \in \mathcal{F}, \qquad (20)
\]

where $\mathcal{F}$ is as defined in Lemma A2 above. Recall that $\mathcal{F}$ is uniformly bounded. Therefore, by Lemma A2 and $d/s_2 < 1$, $\mathcal{F}$ is asymptotically equicontinuous uniformly in $P \in \mathcal{P}$ and totally bounded for all $P \in \mathcal{P}$. By Proposition 3.1 and Theorem 2.3 of Giné and Zinn (1991) (see also Theorem 2.8.4 of van der Vaart and Wellner (1996)), the weak convergence of $v^{(s)}_{kN}(\cdot)$ in $l_\infty(\mathcal{F})$ uniform over $P \in \mathcal{P}$ immediately follows. Here $l_\infty(\mathcal{F})$ denotes the space of uniformly bounded real functions on $\mathcal{F}$.

Let $\mathcal{F}_N = \{f(\cdot;\theta,\tau) : (\theta,\tau) \in \Theta_N\times\mathcal{T}_N\}$. Let us consider the covariance kernel of the process $v^{(s)}_{kN}(f)$ in $f \in \mathcal{F}_N$. Choose $f_{1,N}(w) = f(w;\theta_{1,N},\tau_{1,N})$ and $f_{2,N}(w) = f(w;\theta_{2,N},\tau_{2,N})$, and let $f_{1,0}(w) = f(w;\theta_0,\tau_0)$ and $f_{2,0}(w) = f(w;\theta_0,\tau_0)$. Then,

\[
\begin{aligned}
&\left| Pf_{1,N}f_{2,N} - Pf_{1,N}Pf_{2,N} - \{ Pf_{1,0}f_{2,0} - Pf_{1,0}Pf_{2,0} \} \right| \\
&\quad\le C|P(f_{1,N} - f_{1,0})| + C|P(f_{2,N} - f_{2,0})| \\
&\quad\le C\{ \|\theta_{1,N} - \theta_0\| + \|\tau_{1,N} - \tau_{1,0}\|_{P,2} \} + C\{ \|\theta_{2,N} - \theta_0\| + \|\tau_{2,N} - \tau_{2,0}\|_{P,2} \} \to 0.
\end{aligned}
\]

The convergence is uniform over all $P \in \mathcal{P}$. Hence, the uniform weak convergence of $v^{(s)}_{kN}(\cdot)$ in $l_\infty(\mathcal{F}_N)$ to a Gaussian process follows by Theorem 2.11.23 of van der Vaart and Wellner (1996). The covariance kernel of the Gaussian process is given by $Pf_{1,0}f_{2,0} - Pf_{1,0}Pf_{2,0}$.

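Suppose that we are under the null hypothesis. Note that

\[
\begin{aligned}
&\sqrt{N}\bar D^{(s)}_k(x,\hat\theta,\hat\tau) - \frac{\sqrt{N}}{(s-1)!} E h^{(s)}_x(\varphi_k(W;\theta_0,\tau_0)) \\
&\quad= \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N} \left\{ h^{(s)}_x(\varphi_k(W_i;\hat\theta,\hat\tau)) - E\left[ h^{(s)}_x(\varphi_k(W_i;\hat\theta,\hat\tau)) \right] \right\} \\
&\qquad + \frac{\sqrt{N}}{(s-1)!} \left\{ E\left[ h^{(s)}_x(\varphi_k(W_i;\hat\theta,\hat\tau)) - h^{(s)}_x(\varphi_k(W_i;\theta_0,\tau_0)) \right] \right\} \\
&\quad= \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N} \left\{ h^{(s)}_x(\varphi_k(W_i;\theta_0,\tau_0)) - E\left[ h^{(s)}_x(\varphi_k(W;\theta_0,\tau_0)) \right] \right\} \\
&\qquad + \frac{\sqrt{N}}{(s-1)!}\Gamma_{k,P}(x)[\hat\theta - \theta_0, \hat\tau - \tau_0] + o_P(1)
\end{aligned}
\]

uniformly over $P \in \mathcal{P}$. Hence we write

\[
\begin{aligned}
&\sqrt{N}\bar D^{(s)}_{kl}(x,\hat\theta,\hat\tau) - \frac{\sqrt{N}}{(s-1)!} E h^{\Delta}_{x,kl}(W) \\
&\quad= \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N} \left\{ h^{\Delta}_{x,kl}(W_i) + \psi^{\Delta}_{x,kl}(W_i) - E\left[ h^{\Delta}_{x,kl}(W) + \psi^{\Delta}_{x,kl}(W) \right] \right\} + o_P(1).
\end{aligned}
\]

Under $P \in \mathcal{P}_0$, let $\delta_{kl}(x) = E[h^{\Delta}_{x,kl}(W)]$. Therefore,

\[
\begin{aligned}
&\sqrt{N}\bar D^{(s)}_{kl}(x,\hat\theta,\hat\tau) - \frac{\sqrt{N}\,\delta_{kl}(x)}{(s-1)!} \\
&\quad= \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N} \left\{ h^{\Delta}_{x,kl}(W_i) + \psi^{\Delta}_{x,kl}(W_i) - E\left[ h^{\Delta}_{x,kl}(W_i) + \psi^{\Delta}_{x,kl}(W_i) \right] \right\} + o_P(1).
\end{aligned}
\]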

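Let $\Psi_{kl} = \{\psi^{\Delta}_{x,kl} : x \in \mathcal{X}\}$ and $\mathcal{H}_{kl} = \{h^{\Delta}_{x,kl} : x \in \mathcal{X}\}$. Consider the class of functions $\mathcal{F}_{kl} = \{h + \psi : (h,\psi) \in \mathcal{H}_{kl}\times\Psi_{kl}\}$. By Lemma A2 above and Theorem 6 of Andrews (1994), we have $\sup_{P\in\mathcal{P}}\log N_{[]}(\varepsilon, \mathcal{H}_{kl}, \|\cdot\|_{P,2}) < C\varepsilon^{-((2d/s_2)\vee 1)}$ and by Assumption 1(iv), $\sup_{P\in\mathcal{P}}\log N_{[]}(\varepsilon, \Psi_{kl}, \|\cdot\|_{P,2}) < C\varepsilon^{-d}$. Note that $\mathcal{H}_{kl}$ is uniformly bounded and $\Psi_{kl}$ has an envelope $2\bar\psi$ such that $\|2\bar\psi\|_{P,2+\delta} < C$. Therefore, $\mathcal{F}_{kl}$ is a $P$-Donsker class. The computation of the covariance kernel of the limiting Gaussian process is straightforward. Therefore,

\[
\sqrt{N}\bar D^{(s)}_{kl}(\cdot,\hat\theta,\hat\tau) - \frac{\sqrt{N}\,\delta_{kl}(\cdot)}{(s-1)!} \Longrightarrow \nu^{(s)}_{kl},
\]

uniformly in $P \in \mathcal{P}$. This yields the limit results both for the Kolmogorov-Smirnov type test and the Cramér-von Mises type test.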

Let $\mathcal{F}$ be as defined in Lemma A2 above. Define the bootstrap process

\[
\nu^{*(s)}_{k,b}(x) = \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N} \left\{ h^{(s)}_x(\varphi_k(W^{*}_{i,b};\hat\theta^{*}_b,\hat\tau^{*}_b)) - E_N\left[ h^{(s)}_x(\varphi_k(W_i;\hat\theta,\hat\tau)) \right] \right\}, \quad x \in \mathcal{X},
\]

where $W^{*}_i = (Y^{*}_i, Z_i)$. The following lemma is a key step for the bootstrap consistency of the test (Theorem 2). In the following, we use the usual stochastic convergence notation $o_{P^{*}}$ and $O_{P^{*}}$ that is with respect to the conditional distribution given $\mathcal{G}_N$.

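Lemma A3 : Under the assumptions of Theorem 2,

$$
\nu_{k,b}^{*(s)}(x) = \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N}\left\{ h_x^{(s)}(\varepsilon_{ki,b}^{*}(\theta_0,\tau_0)) - E_N\left[h_x^{(s)}(\varepsilon_{ki,b}^{*}(\theta_0,\tau_0))\right]\right\}
+ \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N}\psi_{x,P}(\varepsilon_{ib}^{*}(\theta_0,\tau_0)) + o_{P^*}(1)
$$

in $P$ uniformly in $P \in \mathcal{P}$. Furthermore, let $\mathcal{F}_N$ be the class defined in the proof of Theorem 1. Then $\nu_{kl,b}^{*(s)} \to \nu_{kl}^{(s)}$ weakly in $l^{\infty}(\mathcal{F})$ in $P$ uniformly in $P \in \mathcal{P}$.¹⁰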

Proof of Lemma A3 : Observe that

$$
X_{ki,b}^{*}(\theta,\tau,\tilde\theta,\tilde\tau) = g(Z_{ki},\tilde\theta,\tilde\tau) - g(Z_{ki},\theta,\tau) + \varepsilon_{ki,b}^{*}(\tilde\theta,\tilde\tau).
$$

Then, we are interested in the weak convergence of the following bootstrap empirical process

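$$
\begin{aligned}
&\frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N}\left\{ h_x^{(s)}(X_{ki,b}^{*}(\hat\theta,\hat\tau,\hat\theta_b^{*},\hat\tau_b^{*})) - E_N\left[h_x^{(s)}(X_{ki,b}^{*}(\hat\theta,\hat\tau,\hat\theta,\hat\tau))\right]\right\} \\
&\quad= \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N}\left\{ h_x^{(s)}(X_{ki,b}^{*}(\hat\theta,\hat\tau,\hat\theta_b^{*},\hat\tau_b^{*})) - h_x^{(s)}(X_{ki,b}^{*}(\hat\theta,\hat\tau,\hat\theta,\hat\tau))\right\} \\
&\qquad+ \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N}\left\{ h_x^{(s)}(X_{ki,b}^{*}(\hat\theta,\hat\tau,\hat\theta,\hat\tau)) - E_N\left[h_x^{(s)}(X_{ki,b}^{*}(\hat\theta,\hat\tau,\hat\theta,\hat\tau))\right]\right\}.
\end{aligned}
\tag{21}
$$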

We consider the bootstrap empirical process

$$
\nu_1^{*}(x,\theta,\tau) = \frac{1}{\sqrt{N}(s-1)!}\sum_{i=1}^{N}\left\{ h_x^{(s)}(\varepsilon_{ki,b}^{*}(\theta,\tau)) - E_N\left[h_x^{(s)}(\varepsilon_{ki,b}^{*}(\theta,\tau))\right]\right\}.
$$

We can view the process as indexed by $h_x^{(s)} \circ \varphi(\cdot;\theta,\tau) \in \mathcal{F}$ and as an $l^{\infty}(\mathcal{F})$-valued random (potentially nonmeasurable) element, where $\mathcal{F}$ is the class defined in Lemma A2. By Proposition 3.1 of Giné and Zinn (1991), Lemma A2 implies that $\mathcal{F}$ is finitely uniformly pregaussian. Theorem 2.3 of Giné and Zinn (1991) combined with Proposition B1 below gives the central limit theorem for the bootstrap empirical process $\nu_1^{*}$, and so the law of the process converges weakly to the law of a centered Gaussian process $\nu_1$ on $\mathcal{X}$ conditionally on $\mathcal{G}_N$ in $P$ uniformly in $P \in \mathcal{P}$.

10 For the definition of the bootstrap uniform weak convergence, see Section 7.2 below.

The Gaussian process $\nu_1$ has a covariance kernel

$$
\mathrm{Cov}_P\left(h^{\Delta}_{x_1,kl}(W;\theta_1,\tau_1),\, h^{\Delta}_{x_2,kl}(W;\theta_2,\tau_2)\right),
$$

where $h^{\Delta}_{x,kl}(W;\theta,\tau) = h_x^{(s)}(\varphi_k(W;\theta,\tau)) - h_x^{(s)}(\varphi_l(W;\theta,\tau))$. The asymptotic equicontinuity associated with this bootstrap uniform CLT implies that

$$
\sup_{x}\left|\nu_1^{*}(x,\hat\theta,\hat\tau) - \nu_1^{*}(x,\theta_0,\tau_0)\right| = o_{P^*}(1) \text{ in } P,
$$

uniformly in $P$. We turn to the term in the second line of (21) and write it as

$$
\frac{\sqrt{N}}{(s-1)!}\left\{ E_N h_x^{(s)}(X_{ki,b}^{*}(\hat\theta,\hat\tau,\hat\theta_b^{*},\hat\tau_b^{*})) - E_N h_x^{(s)}(X_{ki,b}^{*}(\hat\theta,\hat\tau,\hat\theta,\hat\tau))\right\}.
\tag{22}
$$

By Assumptions 1(iv) and 4(ii), we deduce that the process in (22) is asymptotically equivalent to

$$
\frac{1}{\sqrt{N}}\sum_{i=1}^{N}\psi_{x,k,P}(W_{i,b}^{*}) + o_{P^*}(c_N) \text{ in } P,
$$

uniformly in $P \in \mathcal{P}$. Recall that $W_{i,b}^{*} = \left([Y_{ki} - g(Z_{ki},\hat\theta,\hat\tau)]^{*} + g(Z_{ki},\hat\theta,\hat\tau),\, Z_{ki}\right)_{k=1}^{K}$, where $[\cdot]^{*}$ is the part obtained through the wild-bootstrap procedure. The above process is a bootstrap empirical process that is already centered. The desired result follows from the asymptotic equicontinuity condition, Assumption 3(i), and the bootstrap CLT in Proposition B1.
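As an illustration of the recentered bootstrap empirical process $\nu_1^{*}$ at a fixed parameter value, here is a minimal sketch for $s = 1$. Several choices are assumptions rather than the paper's procedure: the function name is hypothetical, the wild bootstrap is implemented with Rademacher multipliers applied to given residuals, and the re-estimation term handled through (22) is not included. With Rademacher signs the conditional mean $E_N[\cdot]$ can be computed exactly, so no simulation is needed for the centering.

```python
import numpy as np

def wild_bootstrap_process(residuals, grid, n_boot=200, seed=0):
    """Draws of sqrt(N) * (bootstrap empirical CDF - its conditional mean).

    Assumes eps*_i = v_i * eps_i with Rademacher v_i (an illustrative choice).
    Returns an array of shape (n_boot, len(grid)).
    """
    rng = np.random.default_rng(seed)
    eps = np.asarray(residuals, dtype=float)
    grid = np.asarray(grid, dtype=float)
    n = eps.size
    # E_N[1{eps* <= x}] given the data: average over the two equally likely signs.
    ind_pos = (eps[None, :] <= grid[:, None]).mean(axis=1)
    ind_neg = (-eps[None, :] <= grid[:, None]).mean(axis=1)
    cond_mean = 0.5 * (ind_pos + ind_neg)
    draws = np.empty((n_boot, grid.size))
    for b in range(n_boot):
        signs = rng.choice([-1.0, 1.0], size=n)
        eps_star = signs * eps
        emp = (eps_star[None, :] <= grid[:, None]).mean(axis=1)
        draws[b] = np.sqrt(n) * (emp - cond_mean)
    return draws
```

Each row is one bootstrap path on the grid; by construction every path has conditional mean zero, which corresponds to the remark that the process is already centered.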

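Proof of Theorem 2 : (i) Let $B_{kl}^{(s)} = \{x : D_{kl}^{(s)}(x) = 0\}$. Denote $[B_{kl}^{(s)}]^{\varepsilon} = \{x : q(x)|D_{kl}^{(s)}(x)| < \varepsilon\}$. We will show the following at the end of the proof:

$$
P\left\{ B_{kl}^{(s)} \subset \hat B_{kl}^{(s)} \subset [B_{kl}^{(s)}]^{2c_N} \right\} \to 1, \text{ uniformly in } P \in \mathcal{P}.
\tag{23}
$$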

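Note that $[B_{kl}]^{2c_N} \subset \mathcal{X}$ for all sufficiently large $N$ by Assumption 1(i)(b). Let $\bar\nu_{kl,b}^{*}(x) = \sqrt{N}\,\bar D_{kl,b}^{(s)*}(x)$ and define $1_K = 1\{B_{kl} \neq \emptyset \text{ for all } k \neq l\}$ and $1_K^{2c_N} = 1\{[B_{kl}]^{2c_N} \neq \emptyset \text{ for all } k \neq l\}$. Also define $\hat 1_K = 1\{\hat B_{kl} \neq \emptyset \text{ for all } k \neq l\}$ and $\hat 1_K^{2c_N} = 1\{[\hat B_{kl}]^{2c_N} \neq \emptyset \text{ for all } k \neq l\}$. Since $P\{B_{kl}^{(s)} \subset \hat B_{kl}^{(s)} \subset [B_{kl}^{(s)}]^{2c_N}\} \to 1$ by (23) and $c_N \to 0$, it follows that with probability (uniformly over $P \in \mathcal{P}$) approaching one,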

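$$
\begin{aligned}
&\sup_{x \in B_{kl}} \bar\nu_{kl,b}^{*}(x)\,1_K + \pi_N\left(1 - 1_K^{2c_N}\right) \\
&\quad\le \sup_{x \in \hat B_{kl}} \bar\nu_{kl,b}^{*}(x)\,\hat 1_K + \pi_N\left(1 - \hat 1_K\right) \\
&\quad\le \sup_{x \in [B_{kl}]^{2c_N}} \bar\nu_{kl,b}^{*}(x)\,1_K^{2c_N} + \pi_N\left(1 - 1_K\right).
\end{aligned}
\tag{24}
$$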
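The middle term of (24) is the quantity that is actually computable from data, and a minimal sketch of it on a grid may help. The sketch makes assumptions that are not stated in this section: the function names are hypothetical, the estimated contact set is taken to be $\{x : q(x)|\bar D_{kl}(x)| < c_N\}$ by analogy with the definition of $[B_{kl}]^{\varepsilon}$, a single pair $(k, l)$ is considered, and $\pi_N$ is passed in as a user-supplied fallback value because its definition lies outside this section.

```python
import numpy as np

def estimate_contact_set(d_bar, c_n, q=None):
    """Boolean mask of grid points with q(x) * |D_bar(x)| < c_N.

    This thresholding rule is an assumed form of the contact set estimator.
    """
    d_bar = np.asarray(d_bar, dtype=float)
    q = np.ones_like(d_bar) if q is None else np.asarray(q, dtype=float)
    return q * np.abs(d_bar) < c_n

def bootstrap_sup_on_contact_set(nu_star, mask, pi_n):
    """sup of the bootstrap process over the estimated set, or pi_N if it is empty."""
    nu_star = np.asarray(nu_star, dtype=float)
    mask = np.asarray(mask, dtype=bool)
    if mask.any():
        return float(nu_star[mask].max())
    return float(pi_n)
```

Restricting the supremum to the estimated contact set, rather than taking it over the whole support, is what the sandwich in (24) controls from both sides.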

We can apply the weak convergence of the process ν̄ ∗kl,b in Lemma A3 so that for each c > 0,

$$
P\left\{ \sup_{h}\left| E\left[h(\bar\nu_{kl,b}^{*}) \mid \mathcal{G}_N\right] - E h(\nu_{kl}^{(s)}) \right| > c, \text{ i.o.} \right\} \to 0,
$$

where the supremum is over the space of bounded linear functionals $h$ on $l^{\infty}(\mathcal{F})$ and the convergence is uniform over $P \in \mathcal{P}$ (Corollary 2.7 of Giné and Zinn (1991); see also Giné (1997)). Furthermore, since the class of functions indexing the bootstrap empirical process $\bar\nu_{kl,b}^{*}$ is uniformly pregaussian (as implied by the bracketing entropy conditions in Lemma A2 and Proposition 3.1 of Giné and Zinn (1991)), the process $\nu$ satisfies

$$
\lim_{\varepsilon \to 0}\, \sup_{P \in \mathcal{P}} E_P \sup_{f_1, f_2 \in \mathcal{F}:\, \|f_1 - f_2\|_{P,2} < \varepsilon} |\nu(f_1) - \nu(f_2)| = 0.
\tag{25}
$$

(See Definition 2.4 of Giné and Zinn (1991).)

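Suppose that for all $k, l$, $B_{kl} \neq \emptyset$, so that $P \in \mathcal{P}_{00}^{KS}$. From (24), with probability approaching one,

$$
\sup_{x \in B_{kl}} \bar\nu_{kl,b}^{*}(x) \le \sup_{x \in \hat B_{kl}} \bar\nu_{kl,b}^{*}(x)\,\hat 1_K + \pi_N\left(1 - \hat 1_K\right) \le \sup_{x \in [B_{kl}]^{2c_N}} \bar\nu_{kl,b}^{*}(x).
$$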

Therefore, we deduce that with probability approaching one,

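$$
\begin{aligned}
&\left| \sup_{x \in \hat B_{kl}} \bar\nu_{kl,b}^{*}(x)\,\hat 1_K + \pi_N\left(1 - \hat 1_K\right) - \sup_{x \in B_{kl}} \bar\nu_{kl,b}^{*}(x) \right| \\
&\quad\le \sup_{x \in [B_{kl}]^{2c_N}} \bar\nu_{kl,b}^{*}(x) - \sup_{x \in B_{kl}} \bar\nu_{kl,b}^{*}(x) \\
&\quad\le \sup_{x \in \mathcal{X}:\, d_{\tilde V}(x,x') \le 2c_N} \left| \bar\nu_{kl,b}^{*}(x) - \bar\nu_{kl,b}^{*}(x') \right|.
\end{aligned}
\tag{26}
$$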

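Given samples $\{W_i\}_{i=1}^{N}$, we define a random metric:

$$
\hat d_{kl}(x,x') = \left( \frac{1}{N}\sum_{i=1}^{N} \left| \left\{h_x^{(s)}(\hat X_{ki}) - h_x^{(s)}(\hat X_{li})\right\} - \left\{h_{x'}^{(s)}(\hat X_{ki}) - h_{x'}^{(s)}(\hat X_{li})\right\} \right|^{2} \right)^{1/2}.
$$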
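The random metric is an empirical $L_2$ distance between the difference functions indexed by $x$ and $x'$, and it can be computed directly. The following is a minimal sketch under assumptions that are not taken from this section: the function names are hypothetical, and $h_x^{(s)}(\varphi)$ is taken to be $(x-\varphi)^{s-1}1\{\varphi \le x\}$.

```python
import numpy as np

def h_s(x, values, s=1):
    """Assumed form of h_x^{(s)}: (x - v)^(s-1) 1{v <= x}, evaluated elementwise."""
    diff = x - np.asarray(values, dtype=float)
    return np.where(diff >= 0, diff ** (s - 1), 0.0)

def random_metric(x, x_prime, xhat_k, xhat_l, s=1):
    """Empirical L2 distance between the k-l difference functions at x and x'."""
    delta_x = h_s(x, xhat_k, s) - h_s(x, xhat_l, s)
    delta_xp = h_s(x_prime, xhat_k, s) - h_s(x_prime, xhat_l, s)
    return float(np.sqrt(np.mean((delta_x - delta_xp) ** 2)))
```

For example, with `xhat_k = [0, 1]`, `xhat_l = [2, 3]`, and `s = 1`, the distance between `x = 1.5` and `x' = 0.5` is $\sqrt{1/2}$, since the two difference functions disagree at exactly one of the two observations.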

From the proof of Theorem 1, the class $\mathcal{F}$ is uniformly Donsker, and hence it follows that

$$
\sup_{d_{\tilde V}(x,x') < \delta} \hat d_{kl}(x,x') = \sup_{d_{\tilde V}(x,x') < \delta} d_{kl}(x,x') + O_P(N^{-1/2})
$$

(s)

(s)