

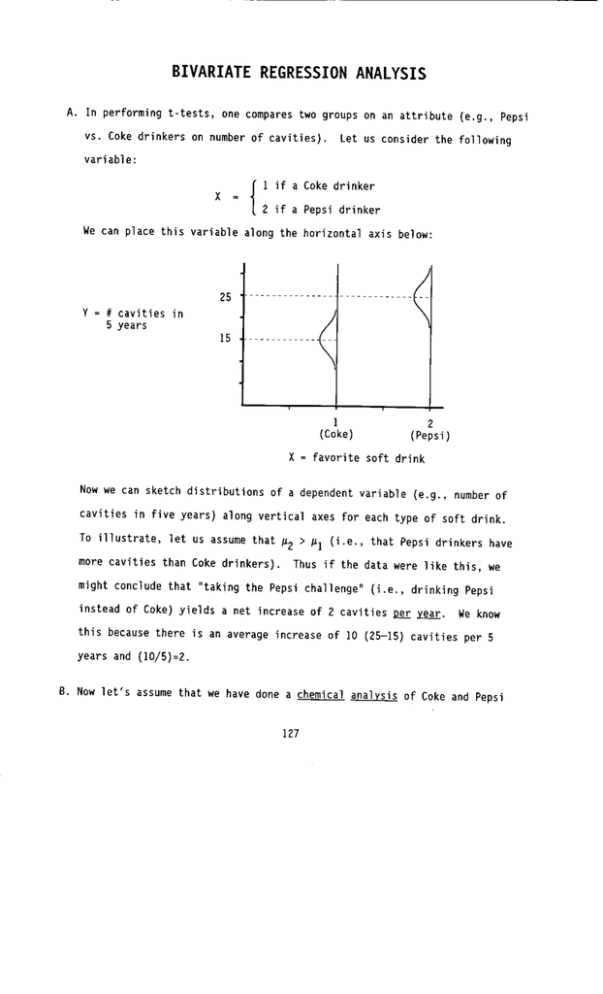

lost when the mean of EACH variable is calculated.

b. A correlation of ±.35 is not very likely to occur by chance! (In this sample of only 30 cases it was almost sufficiently large to reject $H_0$.) Thus if one variable explains (linearly) about 15% of the variance in another, a sample of around 30 will likely be large enough to detect it. This should give you some intuitive "feel" for the relative strength of |r| = .35.

W. Finding a 95% confidence interval for r

RECALL that $\sigma_r^2$ depends on $1 - \rho^2$. Thus when $|\rho|$ is small, you would have a larger variance than when $|\rho|$ is large! As a result, your confidence interval must be "lopsided," with a larger deviation from r toward zero and a smaller deviation from r toward ±1. To estimate these deviations we use Table E, which can be found at the end of the Syllabus. Here are THE MECHANICS:

1. Table E provides a transformation (i.e., a "mapping") of r to a variable with a normal distribution, a distribution unrelated to $\rho$'s magnitude. Specifically,

   $T(r) = \frac{1}{2}\ln\frac{1+r}{1-r}$, which is approximately normal with variance $\frac{1}{n-3}$.

2. In the knee brace problem we have r = .35 and n = 30, thus the 95% confidence interval is

   $T(.35) \pm 1.96\sqrt{\frac{1}{27}}$

3. From Table E we find that T(.35) = .3654. Thus the confidence interval is

   $.3654 \pm .377$, or $(-.012, .743)$.

4. BUT this is a confidence interval around T(r)! So we must convert these two values back to r's. This yields $T^{-1}(-.012) = -.012$ and $T^{-1}(.743) = .631$. Thus the 95% confidence interval for r = .35 is (-.012, .631).

5. Notice how these confidence bounds look on a number line:

   [Figure: number line marking the bounds -.012 and .631 around r = .35]

   In particular, notice that the bounds of the interval are "lopsided." That is, note that |.35 - (-.012)| = .362 > .281 = |.35 - .631|.

X. The distribution of $\hat{a}$ when $\bar{X} = 0$

1. Let $\hat{a}_c$ equal the constant in a bivariate regression equation in which the mean on the independent variable equals zero. When X is transformed by subtracting out its mean (i.e., when $\bar{X}_c = 0$), then $\hat{a}_c = \bar{Y}$ and thus $\hat{a}_c$ is an estimate of $\mu_Y$.

   NOTE: Variables are "centered" if their means equal zero. The "c" subscripts on $\hat{a}_c$ and $X_c$ are reminders that $\bar{X}_c = 0$.

2. The standard error of $\hat{a}_c$ is usually much smaller than that associated

[...]

4. We can now add to this graph the 95% prediction interval around the prediction of 999 robberies in LA next year: 999 ± 781, OR (218 to 1780). This is a VERY wide prediction interval!

5. Finally, note that the smallest prediction interval is at $X_p = \bar{X}$: 9 ± 3.94, OR (5.06, 12.94), OR from 5 to 13 robberies.

AB. CONCLUSIONS:

a. The further the basis of your prediction (i.e., $X_p$) is from $\bar{X}$ AND
b. the smaller the sample size AND
c. the smaller the estimated population variance of X,

THE LESS PRECISE YOUR PREDICTION will be. These conclusions follow as a direct consequence of the formula for a prediction interval:

   $\hat{Y}_p \pm t_{\alpha/2}\,\hat{\sigma}_e\sqrt{1 + \frac{1}{n} + \frac{(X_p - \bar{X})^2}{(n-1)\hat{\sigma}_X^2}}$

NOTE: A critical value of t (rather than Z) will be needed in computing both confidence and prediction intervals whenever n - k - 1 < 30, where k = the number of independent variables in the regression equation.
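The three conclusions about prediction precision can be checked numerically. The following is a minimal sketch, assuming the standard bivariate prediction-interval half-width $t \cdot \hat{\sigma}_e \sqrt{1 + 1/n + (X_p - \bar{X})^2 / ((n-1)\hat{\sigma}_X^2)}$. The function name `prediction_half_width` and all input values are hypothetical illustrations, not figures from the robbery example, and the critical value of t is passed in as if read from a table.

```python
import math


def prediction_half_width(t_crit, s_e, n, x_p, x_bar, var_x):
    """Half-width of a prediction interval for a new Y at X = x_p.

    t_crit : critical value of t (assumed to be looked up in a table)
    s_e    : estimated standard deviation of the regression errors
    n      : sample size
    x_p    : value of X on which the prediction is based
    x_bar  : sample mean of X
    var_x  : estimated population variance of X (divisor n - 1)
    """
    sum_sq_x = (n - 1) * var_x  # sum of squared deviations of X about its mean
    return t_crit * s_e * math.sqrt(1.0 + 1.0 / n + (x_p - x_bar) ** 2 / sum_sq_x)


# Hypothetical baseline values, chosen only for illustration.
base = dict(t_crit=2.0, s_e=1.5, n=25, x_p=12.0, x_bar=10.0, var_x=4.0)

print("baseline:            ", prediction_half_width(**base))
print("x_p further from mean:", prediction_half_width(**{**base, "x_p": 20.0}))
print("smaller sample:       ", prediction_half_width(**{**base, "n": 10}))
print("smaller variance of X:", prediction_half_width(**{**base, "var_x": 1.0}))
```

Each of the last three calls changes one input in the direction named in conclusions a, b, and c, and each should print a half-width larger than the baseline. In the sample-size comparison the critical value is held fixed for simplicity; in practice a smaller n also raises the critical value of t, which widens the interval further.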
In the bivariate case (k = 1), the number of degrees of freedom for t is thus n - 2.

Stat 404
Assumptions Underlying Regression Analysis (continued)

A. Assumption 5: The design matrix, X, is fixed, or measured without error.

1. A researcher "fixes" values on an independent variable when subjects are assigned to particular groups (e.g., as in a psychological experiment), or when "fixed" numbers of subjects are sampled within strata (e.g., 50 males and 50 females).

2. When X has been fixed, one speaks of its variables as having "fixed effects." If the values of the independent variables in X are the unforeseen results of randomly sampled cases, these variables are said to have "random effects" on the dependent variable. Even though you may not have fixed X, it is important to be aware that you are assuming X to be fixed (or measured without error) when you do regression analysis.

3. When a researcher has less control over the values of the independent variables (i.e., when their effects are random ones), she must assume that these variables are measured without error. If one thinks of $e_x$ as the total influences that lead to inaccurate measurement of the independent variable, x, one might depict this assumption as follows:

   [Diagram: $e_x$ --> x, with this path equal to 0]

4. Note that we shall NOT deal with issues of measurement error in Stat 404.

B. Assumption 6: X is not correlated with errors in the measurement of Y.

1. Put differently, this assumption suggests that variances in X and Y are not related to any third effect (e.g., time) such that they covary due to this third effect. A depiction of this assumption for a particular independent variable, x, would be as follows:

   [Diagram: x --> Y <-- $e_Y$, with the association between x and $e_Y$ equal to 0]

2. For our purposes the matrix, Y, is simply a vector of values for a single dependent variable, y. Thus, $e_y$ is simply the error terms (i.e., the $e_i$) from the regression of y on x.

3. What does it mean to say that the $x_i$ are uncorrelated with the $e_i$?

   a. To answer this question, let's consider a theory of cognitive development according to which children learn self-confidence by following the example of (or by "modeling") self-confident parents.

   b. You assemble data to test this theory. However, only after collecting your data do you realize (say, after spending a bit more time perusing other relevant literature) that self-confidence is also associated with physical stature. The taller the child, the more self-confident she is. Thus a child's self-confidence has both psychological and physiological origins. Given that physiology is genetically inherited, we might sketch out the relations among parents' and child's stature and self-confidence as follows:

      [Diagram: Parents' physical stature --> Child's physical stature; Parents' physical stature --> Parents' self-confidence; Child's physical stature --> Child's self-confidence; Parents' self-confidence --> Child's self-confidence]

   c. Unfortunately, you have no data on parents' or child's physiology, so you are left with a causal model of the following form:

      [Diagram: Parents' self-confidence (x) --> Child's self-confidence (Y) <-- Other causes ($e_Y$), with the association between x and $e_Y$ not equal to 0]

   d. Since here child's physical stature is among the "other causes" excluded from the model and since this stature and parents' self-confidence have a common origin in parents' physical stature, you could NOT assume that parents' self-confidence is uncorrelated with the errors from a regression of child's self-confidence on parents' self-confidence.

C. Assumption 7: No independent variable (i.e., no column in the design matrix, X) may have all of its variance explained by any subset of the remaining independent variables. The assumption can be put mathematically as follows:

   $R_{x_i \cdot x_1 \ldots x_{i-1} x_{i+1} \ldots x_k} < 1, \quad \forall i$

We shall soon discover that when this assumption is not met, your statistics program will be unable to calculate any regression coefficients.
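Why a violation of Assumption 7 blocks the calculation can be seen in a small numerical case. The following is a minimal sketch, assuming the usual least-squares solution, which requires inverting X'X. The data and the helper names `gram_matrix` and `determinant` are hypothetical. Exact fractions are used so that a determinant of zero is not blurred by rounding.

```python
from fractions import Fraction


def gram_matrix(X):
    """Return X'X for a design matrix X given as a list of rows."""
    k = len(X[0])
    return [[sum(Fraction(row[i]) * Fraction(row[j]) for row in X)
             for j in range(k)]
            for i in range(k)]


def determinant(M):
    """Determinant by Gaussian elimination, using exact Fraction arithmetic."""
    A = [row[:] for row in M]
    n = len(A)
    det = Fraction(1)
    for col in range(n):
        # Find a row at or below the diagonal with a nonzero entry in this column.
        pivot = next((r for r in range(col, n) if A[r][col] != 0), None)
        if pivot is None:
            return Fraction(0)  # no pivot available: the matrix is singular
        if pivot != col:
            A[col], A[pivot] = A[pivot], A[col]
            det = -det  # a row swap flips the sign of the determinant
        det *= A[col][col]
        for r in range(col + 1, n):
            factor = A[r][col] / A[col][col]
            for c in range(col, n):
                A[r][c] -= factor * A[col][c]
    return det


# Hypothetical data: each row is (constant, x1, x2) or (constant, x1, x2, x3).
x1 = [1, 2, 3, 4, 5]
x2 = [2, 1, 4, 3, 6]
X_ok = [[1, a, b] for a, b in zip(x1, x2)]
X_bad = [[1, a, b, a + b] for a, b in zip(x1, x2)]  # x3 = x1 + x2 exactly

print("det(X'X), assumption met:     ", determinant(gram_matrix(X_ok)))
print("det(X'X), assumption violated:", determinant(gram_matrix(X_bad)))
```

In the second design matrix x3 is fully explained by x1 and x2, so its multiple correlation with them equals 1. The determinant of X'X is then exactly zero, X'X has no inverse, and no unique set of regression coefficients exists.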