Document 11163451

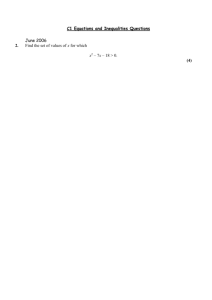
