Published By Science Journal Publication

Science Journal of Medicine & Clinical Trial

ISSN: 2276-7487

http://www.sjpub.org/sjmct.html

© Author(s) 2013. CC Attribution 3.0 License.

Research Article

doi: 10.7237/sjmct/248

Hot Deck Propensity Score Imputation For Missing Values

Dr. Benjamin Mayer

Institute of Epidemiology and Medical Biometry, Ulm University, Schwabstr. 13, 89075 Ulm, Germany.

e-mail: benjamin.mayer@uni-ulm.de, Phone: +49-731-5026896, Fax: +49-731-5026902

Accepted 22nd May, 2013

ABSTRACT

The adequate handling of missing data in medical research still

constitutes a major problem of statistical data analysis. Although

different imputation strategies and methods have been

developed in recent years, none of them can be used

unhesitatingly in arbitrary missing data situations. A commonly

observed drawback of the established imputation methods is

their sensitivity to the number of missing values in the

analysis set, the underlying missing data mechanism and scale

level of the incomplete variable. The extent of this sensitivity is

indeed different for individual methods. A novel approach for the

imputation of missing data was proposed here which, in particular,

does not depend on the scale level of the variable that is affected

by missing values. This approach in a multiple imputation setting

was based on the calculation of propensity scores and their usage

in creating adequate imputation values, resting upon a Hot Deck

principle. In the course of a real-data based simulation study, the

proposed method was compared to a standard multiple

imputation approach and a complete case analysis strategy. The

results showed that the quality of the imputation also

depended on the assumed proportion of missing data.

However, the developed imputation method generated

consistent estimations of the width of confidence intervals and

could be applied to different kinds of incomplete variables

straightforwardly, which is generally not as easy with other imputation

approaches.

KEYWORDS: Hot deck imputation; Missing values; Multiple

Imputation; Propensity Score.

1.0 INTRODUCTION

In medical research, the adequate handling of

missing values still constitutes a major problem.

Despite extensive methodological research on this

topic, there is still no uniform solution for the

handling and imputation of missing data. This

circumstance is also reflected in relevant guidelines

for clinical trials, which by now summarize the

problematic aspects of missing values and present

different approaches for their imputation.

However, it has not been possible to

formulate uniform advice so far. Hence, it is all the

more important to improve the existing methods

and to develop novel approaches for imputing

missing data. Within the latter, it is worthwhile to

investigate a preferably wide range of missing data

“situations”, which arise from different missing data

mechanisms and different types of variables which

are affected by missing values.

The problems that arise when some values of a dataset cannot be

observed are substantial. First of all, each missing

value stands for a loss of information. The

generalization of study results is therefore limited

to a certain extent, depending on the amount of

missing items in the dataset on the one hand and

which variables are affected on the other hand. Just

ignoring those subjects with missing information

for one or more analysis variables may lead to a

considerable loss of statistical power, especially

when the number of analysis variables and thus the

probability for a missing observation is high. This

so-called complete case analysis (CCA) strategy only

leads to convincing results if the missing data arise

independently of the underlying study conditions.

The most serious problem with missing values is

biased parameter estimates, however. Missing

values may destroy a generated structural equality

between comparison groups, for example, with the

result that an investigated treatment effect includes

some bias. With respect to the stated problems of

data analysis and interpretation, a methodological

examination of alternative approaches to handle

the missing data problem, apart from merely ignoring

it, is necessary.

There is a well established and accepted missing

data theory which can largely be traced back

to Allison (2001), Little (2002) and Rubin (1987).

According to this theory, the occurrence of missing

data can be divided into three so called missing

data mechanisms, which describe the relation

between observed and missing values. The term

“Missing Completely At Random” (MCAR)

characterizes a situation where the incomplete

cases with missing values are a random sample of

the whole dataset, so the occurrence of missing

data is independent of other variables in the

dataset. An example of MCAR would be a patient

who dies in the course of a clinical trial, where this

event is entirely independent of participation in

the trial. The second mechanism is called “Missing

At Random” (MAR) and describes the situation

where the occurrence of missing data can be

explained by other variables of the dataset which

are observed (e.g. patient fulfils exclusion criteria in

the course of a trial). If one has to assume that the

occurrence of missing values is additionally

related to unobserved variables, the missing data

mechanism is “Missing Not At Random” (MNAR).

One may think of a patient diagnosed with

depression who does not report about his actual

mental constitution because he is afraid of potential

consequences like inpatient treatment.

Numerous imputation methods have been

developed, although none of them is really

applicable in an MNAR situation. They can be

separated into either Single Imputation (SI) or

Multiple Imputation (MI) methods. The crucial

distinction between both strategies is that all SI

methods replace each missing value by one value,

whereas the MI methods replace each missing value

by m (m > 1) imputation values. The main

advantage of MI over SI is that the uncertainty of the

estimates is reflected more realistically, since the

imputation itself as an additional source of

variability is explicitly considered in the estimation

of the variance. Therefore, MI approaches are

considered to be state of the art today (Schafer and

Graham, 2002). An example of a basic SI approach

is imputation by the mean of the observed values.

Frequently used MI methods are the Markov Chain

Monte Carlo (MCMC) method and MI using

chained equations (White et al., 2011). However, as

already mentioned, there is still no uniform

solution for the handling and imputation of missing

data yet (European Medicines Agency, 2010).
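How MI takes the imputation into account as an additional source of variability can be illustrated with the standard pooling rules of Rubin (1987). The following is a minimal sketch and not part of the original analysis; the function name `pool_rubin` and all example numbers are hypothetical. It assumes that each of the m completed data sets yields one point estimate and its squared standard error. For hazard ratios, pooling would typically be done on the log scale.

```python
from statistics import mean, variance

def pool_rubin(estimates, variances):
    """Pool m point estimates and their squared standard errors (Rubin's rules)."""
    m = len(estimates)
    if m < 2 or m != len(variances):
        raise ValueError("need m > 1 estimates with matching variances")
    q_bar = mean(estimates)          # pooled point estimate
    within = mean(variances)         # average within-imputation variance
    between = variance(estimates)    # between-imputation variance (divisor m - 1)
    total = within + (1 + 1 / m) * between
    return q_bar, within, between, total

# Hypothetical log hazard ratios and squared standard errors from m = 5 imputations
log_hr = [-0.015, -0.014, -0.016, -0.013, -0.017]
se2 = [4e-6, 4e-6, 5e-6, 4e-6, 5e-6]
q_bar, within, between, total = pool_rubin(log_hr, se2)
print(q_bar, total)
```

The between-imputation term is exactly what a Single Imputation analysis leaves out, which is why its variance estimates tend to be too small.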

A popular method of the SI strategy is, in a simple

version, the so called Hot Deck imputation that

includes a variety of approaches. According to the

Hot Deck principle, a missing value of an

incomplete variable is imputed by an observed

value of the same variable, where the selection of

this observed value can be random or follow a

defined criterion. Often the imputation value for a

study participant is a random choice from a set of

observed values, where the participants show

similarities with respect to particular

characteristics. Different options to create this

so-called “donor pool” are summarized and discussed

in Andridge and Little (2010).

Defining subjects as similar may be a complex

procedure in general. The investigated medical

problem strongly determines the most relevant

factors for such a definition. From a methodological

point of view usually multivariate approaches are

required. For a descriptive example one may think

of observational studies where patients are not

randomized to the treatment arms. Covariables and

potential confounders possibly are not equally

distributed to the comparison groups, so respective

analysis methods are required to avoid biased

effect estimates. A well-known approach in such a

situation to make patients more comparable and to

get adjusted effect estimates is the propensity score

(PS). It describes the conditional probability for a

patient to be exposed (e.g. belonging to the

treatment group) under a given covariate structure.

In this way the partially extensive information on a

patient can be reduced to a scalar value. Patients

with the same profile of covariables will have the

same conditional probability to be exposed.

The PS can then be used, for example, to adjust the

effect estimator for potential confounding in the

course of a regression analysis or to conduct a PS-based matching of exposed to non-exposed

patients. If the exposure status is defined as

an indicator for the presence of missing values of an

analysis variable, then the PS can be used to assess

the similarity of patients with and without missing

values.

Within this paper, it is described how the two

concepts of propensity score estimation and Hot

Deck imputation can be combined to create a

flexible multiple imputation method. This approach

is called the Hot Deck Propensity Score (HDPS)

method. Related approaches are described in

Andridge and Little (2010), Mittinty and Chacko

(2004), Little (1986), Haziza and Beaumont (2007)

and Allison (2000). The procedure is not

illustrated in detail in Andridge and Little, while

Mittinty and Chacko provide a nearest neighbor

imputation based on PS in a Single Imputation

setting only. The papers of Little and of Haziza and

Beaumont likewise describe Single

Imputation approaches in which they use the

Propensity Score to create imputation classes,

which is not the scope of this article. Allison indeed

describes a PS-based Multiple Imputation

procedure which is called “approximate Bayesian

bootstrap”, but this approach also rests upon

How to Cite this Article:Dr. Benjamin Mayer “Hot Deck Propensity Score Imputation For Missing Values,” Science Journal of Medicine and Clinical Trials,

Volume 2013 (2013), Article ID sjmct-248, Issue 2, 18 Pages, doi: 10.7237/sjmct/248

imputation classes and not on a nearest neighbor

approach.

The paper is organized as follows. After a short

presentation of the example data set which was

used for the simulation study, some theoretical

aspects of Hot Deck imputation and the calculation

of propensity scores are given. The presentation of

the HDPS method is complemented by a

simulation study which has been conducted to

investigate the performance of the method with

respect to effect estimation for different missing

data situations. Respective simulation results for a

complete case analysis and a standard multiple

imputation approach are also given, with which the

HDPS results can be compared.

2.0 Data Example

The SPICE data set (Holubarsch et al., 2008) was

derived from a cohort study in the field of

cardiology. This clinical trial investigated the

efficacy and safety of Crataegus extract in patients

with congestive heart failure. In an extended

evaluation of the SPICE data (Mayer et al., 2013), a

multivariate Cox regression analysis was

performed to find risk factors for a cardiac event,

which was a composite endpoint of cardiac death,

non-fatal myocardial infarction and hospitalization

due to progressive heart failure.

This analysis was based on 2170 patients who had

complete values in all necessary variables for the

regression model. The mean age of the patients was

59.8 years (SD 10.5), with a higher proportion of male

patients (84%). The most relevant risk factors for

a cardiac event were the intake of

cardioactive medications (glycosides,

antiarrhythmics, nitrates, diuretics, beta blockers,

and calcium antagonists), the extent of clinical

parameters (left ventricular ejection fraction

(LVEF), New York Heart Association (NYHA)

functional class) and the age of the patients. The

hazard ratios for those variables were significantly

different from 1.

The results of the multivariate Cox regression

analysis are presented in more detail in Table 2.

Parts thereof are contrasted with the results of

the simulation study, which is described in the

following section 3, to capture the effect of a

missing value imputation via the proposed Hot

Deck Propensity Score method, and other strategies

to handle missing data.

3.0 Materials and Methods

Before the concepts of Hot Deck imputation,

propensity scores and a combination of both to

impute missing values are discussed, an

appropriate notation is introduced. This should

support a clear description of the algorithm for

HDPS imputation.

In the following, V=(V1,…,Vn)T denotes the

incomplete target variable whose missing

values have to be imputed, where n is the number

of subjects in the dataset. For a first view on the

method’s properties, it is assumed that only one

variable is affected by missing values. An

application of the proposed method for more than

one incomplete variable is then discussed within

section 5. Further, Xi=(Xi1,…,Xip) denotes the vector

of p completely observed variables for subject

i=1,…,n. Thus, X=(X1,…,Xn)T is the (n×p) matrix of

observed variables of the dataset. Furthermore, let

R=(R1,…,Rn)T with Ri=1 if Vi is missing and Ri=0 if Vi

is observed, for all i=1,…,n, be a missing data indicator

variable for V. Moreover, the target variable V can

easily be separated into two components, the

observed part and the incomplete part: V=

(Vobs,Vmis). Consequently, the indicator variable R is

also separated into R=(R0,R1).

The main objective is to present a procedure for

missing value imputation. The introduced notation

should therefore not be interpreted in a way that

the regression of V on X is the aim, so V has not

necessarily to be the outcome variable of the final

analysis model. Instead, the aim is to conduct a

regression of the missing value indicator R on X to

estimate the conditional probability for each subject

to have a missing value in V. How the propensity

score is subsequently used to multiply impute the

missing values according to a Hot Deck approach is

described in section 3.3. Based on the completed

dataset, the final analysis model, for example a Cox

regression as in section 2, can be conducted.

3.1 The Concept of Hot Deck Imputation

According to the Hot Deck principle, every missing

value v* of a variable V (v* as a part of Vmis) is

imputed by an observed value v (v as a part of Vobs).

Here, the subject with the missing value v* is often

labelled “recipient” whereas the subject who

provides the imputation value is called “donor”

(Andridge and Little, 2010). In general, one has to

differentiate two approaches: random Hot Deck

procedures choose a donor value v randomly out of

an underlying donor pool, and, by contrast,

deterministic Hot Deck methods select v according

to certain criteria. One searches for donor values,

for example, whose donors are as similar as possible to

the recipient. For this decision, several approaches

which are able to capture the similarity between

subjects are possible.

In contrast to the so called Cold Deck principle

where the potential donor pool is based on data

which do not originate from the actual analysis dataset,

the Hot Deck approaches draw on observed

values v of Vobs for the generation of the imputation

values. Since Hot Deck imputation is usually applied

in a SI setting, its general drawback is that the

amount of variability that can be traced back to the

imputation itself is not explicitly taken into

account, so the variance tends to be

underestimated and confidence intervals are estimated too

narrow. The HDPS approach therefore aims to

implement the Hot Deck principle in a Multiple

Imputation setting by creating an appropriate

donor pool from which the imputation values are

chosen randomly.

3.2 The Calculation and Usage of Propensity

Scores

Basically, propensity scores describe the

conditional probability P( T=1 | X=x) to be exposed

with respect to a defined event, given the specific

covariable structure for a subject (Rosenbaum and

Rubin, 1983). The exposure status may indicate the

belonging to an intervention group or the presence

of a certain event like, for example, a missing value.

According to D’Agostino (1998), the PS are usually

estimated by a logistic regression model

logit P (T=1 | X=x) = ln (P/(1-P)) = β0 + β1x1 + … + βpxp.

The propensity score summarizes the partially

extensive information of a whole set of covariables

to a scalar value. This reduction of dimension could

be beneficial if the PS is used again within further

analyses.

In observational studies, for example, propensity

scores are frequently used to correct for potential

confounding variables which affect the estimation

of treatment effects negatively (Guo and Fraser,

2010). A major problem of observational studies

concerns the possible lack of structural equality of

comparison groups because randomization is not

feasible. By calculating an individual

conditional probability (on the particular covariate

structure) for each subject it is possible to adjust

for an unequal distribution of confounders in

comparison groups. The final analysis model,

whatever it is, has to consider the calculated PS as

an adjustment variable. Basically there are two

procedures to make use of the propensity score

(Guo and Fraser, 2010): either including it in the

final analysis model (e.g. as an additional covariable in

a regression model) or matching subjects

according to the PS before a post-matching analysis is done (e.g. stratification based

on the matched sample).

The PS approach is applicable to any type of

multivariate data when a binary, or even

multinomial, response variable is investigated.

Defining a binary missing data indicator variable R

as response, the PS methodology can be used to

estimate the chance for each study participant to

have a missing value in V, given the individual

structure of covariables X. The validity of this

probability essentially depends on the quality

and completeness of the covariables X.

Study participants with a similar covariable

structure have similar PS, so study

participants can be matched according to their calculated

conditional probabilities, for example.

Exactly this approach is applied to create an

appropriate donor pool for study participants with

missing values in the course of a Hot Deck

imputation.
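The regression of the missing data indicator R on the completely observed covariables X can be sketched as follows. This is a minimal illustration in plain Python rather than the SAS implementation used in the study; the function names, the gradient-ascent fitting routine, its tuning values and the toy data are all assumptions made for the example. In practice an established logistic regression routine would be used.

```python
import math
import random

def sigmoid(z):
    # numerically stable logistic function
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def linear_predictor(beta, x):
    return beta[0] + sum(b * v for b, v in zip(beta[1:], x))

def fit_logistic(X, r, lr=0.1, n_iter=2000):
    """Fit logit P(R=1 | X=x) = b0 + b1*x1 + ... + bp*xp by gradient ascent."""
    n, p = len(X), len(X[0])
    beta = [0.0] * (p + 1)
    for _ in range(n_iter):
        grad = [0.0] * (p + 1)
        for x, ri in zip(X, r):
            err = ri - sigmoid(linear_predictor(beta, x))
            grad[0] += err
            for j, v in enumerate(x):
                grad[j + 1] += err * v
        beta = [b + lr * g / n for b, g in zip(beta, grad)]
    return beta

def propensity_scores(X, beta):
    """Estimated probability of a missing value in V for every subject."""
    return [sigmoid(linear_predictor(beta, x)) for x in X]

# Toy data: one standardized covariate; missingness becomes more likely as it increases
rng = random.Random(1)
X = [[rng.gauss(0, 1)] for _ in range(300)]
r = [1 if rng.random() < sigmoid(-1.0 + 1.5 * x[0]) else 0 for x in X]
beta = fit_logistic(X, r)
ps = propensity_scores(X, beta)
```

Subjects with similar covariable values receive similar entries in `ps`, which is the property the donor search relies on.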

3.3 The Hot Deck Propensity Score (HDPS)

Approach

The objective of the HDPS method is to find a

respective donor element v of Vobs for each missing

value v* of Vmis. The approaches of Hot Deck

imputation and propensity score estimation are

combined for this purpose. According to the Hot

Deck principle, donors and recipients should be

as similar as possible. The use of a missing data

indicator variable R makes it possible to use a PS approach to

find appropriate pairs of donors and recipients, if R

defines the dependent variable of the

corresponding logistic regression model and all

completely observed variables are used as

predictors. The degree of similarity is represented

by the PS of the subjects. Since a missing data

indicator variable is used which denotes the

presence or absence of the values of the target

variable V, the HDPS approach is not restricted to

an imputation of just certain variables in a sense

that there are no limitations with respect to the

scale level of V.

To avoid the general drawback of deterministic Hot

Deck procedures that each missing value is imputed

by just one donor value, the HDPS proposal rests

upon the creation of a donor pool from which an

imputation value is randomly chosen. In this way,

the HDPS approach can be implemented as a

Multiple Imputation method and is given through

the following algorithm:

1. Calculate the PS via P(R=1 | X=x), where X is the matrix of all completely observed variables of the original data set and R is the missing data indicator variable for the target variable V.

2. For all v* in Vmis, search vi in Vobs, i=1,…,10: Di := | PS_v* − PS_vi | → 0, with min {Di: i=1,…,10} := D1 ≤ D2 ≤ … ≤ D10.

3. Do a MI (m = 5) by choosing randomly vi, i=1,…,10, as imputation value for v*.

Here, PS_v* and PS_vi are the corresponding

propensity scores of v* and vi, respectively. For each

missing element of V (i.e. for each subject with a

missing value in V) one has to search for a set of observed

values of the target variable (i.e. from all subjects

with observed values for V) such that the calculated

individual propensity scores show an absolute

deviation which tends to zero. The aim is to find

those propensity scores PS_vi which result in the ten

smallest absolute differences when

subtracting them from PS_v*. In doing so, a donor

pool of ten observed values of V is created. For each

missing value of the target variable then m = 5

elements of this pool are chosen randomly as

imputation values. The preferably high similarity

between subjects with missing values and observed

values is characterized and obtained by an absolute

deviation of their propensity scores which tends to

zero. The establishment of a donor pool makes it possible

to perform an MI, which has substantial advantages

over deterministic Hot Deck procedures which are

only able to conduct a SI. Simultaneously the

intended similarity between donors and recipients

is ensured by minimizing the absolute difference in

PS. The size of the donor pool has been set to a

number of ten potential donor values to guarantee a

sufficient variability among the imputation values

on the one hand, and to preserve an acceptable

extent of similarity on the other hand. The choice of

m = 5 corresponds to the established opinion with

respect to the number of imputations which are

sufficient (Rubin, 1987).
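The donor search and the random draws of the algorithm can be sketched in a few lines. This is an illustrative Python sketch under stated assumptions, not the SAS macro used in the study: the function name `hdps_impute` is hypothetical, the propensity scores are taken as given, missing values are marked with `None`, and the m draws for a recipient are made independently (with replacement), which the description above leaves open.

```python
import random

def hdps_impute(values, ps, pool_size=10, m=5, seed=0):
    """Return m completed copies of `values` (None marks a missing value).

    values : target variable V, one entry per subject
    ps     : propensity score of every subject, in the same order
    """
    rng = random.Random(seed)
    donors = [i for i, v in enumerate(values) if v is not None]
    if len(donors) < pool_size:
        raise ValueError("fewer observed values than the requested pool size")
    completed = [list(values) for _ in range(m)]
    for i, v in enumerate(values):
        if v is not None:
            continue
        # donor pool: observed subjects with the smallest |PS difference| to the recipient
        pool = sorted(donors, key=lambda d: abs(ps[d] - ps[i]))[:pool_size]
        for k in range(m):
            # assumption: one independent random draw per imputed data set
            completed[k][i] = values[rng.choice(pool)]
    return completed

# Toy example with a pool of 3 instead of 10 donors to keep it readable
values = [52.0, None, 48.0, 60.0, 35.0, None, 41.0, 55.0, 38.0, 44.0, 58.0, 47.0]
ps = [0.10, 0.32, 0.30, 0.05, 0.60, 0.55, 0.45, 0.12, 0.58, 0.40, 0.08, 0.33]
for data in hdps_impute(values, ps, pool_size=3, m=5):
    print(data)
```

Because only observed values of V are copied, the same code works for continuous, binary or categorical target variables.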

The HDPS approach is very intuitive. Initially one

investigates the relationship between the target

variable (by the respective missing data indicator

variable R) and all completely observed variables of

the data set. Using the PS as a scalar measure of

similarity of subjects, an adequate donor can be

identified easily. The consideration of the absolute

difference guarantees that the potential donor

subjects could have either smaller or larger PS

values. A priori one would expect that the ability of

the logistic regression model to capture a relation

between the missing data indicator and all observed

variables is high, especially if the data are not

missing completely at random (i.e. in the case of MAR).

3.4 Simulation Study

To evaluate the proposed HDPS approach with

respect to its ability to impute missing values of

different scale levels properly, a real data-based

simulation study has been performed. On the basis

of the presented example dataset in section 2,

missing values have been artificially generated and

subsequently imputed. Therefore, the original

results of the Cox regression model (Table 2) can be

compared to results after imputation. Different

missing data situations have been investigated

which arise from different missing data mechanisms

and various proportions of missing values in the

dataset. Two alternative approaches to handle

missing data have also been applied to allow for a

comparison of the HDPS results to established

methods. The simulations have been performed

with the statistical software SAS.

3.4.1 Missing Data Situations

The simulation study has been set up to investigate

a number of different missing data situations. The

two missing data mechanisms MCAR and MAR have

been created, so the application of the HDPS

approach is restricted to so-called “ignorable”

missingness. Additionally, distinct proportions of

missing values in the dataset (5%, 10%, 20%, 30%,

40%, and 50%) have been examined. Although

there is no recommendation up to which rate of

missing values an imputation can, or should be

performed, a proportion higher than 50% seems

problematic in general with respect to data quality.

For that reason higher percentages have not been

considered for simulation. The investigated missing

rates represent more realistic scenarios which may

arise in practice. For each missing data situation,

1000 simulations have been run.

Hot Deck Propensity Score imputation is a flexible

approach since it is not restricted to a certain scale

level of the variable to be imputed. To show its

applicability for different types of variables, the

simulations have been run for both a continuous

(LVEF) and a binary variable (glycosides intake).

3.4.2 Creation of Missingness

To create missing values according to the MCAR

mechanism, for both variables a simple random

sample has been chosen from the complete dataset

(2170 cases). For every patient a random number

generator has been used which indicated if the

respective value of LVEF or glycosides should be

deleted, where the probability of deletion was equal

for all patients.

For generating the MAR mechanism a random

number generator has been used, too. But here the

probability for a value of LVEF or glycosides,

respectively, to be deleted was based on the values

of the variable NYHA class, and is therefore not

completely random. It has been assumed that

patients with a higher NYHA class are more likely to

have a missing value in LVEF or glycosides. This

assumption might be realistic, since patients with a

higher NYHA class are more severely ill and may be

more likely to miss an appointment for LVEF

examination, or may not know exactly whether they take

glycosides besides all other medications. Overall,

the deletion probabilities have been

unequal, but resulted in the desired missing data

proportion for each simulation scenario.
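The two deletion schemes can be illustrated as follows. This is a rough Python sketch, not the SAS code of the study; the function names, the NYHA classes used, the relative weights per class and the toy LVEF values are hypothetical. Each value is deleted independently, so the realized proportion of missing values only approximates the target rate.

```python
import random

def delete_mcar(values, rate, rng):
    """MCAR: every value is deleted with the same probability `rate`."""
    return [None if rng.random() < rate else v for v in values]

def delete_mar(values, nyha, rate, rng, weights):
    """MAR: the deletion probability depends on the observed NYHA class.

    `weights` holds hypothetical relative deletion probabilities per class; they are
    rescaled so that the expected overall proportion of deleted values equals `rate`
    (as long as no rescaled probability has to be capped at 1).
    """
    mean_w = sum(weights[c] for c in nyha) / len(nyha)
    probs = [min(1.0, rate * weights[c] / mean_w) for c in nyha]
    return [None if rng.random() < p else v for v, p in zip(values, probs)]

rng = random.Random(2013)
n = 2170                                         # number of complete cases in the example data
nyha = [rng.choice([2, 3]) for _ in range(n)]    # toy classes, not the SPICE data
lvef = [rng.uniform(10, 35) for _ in range(n)]   # toy values, not the SPICE data

lvef_mcar = delete_mcar(lvef, 0.20, rng)
lvef_mar = delete_mar(lvef, nyha, 0.20, rng, weights={2: 1.0, 3: 2.0})
```

Under the MAR scheme the missingness is fully explained by an observed variable, which is what a propensity model with NYHA class among its predictors can pick up.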

3.4.3 Investigated Approaches to Handle

Missing Data

The incomplete datasets were subsequently treated

with three different approaches. The presented

HDPS method has been applied to the data, as well

as a standard multiple imputation approach

(Markov Chain Monte Carlo method (MCMC) or

Logistic method). Moreover, a complete case

analysis has been conducted. For the HDPS method,

the SAS macro “%hdps.sas” has been created which

implements the algorithm presented in section 3.3.

The MCMC method is default for the SAS procedure

PROC MI for the imputation of continuous variables

(LVEF). The imputation of binary variables

(glycosides) requires the Logistic method which is

also available in PROC MI. As with the HDPS

method, five imputations have been

performed with the MCMC method and the Logistic

method, respectively. The Cox regression, as the

final analysis model, includes only completely

observed cases by default, so CCA was

carried out simply by analyzing the

incomplete data sets directly.

3.4.4 Evaluation of Simulation Results

To evaluate the applicability of the proposed HDPS

method, the same Cox regression which created the

original results of Table 2 has been performed

again with all imputed datasets. The parameters of

primary interest for the two incomplete variables

were the hazard ratios with their corresponding

95% confidence intervals. The comparison between

the original results and those which have been

created after missing value imputation was based on

these parameters. Since for every missing data

situation 1000 simulation runs have been

conducted, the mean and standard deviation over

all simulation results for every parameter estimate

were calculated for the particular missing data

situation.

The c-statistic of the logistic regression model to

calculate the propensity scores has additionally

been recorded for informative purposes. This value

serves to describe the ability of the logistic

regression to model the relationship between the

completely observed covariables and the missing

data indicator variable. Higher values of the c-statistic indicate better discrimination by the logistic

regression model. Therefore, in simulation

scenarios where the completely observed variables

fit well to the missing data indicator variable, higher

c-values and more precise imputations are

expected. Evaluation of the results after imputing

the binary variable (glycosides) is also based on

the proportion of agreement between

original and imputed values.
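Both evaluation measures are simple to compute. The sketch below is illustrative only; the function names are hypothetical and the c-statistic is computed by direct pairwise comparison, which is adequate for small examples but slow for large data sets.

```python
def c_statistic(scores, labels):
    """Share of (missing, observed) subject pairs in which the subject with the
    missing value (label 1) has the higher propensity score; ties count one half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("both outcome classes are required")
    concordant = 0.0
    for sp in pos:
        for sn in neg:
            if sp > sn:
                concordant += 1.0
            elif sp == sn:
                concordant += 0.5
    return concordant / (len(pos) * len(neg))

def agreement_rate(original, imputed, deleted_idx):
    """Proportion of artificially deleted values whose imputed value equals the original."""
    hits = sum(1 for i in deleted_idx if original[i] == imputed[i])
    return hits / len(deleted_idx)

print(c_statistic([0.9, 0.8, 0.3, 0.2], [1, 1, 0, 0]))
print(agreement_rate([1, 0, 1, 1], [1, 1, 1, 0], [1, 2, 3]))
```

A c-statistic of 0.5 corresponds to a propensity model that cannot separate subjects with and without missing values, as would be expected under MCAR.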

4.0 Results

4.1 General Remarks

In the following, the results of the real data-based

simulation study, which has been described within

the last section, are presented. The main objective

was to investigate the impact of a missing value

imputation, with respect to the estimated hazard

ratios and corresponding 95% confidence intervals

of the two target variables: LVEF and glycosides. No

efforts were made during the generation of

the HDPS results to explicitly influence the

quality of the logistic regression model

(expressed by the c-statistic). The calculation of the

propensity scores, which subsequently were used

for choosing the surrogate values, was based on all

observed variables of the example data set. First of

all, salient differences between the particular

simulation scenarios should be investigated with

respect to different proportions of missing values

and missing data mechanisms.

Since the simulation has been conducted for two

different types of variables, the results are

presented in two parts. Besides the hazard ratios and

95% confidence intervals, the value of the c-statistic

is also given for those simulation scenarios where

the HDPS method has been used to impute missing

data. The analysis of the binary variable is

extended by additionally presenting the rate

of correctly imputed missing items (i.e. agreement

of original and imputed value) for the HDPS and MI

method. It has to be kept in mind that the presented

results are the mean values over all 1000

simulation runs of each scenario.

4.2 Continuous Simulation Variable

The simulation results for the continuous variable

(LVEF) confirmed some previously formulated

expectations and brought out interesting findings

with respect to the intended comparison of the

HDPS method for imputing missing values with CCA

and Multiple Imputation approaches.

First of all, according to Table 3, the use of MI led to

almost unbiased estimates of the hazard ratio and

corresponding 95% confidence interval for LVEF.

This holds for both investigated missing data

mechanisms, MCAR and MAR, and proved again the

very good properties of the MCMC method in

imputing missing values. Moreover, it seemed that

there is no considerable impact of the rate of

missing values in the data set on the precision of

estimation. The MI estimate deviating most

from the original hazard ratio (0.985)

was 0.9863 (MAR, 50% missing values).

The HDPS method, however, generated slightly

inaccurate estimates of the hazard ratio and the

confidence limits for missing value rates of 20% and

higher. Further, the precision of estimation depended

on the underlying missing data mechanism,

with more precise estimates in case of

a MCAR mechanism. The maximum difference

between original and simulated hazard ratio of

LVEF was again observed for a MAR

mechanism and a missing value rate of 50% (0.985

vs. 0.9933). Both the upper and lower confidence limits

were estimated too large compared to the original

limits. Overall, the estimated hazard ratios

for LVEF were too high, i.e. closer to 1, so the effect of LVEF was underestimated.

The CCA strategy resulted in relatively robust

estimations of the hazard ratio for both, MCAR and

MAR, but much more sensitive estimations of the

confidence limits. The CCA hazard ratio estimate deviating

most from the original was 0.9868 (see Table 3). While both

confidence limits were estimated too large by

the HDPS method, the CCA strategy

produced smaller estimates for the lower

confidence limit and larger estimates for the upper

confidence limit. The broadest confidence interval

was given for a MCAR mechanism and 50% missing

values.

The MI and HDPS methods were both able to

generate consistent estimations of the confidence

intervals’ width in every simulation scenario. The

MI estimates were generally very consistent, not only

regarding the width of the interval but also

with respect to both interval limits. Although both

confidence limits were estimated too large by the

HDPS method, the width of the interval stayed

relatively consistent.

As already described, the inaccurate estimation of

confidence limits by the CCA strategy differed

considerably from the biased estimation of the

confidence limits by HDPS. Whereas the HDPS

method generated too large values of both

confidence limits, CCA estimated confidence

intervals which were too wide, especially for higher

rates of missing values. The significant impact of

LVEF on the occurrence of a cardiac event was

preserved by applying a MI approach to the incomplete

data, since the estimation of the upper confidence

limit was below 1 in each scenario. For missing

value rates of 20% and higher, the HDPS and CCA

strategies suggested that LVEF was not a significant

predictor of a cardiac event, since the 95%

confidence interval of the hazard ratio included 1.
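
As a small worked illustration of this comparison, the following Python sketch recomputes the interval widths for the scenario with 50% missing values under MAR, using the averaged estimates reported in Table 3, and checks whether each interval includes 1. The helper names `ci_width` and `includes_one` are illustrative assumptions and not part of the original analysis.

```python
# Averaged estimates from Table 3 (50% missing values, MAR): (HR, LCL, UCL).
results = {
    "Original": (0.985, 0.974, 0.997),
    "CCA": (0.9868, 0.9703, 1.0037),
    "HDPS": (0.9933, 0.9812, 1.0055),
    "MI": (0.9863, 0.9744, 0.9983),
}


def ci_width(lcl, ucl):
    """Width of a confidence interval given its lower and upper limit."""
    return ucl - lcl


def includes_one(lcl, ucl):
    """True if the interval covers a hazard ratio of 1 (no significant effect)."""
    return lcl <= 1.0 <= ucl


for method, (hr, lcl, ucl) in results.items():
    print(
        f"{method:8s} HR={hr:.4f} "
        f"width={ci_width(lcl, ucl):.4f} "
        f"includes 1: {includes_one(lcl, ucl)}"
    )
```

With these values the CCA interval is clearly the widest (about 0.033 compared to about 0.023 for the original analysis), while the HDPS interval keeps roughly the original width but is shifted upwards so that it includes 1.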

Table 4 shows the mean c-statistic of each HDPS

simulation scenario. As expected, the c-value which

can be used to describe the ability of the logistic

regression model to model the relationship between

the completely observed covariables and the

missing data indicator variable, was just above 50%

(0.54-0.59) in case of a MCAR assumption. The

extent of the c-value was negatively correlated with

the number of missing values in the data set.

Assuming a MAR mechanism, the expectation of

higher values of the c-statistic was also confirmed

(0.79-0.82), whereas there seemed to be no

correlation with the rate of missing data.
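
To make the role of the c-statistic more concrete, here is a minimal Python sketch of how such a value can be computed from propensity scores and a missing data indicator. It uses the usual pairwise (concordance) definition; the function name `c_statistic` and the toy inputs are assumptions for illustration and do not reproduce the SAS output used in the study.

```python
def c_statistic(scores, is_missing):
    """Concordance (c) statistic of propensity scores for a missing data indicator.

    Returns the share of all (missing, observed) pairs in which the case with
    the missing value has the higher propensity score; ties count as 0.5.
    """
    missing = [s for s, m in zip(scores, is_missing) if m]
    observed = [s for s, m in zip(scores, is_missing) if not m]
    if not missing or not observed:
        raise ValueError("need at least one missing and one observed case")

    concordant = 0.0
    for s_miss in missing:
        for s_obs in observed:
            if s_miss > s_obs:
                concordant += 1.0
            elif s_miss == s_obs:
                concordant += 0.5
    return concordant / (len(missing) * len(observed))


# Hypothetical scores: perfect separation versus no information at all.
print(c_statistic([0.9, 0.8, 0.2, 0.1], [1, 1, 0, 0]))
print(c_statistic([0.5, 0.5, 0.5, 0.5], [1, 0, 1, 0]))
```

A value near 0.5 means the completely observed covariates carry no information about which cases are incomplete, which is what one would expect under MCAR.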

Another very important finding was that the HDPS

and MI approaches are both able to estimate the

hazard ratios and corresponding confidence

intervals of all other variables consistently, while

CCA led to severely biased estimates for higher

rates of missing values (see Appendix).

4.3 Binary Simulation Variable

The simulations for the dichotomous variable

‘glycosides intake’ resulted in at least slightly

biased estimates of the hazard ratio and confidence

interval limits. All investigated strategies to handle

missing data revealed precision problems even for

smaller rates of missing data.

HDPS tended to underestimate the effect size of glycosides,

as well as the corresponding upper and

lower limits of the confidence interval. The extent

depended on the underlying missing data

mechanism, with slightly more

biased results in case of MCAR (the largest deviation was

1.3919 vs. 1.970). The width of the confidence

interval, however, was estimated consistently for

almost every scenario. For the highest rates of

missing values, the width was estimated a bit too

narrow, and the interval did not contain the true

effect size anymore.

The MI approach also underestimated the effect size

and the confidence limits in all scenarios.

Like HDPS, the Multiple Imputation method

produced consistent estimations for the width of

the confidence interval, even for high amounts of

missing values. Moreover, there seemed to be no

substantial impact of the assumed missing data

mechanism on precision of estimation.

In contrast to HDPS and MI, the CCA strategy

generated effect sizes which were overestimated,

especially if the missing data mechanism was MAR

and the amount of missing data was high.

Additionally, the corresponding confidence limits

were biased and led to interval widths which were

too large (maximum at 50% and MAR).

Again, as in case of a missing value imputation of

LVEF, HDPS and MI approaches led to nearly

unbiased estimations of the hazard ratios and

confidence intervals of the other predictor variables

of the Cox regression model, whereas the CCA

strategy generated substantially biased estimates,

especially for higher amounts of missing data in

‘glycosides intake’ (see Appendix).

The values of the c-statistic were similar to those

from the imputation of LVEF. For MCAR, the range

was again 0.54 to 0.59 and confirmed again the

expectation that the logistic regression model was

not able to find a meaningful relationship between

the predictor variables and the missing data

indicator variable when the data were MCAR. In

case of MAR, the values ranged from 0.79 to 0.83.

For the dichotomous variable ‘glycosides intake’,

the fraction of agreement between

original and imputed values was investigated additionally. This

rate was negatively correlated with the amount of

missing data for both HDPS (0.51 to 0.95) and MI

(0.75 to 0.98), with the MI approach achieving

higher agreement. Overall, these values were

higher in case of a MAR mechanism.
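
The agreement rate described here can be sketched in a few lines of Python. The function name `agreement_rate` and the example vectors are hypothetical; the sketch only assumes that the original values are known for the artificially deleted items, as is the case in a simulation study.

```python
def agreement_rate(original, imputed, was_deleted):
    """Fraction of artificially deleted items whose imputed value equals the original."""
    pairs = [(o, i) for o, i, d in zip(original, imputed, was_deleted) if d]
    if not pairs:
        raise ValueError("no deleted items to compare")
    return sum(1 for o, i in pairs if o == i) / len(pairs)


# Hypothetical binary variable (1 = glycosides intake, 0 = no intake).
original = [1, 0, 1, 1, 0, 1]
imputed = [1, 0, 0, 1, 0, 0]
was_deleted = [True, False, True, True, False, True]
print(agreement_rate(original, imputed, was_deleted))  # 2 of 4 deleted items agree
```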

Table 1: Patient characteristics of the analysis set for the extended evaluation of the SPICE study

| Characteristic | Complete cases of the SPICE data set (n=2170) |
|---|---|
| Gender (n (%)) | |
| Female | 345 (16%) |
| Male | 1825 (84%) |
| Age (mean (SD)) [years] | 59.8 (10.5) |
| Smoking (n (%)) | |
| Non-smoker | 986 (45%) |
| Smoker | 953 (44%) |
| Ex-smoker | 231 (11%) |
| NYHA class | |
| I | 8 (0.4%) |
| II | 1225 (56%) |
| III | 937 (43%) |
| Body mass index (mean (SD)) [kg/m²] | 26.8 (3.7) |
| Left ventricular ejection fraction (mean (SD)) [%] | 23.8 (6.6) |
| Cardioactive medications (n (%)) | |
| Glycosides | 1228 (57%) |
| Nitrates | 1224 (56%) |
| Beta Blockers | 1395 (64%) |
| Antiarrhythmics | 476 (22%) |
| Diuretics | 1859 (86%) |
| Calcium antagonists | 26 (1%) |

Table 2: Explanatory variables for the risk of a cardiac event in patients with congestive heart failure

| Explanatory variable | Increased risk for | HR | 95% CI | p-value |
|---|---|---|---|---|
| Glycosides (yes vs. no) | yes | 1.970 | [1.636, 2.372] | <0.0001 |
| Antiarrhythmics (yes vs. no) | yes | 1.947 | [1.636, 2.317] | <0.0001 |
| NYHA class (III vs. II) | class III | 1.602 | [1.357, 1.892] | <0.0001 |
| Nitrates (yes vs. no) | yes | 1.426 | [1.203, 1.690] | <0.0001 |
| Diuretics (yes vs. no) | yes | 1.775 | [1.249, 2.521] | 0.0014 |
| Age | older age | 1.015 | [1.007, 1.023] | 0.0004 |
| Beta Blockers (yes vs. no) | yes | 1.312 | [1.105, 1.559] | 0.0020 |
| Calcium antagonists (yes vs. no) | yes | 2.233 | [1.316, 4.136] | 0.0037 |
| LVEF | low LVEF | 0.985 | [0.974, 0.997] | 0.0177 |

Table 3: Simulation results for the continuous variable LVEF. Denoted are (averaged over all simulations)

the hazard ratio and corresponding 95% confidence interval for LVEF

| Missing values | Method | MCAR: HR | MCAR: LCL | MCAR: UCL | MAR: HR | MAR: LCL | MAR: UCL |
|---|---|---|---|---|---|---|---|
| Original results | | 0.985 | 0.974 | 0.997 | 0.985 | 0.974 | 0.997 |
| 5% | CCA | 0.9855 | 0.9733 | 0.9978 | 0.9856 | 0.9733 | 0.9980 |
| | HDPS | 0.9861 | 0.9742 | 0.9981 | 0.9863 | 0.9744 | 0.9984 |
| | MI | 0.9855 | 0.9736 | 0.9975 | 0.9855 | 0.9736 | 0.9976 |
| 10% | CCA | 0.9854 | 0.9729 | 0.9981 | 0.9858 | 0.9731 | 0.9987 |
| | HDPS | 0.9867 | 0.9748 | 0.9988 | 0.9874 | 0.9755 | 0.9994 |
| | MI | 0.9854 | 0.9735 | 0.9974 | 0.9856 | 0.9737 | 0.9977 |
| 20% | CCA | 0.9855 | 0.9722 | 0.9989 | 0.9862 | 0.9724 | 1.0002 |
| | HDPS | 0.9881 | 0.9761 | 1.0001 | 0.9894 | 0.9774 | 1.0015 |
| | MI | 0.9854 | 0.9735 | 0.9975 | 0.9859 | 0.9740 | 0.9979 |
| 30% | CCA | 0.9855 | 0.9712 | 0.9999 | 0.9863 | 0.9714 | 1.0015 |
| | HDPS | 0.9893 | 0.9774 | 1.0014 | 0.9905 | 0.9785 | 1.0027 |
| | MI | 0.9854 | 0.9734 | 0.9975 | 0.9859 | 0.9740 | 0.9979 |
| 40% | CCA | 0.9854 | 0.9701 | 1.0010 | 0.9868 | 0.9703 | 1.0036 |
| | HDPS | 0.9907 | 0.9787 | 1.0028 | 0.9923 | 0.9802 | 1.0045 |
| | MI | 0.9852 | 0.9732 | 0.9973 | 0.9858 | 0.9739 | 0.9978 |
| 50% | CCA | 0.9853 | 0.9685 | 1.0024 | 0.9868 | 0.9703 | 1.0037 |
| | HDPS | 0.9919 | 0.9799 | 1.0040 | 0.9933 | 0.9812 | 1.0055 |
| | MI | 0.9852 | 0.9733 | 0.9974 | 0.9863 | 0.9744 | 0.9983 |

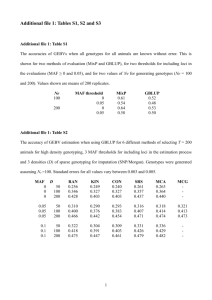

Table 4: C-statistic from the logistic regression model. Denoted is (averaged over all simulations) the c-value of the logistic regression model before HDPS imputation for LVEF.

| C-statistic | 5% | 10% | 20% | 30% | 40% | 50% |
|---|---|---|---|---|---|---|
| MCAR | 0.591 | 0.567 | 0.550 | 0.543 | 0.541 | 0.540 |
| MAR | 0.804 | 0.810 | 0.824 | 0.790 | 0.826 | 0.820 |

Table 5: Simulation results for the binary variable glycosides intake. Denoted are (averaged over all

simulations) the hazard ratio and corresponding 95% confidence interval for glycosides intake.

| Missing values | Method | MCAR: HR | MCAR: LCL | MCAR: UCL | MAR: HR | MAR: LCL | MAR: UCL |
|---|---|---|---|---|---|---|---|
| Original results | | 1.970 | 1.636 | 2.372 | 1.970 | 1.636 | 2.372 |
| 5% | CCA | 1.9719 | 1.6297 | 2.3860 | 1.9759 | 1.6321 | 2.3921 |
| | HDPS | 1.8938 | 1.5762 | 2.2753 | 1.8974 | 1.5781 | 2.2812 |
| | MI | 1.9533 | 1.6226 | 2.3516 | 1.9566 | 1.6252 | 2.3557 |
| 10% | CCA | 1.9726 | 1.6217 | 2.3995 | 1.9836 | 1.6270 | 2.4185 |
| | HDPS | 1.8212 | 1.5189 | 2.1837 | 1.8159 | 1.5129 | 2.1796 |
| | MI | 1.9369 | 1.6093 | 2.3311 | 1.9371 | 1.6096 | 2.3312 |
| 20% | CCA | 1.9749 | 1.6040 | 2.4316 | 2.0062 | 1.6192 | 2.4856 |
| | HDPS | 1.6956 | 1.4190 | 2.0261 | 1.6797 | 1.4033 | 2.0106 |
| | MI | 1.8954 | 1.5761 | 2.2794 | 1.9019 | 1.5821 | 2.2864 |
| 30% | CCA | 1.9780 | 1.5833 | 2.4712 | 2.0196 | 1.6037 | 2.5434 |
| | HDPS | 1.5832 | 1.3290 | 1.8859 | 1.5779 | 1.3212 | 1.8846 |
| | MI | 1.8649 | 1.5520 | 2.2410 | 1.8768 | 1.5624 | 2.2544 |
| 40% | CCA | 1.9855 | 1.5606 | 2.5262 | 2.0456 | 1.5867 | 2.6373 |
| | HDPS | 1.4855 | 1.2503 | 1.7650 | 1.4767 | 1.2387 | 1.7604 |
| | MI | 1.8421 | 1.5332 | 2.2134 | 1.8423 | 1.5339 | 2.2128 |
| 50% | CCA | 1.9898 | 1.5277 | 2.5918 | 2.0658 | 1.5608 | 2.7342 |
| | HDPS | 1.3919 | 1.1742 | 1.6499 | 1.3959 | 1.1730 | 1.6612 |
| | MI | 1.8040 | 1.5017 | 2.1672 | 1.8228 | 1.5173 | 2.1900 |

Table 6: Biased CCA estimates of confidence interval width due to 50% missing data in LVEF under

MAR assumption.

| Explanatory variable | Increased risk for | Imputation method | HR | 95% CI |
|---|---|---|---|---|
| Glycosides (yes vs. no) | yes | Original results | 1.970 | [1.636, 2.372] |
| | | CCA | 2.046 | [1.585, 2.641] |
| | | HDPS | 1.981 | [1.645, 2.385] |
| | | MI | 1.980 | [1.644, 2.384] |
| Antiarrhythmics (yes vs. no) | yes | Original results | 1.947 | [1.636, 2.317] |
| | | CCA | 1.925 | [1.502, 2.467] |
| | | HDPS | 1.947 | [1.636, 2.318] |
| | | MI | 1.945 | [1.634, 2.315] |
| NYHA class (III vs. II) | class III | Original results | 1.602 | [1.357, 1.892] |
| | | CCA | 1.617 | [1.253, 2.086] |
| | | HDPS | 1.618 | [1.370, 1.912] |
| | | MI | 1.603 | [1.357, 1.894] |
| Nitrates (yes vs. no) | yes | Original results | 1.426 | [1.203, 1.690] |
| | | CCA | 1.381 | [1.088, 1.754] |
| | | HDPS | 1.425 | [1.202, 1.690] |
| | | MI | 1.419 | [1.197, 1.682] |
| Diuretics (yes vs. no) | yes | Original results | 1.775 | [1.249, 2.521] |
| | | CCA | 1.777 | [1.154, 2.739] |
| | | HDPS | 1.797 | [1.265, 2.553] |
| | | MI | 1.777 | [1.251, 2.524] |
| Age | older age | Original results | 1.015 | [1.007, 1.023] |
| | | CCA | 1.015 | [1.004, 1.026] |
| | | HDPS | 1.014 | [1.006, 1.022] |
| | | MI | 1.014 | [1.006, 1.022] |
| Beta Blockers (yes vs. no) | yes | Original results | 1.312 | [1.105, 1.559] |
| | | CCA | 1.268 | [0.992, 1.620] |
| | | HDPS | 1.316 | [1.108, 1.564] |
| | | MI | 1.320 | [1.111, 1.569] |
| Calcium antagonists (yes vs. no) | yes | Original results | 2.233 | [1.316, 4.136] |
| | | CCA | 2.540 | [1.212, 5.347] |
| | | HDPS | 2.333 | [1.316, 4.137] |
| | | MI | 2.322 | [1.309, 4.119] |
| LVEF | low LVEF | Original results | 0.985 | [0.974, 0.997] |
| | | CCA | 0.986 | [0.970, 1.003] |
| | | HDPS | 0.993 | [0.981, 1.005] |
| | | MI | 0.986 | [0.974, 0.998] |

Table 7: Biased CCA estimates of confidence interval width due to 50% missing data in glycosides

intake under MAR assumption.

| Explanatory variable | Increased risk for | Imputation method | HR | 95% CI |
|---|---|---|---|---|
| Glycosides (yes vs. no) | yes | Original results | 1.970 | [1.636, 2.372] |
| | | CCA | 2.065 | [1.560, 2.734] |
| | | HDPS | 1.395 | [1.173, 1.661] |
| | | MI | 1.822 | [1.517, 2.190] |
| Antiarrhythmics (yes vs. no) | yes | Original results | 1.947 | [1.636, 2.317] |
| | | CCA | 1.921 | [1.459, 2.529] |
| | | HDPS | 1.866 | [1.568, 2.221] |
| | | MI | 1.894 | [1.592, 2.255] |
| NYHA class (III vs. II) | class III | Original results | 1.602 | [1.357, 1.892] |
| | | CCA | 1.627 | [1.192, 2.223] |
| | | HDPS | 1.634 | [1.383, 1.931] |
| | | MI | 1.612 | [1.365, 1.904] |
| Nitrates (yes vs. no) | yes | Original results | 1.426 | [1.203, 1.690] |
| | | CCA | 1.372 | [1.053, 1.787] |
| | | HDPS | 1.379 | [1.163, 1.635] |
| | | MI | 1.420 | [1.197, 1.684] |
| Diuretics (yes vs. no) | yes | Original results | 1.775 | [1.249, 2.521] |
| | | CCA | 1.782 | [1.120, 2.839] |
| | | HDPS | 2.180 | [1.546, 3.075] |
| | | MI | 1.869 | [1.318, 2.651] |
| Age | older age | Original results | 1.015 | [1.007, 1.023] |
| | | CCA | 1.015 | [1.003, 1.028] |
| | | HDPS | 1.013 | [1.005, 1.021] |
| | | MI | 1.014 | [1.005, 1.022] |
| Beta Blockers (yes vs. no) | yes | Original results | 1.312 | [1.105, 1.559] |
| | | CCA | 1.256 | [0.957, 1.647] |
| | | HDPS | 1.221 | [1.029, 1.449] |
| | | MI | 1.291 | [1.087, 1.534] |
| Calcium antagonists (yes vs. no) | yes | Original results | 2.233 | [1.316, 4.136] |
| | | CCA | 2.615 | [1.175, 5.866] |
| | | HDPS | 2.320 | [1.308, 4.115] |
| | | MI | 2.281 | [1.286, 4.047] |
| LVEF | low LVEF | Original results | 0.985 | [0.974, 0.997] |
| | | CCA | 0.987 | [0.968, 1.005] |
| | | HDPS | 0.984 | [0.972, 0.996] |
| | | MI | 0.984 | [0.972, 0.996] |

5.0 Discussion

The presence of missing data is a common problem

in medical research. However, there is not yet a single

approach to handling missing values that is

appropriate in every kind of

‘missing data situation’. This circumstance is

especially reflected in a respective guideline

(European Medicines Agency, 2010) which just

summarizes the problems of missing values for data

analysis and some established strategies and

methods which can be applied to incomplete data.

Therefore, improving the existing methods and

developing new ones should be a goal

in this important research field of medical statistics.

According to literature, methods of the MI strategy

are viewed as state of the art today (Engels and

Diehr, 2003; Newman, 2003; Schafer and Graham,

2002; White et al., 2011) because they lead to less

biased results with respect to the behaviour of

parameter estimates and respective confidence

intervals. In contrast, pragmatic SI methods, like

mean or regression imputation, are no longer recommended

since they tend to underestimate the variance.

MI overcomes this problem by imputing

missing values m > 1 times, so the imputation itself

as an additional source of variance is explicitly

considered at the estimation process (Rubin, 1987).
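
How the additional imputation variance enters the estimate can be sketched with the standard combining rules of Rubin (1987): the pooled estimate is the mean of the m estimates, and the total variance is the mean within-imputation variance plus (1 + 1/m) times the between-imputation variance. The Python function below is a minimal sketch of these rules; the name `pool_rubin`, the example numbers and the normal-approximation interval are assumptions for illustration (the full rules use a t reference distribution).

```python
import math
from statistics import mean, variance


def pool_rubin(estimates, variances):
    """Combine m completed-data estimates and their variances with Rubin's rules.

    Returns the pooled estimate and its total variance
    T = W + (1 + 1/m) * B, where W is the mean within-imputation variance
    and B is the between-imputation (sample) variance of the estimates.
    """
    m = len(estimates)
    if m < 2 or len(variances) != m:
        raise ValueError("need m >= 2 estimates with matching variances")
    pooled = mean(estimates)
    within = mean(variances)
    between = variance(estimates)  # sample variance, divisor m - 1
    total = within + (1 + 1 / m) * between
    return pooled, total


# Hypothetical log hazard ratios and squared standard errors from m = 5 imputations.
log_hr = [0.66, 0.62, 0.64, 0.60, 0.65]
se2 = [0.0090, 0.0092, 0.0089, 0.0091, 0.0090]
est, var = pool_rubin(log_hr, se2)
half_width = 1.96 * math.sqrt(var)  # normal approximation, a simplification
print(math.exp(est), math.exp(est - half_width), math.exp(est + half_width))
```

Because the between-imputation component is added, the pooled interval is wider than any interval obtained from a single imputed data set, which is exactly the variance that single imputation ignores.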

Whenever missing values are present, an

imputation strategy is indeed much more

reasonable than just deleting incomplete cases from

the data set, although Vach and Blettner (1991)

showed that this CCA strategy generates unbiased

estimates in case of a MCAR mechanism. However,

the loss of statistical power can be tremendous

when the number of examined variables is high.

Major restrictions of available imputation methods

are their inappropriateness in case of a MNAR

mechanism and their sensitivity to the number

of missing values in a data set, although common MI

methods work well even for higher amounts

of missing values up to 50% (Engels and Diehr,

2003; Mayer, 2011). Further, each of the established

imputation methods is only applicable for a certain

kind of scale level. The MCMC method, for example,

can only be applied to continuous variables with

missing items, and the Logistic method is only

appropriate for imputing binary variables. In

practice, often more than one variable is affected

by missing values, so one has to apply different

imputation methods to fill the data set. These

distinct methods, however, have different

presumptions with respect to the structure of the

data set which may lead to a complex imputation

process in total. Moreover, many researchers are

not very familiar with the options for imputing missing

data, so the use of different imputation

methods may be confusing. On the other hand, there

is the Fully Conditional Specification (FCS) method

(van Buuren, 2007) which can perform a MI for

variables with either continuous or categorical scale

level, but this approach is some kind of a black box

since the underlying algorithm is quite complex.

Knowing these facts, the development of new and

easily understandable methods for imputing

missing values should preferably include a MI

methodology which can be applied to variables of

different scale levels. The proposed HDPS method

for missing value imputation combines both. A

propensity score approach is used to create a

respective donor pool of possible imputation values

for each missing observation of the data set, so the

HDPS method can be implemented in a MI setting

by multiply choosing one donor value (at random)

out of the pool. On the one hand, the donor pool,

which consists of the 10 donors whose

PS are closest to the PS of the recipient,

must not be too large because then the similarity

assumption no longer holds. On the other

hand, however, the donor pool must not be too

small since there should be enough variability in the

donor data to get valid MI estimates. Choosing the

10 donors whose PS are closest to the

recipient's PS seemed to be an acceptable approach

for the initial implementation of the HDPS

procedure.
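
The donor pool step described above can be sketched as follows. This is a minimal Python illustration, not the SAS macro used in the study: it assumes the propensity scores have already been estimated by the logistic regression model, and the names `nearest_donors` and `hdps_draws`, the default of m = 5 imputations and the toy data are all assumptions.

```python
import random


def nearest_donors(recipient_ps, donors, k=10):
    """Return the k donors whose propensity score is closest to the recipient's.

    `donors` is a list of (propensity_score, observed_value) tuples taken from
    the cases in which the variable of interest is observed.
    """
    return sorted(donors, key=lambda d: abs(d[0] - recipient_ps))[:k]


def hdps_draws(recipient_ps, donors, m=5, k=10, seed=None):
    """Draw m imputation values at random (with replacement) from the donor pool."""
    pool = nearest_donors(recipient_ps, donors, k)
    if not pool:
        raise ValueError("donor pool is empty")
    rng = random.Random(seed)
    return [rng.choice(pool)[1] for _ in range(m)]


# Hypothetical donors: propensity score and observed LVEF value.
donors = [(0.10, 31.0), (0.22, 25.5), (0.25, 24.0), (0.27, 22.5), (0.60, 18.0)]
print(hdps_draws(recipient_ps=0.24, donors=donors, m=5, k=3, seed=42))
```

Since a donor value is copied rather than modelled, the same code applies to continuous and binary variables alike, which reflects the independence from the scale level emphasised in the text.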

The simulation study showed that the HDPS method

can easily be applied to different types of

incomplete variables. To apply a standard MI

approach instead, the implementation of different

methods (MCMC or Logistic) is necessary. However,

the validity of parameter estimation is much better

for standard MI approaches which are almost

unbiased, even in case of high amounts of missing

data. The simulations for the binary

variable in particular show that HDPS and MI tend to generate rather

conservative estimates, in contrast to

a CCA approach which estimates higher hazard

ratios and confidence intervals in nearly all

scenarios. But although HDPS, as well as MI, leads to

hazard ratio estimates that are too low, the width of

the corresponding confidence intervals is validly

estimated. In comparison, CCA produces severely

biased estimates of the confidence interval widths.

This mainly traces back to the reduced sample size

for the respective analysis. Another very important

finding is that HDPS, like standard MI

approaches, is able to generate unbiased parameter

estimates for all other variables which are included

in the original Cox regression model (see Appendix),

whereas CCA produces invalid estimations for most

of the other variables instead.

For both simulation variables, the c-values are

negatively correlated with the number of missing values

in the data set in case of a MCAR mechanism. There

are much higher values in case of a simulated MAR

mechanism, but that was the expectation in advance

since the mechanism has been created artificially

from the data. However, there seems to be no

relation between the goodness of fit of the logistic

regression model (i.e. the ability of the model to

separate cases with missing items from those with

complete data by doing a regression of the missing

data indicator variable and all completely observed

covariates) and the validity of estimation. Besides,

the fraction of correctly imputed values in case of

the binary simulation variable is negatively

correlated with the number of missing values in the

data set. Thus, the HDPS method can produce valid

imputations for binary variables only in case of

lower amounts of missing data.

In practice, it is unlikely that only one variable is

affected by missing values, so the handling of

missing data in multiple variables is an important

issue. Thus, an application of the proposed HDPS

approach in such data situations should be

discussed at least. For this initial implementation of

the method and the conducted simulation study,

respectively, only one variable was assumed to have

missing items. The main focus of the article was to

present the newly developed procedure for missing

value imputation, so the first setting of the

simulation study was restricted to only one partially

missing variable to see the properties of HDPS

imputation. From a theoretical point of view, the design of HDPS

allows it to be applied quite easily

in situations with multiple incomplete variables. As

already shown, the method can deal with different

types of incomplete variables by using a missing

data indicator variable to create propensity scores

on the basis of all completely observed variables of

a data set. This procedure can be conducted

repeatedly for each incomplete variable without

further theoretical problems regarding the

imputation procedure. However, one has to discuss

the presumptions with respect to available data in

general. The presented data set includes a huge

number of cases, and therefore the implementation

of the logistic model is unproblematic. If the

investigated data set is much smaller, however, a

valid estimation of PS may be doubtful. At this point, however, it is

very difficult to advise on the minimum

number of observations for which the HDPS

method can be applied validly. Further research is

necessary here where the proposed method should

be applied to data sets of different sizes.

The simulations showed that there seems to be no

relation between goodness of fit of the logistic

regression model and the validity of estimations for

the HDPS procedure. Otherwise, one should have

been able to observe an increase of estimation

precision in case of MAR compared to MCAR since

the c-values have been much higher for MAR.

However, it should at least be examined within

further investigations what happens to estimation

precision, if the establishment of the logistic

regression model is modified with respect to the

included predictors. Moreover, examining the performance of

the HDPS procedure in a MNAR setting would provide

useful insights regarding the extent of bias of

parameter estimates. Beyond that, of course,

efforts should be undertaken to demonstrate the method’s ability to produce

acceptable estimates in case of multiple

incomplete analysis variables.

The main conclusion of this article is that the

proposed HDPS approach can be easily applied to

different types of incomplete variables regarding

their scale level, which is a major advantage over

most of the established imputation methods, which

are only applicable to certain types of variables.

Although the simulation results showed that HDPS

estimations for the imputed variable were rather

conservative in a sense that the real effect was

underestimated by tendency, the method was able

to produce unbiased estimates for all other included

covariates of the original analysis model. Further

investigations are needed, however, to check for

possible improvements with respect to estimation

precision, for the method’s performance in data sets

of different structures, and for its application in

estimating distinct outcome variables.

Acknowledgements

The study was funded by the young scientists

program of the German network 'Health Services

Research Baden-Wuerttemberg' of the Ministry of

Science, Research and Arts in collaboration with the

Ministry of Employment and Social Order, Family,

Women and Senior Citizens, Baden-Wuerttemberg.

The data for the simulation study have been kindly

provided by Dr Willmar Schwabe Pharmaceuticals,

Karlsruhe, Germany. Thank you very much for that.

I also appreciate the support of Silvia Sander with the

implementation of the SAS macro for HDPS

imputation. Finally, I thank the independent

reviewers for their valuable comments which really

improved the manuscript.

References

1. Allison, P.D. Multiple imputation for missing data: A cautionary tale. Sociological Methods and Research 2000, 28: 301-309

2. Allison, P.D. Missing Data. Sage University Papers Series on Quantitative Applications in the Social Sciences, Thousand Oaks, CA, 2001, 07-136

3. Andridge, R.R. and Little, R.J.A. A Review of Hot Deck Imputation for Survey Non-response. International Statistical Review 2010, 78, 1: 40-64

4. D’Agostino, R.B. Propensity Score Methods for Bias Reduction in the Comparison of a Treatment to a non-randomized Control Group. Statistics in Medicine 1998, 17: 2265-2281

5. Engels, J.M. and Diehr, P. Imputation of missing longitudinal data: a comparison of methods. Journal of Clinical Epidemiology 2003, 56: 968-976

6. European Medicines Agency. Guideline on Missing Data in Confirmatory Clinical Trials. 2010

7. Guo, S., Fraser, M.W. Propensity score analysis: statistical methods and applications. SAGE Publications, Inc., Thousand Oaks, 2010

8. Haziza, D., Beaumont, J.F. On the Construction of Imputation Classes in Surveys. International Statistical Review 2007, 75: 25-43

9. Holubarsch, C.J.F., Colucci, W.S., Meinertz, T., Gaus, W., Tendera, M. The efficacy and safety of Crataegus extract WS® 1442 in patients with heart failure: The SPICE trial. European Journal of Heart Failure 2008, 10: 1255-1263

10. Little, R.J.A. Survey Nonresponse Adjustments for Estimates of Means. International Statistical Review 1986, 54: 139-157

11. Little, R.J.A. and Rubin, D.B. Statistical Analysis with Missing Data. John Wiley & Sons, Hoboken, New Jersey, 2002

12. Mayer, B. Missing Values in clinical longitudinal studies – Handling of Drop-outs. Dissertation at the University of Ulm (in German), 2011 (http://vts.uniulm.de/docs/2011/7633/vts_7633_10939.pdf)

13. Mayer, B., Holubarsch, C., Muche, R., Gaus, W. Prognostic Factors for Patients with Congestive Heart Failure – An Extended Evaluation of the SPICE-Study. In revision at British Journal of Medicine and Medical Research 2013

14. Mittinty, M., Chacko, E. Imputation by Propensity Matching. Proceedings of the Survey Research Methods Section, ASA 2004: 4022-4028

15. Newman, D.A. Longitudinal Modelling with Randomly and Systematically Missing Data: A Simulation of Ad Hoc, Maximum Likelihood and Multiple Imputation Techniques. Organizational Research Methods 2003, 6: 328

16. Rosenbaum, P.R., Rubin, D.B. The central role of the propensity score in observational studies for causal effects. Biometrika 1983, 70: 41-55

17. Rubin, D.B. Multiple Imputation for Nonresponse in Surveys. John Wiley & Sons, Hoboken, New Jersey, 1987

18. Schafer, J.L., Graham, J.W. Missing data: our view of the state of the art. Psychological Methods 2002, 7: 147-177

19. Vach, W., Blettner, M. Biased estimation of the odds ratio in case-control studies due to the use of ad hoc methods of correcting for missing values for confounding variables. American Journal of Epidemiology 1991, 134: 895-907

20. van Buuren, S. Multiple imputation of discrete and continuous data by fully conditional specification. Statistical Methods in Medical Research 2007, 16: 219-242

21. White, I.R., Royston, P., Wood, A.M. Multiple imputation using chained equations: Issues and guidance for practice. Statistics in Medicine 2011, 30: 377-399
