Stat 231 Regression Summary Fall 2013 Steve Vardeman Iowa State University


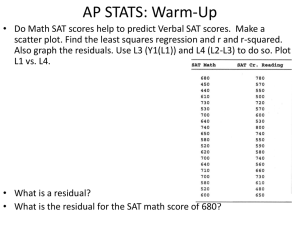

November 19, 2013

Abstract

This outline summarizes the main points of basic Simple and Multiple Linear Regression analysis as presented in Stat 231.

Contents

1 Simple Linear Regression
    1.1 SLR Model
    1.2 Descriptive Analysis of Approximately Linear $(x, y)$ Data
    1.3 Parameter Estimates for SLR
    1.4 Interval-Based Inference Methods for SLR
    1.5 Hypothesis Tests and SLR
    1.6 ANOVA and SLR
    1.7 Standardized Residuals and SLR
2 Multiple Linear Regression
    2.1 Multiple Linear Regression Model
    2.2 Descriptive Analysis of Approximately Linear $(x_1, x_2, \ldots, x_k, y)$ Data
    2.3 Parameter Estimates for MLR
    2.4 Interval-Based Inference Methods for MLR
    2.5 Hypothesis Tests and MLR
    2.6 ANOVA and MLR
    2.7 "Partial F Tests" in MLR
    2.8 Standardized Residuals in MLR
    2.9 Intervals and Tests for Linear Combinations of $\beta$'s in MLR

1 Simple Linear Regression

1.1 SLR Model

The basic (normal) "simple linear regression" model says that a response/output variable $y$ depends on an explanatory/input/system variable $x$ in a "noisy but linear" way. That is, one supposes that there is a linear relationship between $x$ and mean $y$,

$$\mu_{y|x} = \beta_0 + \beta_1 x$$

and that (for fixed $x$) there is around that mean a distribution of $y$ that is normal.
Further, the model assumption is that the standard deviation of the response distribution is constant in $x$. In symbols it is standard to write

$$y = \beta_0 + \beta_1 x + \epsilon$$

where $\epsilon$ is normal with mean 0 and standard deviation $\sigma$. This describes one $y$. Where several observations $y_i$ with corresponding values $x_i$ are under consideration, the assumption is that the $y_i$ (the $\epsilon_i$) are independent. (The $\epsilon_i$ are conceptually equivalent to unrelated random draws from the same fixed normal continuous distribution.) The model statement in its full glory is then

$$y_i = \beta_0 + \beta_1 x_i + \epsilon_i \quad \text{for } i = 1, 2, \ldots, n$$

$$\epsilon_i \text{ for } i = 1, 2, \ldots, n \text{ are independent normal } (0, \sigma^2) \text{ random variables}$$

The model statement above is a perfectly theoretical matter. One can begin with it, and for specific choices of $\beta_0$, $\beta_1$ and $\sigma$ find probabilities for $y$ at given values of $x$. In applications, the real mode of operation is instead to take $n$ data pairs $(x_1, y_1), (x_2, y_2), \ldots, (x_n, y_n)$ and use them to make inferences about the parameters $\beta_0$, $\beta_1$ and $\sigma$ and to make predictions based on the estimates (based on the empirically fitted model).

1.2 Descriptive Analysis of Approximately Linear $(x, y)$ Data

After plotting $(x, y)$ data to determine that the "linear in $x$ mean of $y$" model makes some sense, it is reasonable to try to quantify "how linear" the data look and to find a line of "best fit" to the scatterplot of the data. The sample correlation between $y$ and $x$

$$r = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n} (x_i - \bar{x})^2 \cdot \sum_{i=1}^{n} (y_i - \bar{y})^2}}$$

is a measure of strength of linear relationship between $x$ and $y$.

Calculus can be invoked to find a slope $\beta_1$ and intercept $\beta_0$ minimizing the sum of squared vertical distances from data points to a fitted line, $\sum_{i=1}^{n} (y_i - (\beta_0 + \beta_1 x_i))^2$.
These "least squares" values are

$$b_1 = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2} \quad \text{and} \quad b_0 = \bar{y} - b_1 \bar{x}$$

It is further common to refer to the value of $y$ on the "least squares line" corresponding to $x_i$ as a fitted or predicted value

$$\hat{y}_i = b_0 + b_1 x_i$$

One might take the difference between what is observed ($y_i$) and what is "predicted" or "explained" ($\hat{y}_i$) as a kind of leftover part or "residual" corresponding to a data value

$$e_i = y_i - \hat{y}_i$$

The sum $\sum_{i=1}^{n} (y_i - \bar{y})^2$ is most of the sample variance of the $n$ values $y_i$. It is a measure of raw variation in the response variable. People often call it the "total sum of squares" and write

$$SSTot = \sum_{i=1}^{n} (y_i - \bar{y})^2$$

The sum of squared residuals $\sum_{i=1}^{n} e_i^2$ is a measure of variation in response remaining unaccounted for after fitting a line to the data. People often call it the "error sum of squares" and write

$$SSE = \sum_{i=1}^{n} e_i^2 = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2$$

One is guaranteed that $SSTot \geq SSE$. So the difference $SSTot - SSE$ is a non-negative measure of variation accounted for in fitting a line to $(x, y)$ data. People often call it the "regression sum of squares" and write

$$SSR = SSTot - SSE$$

The coefficient of determination expresses $SSR$ as a fraction of $SSTot$ and is

$$R^2 = \frac{SSR}{SSTot}$$

which is interpreted as "the fraction of raw variation in $y$ accounted for in the model fitting process." As it turns out, this quantity is the squared correlation between the $y_i$ and $\hat{y}_i$, and thus in SLR (since the $\hat{y}_i$ are perfectly correlated with the $x_i$) also the squared correlation between the $y_i$ and $x_i$.

1.3 Parameter Estimates for SLR

The descriptive statistics for $(x, y)$ data can be used to provide "single number estimates" of the (typically unknown) parameters of the simple linear regression model.
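As a minimal sketch of how those descriptive statistics can be computed from the Section 1.2 formulas, the following plain-Python helper works through a tiny data set. The function name `slr_descriptive` and the five data pairs are made up for illustration; they are not part of the course materials, which rely on JMP.

```python
from math import sqrt

def slr_descriptive(x, y):
    """Least squares line and sums of squares for paired data (hypothetical helper)."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)  # this is SSTot
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))

    r = sxy / sqrt(sxx * syy)   # sample correlation
    b1 = sxy / sxx              # least squares slope
    b0 = y_bar - b1 * x_bar     # least squares intercept

    fitted = [b0 + b1 * xi for xi in x]
    residuals = [yi - fi for yi, fi in zip(y, fitted)]
    sse = sum(e ** 2 for e in residuals)
    ssr = syy - sse
    return {"r": r, "b0": b0, "b1": b1,
            "SSTot": syy, "SSE": sse, "SSR": ssr, "R2": ssr / syy}

# Made-up illustrative data, not from the course.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 4.0, 5.0, 4.0, 5.0]
desc = slr_descriptive(x, y)
print(desc)
```

For these made-up data the slope is 0.6, the intercept is 2.2, and $R^2 = 0.6$, which agrees with the square of the sample correlation.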
That is, the slope of the least squares line can serve as an estimate of $\beta_1$,

$$\hat{\beta}_1 = b_1$$

and the intercept of the least squares line can serve as an estimate of $\beta_0$,

$$\hat{\beta}_0 = b_0$$

The variance of $y$ for a given $x$ can be estimated by a kind of average of squared residuals

$$s^2 = \frac{1}{n-2} \sum_{i=1}^{n} e_i^2 = \frac{SSE}{n-2}$$

Of course, the square root of this "regression sample variance" is $s = \sqrt{s^2}$ and serves as a single number estimate of $\sigma$.

1.4 Interval-Based Inference Methods for SLR

The normal simple linear regression model provides inference formulas for model parameters. Confidence limits for $\sigma$ are

$$s\sqrt{\frac{n-2}{\chi^2_{\text{upper}}}} \quad \text{and} \quad s\sqrt{\frac{n-2}{\chi^2_{\text{lower}}}}$$

where $\chi^2_{\text{upper}}$ and $\chi^2_{\text{lower}}$ are upper and lower percentage points of the $\chi^2$ distribution with $\nu = n - 2$ degrees of freedom. And confidence limits for $\beta_1$ (the slope of the line relating mean $y$ to $x$ ... the rate of change of average $y$ with respect to $x$) are

$$b_1 \pm t \frac{s}{\sqrt{\sum_{i=1}^{n} (x_i - \bar{x})^2}}$$

where $t$ is a quantile of the $t$ distribution with $\nu = n - 2$ degrees of freedom. One might call the ratio $s / \sqrt{\sum_{i=1}^{n} (x_i - \bar{x})^2}$ the standard error of $b_1$ and use the symbol $SE_{b_1}$ for it. In these terms, the confidence limits for $\beta_1$ are

$$b_1 \pm t\, SE_{b_1}$$

Confidence limits for $\mu_{y|x} = \beta_0 + \beta_1 x$ (the mean value of $y$ at a given value $x$) are

$$(b_0 + b_1 x) \pm t s \sqrt{\frac{1}{n} + \frac{(x - \bar{x})^2}{\sum_{i=1}^{n} (x_i - \bar{x})^2}}$$

One can abbreviate $(b_0 + b_1 x)$ as $\hat{y}$, and call $s \sqrt{\frac{1}{n} + \frac{(x - \bar{x})^2}{\sum_{i=1}^{n} (x_i - \bar{x})^2}}$ the standard error of the fitted mean and use the symbol $SE_{\hat{y}}$ for it. (JMP calls this the Std Error of Predicted.) In these terms, the confidence limits for $\mu_{y|x}$ are

$$\hat{y} \pm t\, SE_{\hat{y}}$$

(Note that by choosing $x = 0$, this formula provides confidence limits for $\beta_0$, though this parameter is rarely of independent practical interest.)

Prediction limits for an additional observation $y$ at a given value $x$ are

$$(b_0 + b_1 x) \pm t s \sqrt{1 + \frac{1}{n} + \frac{(x - \bar{x})^2}{\sum_{i=1}^{n} (x_i - \bar{x})^2}}$$

People sometimes call $s \sqrt{1 + \frac{1}{n} + \frac{(x - \bar{x})^2}{\sum_{i=1}^{n} (x_i - \bar{x})^2}}$ the prediction standard error for predicting an individual $y$ and use the symbol $SE_{y_{\text{new}}}$ for it. (JMP calls this Std Error of Individual.)
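The standard errors and limits of this section can be sketched in plain Python as follows. This is only an illustration under stated assumptions: the helper names `slr_standard_errors` and `limits` are hypothetical, the data are made up, and the multiplier `t = 3.182` is the tabled 0.975 quantile of the $t$ distribution with 3 degrees of freedom (appropriate for two-sided 95% limits with $n = 5$). In practice the course relies on JMP for these values.

```python
from math import sqrt

def slr_standard_errors(x, y, x_new):
    """Estimates and standard errors for SLR at a chosen x value (hypothetical helper)."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    b0 = y_bar - b1 * x_bar
    sse = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
    s = sqrt(sse / (n - 2))                    # estimate of sigma
    q = 1.0 / n + (x_new - x_bar) ** 2 / sxx   # term under the square root
    return {
        "b0": b0, "b1": b1, "s": s,
        "y_hat": b0 + b1 * x_new,
        "SE_b1": s / sqrt(sxx),
        "SE_yhat": s * sqrt(q),         # standard error of the fitted mean
        "SE_ynew": s * sqrt(1.0 + q),   # prediction standard error
    }

def limits(center, t, se):
    """Return (lower, upper) limits of the form center +/- t * se."""
    return (center - t * se, center + t * se)

# Made-up illustrative data, not from the course.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 4.0, 5.0, 4.0, 5.0]
fit = slr_standard_errors(x, y, x_new=3.0)

t = 3.182  # tabled 0.975 quantile of t with n - 2 = 3 degrees of freedom
ci_slope = limits(fit["b1"], t, fit["SE_b1"])     # confidence limits for beta_1
ci_mean = limits(fit["y_hat"], t, fit["SE_yhat"])  # confidence limits for mean y at x = 3
pi_new = limits(fit["y_hat"], t, fit["SE_ynew"])   # prediction limits for a new y at x = 3
print(ci_slope, ci_mean, pi_new)
```

The prediction limits are always wider than the confidence limits for the mean at the same $x$, since the prediction standard error also carries the variation of an individual observation.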
It is on occasion useful to notice that

$$SE_{y_{\text{new}}} = \sqrt{s^2 + SE_{\hat{y}}^2}$$

Using the $SE_{y_{\text{new}}}$ notation, prediction limits for an additional $y$ at $x$ are

$$\hat{y} \pm t\, SE_{y_{\text{new}}}$$

1.5 Hypothesis Tests and SLR

The normal simple linear regression model supports hypothesis testing. $H_0: \beta_1 = \#$ can be tested using the test statistic

$$T = \frac{b_1 - \#}{s / \sqrt{\sum_{i=1}^{n} (x_i - \bar{x})^2}} = \frac{b_1 - \#}{SE_{b_1}}$$

and a $t_{n-2}$ reference distribution. $H_0: \mu_{y|x} = \#$ can be tested using the test statistic

$$T = \frac{(b_0 + b_1 x) - \#}{s \sqrt{\frac{1}{n} + \frac{(x - \bar{x})^2}{\sum_{i=1}^{n} (x_i - \bar{x})^2}}} = \frac{\hat{y} - \#}{SE_{\hat{y}}}$$

and a $t_{n-2}$ reference distribution.

1.6 ANOVA and SLR

The breaking down of $SSTot$ into $SSR$ and $SSE$ can be thought of as a kind of "analysis of variance" in $y$. That enterprise is often summarized in a special kind of table. The general form is as below.

ANOVA Table (for SLR)

| Source | SS | df | MS | F |
|---|---|---|---|---|
| Regression | $SSR$ | $1$ | $MSR = SSR/1$ | $F = MSR/MSE$ |
| Error | $SSE$ | $n-2$ | $MSE = SSE/(n-2)$ | |
| Total | $SSTot$ | $n-1$ | | |

In this table the ratios of sums of squares to degrees of freedom are called "mean squares." The mean square for error is, in fact, the estimate of $\sigma^2$ (i.e. $MSE = s^2$).

As it turns out, the ratio in the "F" column can be used as a test statistic for the hypothesis $H_0: \beta_1 = 0$. The reference distribution appropriate is the $F_{1,n-2}$ distribution. As it turns out, the value $F = MSR/MSE$ is the square of the $t$ statistic for testing this hypothesis, and the $F$ test produces exactly the same p-value as a two-sided $t$ test.

1.7 Standardized Residuals and SLR

The theoretical variances of the residuals turn out to depend upon their corresponding $x$ values. As a means of putting these residuals all on the same footing, it is common to "standardize" them by dividing by an estimated standard deviation for each. This produces standardized residuals

$$e_i^* = \frac{e_i}{s \sqrt{1 - \frac{1}{n} - \frac{(x_i - \bar{x})^2}{\sum_{j=1}^{n} (x_j - \bar{x})^2}}}$$

These (if the normal simple linear regression model is a good one) "ought" to look as if they are approximately normal with mean 0 and standard deviation 1.
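The ANOVA quantities of Section 1.6 and the standardized residuals of Section 1.7 can be sketched together in plain Python. The function name `slr_anova_and_std_residuals` and the data are made up for illustration; the sketch also checks numerically that $F = MSR/MSE$ equals the square of the $t$ statistic for $H_0: \beta_1 = 0$.

```python
from math import sqrt

def slr_anova_and_std_residuals(x, y):
    """Return (F, t, standardized residuals) for SLR (hypothetical helper)."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    b0 = y_bar - b1 * x_bar
    residuals = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]

    sst = sum((yi - y_bar) ** 2 for yi in y)
    sse = sum(e ** 2 for e in residuals)
    ssr = sst - sse
    msr = ssr / 1            # regression mean square (1 df in SLR)
    mse = sse / (n - 2)      # error mean square, equal to s^2
    s = sqrt(mse)

    f_stat = msr / mse
    t_stat = b1 / (s / sqrt(sxx))  # t statistic for H0: beta_1 = 0

    # Each residual is divided by its own estimated standard deviation.
    std_res = [e / (s * sqrt(1 - 1 / n - (xi - x_bar) ** 2 / sxx))
               for e, xi in zip(residuals, x)]
    return f_stat, t_stat, std_res

# Made-up illustrative data, not from the course.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 4.0, 5.0, 4.0, 5.0]
f_stat, t_stat, std_res = slr_anova_and_std_residuals(x, y)
print(f_stat, t_stat ** 2, std_res)
```

With only five made-up points the standardized residuals say little about normality; the sketch is meant only to show the arithmetic.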
Various kinds of plotting with these standardized residuals (or with the raw residuals) are used as means of "model checking" or "model diagnostics."

2 Multiple Linear Regression

2.1 Multiple Linear Regression Model

The basic (normal) "multiple linear regression" model says that a response/output variable $y$ depends on explanatory/input/system variables $x_1, x_2, \ldots, x_k$ in a "noisy but linear" way. That is, one supposes that there is a linear relationship between $x_1, x_2, \ldots, x_k$ and mean $y$,

$$\mu_{y|x_1,x_2,\ldots,x_k} = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \cdots + \beta_k x_k$$

and that (for fixed $x_1, x_2, \ldots, x_k$) there is around that mean a distribution of $y$ that is normal. Further, the standard assumption is that the standard deviation of the response distribution is constant in $x_1, x_2, \ldots, x_k$. In symbols it is standard to write

$$y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \cdots + \beta_k x_k + \epsilon$$

where $\epsilon$ is normal with mean 0 and standard deviation $\sigma$. This describes one $y$. Where several observations $y_i$ with corresponding values $x_{1i}, x_{2i}, \ldots, x_{ki}$ are under consideration, the assumption is that the $y_i$ (the $\epsilon_i$) are independent. (The $\epsilon_i$ are conceptually equivalent to unrelated random draws from the same fixed normal continuous distribution.) The model statement in its full glory is then

$$y_i = \beta_0 + \beta_1 x_{1i} + \beta_2 x_{2i} + \cdots + \beta_k x_{ki} + \epsilon_i \quad \text{for } i = 1, 2, \ldots, n$$

$$\epsilon_i \text{ for } i = 1, 2, \ldots, n \text{ are independent normal } (0, \sigma^2) \text{ random variables}$$

The model statement above is a perfectly theoretical matter. One can begin with it, and for specific choices of $\beta_0, \beta_1, \beta_2, \ldots, \beta_k$ and $\sigma$ find probabilities for $y$ at given values of $x_1, x_2, \ldots, x_k$. In applications, the real mode of operation is instead to take $n$ data vectors

$$(x_{11}, x_{21}, \ldots, x_{k1}, y_1), (x_{12}, x_{22}, \ldots, x_{k2}, y_2), \ldots, (x_{1n}, x_{2n}, \ldots, x_{kn}, y_n)$$

and use them to make inferences about the parameters $\beta_0, \beta_1, \beta_2, \ldots, \beta_k$ and $\sigma$ and to make predictions based on the estimates (based on the empirically fitted model).
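To make the "perfectly theoretical" model statement concrete, the following sketch generates responses from the multiple linear regression model for chosen parameter values. The function name `simulate_mlr`, the coefficient values, and the predictor rows are all assumptions made up for illustration; only Python's standard `random` module is used.

```python
import random

def simulate_mlr(betas, sigma, x_rows, seed=0):
    """Draw one y per predictor row from y = b0 + b1*x1 + ... + bk*xk + eps.

    betas  : [beta_0, beta_1, ..., beta_k]
    sigma  : standard deviation of the normal errors
    x_rows : list of (x_1, ..., x_k) tuples, one per observation
    """
    rng = random.Random(seed)
    beta0, slopes = betas[0], betas[1:]
    ys = []
    for row in x_rows:
        mean_y = beta0 + sum(b * xj for b, xj in zip(slopes, row))
        ys.append(mean_y + rng.gauss(0.0, sigma))  # independent normal(0, sigma^2) error
    return ys

# Made-up parameter values and predictor rows (k = 2).
betas = [1.0, 2.0, -1.0]
x_rows = [(1.0, 1.0), (2.0, 0.0), (0.0, 3.0)]

ys = simulate_mlr(betas, sigma=0.5, x_rows=x_rows, seed=1)
ys_noiseless = simulate_mlr(betas, sigma=0.0, x_rows=x_rows)  # equals the mean responses
print(ys, ys_noiseless)
```

Setting `sigma=0.0` removes the noise, so the returned values are exactly the mean responses $\mu_{y|x_1,x_2}$ at each row.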
2.2 Descriptive Analysis of Approximately Linear $(x_1, x_2, \ldots, x_k, y)$ Data

Calculus can be invoked to find coefficients $\beta_0, \beta_1, \beta_2, \ldots, \beta_k$ minimizing the sum of squared vertical distances from data points in $(k + 1)$ dimensional space to a fitted surface, $\sum_{i=1}^{n} (y_i - (\beta_0 + \beta_1 x_{1i} + \beta_2 x_{2i} + \cdots + \beta_k x_{ki}))^2$. These "least squares" values DO NOT have simple formulas (unless one is willing to use matrix notation). And in particular, one can NOT simply somehow use the formulas from simple linear regression in this more complicated context. We will call these minimizing coefficients $b_0, b_1, b_2, \ldots, b_k$ and need to rely upon JMP to produce them for us.

It is further common to refer to the value of $y$ on the "least squares surface" corresponding to $x_{1i}, x_{2i}, \ldots, x_{ki}$ as a fitted or predicted value

$$\hat{y}_i = b_0 + b_1 x_{1i} + b_2 x_{2i} + \cdots + b_k x_{ki}$$

Exactly as in SLR, one takes the difference between what is observed ($y_i$) and what is "predicted" or "explained" ($\hat{y}_i$) as a kind of leftover part or "residual" corresponding to a data value

$$e_i = y_i - \hat{y}_i$$

The total sum of squares, $SSTot = \sum_{i=1}^{n} (y_i - \bar{y})^2$, is (still) most of the sample variance of the $n$ values $y_i$ and measures raw variation in the response variable. Just as in SLR, the sum of squared residuals $\sum_{i=1}^{n} e_i^2$ is a measure of variation in response remaining unaccounted for after fitting the equation to the data. As in SLR, people call it the error sum of squares and write

$$SSE = \sum_{i=1}^{n} e_i^2 = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2$$

(The formula looks exactly like the one for SLR. It is simply the case that now $\hat{y}_i$ is computed using all $k$ inputs, not just a single $x$.) One is still guaranteed that $SSTot \geq SSE$. So the difference $SSTot - SSE$ is a non-negative measure of variation accounted for in fitting the linear equation to the data.
As in SLR, people call it the regression sum of squares and write

$$SSR = SSTot - SSE$$

The coefficient of (multiple) determination expresses $SSR$ as a fraction of $SSTot$ and is

$$R^2 = \frac{SSR}{SSTot}$$

which is interpreted as "the fraction of raw variation in $y$ accounted for in the model fitting process." This quantity can also be interpreted in terms of a correlation, as it turns out to be the square of the sample linear correlation between the observations $y_i$ and the fitted or predicted values $\hat{y}_i$.

2.3 Parameter Estimates for MLR

The descriptive statistics for $(x_1, x_2, \ldots, x_k, y)$ data can be used to provide "single number estimates" of the (typically unknown) parameters of the multiple linear regression model. That is, the least squares coefficients $b_0, b_1, b_2, \ldots, b_k$ serve as estimates of the parameters $\beta_0, \beta_1, \beta_2, \ldots, \beta_k$. The first of these is a kind of high-dimensional "intercept" and in the case where the predictors are not functionally related, the others serve as rates of change of average $y$ with respect to a single $x$, provided the other $x$'s are held fixed.

The variance of $y$ for a fixed set of values $x_1, x_2, \ldots, x_k$ can be estimated by a kind of average of squared residuals

$$s^2 = \frac{1}{n-k-1} \sum_{i=1}^{n} e_i^2 = \frac{SSE}{n-k-1}$$

The square root of this "regression sample variance" is $s = \sqrt{s^2}$ and serves as a single number estimate of $\sigma$.

2.4 Interval-Based Inference Methods for MLR

The normal multiple linear regression model provides inference formulas for model parameters. Confidence limits for $\sigma$ are

$$s\sqrt{\frac{n-k-1}{\chi^2_{\text{upper}}}} \quad \text{and} \quad s\sqrt{\frac{n-k-1}{\chi^2_{\text{lower}}}}$$

where $\chi^2_{\text{upper}}$ and $\chi^2_{\text{lower}}$ are upper and lower percentage points of the $\chi^2$ distribution with $\nu = n - k - 1$ degrees of freedom. Confidence limits for $\beta_j$ (the rate of change of average $y$ with respect to $x_j$ with other $x$'s held fixed) are

$$b_j \pm t\, SE_{b_j}$$

where $t$ is a quantile of the $t$ distribution with $\nu = n - k - 1$ degrees of freedom and $SE_{b_j}$ is a standard error of $b_j$.
There is no simple formula for $SE_{b_j}$, and in particular, one can NOT simply somehow use the formula from simple linear regression in this more complicated context. It IS the case that this standard error is a multiple of $s$, but we will have to rely upon JMP to provide it for us.

Confidence limits for $\mu_{y|x_1,x_2,\ldots,x_k} = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \cdots + \beta_k x_k$ (the mean value of $y$ at a particular choice of the $x_1, x_2, \ldots, x_k$) are

$$\hat{y} \pm t\, SE_{\hat{y}}$$

where $SE_{\hat{y}}$ depends upon which values of $x_1, x_2, \ldots, x_k$ are under consideration. There is no simple formula for $SE_{\hat{y}}$ and in particular one can NOT simply somehow use the formula from simple linear regression in this more complicated context. It IS the case that this standard error is a multiple of $s$, but we will have to rely upon JMP to provide it for us.

Prediction limits for an additional observation $y$ at a given vector $(x_1, x_2, \ldots, x_k)$ are

$$\hat{y} \pm t\, SE_{y_{\text{new}}}$$

where it remains true (as in SLR) that $SE_{y_{\text{new}}} = \sqrt{s^2 + SE_{\hat{y}}^2}$ but otherwise there is no simple formula for it, and in particular one can NOT simply somehow use the formula from simple linear regression in this more complicated context.

2.5 Hypothesis Tests and MLR

The normal multiple linear regression model supports hypothesis testing. $H_0: \beta_j = \#$ can be tested using the test statistic

$$T = \frac{b_j - \#}{SE_{b_j}}$$

and a $t_{n-k-1}$ reference distribution. $H_0: \mu_{y|x_1,x_2,\ldots,x_k} = \#$ can be tested using the test statistic

$$T = \frac{\hat{y} - \#}{SE_{\hat{y}}}$$

and a $t_{n-k-1}$ reference distribution.

2.6 ANOVA and MLR

As in SLR, the breaking down of $SSTot$ into $SSR$ and $SSE$ can be thought of as a kind of "analysis of variance" in $y$, and be summarized in a special kind of table. The general form for MLR is as below.

ANOVA Table (for MLR Overall F Test)

| Source | SS | df | MS | F |
|---|---|---|---|---|
| Regression | $SSR$ | $k$ | $MSR = SSR/k$ | $F = MSR/MSE$ |
| Error | $SSE$ | $n-k-1$ | $MSE = SSE/(n-k-1)$ | |
| Total | $SSTot$ | $n-1$ | | |

(Note that as in SLR, the mean square for error is, in fact, the estimate of $\sigma^2$ (i.e. $MSE = s^2$).)
As it turns out, the ratio in the "F" column can be used as a test statistic for the hypothesis $H_0: \beta_1 = \beta_2 = \cdots = \beta_k = 0$. The reference distribution appropriate is the $F_{k,n-k-1}$ distribution.

2.7 "Partial F Tests" in MLR

It is possible to use ANOVA ideas to invent F tests for investigating whether some whole group of $\beta$'s (short of the entire set) are all 0. For example one might want to test the hypothesis

$$H_0: \beta_{l+1} = \beta_{l+2} = \cdots = \beta_k = 0$$

(This is the hypothesis that only the first $l$ of the $k$ input variables $x_i$ have any impact on the mean system response ... the hypothesis that the first $l$ of the $x$'s are adequate to predict $y$ ... the hypothesis that after accounting for the first $l$ of the $x$'s, the others do not contribute "significantly" to one's ability to explain or predict $y$.)

If we call the model for $y$ in terms of all $k$ of the predictors the "full model" and the model for $y$ involving only $x_1$ through $x_l$ the "reduced model" then an F test of the above hypothesis can be made using the statistic

$$F = \frac{(SSR_{\text{Full}} - SSR_{\text{Reduced}})/(k - l)}{MSE_{\text{Full}}} = \left(\frac{n-k-1}{k-l}\right) \frac{R^2_{\text{Full}} - R^2_{\text{Reduced}}}{1 - R^2_{\text{Full}}}$$

and an $F_{k-l,n-k-1}$ reference distribution. $SSR_{\text{Full}} \geq SSR_{\text{Reduced}}$ so the numerator here is non-negative.

Finding a p-value for this kind of test is a means of judging whether $R^2$ for the full model is "significantly"/detectably larger than $R^2$ for the reduced model. (Caution here: statistical significance is not the same as practical importance. With a big enough data set, essentially any increase in $R^2$ will produce a small p-value.)

It is reasonably common to expand the basic MLR ANOVA table to organize calculations for this test statistic.
This is

(Expanded) ANOVA Table (for MLR)

| Source | SS | df | MS | F |
|---|---|---|---|---|
| Regression | $SSR_{\text{Full}}$ | $k$ | $MSR_{\text{Full}} = SSR_{\text{Full}}/k$ | $F = MSR_{\text{Full}}/MSE_{\text{Full}}$ |
| $x_1, \ldots, x_l$ | $SSR_{\text{Red}}$ | $l$ | | |
| $x_{l+1}, \ldots, x_k \mid x_1, \ldots, x_l$ | $SSR_{\text{Full}} - SSR_{\text{Red}}$ | $k - l$ | $(SSR_{\text{Full}} - SSR_{\text{Red}})/(k - l)$ | $F = \frac{(SSR_{\text{Full}} - SSR_{\text{Red}})/(k-l)}{MSE_{\text{Full}}}$ |
| Error | $SSE_{\text{Full}}$ | $n - k - 1$ | $MSE_{\text{Full}} = SSE_{\text{Full}}/(n - k - 1)$ | |
| Total | $SSTot$ | $n - 1$ | | |

2.8 Standardized Residuals in MLR

As in SLR, people sometimes wish to standardize residuals before using them to do model checking/diagnostics. While it is not possible to give a simple formula for the "standard error of $e_i$" without using matrix notation, most MLR programs will compute these values. The standardized residual for data point $i$ is then (as in SLR)

$$e_i^* = \frac{e_i}{\text{standard error of } e_i}$$

If the normal multiple linear regression model is a good one these "ought" to look as if they are approximately normal with mean 0 and standard deviation 1.

2.9 Intervals and Tests for Linear Combinations of $\beta$'s in MLR

It is sometimes important to do inference for a linear combination of MLR model coefficients

$$L = c_0 \beta_0 + c_1 \beta_1 + c_2 \beta_2 + \cdots + c_k \beta_k$$

(where $c_0, c_1, \ldots, c_k$ are known constants). Note, for example, that $\mu_{y|x_1,x_2,\ldots,x_k}$ is of this form for $c_0 = 1, c_1 = x_1, c_2 = x_2, \ldots,$ and $c_k = x_k$. Note too that a difference in mean responses at two sets of predictors, say $(x_1, x_2, \ldots, x_k)$ and $(x_1', x_2', \ldots, x_k')$, is of this form for $c_0 = 0, c_1 = x_1 - x_1', c_2 = x_2 - x_2', \ldots,$ and $c_k = x_k - x_k'$.

An obvious estimate of $L$ is

$$\hat{L} = c_0 b_0 + c_1 b_1 + c_2 b_2 + \cdots + c_k b_k$$

Confidence limits for $L$ are

$$\hat{L} \pm t\,(\text{standard error of } \hat{L})$$

There is no simple formula for "standard error of $\hat{L}$". This standard error is a multiple of $s$, but we will have to rely upon JMP to provide it for us. (Computation of $\hat{L}$ and its standard error is under the "Custom Test" option in JMP.)

$H_0: L = \#$ can be tested using the test statistic

$$T = \frac{\hat{L} - \#}{\text{standard error of } \hat{L}}$$

and a $t_{n-k-1}$ reference distribution.
Or, if one thinks about it for a while, it is possible to find a reduced model that corresponds to the restriction that the null hypothesis places on the MLR model and to use a "$l = k - 1$" partial F test (with 1 and $n - k - 1$ degrees of freedom) equivalent to the $t$ test for this purpose.
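The partial F statistic of Section 2.7, which also underlies the "$l = k - 1$" test just mentioned, can be sketched in plain Python in both of its equivalent forms. The function names and the numerical inputs below are made up for illustration; in practice the sums of squares would come from JMP output for the full and reduced models, and a p-value would be read from the $F_{k-l,n-k-1}$ distribution.

```python
def partial_f_from_ss(ssr_full, ssr_reduced, sse_full, n, k, l):
    """Partial F from sums of squares: ((SSR_Full - SSR_Red)/(k - l)) / MSE_Full."""
    mse_full = sse_full / (n - k - 1)
    return ((ssr_full - ssr_reduced) / (k - l)) / mse_full

def partial_f_from_r2(r2_full, r2_reduced, n, k, l):
    """Same statistic written in terms of R^2 for the full and reduced models."""
    return ((n - k - 1) / (k - l)) * (r2_full - r2_reduced) / (1.0 - r2_full)

# Made-up illustrative values: n = 25 cases, k = 4 predictors, reduced model keeps l = 2.
n, k, l = 25, 4, 2
sst = 100.0
ssr_full, ssr_reduced = 80.0, 70.0
sse_full = sst - ssr_full

f_ss = partial_f_from_ss(ssr_full, ssr_reduced, sse_full, n, k, l)
f_r2 = partial_f_from_r2(ssr_full / sst, ssr_reduced / sst, n, k, l)
print(f_ss, f_r2, "numerator df =", k - l, "denominator df =", n - k - 1)
```

Both forms give $F = 5$ for these made-up numbers (up to floating-point rounding), to be compared with an $F_{2,20}$ reference distribution.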